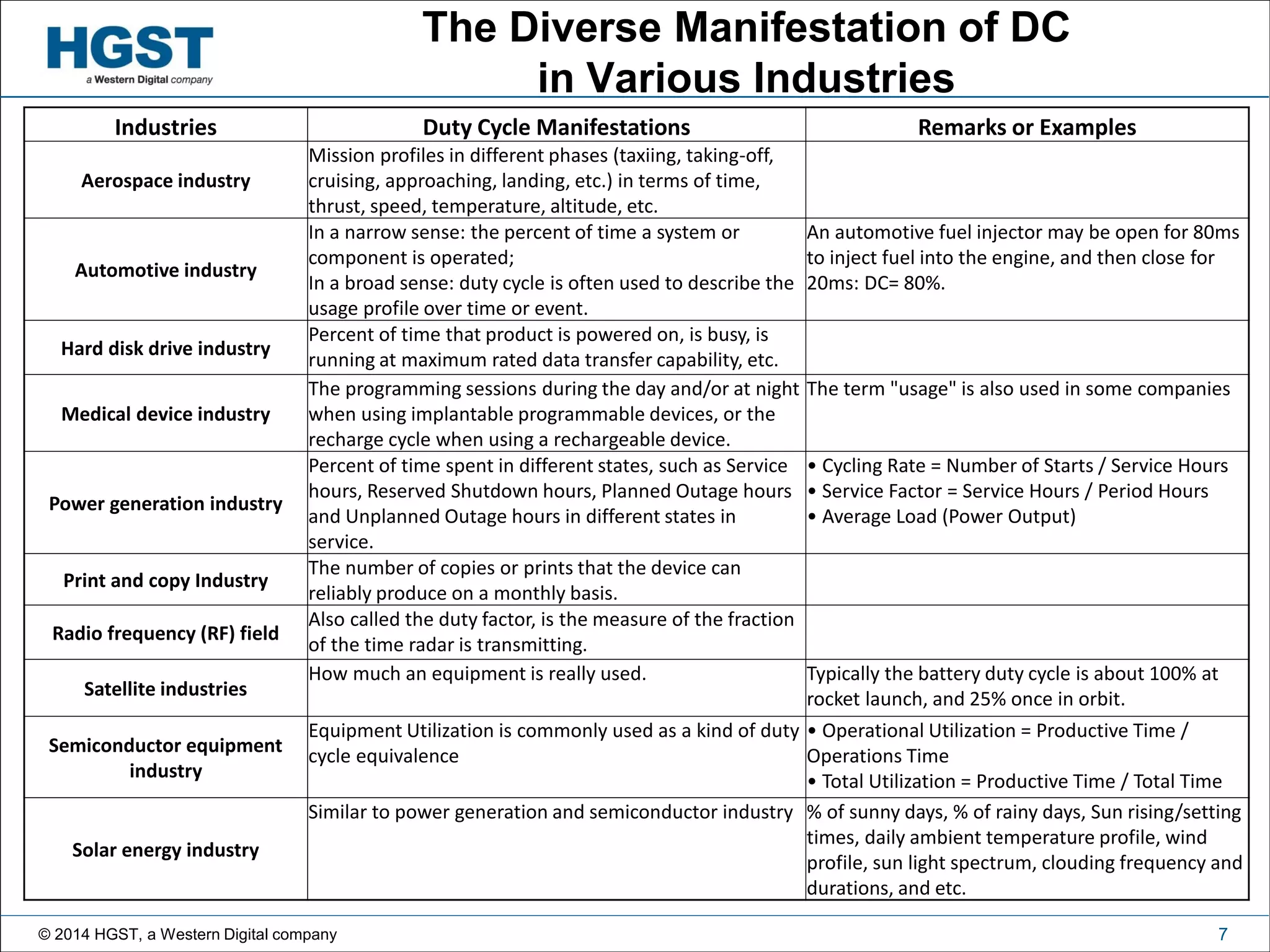

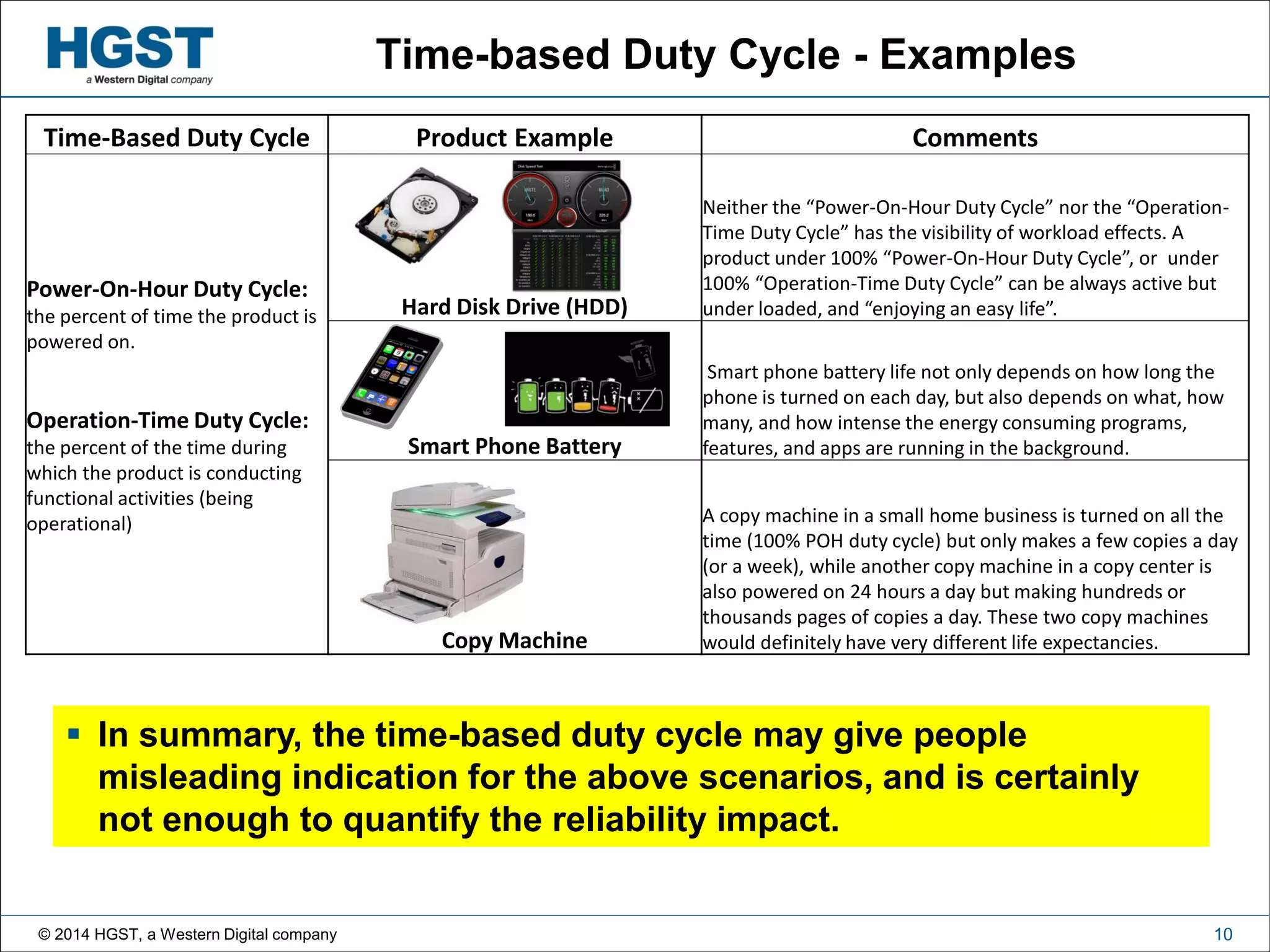

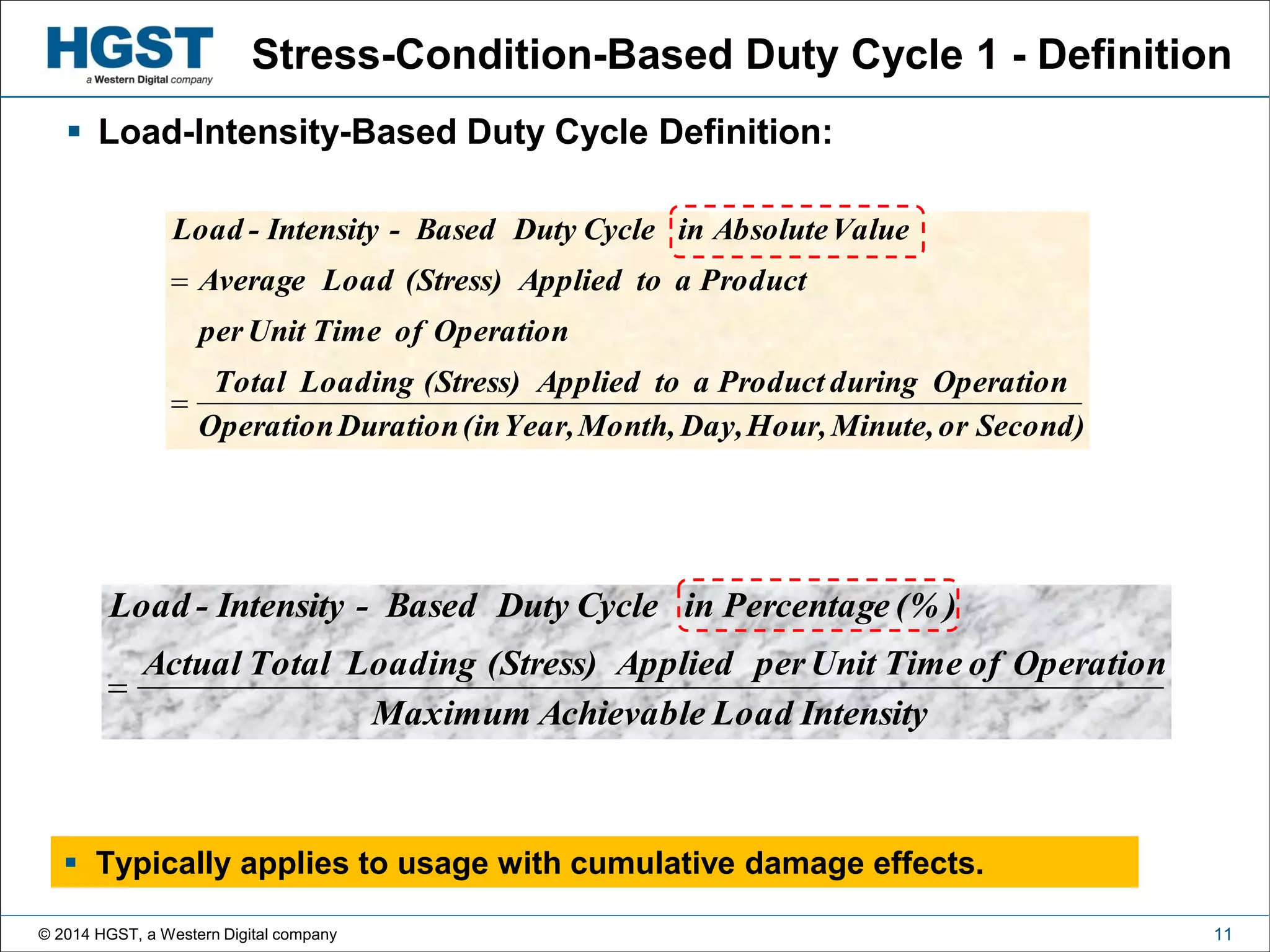

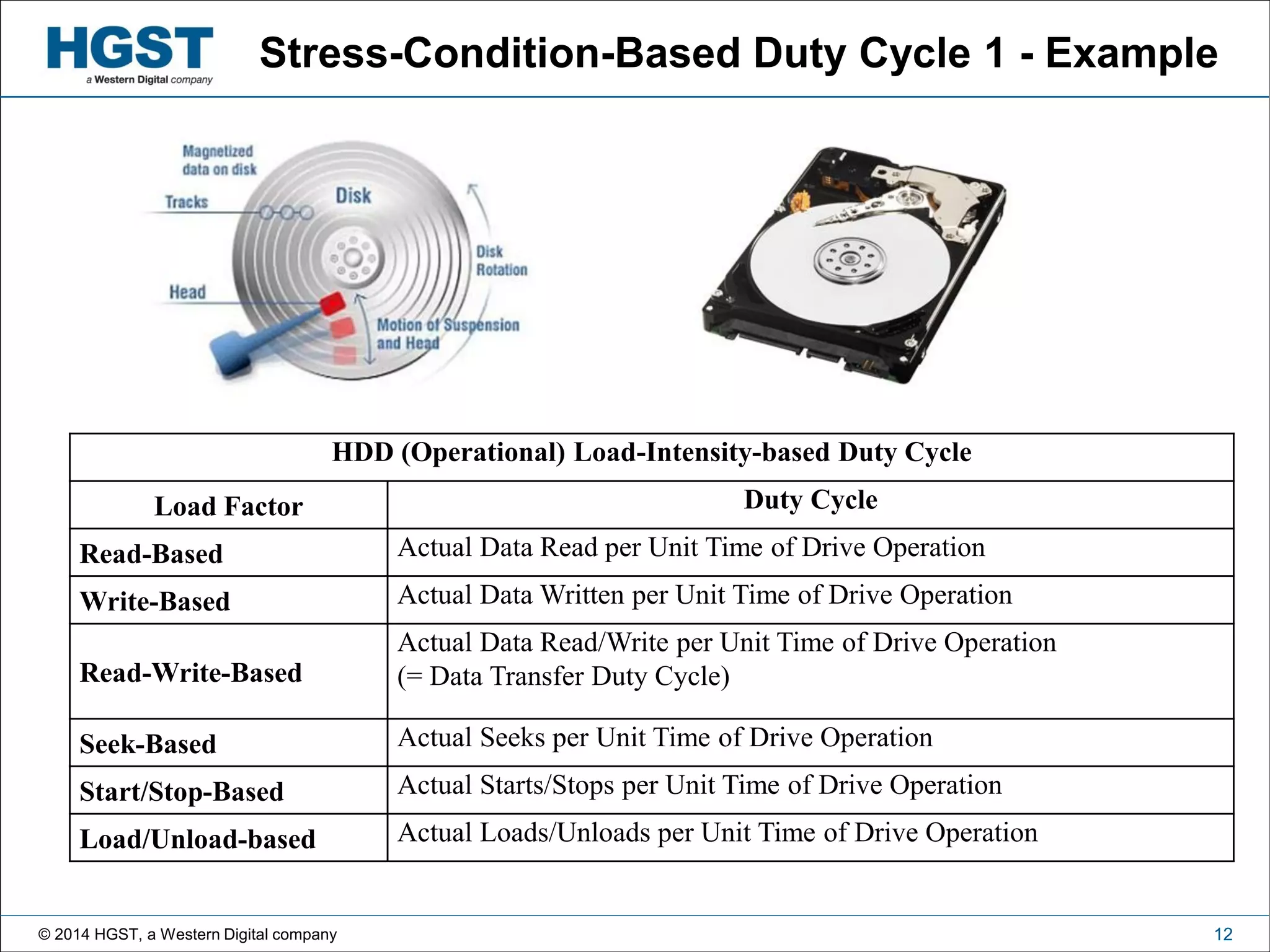

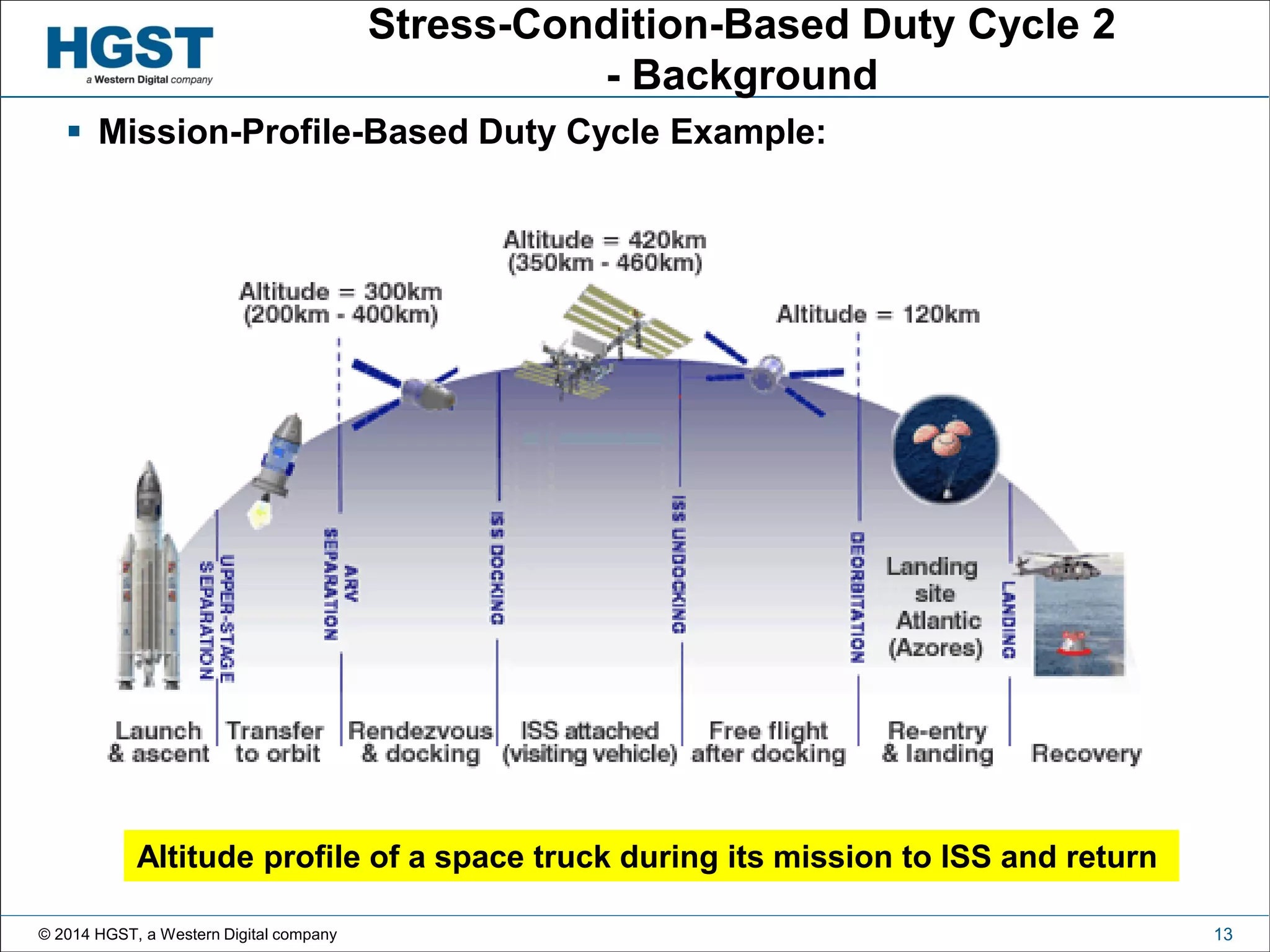

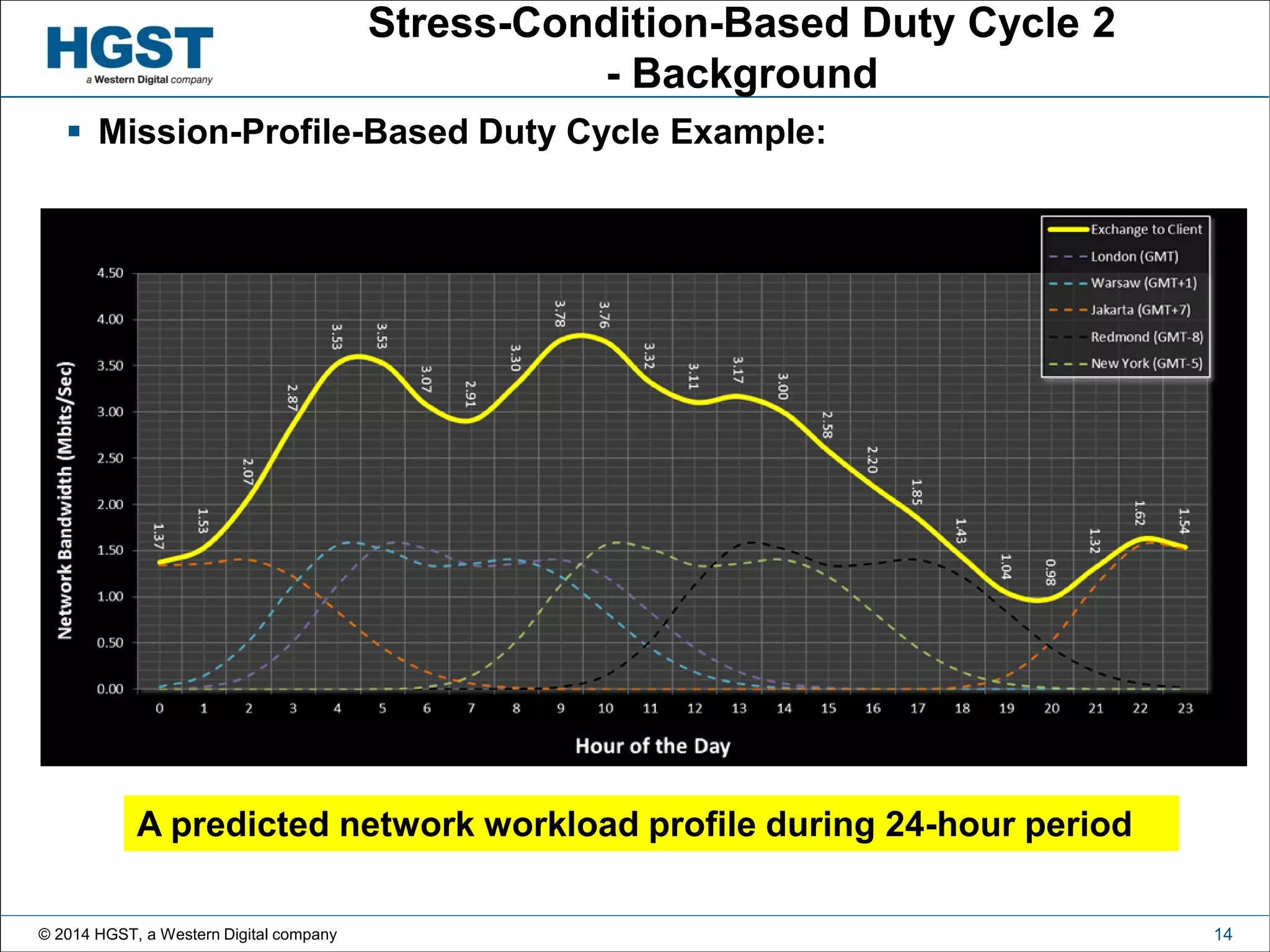

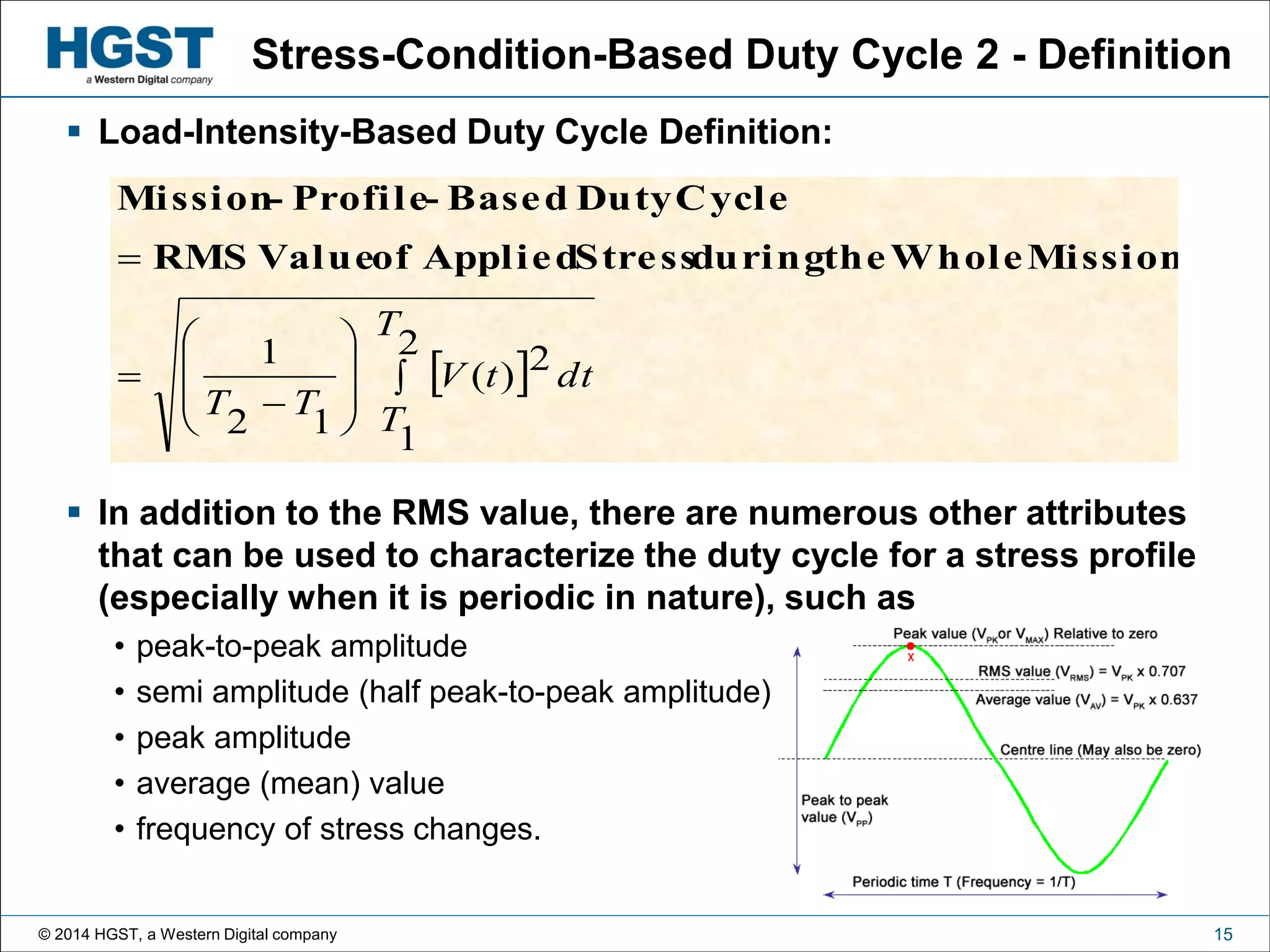

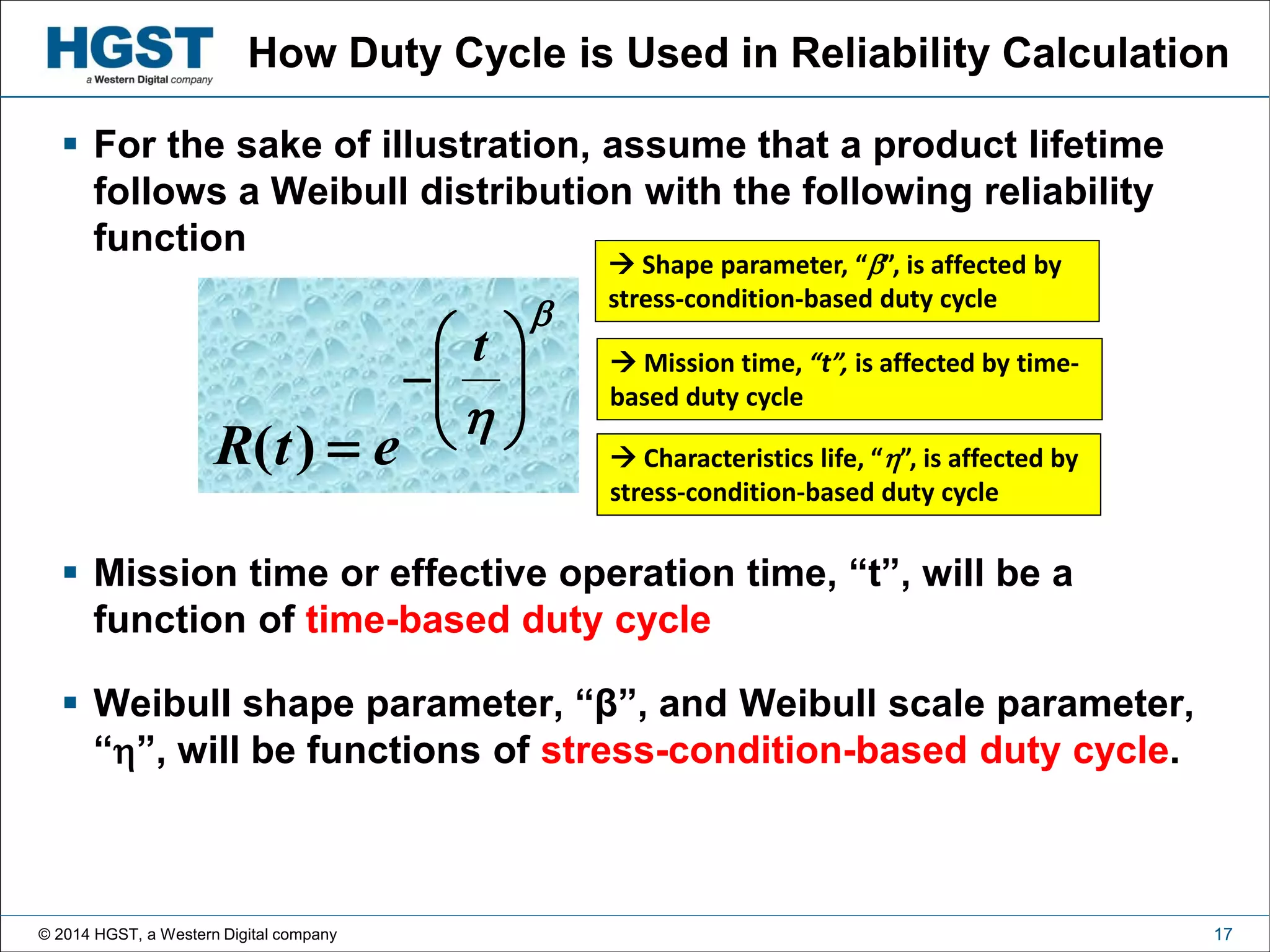

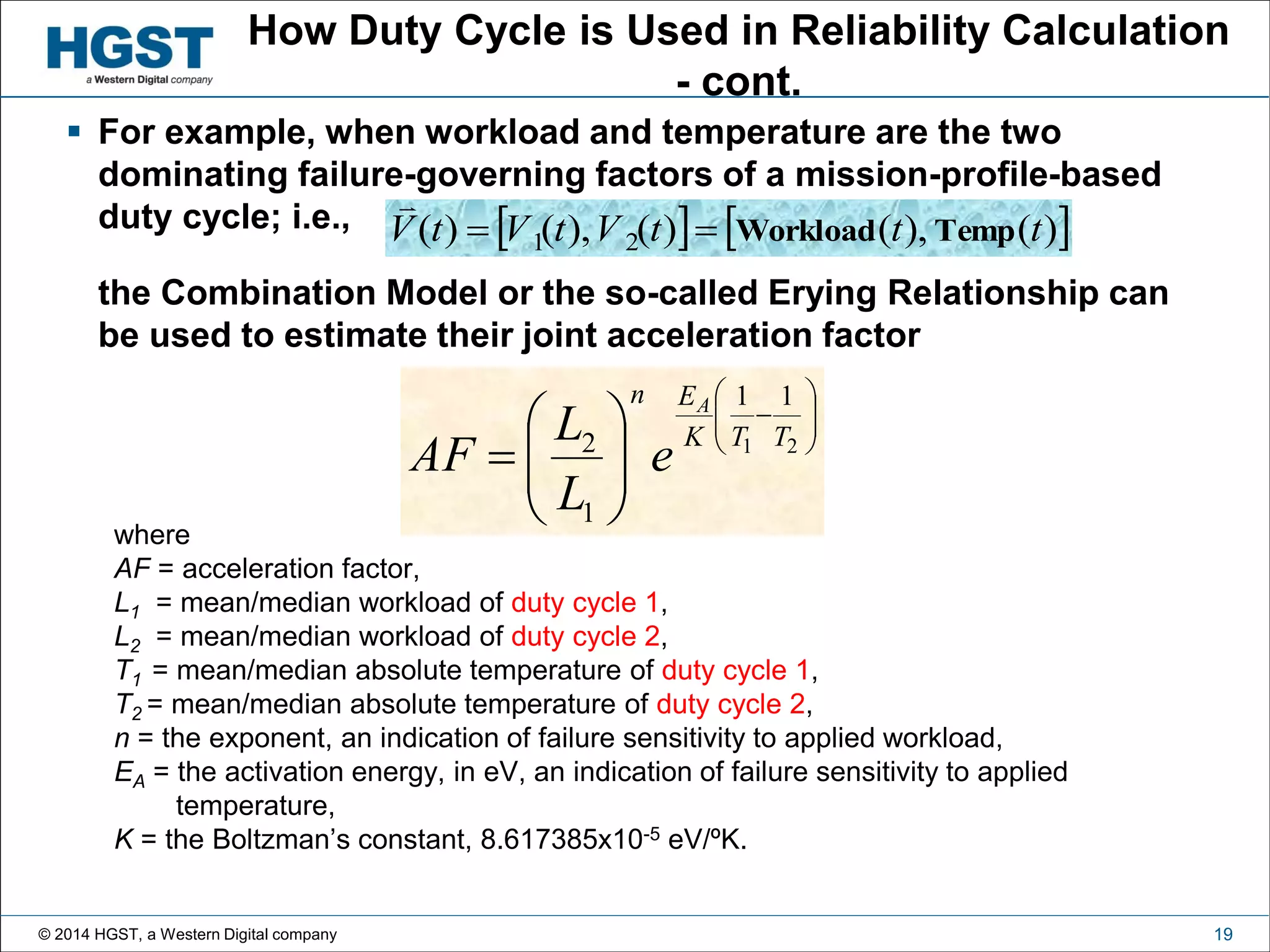

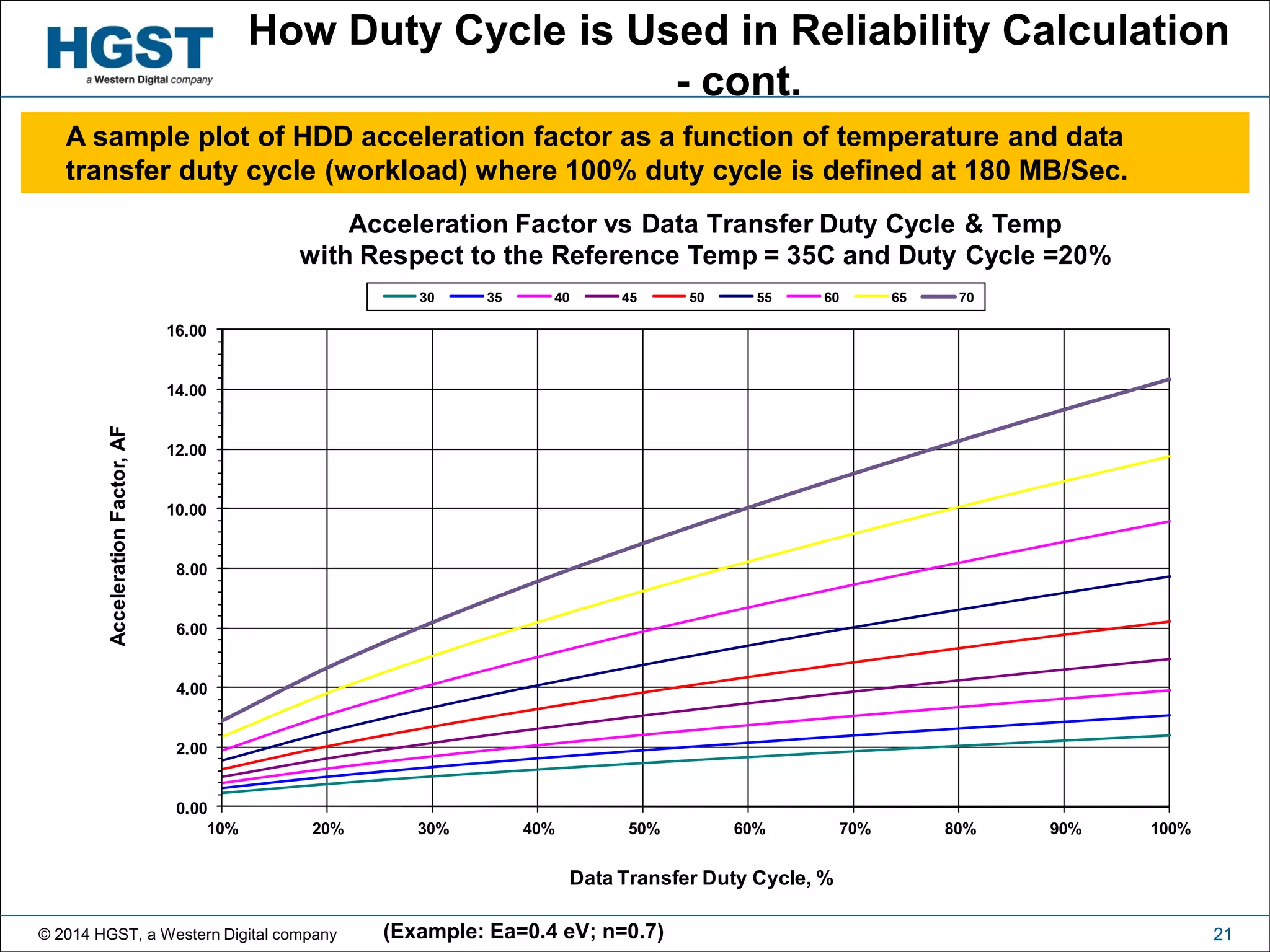

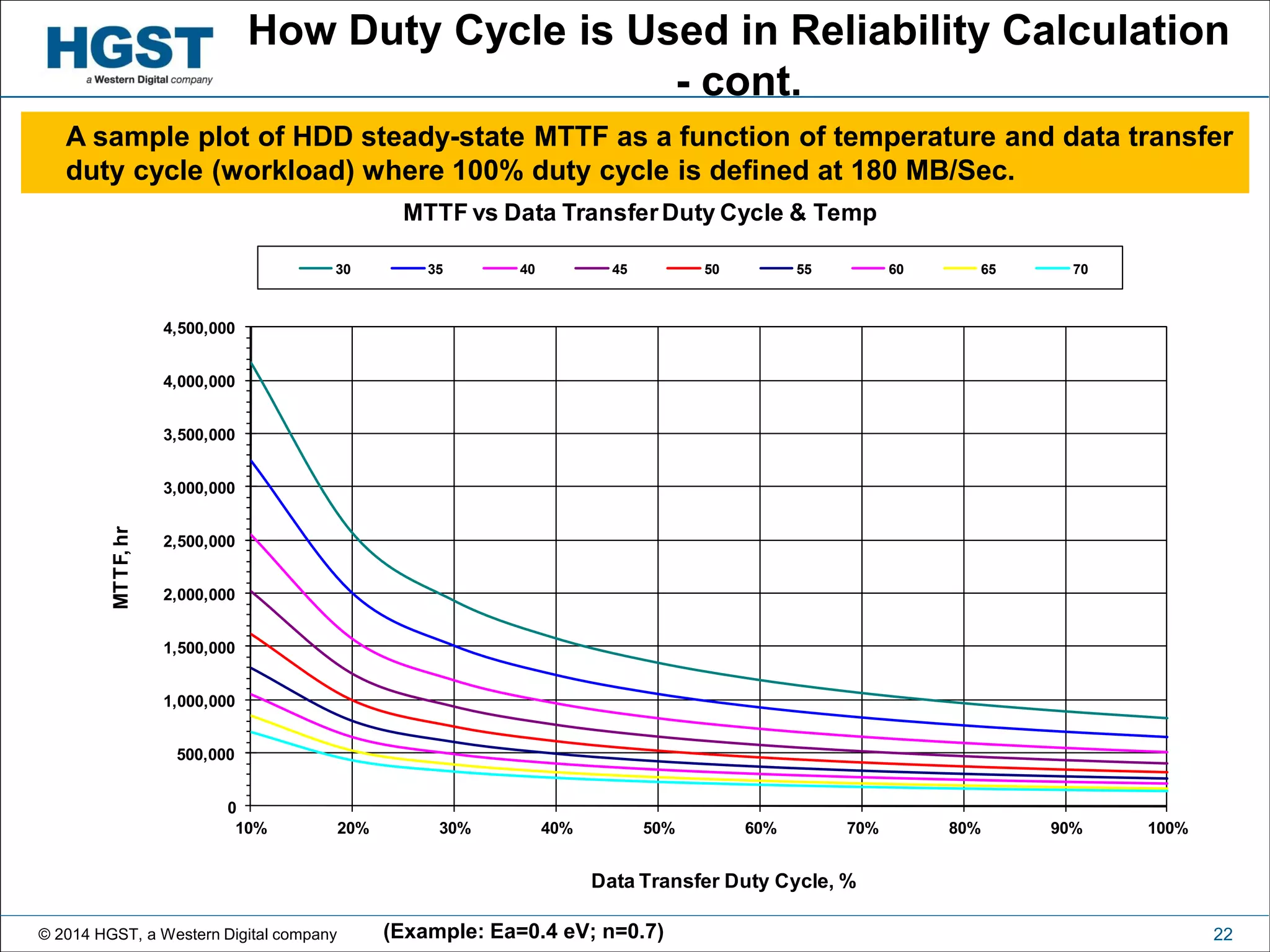

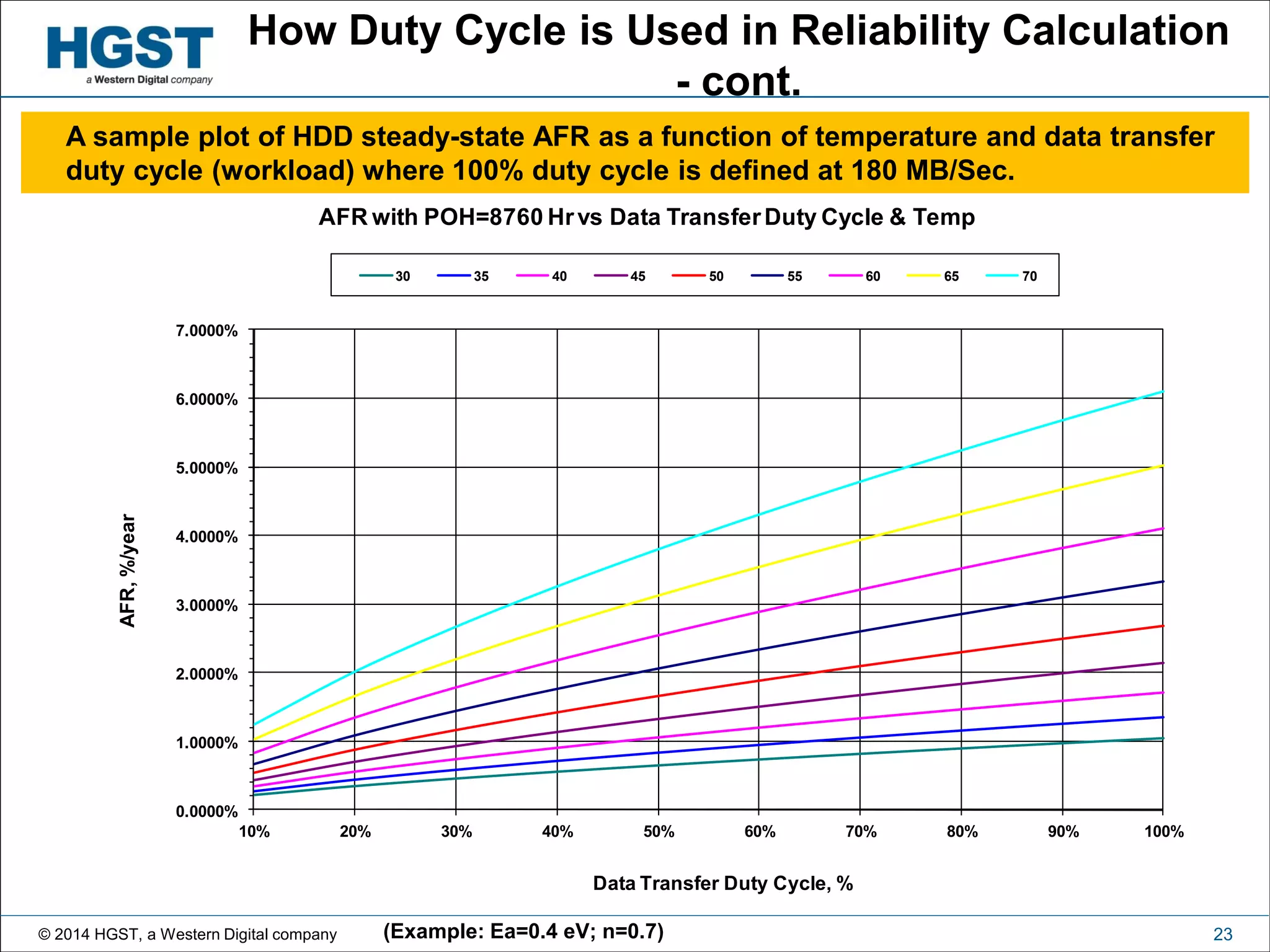

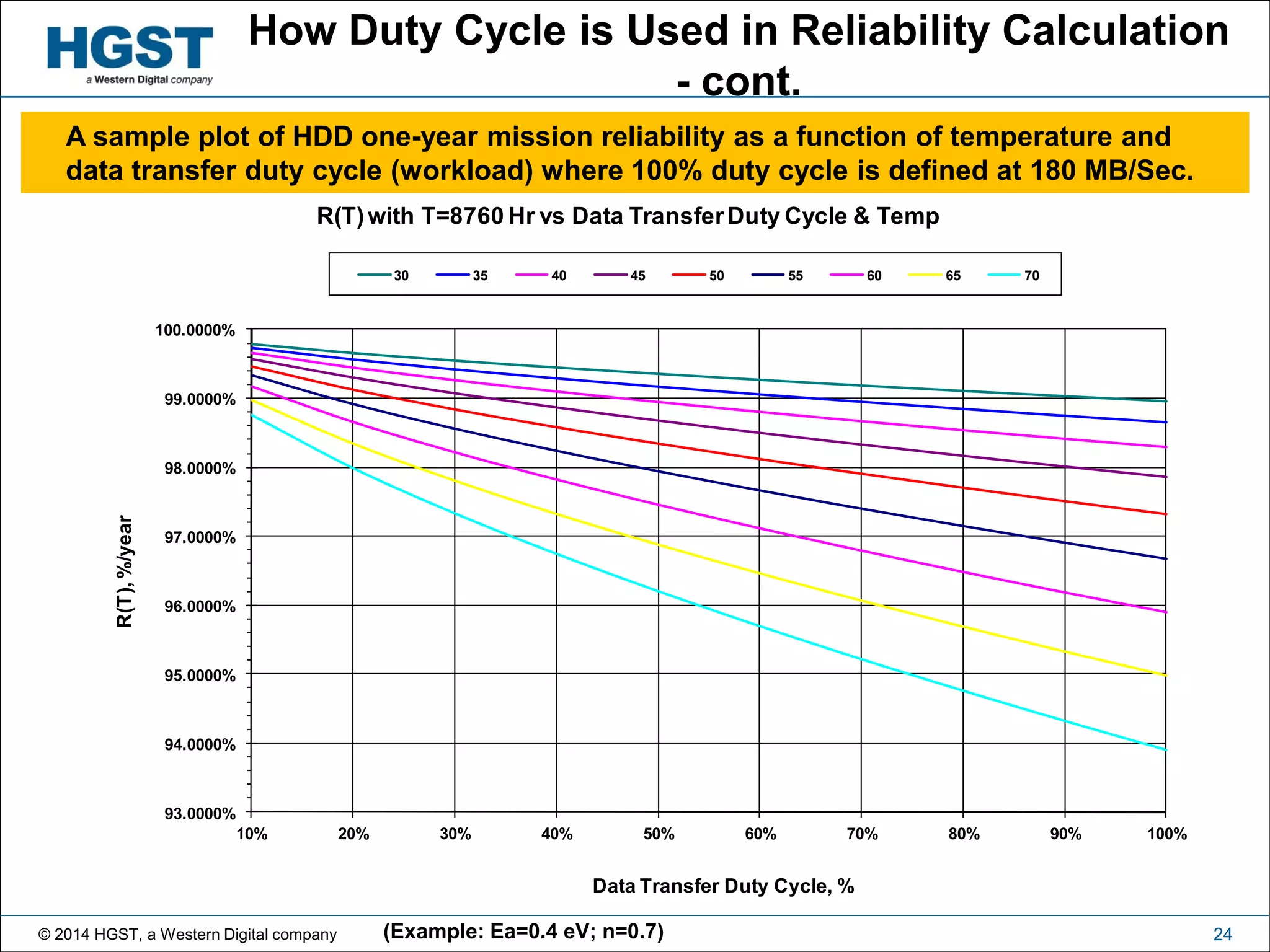

This document discusses duty cycle concepts in reliability engineering. It begins by defining two kinds of duty cycle: the time-based duty cycle, which is the proportion of time a system is active, and the stress-condition-based duty cycle, which accounts for the level of stress applied while the system operates. It then describes how duty cycle manifests across various industries and how it enters reliability calculations, where it affects mission time, failure mechanisms, and characteristic life. Hard disk drive examples illustrate the effect of duty cycle on acceleration factors and mean time to failure (MTTF).
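As a rough illustration of how a time-based duty cycle can enter a reliability calculation, the sketch below scales calendar time by the duty cycle to get operating time and feeds that into a Weibull reliability function. This is a minimal sketch under stated assumptions, not the method used in the document: it assumes that wear accumulates only while the system is active (no degradation while idle), and the function names, the Weibull model choice, and all numeric values are hypothetical and chosen for illustration only.

```python
import math


def effective_operating_hours(calendar_hours: float, duty_cycle: float) -> float:
    """Operating time accumulated over a calendar period.

    Assumption: duty_cycle is the time-based fraction (0 to 1) of the
    period during which the system is active.
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return calendar_hours * duty_cycle


def weibull_reliability(operating_hours: float, eta: float, beta: float = 1.0) -> float:
    """Weibull reliability R(t) = exp(-(t / eta) ** beta).

    eta is the characteristic life in operating hours and beta is the
    shape parameter; beta = 1 reduces to the exponential model.
    """
    return math.exp(-((operating_hours / eta) ** beta))


def reliability_with_duty_cycle(
    calendar_hours: float, duty_cycle: float, eta: float, beta: float = 1.0
) -> float:
    """Reliability over a calendar mission, counting only active time.

    Assumption: no failure mechanism progresses while the system is idle.
    """
    t_op = effective_operating_hours(calendar_hours, duty_cycle)
    return weibull_reliability(t_op, eta, beta)


def calendar_mttf(operating_mttf: float, duty_cycle: float) -> float:
    """Convert an MTTF stated in operating hours to calendar hours.

    Valid only under the same idle-time assumption as above.
    """
    if not 0.0 < duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be greater than 0 and at most 1")
    return operating_mttf / duty_cycle


if __name__ == "__main__":
    # Illustrative values only; not taken from the document.
    one_year = 8760.0
    print(effective_operating_hours(one_year, 0.25))  # 2190.0 operating hours
    print(reliability_with_duty_cycle(one_year, 0.25, eta=50_000.0, beta=1.2))
```

A stress-condition-based duty cycle would need more than this time scaling, for example an acceleration factor applied to the time spent at each stress level, which the sketch deliberately leaves out.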