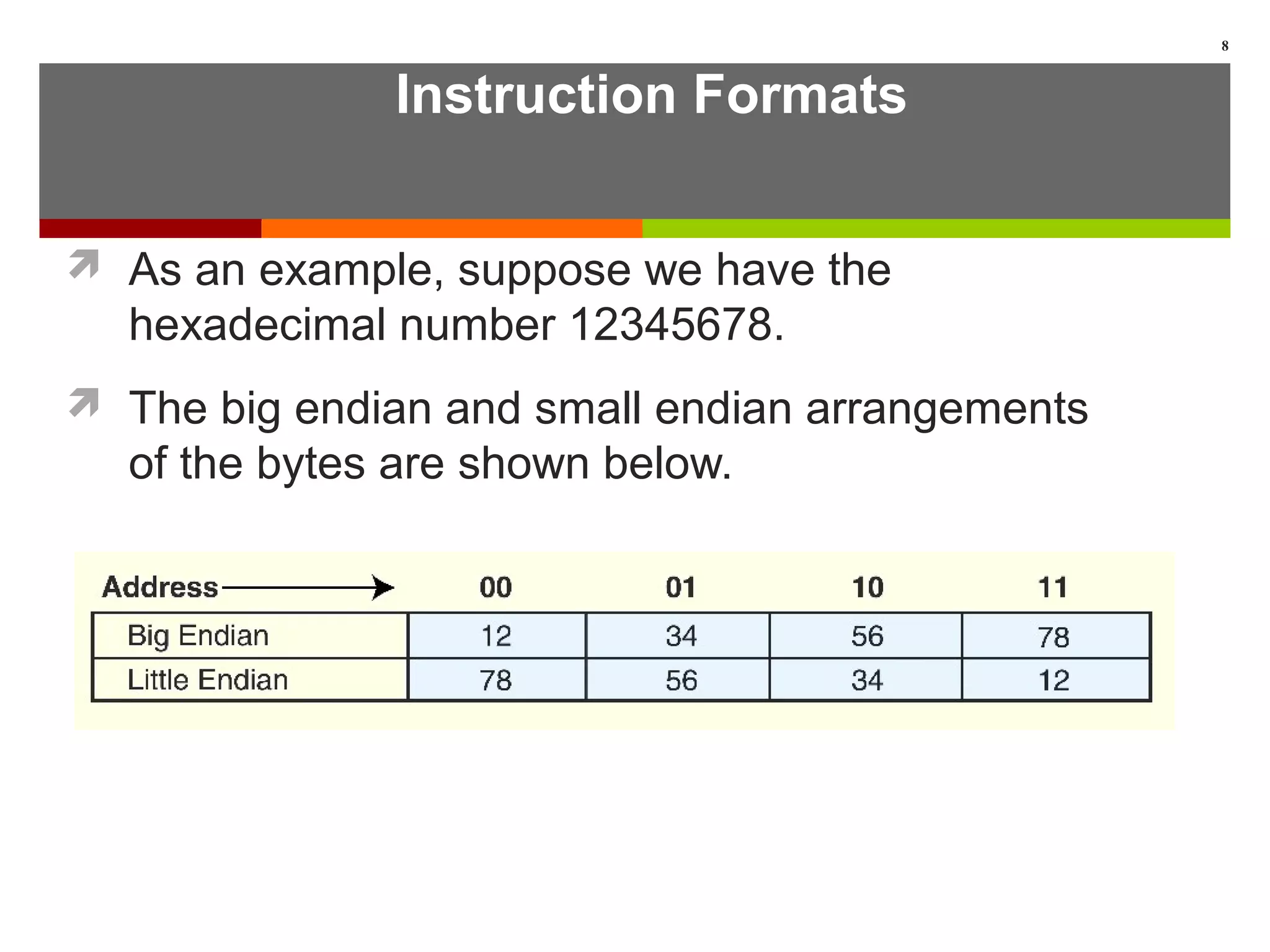

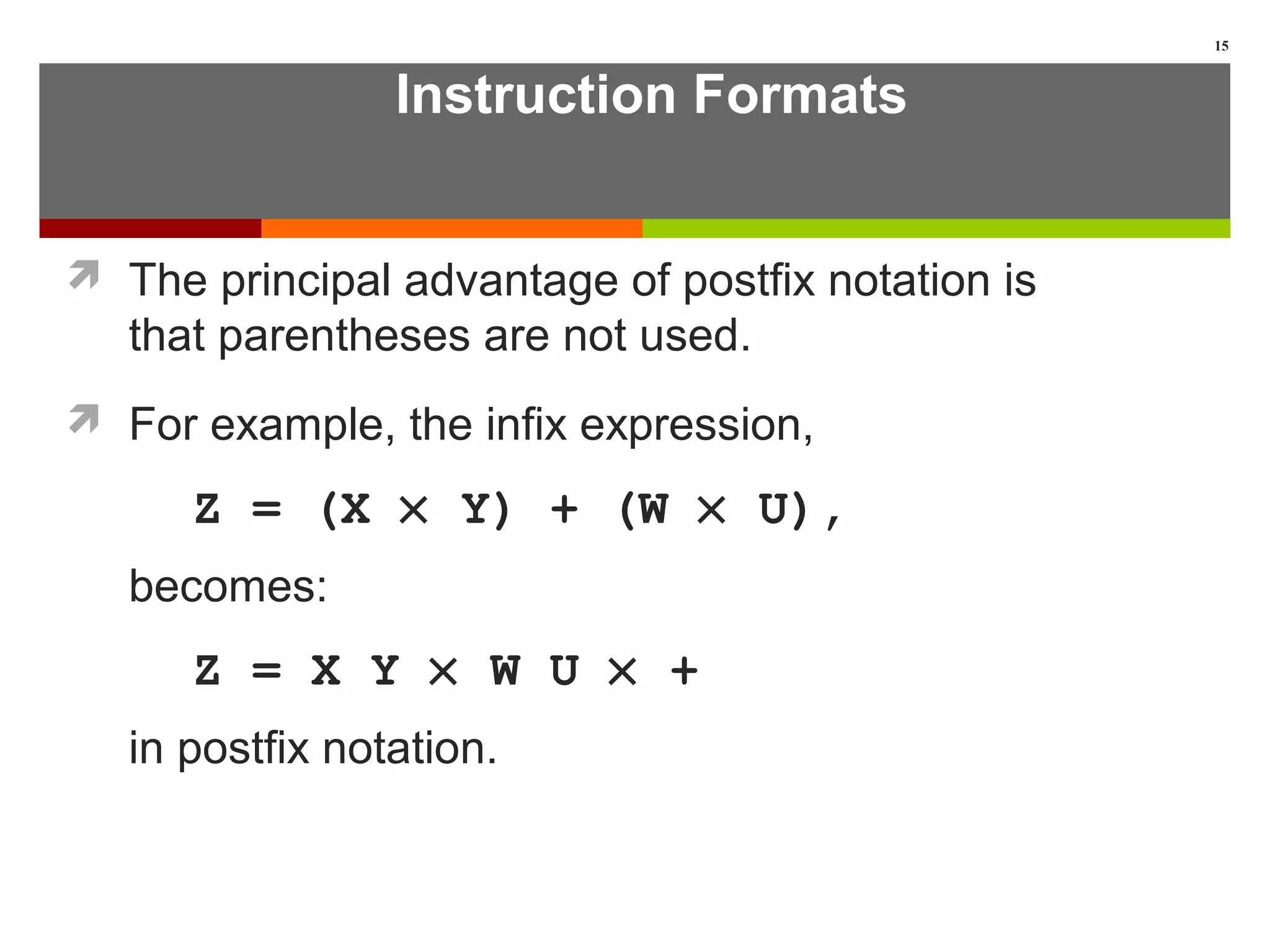

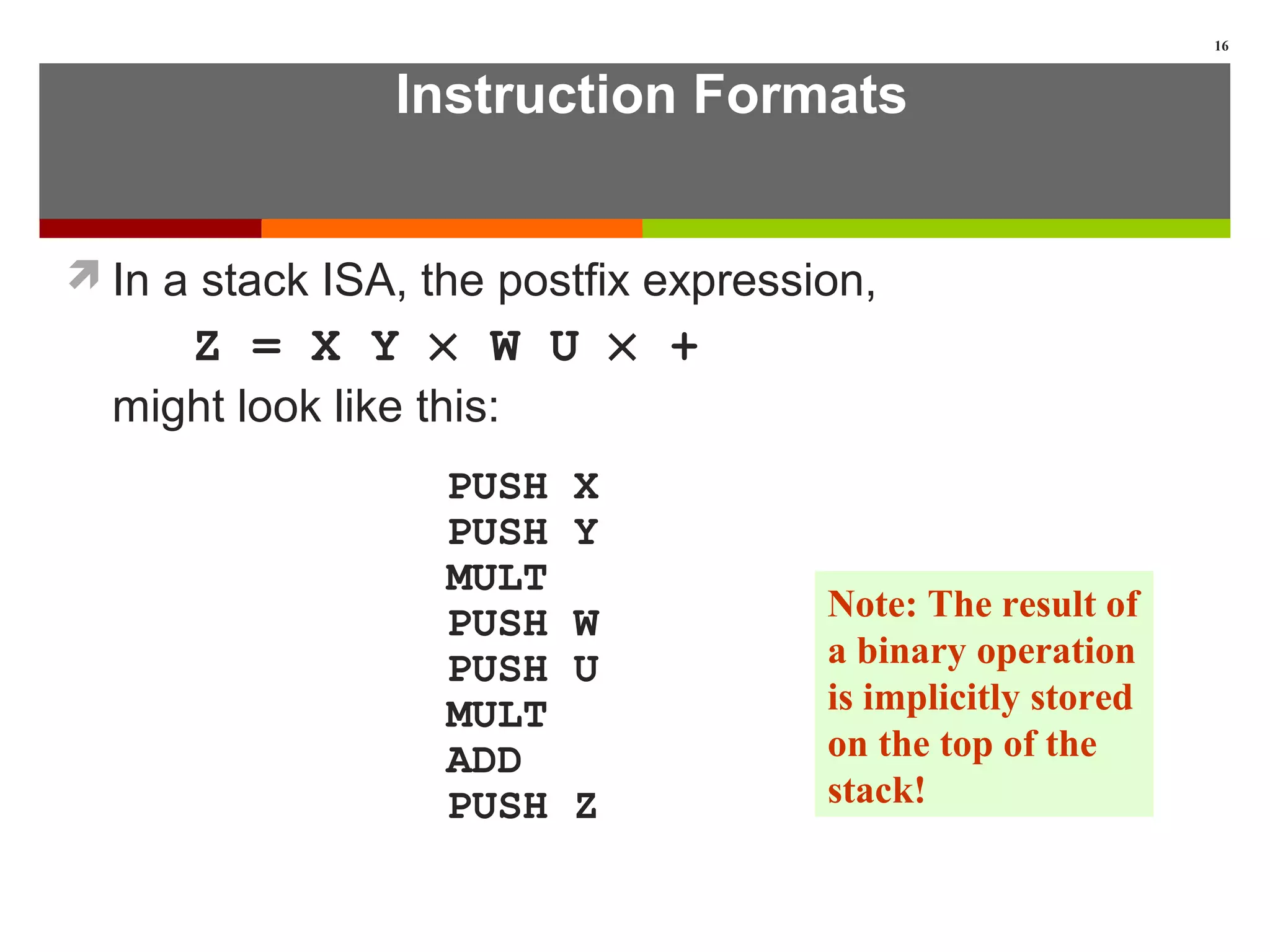

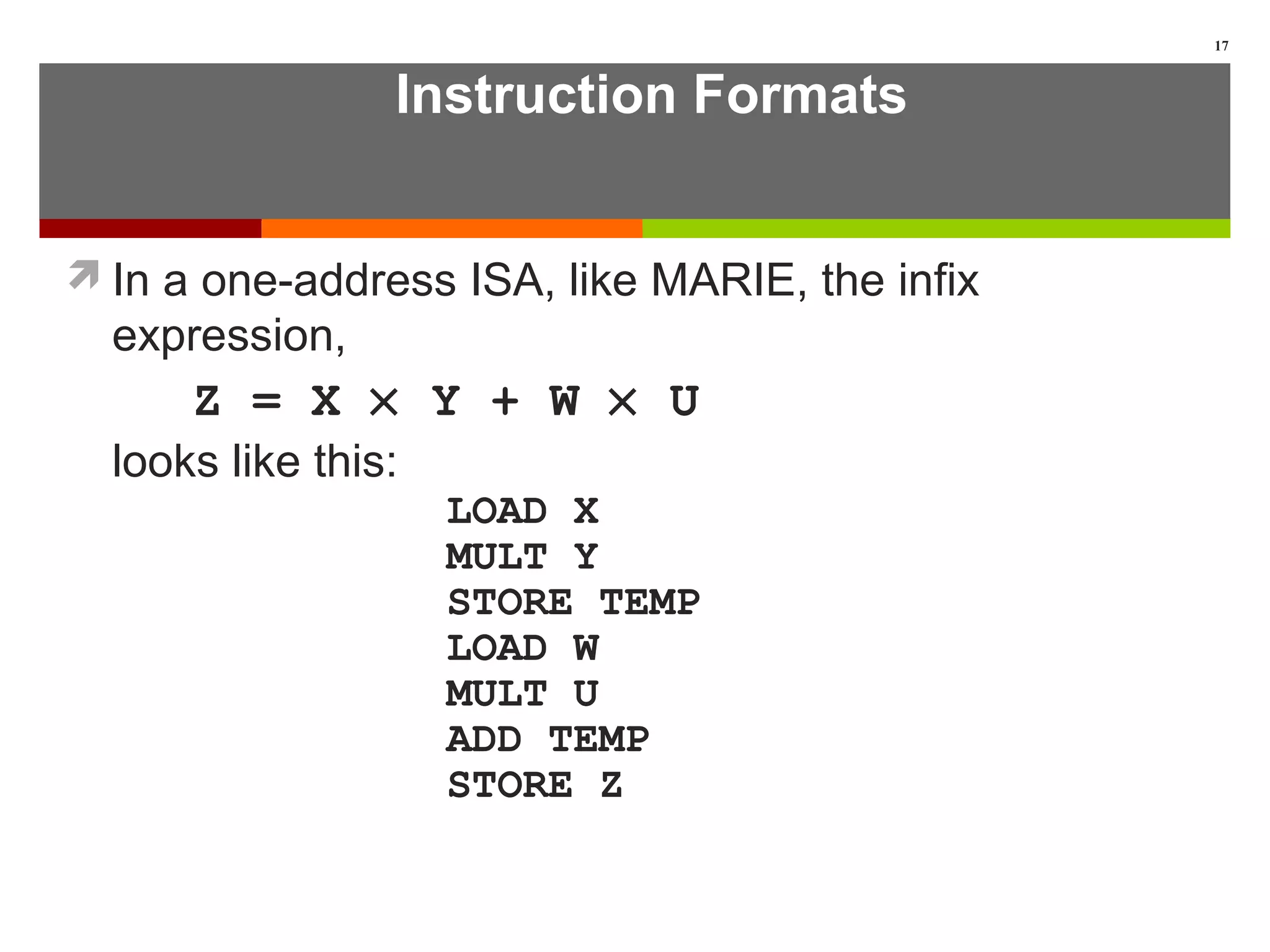

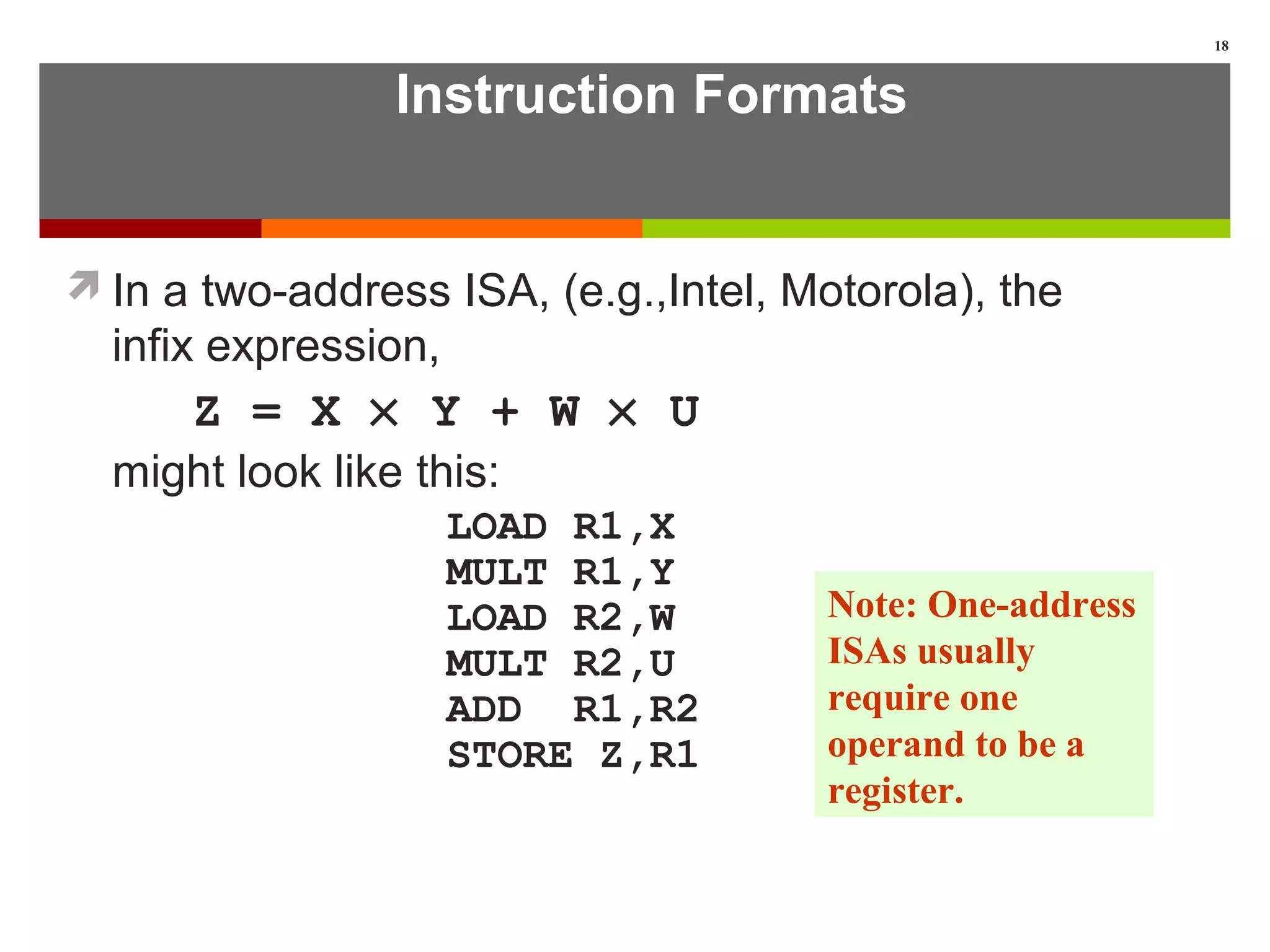

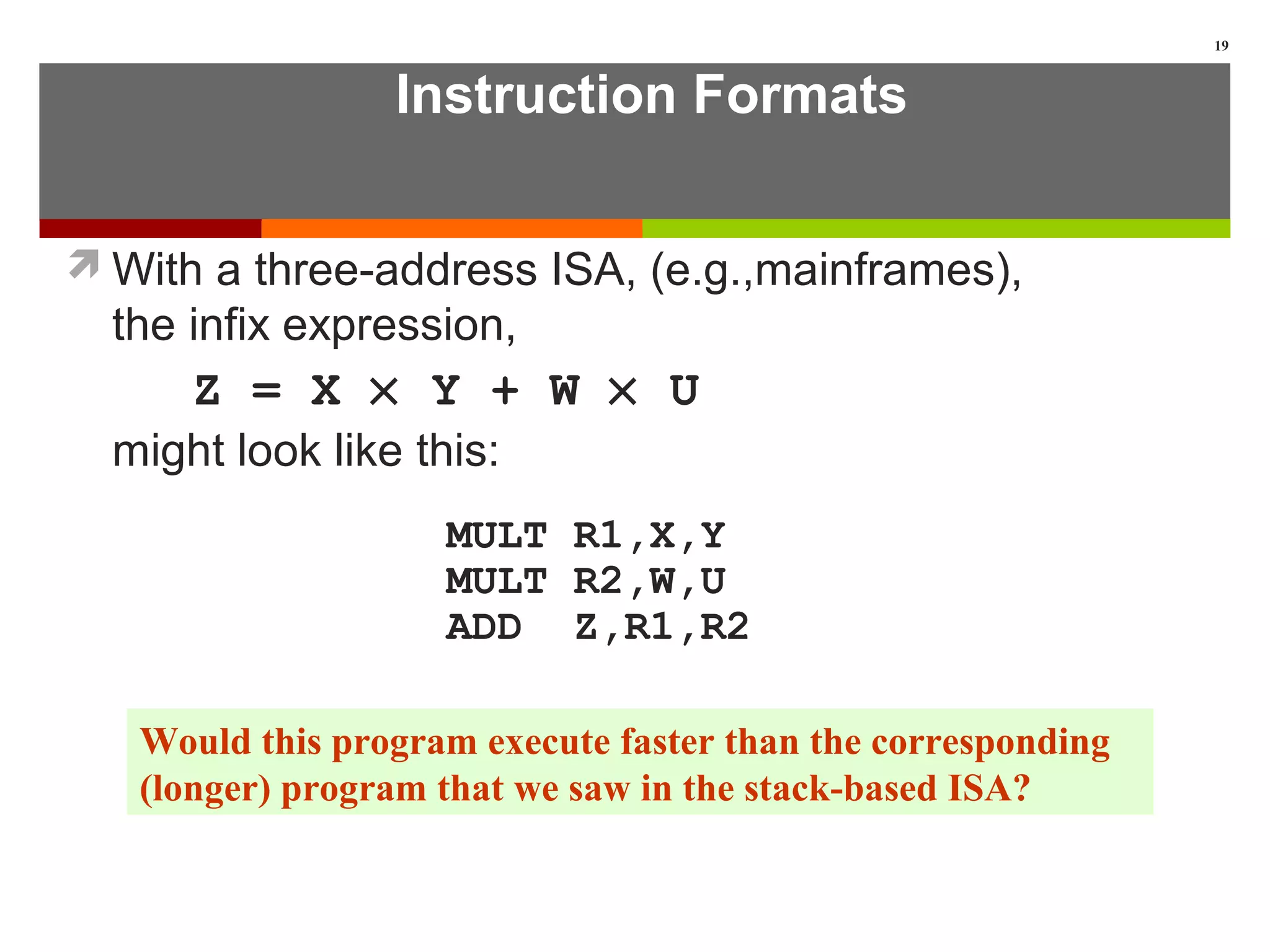

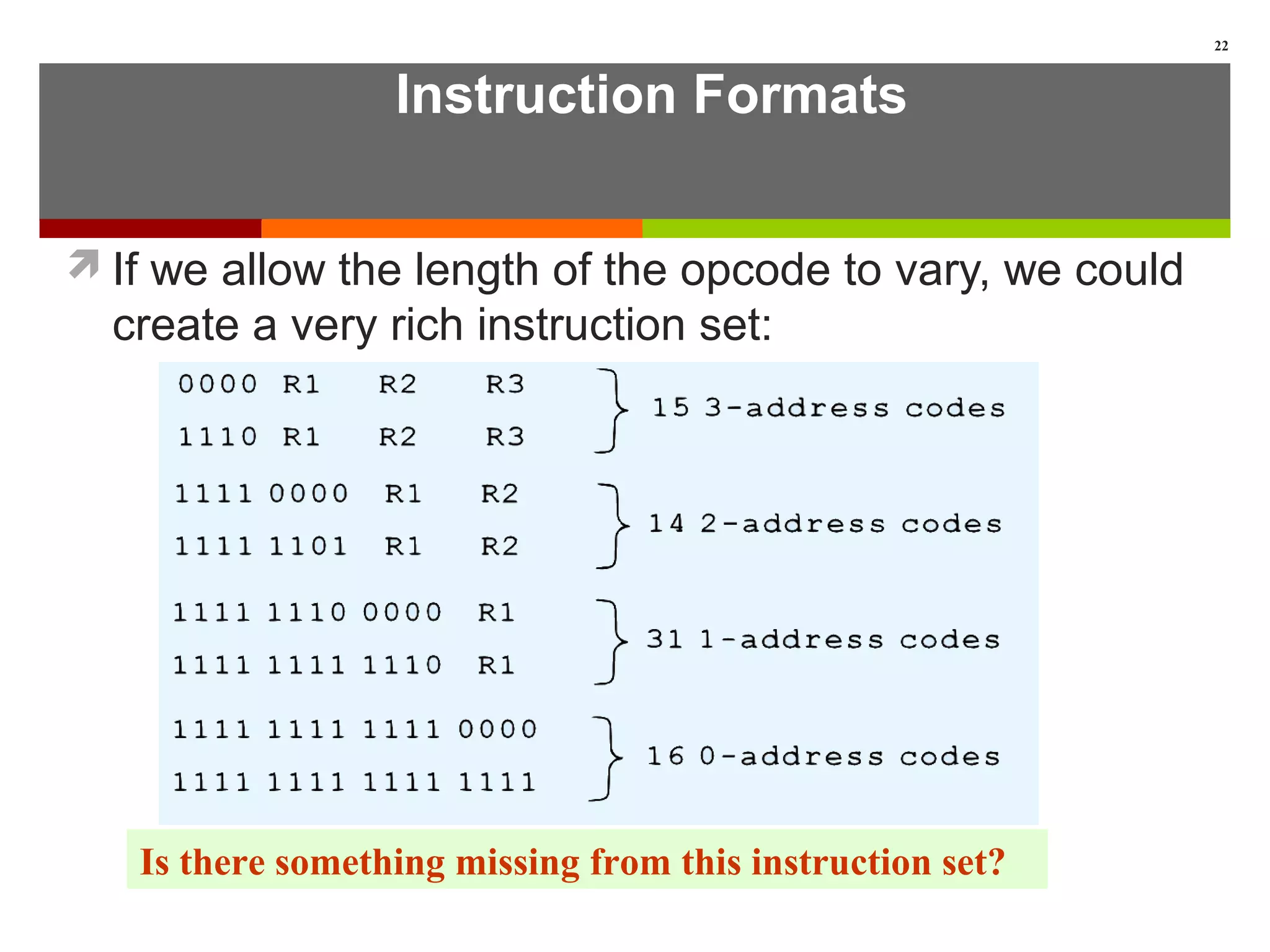

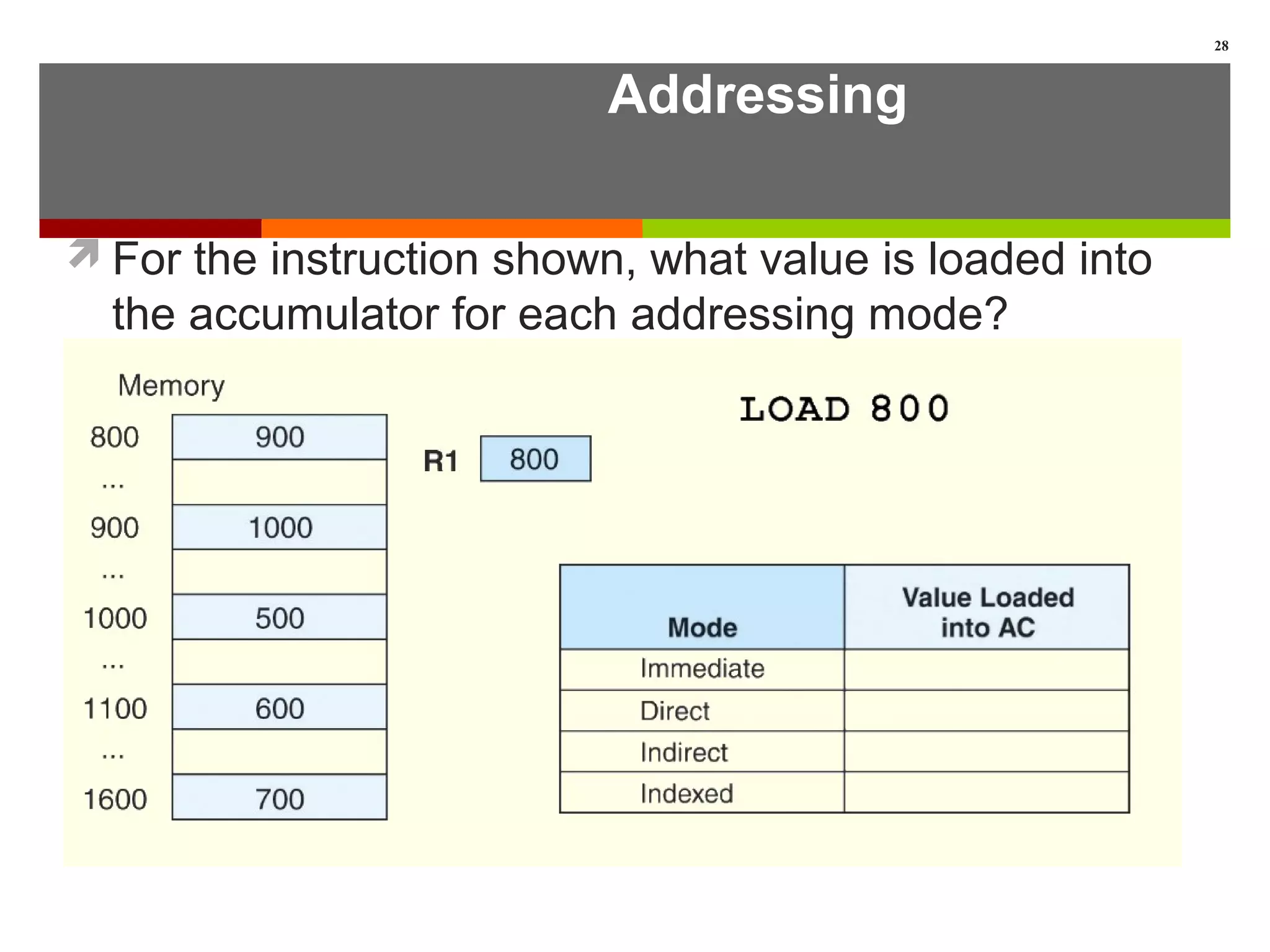

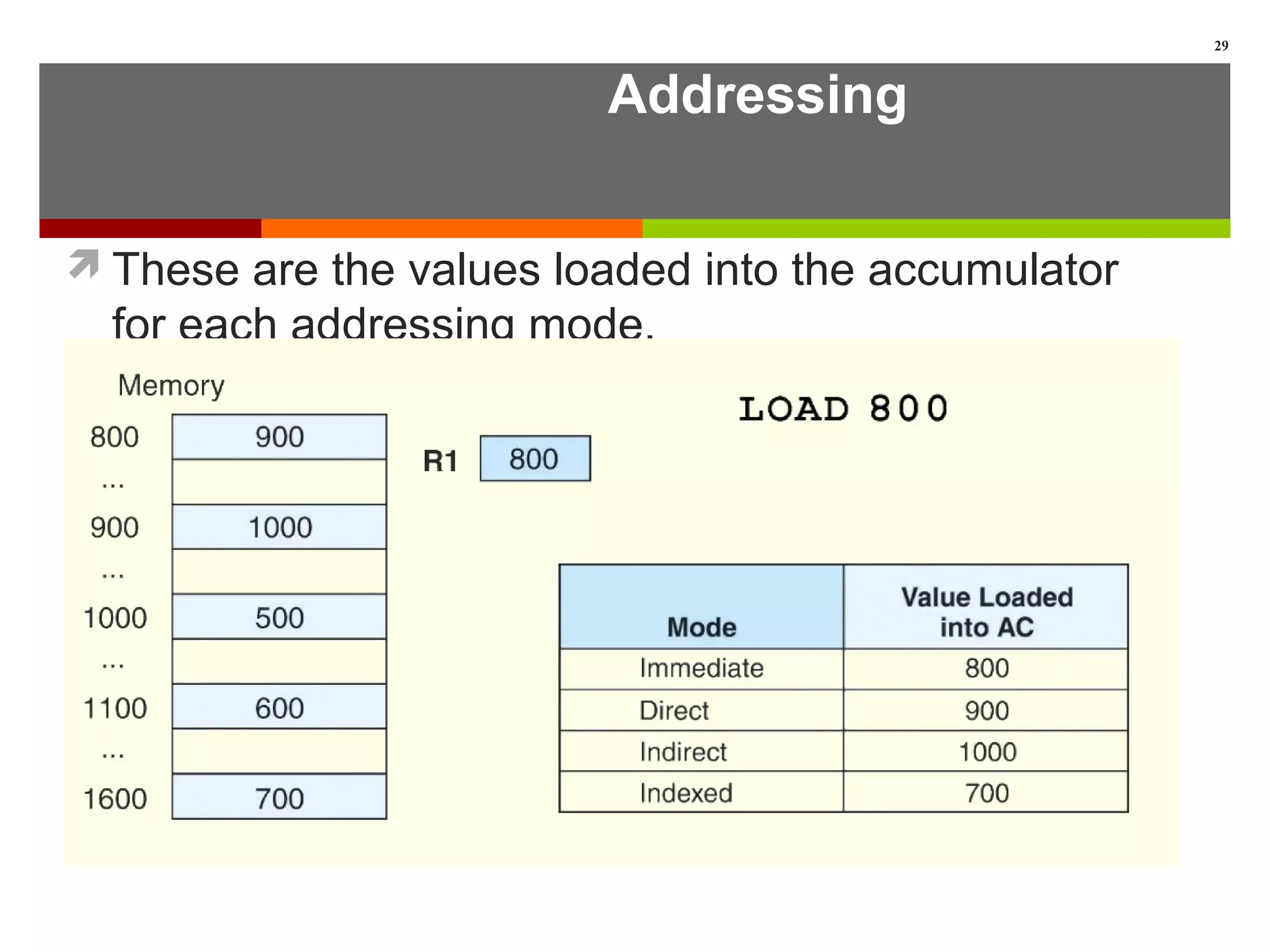

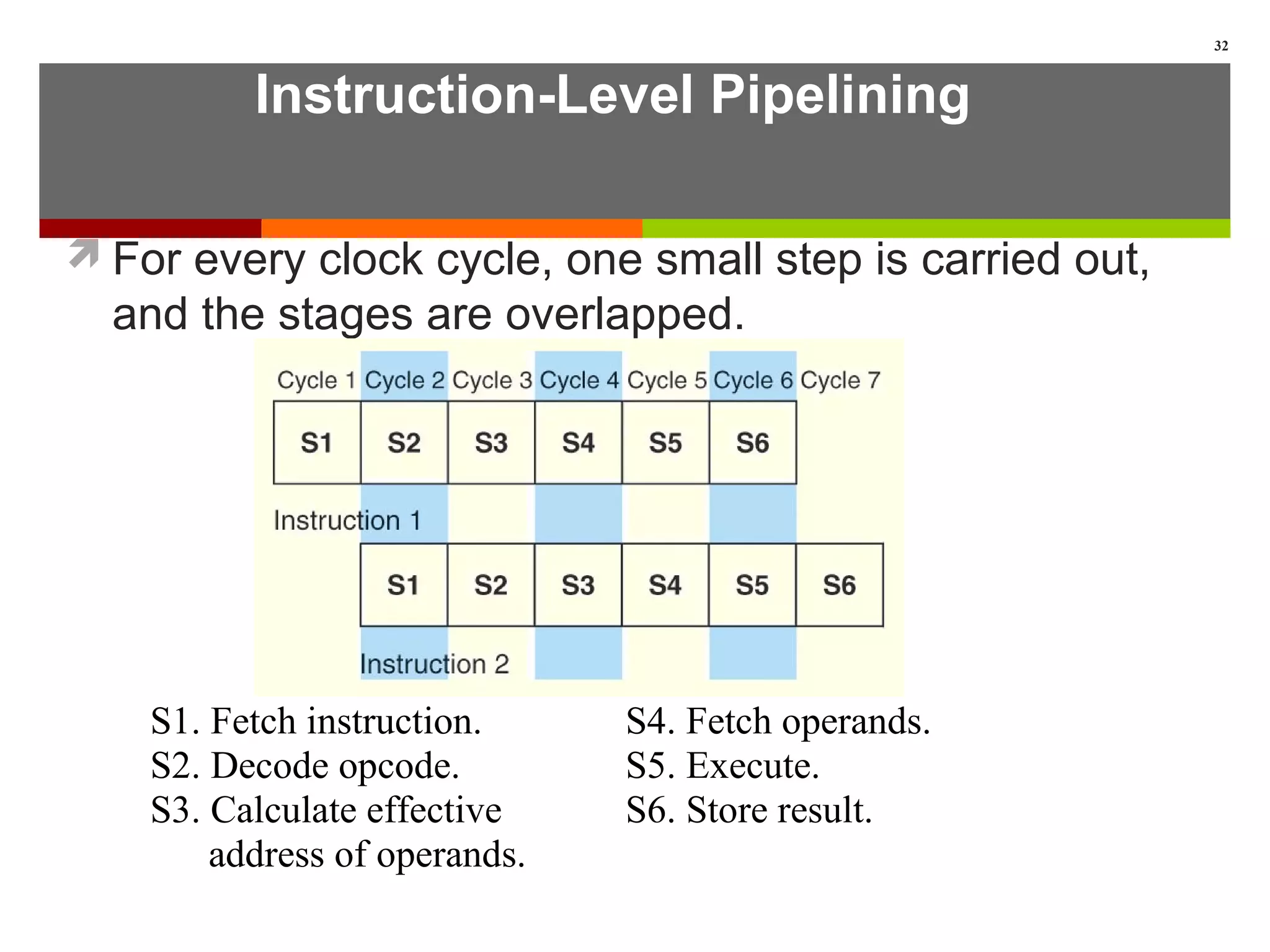

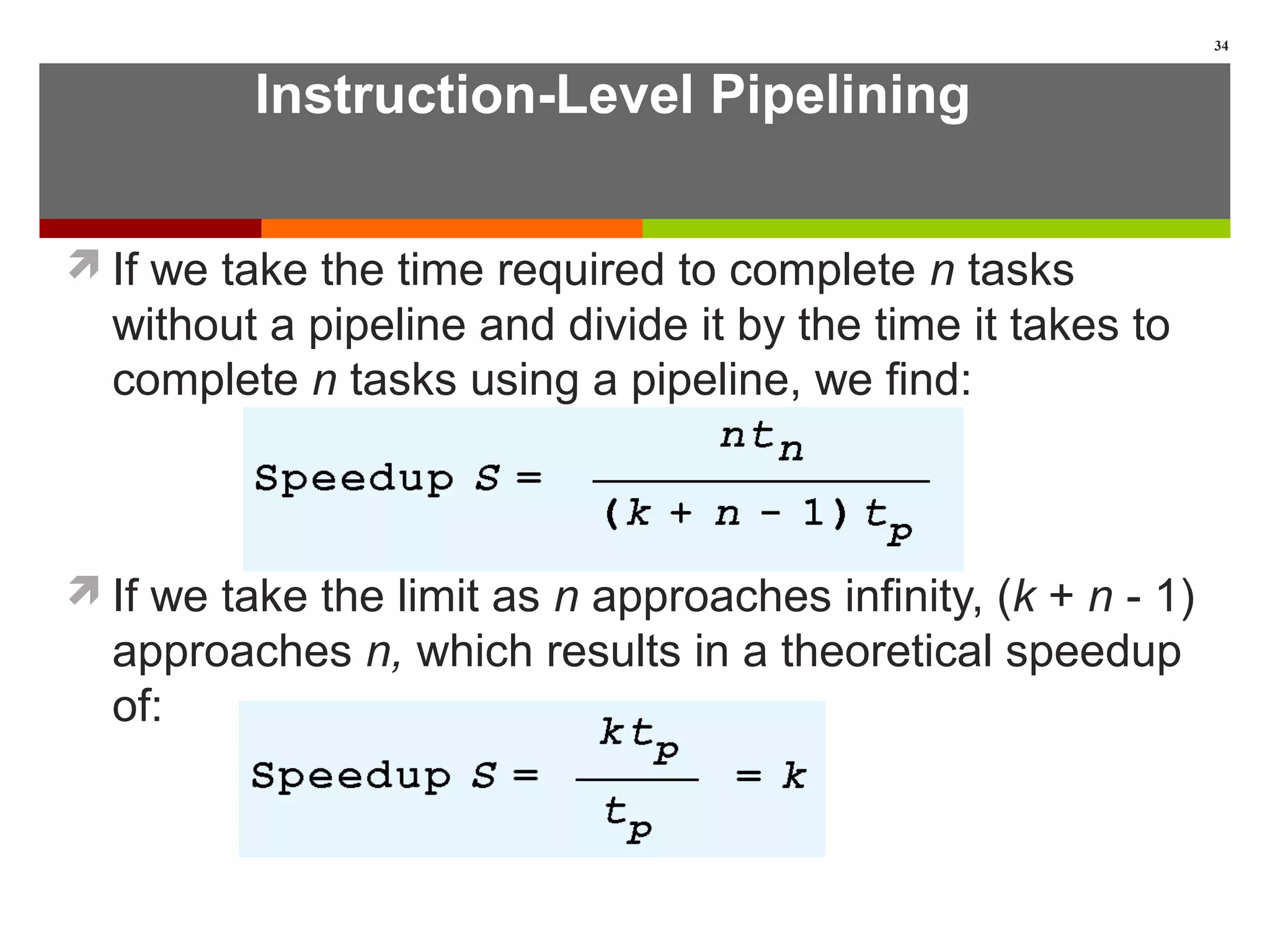

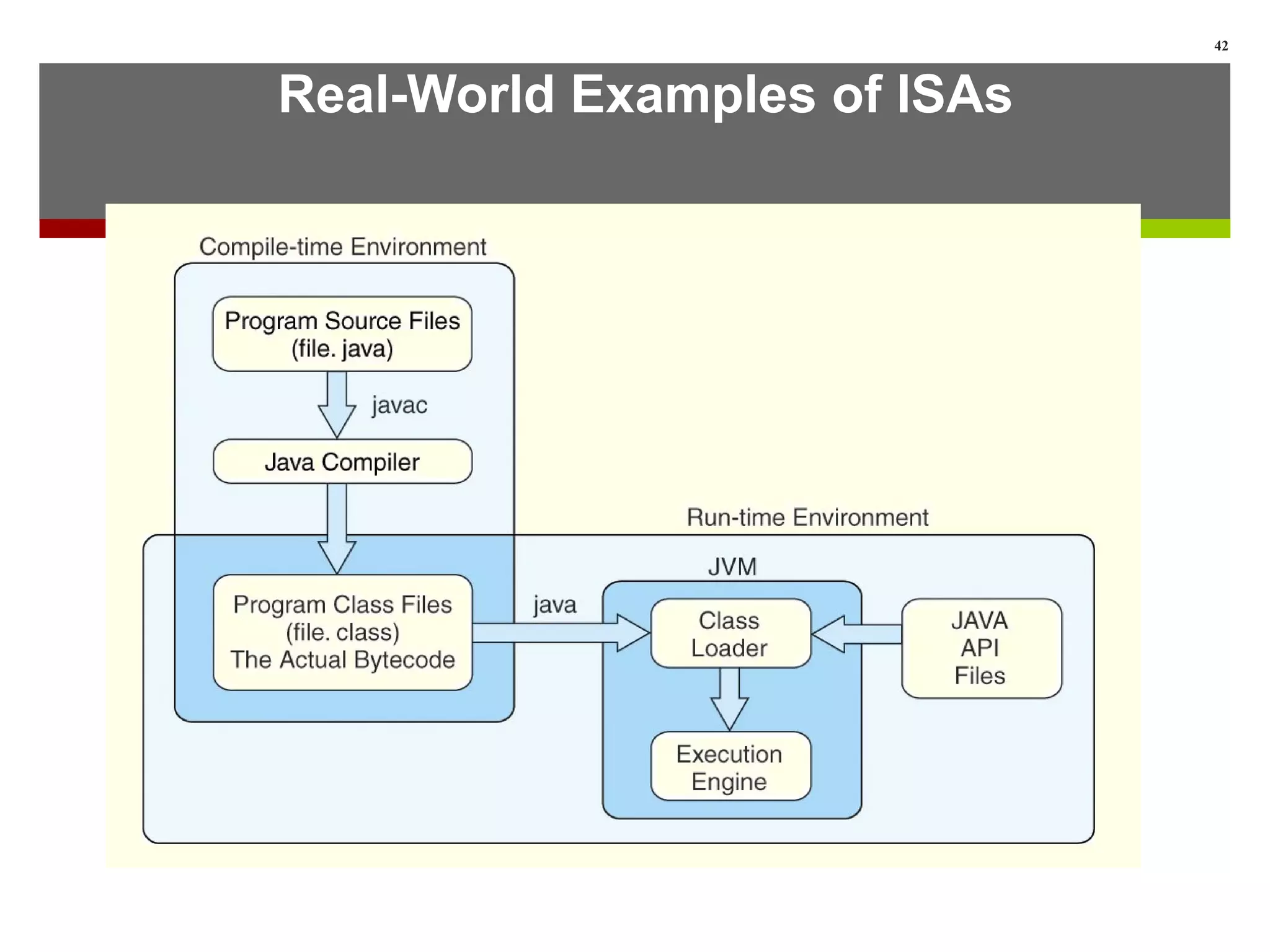

This lecture takes a detailed look at instruction set architectures (ISAs). It discusses instruction formats, including the number of operands and the locations and types of those operands. It also covers addressing modes such as immediate, direct, register, indirect, and indexed. Additionally, it compares different approaches to storing data in the CPU: stack, accumulator, and general-purpose register architectures. The lecture concludes with instruction-level pipelining and example ISAs, namely Intel, MIPS, and the Java Virtual Machine.
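
As a rough illustration of the addressing modes listed above, the following sketch models how an operand might be fetched under each mode. It assumes a hypothetical toy machine: the `memory` list, the `registers` dict, the index register name `X`, and the `fetch_operand` helper are all invented for this example and do not correspond to any particular ISA from the lecture.

```python
# Hypothetical toy machine (an assumption for illustration, not a real ISA):
# memory is a list of words, registers is a dict keyed by register name.
memory = [0] * 16
memory[5] = 9    # address 5 holds the value 9
memory[9] = 42   # address 9 holds the value 42
registers = {"R1": 5, "X": 4}  # "X" plays the role of an index register


def fetch_operand(mode, field):
    """Return the operand value selected by an addressing mode and operand field."""
    if mode == "immediate":
        # The field is the operand itself.
        return field
    if mode == "direct":
        # The field is the memory address of the operand.
        return memory[field]
    if mode == "register":
        # The field names a register that holds the operand.
        return registers[field]
    if mode == "indirect":
        # The field is the address of a memory word that holds the operand's address.
        return memory[memory[field]]
    if mode == "indexed":
        # Effective address = field + contents of the index register.
        return memory[field + registers["X"]]
    raise ValueError(f"unknown addressing mode: {mode}")


if __name__ == "__main__":
    for mode, field in [("immediate", 5), ("direct", 5), ("register", "R1"),
                        ("indirect", 5), ("indexed", 5)]:
        print(mode, fetch_operand(mode, field))
```

With the same operand field of 5, each mode yields a different operand in this toy setup: the literal 5 (immediate), the word at address 5 (direct), or the word at address 9 reached either through a pointer (indirect) or by adding the index register (indexed).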