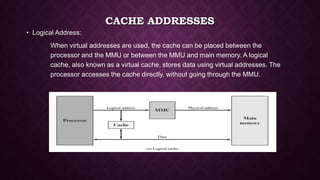

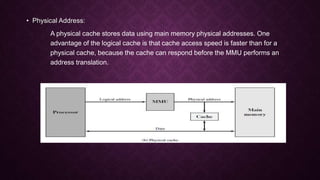

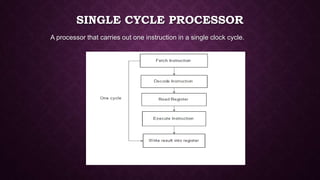

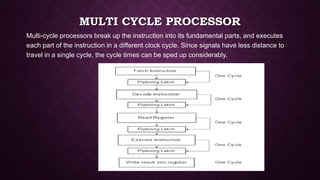

The document outlines the key elements of cache design: cache addresses, cache size, mapping function, replacement algorithm, write policy, line size, and the number of caches. It distinguishes single-cycle from multi-cycle processors: a single-cycle processor completes each instruction in one clock cycle, while a multi-cycle processor spreads each instruction over several clock cycles. It also discusses the direct, associative, and set-associative mapping techniques, as well as the advantages of unified versus split caches.
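
As a rough illustration of the three mapping techniques named in the summary, the sketch below splits a byte address into tag, set index, and offset fields. It is a minimal example under stated assumptions, not taken from the slides: the function name `split_address` and its parameters (`line_size`, `num_lines`, `ways`) are hypothetical, and the sizes used are arbitrary. Setting `ways=1` gives direct mapping, `ways=num_lines` gives fully associative mapping, and anything in between gives set-associative mapping.

```python
def split_address(addr, line_size, num_lines, ways=1):
    """Split a byte address into (tag, set_index, offset).

    Hypothetical helper for illustration only:
      line_size - bytes per cache line
      num_lines - total number of lines in the cache
      ways      - lines per set (1 = direct mapped,
                  num_lines = fully associative)
    """
    num_sets = num_lines // ways      # how many sets the cache is divided into
    offset = addr % line_size         # byte position within the line
    block = addr // line_size         # main-memory block number
    set_index = block % num_sets      # which set the block maps to
    tag = block // num_sets           # remaining bits identify the block
    return tag, set_index, offset


if __name__ == "__main__":
    addr = 0x1234
    # Assumed example geometry: 64 lines of 16 bytes each.
    print("direct:           ", split_address(addr, 16, 64, ways=1))
    print("4-way set assoc.: ", split_address(addr, 16, 64, ways=4))
    print("fully associative:", split_address(addr, 16, 64, ways=64))
```

With direct mapping each block has exactly one candidate line, so the index field is widest and the tag narrowest. As associativity grows, the set index shrinks and the tag grows, until in the fully associative case the whole block number serves as the tag.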