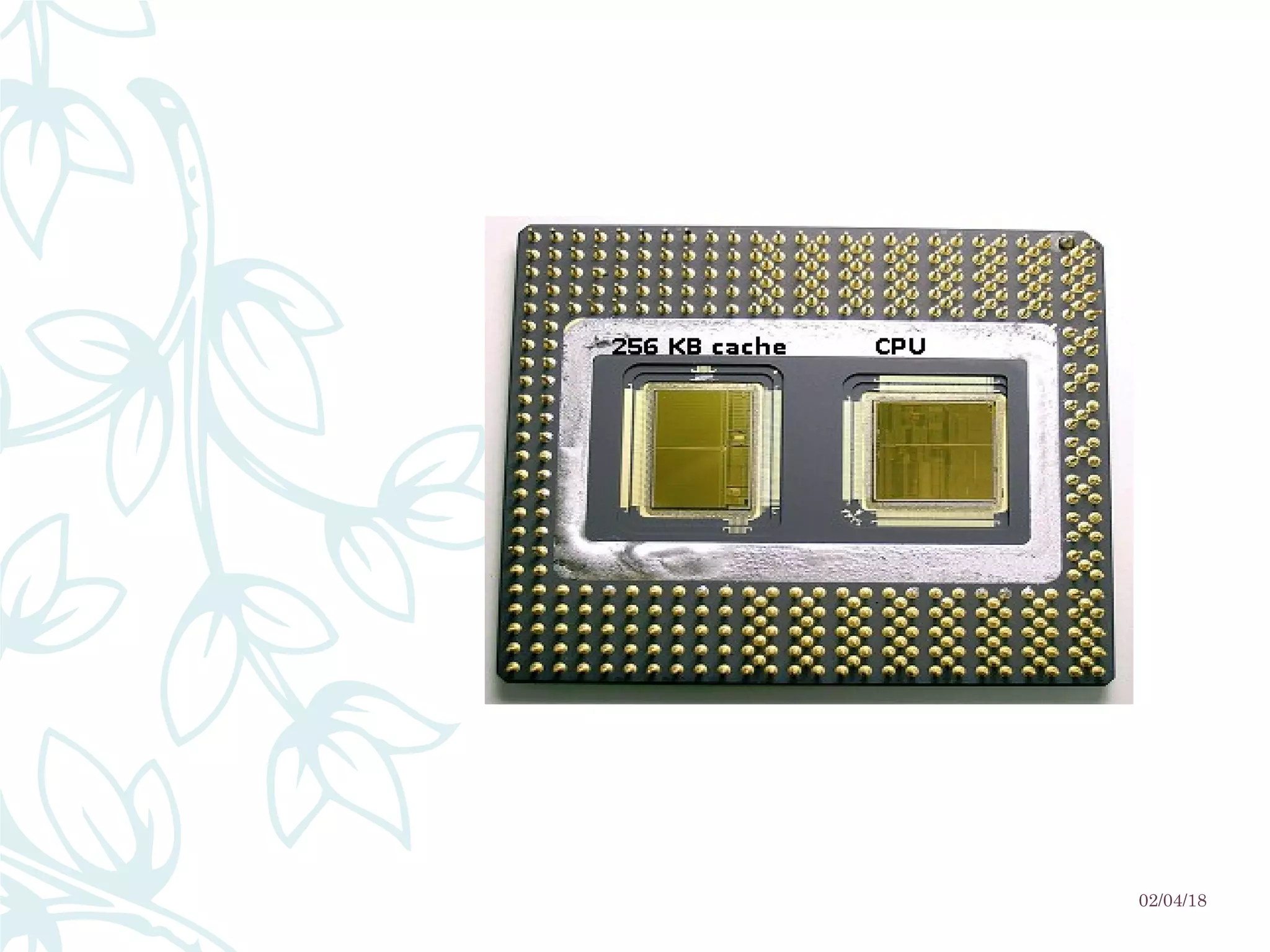

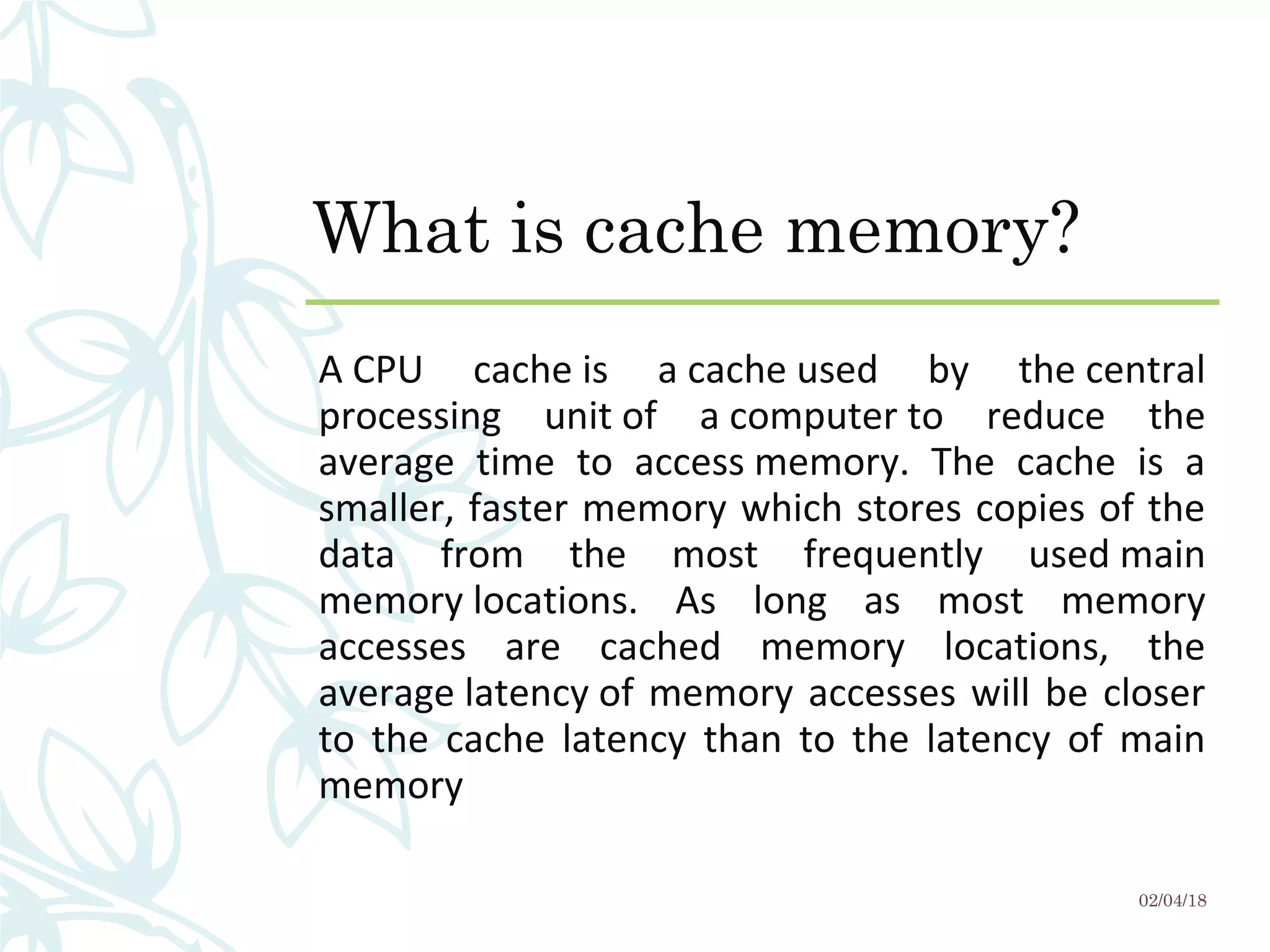

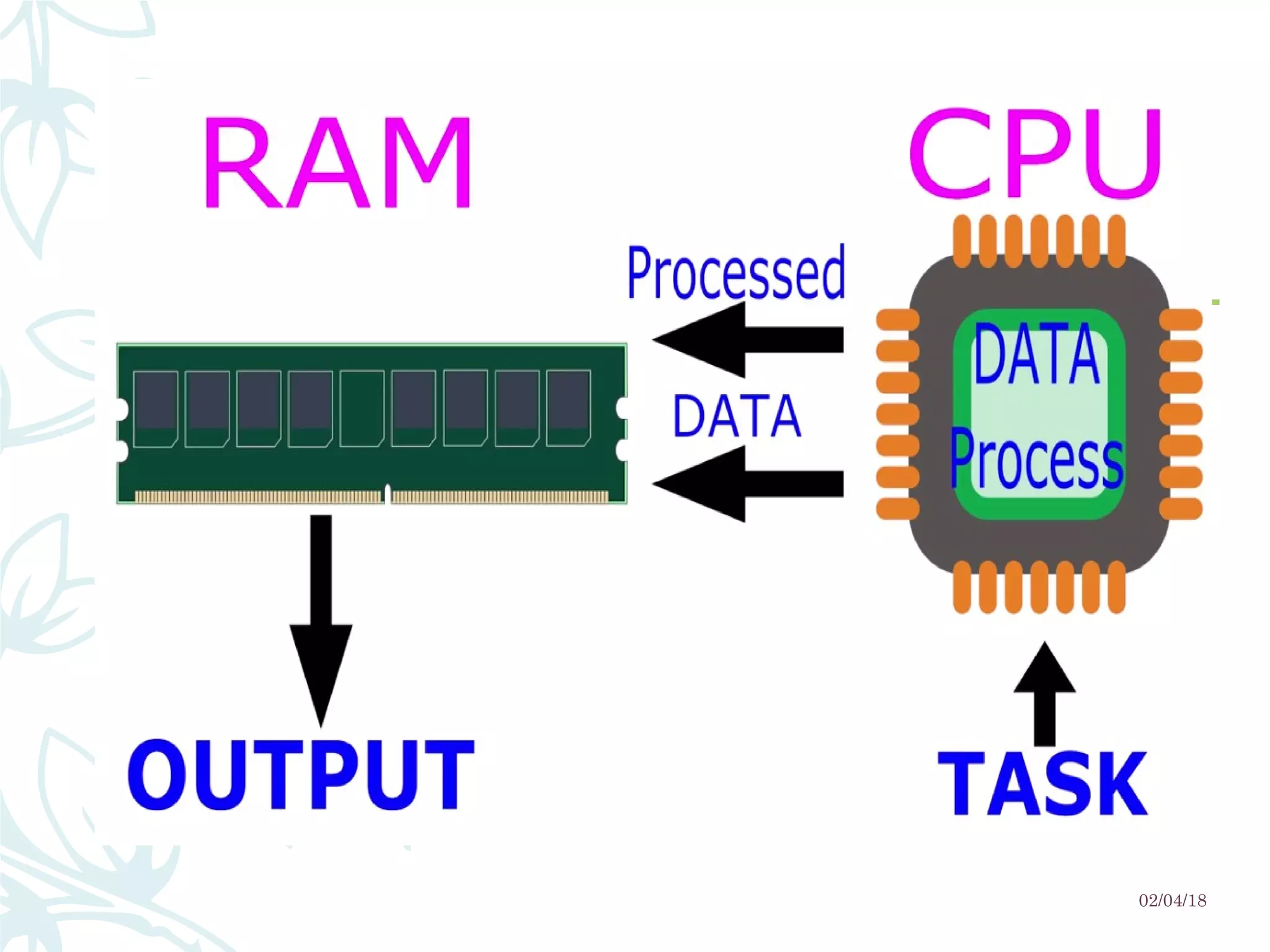

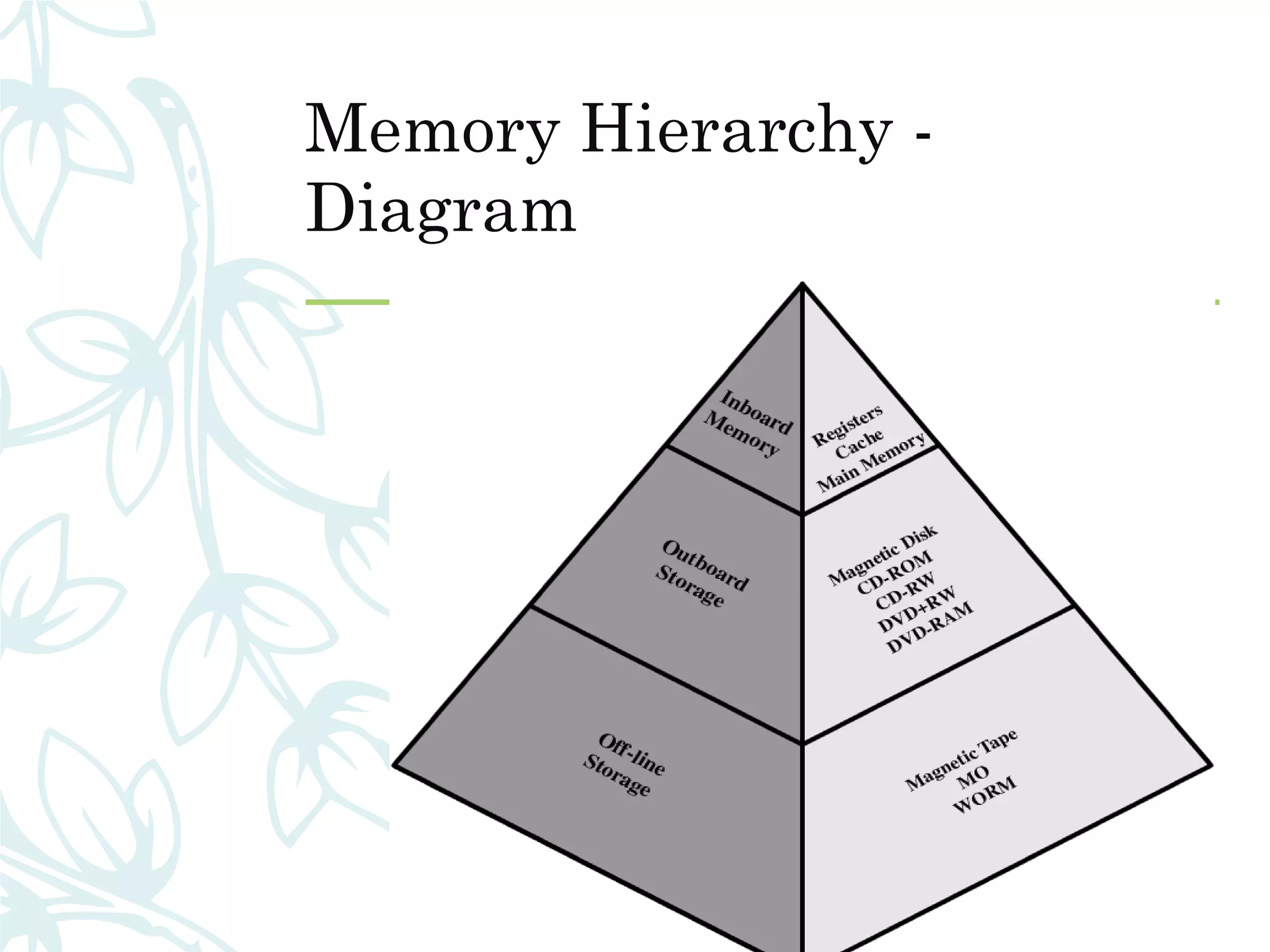

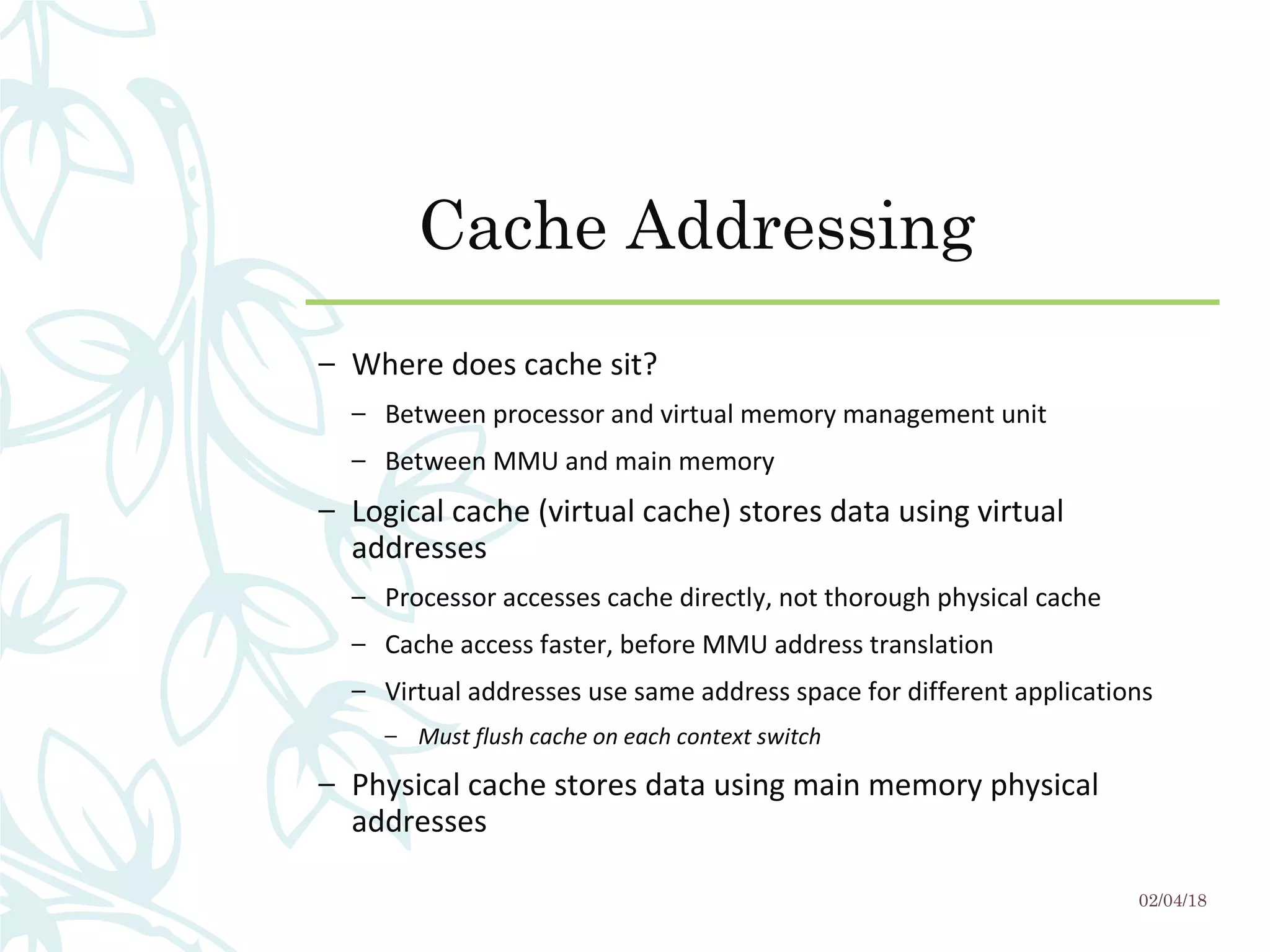

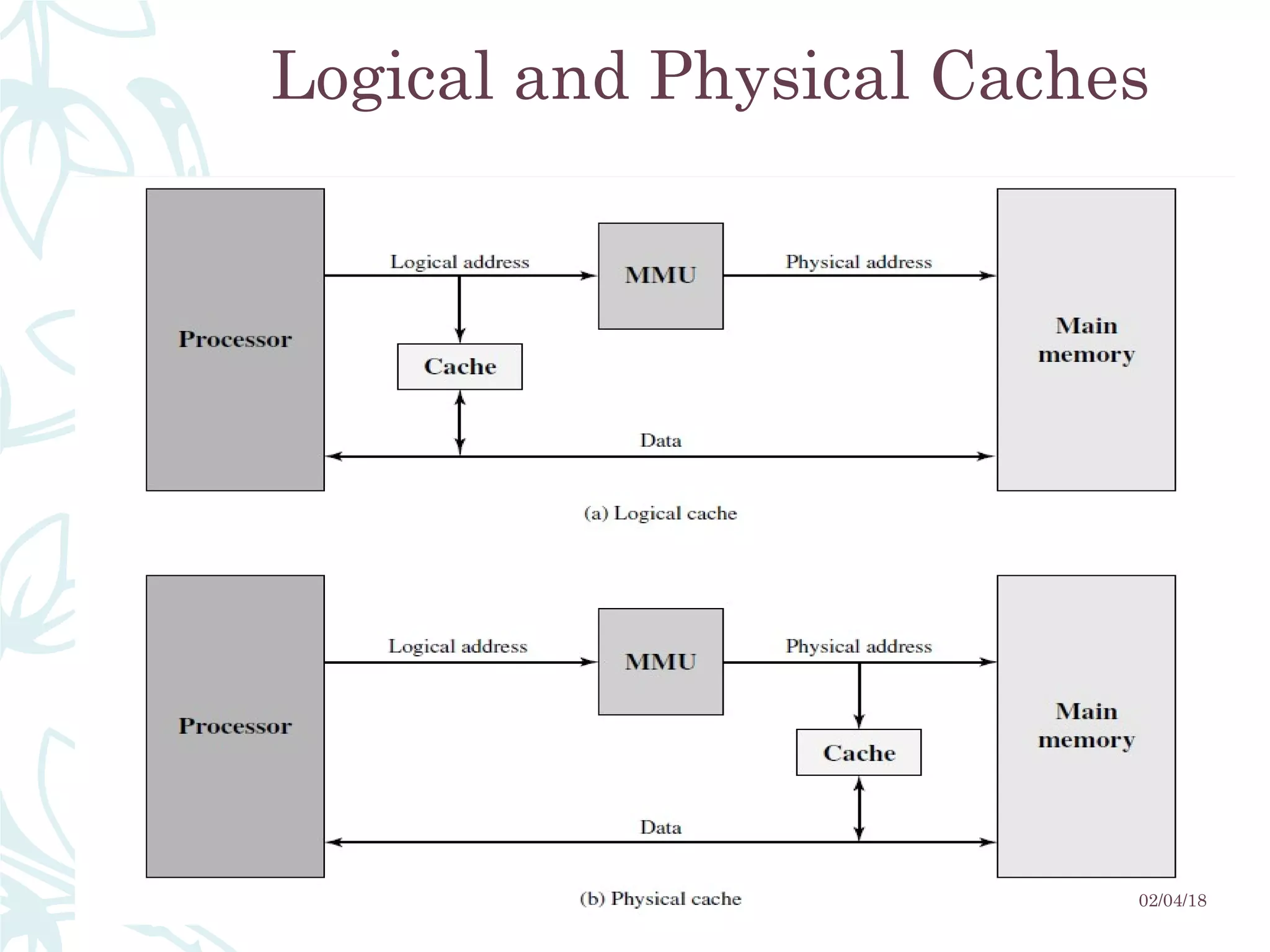

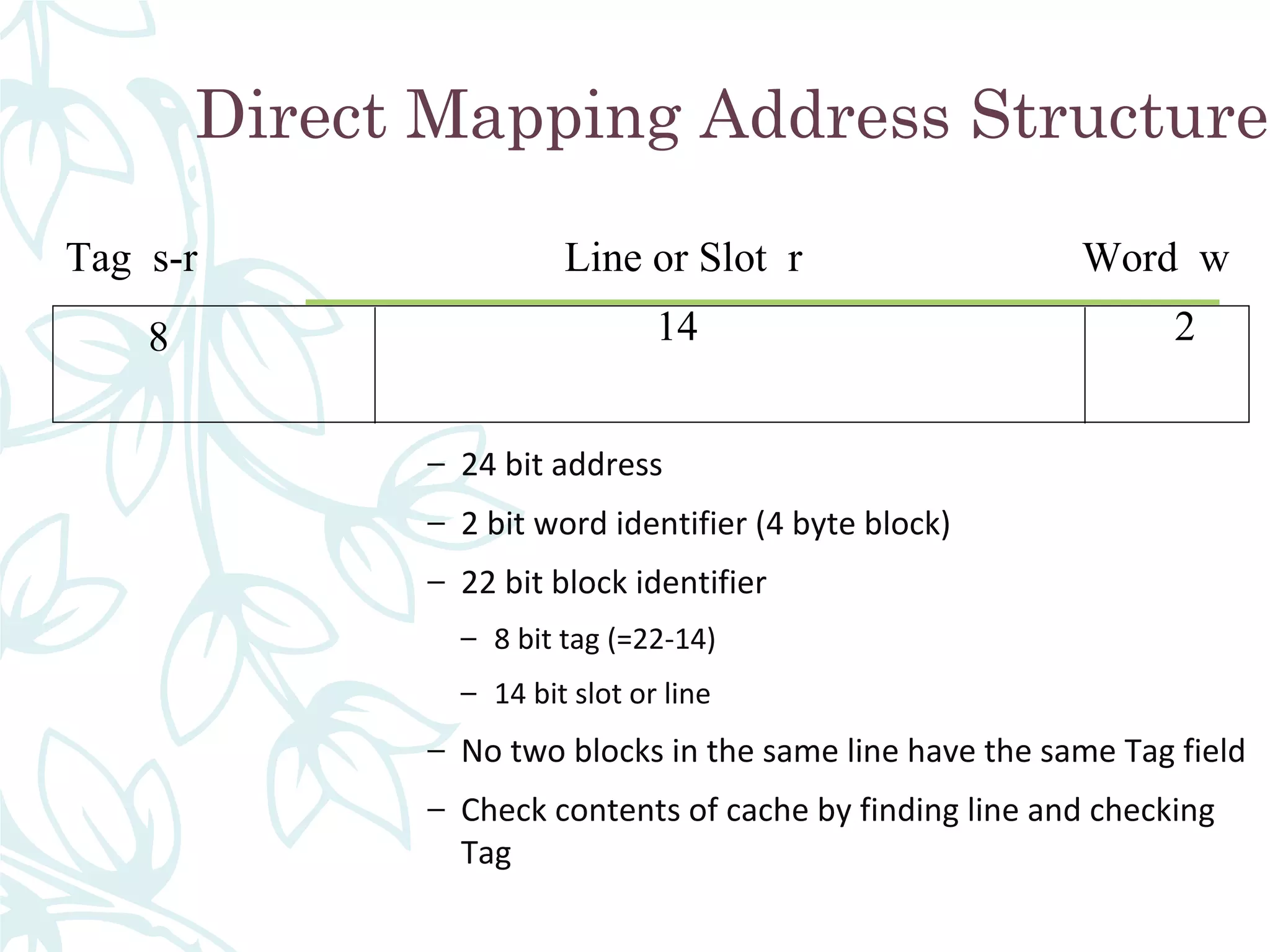

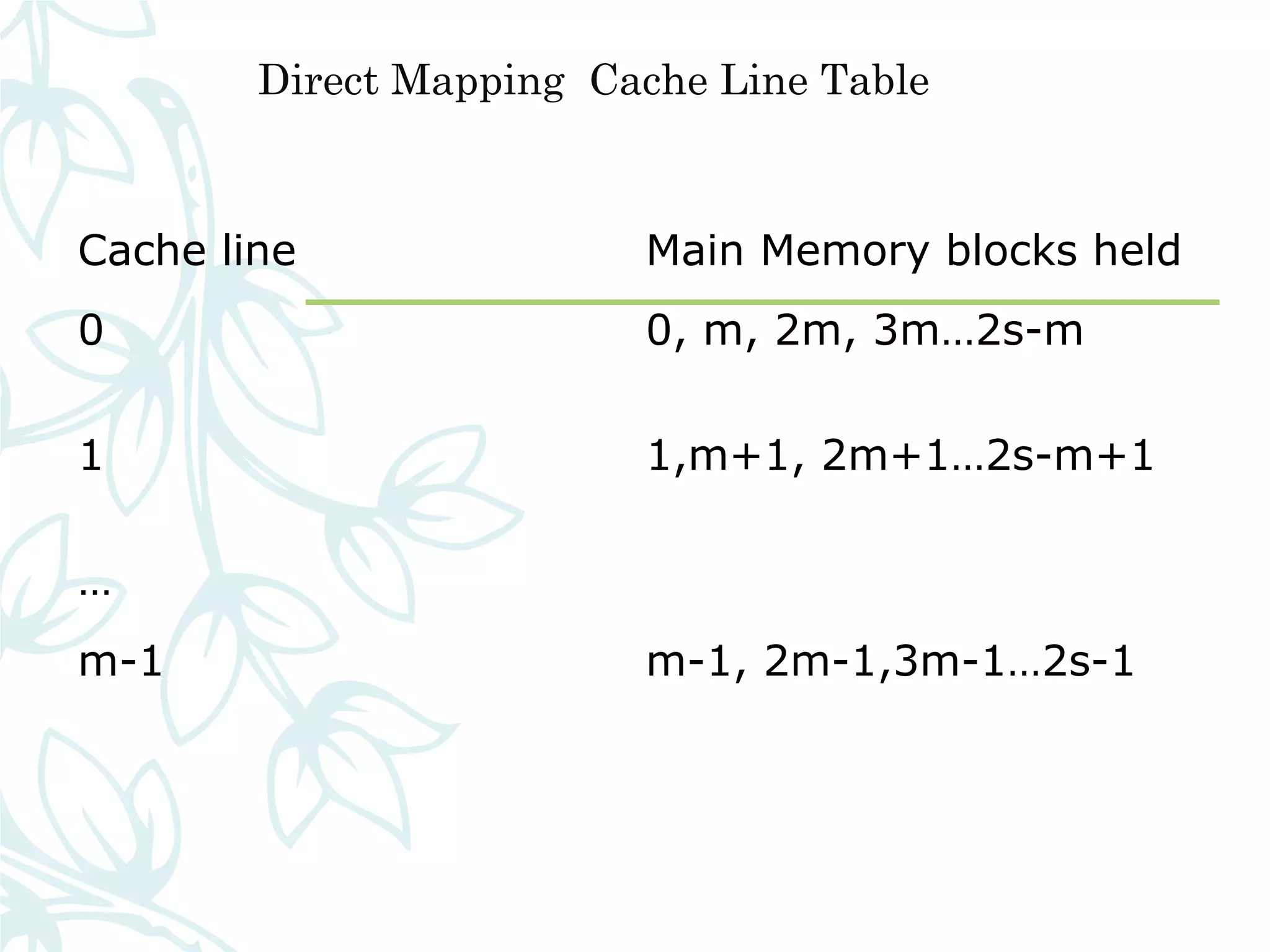

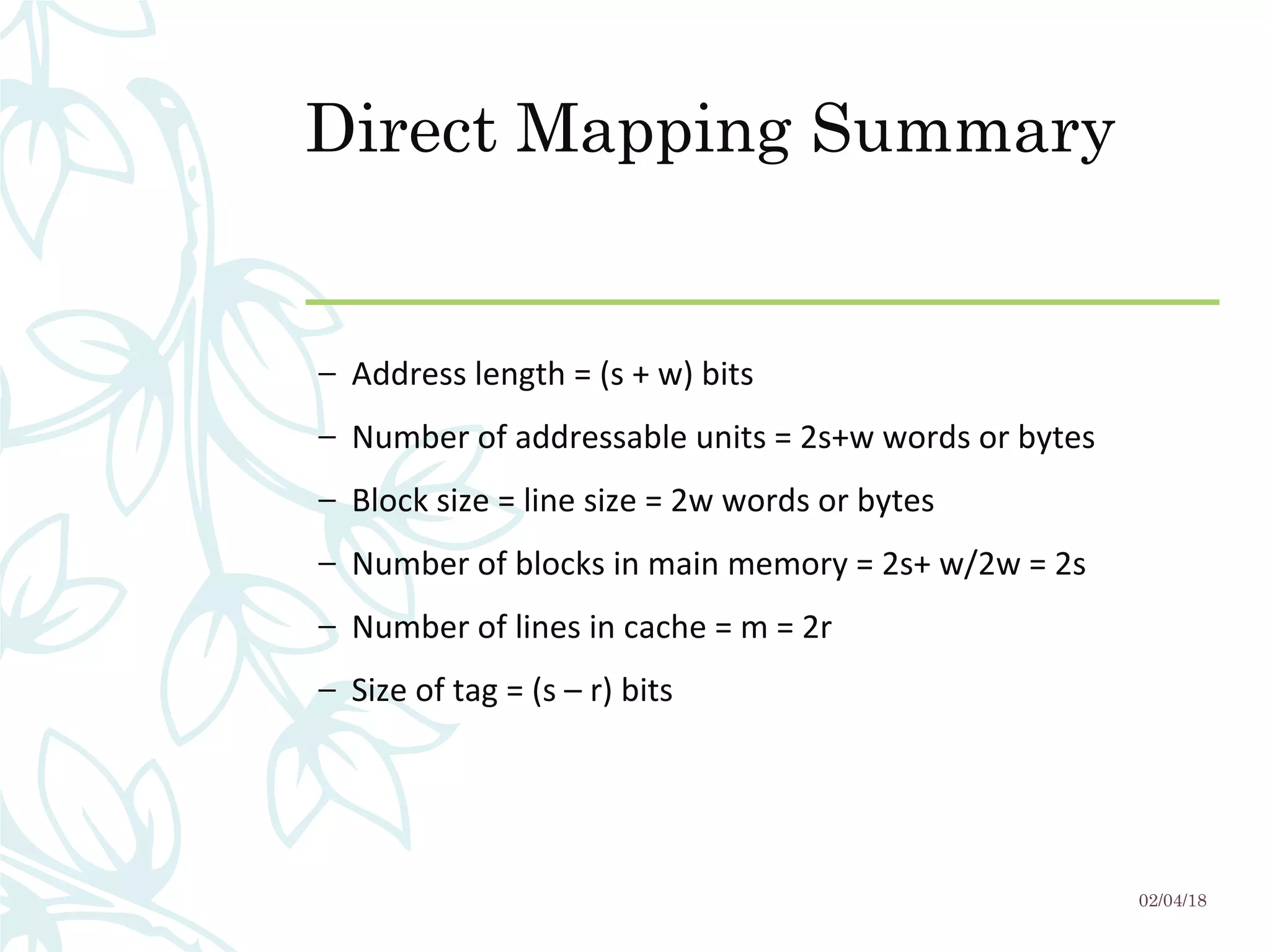

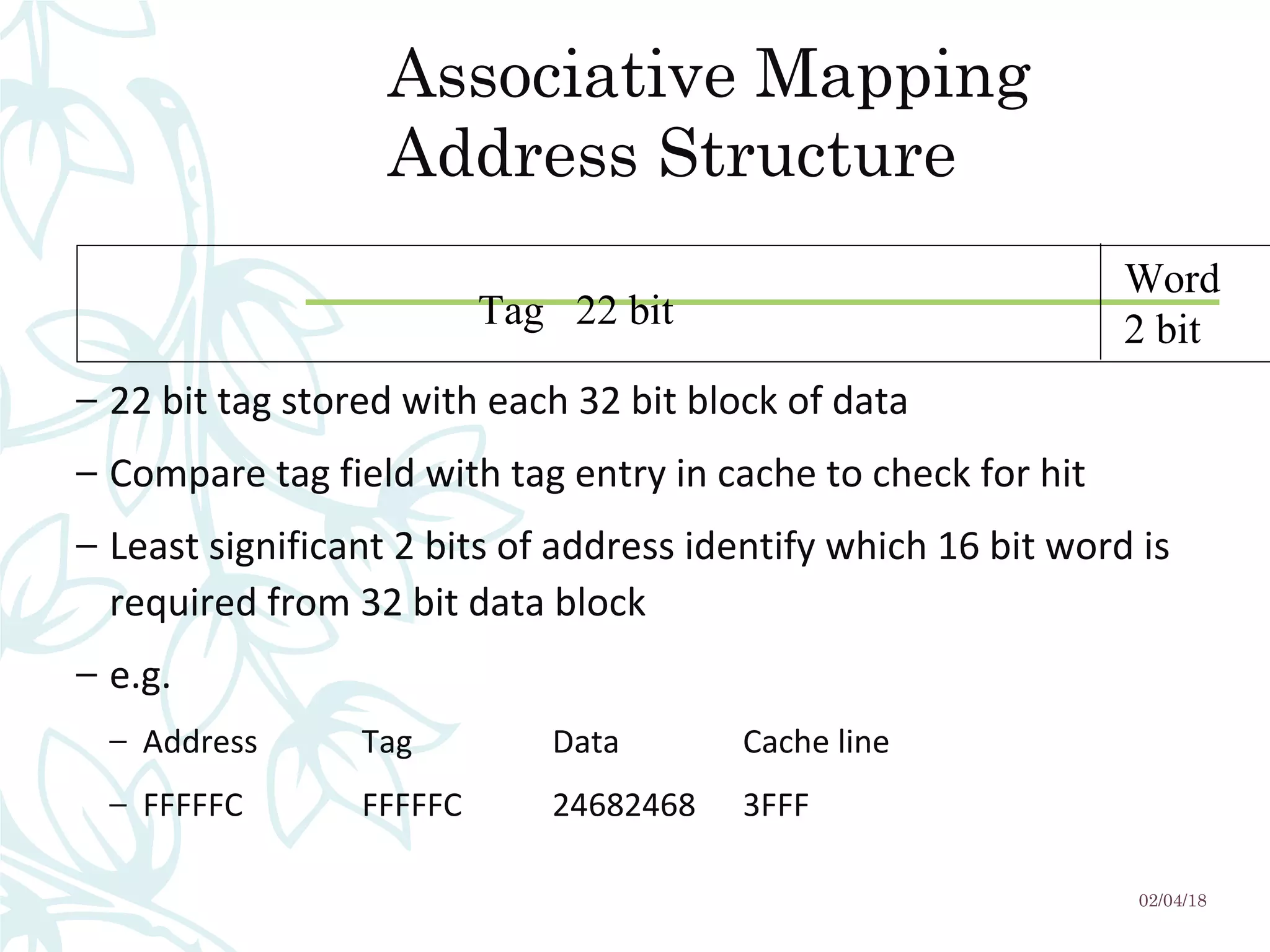

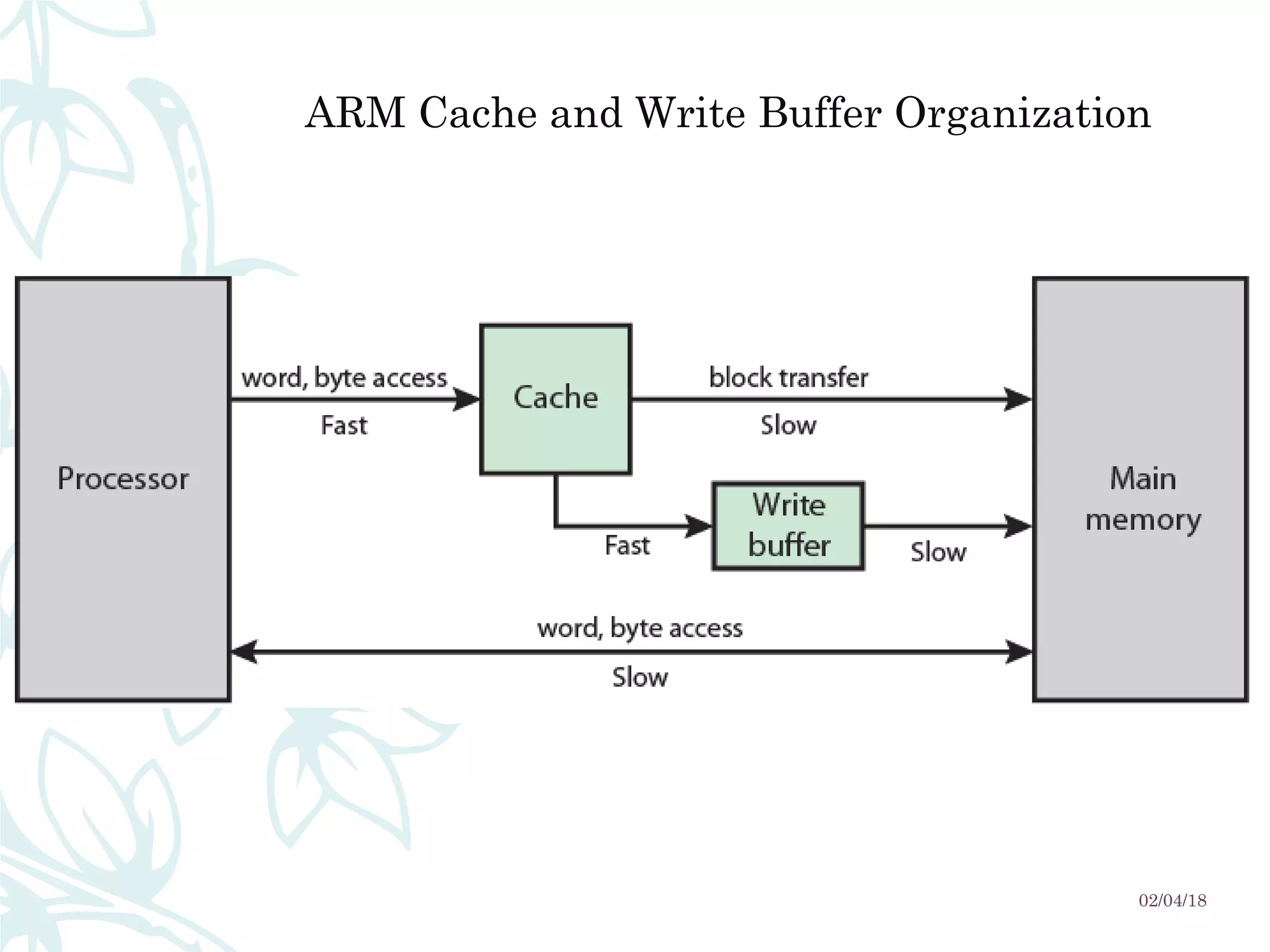

This document discusses cache memory and its characteristics. It begins by defining cache memory as a smaller, faster memory located close to the CPU that holds copies of frequently accessed data from main memory. Because the CPU can read cached data faster than it can read main memory, the cache raises overall CPU performance. The document then covers the characteristics of cache memory: location, capacity, unit of transfer, access methods, performance, organization, mapping functions, replacement algorithms, and write policies. Diagrams illustrate the cache read operation and the different mapping approaches.
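
To make the cache read operation and the idea of a mapping function concrete, here is a minimal sketch of a direct-mapped cache, one of the mapping approaches usually covered under this topic. It is an illustration under stated assumptions, not the document's own material: the line size, the number of lines, and the names `DirectMappedCache`, `LINE_SIZE`, and `NUM_LINES` are all hypothetical, and main memory is modeled as a plain `bytes` object.

```python
from dataclasses import dataclass, field

LINE_SIZE = 4   # bytes per cache line (assumed value for illustration)
NUM_LINES = 8   # number of lines in the cache (assumed value)


@dataclass
class DirectMappedCache:
    """Toy direct-mapped cache in front of a read-only 'main memory'."""
    memory: bytes
    tags: list = field(default_factory=lambda: [None] * NUM_LINES)
    lines: list = field(default_factory=lambda: [b""] * NUM_LINES)
    hits: int = 0
    misses: int = 0

    def read(self, address: int) -> int:
        # Split the address into tag, line index, and byte offset.
        offset = address % LINE_SIZE
        block = address // LINE_SIZE      # block number in main memory
        index = block % NUM_LINES         # the only line this block may occupy
        tag = block // NUM_LINES          # identifies which block is in the line

        if self.tags[index] == tag:
            # Hit: the requested block is already cached.
            self.hits += 1
        else:
            # Miss: fetch the whole block from main memory, replacing
            # whatever was stored in this line before.
            self.misses += 1
            start = block * LINE_SIZE
            self.lines[index] = self.memory[start:start + LINE_SIZE]
            self.tags[index] = tag

        return self.lines[index][offset]
```

With a 64-byte memory such as `bytes(range(64))`, reading addresses 0, 1, 32, and 0 in that order gives one hit and three misses: address 1 shares a line with address 0, while address 32 maps to the same cache line as address 0 and evicts it. Direct mapping needs no replacement algorithm because each block has exactly one candidate line; associative schemes would need one.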