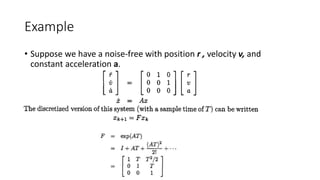

This document covers Kalman filters, emphasizing their optimal and recursive nature, discrete-time implementation, and state-space representation. It details the processes involved in state estimation, including time and measurement updates, innovation, and covariance propagation, alongside derivations and practical examples. The presentation also highlights the limitations and assumptions of Kalman filters when applied to real-world systems, including modeling errors and finite precision arithmetic.
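For reference, here is one standard way to write the discrete-time model and the filter recursion described above. The symbols F, H and R follow the simulation code at the end of this section. The process-noise covariance Q and the superscripts "-" (a priori) and "+" (a posteriori) are notational assumptions, and the derivation slides may use different names. The noises w and v are assumed zero-mean, white and mutually uncorrelated. With no process noise, as in the simulation, Q = 0.

$$
\begin{aligned}
&\text{Model:} && x_k = F x_{k-1} + w_{k-1}, \quad y_k = H x_k + v_k, \quad \mathrm{E}[w w^T] = Q,\ \mathrm{E}[v v^T] = R \\
&\text{Time update:} && \hat{x}_k^- = F \hat{x}_{k-1}^+, \qquad P_k^- = F P_{k-1}^+ F^T + Q \\
&\text{Kalman gain:} && K_k = P_k^- H^T \left( H P_k^- H^T + R \right)^{-1} \\
&\text{Innovation:} && \nu_k = y_k - H \hat{x}_k^- \\
&\text{Measurement update:} && \hat{x}_k^+ = \hat{x}_k^- + K_k \nu_k, \qquad P_k^+ = (I - K_k H) P_k^- (I - K_k H)^T + K_k R K_k^T
\end{aligned}
$$

The covariance update on the last line is the Joseph form. For the optimal gain $K_k$ it reduces algebraically to $P_k^+ = (I - K_k H) P_k^-$.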

![AV-738

Adaptive Filter Theory

Lecture 4 - Optimum Filtering

[Kalman Filters]

Dr. Bilal A. Siddiqui

Air University (PAC Campus)

Spring 2018](https://image.slidesharecdn.com/av-738-aft-spr18-lecture04-optimumfilters-kalmanwk4-180216000008/75/Av-738-Adaptive-Filtering-Kalman-Filters-1-2048.jpg)

![Kalman Filters

• The Kalman filter is both optimal and recursive (an adaptive filter).

• Its mathematical formulation is distinctive: it is built on state-space concepts.

• It is solved recursively. Each update uses only the previous state estimate and the new data (the innovation), so past measurements need not be stored.

• It is mostly implemented in discrete time and is well suited to digital computers.

• It provides a unifying framework for RLS filters.

• Developed by Rudolf E. Kalman in 1960.](https://image.slidesharecdn.com/av-738-aft-spr18-lecture04-optimumfilters-kalmanwk4-180216000008/85/Av-738-Adaptive-Filtering-Kalman-Filters-2-320.jpg)
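The recursive, storage-free update and the link to RLS can be illustrated with the simplest possible case. The MATLAB sketch below is not from the lecture. It assumes a scalar constant state (F = 1, H = 1, no process noise) and made-up values: measurement-noise variance R = 4 and a true value of 3. With a large initial covariance the gain behaves like 1/k. The filter therefore reproduces the running sample mean, which is the recursive least-squares estimate of a constant, while carrying only `x_hat` and `P` from step to step.

```matlab
% Scalar Kalman filter estimating a constant (assumed example, not from the slides).
% Assumed model: x(k) = x(k-1)            (F = 1, no process noise, Q = 0)
%                y(k) = x(k) + v(k), var(v) = R   (H = 1)
R = 4;                          % assumed measurement-noise variance
n = 50;                         % number of measurements
y = 3 + sqrt(R)*randn(1, n);    % synthetic noisy measurements of a true value of 3

x_hat = 0;                      % initial state estimate
P = 1e8;                        % large initial error covariance (little prior knowledge)
gain = zeros(1, n);             % kept only to inspect the gains afterwards
for k = 1:n
    % Time update is trivial here (F = 1, Q = 0): prior = previous posterior.
    K = P/(P + R);              % Kalman gain
    innov = y(k) - x_hat;       % innovation: the new information in y(k)
    x_hat = x_hat + K*innov;    % a posteriori state estimate
    P = (1 - K)*P;              % a posteriori error covariance
    gain(k) = K;
end
% Only x_hat and P are carried between steps; earlier measurements are never reused.
fprintf('Kalman estimate %.6f, batch sample mean %.6f\n', x_hat, mean(y));
fprintf('gain at k = 10: %.4f (compare 1/10)\n', gain(10));
```

The recursive estimate matches the batch mean of all 50 samples to many decimal places, and the final covariance approaches R/n, the variance of a sample mean.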

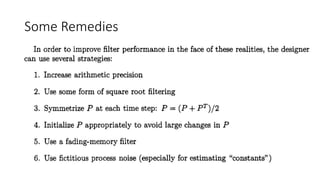

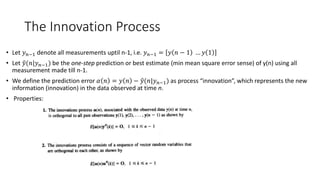

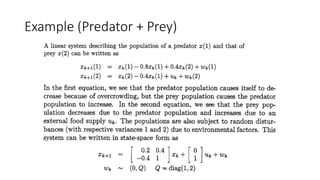

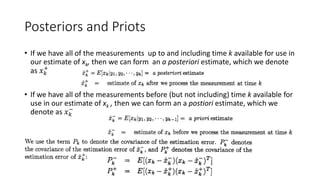

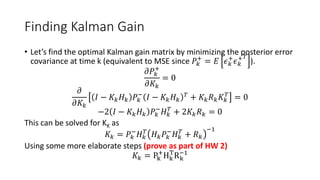

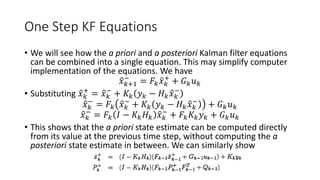

![Simulation

%%
clc; clear all; close all;
% Kalman filter for position measurement
% AV-738 Spr 18, Dr. Bilal A. Siddiqui
T = 5;              % sampling interval
R = 30^2;           % measurement-noise variance
a = 0.01;           % true (constant) acceleration
F = [1 T T^2/2      % position
     0 1 T          % velocity
     0 0 1];        % acceleration
G = 0;
H = [1 0 0];        % only position (the first state) is measured
w = 0;              % process noise (none, so Q = 0)
N = 60;             % number of time steps
x = zeros(3,N); x(:,1) = [0 0 a]';          % initial value of state vector
x_prior = zeros(3,N); x_post = zeros(3,N);
x_post(:,1) = [0 0 0]';                     % initial estimate of state vector
P_prior = zeros(3,3,N); P_post = zeros(3,3,N);
P_post(:,:,1) = 1E5*eye(3);                 % initial estimate of error covariance
K = zeros(3,1,N); y = zeros(1,N); innov = zeros(1,N);
for k = 2:N
    % calculate a priori covariance estimate
    P_prior(:,:,k) = F*P_post(:,:,k-1)*F';
    % calculate Kalman gain
    K(:,:,k) = P_prior(:,:,k)*H'/(H*P_prior(:,:,k)*H' + R);
    % calculate a priori estimate of state from the previous a posteriori estimate
    x_prior(:,k) = F*x_post(:,k-1);
    % system evolution
    x(:,k) = F*x(:,k-1);
    % take measurement
    y(k) = H*x(:,k) + sqrt(R)*randn;
    % calculate innovation
    innov(k) = y(k) - H*x_prior(:,k);
    % calculate a posteriori estimate of state
    x_post(:,k) = x_prior(:,k) + K(:,:,k)*innov(k);
    % calculate a posteriori estimate of covariance (Joseph form)
    P_post(:,:,k) = (eye(3) - K(:,:,k)*H)*P_prior(:,:,k)*(eye(3) - K(:,:,k)*H)' + ...
                    K(:,:,k)*R*K(:,:,k)';
end](https://image.slidesharecdn.com/av-738-aft-spr18-lecture04-optimumfilters-kalmanwk4-180216000008/85/Av-738-Adaptive-Filtering-Kalman-Filters-25-320.jpg)
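The last line of the loop uses the Joseph form of the covariance update. The minimal MATLAB sketch below reuses F, H, R and the initial covariance from the script but takes only a single step, and compares the Joseph form with the shorter form (I - K H) P_prior. For the optimal gain the two coincide up to rounding. For a hypothetical, deliberately suboptimal gain `K_bad` (an assumption chosen only for illustration) the short form is no longer even symmetric, while the Joseph form stays symmetric. This is one reason the Joseph form is preferred when gains are imperfect or arithmetic precision is limited.

```matlab
% One measurement update for the slide's model, comparing two covariance forms.
T = 5; R = 30^2;
F = [1 T T^2/2; 0 1 T; 0 0 1];
H = [1 0 0];                          % position is the first state
I3 = eye(3);
P_prior = F*(1E5*eye(3))*F';          % a priori covariance after one time update (Q = 0)

% Optimal gain: both forms agree up to rounding.
K = P_prior*H'/(H*P_prior*H' + R);
P_short  = (I3 - K*H)*P_prior;
P_joseph = (I3 - K*H)*P_prior*(I3 - K*H)' + K*R*K';
fprintf('optimal gain: max |P_short - P_joseph| = %g\n', ...
        max(abs(P_short(:) - P_joseph(:))));

% Hypothetical suboptimal gain that corrects the position state only.
K_bad = [0.5; 0; 0];
P_short_bad  = (I3 - K_bad*H)*P_prior;
P_joseph_bad = (I3 - K_bad*H)*P_prior*(I3 - K_bad*H)' + K_bad*R*K_bad';
fprintf('suboptimal gain: asymmetry of short form  = %g\n', ...
        max(max(abs(P_short_bad - P_short_bad'))));
fprintf('suboptimal gain: asymmetry of Joseph form = %g\n', ...
        max(max(abs(P_joseph_bad - P_joseph_bad'))));
```

The Joseph form equals the true error covariance for any gain, whereas (I - K H) P_prior is only valid when K is the optimal Kalman gain.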