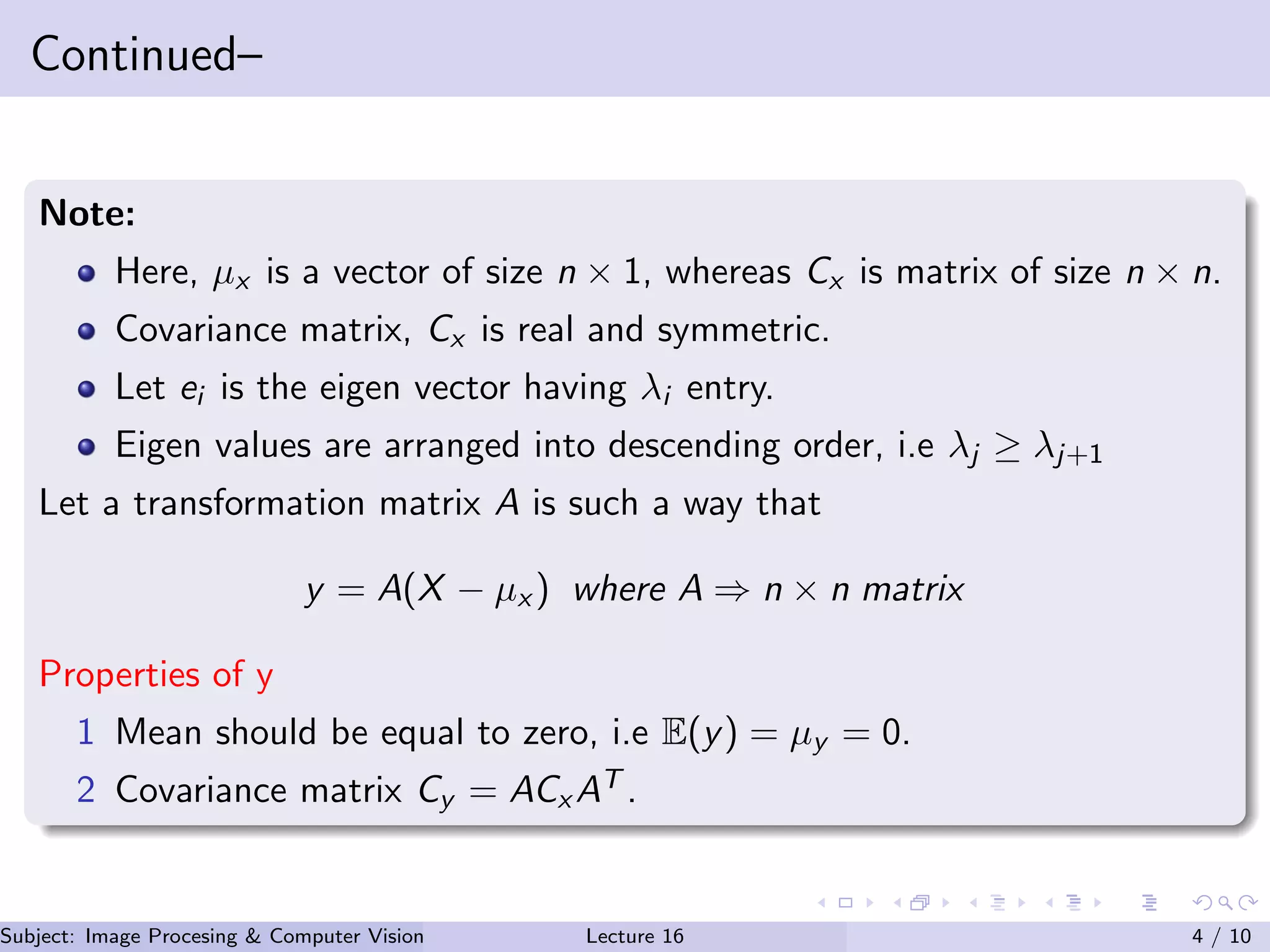

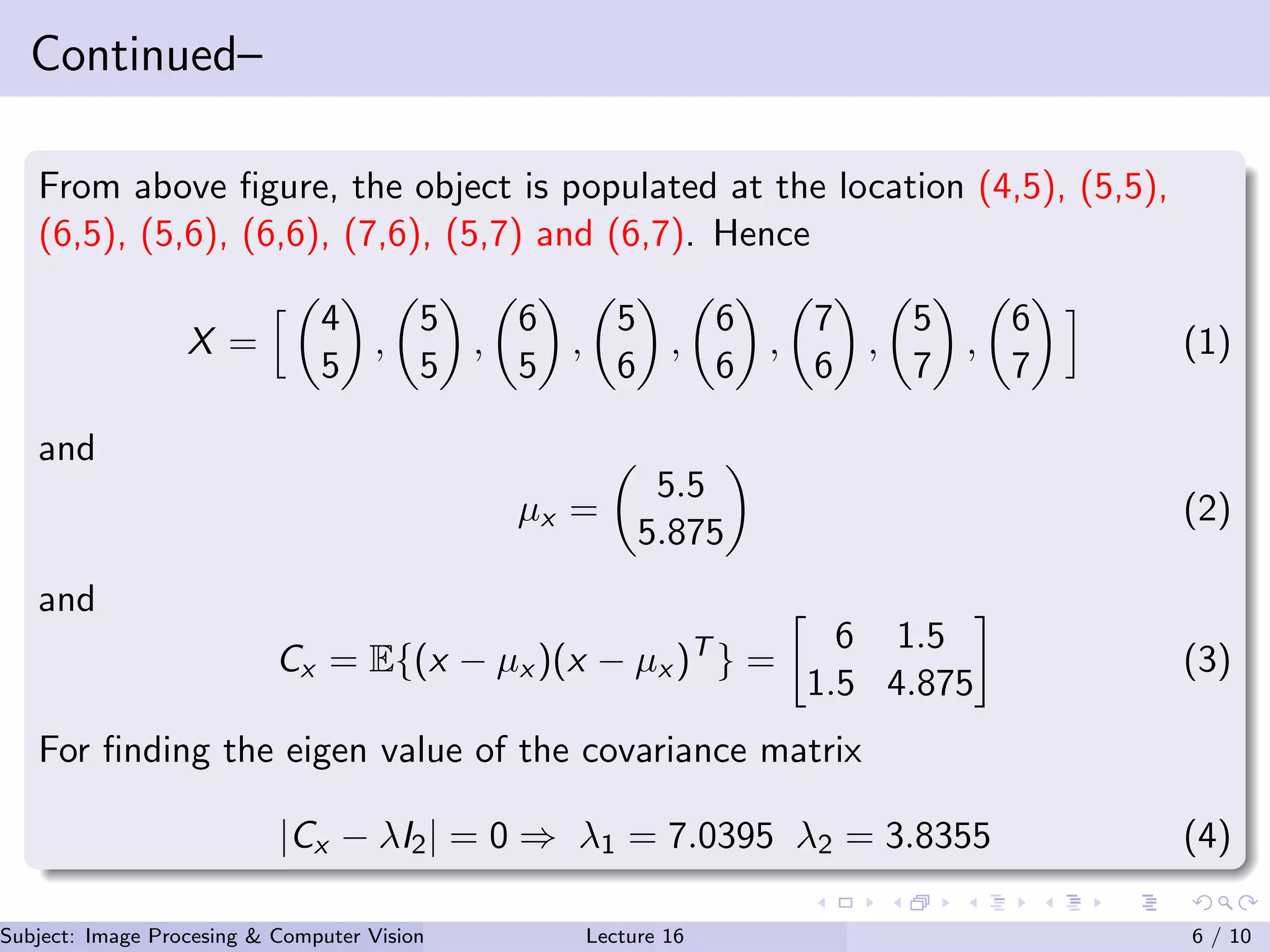

The KL transform is a data-driven transformation where the kernel is derived from the statistics of the data, unlike transforms like DFT where the kernel is fixed. (1) It represents data as a vector based on the mean and covariance matrix of the population. (2) The transformation matrix is chosen such that the transformed data is statistically uncorrelated and ordered by decreasing variance. (3) This transformation optimally compacts the energy but requires high computational complexity.

![Continued–

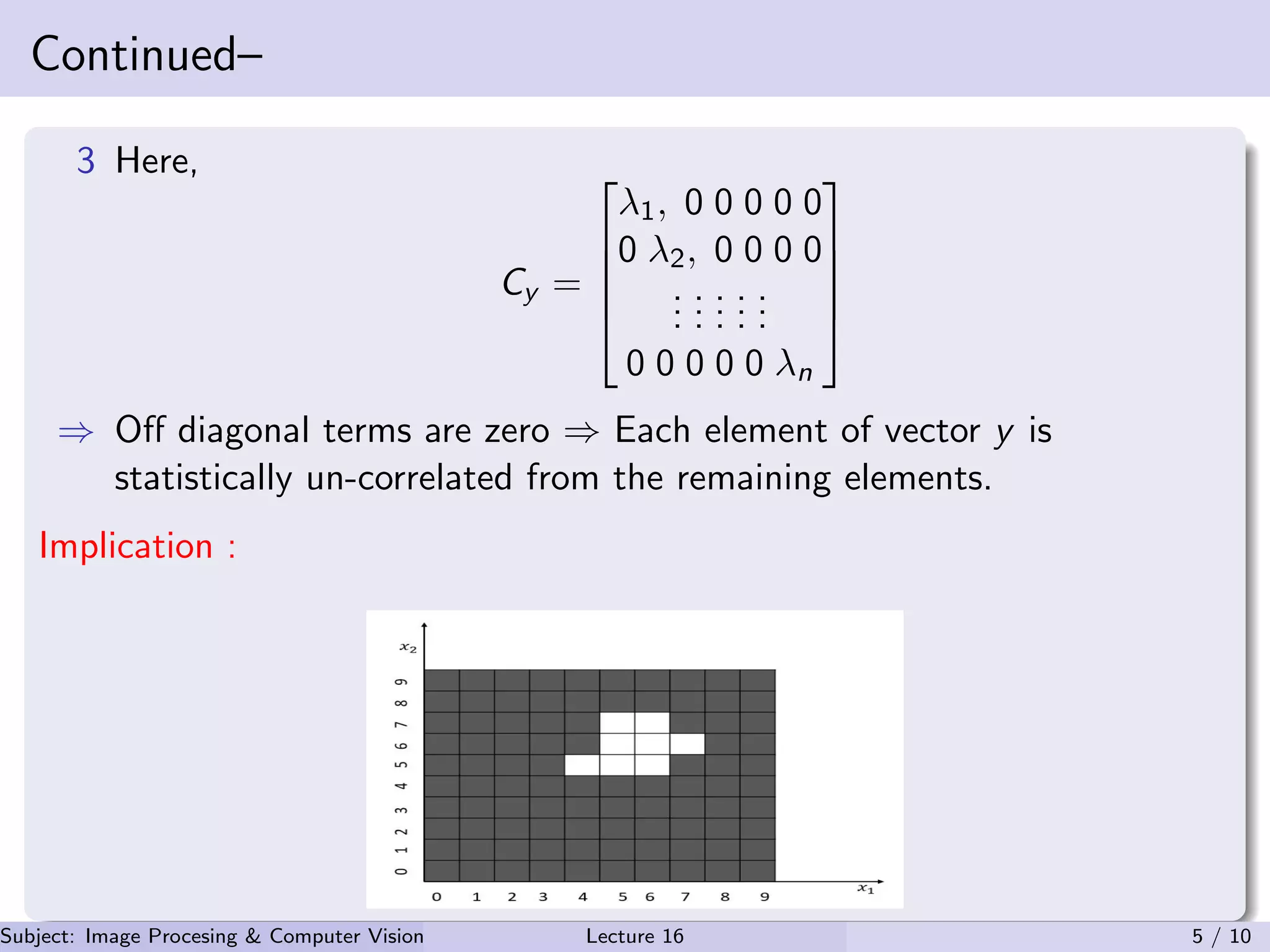

Modified relation

Also, e1 =

0.5696

−0.8219

and e2 =

−0.8219

−0.5696

In this case transformation

matrix A = [e1, e2]. Hence, through KL transformation,

Forwrd transform ⇒ y = A(X − µx ) (5)

Here A−1 = AT . Hence, inverse transform is

X = AT

y + µx (6)

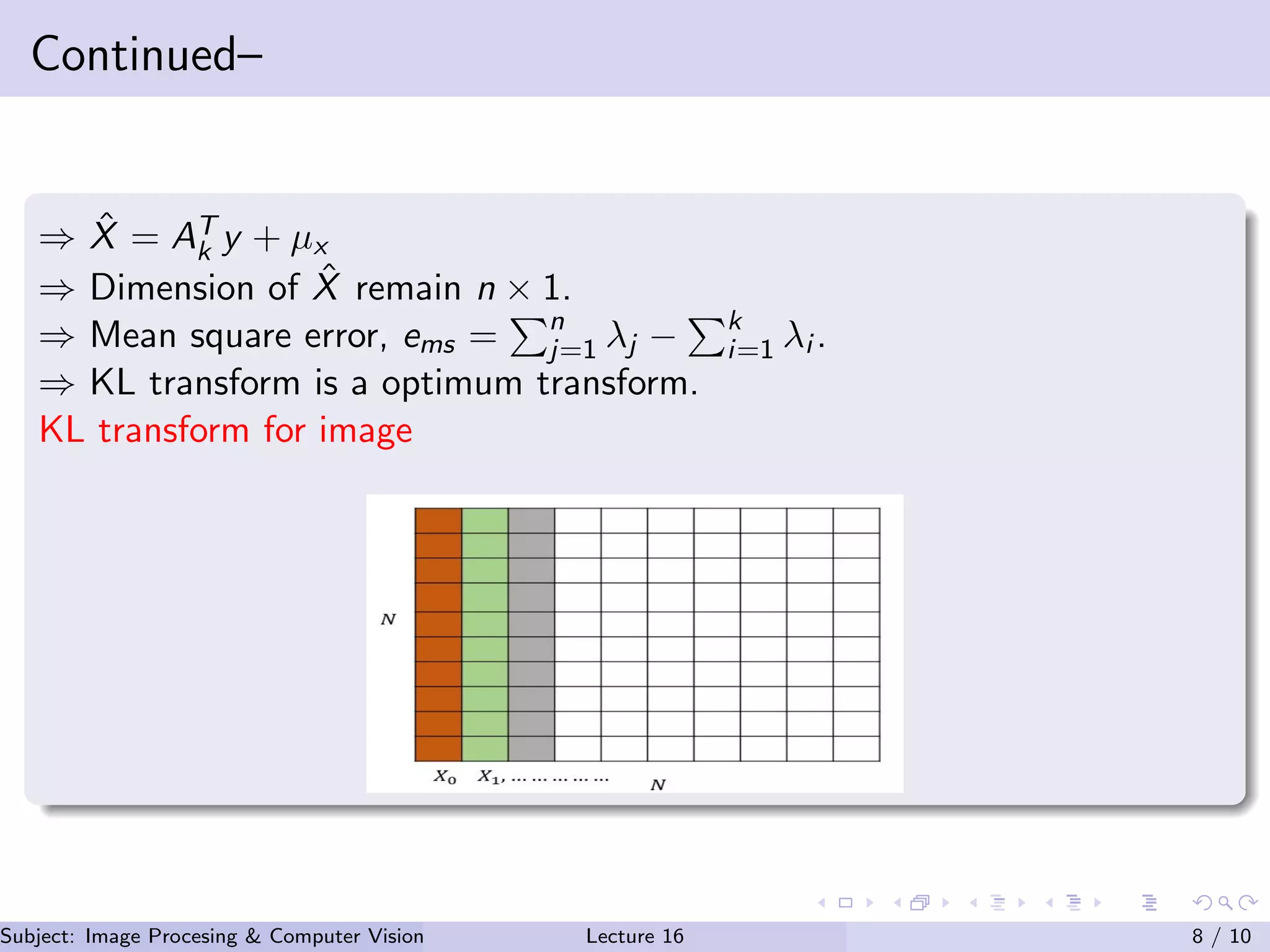

Let Ak → Matrix having k number of eigen value.

After transformation y = Ak(X − µx ) → Dimension reduces from n × n to

k × n.

Subject: Image Procesing & Computer Vision Dr. Varun Kumar (IIIT Surat)Lecture 16 7 / 10](https://image.slidesharecdn.com/lecture16-201016114705/75/Lecture-16-KL-Transform-in-Image-Processing-7-2048.jpg)