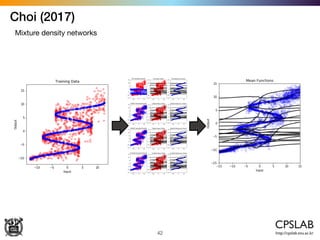

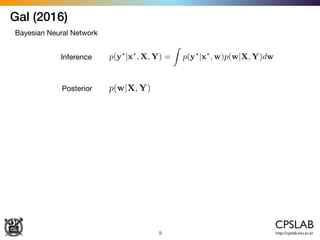

The document discusses various uncertainties in deep learning, particularly focusing on model uncertainty and its implications in applications like image classification and reinforcement learning. It highlights different methods for estimating uncertainties, including Bayesian neural networks and mixture density networks, and explores the distinction between aleatoric and epistemic uncertainties. The work references multiple studies that propose solutions for uncertainty-aware learning, emphasizing the importance of addressing these challenges in deep learning models.

![Kendall (2017), slide 36: Aleatoric & epistemic uncertainties](https://image.slidesharecdn.com/uncertaintyindeeplearning-171216053607/85/Uncertainty-Modeling-in-Deep-Learning-36-320.jpg)

$$
\hat{W} \sim q(W), \qquad [\hat{y}, \hat{\sigma}^2] = f^{\hat{W}}(x)
$$

$$
\mathcal{L} = -\frac{1}{N} \sum_{i=1}^{N} \log \mathcal{N}\!\left(y_i;\ \hat{y}_{\hat{W}}(x_i),\ \hat{\sigma}^2_{\hat{W}}(x_i)\right)
$$
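As a rough illustration of the slide 36 loss, the following NumPy sketch evaluates the mean Gaussian negative log-likelihood for given predictions. The function name `gaussian_nll` and the choice to pass the log-variance rather than the variance are assumptions made here for numerical stability; the slide itself does not prescribe an implementation.

```python
import numpy as np


def gaussian_nll(y, y_hat, log_var):
    """Mean negative log-likelihood of y under N(y_hat, exp(log_var)).

    y, y_hat and log_var are array-likes of the same shape.
    log_var is the predicted log-variance (an assumed parameterization).
    """
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    log_var = np.asarray(log_var, dtype=float)

    var = np.exp(log_var)
    # -log N(y; y_hat, var) = 0.5 * [log(2*pi) + log(var) + (y - y_hat)^2 / var]
    per_point = 0.5 * (np.log(2.0 * np.pi) + log_var + (y - y_hat) ** 2 / var)
    return float(per_point.mean())


# A large residual costs less when the predicted variance is also large.
loss_confident = gaussian_nll([3.0], [0.0], [0.0])          # variance 1
loss_uncertain = gaussian_nll([3.0], [0.0], [np.log(9.0)])  # variance 9
```

In a real model `y_hat` and `log_var` would be two output heads of the network evaluated with one sampled set of weights; here they are plain arrays so the arithmetic can be checked in isolation.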

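![Kendall (2017), slide 37: Aleatoric & epistemic uncertainties](https://image.slidesharecdn.com/uncertaintyindeeplearning-171216053607/85/Uncertainty-Modeling-in-Deep-Learning-37-320.jpg)

$$
\hat{W} \sim q(W), \qquad [\hat{y}, \hat{\sigma}^2] = f^{\hat{W}}(x)
$$

$$
\operatorname{Var}(y) \approx \underbrace{\frac{1}{T} \sum_{t=1}^{T} \hat{y}_t^2 - \left(\frac{1}{T} \sum_{t=1}^{T} \hat{y}_t\right)^2}_{\text{epistemic uncertainty}} + \underbrace{\frac{1}{T} \sum_{t=1}^{T} \hat{\sigma}_t^2}_{\text{aleatoric uncertainty}}
$$

Here $[\hat{y}_t, \hat{\sigma}_t^2] = f^{\hat{W}_t}(x)$ are the outputs for $T$ sampled weights $\hat{W}_t \sim q(W)$.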
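The slide 37 decomposition can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: `decompose_variance` is a hypothetical helper name, and the inputs are taken to be the predicted means and variances from `T` stochastic forward passes, stacked along the first axis.

```python
import numpy as np


def decompose_variance(y_samples, var_samples):
    """Split predictive variance into epistemic and aleatoric parts.

    y_samples:   predicted means from T sampled weight sets, shape (T, ...)
    var_samples: predicted variances from the same passes, shape (T, ...)
    Returns (epistemic, aleatoric, total), each reduced over the T axis.
    """
    y_samples = np.asarray(y_samples, dtype=float)
    var_samples = np.asarray(var_samples, dtype=float)

    # Spread of the predicted means across weight samples.
    epistemic = (y_samples ** 2).mean(axis=0) - y_samples.mean(axis=0) ** 2
    # Average of the predicted noise variances.
    aleatoric = var_samples.mean(axis=0)
    return epistemic, aleatoric, epistemic + aleatoric


# Two forward passes for a single input (toy numbers).
epistemic, aleatoric, total = decompose_variance([1.0, 3.0], [0.5, 1.5])
```

With these toy numbers the means disagree (1.0 versus 3.0), so the epistemic term is nonzero, while the aleatoric term is simply the average of the two predicted variances.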

![Kendall (2017), slide 38: Heteroscedastic uncertainty as loss attenuation](https://image.slidesharecdn.com/uncertaintyindeeplearning-171216053607/85/Uncertainty-Modeling-in-Deep-Learning-38-320.jpg)

$$
\hat{W} \sim q(W), \qquad [\hat{y}, \hat{\sigma}^2] = f^{\hat{W}}(x)
$$
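One way to read the slide 38 title is to expand the Gaussian log-likelihood term for a single data point. The shorthand $\hat{y}_i$ and $\hat{\sigma}_i^2$ for the network outputs at $x_i$ is introduced here for brevity and is not taken from the slide:

$$
-\log \mathcal{N}\!\left(y_i;\ \hat{y}_i,\ \hat{\sigma}_i^2\right) = \frac{(y_i - \hat{y}_i)^2}{2\hat{\sigma}_i^2} + \frac{1}{2} \log \hat{\sigma}_i^2 + \frac{1}{2} \log 2\pi
$$

The squared residual is divided by the predicted variance, so points the model marks as noisy contribute less to the loss (the attenuation), while the $\frac{1}{2} \log \hat{\sigma}_i^2$ term discourages predicting a large variance everywhere.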