Naive Bayes Classifier

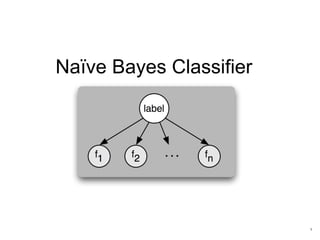

The Naive Bayes classifier is a simple probabilistic classifier based on Bayes' theorem that assumes the features are conditionally independent given the class. Its applications include email spam detection, language detection, and document categorization. The approach estimates the class prior probabilities and the per-class feature likelihoods from training data, then applies Bayes' theorem to compute the posterior probability of each class and assigns a new instance to the class with the highest posterior. Laplace smoothing is often used so that a feature value never observed with a class in the training data does not receive zero probability.
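The steps in this summary (priors, likelihoods, posterior, Laplace smoothing) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation from the slides: the function names `train` and `predict`, the toy spam/ham data, and the smoothing constant `alpha = 1.0` are all hypothetical choices made for this example, and features are assumed to be categorical.

```python
import math
from collections import Counter, defaultdict

def train(rows, labels, alpha=1.0):
    """Count classes and per-class feature values (hypothetical helper)."""
    class_counts = Counter(labels)
    value_counts = defaultdict(Counter)  # (feature index, label) -> value counts
    vocab = defaultdict(set)             # feature index -> distinct values seen
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            value_counts[(i, label)][value] += 1
            vocab[i].add(value)
    return class_counts, value_counts, vocab, alpha

def predict(model, row):
    """Return the most probable class and the unnormalized log-posteriors."""
    class_counts, value_counts, vocab, alpha = model
    total = sum(class_counts.values())
    scores = {}
    for label, n_c in class_counts.items():
        log_p = math.log(n_c / total)  # class prior
        for i, value in enumerate(row):
            count = value_counts[(i, label)][value]
            # Laplace-smoothed likelihood: never zero, even for unseen values
            log_p += math.log((count + alpha) / (n_c + alpha * len(vocab[i])))
        scores[label] = log_p
    return max(scores, key=scores.get), scores

# Toy data (assumed): features are (contains "free", contains "meeting")
rows = [("yes", "no"), ("yes", "no"), ("no", "no"),
        ("no", "yes"), ("no", "yes"), ("yes", "yes")]
labels = ["spam", "spam", "spam", "ham", "ham", "ham"]

model = train(rows, labels)
label, scores = predict(model, ("yes", "no"))
print(label)  # spam
```

In this toy data no ham message has "meeting" = "no", so without smoothing the ham likelihood would be exactly zero; with `alpha = 1.0` it becomes (0 + 1) / (3 + 2) = 0.2 instead. Working in log space avoids numerical underflow when many features are multiplied together.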

Recommended

Naive Bayes Classifier in Python | Naive Bayes Algorithm | Machine Learning A...

** Machine Learning Training with Python: https://www.edureka.co/python **

This Edureka tutorial provides detailed and comprehensive coverage of the Naive Bayes classifier algorithm in Python. The video ends with a demo example of Naive Bayes. The tutorial covers the following topics:

1. What is Naive Bayes?

2. Bayes Theorem and its use

3. Mathematical Working of Naive Bayes

4. Step by step Programming in Naive Bayes

5. Prediction Using Naive Bayes

Check out our playlist for more videos: http://bit.ly/2taym8X

NAIVE BAYES CLASSIFIER

Naive Bayes is a classifier based on Bayes' theorem. It predicts class membership probabilities, that is, the probability that a given record or data point belongs to each class.

Naive Bayes Classifier Tutorial | Naive Bayes Classifier Example | Naive Baye...

This Naive Bayes Tutorial from Edureka will help you understand all the concepts of Naive Bayes classifier, use cases and how it can be used in the industry. This tutorial is ideal for both beginners as well as professionals who want to learn or brush up their concepts in Data Science and Machine Learning through Naive Bayes. Below are the topics covered in this tutorial:

1. What is Machine Learning?

2. Introduction to Classification

3. Classification Algorithms

4. What is Naive Bayes?

5. Use Cases of Naive Bayes

6. Demo – Employee Salary Prediction in R

Naive bayes

The Naive Bayes classifier is a "probabilistic machine learning algorithm" used for classification tasks.

Logistic regression

A summary of what I learned about Linear Regression from the excellent Lazy Programmer courses at https://lazyprogrammer.me

Naive Bayes Classifier

Introduction to Bayesian classifier. It describes the basic algorithm and applications of Bayesian classification. Explained with the help of numerical problems.

Bayesian networks

This presentation introduces Bayesian networks and basic probability theory, with graphical explanations of Bayes' theorem, random variables, and conditional and joint probability. Examples covered: spam classifier, medical diagnosis, fault prediction. The main software packages for Bayesian networks are also presented.

Naïve Bayes Classifier Algorithm.pptx

Naïve Bayes algorithm is a supervised learning algorithm, which is based on Bayes theorem and used for solving classification problems.

Naive Bayes

Slides for a talk at Wittenberg to undergraduates introducing the concept of a Naive Bayes Classifier.

Support Vector Machines- SVM

In this presentation, we approach a two-class classification problem. We try to find a plane, also called a hyperplane, that separates the classes in the feature space. If we can't find a hyperplane, then we can be creative in two ways: 1) we soften what we mean by "separate", and 2) we enrich and enlarge the feature space so that separation is possible.

Understanding Bagging and Boosting

Slides explaining the distinction between bagging and boosting in terms of the bias-variance trade-off, followed by some lesser-known aspects of supervised learning: the effect of the tree split metric on feature importance, the effect of the threshold on classification accuracy, and how to adjust the model threshold for classification.

Note: The limitations of the accuracy metric (baseline accuracy), alternative metrics, and their use cases, advantages, and limitations are briefly discussed.

Classification Based Machine Learning Algorithms

These slides focus on the working procedure of some well-known classification-based machine learning algorithms.

Logistic Regression in Python | Logistic Regression Example | Machine Learnin...

** Python Data Science Training : https://www.edureka.co/python **

This Edureka video on Logistic Regression in Python gives a basic understanding of the Logistic Regression machine learning algorithm, with examples. The video also includes a demo of Logistic Regression using Python. The tutorial covers the following topics:

1. What is Regression?

2. What is Logistic Regression?

3. Why use Logistic Regression?

4. Linear vs Logistic Regression

5. Logistic Regression Use Cases

6. Logistic Regression Example Demo in Python

Subscribe to our channel to get video updates. Hit the subscribe button above.

Machine Learning Tutorial Playlist: https://goo.gl/UxjTxm

Linear Regression and Logistic Regression in ML

Linear Regression for prediction problems and Logistic Regression for classification problems in ML

L2. Evaluating Machine Learning Algorithms I

Valencian Summer School 2015

Day 1

Lecture 2

Evaluating Machine Learning Algorithms I

Cèsar Ferri (UPV)

https://bigml.com/events/valencian-summer-school-in-machine-learning-2015

Viewers also liked

Sentiment analysis using naive bayes classifier

This presentation gives a short description of the naive Bayes classifier algorithm, a machine learning approach to sentiment detection and text classification.

KLASIFIKASI BAWANG BERBASIS CITRA DIGITAL MENGGUNAKAN METODE NAIVE BAYES CLAS...

Computer technology is advancing very rapidly. It is being developed to perform pattern recognition in the way humans can. Pattern recognition systems are now widely used, for example in fingerprint and palm recognition. Here we try to classify types of bawang (onion and its relatives) using the naive Bayes classifier. These are something we encounter every day, particularly in the kitchen and in cooking. The naive Bayes decision-theoretic method is the simplest of the existing classification models; it uses the concept of probability and assumes that the attributes of each example (data sample) are independent of one another given the class attribute. Classifying the types with the naive Bayes classifier is expected to replace manual identification. The classification is based on the features of each item. Five features are used: the R (Red), G (Green), and B (Blue) color values, the diameter, and the length. The classification is image-based and uses a dataset of 100 samples in four classes: shallot (bawang merah), garlic (bawang putih), onion (bawang bombay), and leek (bawang prei). The data are then processed with the Naive Bayes Classifier by computing the prior probability, the likelihood, and finally the posterior probability. The classification is run in three scenarios: scenario 1 uses an 80:20 split (80% training data, 20% test data), scenario 2 a 70:30 split (70% training data, 30% test data), and scenario 3 a 50:50 split (50% training data, 50% test data).
The test data are selected in two ways, sequentially and randomly. The accuracy for scenario 1 is 95.0% with sequential selection and 95% with random selection; for scenario 2 it is 93.33% sequential and 96.67% random; and for scenario 3 it is 90.0% sequential and 92.0% random. These accuracies can vary from run to run, but the changes are small.

Implementasi Algoritma Naive Bayes (Studi Kasus : Prediksi Kelulusan Mahasisw...

Using the Naive Bayes algorithm to predict student graduation, implemented with the C#.NET programming language and LINQ.

A Semi-naive Bayes Classifier with Grouping of Cases

In this work, we present a semi-naive Bayes classifier that searches for dependent attributes using different filter approaches. To keep the number of cases of the compound attributes from becoming too high, a grouping procedure is applied each time two variables are merged. This method tries to group two or more cases of the new variable into a unique value. In an empirical study, we show that this approach outperforms the naive Bayes classifier in a very robust way and reaches the performance of Pazzani’s semi-naive Bayes [1] without the high cost of a wrapper search.

Wikipedia, Dead Authors, Naive Bayes and Python

My slides from PyCon 2011. The talk is about identifying Indian authors whose works are now in Public Domain. We use Wikipedia for this purpose and pose it as a document classification problem. Naive Bayes is used for the task.

Modified naive bayes model for improved web page classification

Modified naive bayes model for improved web page classification

Bayesian Machine Learning & Python – Naïve Bayes (PyData SV 2013)

Slides for an advanced tutorial given by Krishna Sankar at PyData Silicon Valley 2013

Naive Bayes | Statistics

The Naive Bayes classifier is based on Bayes' theorem. In statistics and probability theory, Bayes' theorem describes the probability of an event based on conditions related to that event. For more information on Naive Bayes, copy the following link into a new browser window: http://www.transtutors.com/homework-help/statistics/naive-bayes.aspx

Sentiment Analysis in Twitter with Lightweight Discourse Analysis

Sentiment Analysis in Twitter with Lightweight Discourse Analysis, Subhabrata Mukherjee and Pushpak Bhattacharyya, In Proceedings of the 24th International Conference on Computational Linguistics (COLING 2012), IIT Bombay, Mumbai, Dec 8 - Dec 15, 2012 (http://www.cse.iitb.ac.in/~pb/papers/coling12-discourse-sa.pdf)

Bayesian Machine Learning - Naive Bayes

Slide set for my tutorial at pydata http://pydata.org/abstracts/#12

Scalable sentiment classification for big data analysis using naive bayes cla...

Tien-Yang (Aiden) Wu

"Naive Bayes Classifier" @ Papers We Love Bucharest

2013-1 Machine Learning Lecture 03 - Naïve Bayes Classifiers

Similar to Naive Bayes Classifier

Machine learning clisification algorthims

Machine learning classification algorithms: Naive Bayes Classifier, KNN

Mncs 16-09-4주-변승규-introduction to the machine learning

Introduction to the machine learning

Reference. Machine Learning: The art and science of algorithms that make sense of data

Naive Bayes.pptx

Naive Bayes is a classifier based on Bayes' theorem. It predicts class membership probabilities, that is, the probability that a given record or data point belongs to each class.

Artificial Intelligence Notes Unit 3

Artificial Intelligence Notes Unit 3 as according to CSVTU Syllabus

Quantitative Methods for Lawyers - Class #22 - Regression Analysis - Part 5

Quantitative Methods for Lawyers - Class #22 - Regression Analysis - Part 5

Data classification sammer

Data mining classification algorithms (probabilistic classification): Naïve Bayes Classifier, Logistic Regression, Support Vector Machine, Instance-Based Learning, and Unsupervised Mahalanobis Metric.

Machine Learning (Classification Models)

Makerere University School of Public Health, Victoria University

These slides cover machine learning models, more specifically classification algorithms: Logistic Regression, Linear Discriminant Analysis (LDA), K-Nearest Neighbors (KNN), Trees, Random Forests and Boosting, Support Vector Machines (SVM), and Neural Networks.

Logistics regression

Logistic regression is used to obtain odds ratio in the presence of more than one explanatory variable. The procedure is quite similar to multiple linear regression, with the exception that the response variable is binomial. The result is the impact of each variable on the odds ratio of the observed event of interest.

UNIT2_NaiveBayes algorithms used in machine learning

CHAPTER 1 THEORY OF PROBABILITY AND STATISTICS.pptx

Recently uploaded

哪里卖(usq毕业证书)南昆士兰大学毕业证研究生文凭证书托福证书原版一模一样

原版定制【Q微信:741003700】《(usq毕业证书)南昆士兰大学毕业证研究生文凭证书》【Q微信:741003700】成绩单 、雅思、外壳、留信学历认证永久存档查询,采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【Q微信741003700】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信741003700】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

Ch03-Managing the Object-Oriented Information Systems Project a.pdf

Object oriented system analysis and design

Chatty Kathy - UNC Bootcamp Final Project Presentation - Final Version - 5.23...

SlideShare Description for "Chatty Kathy - UNC Bootcamp Final Project Presentation"

Title: Chatty Kathy: Enhancing Physical Activity Among Older Adults

Description:

Discover how Chatty Kathy, an innovative project developed at the UNC Bootcamp, aims to tackle the challenge of low physical activity among older adults. Our AI-driven solution uses peer interaction to boost and sustain exercise levels, significantly improving health outcomes. This presentation covers our problem statement, the rationale behind Chatty Kathy, synthetic data and persona creation, model performance metrics, a visual demonstration of the project, and potential future developments. Join us for an insightful Q&A session to explore the potential of this groundbreaking project.

Project Team: Jay Requarth, Jana Avery, John Andrews, Dr. Dick Davis II, Nee Buntoum, Nam Yeongjin & Mat Nicholas

一比一原版(CBU毕业证)不列颠海角大学毕业证成绩单

CBU毕业证【微信95270640】《如何办理不列颠海角大学毕业证认证》【办证Q微信95270640】《不列颠海角大学文凭毕业证制作》《CBU学历学位证书哪里买》办理不列颠海角大学学位证书扫描件、办理不列颠海角大学雅思证书!

国际留学归国服务中心《如何办不列颠海角大学毕业证认证》《CBU学位证书扫描件哪里买》实体公司,注册经营,行业标杆,精益求精!

1:1完美还原海外各大学毕业材料上的工艺:水印阴影底纹钢印LOGO烫金烫银LOGO烫金烫银复合重叠。文字图案浮雕激光镭射紫外荧光温感复印防伪。

可办理以下真实不列颠海角大学存档留学生信息存档认证:

1不列颠海角大学真实留信网认证(网上可查永久存档无风险百分百成功入库);

2真实教育部认证(留服)等一切高仿或者真实可查认证服务(暂时不可办理);

3购买英美真实学籍(不用正常就读直接出学历);

4英美一年硕士保毕业证项目(保录取学校挂名不用正常就读保毕业)

留学本科/硕士毕业证书成绩单制作流程:

1客户提供办理信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:我们有专业老师帮你查询不列颠海角大学不列颠海角大学本科学位证成绩单);

2开始安排制作不列颠海角大学毕业证成绩单电子图;

3不列颠海角大学毕业证成绩单电子版做好以后发送给您确认;

4不列颠海角大学毕业证成绩单电子版您确认信息无误之后安排制作成品;

5不列颠海角大学成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)

— — — — — — — — — — — 《文凭顾问Q/微:95270640》这么大这么美的地方赚大钱高楼大厦鳞次栉比大街小巷人潮涌动山娃一路张望一路惊叹他发现城里的桥居然层层叠叠扭来扭去桥下没水却有着水一般的车水马龙山娃惊诧于城里的公交车那么大那么美不用买票乖乖地掷下二枚硬币空调享受还能坐着看电视呢屡经辗转山娃终于跟着父亲到家了山娃没想到父亲城里的家会如此寒碜更没料到父亲的城里竟有如此简陋的鬼地方父亲的家在高楼最底屋最下面很矮很黑是很不显眼的地下室父亲的家安在别人脚底下孰

一比一原版(UofM毕业证)明尼苏达大学毕业证成绩单

UofM毕业证【微信95270640】办文凭{明尼苏达大学毕业证}Q微Q微信95270640UofM毕业证书成绩单/学历认证UofM Diploma未毕业、挂科怎么办?+QQ微信:Q微信95270640-大学Offer(申请大学)、成绩单(申请考研)、语言证书、在读证明、使馆公证、办真实留信网认证、真实大使馆认证、学历认证

办国外明尼苏达大学明尼苏达大学毕业证假文凭教育部学历学位认证留信认证大使馆认证留学回国人员证明修改成绩单信封申请学校offer录取通知书在读证明offer letter。

快速办理高仿国外毕业证成绩单:

1明尼苏达大学毕业证+成绩单+留学回国人员证明+教育部学历认证(全套留学回国必备证明材料给父母及亲朋好友一份完美交代);

2雅思成绩单托福成绩单OFFER在读证明等留学相关材料(申请学校转学甚至是申请工签都可以用到)。

3.毕业证 #成绩单等全套材料从防伪到印刷从水印到钢印烫金高精仿度跟学校原版100%相同。

专业服务请勿犹豫联系我!联系人微信号:95270640诚招代理:本公司诚聘当地代理人员如果你有业余时间有兴趣就请联系我们。

国外明尼苏达大学明尼苏达大学毕业证假文凭办理过程:

1客户提供办理信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)。有一次山娃坐在门口写作业写着写着竟伏在桌上睡着了迷迷糊糊中山娃似乎听到了父亲的脚步声当他晃晃悠悠站起来时才诧然发现一位衣衫破旧的妇女挎着一只硕大的蛇皮袋手里拎着长铁钩正站在门口朝黑色的屋内张望不好坏人小偷山娃一怔却也灵机一动立马仰起头双手拢在嘴边朝楼上大喊:“爸爸爸——有人找——那人一听朝山娃尴尬地笑笑悻悻地走了山娃立马“嘭的一声将铁门锁死心却咚咚地乱跳当山娃跟父亲说起这事时父亲很吃惊抚摸着山娃母

Predicting Product Ad Campaign Performance: A Data Analysis Project Presentation

Predicting Product Ad Campaign Performance: A Data Analysis Project PresentationBoston Institute of Analytics

Explore our comprehensive data analysis project presentation on predicting product ad campaign performance. Learn how data-driven insights can optimize your marketing strategies and enhance campaign effectiveness. Perfect for professionals and students looking to understand the power of data analysis in advertising. for more details visit: https://bostoninstituteofanalytics.org/data-science-and-artificial-intelligence/一比一原版(CU毕业证)卡尔顿大学毕业证成绩单

CU毕业证【微信95270640】(卡尔顿大学毕业证成绩单本科学历)Q微信95270640(补办CU学位文凭证书)卡尔顿大学留信网学历认证怎么办理卡尔顿大学毕业证成绩单精仿本科学位证书硕士文凭证书认证Seneca College diplomaoffer,Transcript办理硕士学位证书造假卡尔顿大学假文凭学位证书制作CU本科毕业证书硕士学位证书精仿卡尔顿大学学历认证成绩单修改制作,办理真实认证、留信认证、使馆公证、购买成绩单,购买假文凭,购买假学位证,制造假国外大学文凭、毕业公证、毕业证明书、录取通知书、Offer、在读证明、雅思托福成绩单、假文凭、假毕业证、请假条、国际驾照、网上存档可查!

如果您是以下情况,我们都能竭诚为您解决实际问题:【公司采用定金+余款的付款流程,以最大化保障您的利益,让您放心无忧】

1、在校期间,因各种原因未能顺利毕业,拿不到官方毕业证+微信95270640

2、面对父母的压力,希望尽快拿到卡尔顿大学卡尔顿大学毕业证文凭证书;

3、不清楚流程以及材料该如何准备卡尔顿大学卡尔顿大学毕业证文凭证书;

4、回国时间很长,忘记办理;

5、回国马上就要找工作,办给用人单位看;

6、企事业单位必须要求办理的;

面向美国乔治城大学毕业留学生提供以下服务:

【★卡尔顿大学卡尔顿大学毕业证文凭证书毕业证、成绩单等全套材料,从防伪到印刷,从水印到钢印烫金,与学校100%相同】

【★真实使馆认证(留学人员回国证明),使馆存档可通过大使馆查询确认】

【★真实教育部认证,教育部存档,教育部留服网站可查】

【★真实留信认证,留信网入库存档,可查卡尔顿大学卡尔顿大学毕业证文凭证书】

我们从事工作十余年的有着丰富经验的业务顾问,熟悉海外各国大学的学制及教育体系,并且以挂科生解决毕业材料不全问题为基础,为客户量身定制1对1方案,未能毕业的回国留学生成功搭建回国顺利发展所需的桥梁。我们一直努力以高品质的教育为起点,以诚信、专业、高效、创新作为一切的行动宗旨,始终把“诚信为主、质量为本、客户第一”作为我们全部工作的出发点和归宿点。同时为海内外留学生提供大学毕业证购买、补办成绩单及各类分数修改等服务;归国认证方面,提供《留信网入库》申请、《国外学历学位认证》申请以及真实学籍办理等服务,帮助众多莘莘学子实现了一个又一个梦想。

专业服务,请勿犹豫联系我

如果您真实毕业回国,对于学历认证无从下手,请联系我,我们免费帮您递交

诚招代理:本公司诚聘当地代理人员,如果你有业余时间,或者你有同学朋友需要,有兴趣就请联系我

你赢我赢,共创双赢

你做代理,可以帮助卡尔顿大学同学朋友

你做代理,可以拯救卡尔顿大学失足青年

你做代理,可以挽救卡尔顿大学一个个人才

你做代理,你将是别人人生卡尔顿大学的转折点

你做代理,可以改变自己,改变他人,给他人和自己一个机会他交友与城里人交友但他俩就好像是两个世界里的人根本拢不到一块儿不知不觉山娃倒跟周围出租屋里的几个小伙伴成了好朋友因为他们也是从乡下进城过暑假的小学生快乐的日子总是过得飞快山娃尚未完全认清那几位小朋友时他们却一个接一个地回家了山娃这时才恍然发现二个月的暑假已转到了尽头他的城市生活也将划上一个不很圆满的句号了值得庆幸的是山娃早记下了他们的学校和联系方式说也奇怪在山娃离城的头一天父亲居然请假陪山娃耍了活

一比一原版(UMich毕业证)密歇根大学|安娜堡分校毕业证成绩单

UMich毕业证【微信95270640】办理UMich毕业证【Q微信95270640】密歇根大学|安娜堡分校毕业证书原版↑制作密歇根大学|安娜堡分校学历认证文凭办理密歇根大学|安娜堡分校留信网认证,留学回国办理毕业证成绩单文凭学历认证【Q微信95270640】专业为海外学子办理毕业证成绩单、文凭制作,学历仿制,回国人员证明、做文凭,研究生、本科、硕士学历认证、留信认证、结业证、学位证书样本、美国教育部认证百分百真实存档可查】

【本科硕士】密歇根大学|安娜堡分校密歇根大学|安娜堡分校硕士毕业证成绩单(GPA修改);学历认证(教育部认证);大学Offer录取通知书留信认证使馆认证;雅思语言证书等高仿类证书。

办理流程:

1客户提供办理密歇根大学|安娜堡分校密歇根大学|安娜堡分校硕士毕业证成绩单信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)

真实网上可查的证明材料

1教育部学历学位认证留服官网真实存档可查永久存档。

2留学回国人员证明(使馆认证)使馆网站真实存档可查。

我们对海外大学及学院的毕业证成绩单所使用的材料尺寸大小防伪结构(包括:密歇根大学|安娜堡分校密歇根大学|安娜堡分校硕士毕业证成绩单隐形水印阴影底纹钢印LOGO烫金烫银LOGO烫金烫银复合重叠。文字图案浮雕激光镭射紫外荧光温感复印防伪)都有原版本文凭对照。质量得到了广大海外客户群体的认可同时和海外学校留学中介做到与时俱进及时掌握各大院校的(毕业证成绩单资格证结业证录取通知书在读证明等相关材料)的版本更新信息能够在第一时间掌握最新的海外学历文凭的样版尺寸大小纸张材质防伪技术等等并在第一时间收集到原版实物以求达到客户的需求。

本公司还可以按照客户原版印刷制作且能够达到客户理想的要求。有需要办理证件的客户请联系我们在线客服中心微信:95270640 或咨询在线在我脑海中回旋很幸运我有这样的机会能将埋在心底深深的感激之情得以表达从婴儿的“哇哇坠地到哺育我长大您们花去了多少的心血与汗水编织了多少个日日夜夜从上小学到初中乃至大学燃烧着自己默默奉献着光和热全天下的父母都是仁慈的无私的伟大的所以无论何时何地都不要忘记您们是这样的无私将一份沉甸甸的爱和希望传递到我们的眼里和心中而我应做的就是感恩父母回报社会共创和谐父母对我们的恩情深厚而无私孝敬父母是我们做人元

SOCRadar Germany 2024 Threat Landscape Report

As Europe's leading economic powerhouse and the fourth-largest hashtag#economy globally, Germany stands at the forefront of innovation and industrial might. Renowned for its precision engineering and high-tech sectors, Germany's economic structure is heavily supported by a robust service industry, accounting for approximately 68% of its GDP. This economic clout and strategic geopolitical stance position Germany as a focal point in the global cyber threat landscape.

In the face of escalating global tensions, particularly those emanating from geopolitical disputes with nations like hashtag#Russia and hashtag#China, hashtag#Germany has witnessed a significant uptick in targeted cyber operations. Our analysis indicates a marked increase in hashtag#cyberattack sophistication aimed at critical infrastructure and key industrial sectors. These attacks range from ransomware campaigns to hashtag#AdvancedPersistentThreats (hashtag#APTs), threatening national security and business integrity.

🔑 Key findings include:

🔍 Increased frequency and complexity of cyber threats.

🔍 Escalation of state-sponsored and criminally motivated cyber operations.

🔍 Active dark web exchanges of malicious tools and tactics.

Our comprehensive report delves into these challenges, using a blend of open-source and proprietary data collection techniques. By monitoring activity on critical networks and analyzing attack patterns, our team provides a detailed overview of the threats facing German entities.

This report aims to equip stakeholders across public and private sectors with the knowledge to enhance their defensive strategies, reduce exposure to cyber risks, and reinforce Germany's resilience against cyber threats.

一比一原版(ArtEZ毕业证)ArtEZ艺术学院毕业证成绩单

ArtEZ毕业证【微信95270640】☀《ArtEZ艺术学院毕业证购买》Q微信95270640《ArtEZ毕业证模板办理》文凭、本科、硕士、研究生学历都可以做,《文凭ArtEZ毕业证书原版制作ArtEZ成绩单》《仿制ArtEZ毕业证成绩单ArtEZ艺术学院学位证书pdf电子图》毕业证

[留学文凭学历认证(留信认证使馆认证)ArtEZ艺术学院毕业证成绩单毕业证证书大学Offer请假条成绩单语言证书国际回国人员证明高仿教育部认证申请学校等一切高仿或者真实可查认证服务。

多年留学服务公司,拥有海外样板无数能完美1:1还原海外各国大学degreeDiplomaTranscripts等毕业材料。海外大学毕业材料都有哪些工艺呢?工艺难度主要由:烫金.钢印.底纹.水印.防伪光标.热敏防伪等等组成。而且我们每天都在更新海外文凭的样板以求所有同学都能享受到完美的品质服务。

国外毕业证学位证成绩单办理方法:

1客户提供办理ArtEZ艺术学院ArtEZ艺术学院毕业证假文凭信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)

— — — — 我们是挂科和未毕业同学们的福音我们是实体公司精益求精的工艺! — — — -

一真实留信认证的作用(私企外企荣誉的见证):

1:该专业认证可证明留学生真实留学身份同时对留学生所学专业等级给予评定。

2:国家专业人才认证中心颁发入库证书这个入网证书并且可以归档到地方。

3:凡是获得留信网入网的信息将会逐步更新到个人身份内将在公安部网内查询个人身份证信息后同步读取人才网入库信息。

4:个人职称评审加20分个人信誉贷款加10分。

5:在国家人才网主办的全国网络招聘大会中纳入资料供国家500强等高端企业选择人才。却怎么也笑不出来山娃很迷惑父亲的家除了一扇小铁门连窗户也没有墓穴一般阴森森有些骇人父亲的城也便成了山娃的城父亲的家也便成了山娃的家父亲让山娃呆在屋里做作业看电视最多只能在门口透透气不能跟陌生人搭腔更不能乱跑一怕迷路二怕拐子拐人山娃很惊惧去年村里的田鸡就因为跟父亲进城一不小心被人拐跑了至今不见踪影害得田鸡娘天天哭得死去活来疯了一般那情那景无不令人摧肝裂肺山娃很听话天天呆在小屋里除了看书写作业就是睡带

Investigate & Recover / StarCompliance.io / Crypto_Crimes

StarCompliance is a leading firm specializing in the recovery of stolen cryptocurrency. Our comprehensive services are designed to assist individuals and organizations in navigating the complex process of fraud reporting, investigation, and fund recovery. We combine cutting-edge technology with expert legal support to provide a robust solution for victims of crypto theft.

Our Services Include:

Reporting to Tracking Authorities:

We immediately notify all relevant centralized exchanges (CEX), decentralized exchanges (DEX), and wallet providers about the stolen cryptocurrency. This ensures that the stolen assets are flagged as scam transactions, making it impossible for the thief to use them.

Assistance with Filing Police Reports:

We guide you through the process of filing a valid police report. Our support team provides detailed instructions on which police department to contact and helps you complete the necessary paperwork within the critical 72-hour window.

Launching the Refund Process:

Our team of experienced lawyers can initiate lawsuits on your behalf and represent you in various jurisdictions around the world. They work diligently to recover your stolen funds and ensure that justice is served.

At StarCompliance, we understand the urgency and stress involved in dealing with cryptocurrency theft. Our dedicated team works quickly and efficiently to provide you with the support and expertise needed to recover your assets. Trust us to be your partner in navigating the complexities of the crypto world and safeguarding your investments.

Naive Bayes Classifier

- 2. Naïve Bayes Classifier

  Definition: In machine learning, Naive Bayes classifiers are a family of simple probabilistic classifiers based on applying Bayes' theorem with strong (naive) independence assumptions between the features.

  Potential Use Cases: It is one of the most basic text classification techniques, with various applications:
  - Email spam detection
  - Language detection
  - Sentiment detection
  - Personal email sorting
  - Document categorization

  Advantages:
  - Uses only simple probability and Bayes’ theorem
  - Computational efficiency

- 3. Basic Probability Theory

  Events and Event Probability: An “event” is a set of outcomes (a subset of all possible outcomes) with a probability attached. When flipping a coin, one of two events can happen: tail or head. Each of them has a probability of 50%. Events can be pictured with Venn diagrams.

  [Figures: events of flipping a coin; events of rain and cloud formation]

  Event Relationship:
  - Two events are disjoint (exclusive) if they can’t happen at the same time (a single coin flip cannot yield a tail and a head at the same time). For Bayes classification, we are not concerned with disjoint events.
  - Two events are independent when they can happen at the same time, but the occurrence of one event does not make the occurrence of the other more or less probable. For example, the second coin flip you make is not affected by the outcome of the first coin flip.
  - Two events are dependent if the outcome of one affects the other. In the rain example, it clearly cannot rain without cloud formation. Also, in a horse race, some horses perform better on rainy days.

- 4. Conditional Probability and Independence

  Two events are said to be independent if the result of the second event is not affected by the result of the first event. The joint probability is the product of the probabilities of the individual events.

  Two events are said to be dependent if the result of the second event is affected by the result of the first event. The joint probability is the product of the probability of the first event and the conditional probability of the second event given the first.

  Chain Rule for Computing Joint Probability:

  For dependent events:
  $$P(A, B) = P(A) \cdot P(B \mid A)$$

  For independent events, $P(B \mid A) = P(B)$, so the rule reduces to:
  $$P(A, B) = P(A) \cdot P(B)$$
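The chain rule can be checked by brute-force enumeration. The sketch below is illustrative only: it assumes a sample space of two fair coin flips, and the event names (`first_heads`, `second_heads`, `at_least_one_head`) are made up for this example rather than taken from the slides.

```python
from fractions import Fraction
from itertools import product

# Assumed sample space: two fair coin flips, all four outcomes equally likely.
OUTCOMES = list(product("HT", repeat=2))


def prob(event):
    """P(event), where event is a predicate over outcomes."""
    hits = [o for o in OUTCOMES if event(o)]
    return Fraction(len(hits), len(OUTCOMES))


def cond_prob(event, given):
    """P(event | given) = P(event and given) / P(given)."""
    return prob(lambda o: event(o) and given(o)) / prob(given)


def first_heads(o):
    return o[0] == "H"


def second_heads(o):
    return o[1] == "H"


def at_least_one_head(o):
    return "H" in o


# Independent pair: the joint probability equals the plain product.
joint_indep = prob(lambda o: first_heads(o) and second_heads(o))
product_indep = prob(first_heads) * prob(second_heads)

# Dependent pair: only the chain rule P(A) * P(B | A) matches the joint.
joint_dep = prob(lambda o: first_heads(o) and at_least_one_head(o))
chain_dep = prob(first_heads) * cond_prob(at_least_one_head, first_heads)
plain_product_dep = prob(first_heads) * prob(at_least_one_head)

print(joint_indep, product_indep)                 # 1/4 1/4
print(joint_dep, chain_dep, plain_product_dep)    # 1/2 1/2 3/8
```

For the dependent pair, multiplying the two marginal probabilities gives 3/8 instead of the true joint probability 1/2, which is why the conditional term is needed.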

- 5. Conditional Probability and Bayes Theorem

  Conditional Probability:
  $$P(c, X) = P(c \mid X) \cdot P(X) = P(X \mid c) \cdot P(c)$$

  Bayes Theorem:
  $$P(c \mid X) = \frac{P(X \mid c) \cdot P(c)}{P(X)}$$

  - Posterior Probability $P(c \mid X)$: the probability of instance X being in class c. This is what we are trying to compute.
  - Likelihood $P(X \mid c)$: the probability of generating instance X given class c. Being in class c causes you to have feature X with some probability.
  - Class Prior Probability $P(c)$: the probability of occurrence of class c. This is just how frequent class c is in our database.
  - Predictor Prior Probability $P(X)$: the probability of instance X occurring. It is ignored because it is constant.

- 6. Bayes Theorem Example

  Let’s take one example. We have the following stats:
  - 30 emails out of a total of 74 are spam messages
  - 51 emails out of those 74 contain the word “penis”
  - 20 emails containing the word “penis” have been marked as spam

  So the question is: what is the probability that the latest received email is a spam message, given that it contains the word “penis”? These two events are clearly dependent, which is why you must use the simple form of the Bayes Theorem:
  $$P(\text{spam} \mid \text{penis}) = \frac{P(\text{penis} \mid \text{spam}) \cdot P(\text{spam})}{P(\text{penis})}$$
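The arithmetic for this example can be worked through directly from the three counts on the slide. This is a minimal sketch; the variable names are illustrative, and it assumes the third bullet means that 20 of the 30 spam emails contain the word.

```python
from fractions import Fraction

# Counts taken from the slide.
total_emails = 74
spam_emails = 30
emails_with_word = 51
spam_emails_with_word = 20  # assumed: emails that are spam AND contain the word

p_spam = Fraction(spam_emails, total_emails)                       # class prior
p_word = Fraction(emails_with_word, total_emails)                  # predictor prior
p_word_given_spam = Fraction(spam_emails_with_word, spam_emails)   # likelihood

# Bayes' theorem: posterior = likelihood * prior / predictor prior.
p_spam_given_word = p_word_given_spam * p_spam / p_word

print(p_spam_given_word)                    # 20/51
print(round(float(p_spam_given_word), 3))   # 0.392
```

The result, 20/51, is the same as counting directly: of the 51 emails containing the word, 20 are spam.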

- 7. Naïve Bayes Approach

  For a single feature, applying Bayes’ theorem is simple, but it becomes more complex when handling more features.

  Let us complicate the problem above by adding to it:
  - 25 emails out of the total contain the word “viagra”
  - 24 emails out of those have been marked as spam

  So what is the probability that an email is spam, given that it contains both “viagra” and “penis”? That is, we want $P(\text{spam} \mid \text{penis}, \text{viagra})$.

  To simplify the computation, a strong (naïve) independence assumption between features is applied.
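A sketch of the two-word calculation under the naive independence assumption follows. It assumes the counts mean "20 of the 30 spam emails contain the first word" and "24 of the 25 emails containing the second word are spam". The first function approximates the evidence term as a product of per-word probabilities, as the deck does on the following slide. The second function is an alternative that is not in the slides: it normalizes over both classes, using non-spam counts derived by subtraction.

```python
from fractions import Fraction
from math import prod

TOTAL = 74
N_SPAM = 30
N_HAM = TOTAL - N_SPAM  # derived by subtraction, not stated on the slide

# word -> (emails containing the word, spam emails containing the word)
COUNTS = {"penis": (51, 20), "viagra": (25, 24)}


def product_evidence_posterior(words):
    """P(spam | words) with P(words) approximated by the product of P(word)."""
    prior = Fraction(N_SPAM, TOTAL)
    likelihood = prod(Fraction(COUNTS[w][1], N_SPAM) for w in words)
    evidence = prod(Fraction(COUNTS[w][0], TOTAL) for w in words)
    return prior * likelihood / evidence


def normalized_posterior(words):
    """Alternative: divide the spam score by the sum of both class scores."""
    spam_score = Fraction(N_SPAM, TOTAL) * prod(
        Fraction(COUNTS[w][1], N_SPAM) for w in words
    )
    ham_score = Fraction(N_HAM, TOTAL) * prod(
        Fraction(COUNTS[w][0] - COUNTS[w][1], N_HAM) for w in words
    )
    return spam_score / (spam_score + ham_score)


both = ["penis", "viagra"]
print(float(product_evidence_posterior(both)))  # about 0.929
print(float(normalized_posterior(both)))        # about 0.958
```

The product-of-marginals denominator is only an approximation and is not guaranteed to keep the result below 1, whereas normalizing over the classes always yields a valid probability. Either way, the ranking of the classes is unchanged.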

- 8. Naïve Bayes Classifier

  Learning:
  1. Compute the class prior table, which contains all $P(c)$.
  2. Compute the likelihood table, which contains $P(X_i \mid c)$ for all possible combinations of $X_i$ and $c$.

  Scoring:
  1. Given a test instance $X$, compute the posterior probability of every class $c$:
     $$P(c \mid X) \approx P(c) \prod_{i=1}^{K} P(X_i \mid c)$$
     The constant term $P(X) = \prod_{i=1}^{K} P(X_i)$ is ignored because it won’t affect the comparison across different posterior probabilities.
  2. Compare all $P(c \mid X)$ and assign the instance $X$ to the class $c^*$ which has the maximum posterior probability.

  To avoid floating point underflow, we often work with logarithms instead:
  $$\log P(c \mid X) \approx \log P(c) + \sum_{i=1}^{K} \log P(X_i \mid c)$$
  $$c^* = \arg\max_{c} \left[ \log P(c) + \sum_{i=1}^{K} \log P(X_i \mid c) \right]$$
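The learning and scoring steps can be sketched in a few lines of plain Python. This is an illustrative implementation, not the deck's own code: the function names (`train`, `log_scores`, `predict`) and the toy dataset (two yes/no features, assumed to mean "contains the word offer" and "contains the word meeting") are hypothetical. It uses direct frequency estimates with no smoothing.

```python
import math
from collections import Counter, defaultdict


def train(rows, labels):
    """Learning: build the class prior table and the likelihood table."""
    class_counts = Counter(labels)
    priors = {c: k / len(labels) for c, k in class_counts.items()}
    value_counts = defaultdict(Counter)  # (class, feature index) -> value counts
    for row, c in zip(rows, labels):
        for i, v in enumerate(row):
            value_counts[(c, i)][v] += 1
    likelihoods = {
        key: {v: k / class_counts[key[0]] for v, k in counter.items()}
        for key, counter in value_counts.items()
    }
    return priors, likelihoods


def log_scores(x, priors, likelihoods):
    """Scoring: log P(c) + sum_i log P(x_i | c) for every class c."""
    scores = {}
    for c, prior in priors.items():
        score = math.log(prior)
        for i, v in enumerate(x):
            p = likelihoods[(c, i)].get(v, 0.0)
            # A value never seen with this class has estimate 0, i.e. log = -inf.
            score += math.log(p) if p > 0 else -math.inf
        scores[c] = score
    return scores


def predict(x, priors, likelihoods):
    """Return the class with the maximum (log) posterior score."""
    scores = log_scores(x, priors, likelihoods)
    return max(scores, key=scores.get)


# Hypothetical toy data: (contains "offer", contains "meeting").
ROWS = [("yes", "no"), ("yes", "no"), ("no", "no"), ("yes", "yes"),
        ("no", "yes"), ("no", "yes"), ("yes", "yes"), ("no", "no")]
LABELS = ["spam"] * 4 + ["ham"] * 4

priors, likelihoods = train(ROWS, LABELS)
print(predict(("yes", "no"), priors, likelihoods))   # spam
print(predict(("no", "yes"), priors, likelihoods))   # ham

# Why logs: a long product of small probabilities underflows, the log sum does not.
many = [0.01] * 1000
direct_product = math.prod(many)           # 0.0 in double precision
log_sum = sum(math.log(p) for p in many)   # finite, roughly -4605
```

Because the logarithm is monotonic, taking the arg max over log scores selects the same class as taking it over the raw products.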

- 9. Handling Insufficient Data Problem

  Both prior and conditional probabilities must be estimated from training data and are therefore subject to error. If we have only a few training instances, direct probability computation can produce the extreme values 0 or 1.

  Example: Suppose we try to predict whether a patient has an allergy based on whether they have a cough, so we need to estimate P(allergy|cough). If all patients in the training data have a cough, then P(cough=true|allergy) = 1 and P(cough=false|allergy) = 1 - P(cough=true|allergy) = 0. Then we have
  $$P(\text{allergy} \mid \text{cough}=\text{false}) \propto P(\text{cough}=\text{false} \mid \text{allergy}) \cdot P(\text{allergy}) = 0$$
  - This means that no non-coughing person can have an allergy, which is not true.
  - The error arises because the training data contain no observations of non-coughing patients.

  Solution: We need to smooth the estimates of conditional probabilities to eliminate zeros.
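The zero-probability problem is easy to reproduce. The sketch below uses a made-up training set (the counts are hypothetical, chosen so that every allergy patient coughs) and plain frequency ratios as estimates.

```python
from fractions import Fraction

# Hypothetical training data: (has_allergy, has_cough) pairs.
# Every allergy patient in this sample happens to have a cough.
PATIENTS = [(True, True)] * 5 + [(False, True)] * 2 + [(False, False)] * 3


def direct_estimate(cough, allergy):
    """P(cough = cough | allergy = allergy) as a raw frequency ratio."""
    in_class = [p for p in PATIENTS if p[0] == allergy]
    matches = [p for p in in_class if p[1] == cough]
    return Fraction(len(matches), len(in_class))


def unnormalized_posterior(allergy, cough):
    """P(cough | allergy) * P(allergy), proportional to P(allergy | cough)."""
    prior = Fraction(sum(1 for p in PATIENTS if p[0] == allergy), len(PATIENTS))
    return direct_estimate(cough, allergy) * prior


print(direct_estimate(cough=True, allergy=True))          # 1
print(direct_estimate(cough=False, allergy=True))         # 0
print(unnormalized_posterior(allergy=True, cough=False))  # 0
```

A single zero factor wipes out the whole product, so the classifier can never predict an allergy for a non-coughing patient, no matter what other evidence is available.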

- 10. Laplace Smoothing

  Assume a binary attribute $X_i$.

  Direct estimate:
  $$P(X_i = 1 \mid c) = \frac{n_{c,i,1}}{n_c} \qquad P(X_i = 0 \mid c) = \frac{n_{c,i,0}}{n_c}$$

  Laplace estimate:
  $$P(X_i = 1 \mid c) = \frac{n_{c,i,1} + 1}{n_c + 2} \qquad P(X_i = 0 \mid c) = \frac{n_{c,i,0} + 1}{n_c + 2}$$
  This is equivalent to a prior observation of one example of class $c$ where $X_i = 0$ and one where $X_i = 1$.

  Generalized Laplace estimate:
  $$P(X_i = v \mid c) = \frac{n_{c,i,v} + 1}{n_c + s_i}$$
  - $n_{c,i,v}$: number of examples in $c$ where $X_i = v$
  - $n_c$: number of examples in $c$
  - $s_i$: number of possible values for $X_i$
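The generalized Laplace estimate translates into a one-line function. In this sketch the function name and the example counts are illustrative; the parameter names mirror the symbols above.

```python
from fractions import Fraction


def laplace_estimate(n_civ, n_c, s_i):
    """Generalized Laplace estimate: (n_{c,i,v} + 1) / (n_c + s_i)."""
    if s_i < 1 or not 0 <= n_civ <= n_c:
        raise ValueError("counts must satisfy 0 <= n_civ <= n_c and s_i >= 1")
    return Fraction(n_civ + 1, n_c + s_i)


# Binary attribute, 5 examples in class c, all of them with X_i = 1.
p_one = laplace_estimate(n_civ=5, n_c=5, s_i=2)   # 6/7 instead of 1
p_zero = laplace_estimate(n_civ=0, n_c=5, s_i=2)  # 1/7 instead of 0

# Three-valued attribute with value counts 3, 2 and 0 among 5 examples.
three_valued = [laplace_estimate(n, 5, 3) for n in (3, 2, 0)]

print(p_one, p_zero)      # 6/7 1/7
print(sum(three_valued))  # 1
```

Adding one pseudo-count per possible value keeps every estimate strictly between 0 and 1, and the estimates for a given attribute and class still sum to 1.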

- 11. Comments on Naïve Bayes Classifier
  - It generally works well despite the blanket independence assumption.
  - Experiments show that it is quite competitive with other methods on standard datasets.
  - Even when the independence assumptions are violated and the probability estimates are inaccurate, the method may still find the maximum-probability category.
  - The hypothesis is constructed directly from parameter estimates derived from the training data, with no search.
  - The hypothesis is not guaranteed to fit the training data.