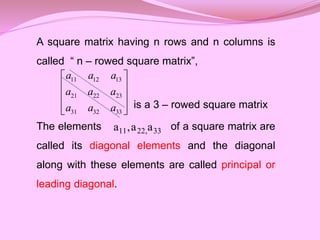

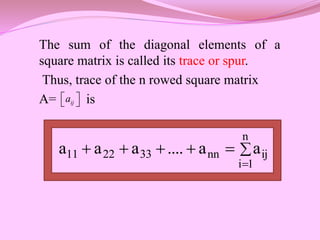

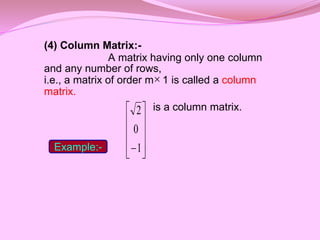

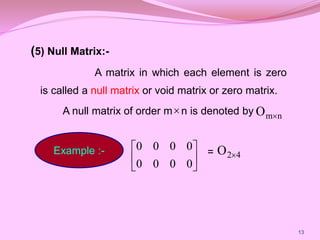

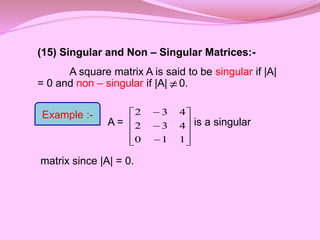

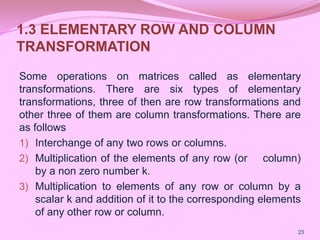

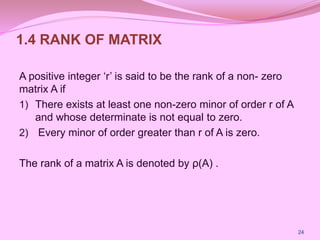

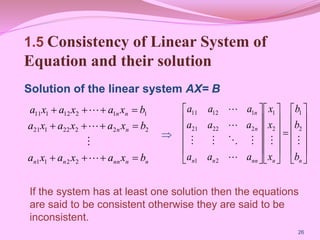

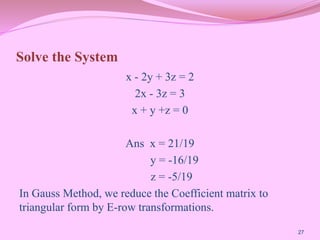

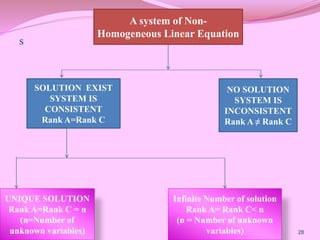

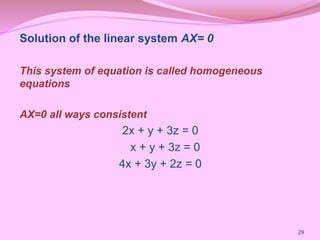

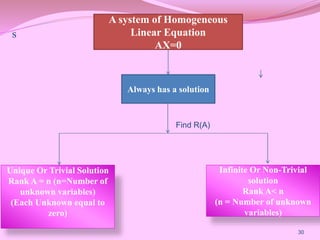

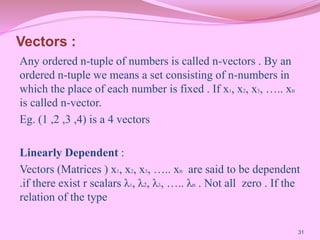

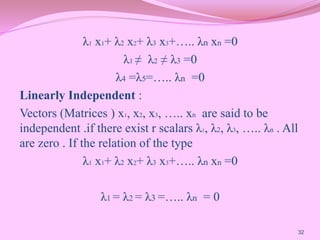

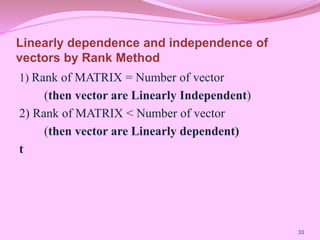

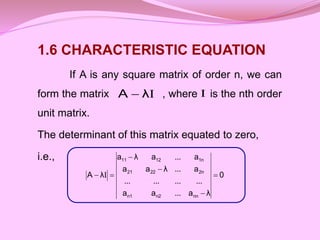

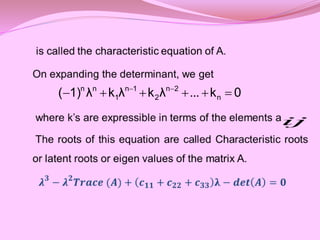

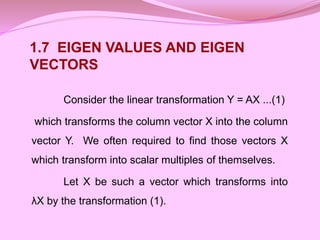

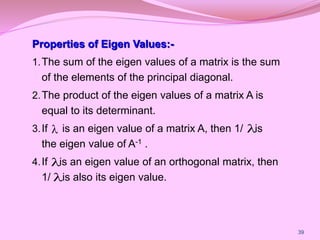

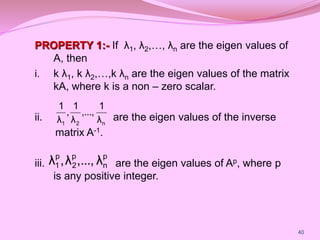

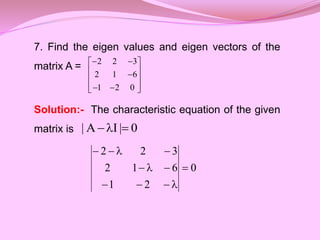

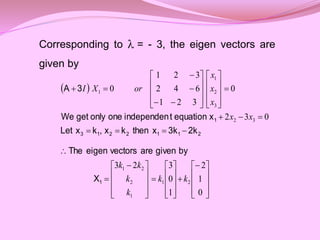

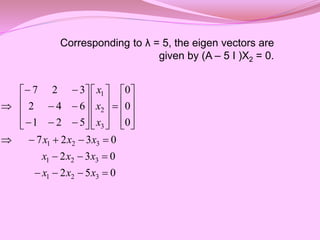

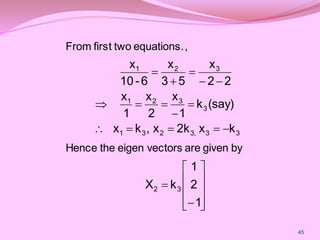

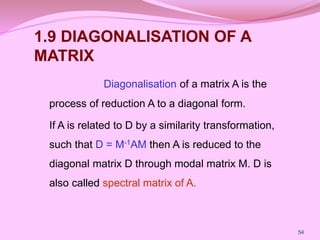

This document provides definitions and examples of different types of matrices including: real matrix, square matrix, row matrix, column matrix, null matrix, sub-matrix, diagonal matrix, scalar matrix, unit matrix, upper triangular matrix, lower triangular matrix, triangular matrix, single element matrix, equal matrices, singular and non-singular matrices. It also discusses elementary row and column transformations, rank of a matrix, solutions to homogeneous and non-homogeneous systems of linear equations, characteristic equations, eigenvectors and eigenvalues.

![(3) Row Matrix:

A matrix having only one row and any number of columns, i.e., a matrix of order $1 \times n$, is called a row matrix.

Example: $\begin{bmatrix} 2 & 5 & -3 & 0 \end{bmatrix}$ is a row matrix.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-11-320.jpg)

![(7) Diagonal Matrix:

A square matrix in which all non-diagonal elements are zero is called a diagonal matrix, i.e., $A = [a_{ij}]_{n \times n}$ is a diagonal matrix if $a_{ij} = 0$ for $i \neq j$.

Example: $\begin{bmatrix} 2 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 0 \end{bmatrix}$ is a diagonal matrix.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-15-320.jpg)

![(8) Scalar Matrix:

A diagonal matrix in which all the diagonal elements are equal to a scalar, say $k$, is called a scalar matrix, i.e., $A = [a_{ij}]_{n \times n}$ is a scalar matrix if

$a_{ij} = \begin{cases} 0 & \text{when } i \neq j \\ k & \text{when } i = j \end{cases}$

Example: $\begin{bmatrix} 2 & 0 & 0 \\ 0 & 2 & 0 \\ 0 & 0 & 2 \end{bmatrix}$ is a scalar matrix.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-16-320.jpg)

![(9) Unit Matrix or Identity Matrix:

A scalar matrix in which each diagonal element is 1 is called a unit or identity matrix. It is denoted by $I_n$, i.e., $A = [a_{ij}]_{n \times n}$ is a unit matrix if

$a_{ij} = \begin{cases} 0 & \text{when } i \neq j \\ 1 & \text{when } i = j \end{cases}$

Example: $\begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}$ is a unit matrix.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-17-320.jpg)
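The definitions of diagonal, scalar, and unit matrices nest inside one another, which a few small predicates make explicit. The following is a minimal sketch in plain Python, assuming a matrix is stored as a list of rows; the function names and the `mixed_diagonal` example are illustrative and do not come from the slides.

```python
def is_square(a):
    """True when `a` is a non-empty n x n list of rows."""
    return len(a) > 0 and all(len(row) == len(a) for row in a)


def is_diagonal(a):
    """Square, with a[i][j] == 0 whenever i != j."""
    n = len(a)
    return is_square(a) and all(
        a[i][j] == 0 for i in range(n) for j in range(n) if i != j
    )


def is_scalar(a):
    """Diagonal, with every diagonal entry equal to the same value k."""
    return is_diagonal(a) and all(a[i][i] == a[0][0] for i in range(len(a)))


def is_identity(a):
    """Scalar matrix whose common diagonal value is 1."""
    return is_scalar(a) and a[0][0] == 1


scalar_example = [[2, 0, 0], [0, 2, 0], [0, 0, 2]]  # scalar matrix with k = 2
unit_example = [[1, 0], [0, 1]]                     # unit matrix of order 2
mixed_diagonal = [[4, 0], [0, 7]]                   # hypothetical: diagonal, not scalar

print(is_scalar(scalar_example), is_identity(scalar_example))
print(is_identity(unit_example))
print(is_diagonal(mixed_diagonal), is_scalar(mixed_diagonal))
```

Each predicate calls the one before it, mirroring the way the definitions are stated: every unit matrix is a scalar matrix, and every scalar matrix is a diagonal matrix.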

![(10) Upper Triangular Matrix:

A square matrix in which all the elements below the principal diagonal are zero is called an upper triangular matrix, i.e., $A = [a_{ij}]_{n \times n}$ is an upper triangular matrix if $a_{ij} = 0$ for $i > j$.

Example: $\begin{bmatrix} 2 & 3 & 4 \\ 0 & 1 & 5 \\ 0 & 0 & 3 \end{bmatrix}$ is an upper triangular matrix.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-18-320.jpg)

![(11) Lower Triangular Matrix:

A square matrix in which all the elements above the principal diagonal are zero is called a lower triangular matrix, i.e., $A = [a_{ij}]_{n \times n}$ is a lower triangular matrix if $a_{ij} = 0$ for $i < j$.

Example: $\begin{bmatrix} 1 & 0 & 0 \\ 5 & 6 & 0 \\ 3 & 2 & 0 \end{bmatrix}$ is a lower triangular matrix.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-19-320.jpg)

![(12) Triangular Matrix:

A triangular matrix is either upper triangular or lower triangular.

(13) Single Element Matrix:

A matrix having only one element is called a single element matrix, e.g., [3] is a single element matrix.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-20-320.jpg)
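The index conditions in definitions (10) to (12) translate directly into loops over the entries below or above the principal diagonal. Here is a small sketch in plain Python, assuming list-of-rows storage; the function names and the sample matrices are hypothetical and chosen only for illustration.

```python
def is_upper_triangular(a):
    """Square, with a[i][j] == 0 for every i > j (below the diagonal)."""
    n = len(a)
    if n == 0 or any(len(row) != n for row in a):
        return False
    return all(a[i][j] == 0 for i in range(n) for j in range(i))


def is_lower_triangular(a):
    """Square, with a[i][j] == 0 for every i < j (above the diagonal)."""
    n = len(a)
    if n == 0 or any(len(row) != n for row in a):
        return False
    return all(a[i][j] == 0 for i in range(n) for j in range(i + 1, n))


def is_triangular(a):
    """Triangular means upper triangular or lower triangular."""
    return is_upper_triangular(a) or is_lower_triangular(a)


upper = [[1, 2], [0, 3]]    # hypothetical example
lower = [[1, 0], [4, 5]]    # hypothetical example
neither = [[1, 2], [3, 4]]  # hypothetical example
single = [[3]]              # single element matrix

print(is_upper_triangular(upper), is_lower_triangular(upper))
print(is_upper_triangular(lower), is_lower_triangular(lower))
print(is_triangular(neither))
print(is_upper_triangular(single) and is_lower_triangular(single))
```

A single element matrix has no off-diagonal entries, so both conditions hold vacuously and it counts as upper and lower triangular at once.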

![(14) Equal Matrices:

Two matrices A and B are said to be equal iff they have the same order and their corresponding elements are equal, i.e., if $A = [a_{ij}]_{m \times n}$ and $B = [b_{ij}]_{p \times q}$, then $A = B$ iff

a) $m = p$ and $n = q$

b) $a_{ij} = b_{ij}$ for all $i$ and $j$.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-21-320.jpg)
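The two conditions for equality, same order and equal corresponding elements, can be checked in that sequence. A minimal sketch in plain Python, assuming list-of-rows storage with rows of equal length; `order` and `matrices_equal` are illustrative names, and the sample matrices are made up for the demonstration.

```python
def order(a):
    """Return (rows, columns) of a matrix stored as a list of equal-length rows."""
    return (len(a), len(a[0]) if a else 0)


def matrices_equal(a, b):
    """A = B iff the orders match and a[i][j] == b[i][j] for all i and j."""
    if order(a) != order(b):  # condition (a): m = p and n = q
        return False
    return all(               # condition (b): element-wise equality
        x == y for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b)
    )


p = [[1, 2, 3], [4, 5, 6]]  # hypothetical 2 x 3 matrix
q = [[1, 2, 3], [4, 5, 6]]  # same order, same elements
r = [[1, 2], [3, 4], [5, 6]]  # same elements read in sequence, but order 3 x 2

print(matrices_equal(p, q))
print(matrices_equal(p, r))
```

Checking the order first matters: `p` and `r` hold the same six numbers, yet they are not equal because their orders differ.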

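![$(-2-\lambda)[-\lambda(1-\lambda) - 12] - 2[-2\lambda - 6] - 3[-4 + 1(1-\lambda)] = 0$

$\lambda^3 + \lambda^2 - 21\lambda - 45 = 0$

By trial, $\lambda = -3$ satisfies it.

$(\lambda + 3)(\lambda^2 - 2\lambda - 15) = 0$

$(\lambda + 3)(\lambda + 3)(\lambda - 5) = 0$

$\lambda = -3, -3, 5$

Thus, the eigenvalues of A are -3, -3, 5.](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-42-320.jpg)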
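The factorisation on this slide can be checked by multiplying the factors back together. The sketch below assumes the cubic reads $\lambda^3 + \lambda^2 - 21\lambda - 45 = 0$; the signs are an assumption, recovered from the stated eigenvalues -3, -3, 5. Polynomials are stored as coefficient lists, highest power first, and the helper names are illustrative.

```python
def poly_mul(p, q):
    """Multiply two polynomials given as coefficient lists, highest power first."""
    out = [0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b
    return out


def poly_eval(p, x):
    """Evaluate a polynomial at x using Horner's rule."""
    acc = 0
    for c in p:
        acc = acc * x + c
    return acc


# Assumed characteristic polynomial: lambda^3 + lambda^2 - 21*lambda - 45
char_poly = [1, 1, -21, -45]

# (lambda + 3)(lambda + 3)(lambda - 5), expanded term by term
expanded = poly_mul(poly_mul([1, 3], [1, 3]), [1, -5])

print(expanded == char_poly)
print([poly_eval(char_poly, root) for root in (-3, 5)])
```

The intermediate step $(\lambda + 3)(\lambda^2 - 2\lambda - 15)$ can be checked the same way with `poly_mul([1, 3], [1, -2, -15])`.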

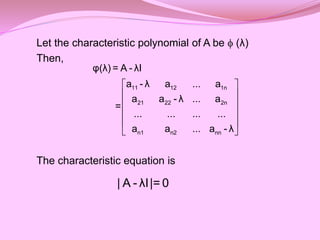

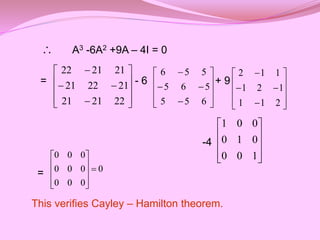

![1.8 CAYLEY HAMILTON THEOREM

Every square matrix satisfies its own characteristic equation.

Let $A = [a_{ij}]_{n \times n}$ be a square matrix; then

$A = \begin{bmatrix} a_{11} & a_{12} & \dots & a_{1n} \\ a_{21} & a_{22} & \dots & a_{2n} \\ \vdots & \vdots & & \vdots \\ a_{n1} & a_{n2} & \dots & a_{nn} \end{bmatrix}$](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-46-320.jpg)

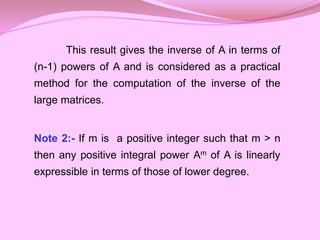

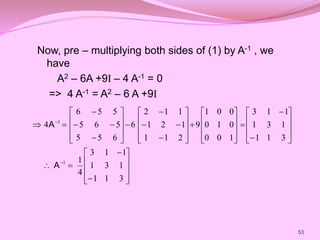

![For the characteristic equation

$p_0\lambda^n + p_1\lambda^{n-1} + p_2\lambda^{n-2} + \dots + p_n = 0$

we are to prove that

$p_0A^n + p_1A^{n-1} + p_2A^{n-2} + \dots + p_nI = 0 \quad \dots (1)$

Note 1: Premultiplying equation (1) by $A^{-1}$, we have

$0 = p_0A^{n-1} + p_1A^{n-2} + p_2A^{n-3} + \dots + p_{n-1}I + p_nA^{-1}$

$A^{-1} = -\dfrac{1}{p_n}\left[p_0A^{n-1} + p_1A^{n-2} + p_2A^{n-3} + \dots + p_{n-1}I\right]$](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-48-320.jpg)
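Note 1 gives a way to compute $A^{-1}$ from the coefficients of the characteristic equation, provided $p_n \neq 0$ (that is, A is non-singular). The sketch below applies that formula in plain Python with exact fractions. It assumes the coefficients $p_0, \dots, p_n$ are already known and passed in; the function names and the 2 x 2 matrix are hypothetical, chosen because its characteristic equation $\lambda^2 - 5\lambda + 5 = 0$ is easy to verify by hand.

```python
from fractions import Fraction


def mat_mul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def identity(n):
    """Unit matrix of order n with Fraction entries."""
    return [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]


def inverse_from_char_coeffs(a, p):
    """A^-1 = -(1/p_n) [p_0 A^(n-1) + p_1 A^(n-2) + ... + p_(n-1) I].

    `p` lists p_0 .. p_n of the characteristic equation; p_n must be non-zero.
    """
    n = len(a)
    if len(p) != n + 1 or p[-1] == 0:
        raise ValueError("need n + 1 coefficients with non-zero p_n")
    # Horner-style accumulation of p_0 A^(n-1) + ... + p_(n-1) I
    acc = [[p[0] * x for x in row] for row in identity(n)]
    for k in range(1, n):
        acc = mat_mul(acc, a)
        for i in range(n):
            acc[i][i] += p[k]
    return [[-x / Fraction(p[-1]) for x in row] for row in acc]


A = [[2, 1], [1, 3]]   # hypothetical example matrix
coeffs = [1, -5, 5]    # lambda^2 - 5*lambda + 5 = 0 (trace 5, determinant 5)

A_inv = inverse_from_char_coeffs(A, coeffs)
print(A_inv)
print(mat_mul(A, A_inv) == identity(2))
```

Using `Fraction` keeps the division by $p_n$ exact, so the product of A and the computed inverse can be compared with the unit matrix directly.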

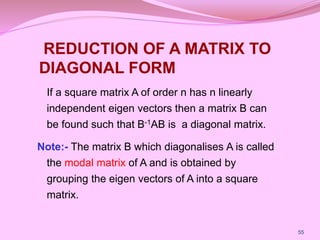

![

3

0

0

0

2

0

0

0

1

2

0

0

2

1

-

0

3

1

1

3

0

0

1

2

0

2

1

1

2

1

0

0

1

1

-

0

2

1

1

1

AM

M 1

62

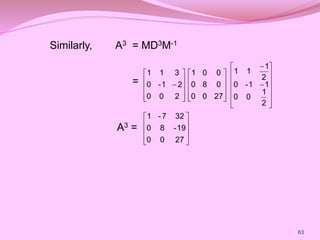

Now, since D = M-1AM

=> A = MDM-1

A2 = (MDM-1) (MDM-1)

= MD2M-1 [since M-1M = I]](https://image.slidesharecdn.com/matrixppt-230208150112-07b118ba/85/Matrix_PPT-pptx-62-320.jpg)
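The same cancellation of $M^{-1}M$ repeats for higher powers, giving $A^k = MD^kM^{-1}$, and $D^k$ only requires raising each diagonal entry to the power k. A minimal sketch in plain Python follows. The 2 x 2 matrix, its modal matrix `M`, and the helper names are hypothetical and not taken from the slides; `M` holds the eigenvectors (1, -1) and (1, 1) of `A` as columns, for eigenvalues 1 and 3.

```python
from fractions import Fraction


def mat_mul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def diag(values):
    """Diagonal matrix with the given diagonal entries."""
    n = len(values)
    return [[values[i] if i == j else 0 for j in range(n)] for i in range(n)]


def power_via_diagonalisation(m, eigenvalues, m_inv, k):
    """A^k = M D^k M^-1, where D = diag(eigenvalues) and D = M^-1 A M."""
    d_k = diag([lam ** k for lam in eigenvalues])
    return mat_mul(mat_mul(m, d_k), m_inv)


A = [[2, 1], [1, 2]]               # hypothetical matrix with eigenvalues 1 and 3
M = [[1, 1], [-1, 1]]              # eigenvectors as columns
half = Fraction(1, 2)
M_inv = [[half, -half], [half, half]]
eigenvalues = [1, 3]

A_squared = power_via_diagonalisation(M, eigenvalues, M_inv, 2)
print(A_squared == mat_mul(A, A))
print(power_via_diagonalisation(M, eigenvalues, M_inv, 1) == A)
```

For large k this replaces k - 1 matrix multiplications with two, since only the diagonal entries are raised to the power.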