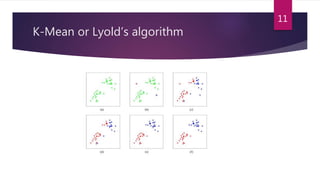

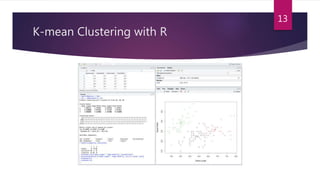

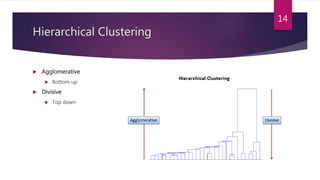

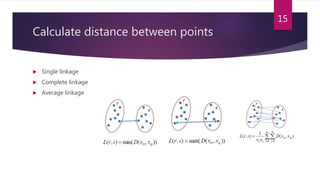

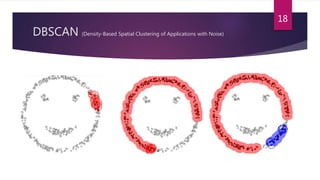

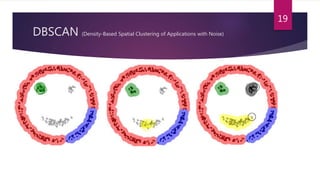

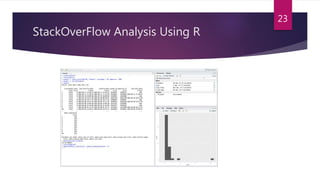

The document presents an overview of data science and its applications, with an emphasis on machine learning and clustering algorithms. It discusses three families of clustering methods (partitioning, hierarchical, and density-based) and highlights their use in applications such as market segmentation and city planning. It also covers practical topics, including implementing k-means clustering in R and analyzing high-dimensional data.
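To make the partitioning approach concrete, here is a minimal k-means sketch. It is written in Python rather than the R used in the document, and it is not the document's own code: the function name `kmeans`, the toy `points`, and the choice to seed the centroids with the first `k` points are all assumptions made for illustration.

```python
from math import dist  # Euclidean distance, Python 3.8+


def kmeans(points, k, max_iter=100):
    """Plain Lloyd-style k-means on a list of equal-length numeric tuples.

    Assumption for illustration: the initial centroids are the first k
    points, which keeps the run deterministic. Real implementations
    usually pick random or k-means++ starting points.
    """
    centroids = [tuple(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(max_iter):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda j: dist(p, centroids[j]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                mean = tuple(sum(c) / len(members) for c in zip(*members))
                new_centroids.append(mean)
            else:
                # Keep an empty cluster's centroid where it was.
                new_centroids.append(centroids[j])
        if new_centroids == centroids:
            break  # assignments have stabilised
        centroids = new_centroids
    return labels, centroids


# Hypothetical toy data: two visually separate groups in 2D.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5),
          (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
labels, centroids = kmeans(points, k=2)
print(labels)
print(centroids)
```

On this toy data the first three points and the last three points end up in separate clusters. In R, the built-in `kmeans()` function from the `stats` package plays the same role.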