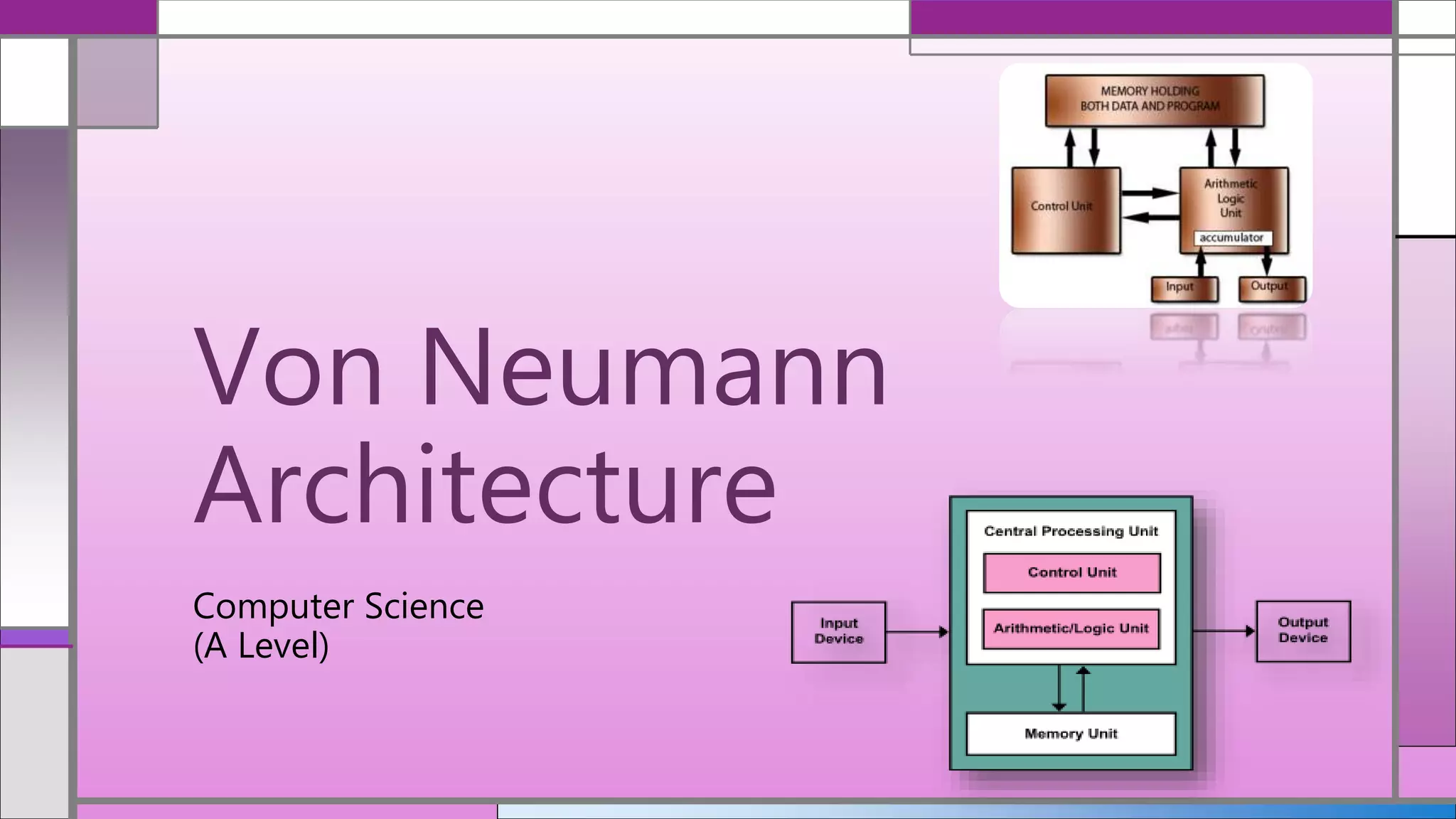

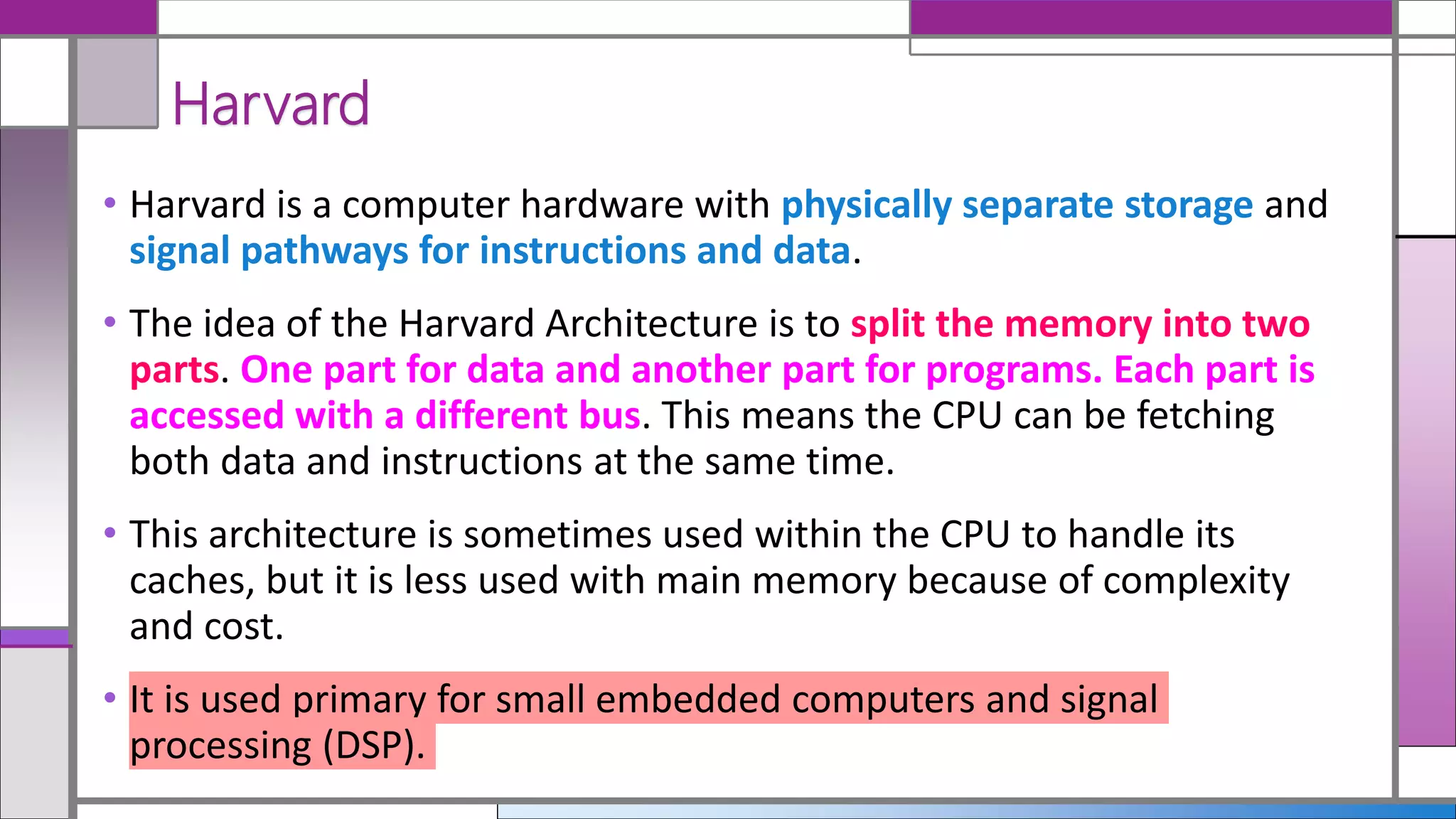

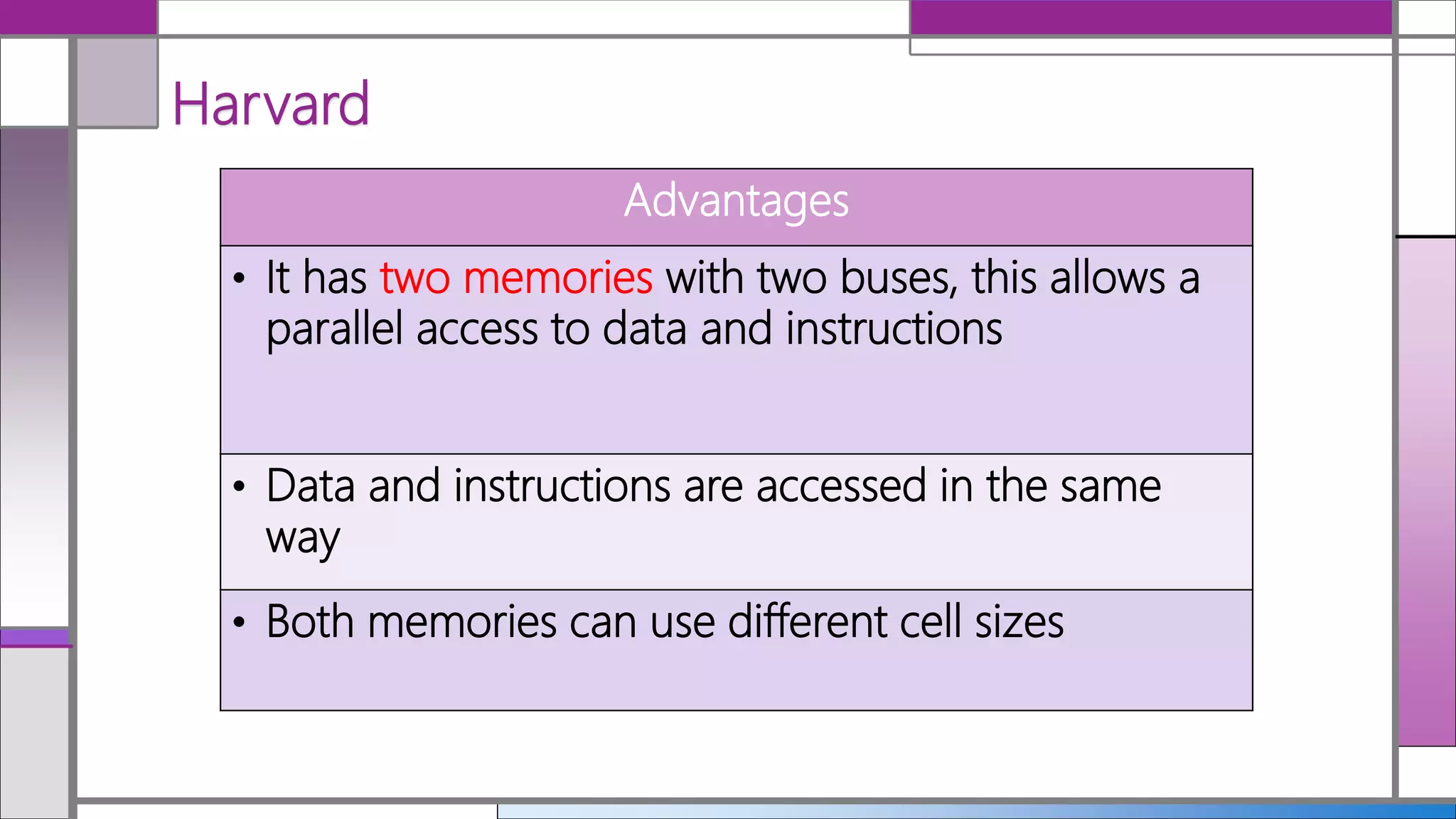

This document compares the Von Neumann and Harvard architectures, covering their definitions, advantages, and disadvantages. The Von Neumann architecture stores both data and instructions in a single memory, which simplifies the design but forces instruction fetches and data accesses to share one memory path, a limitation commonly called the Von Neumann bottleneck. The Harvard architecture, in contrast, uses separate memories for data and instructions, so both can be accessed in parallel, at the price of greater design complexity and cost.
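
The difference in memory access can be illustrated with a toy cycle count. The sketch below is a deliberately idealized model and not a description of any real processor: it assumes every memory access takes exactly one cycle, and that a Harvard machine can fully overlap a data access with an instruction fetch. The `Instruction` class, the function names, and the four-instruction program are all hypothetical, introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instruction:
    name: str
    touches_data: bool  # True if the instruction reads or writes data memory


def von_neumann_cycles(program):
    """Shared memory: each fetch and each data access needs its own cycle."""
    return sum(1 + (1 if ins.touches_data else 0) for ins in program)


def harvard_cycles(program):
    """Separate memories (idealized): a data access overlaps a fetch,
    so only the instruction fetches determine the cycle count."""
    return len(program)


# Hypothetical program: two of the four instructions access data memory.
program = [
    Instruction("LOAD", touches_data=True),
    Instruction("ADD", touches_data=False),
    Instruction("STORE", touches_data=True),
    Instruction("JUMP", touches_data=False),
]

print("Von Neumann cycles:", von_neumann_cycles(program))  # 4 fetches + 2 data accesses = 6
print("Harvard cycles:", harvard_cycles(program))          # 4 fetches, data accesses overlapped = 4
```

Under these assumptions the shared-memory machine spends six cycles on memory traffic while the split-memory machine spends four. The model ignores caches, pipelining hazards, and the extra hardware the second memory path requires, which is where the Harvard design's added complexity and cost come from.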