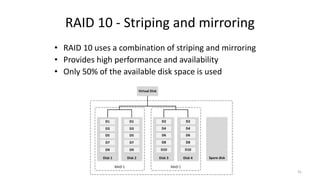

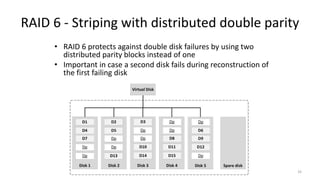

This document gives an overview of storage technologies and concepts. It traces their history from early drum memory to modern hard disks and solid-state drives. Key topics include RAID configurations, disk interfaces such as SATA and SAS, tape storage, storage controllers, and virtual tape libraries. It concludes with a discussion of Kryder's law and projections for future disk capacity growth.