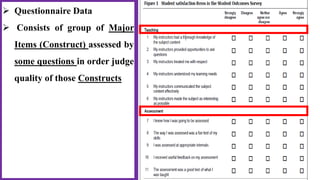

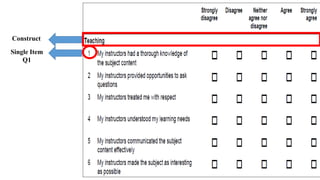

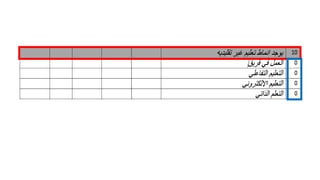

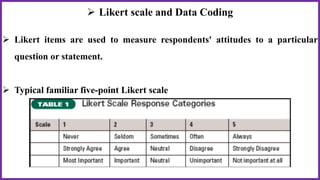

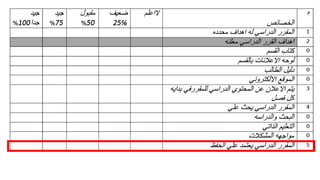

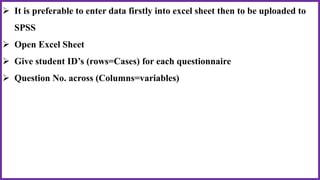

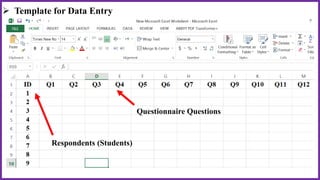

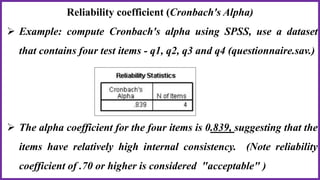

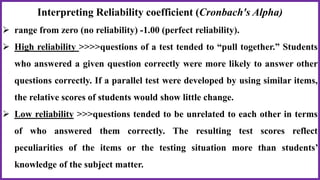

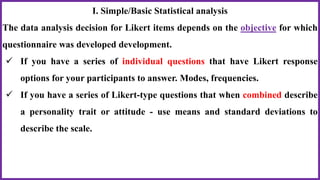

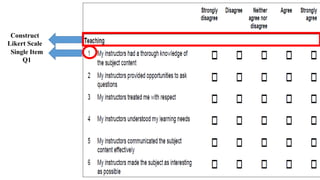

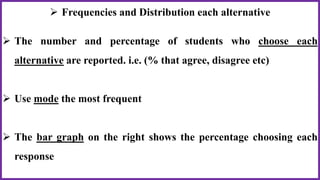

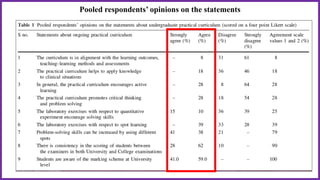

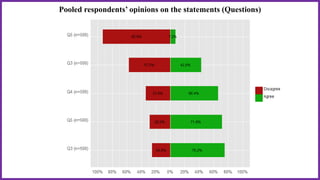

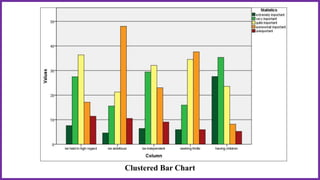

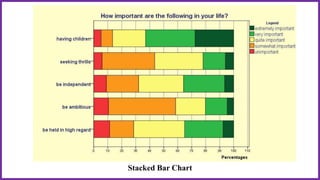

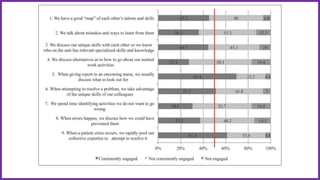

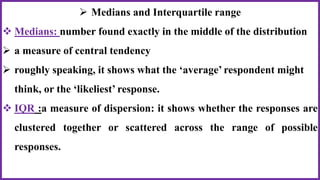

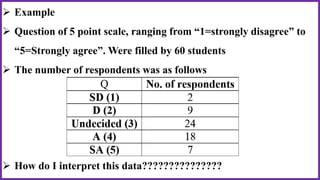

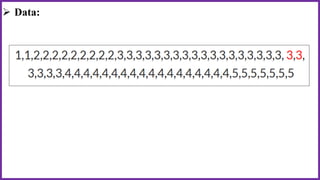

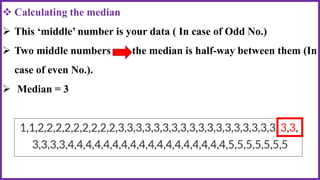

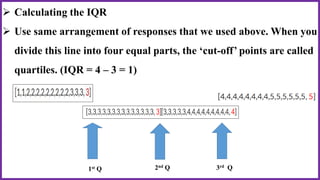

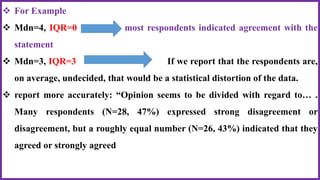

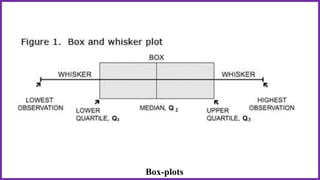

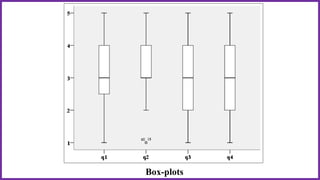

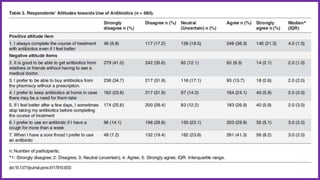

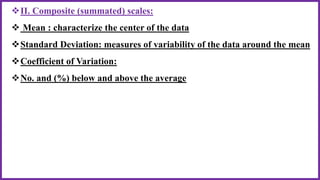

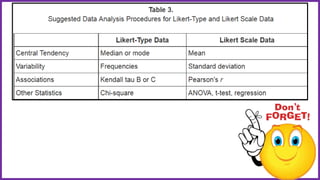

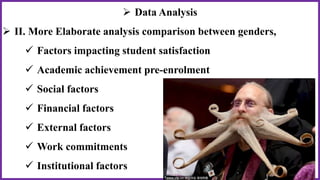

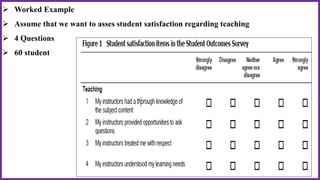

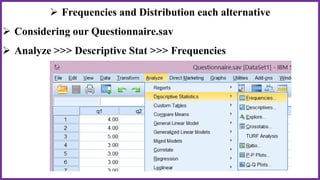

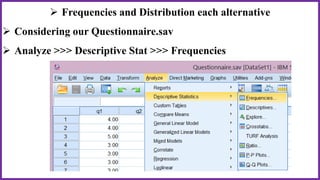

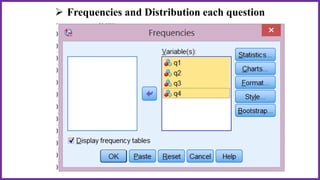

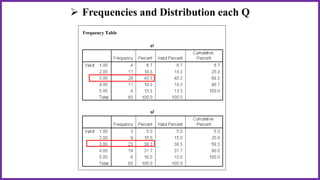

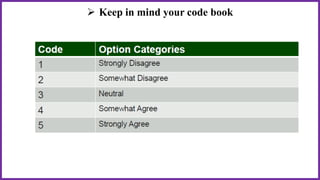

This document gives an overview of the statistical analysis of questionnaire data. It covers questionnaire construction, data entry, and reliability analysis using Cronbach's alpha. For individual Likert-scale items, it describes descriptive statistics (frequencies, medians, and interquartile ranges) and box plots. For composite scales, it covers means, standard deviations, and comparisons between groups. A worked example assesses student satisfaction with teaching, using 4 questionnaire items answered by 60 students. Results are to be reported in tables and figures, each accompanied by an interpretation.
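As a rough illustration of the analyses listed above, the following Python sketch computes Cronbach's alpha, per-item frequencies, medians and interquartile ranges, and a composite-scale mean and standard deviation. The responses are randomly generated stand-ins shaped like the example (60 respondents, 4 items, 5-point scale); they are not the document's data, and the function names `cronbach_alpha` and `item_summary` are hypothetical helpers introduced only for this sketch.

```python
import random
import statistics


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores)),
    using sample variances throughout.
    """
    k = len(rows[0])
    item_vars = [statistics.variance(col) for col in zip(*rows)]
    total_var = statistics.variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def item_summary(scores):
    """Frequencies, median, and interquartile range for one Likert item (1 to 5)."""
    q1, _, q3 = statistics.quantiles(scores, n=4)
    return {
        "counts": {level: scores.count(level) for level in range(1, 6)},
        "median": statistics.median(scores),
        "iqr": q3 - q1,
    }


# Synthetic data (assumption): 60 respondents x 4 items on a 1-5 scale.
# Each respondent has a base level plus small per-item noise, so items correlate.
random.seed(0)
rows = []
for _ in range(60):
    base = random.randint(2, 5)
    rows.append([min(5, max(1, base + random.choice([-1, 0, 0, 1])))
                 for _ in range(4)])

print(f"Cronbach's alpha: {cronbach_alpha(rows):.3f}")

# Item-level description: ordinal data, so report counts, median, and IQR.
for i, col in enumerate(zip(*rows), start=1):
    print(f"item {i}:", item_summary(list(col)))

# Composite scale: mean of the 4 items per respondent, then mean and SD.
composite = [statistics.mean(r) for r in rows]
print(f"composite mean: {statistics.mean(composite):.2f}, "
      f"SD: {statistics.stdev(composite):.2f}")
```

The split between item-level and composite-level summaries mirrors the distinction drawn above: single Likert items are treated as ordinal (median and interquartile range), while the composite score is summarized with a mean and standard deviation. Note that `statistics.quantiles` uses its default "exclusive" method here, so quartiles may differ slightly from those produced by other statistical packages.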