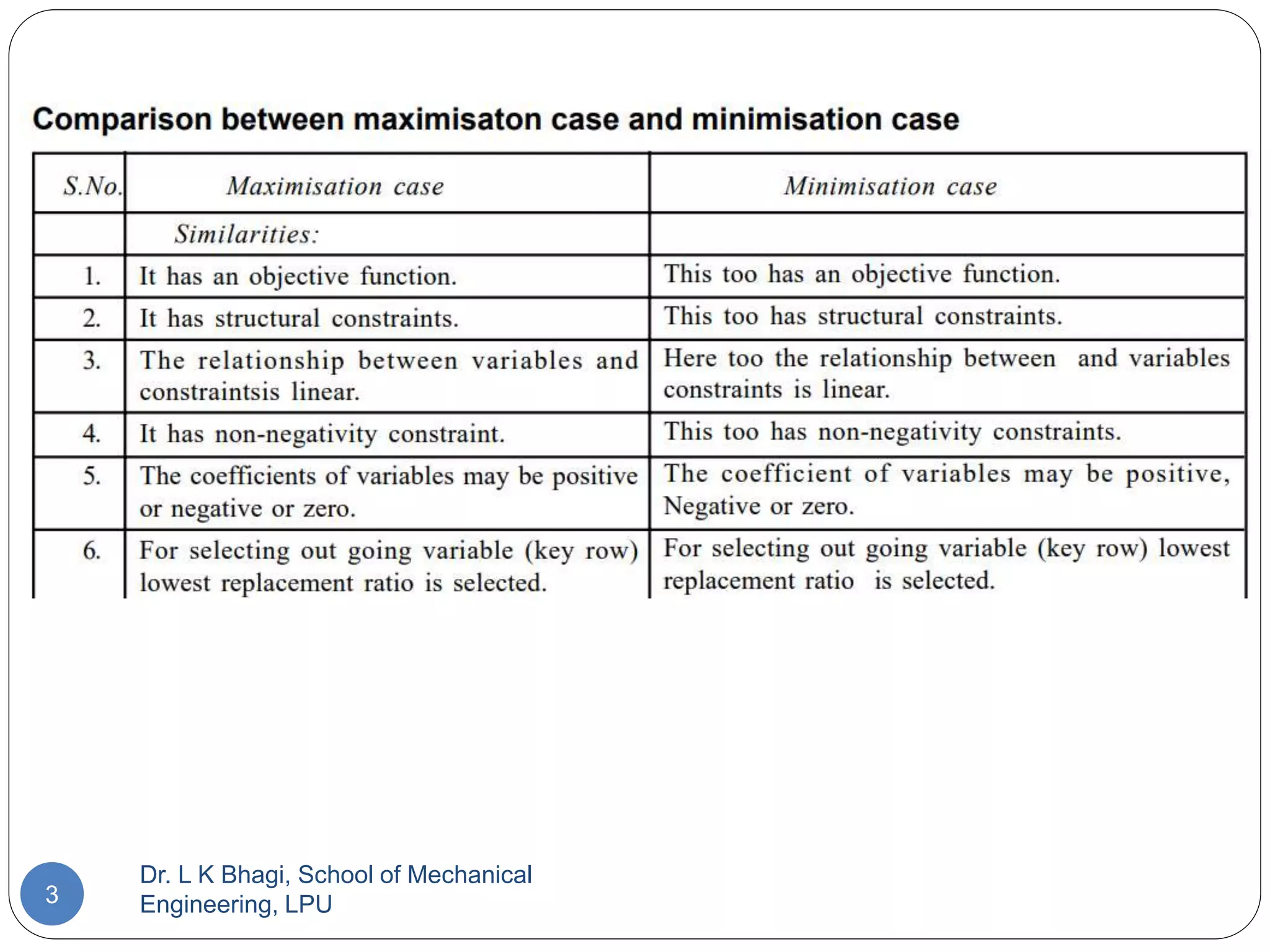

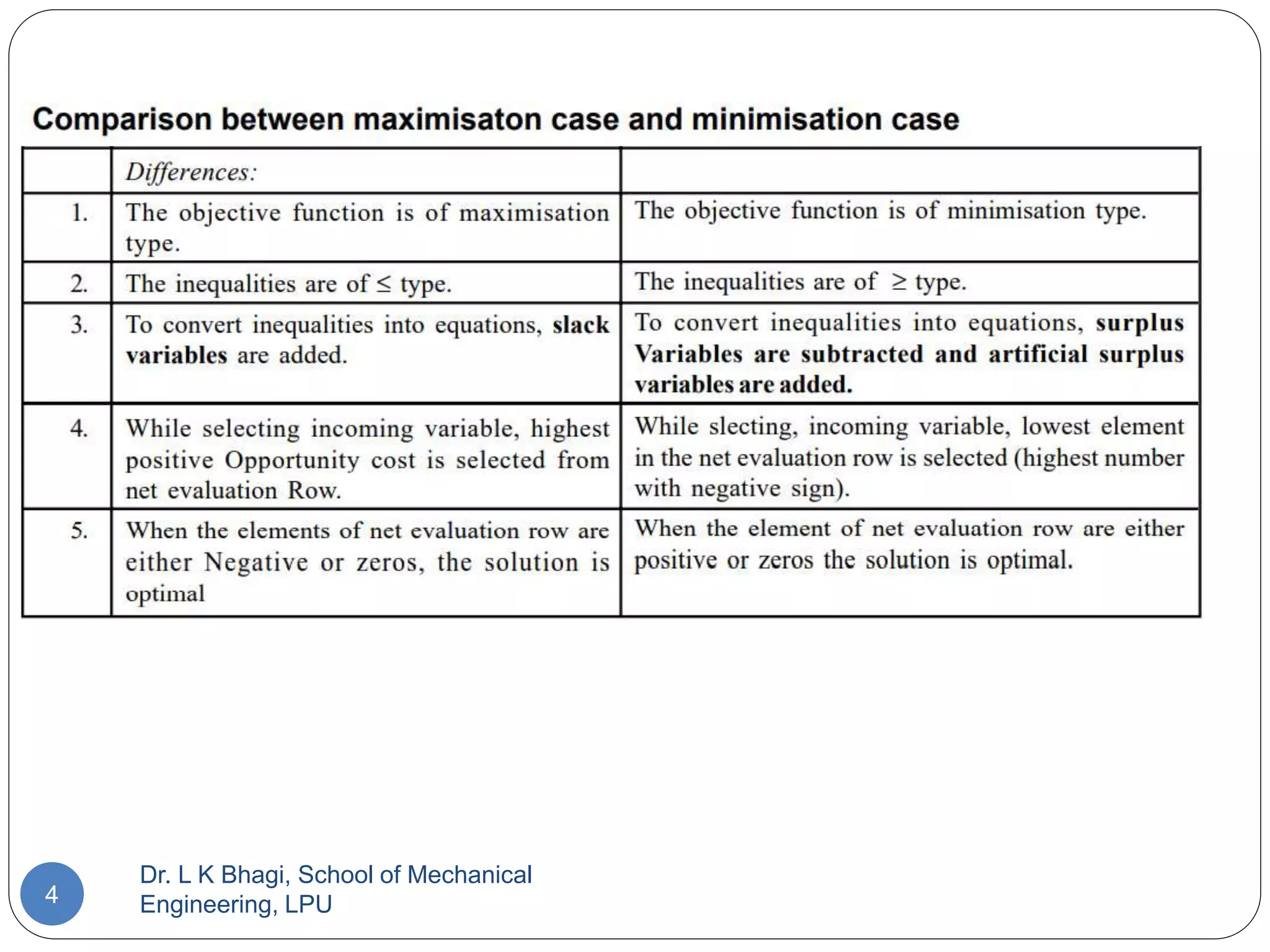

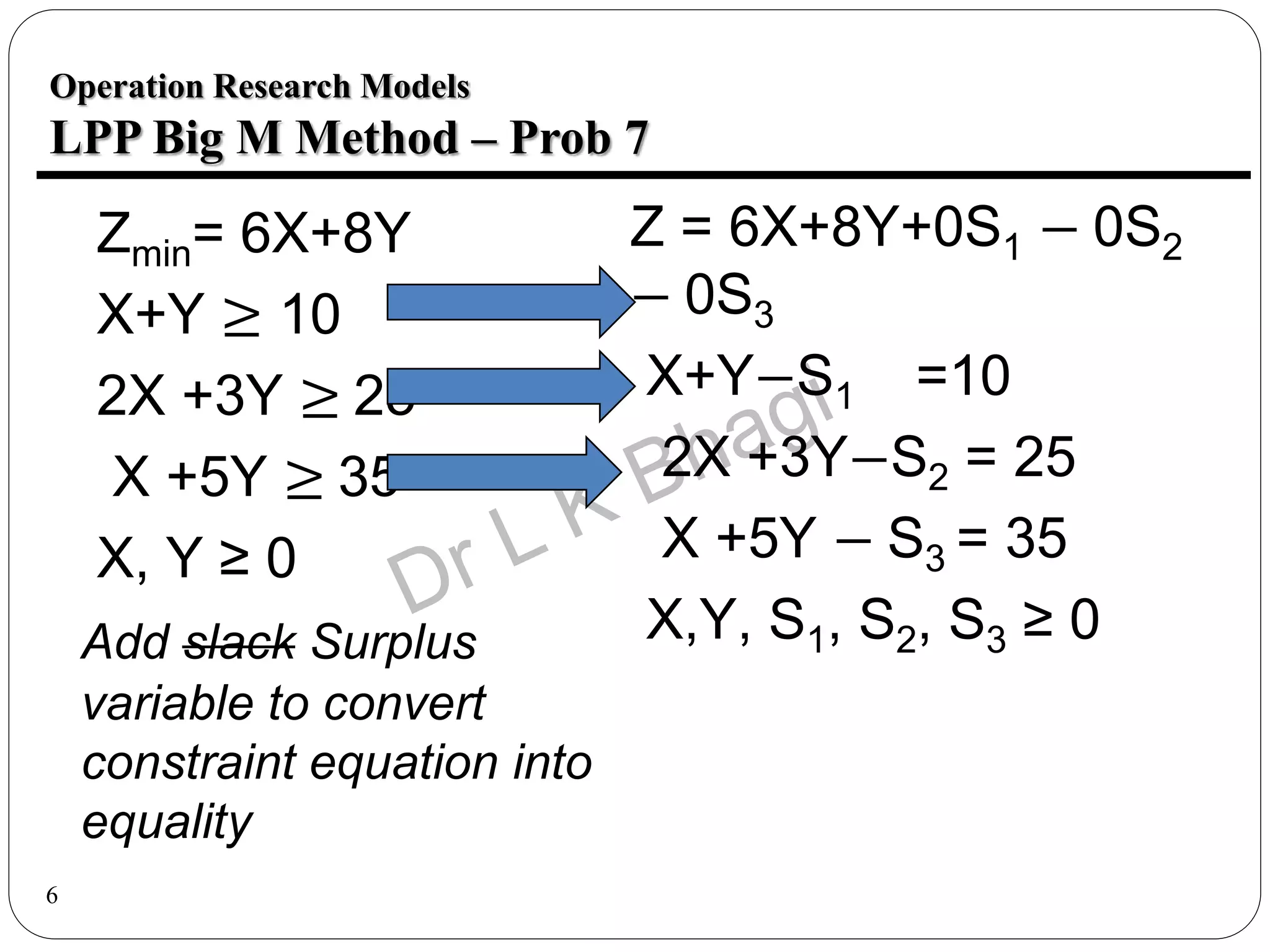

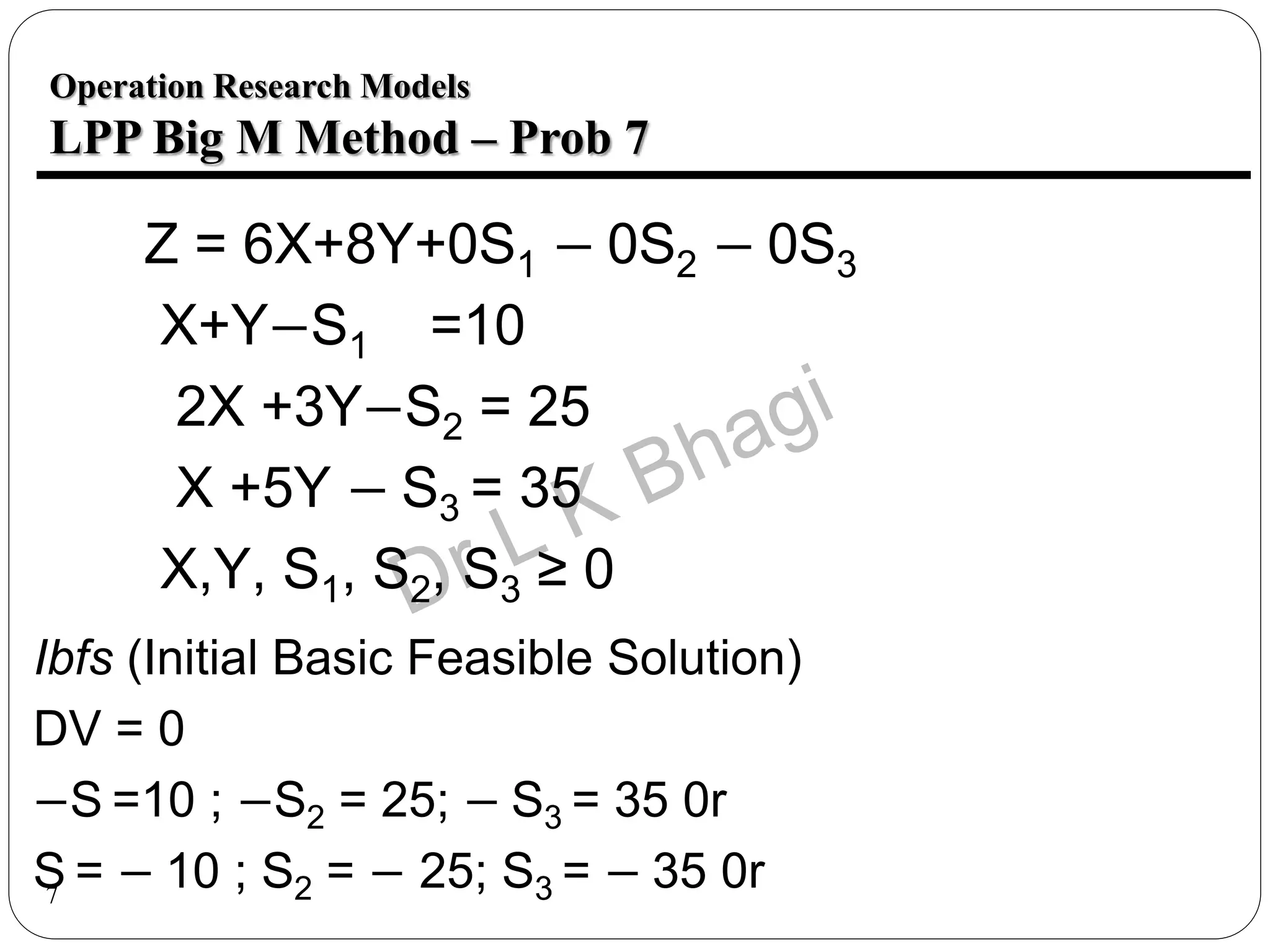

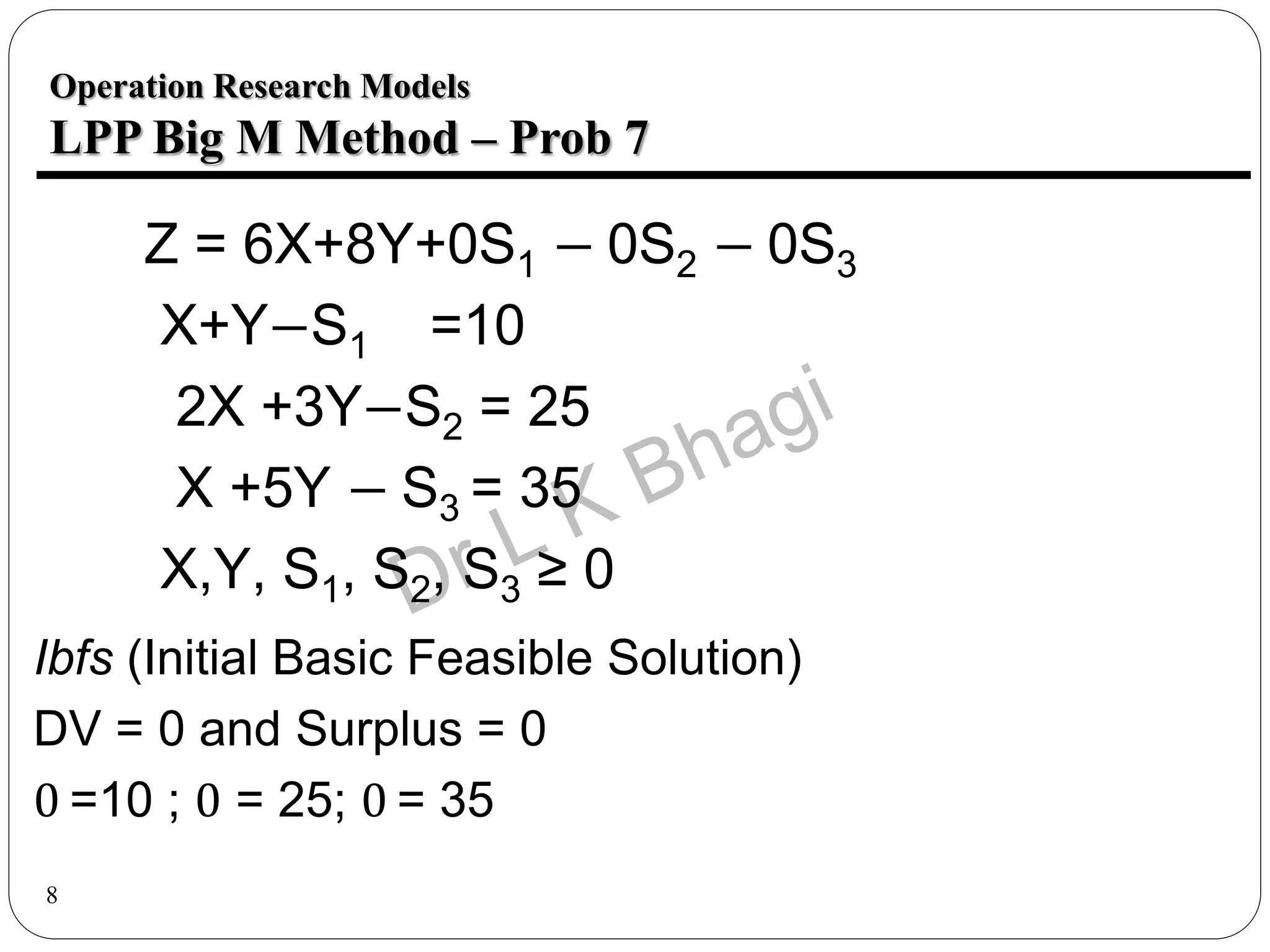

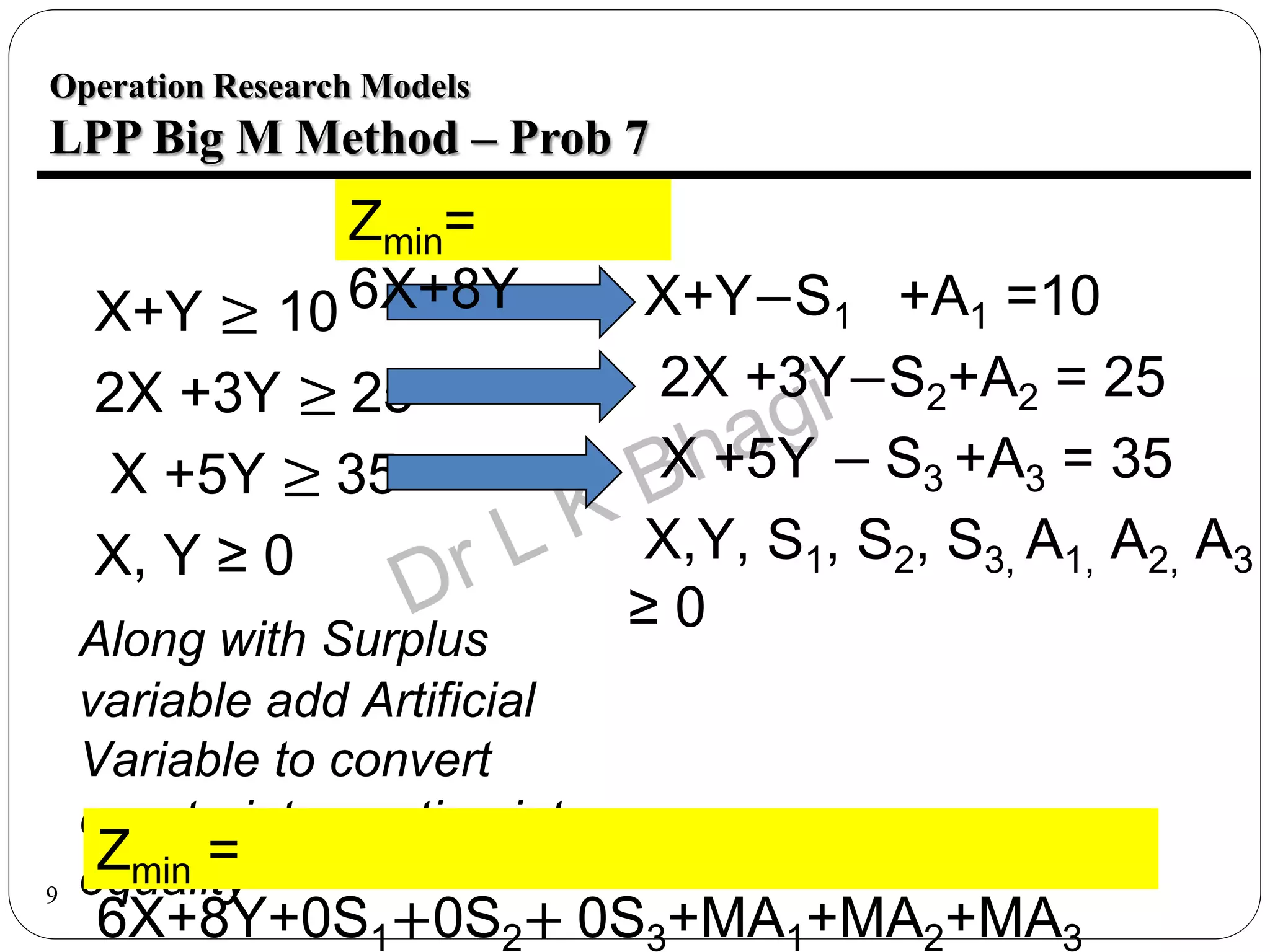

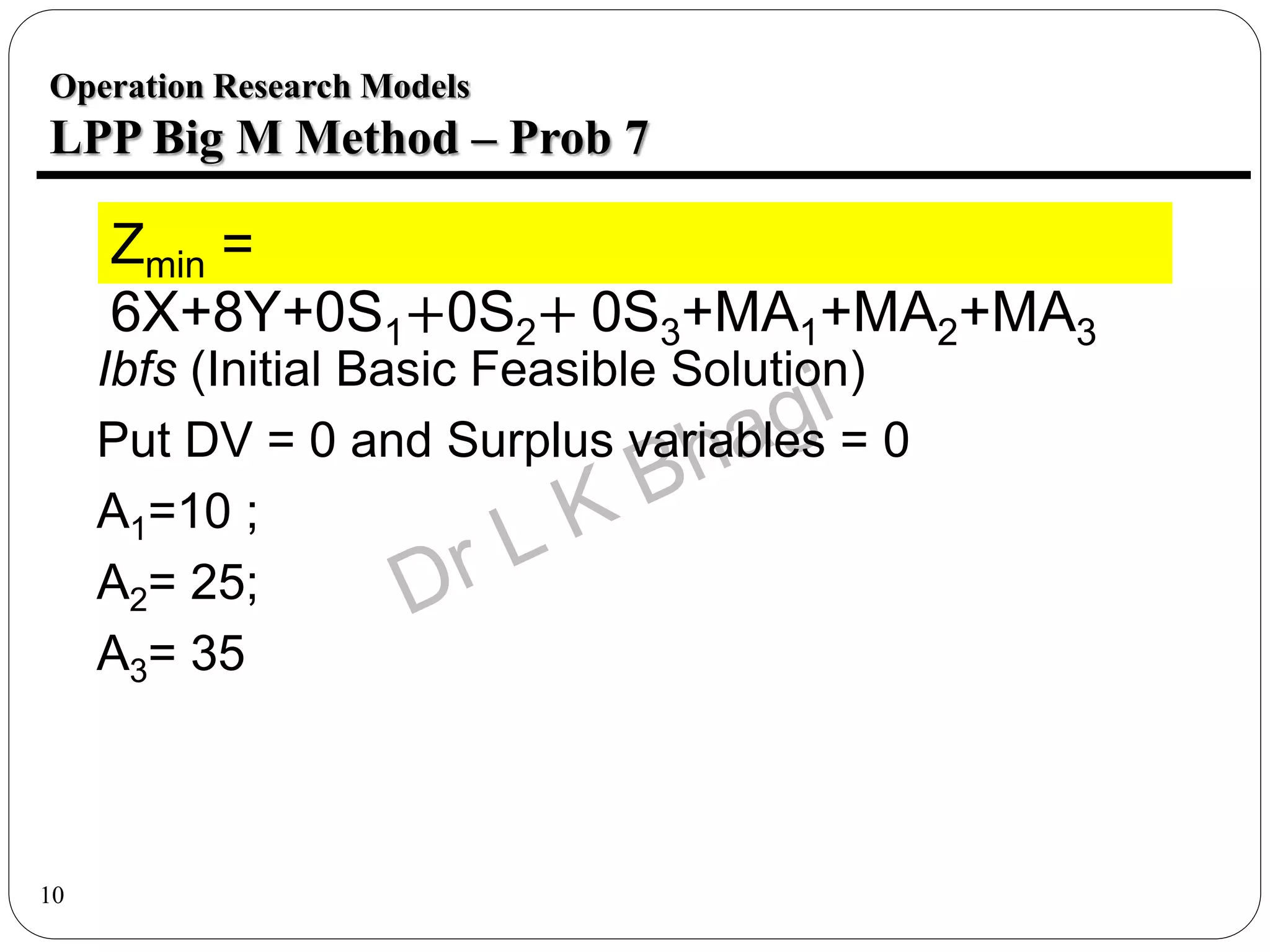

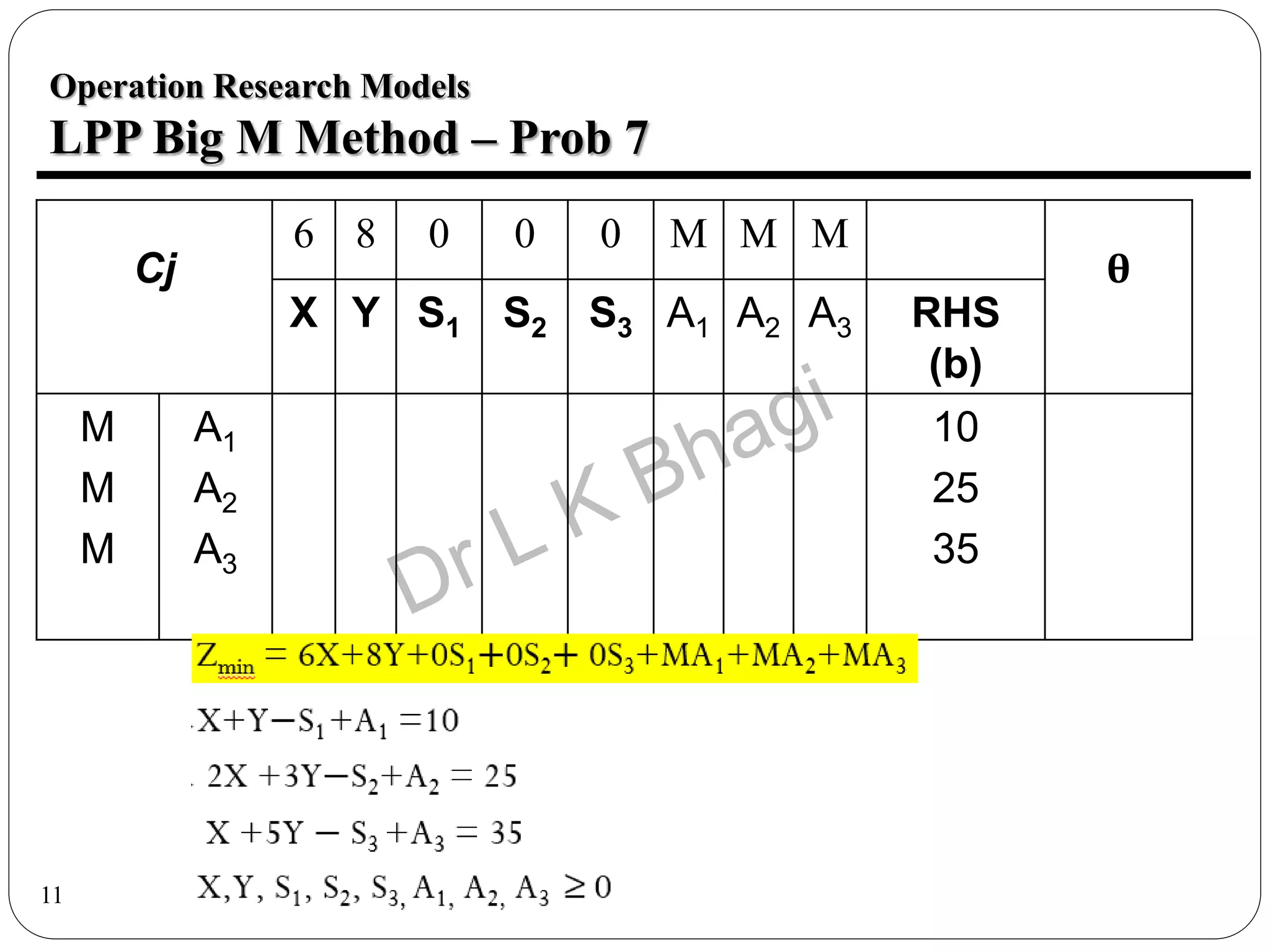

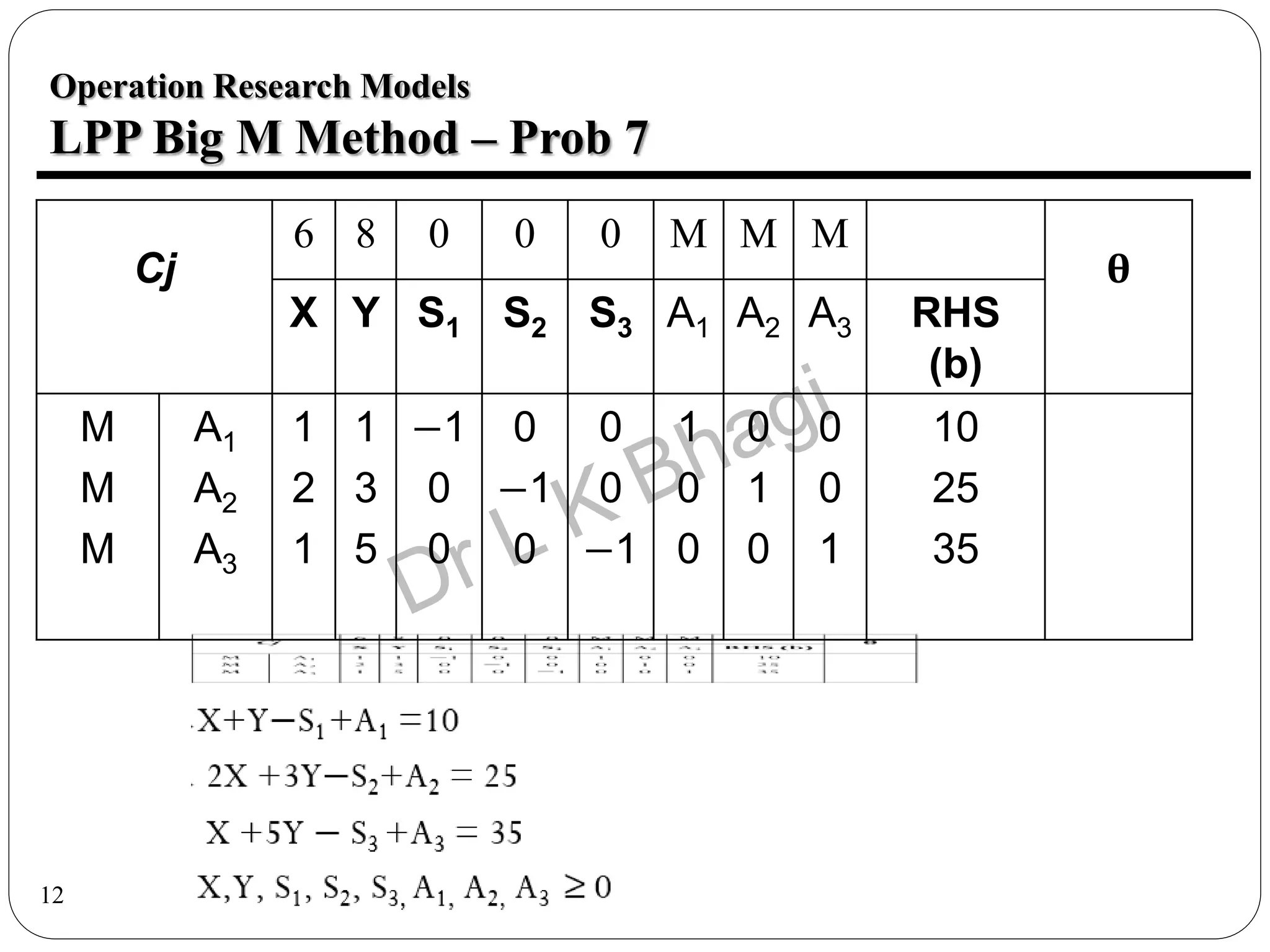

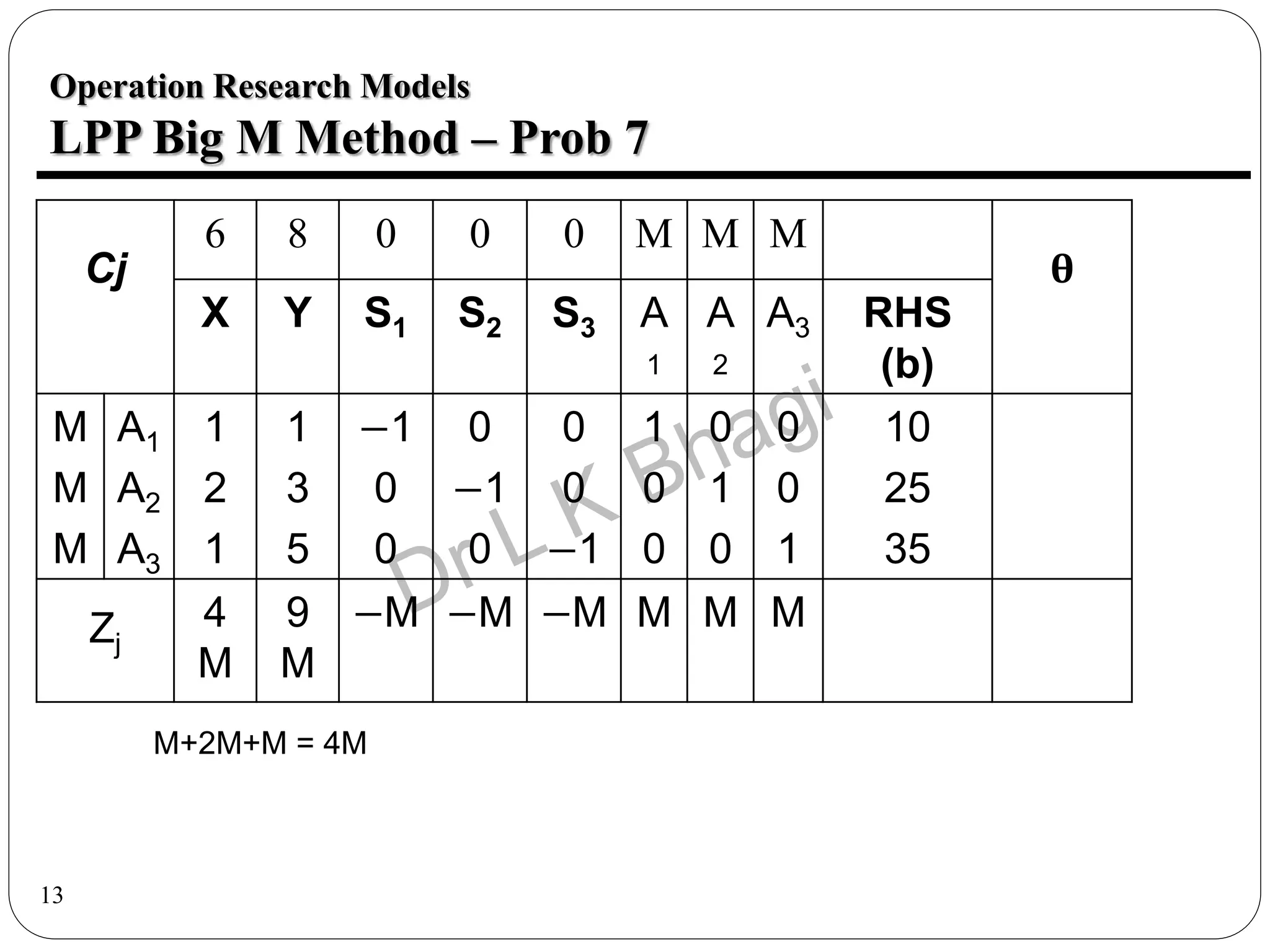

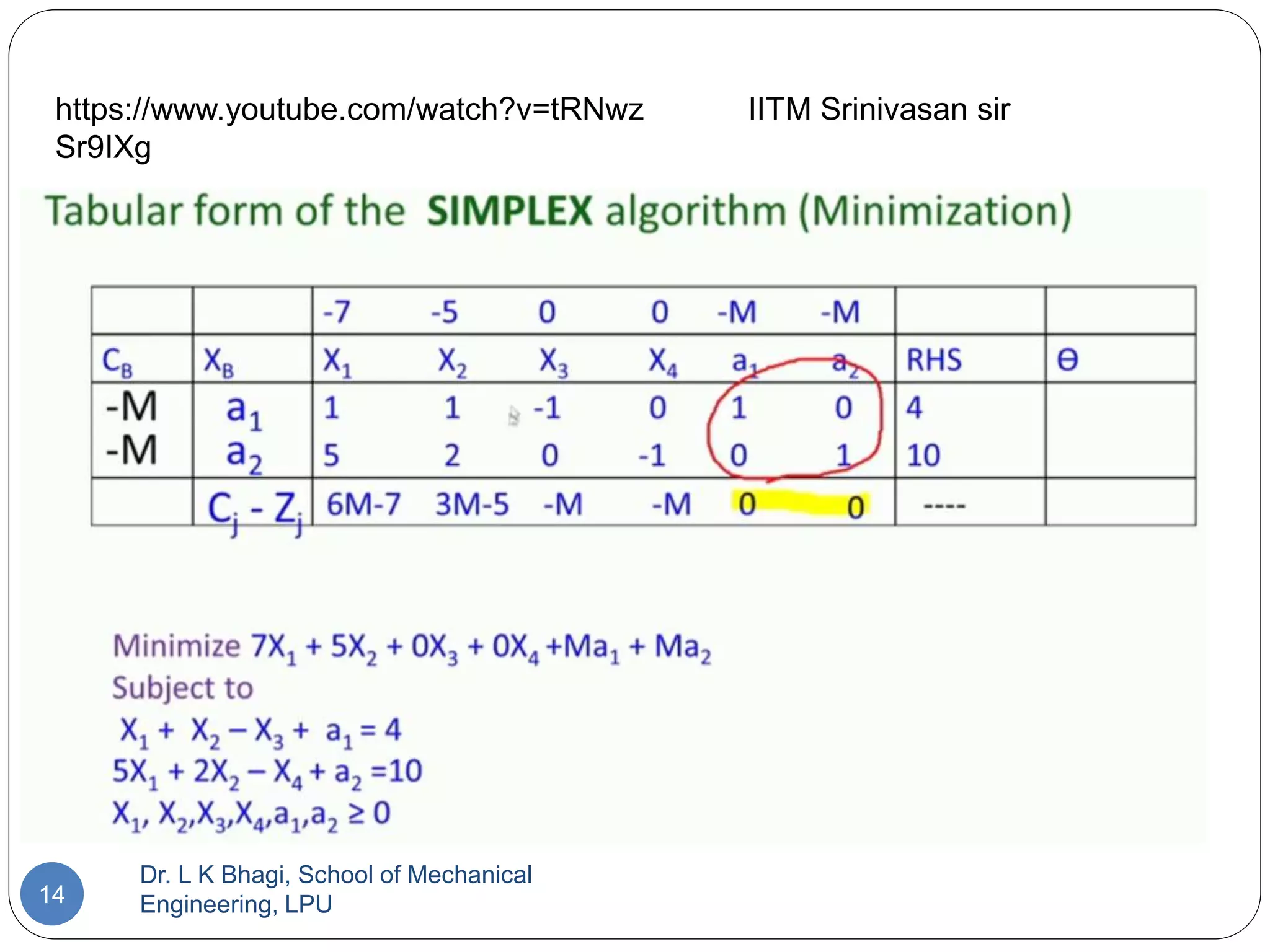

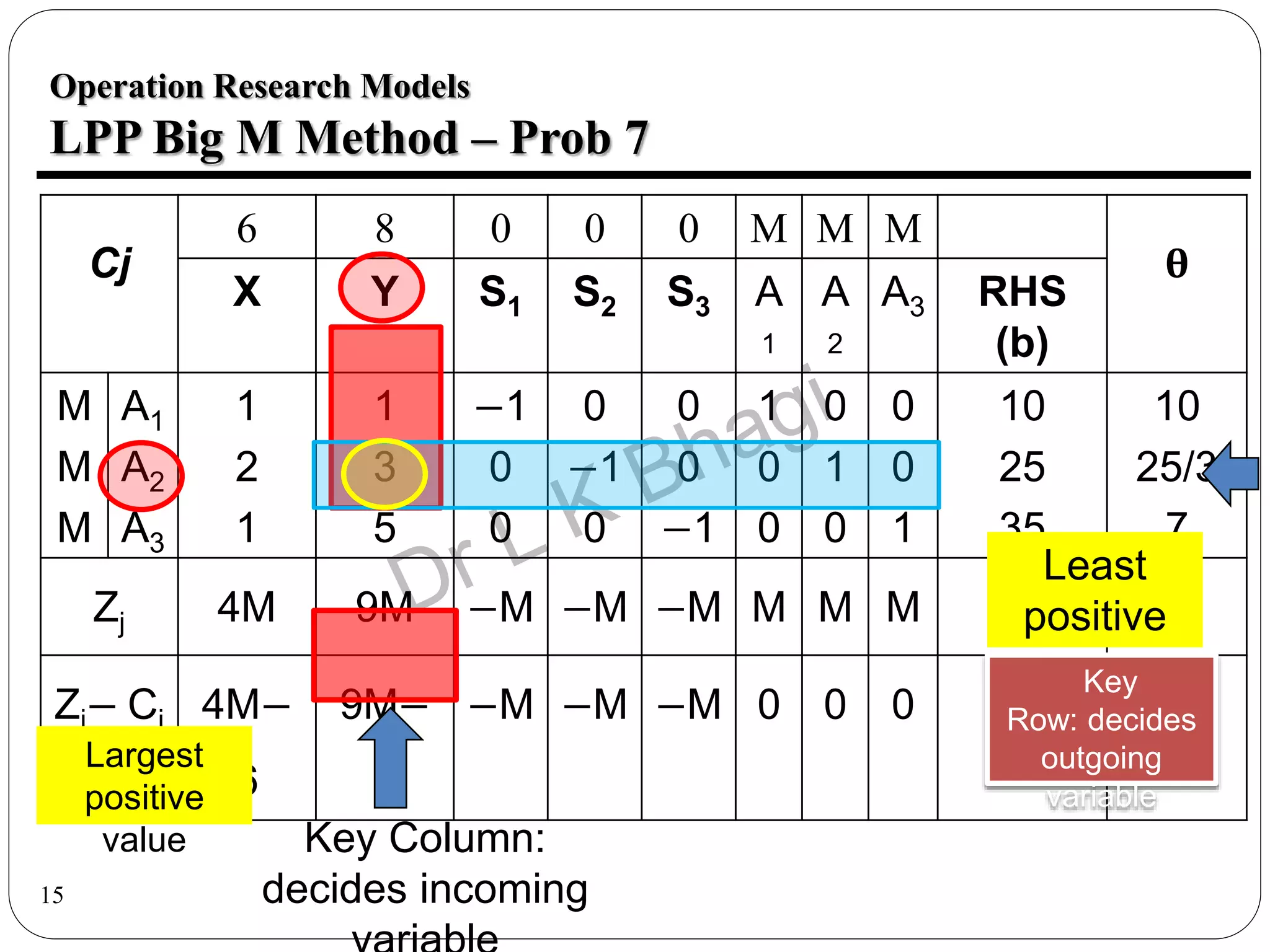

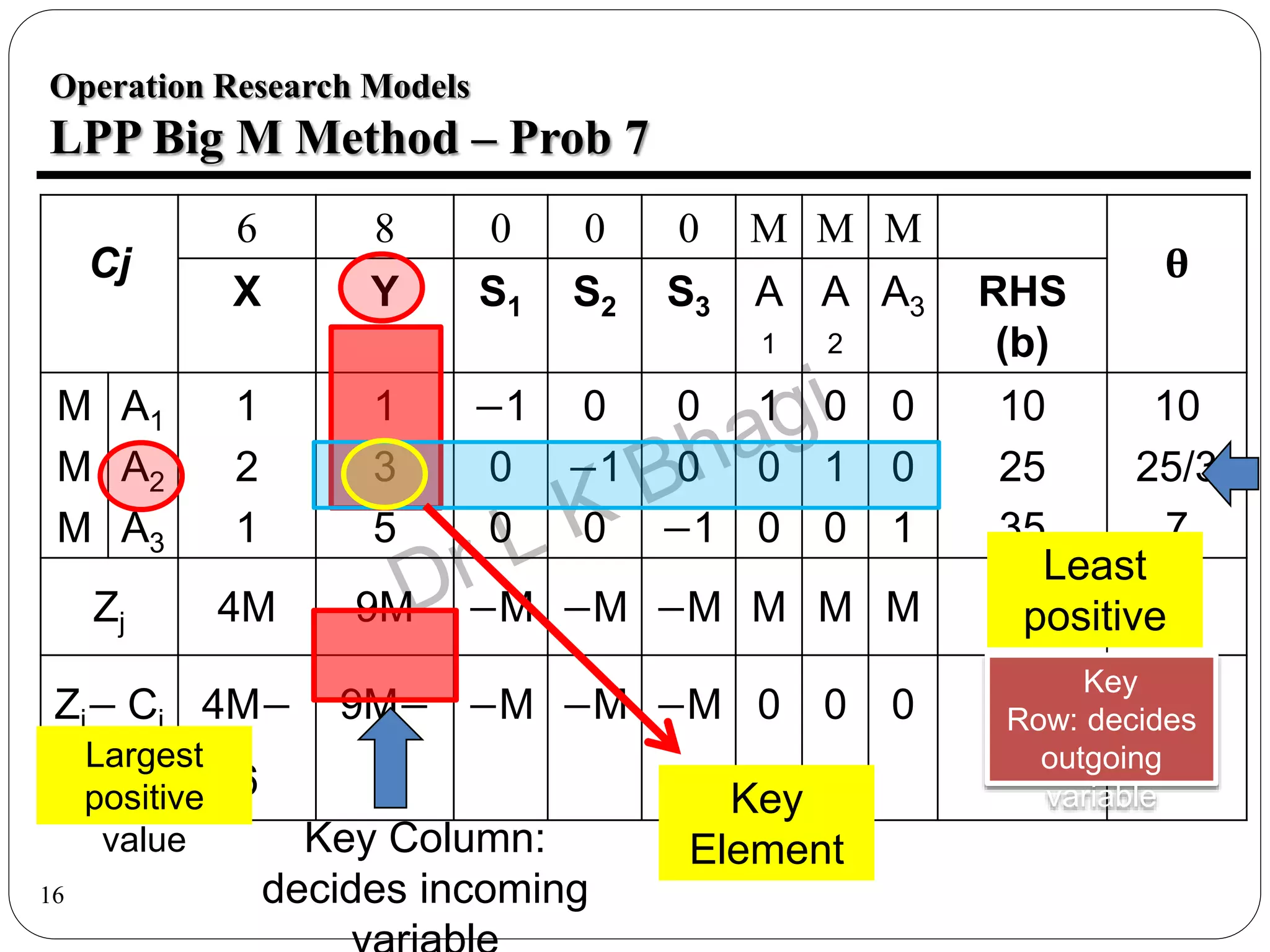

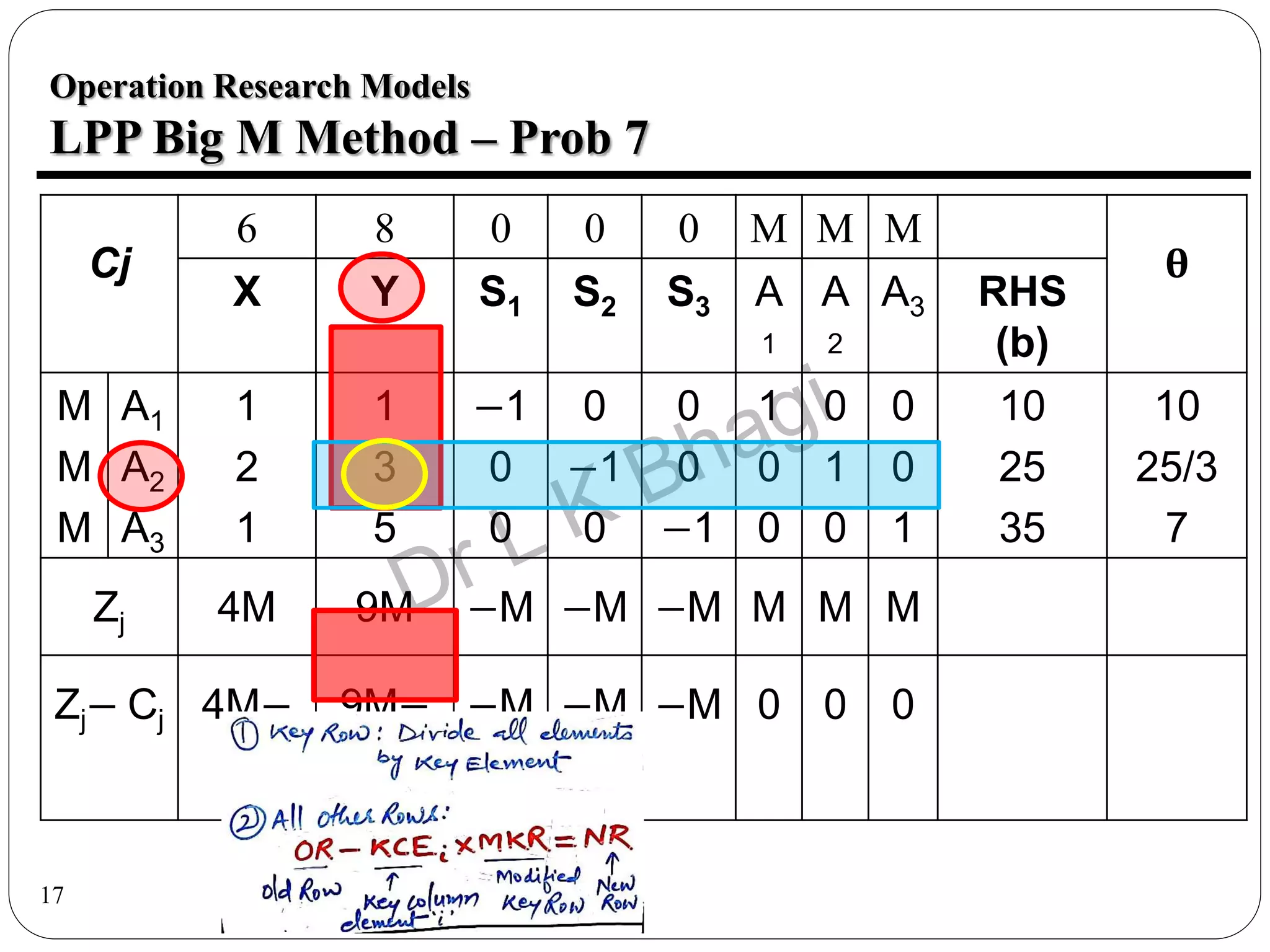

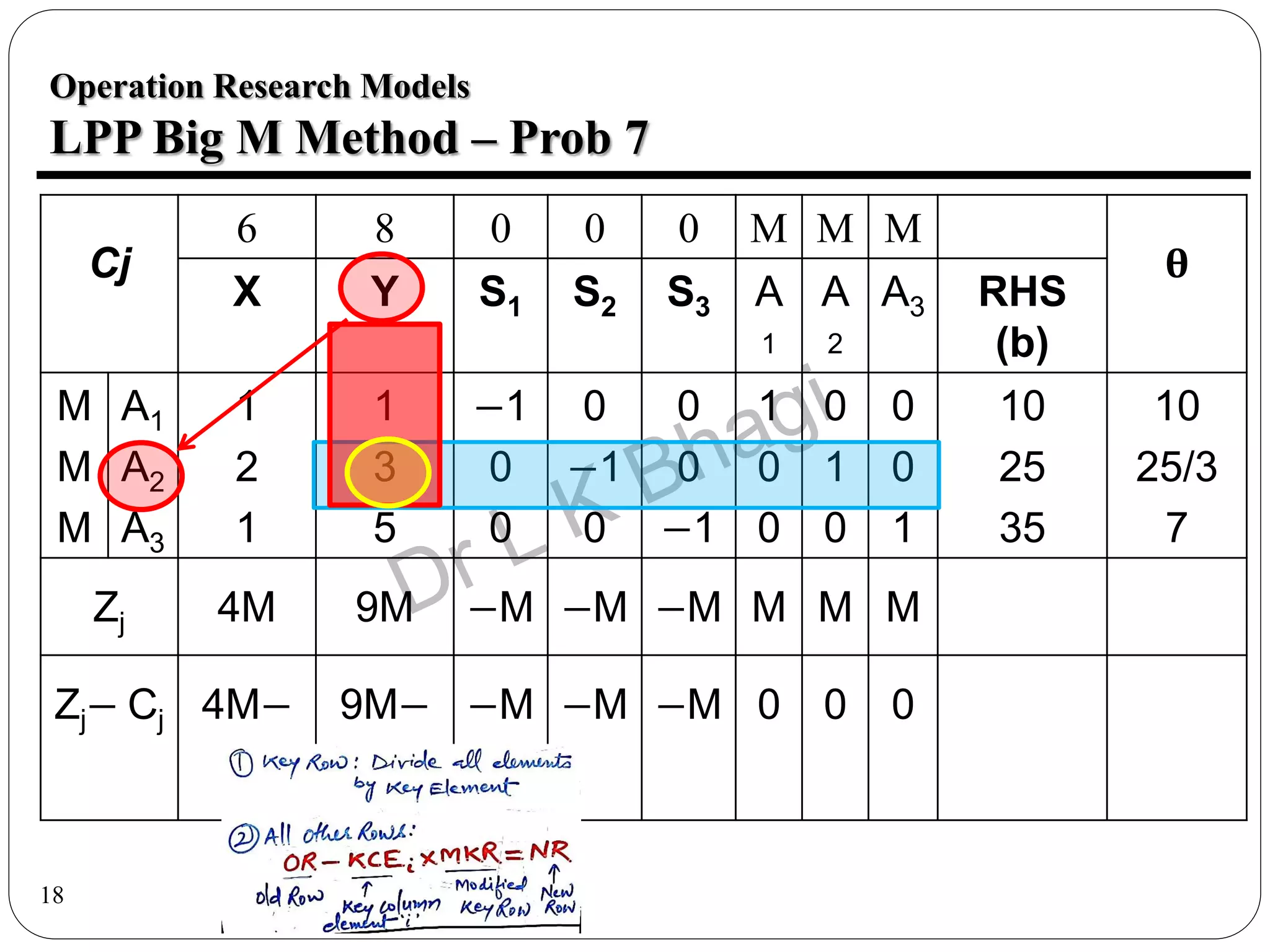

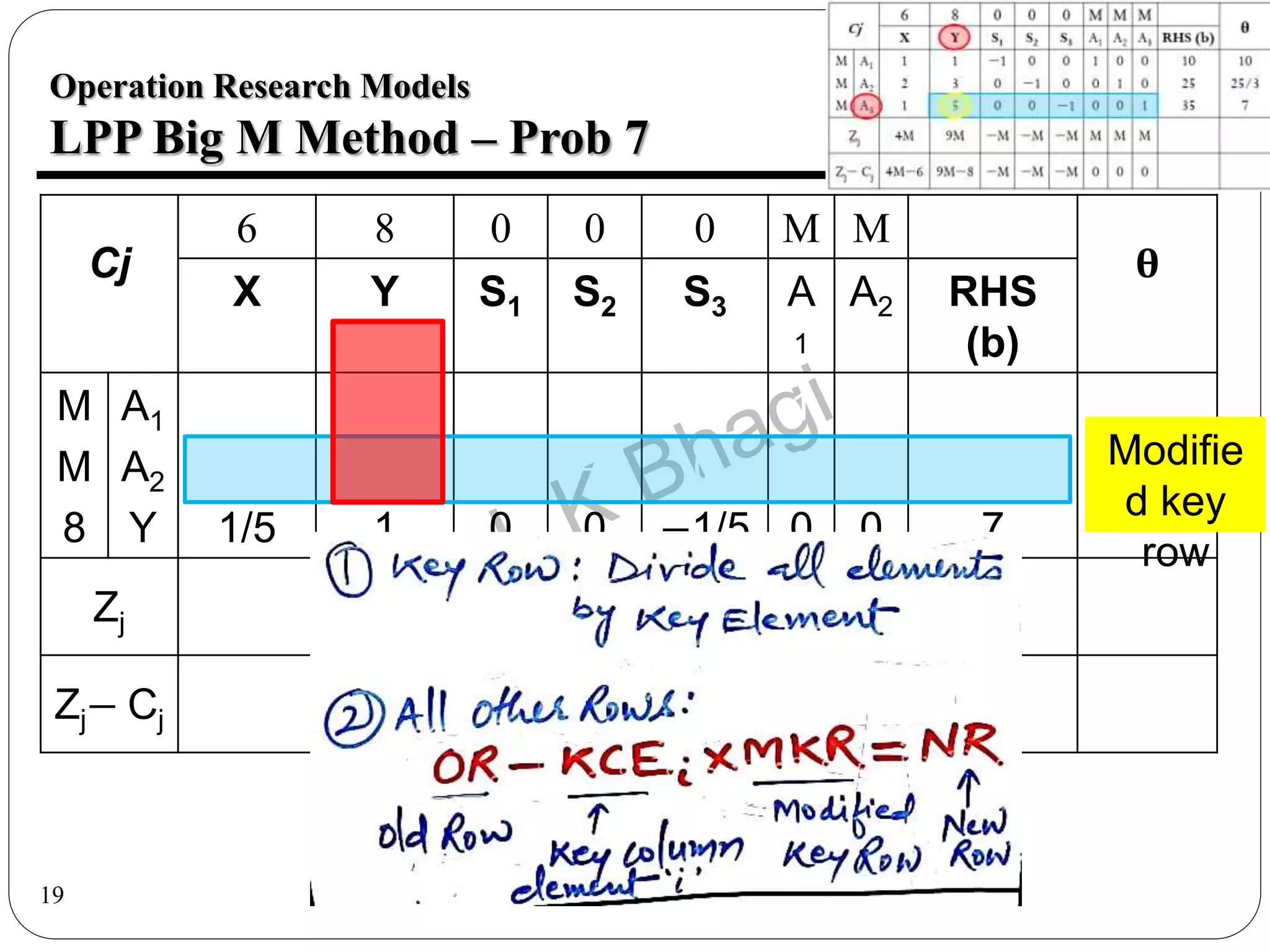

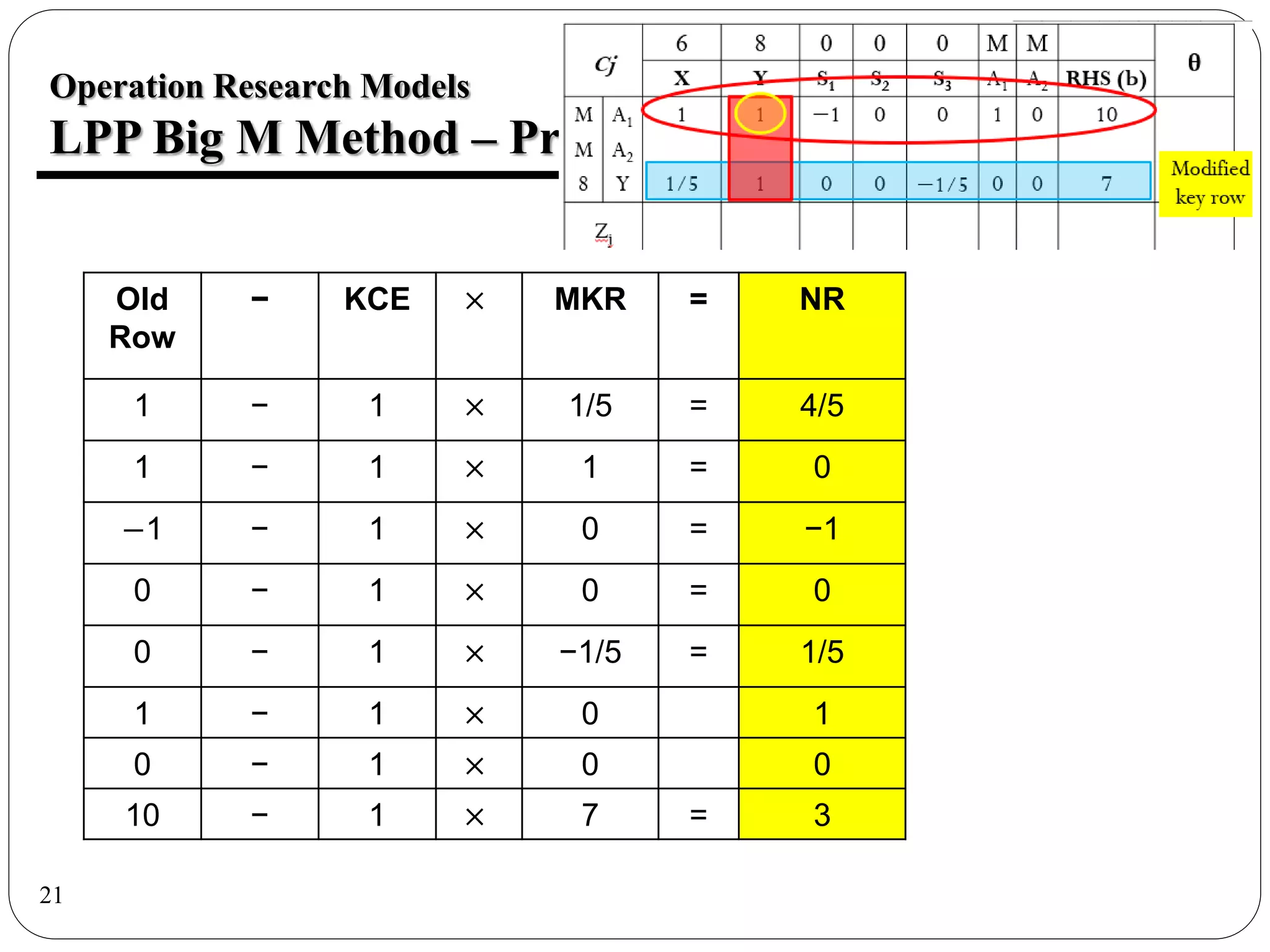

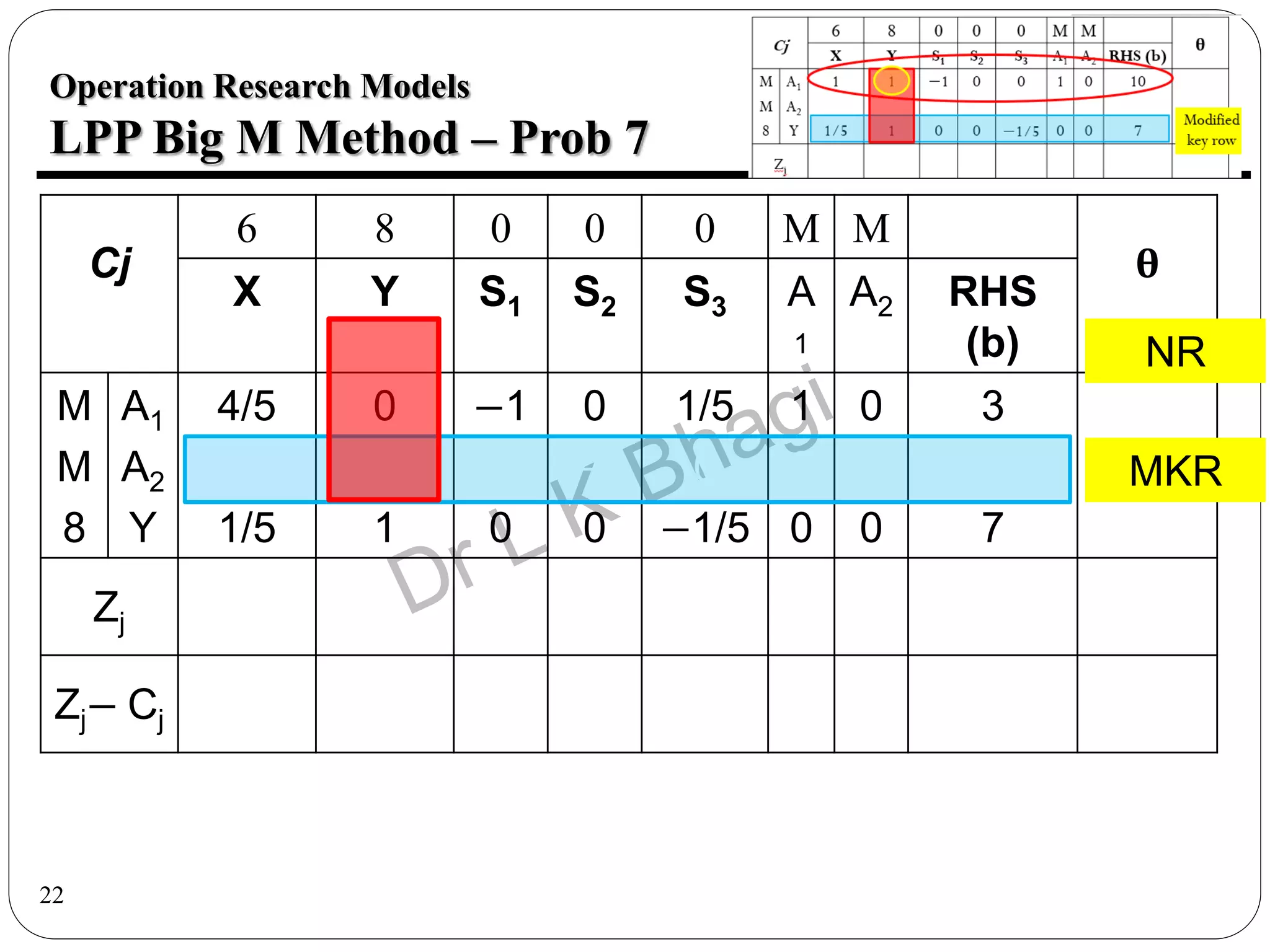

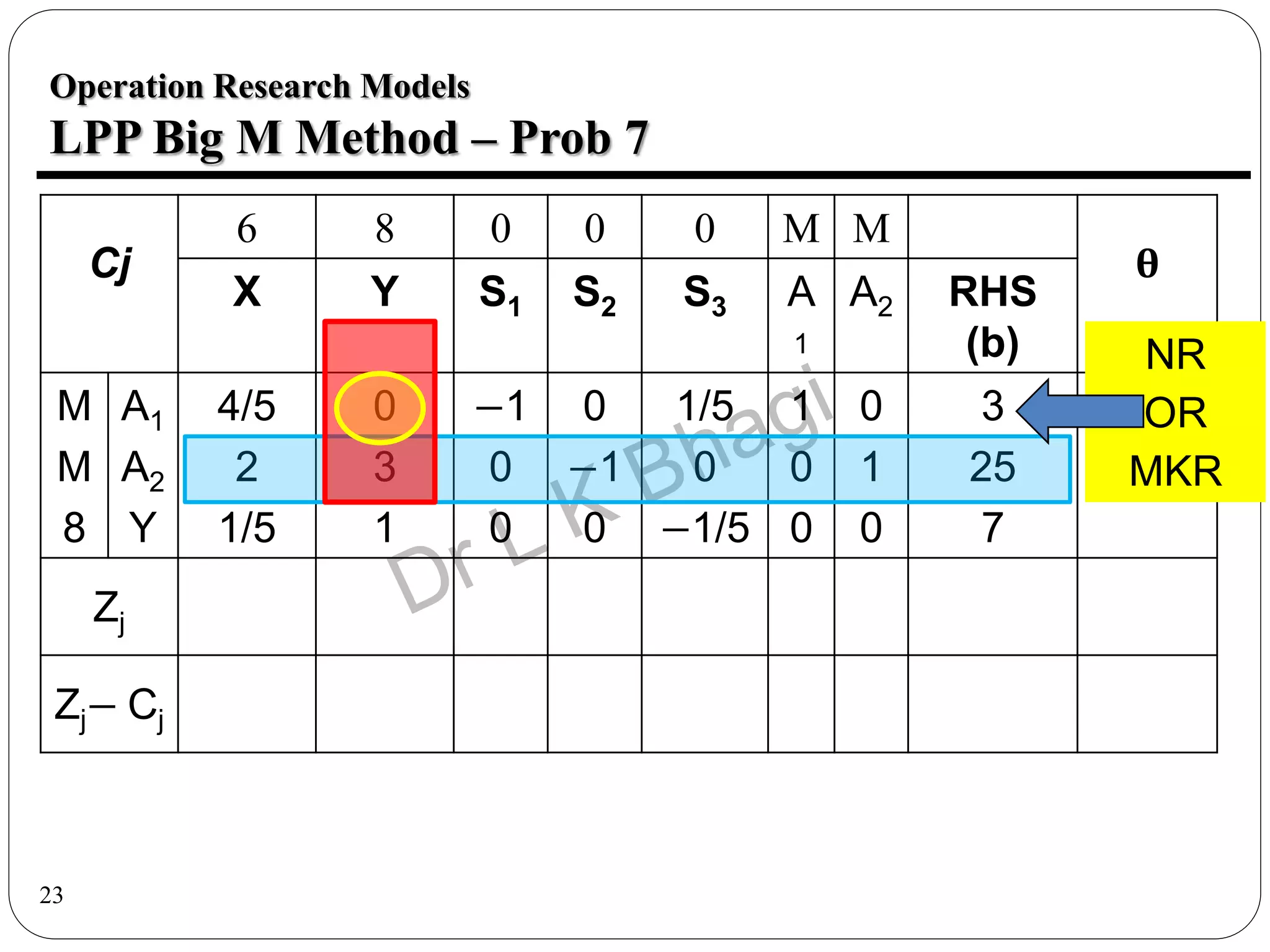

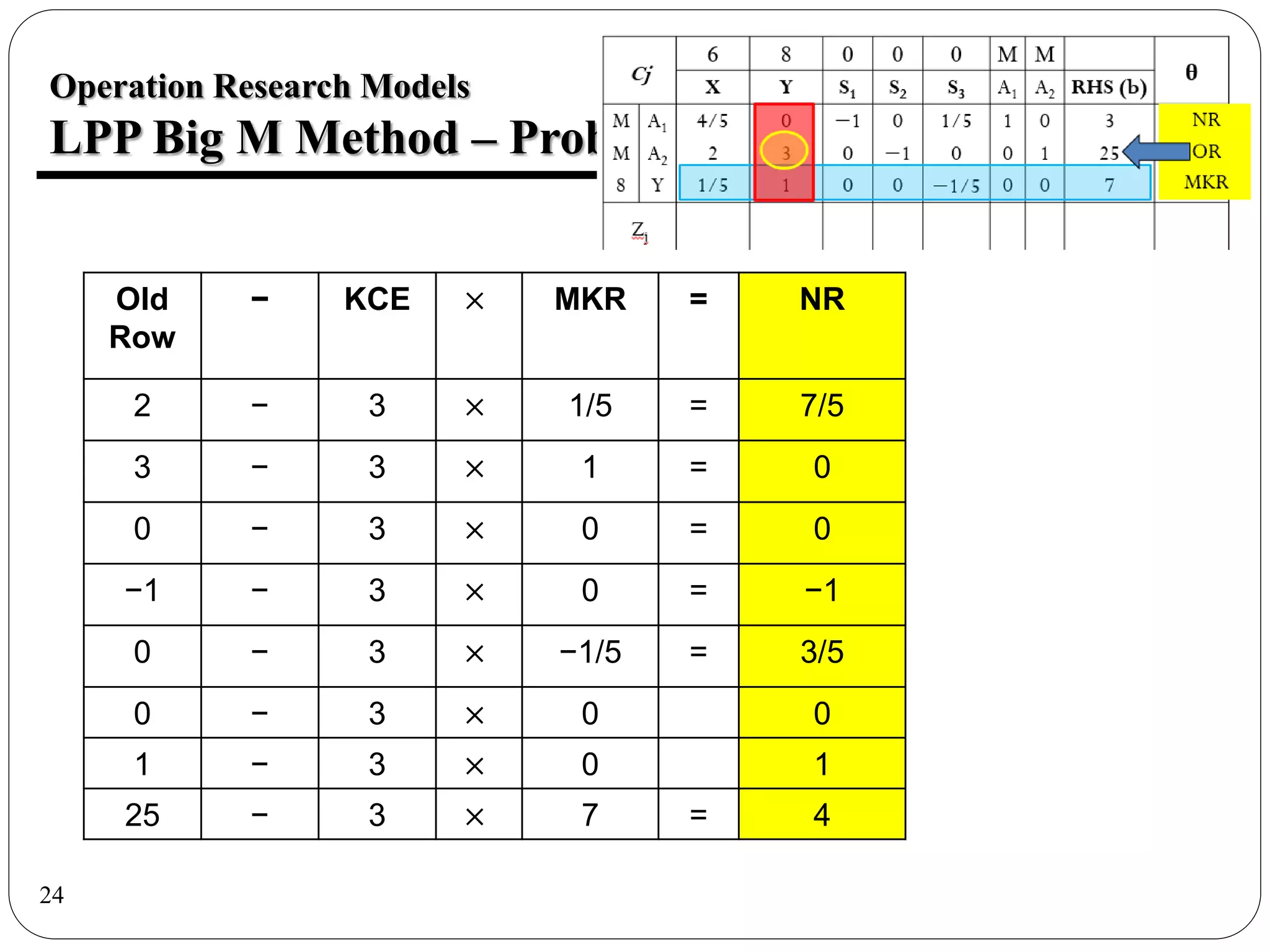

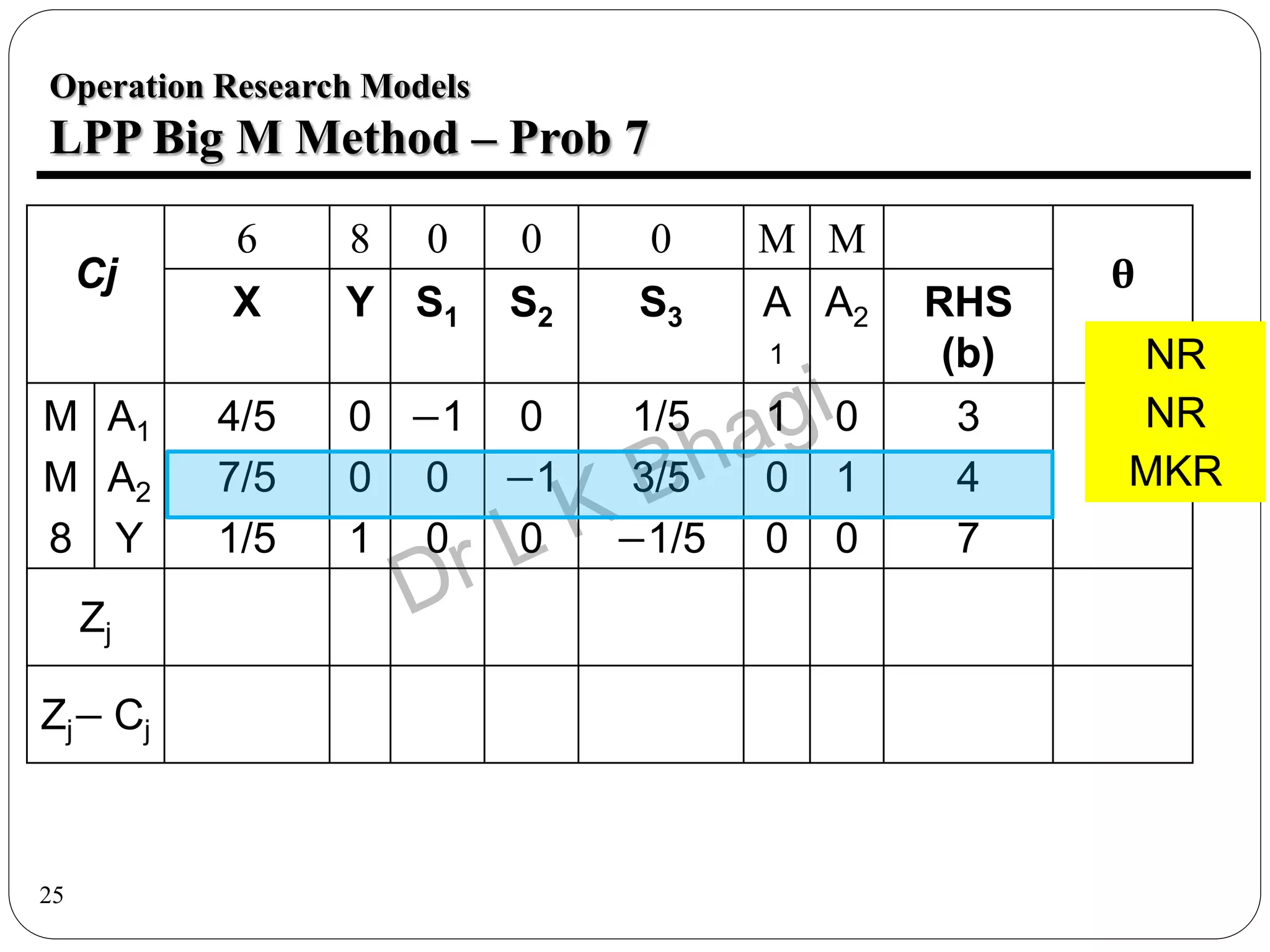

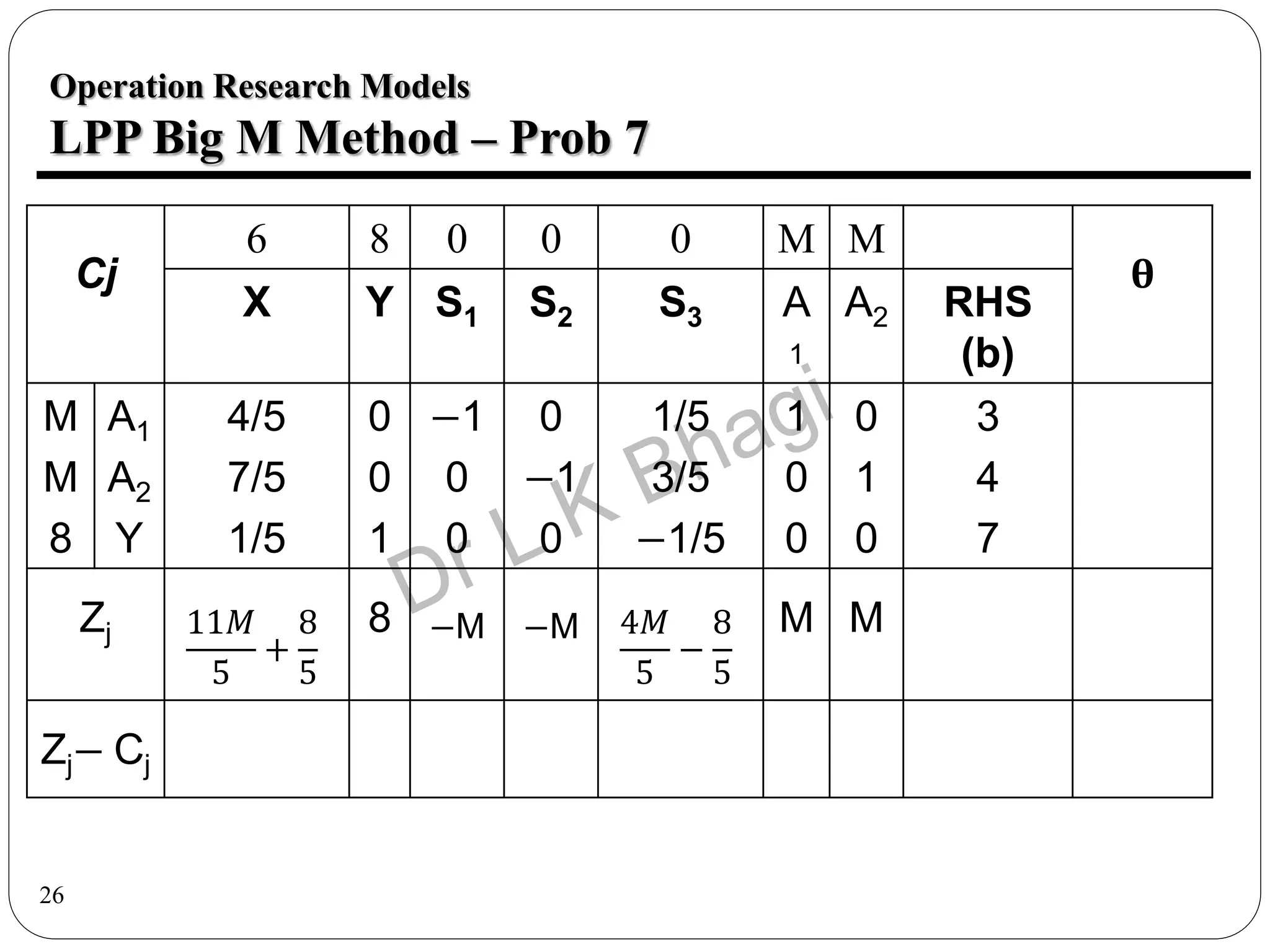

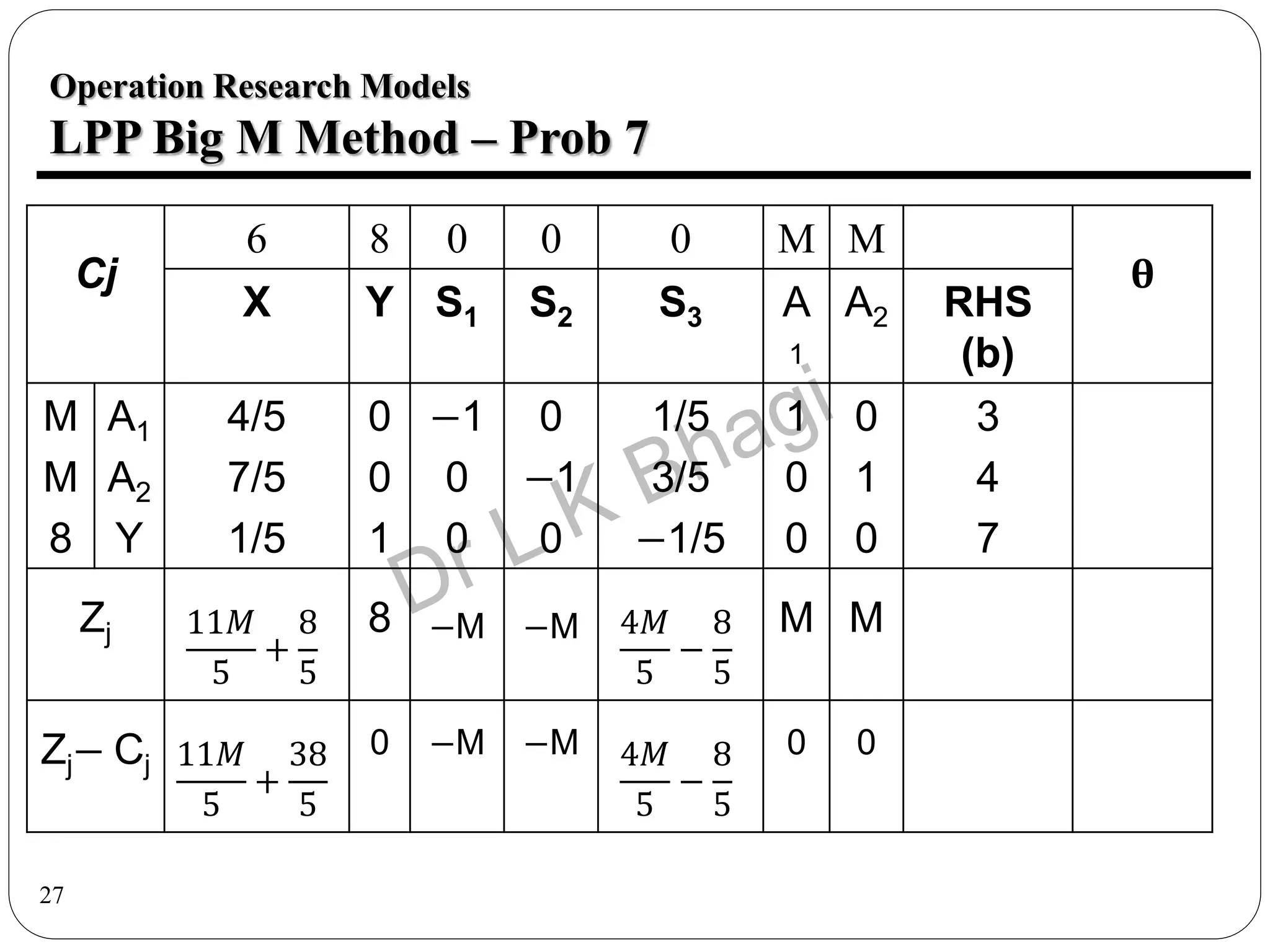

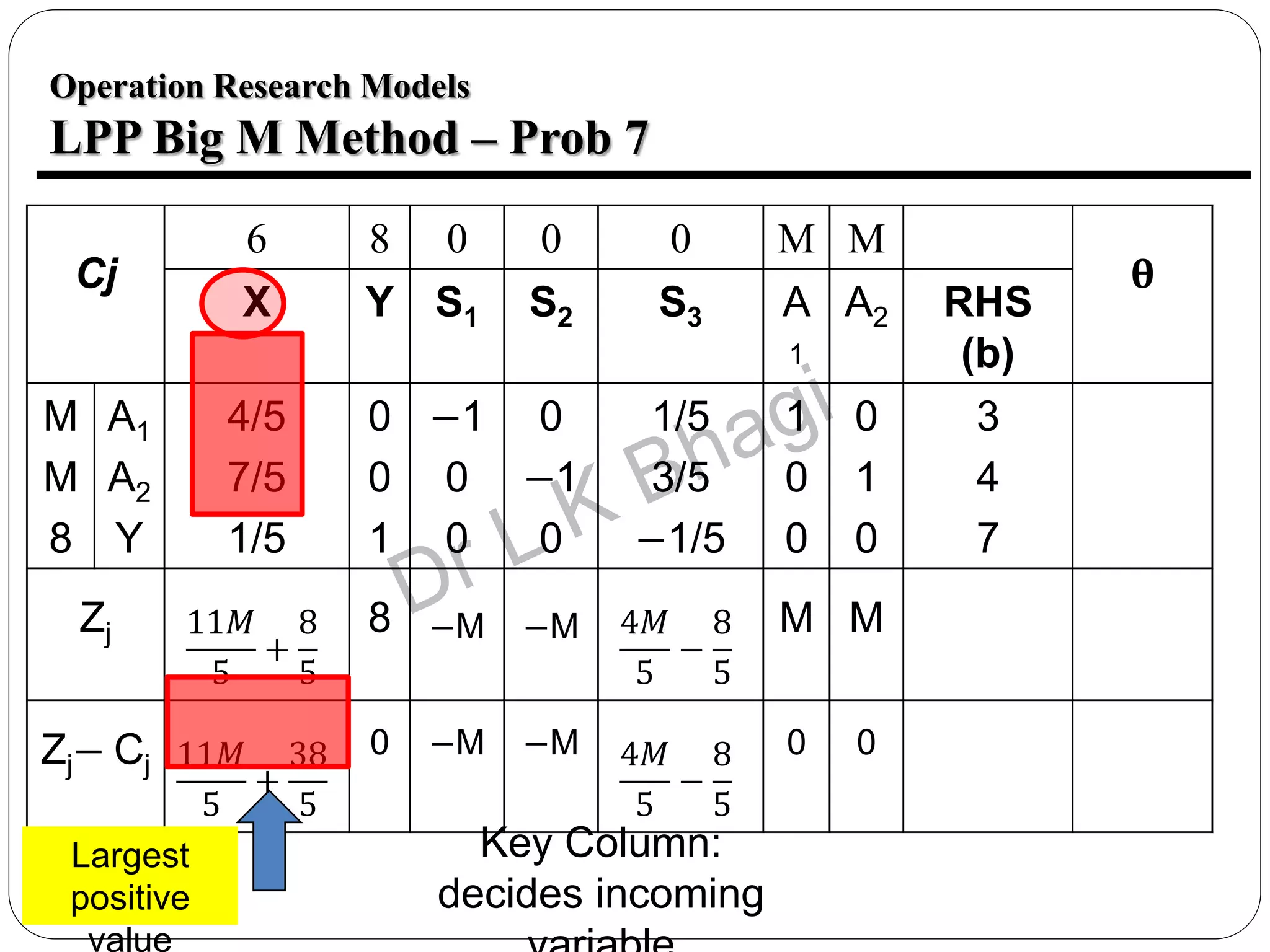

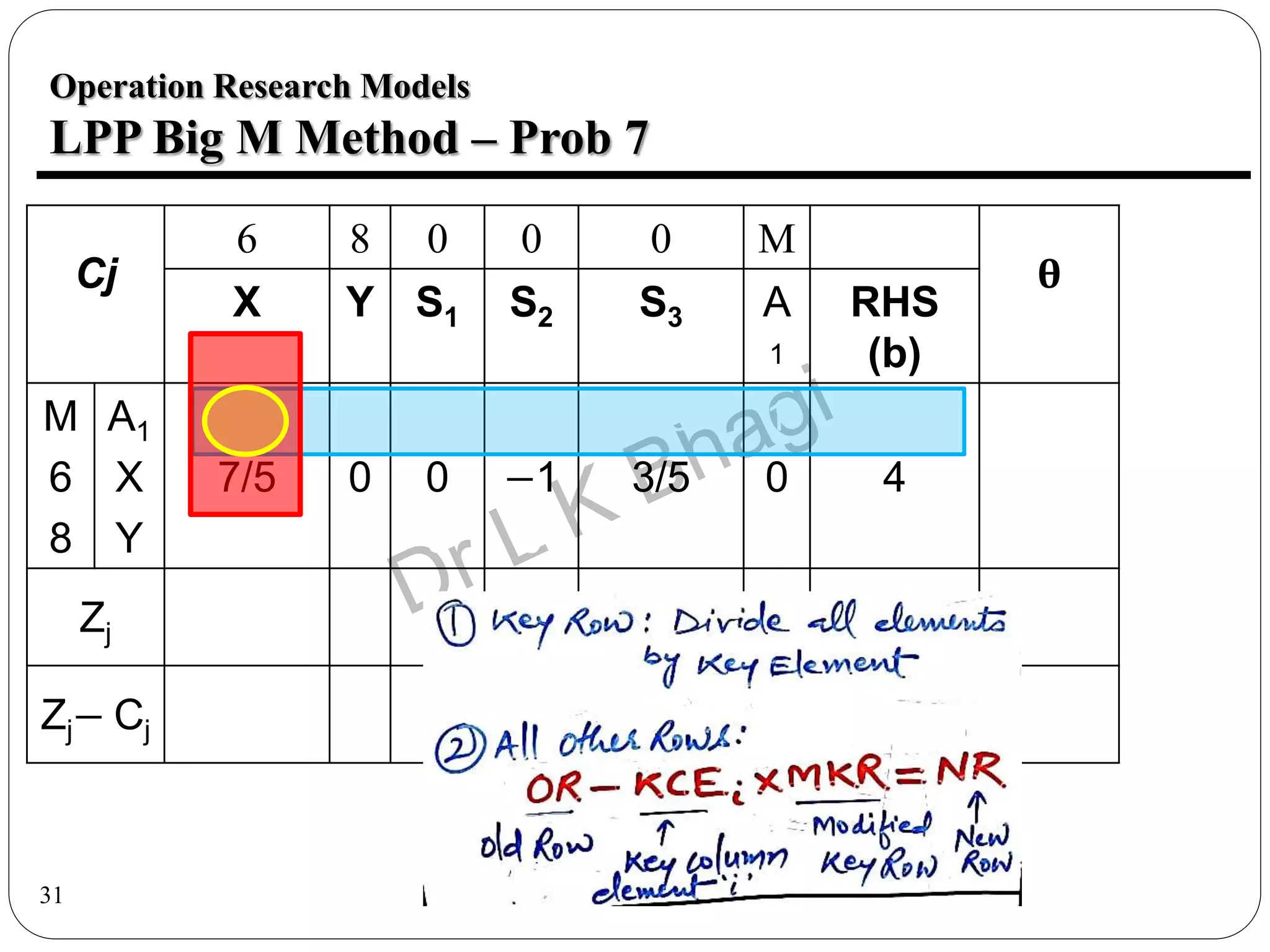

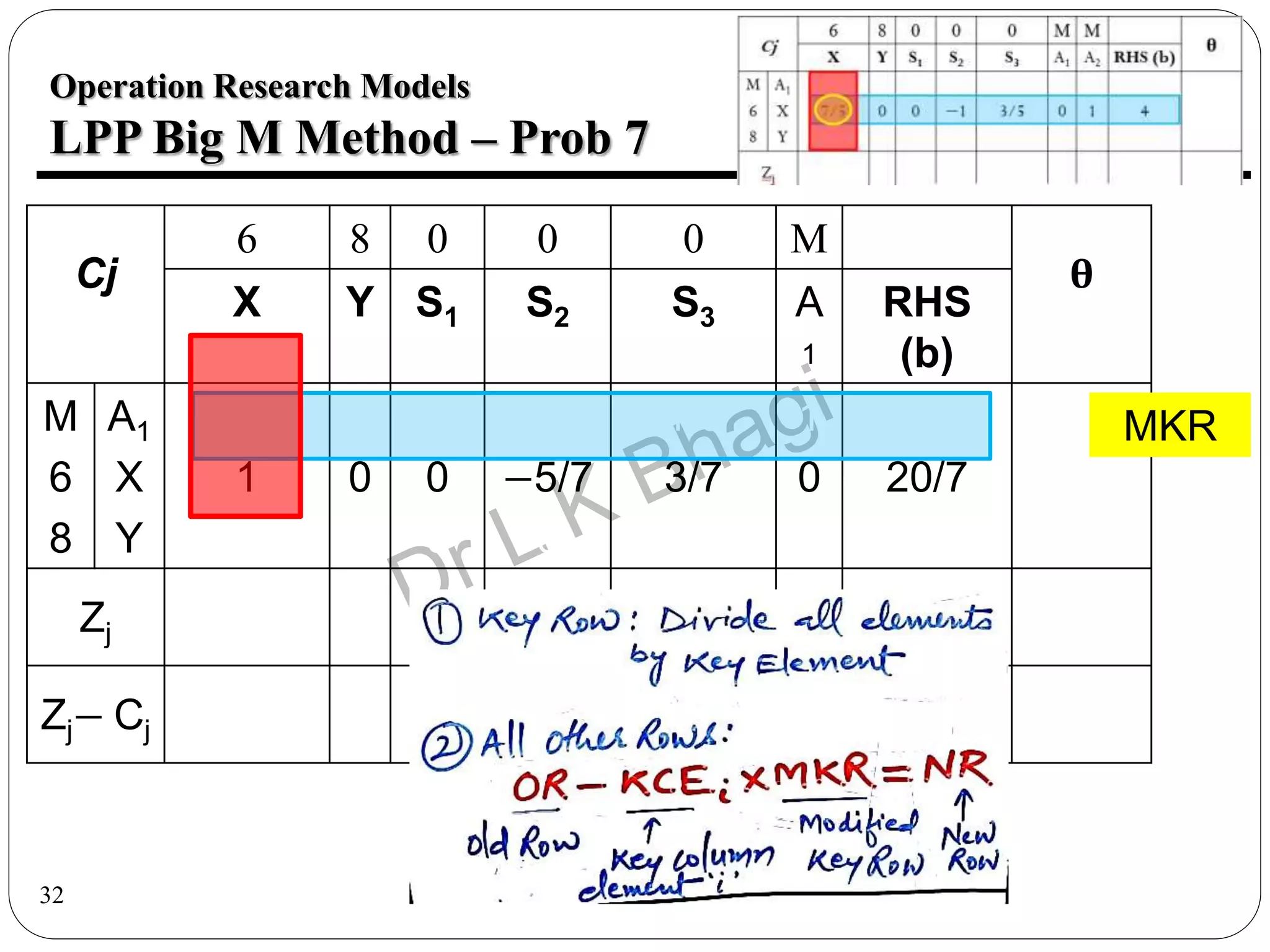

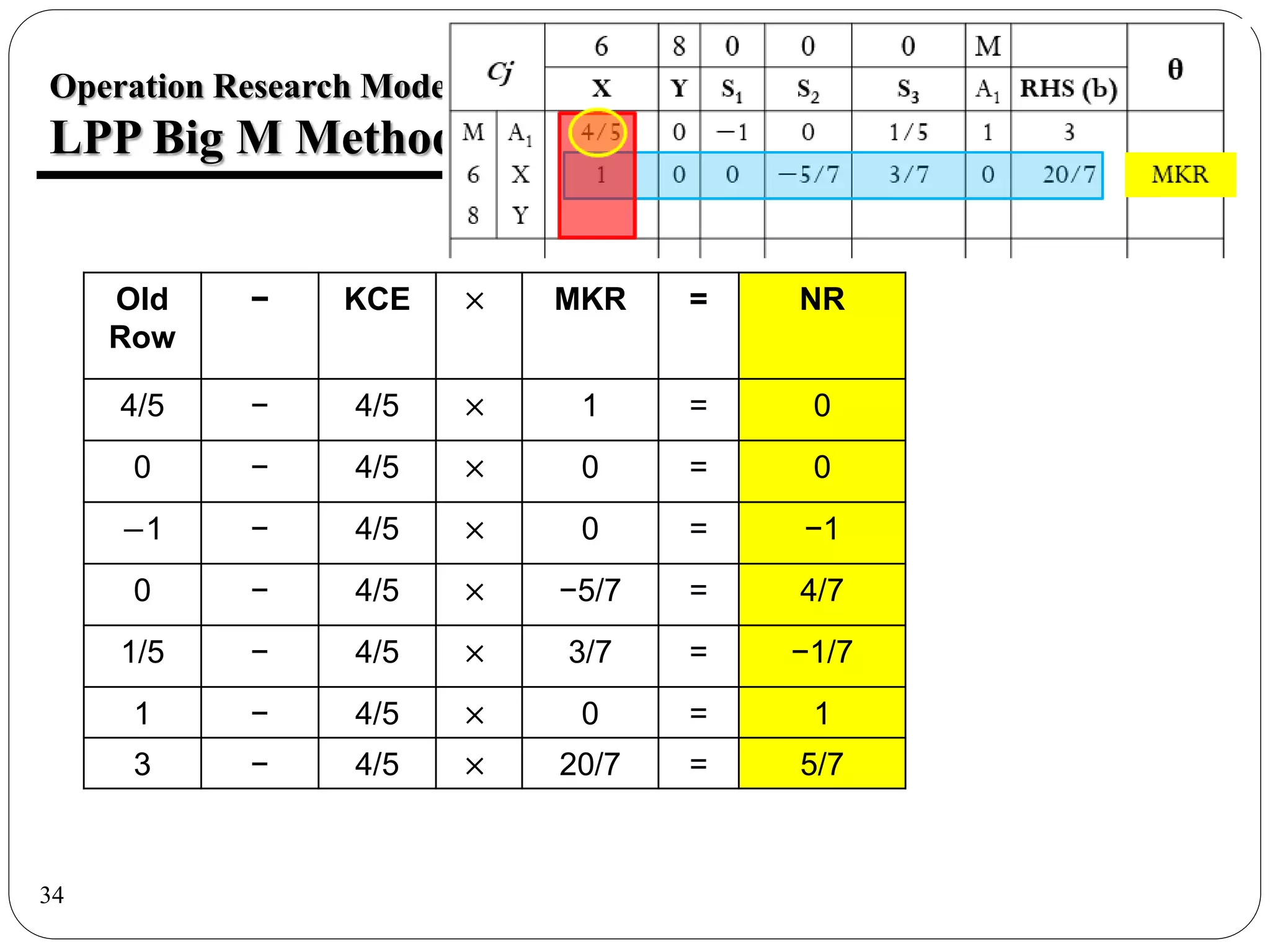

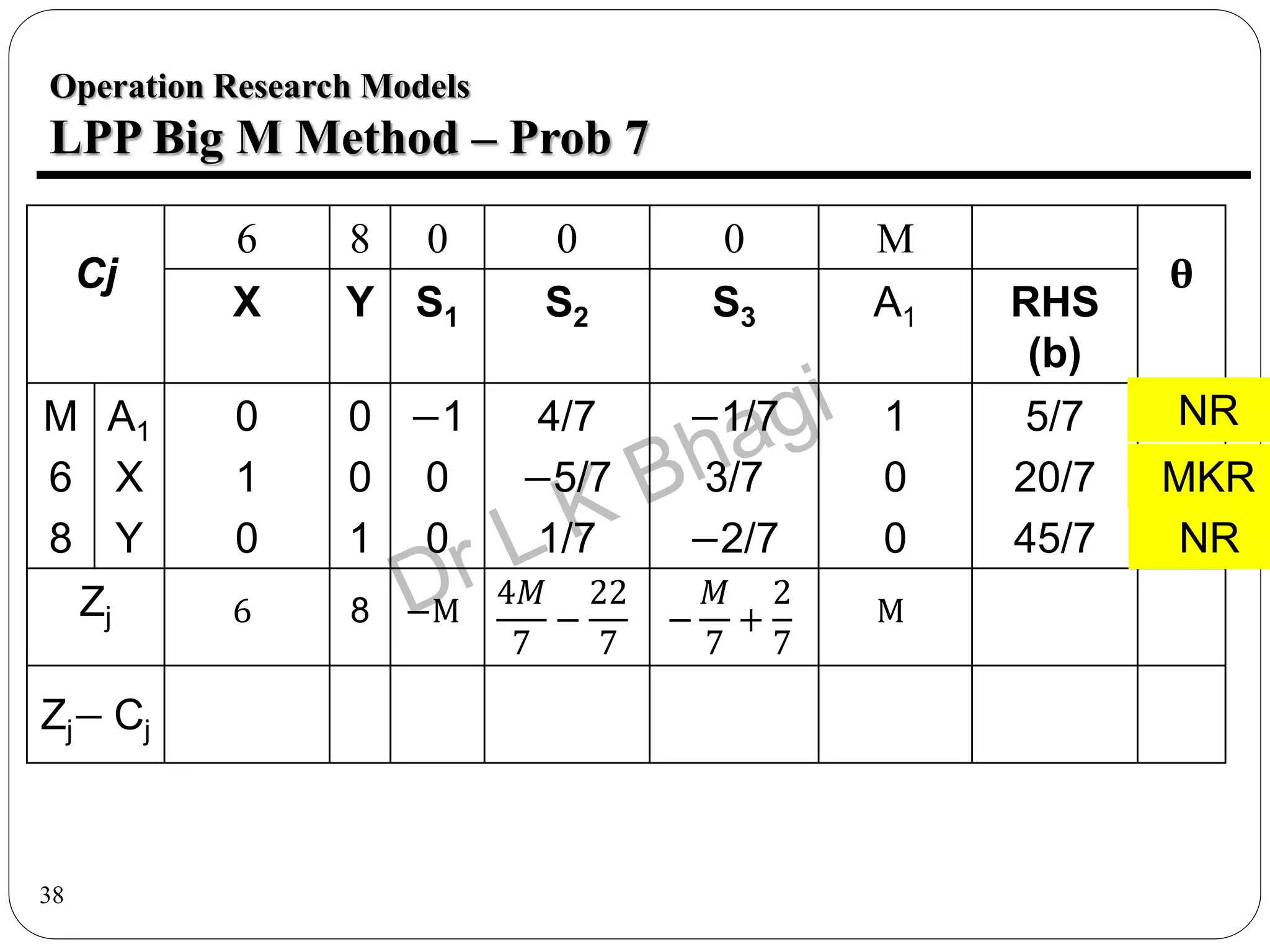

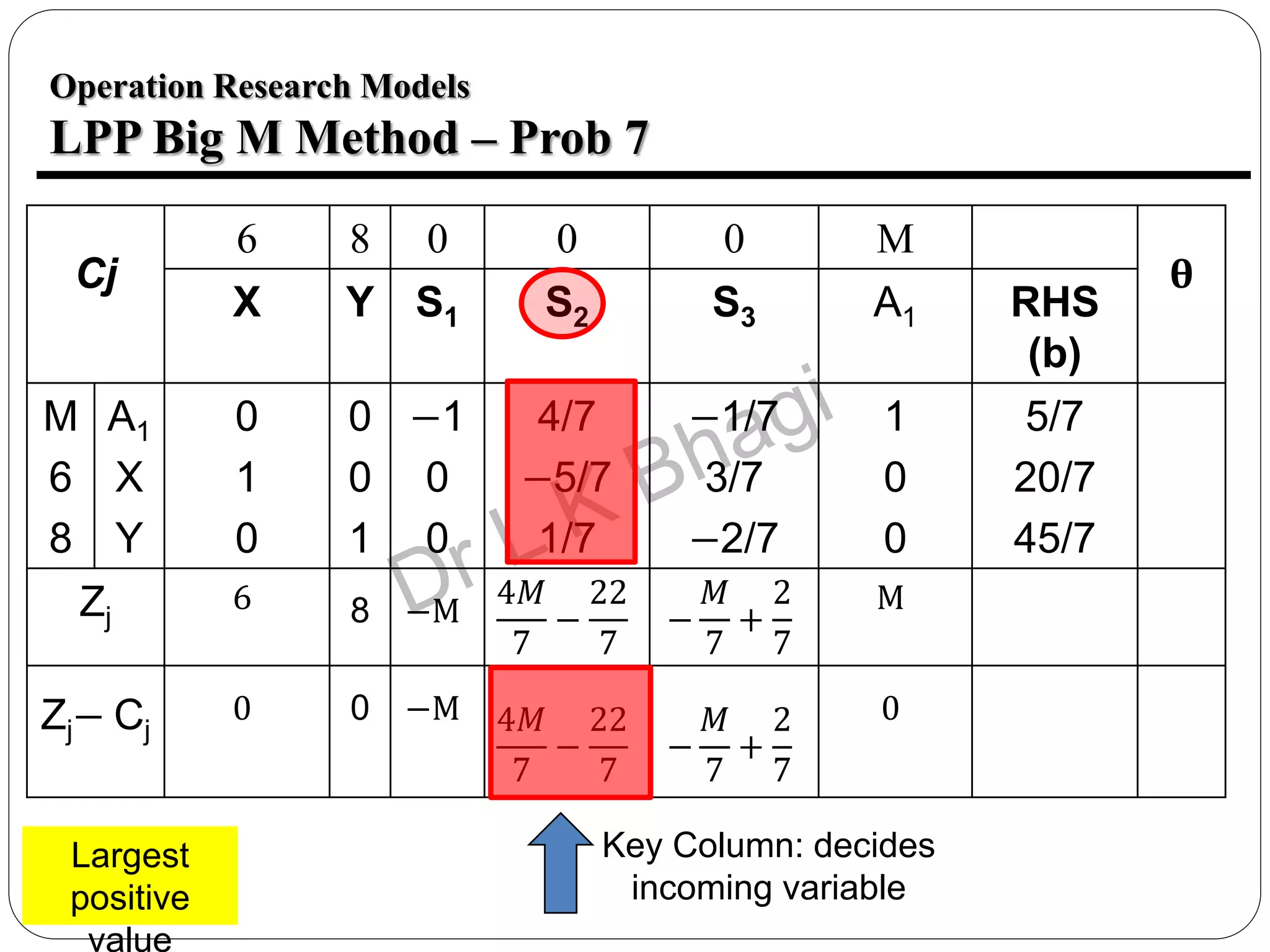

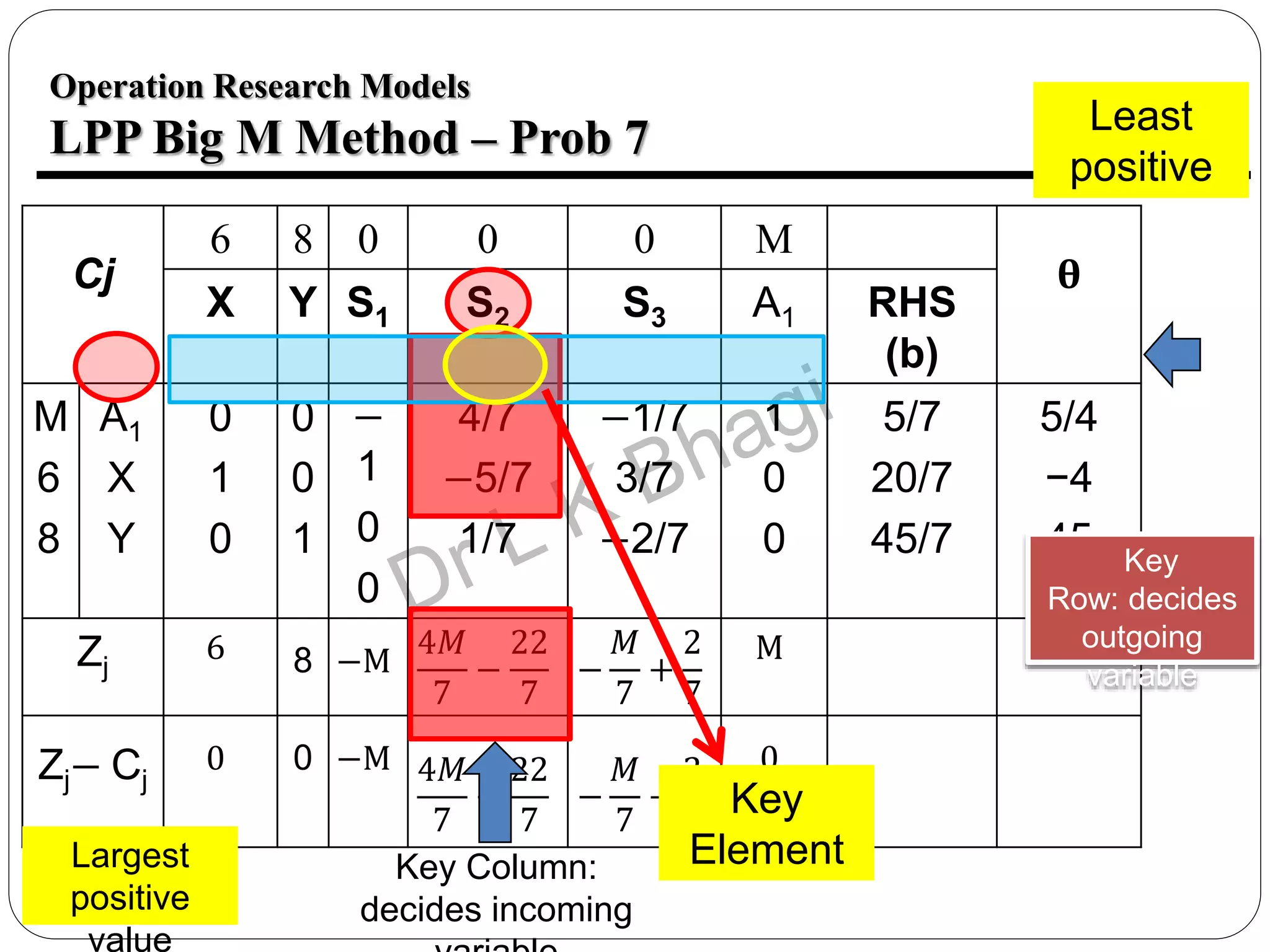

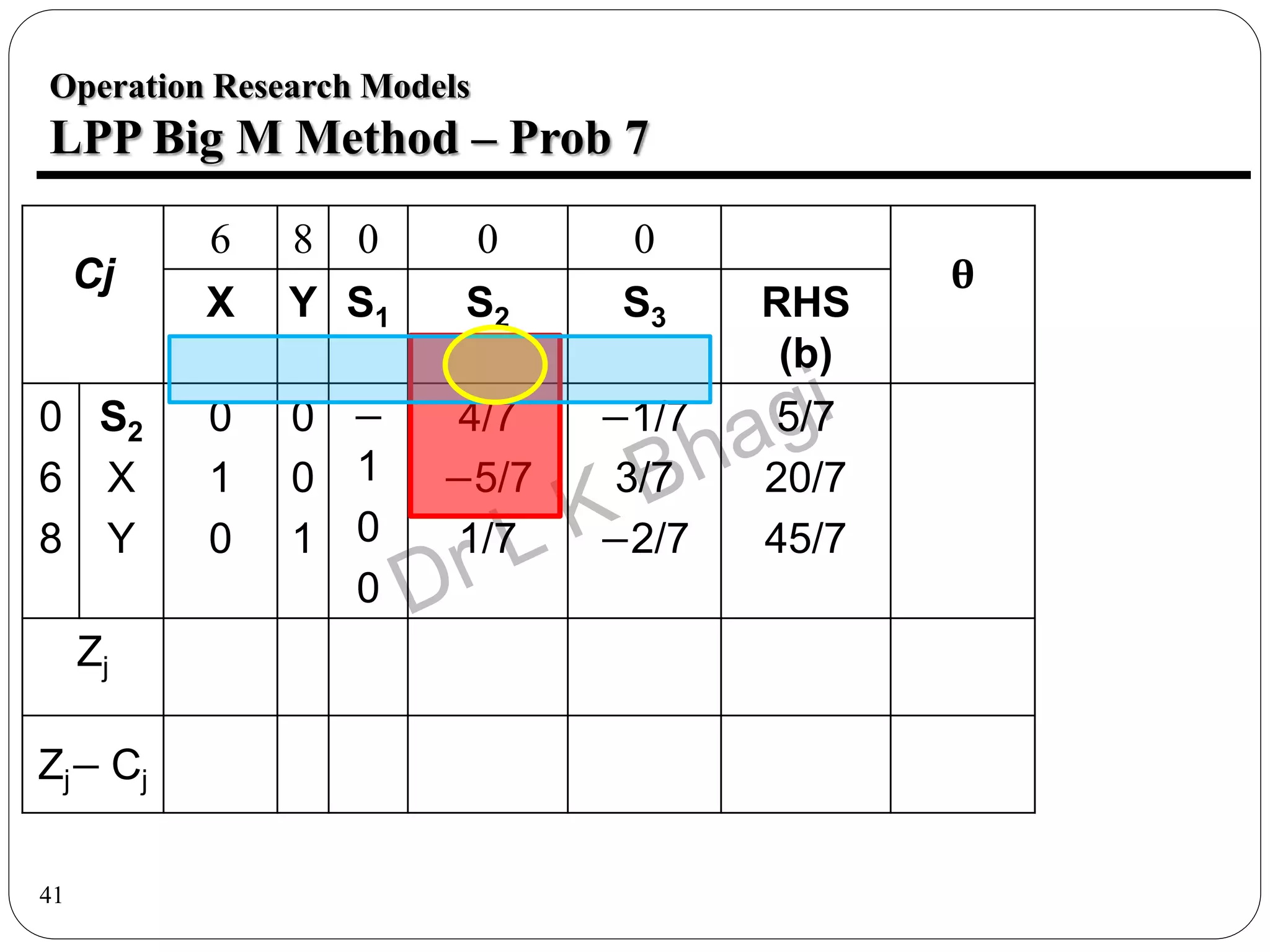

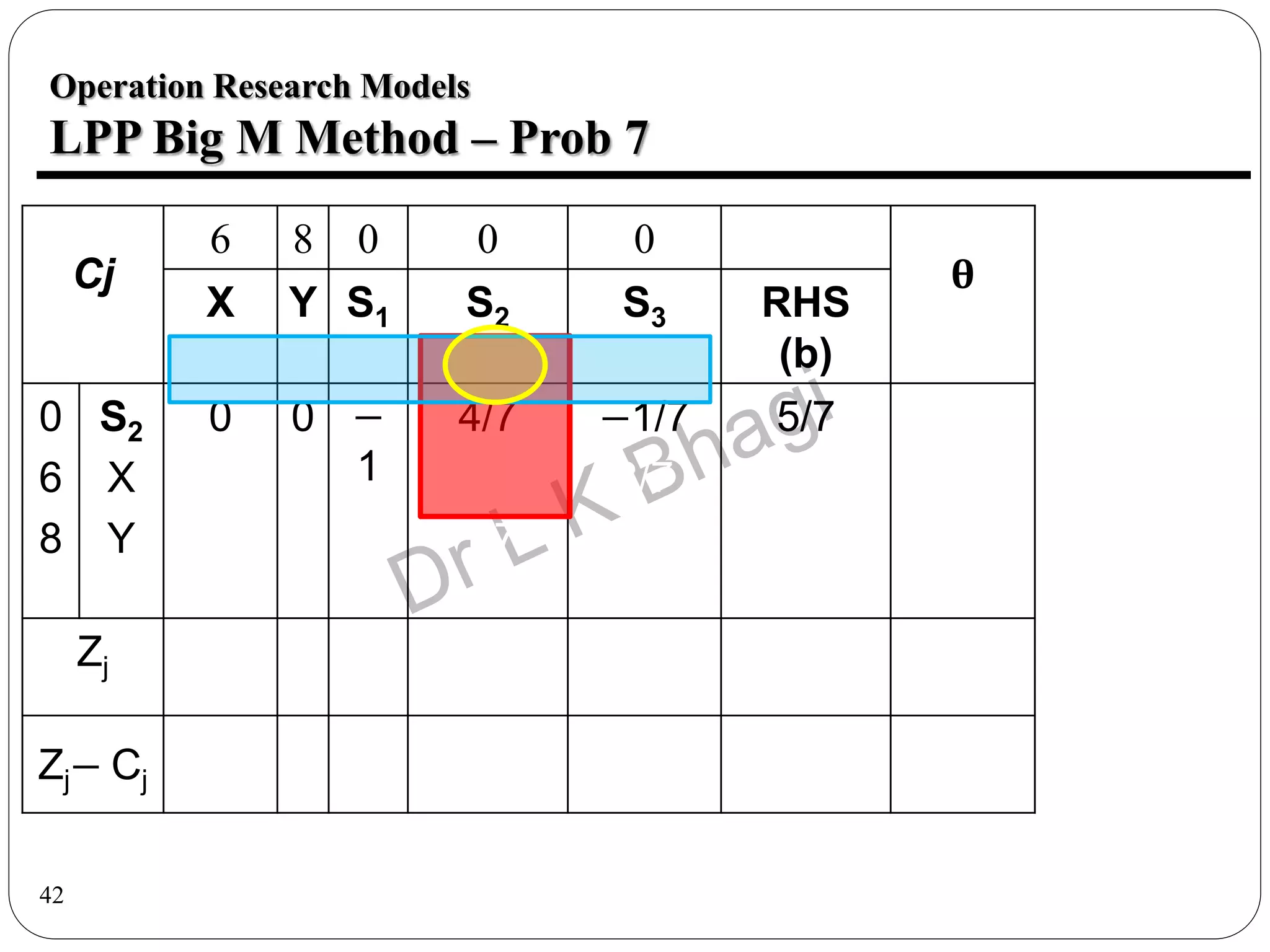

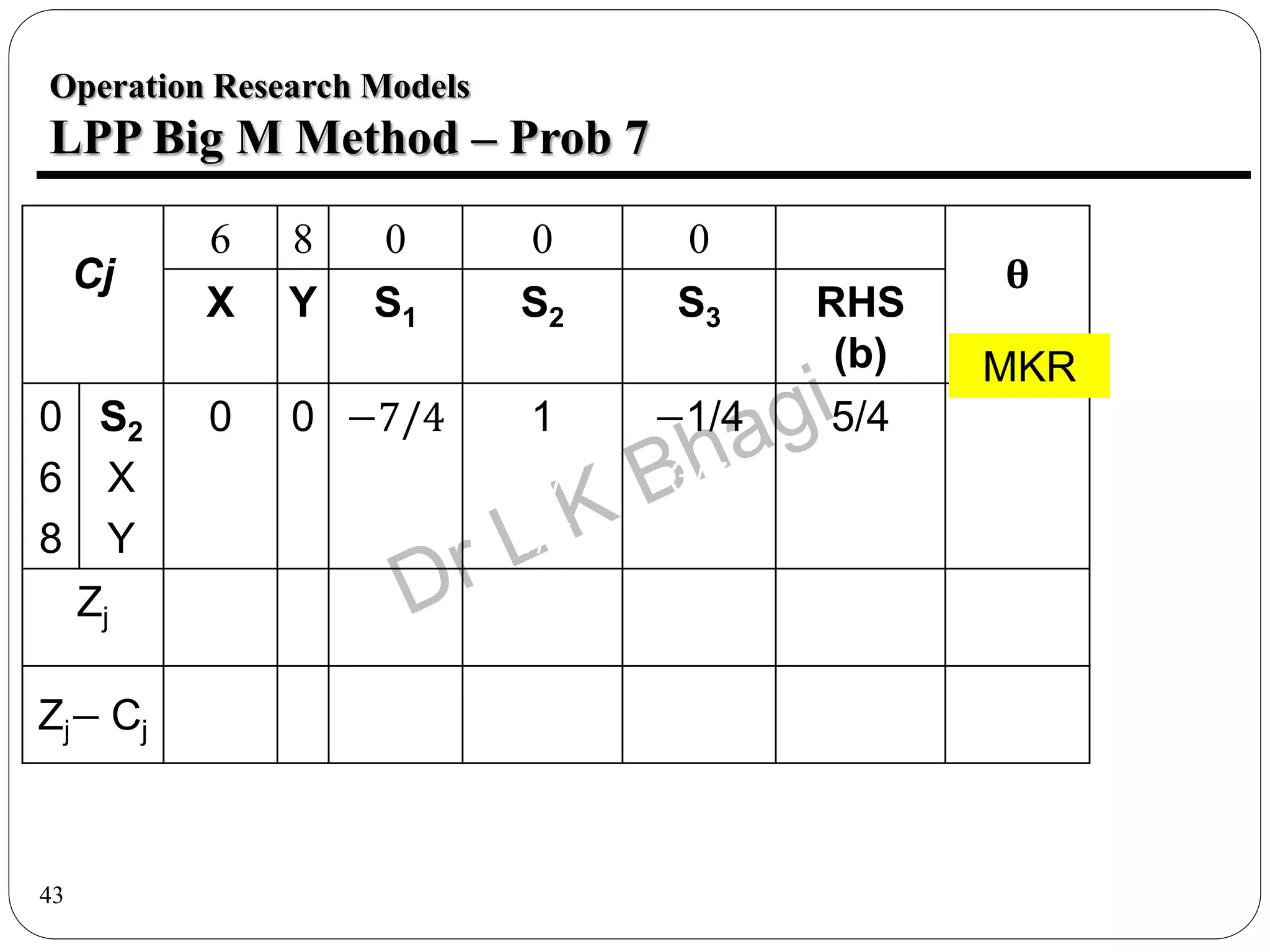

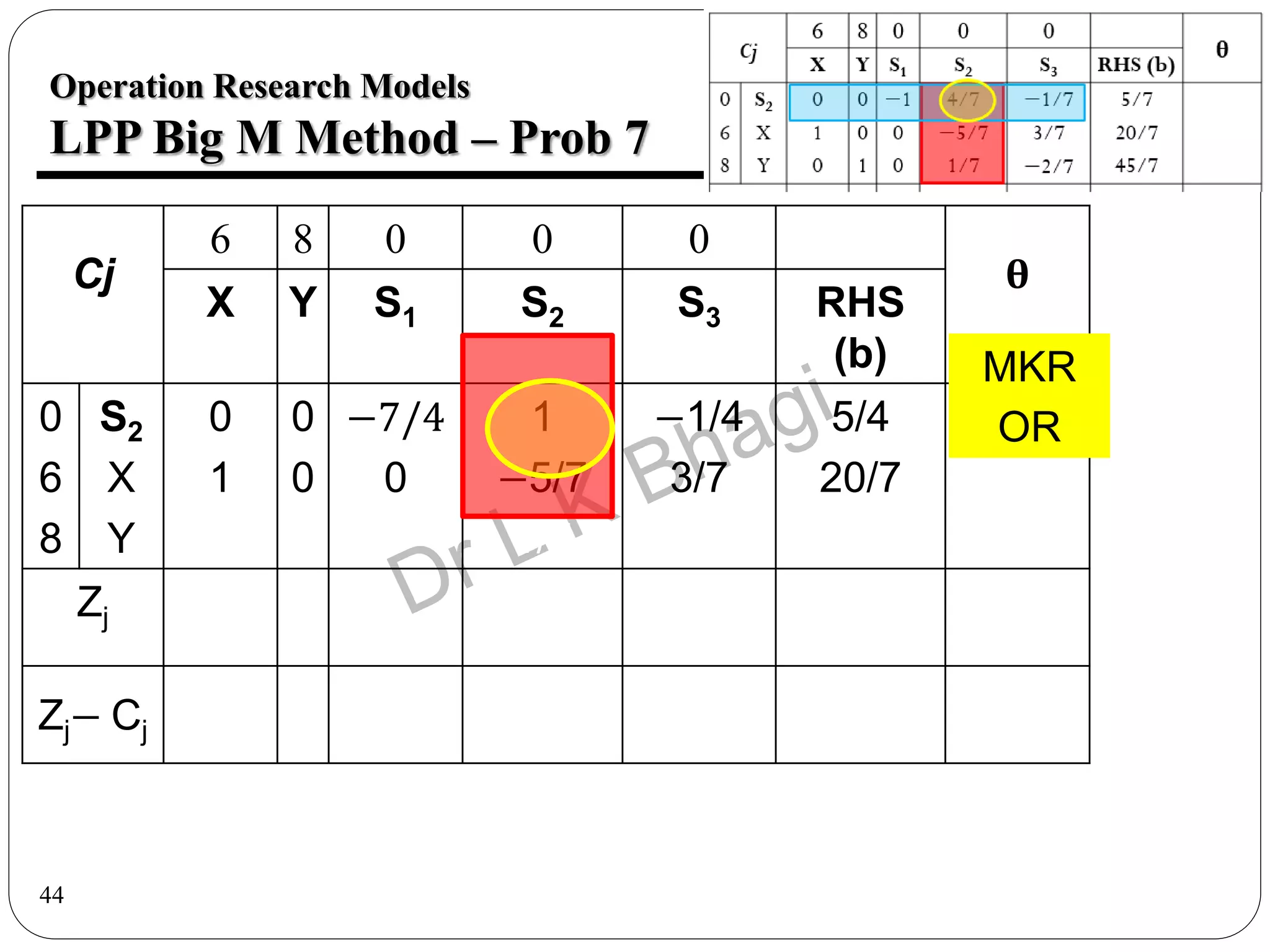

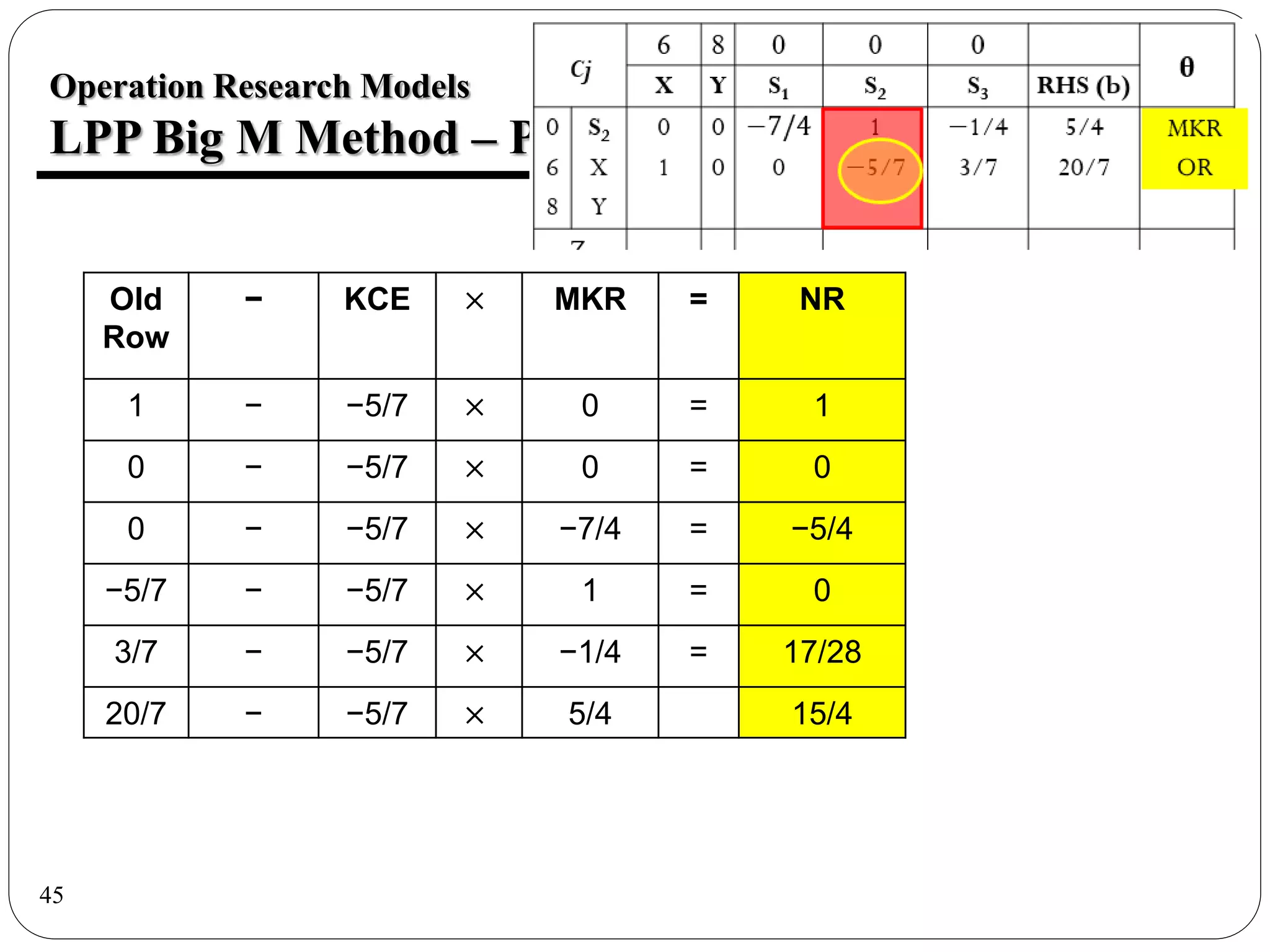

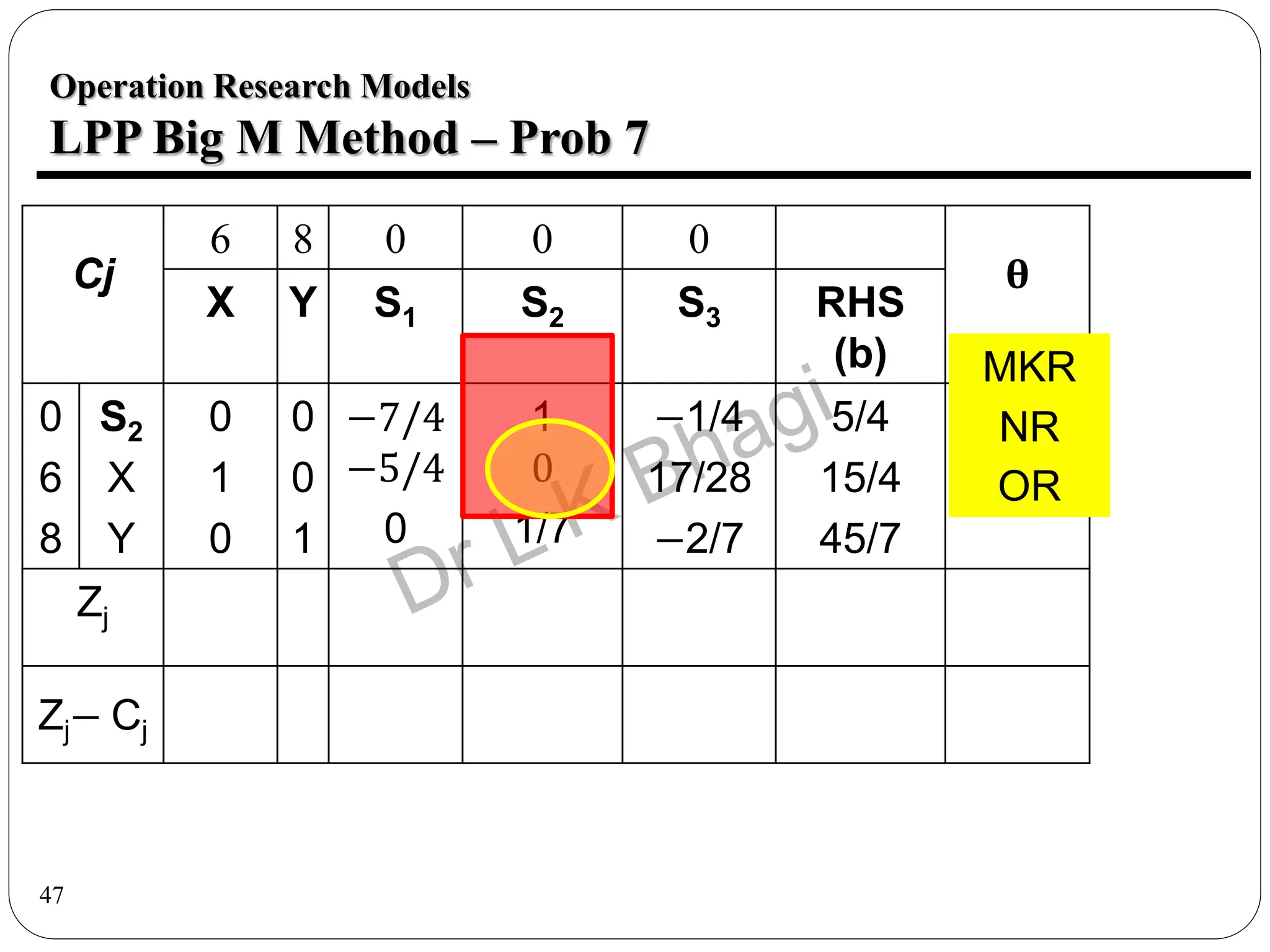

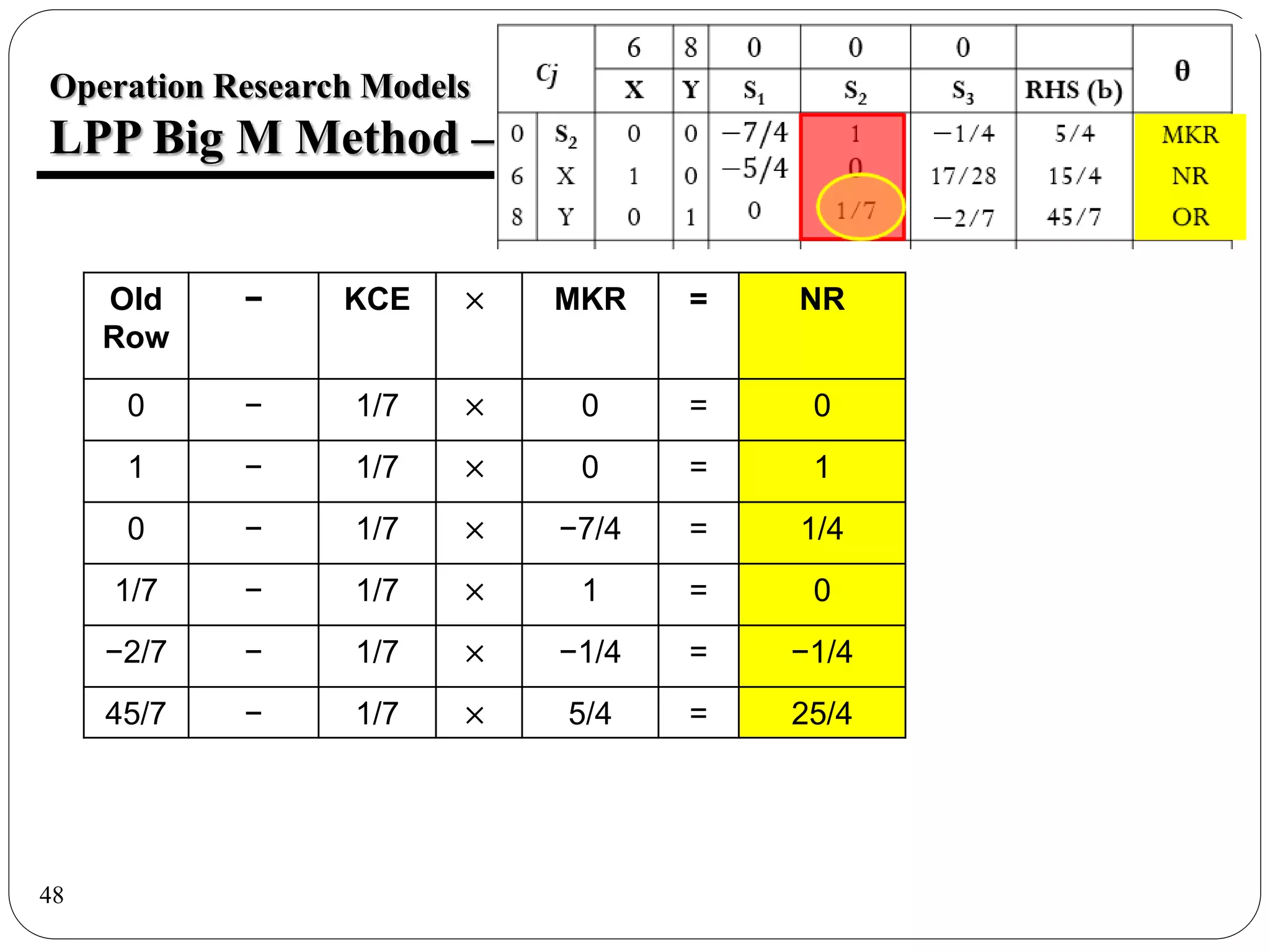

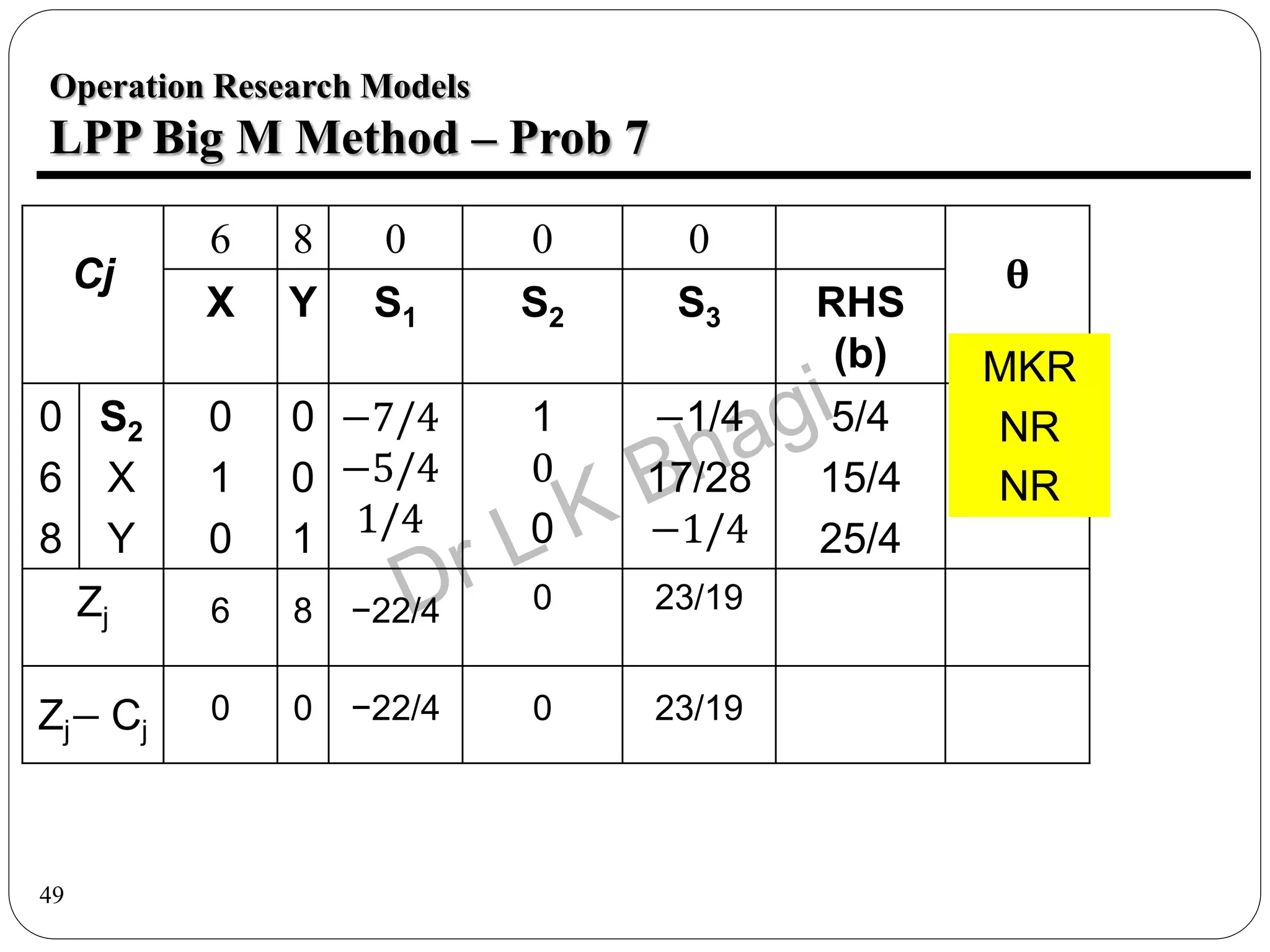

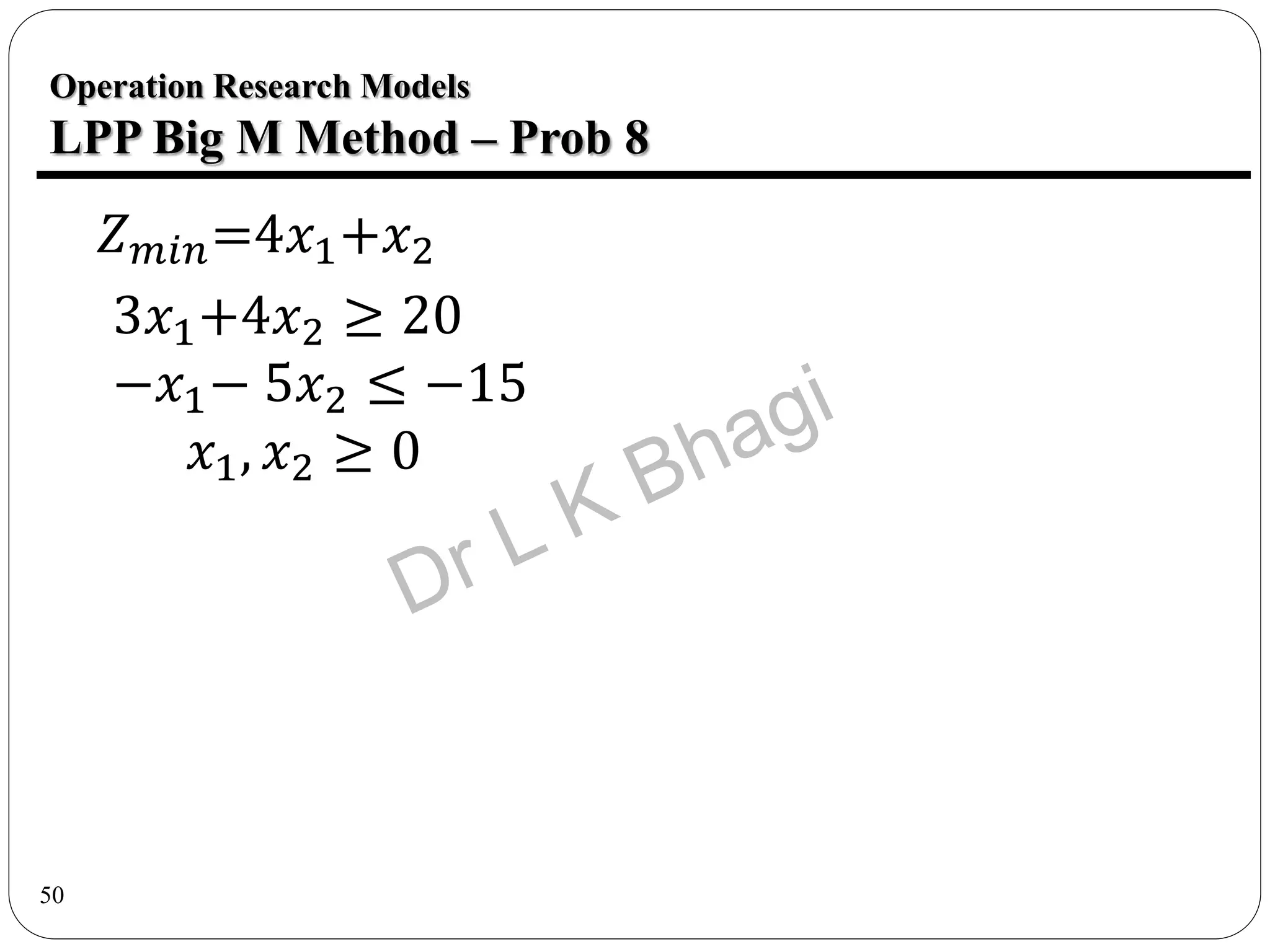

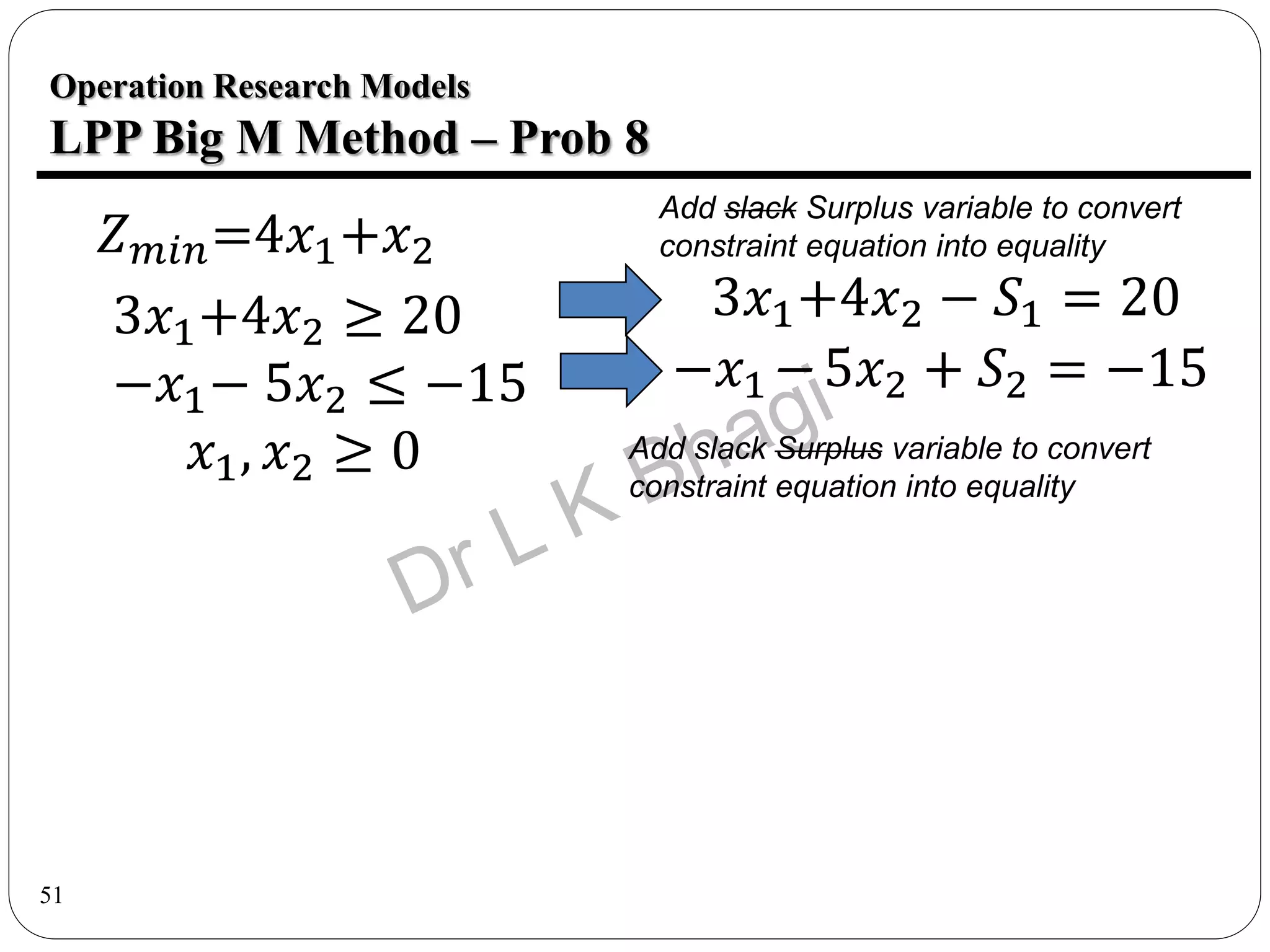

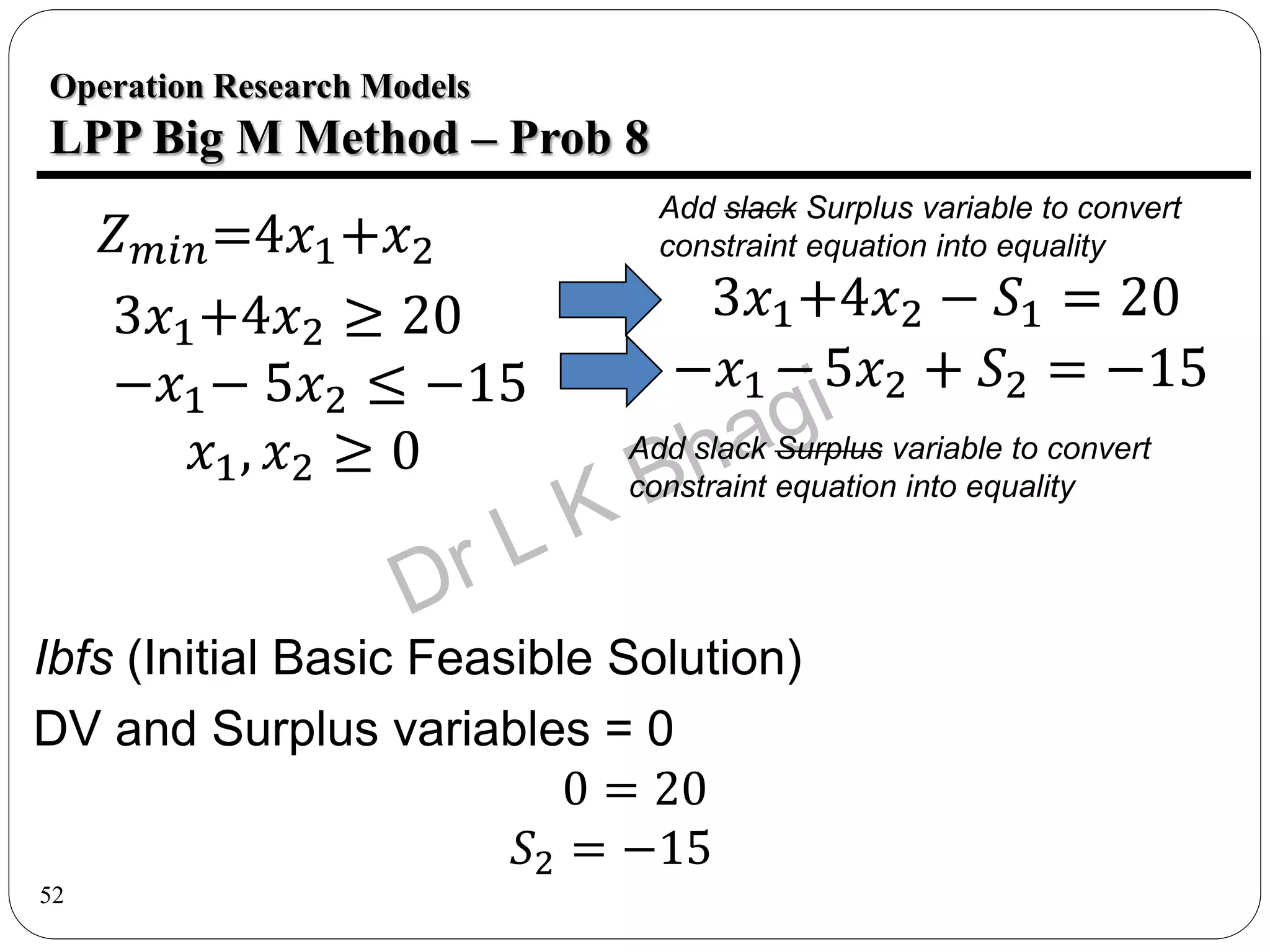

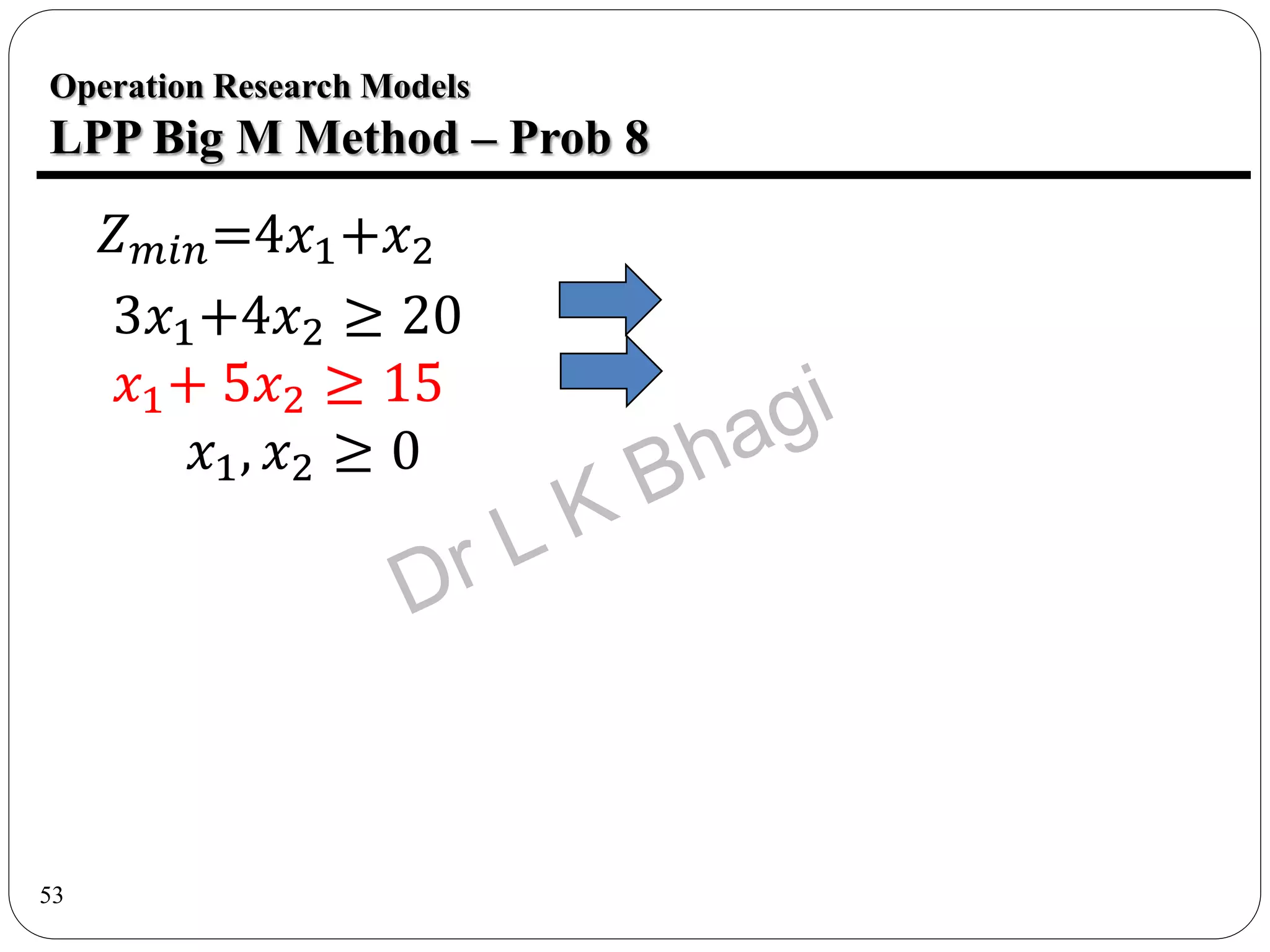

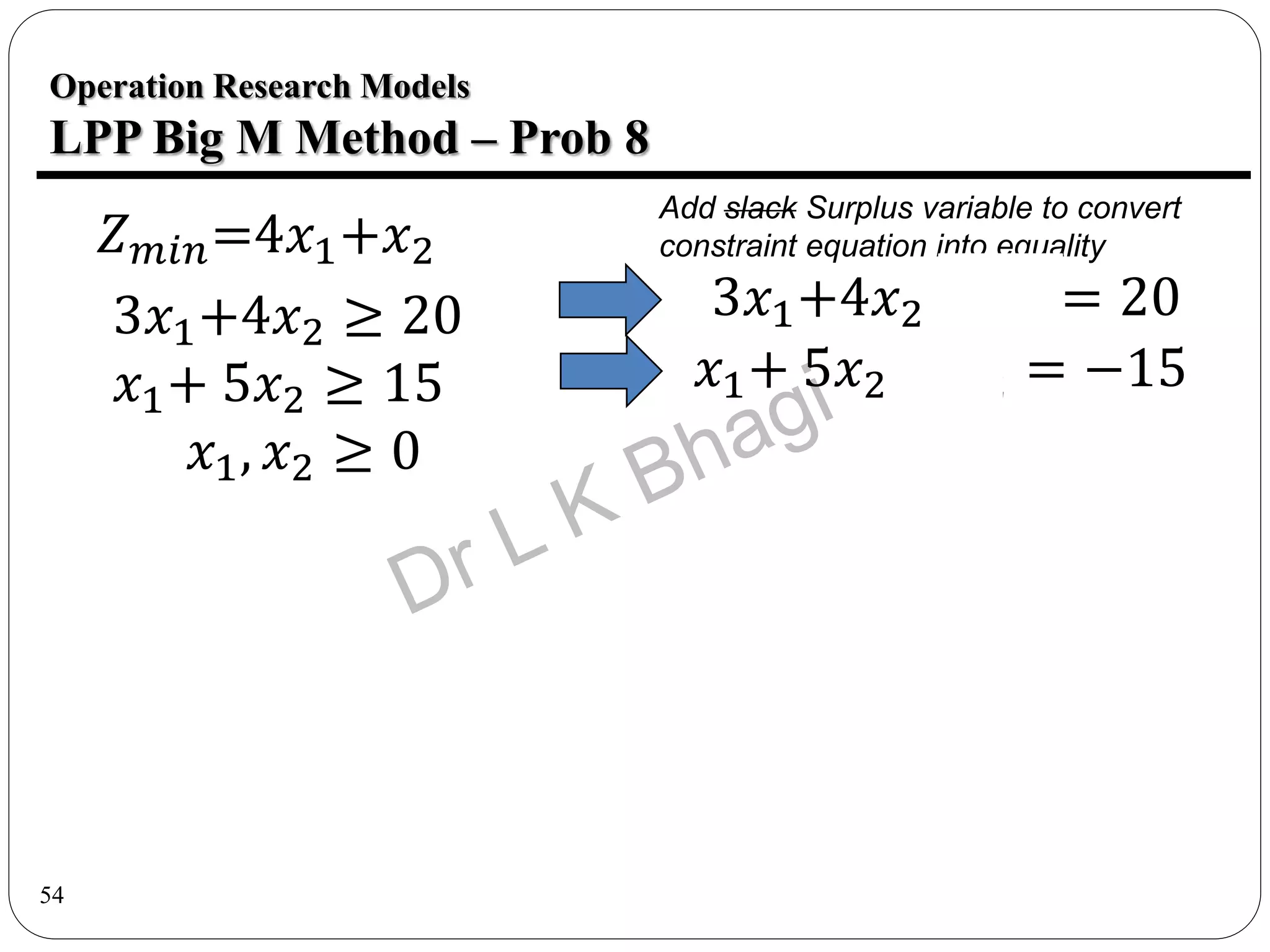

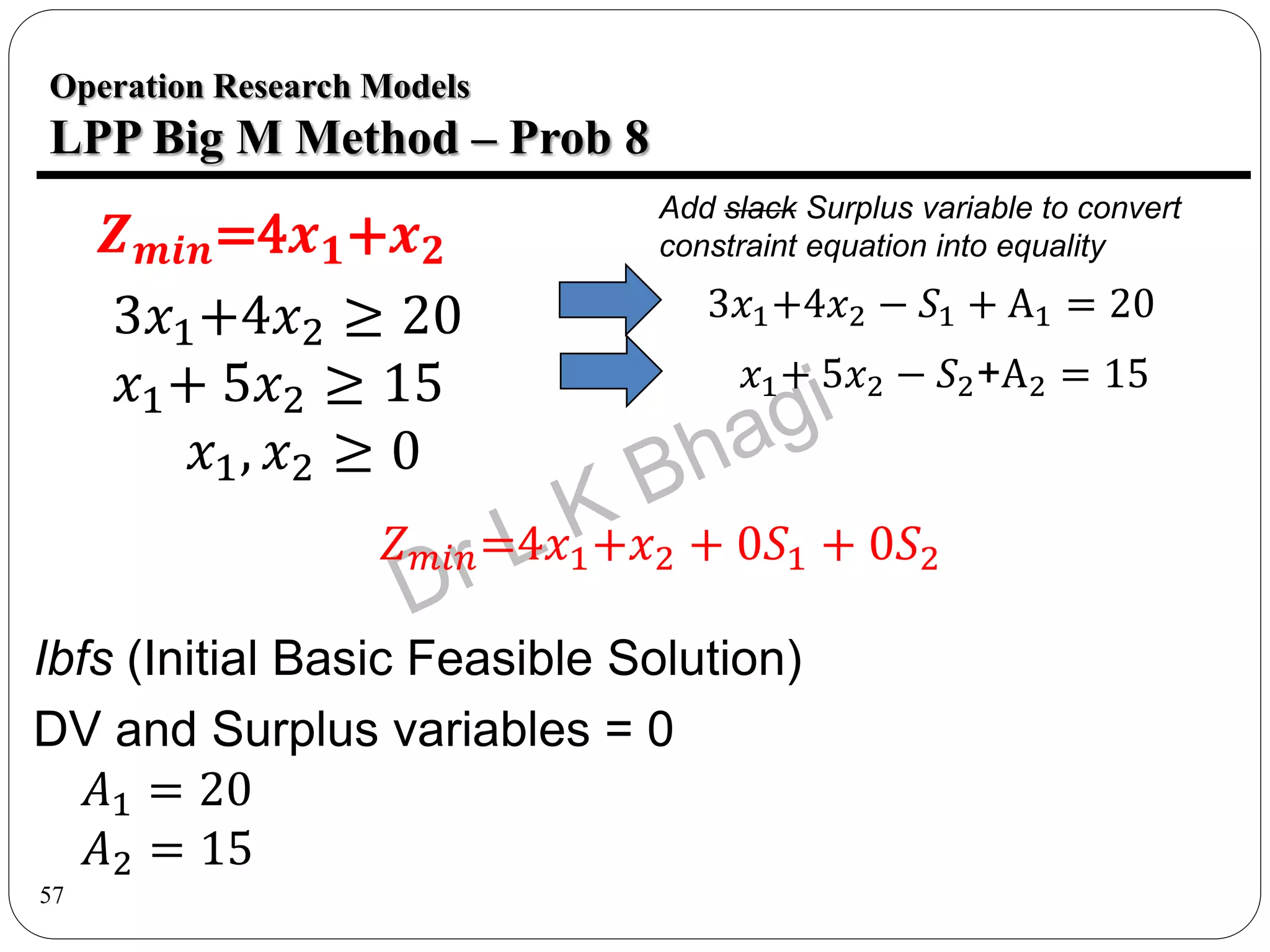

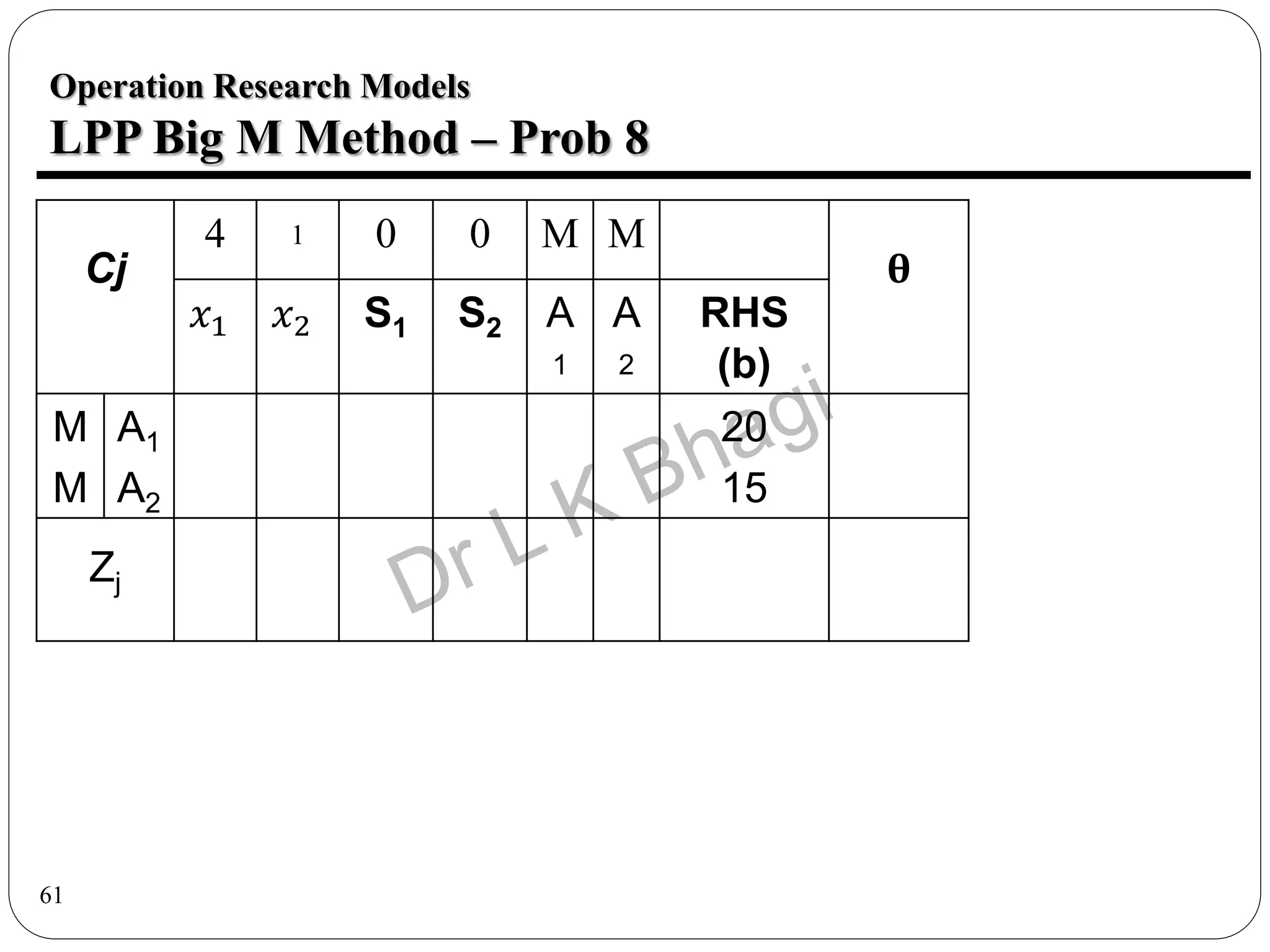

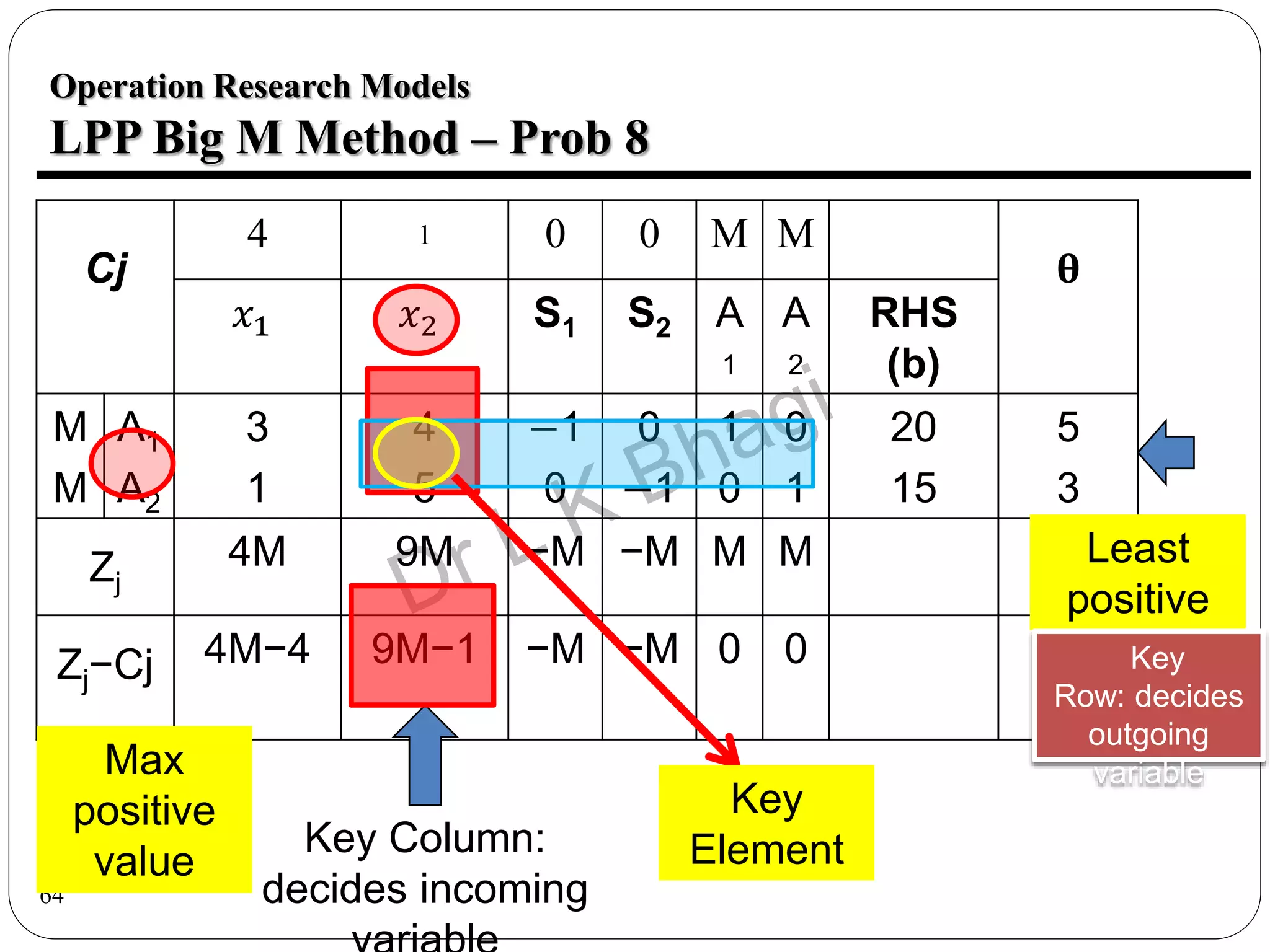

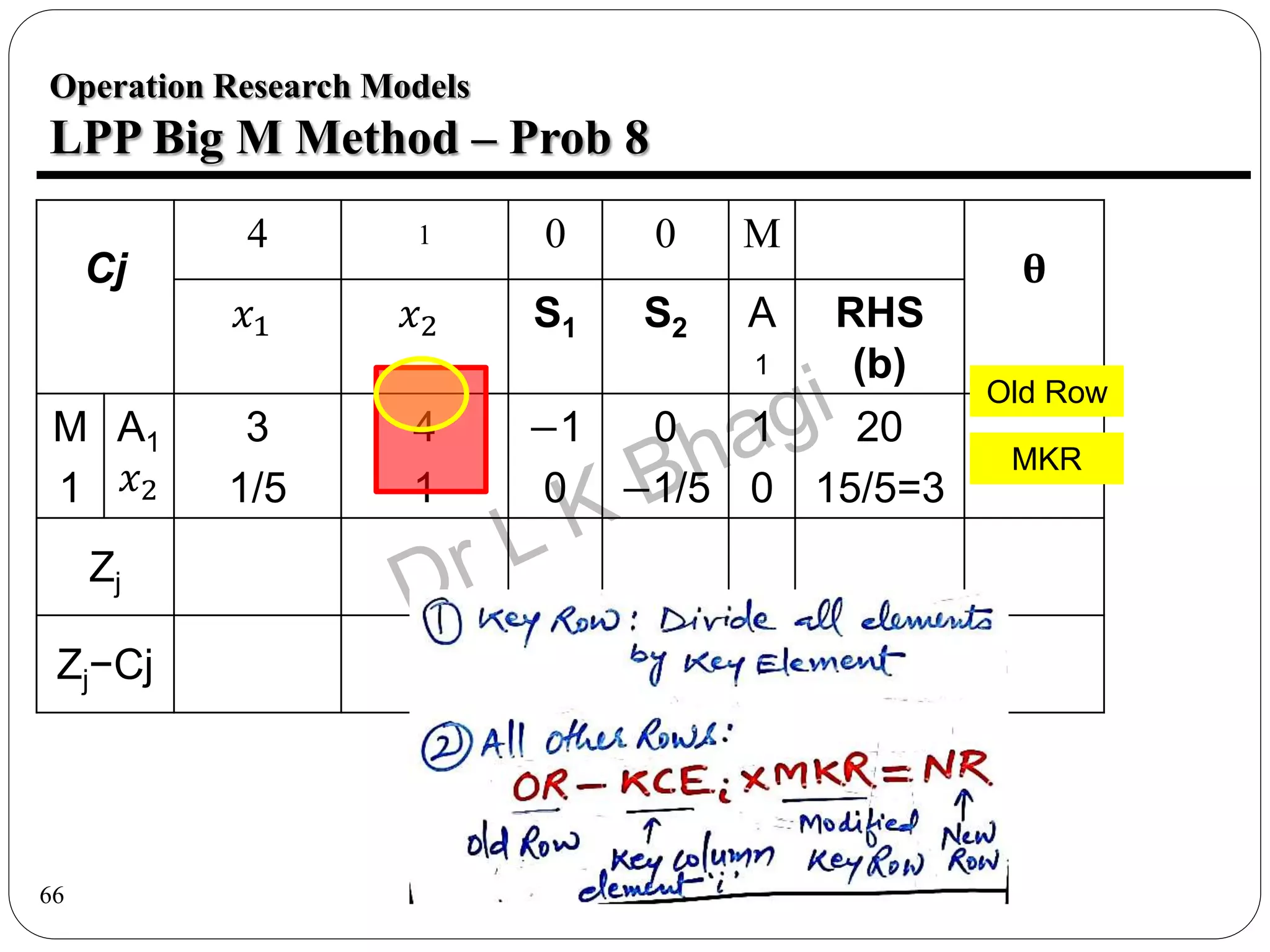

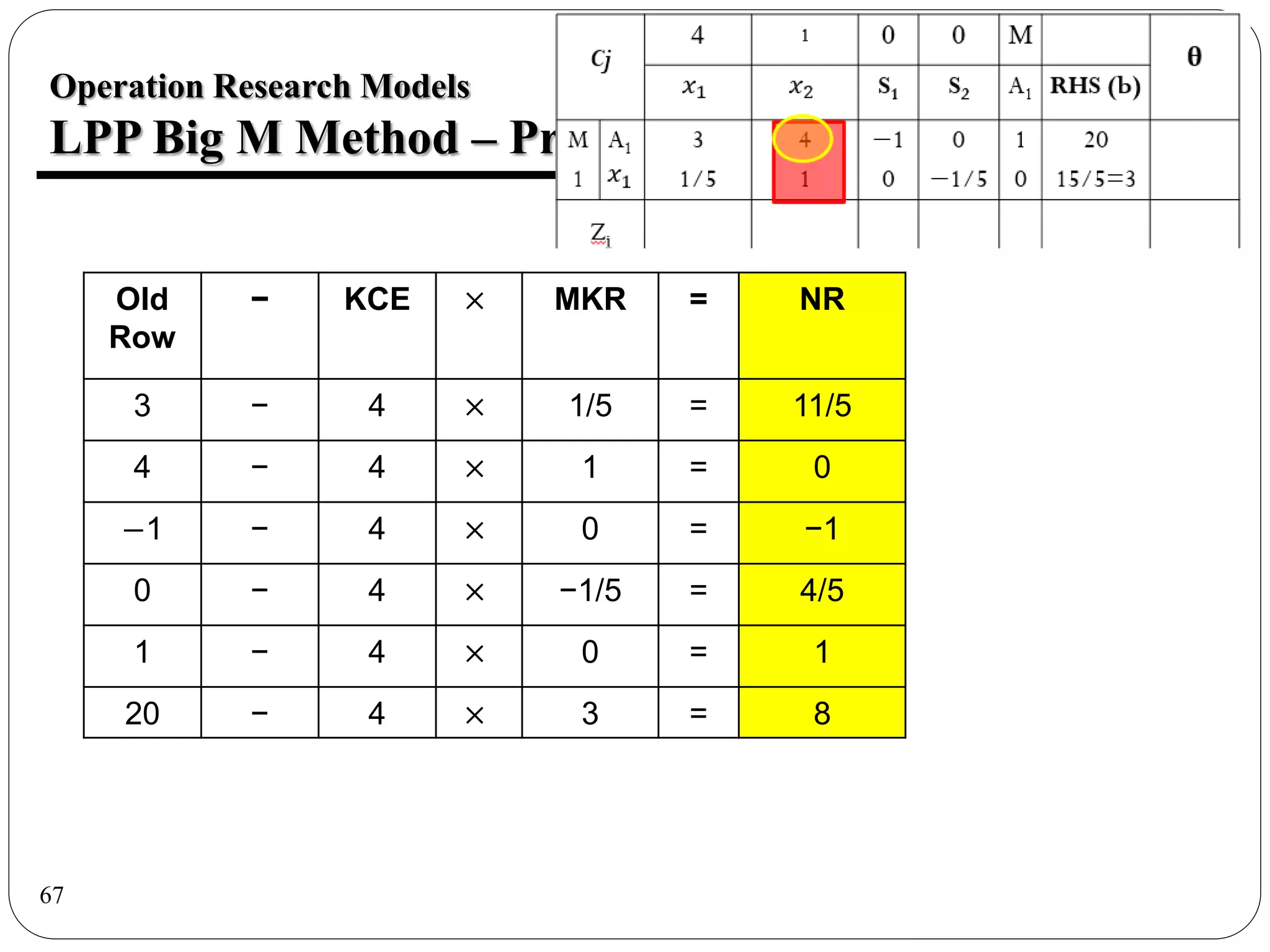

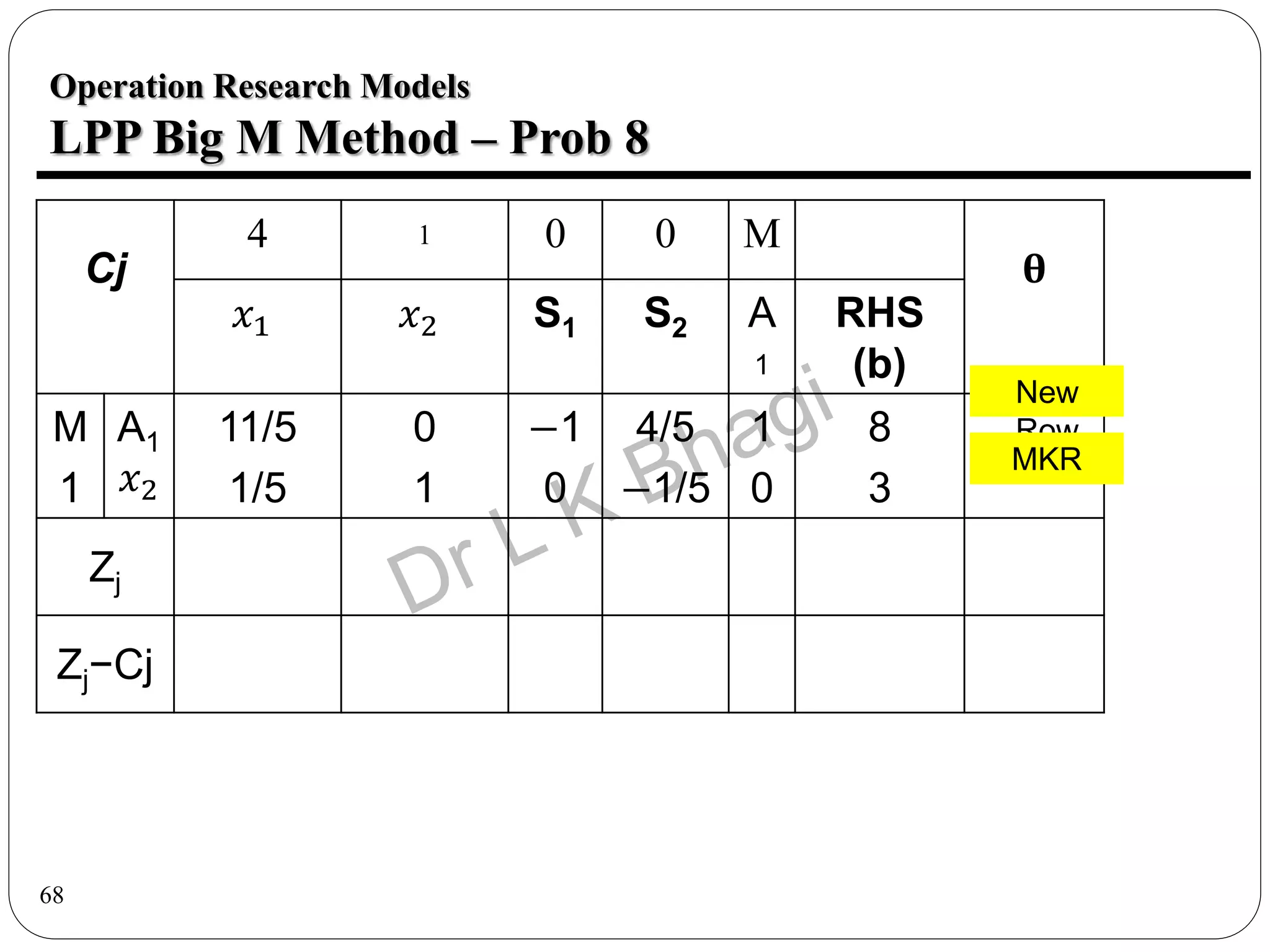

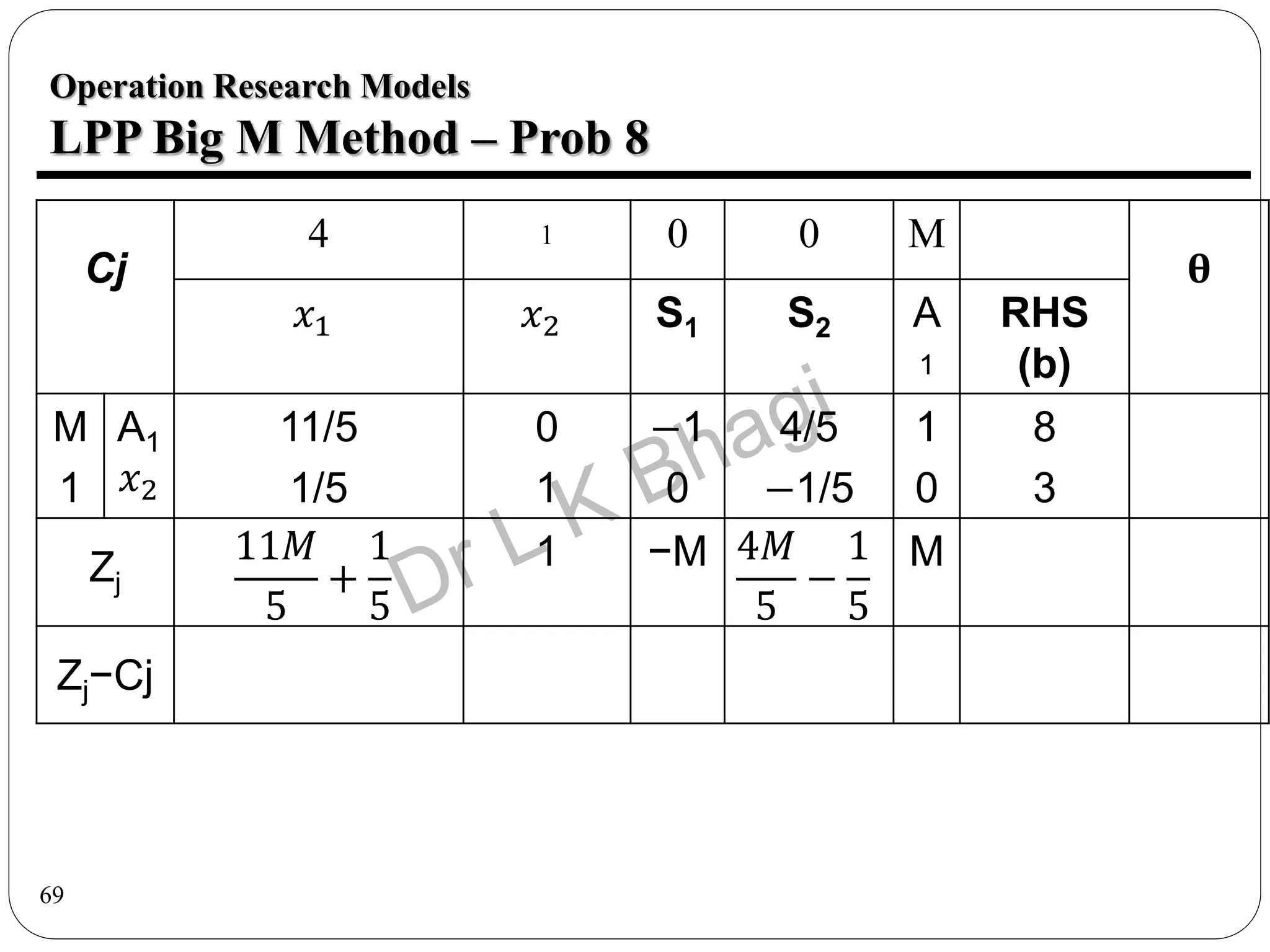

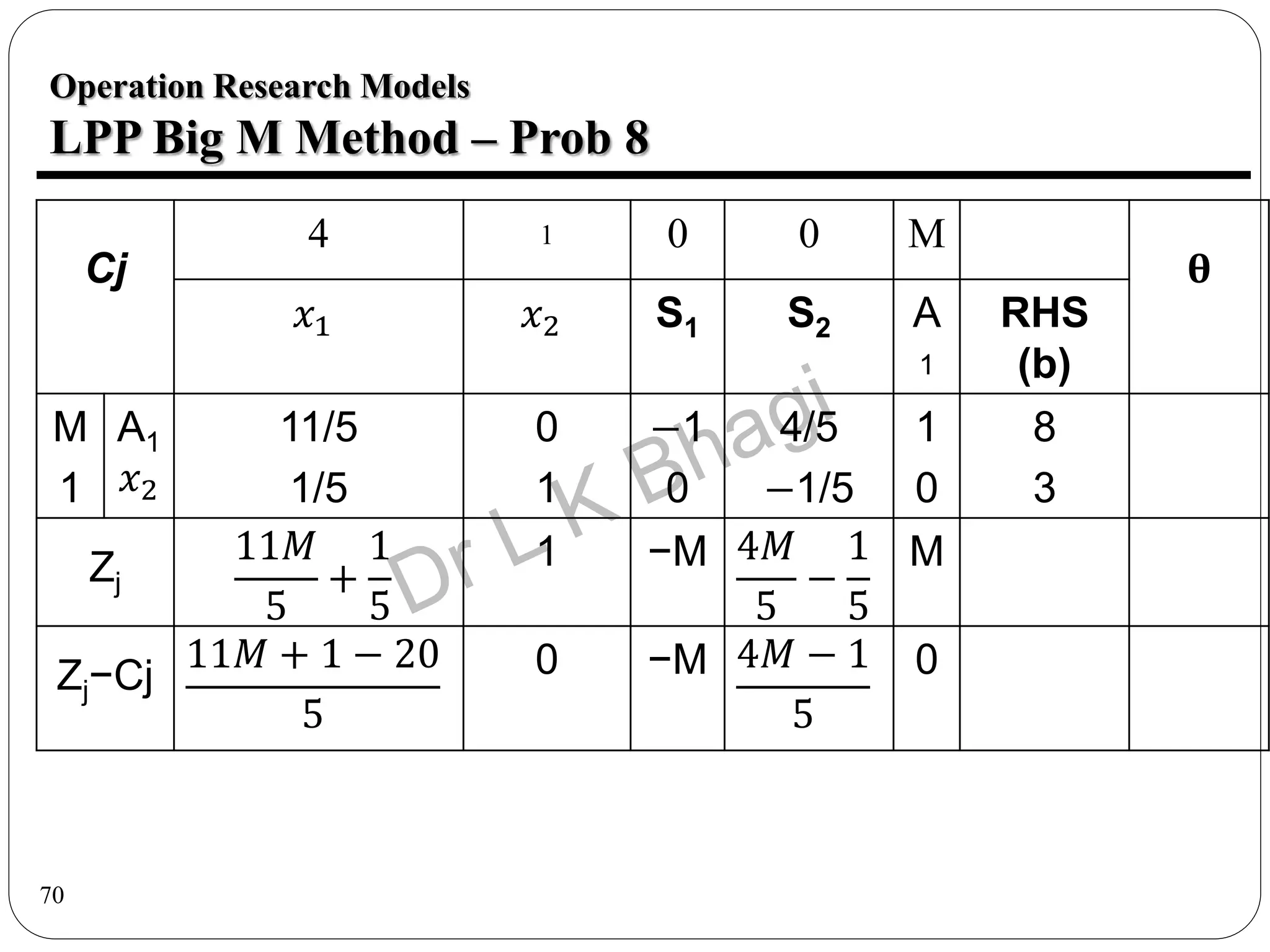

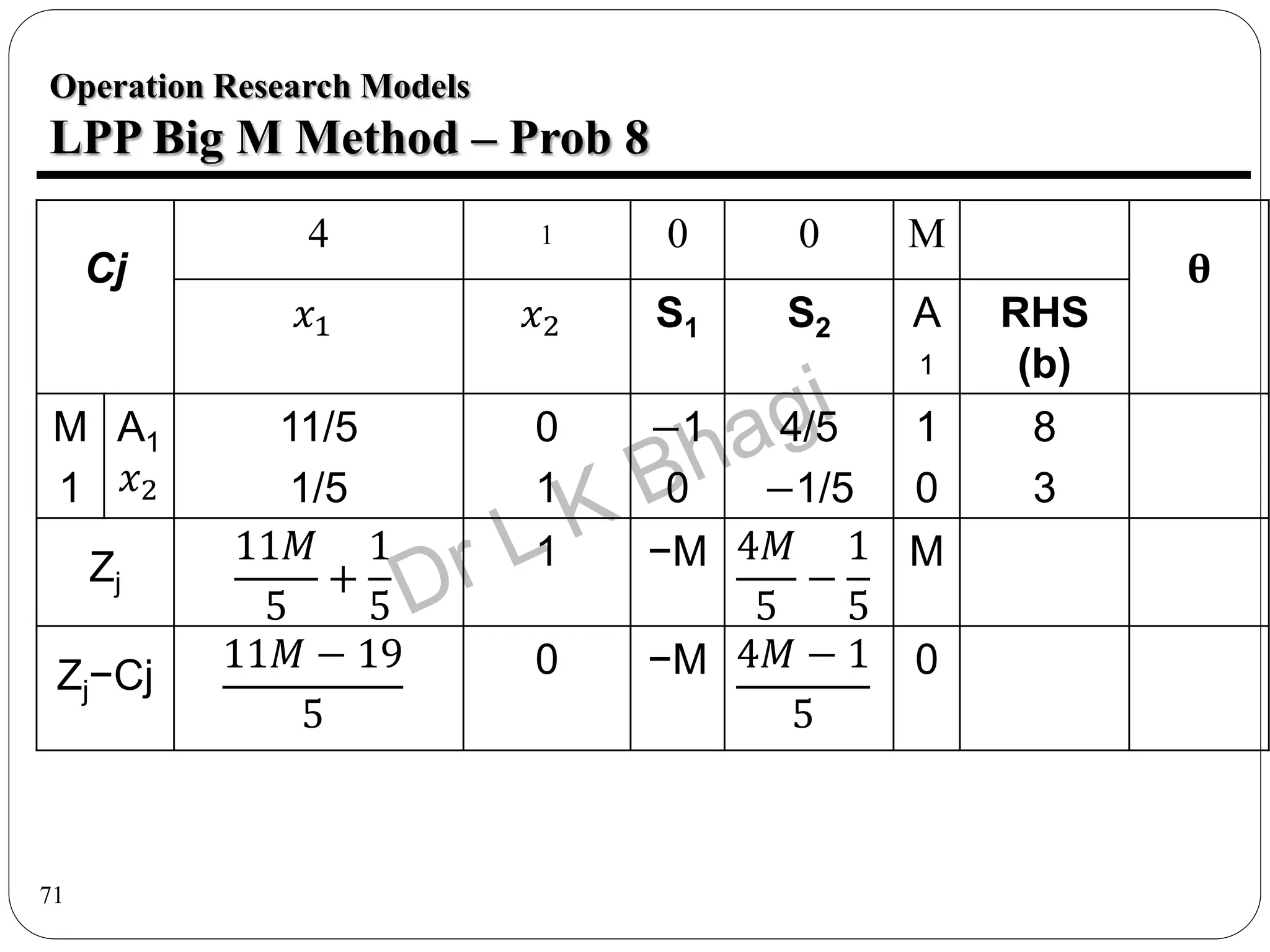

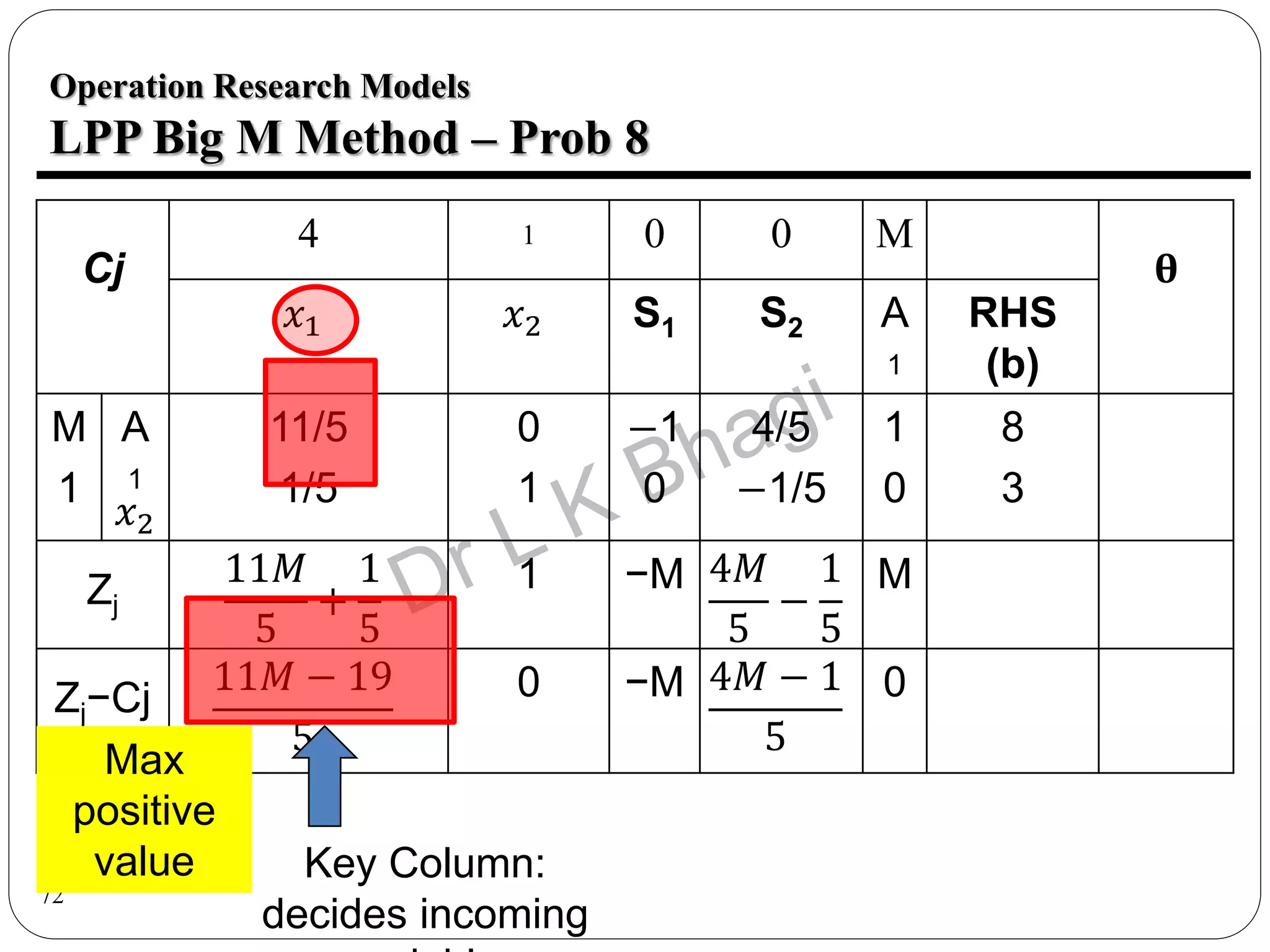

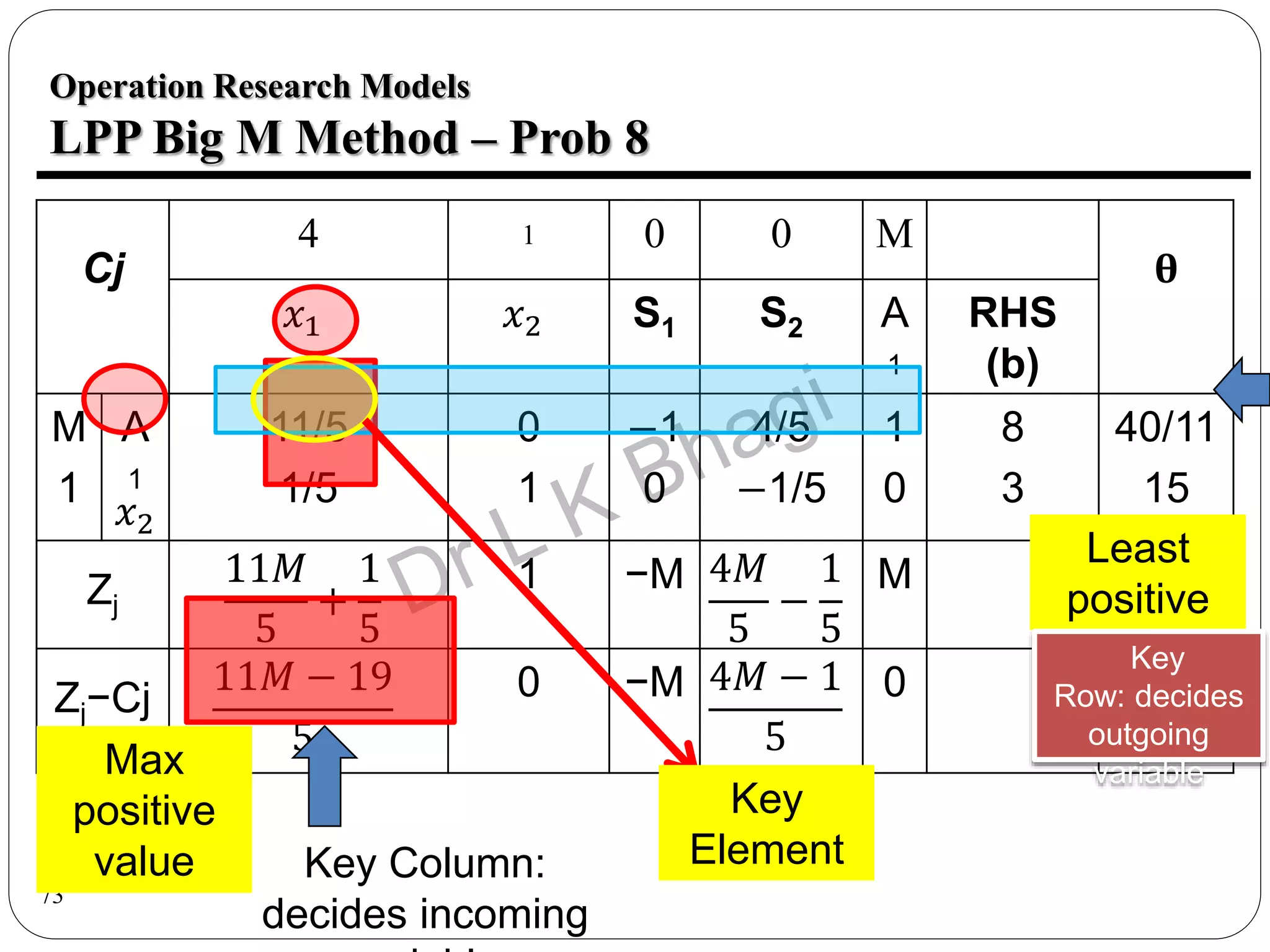

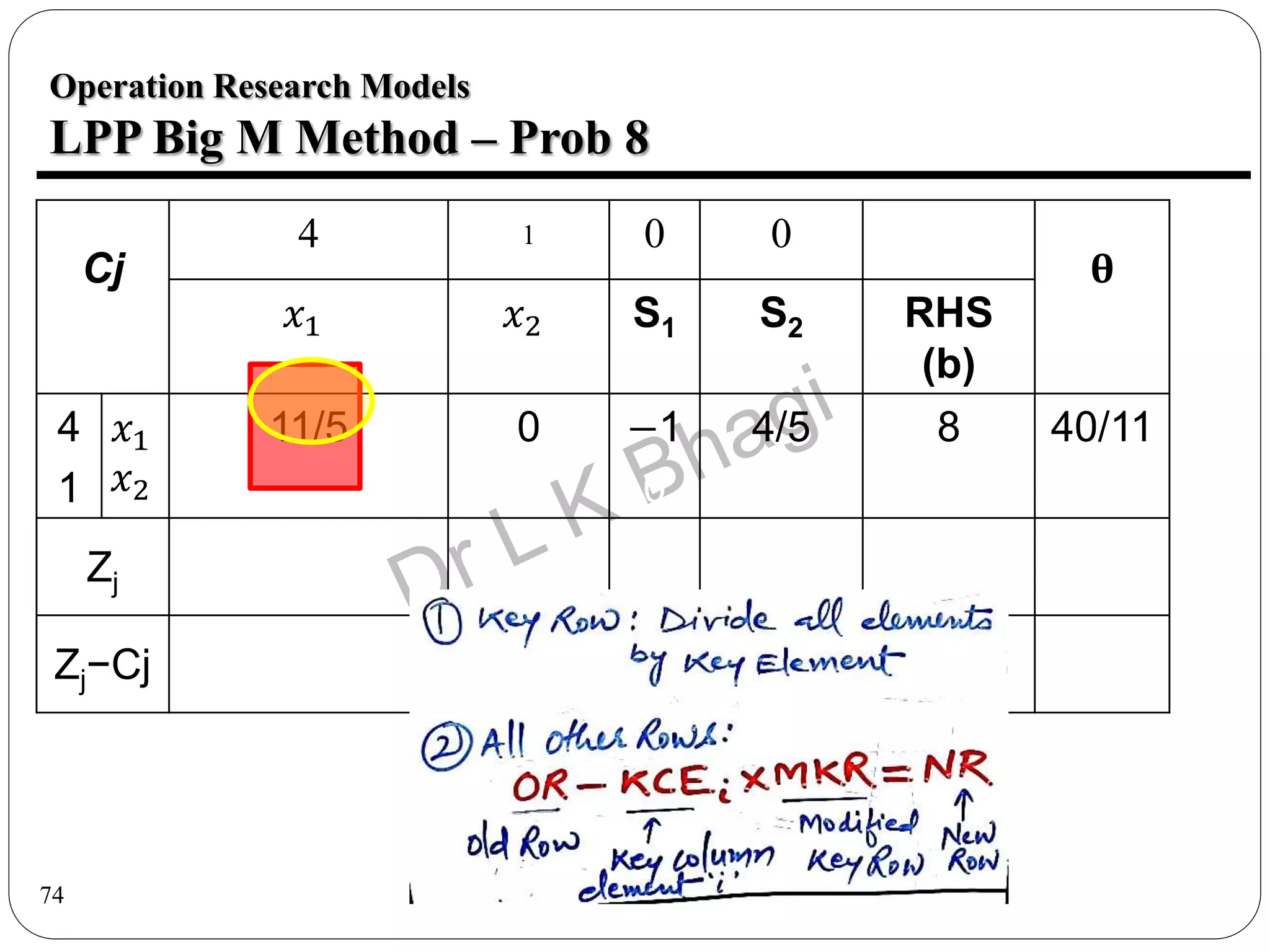

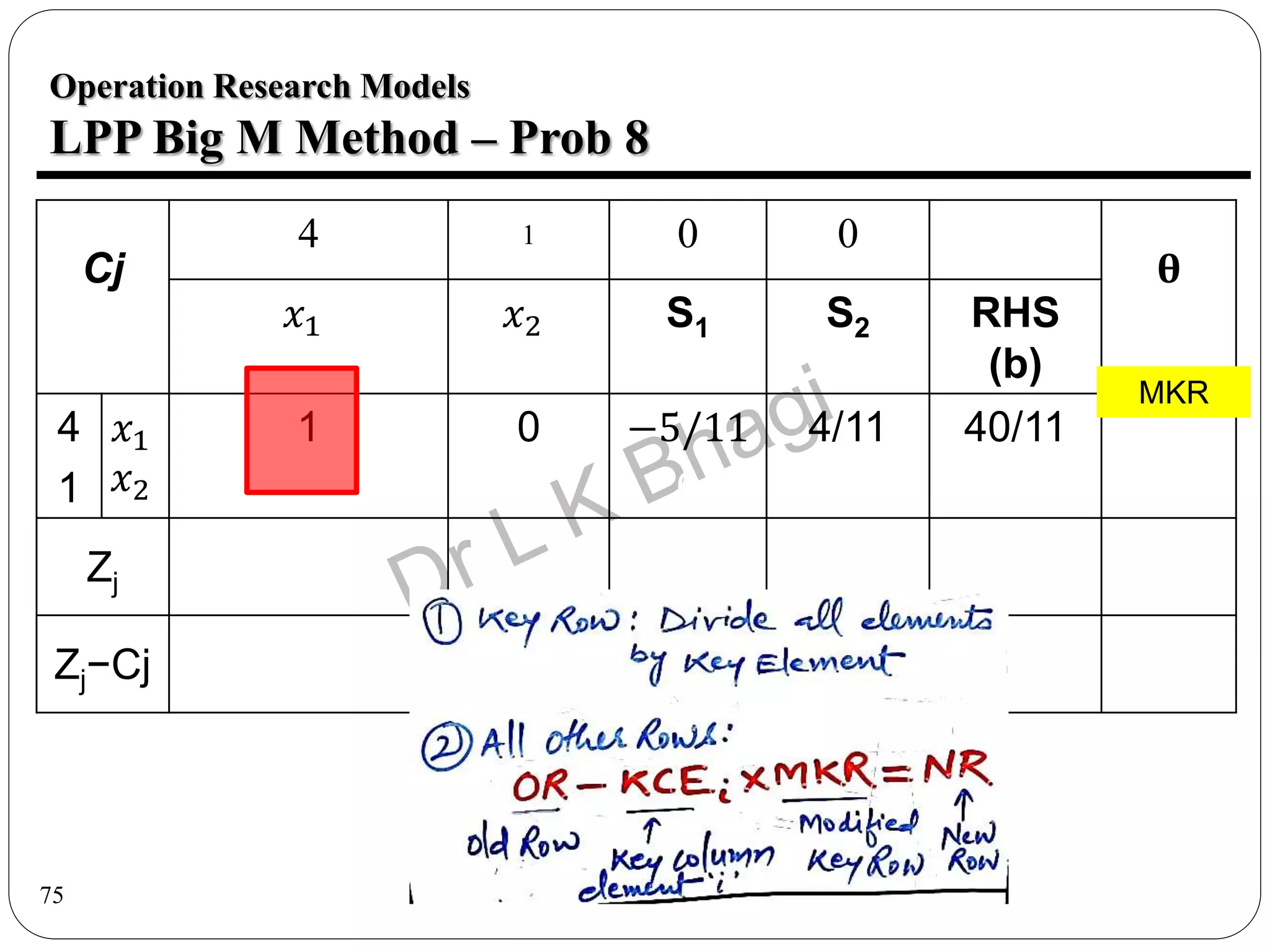

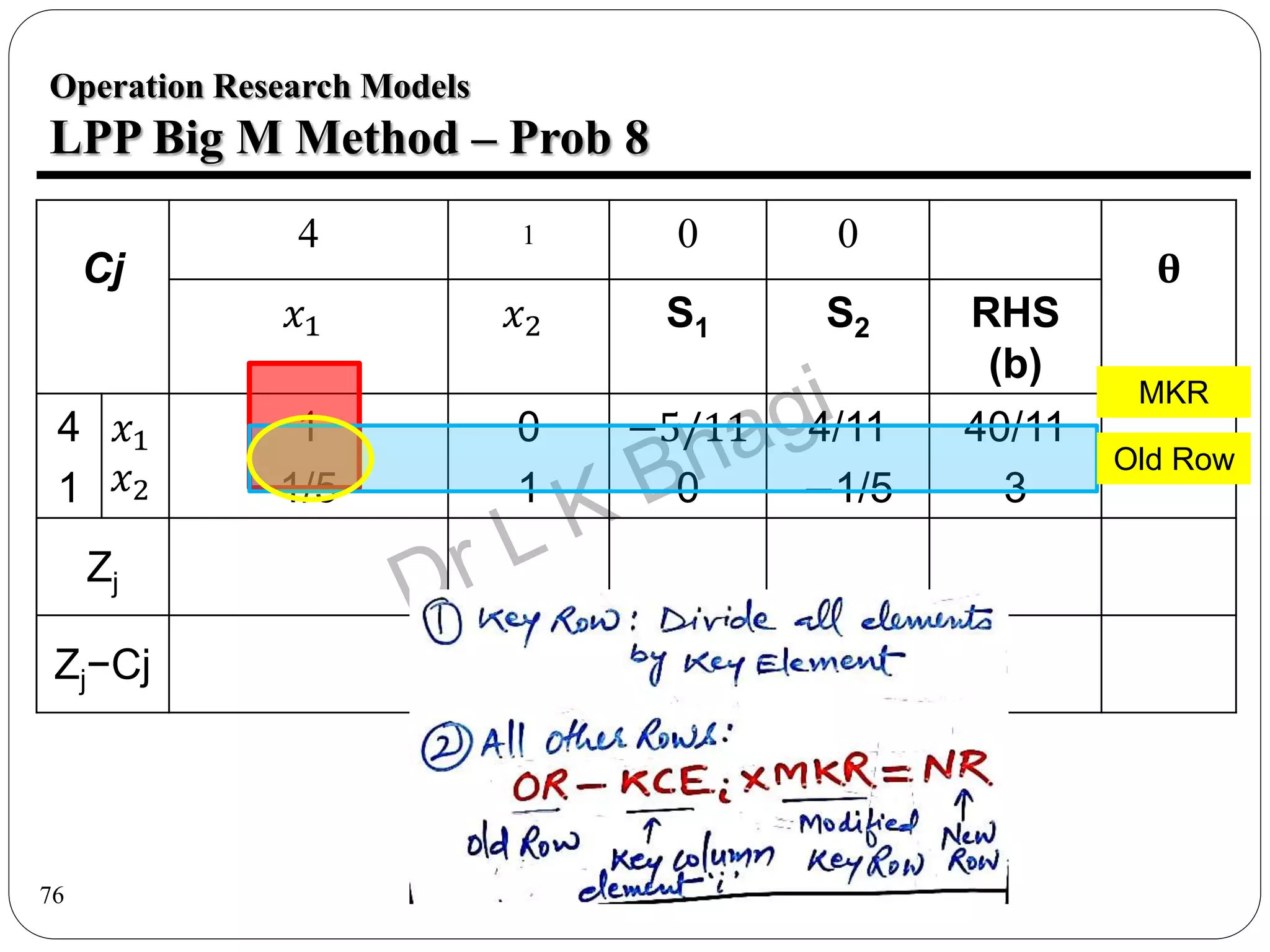

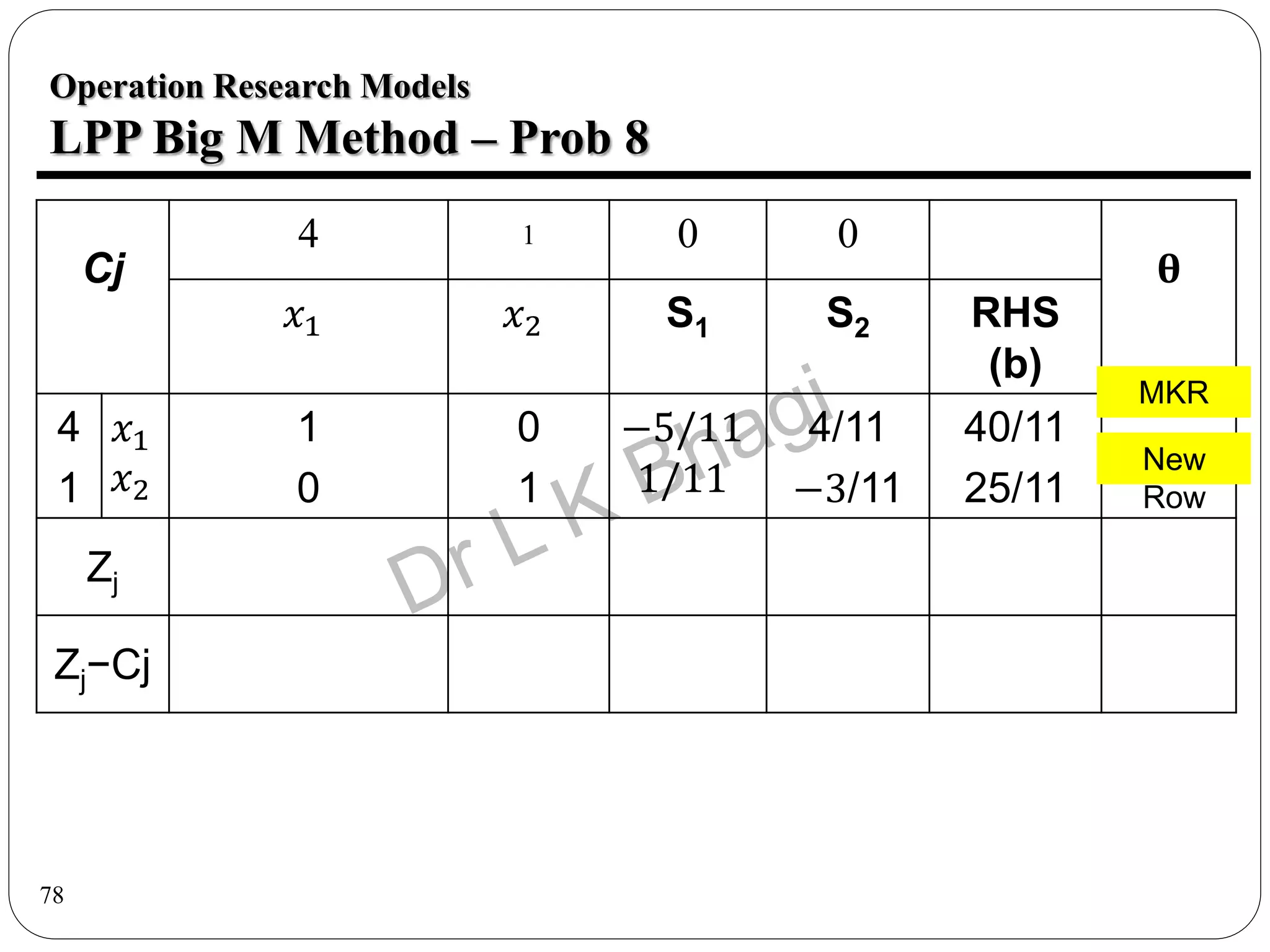

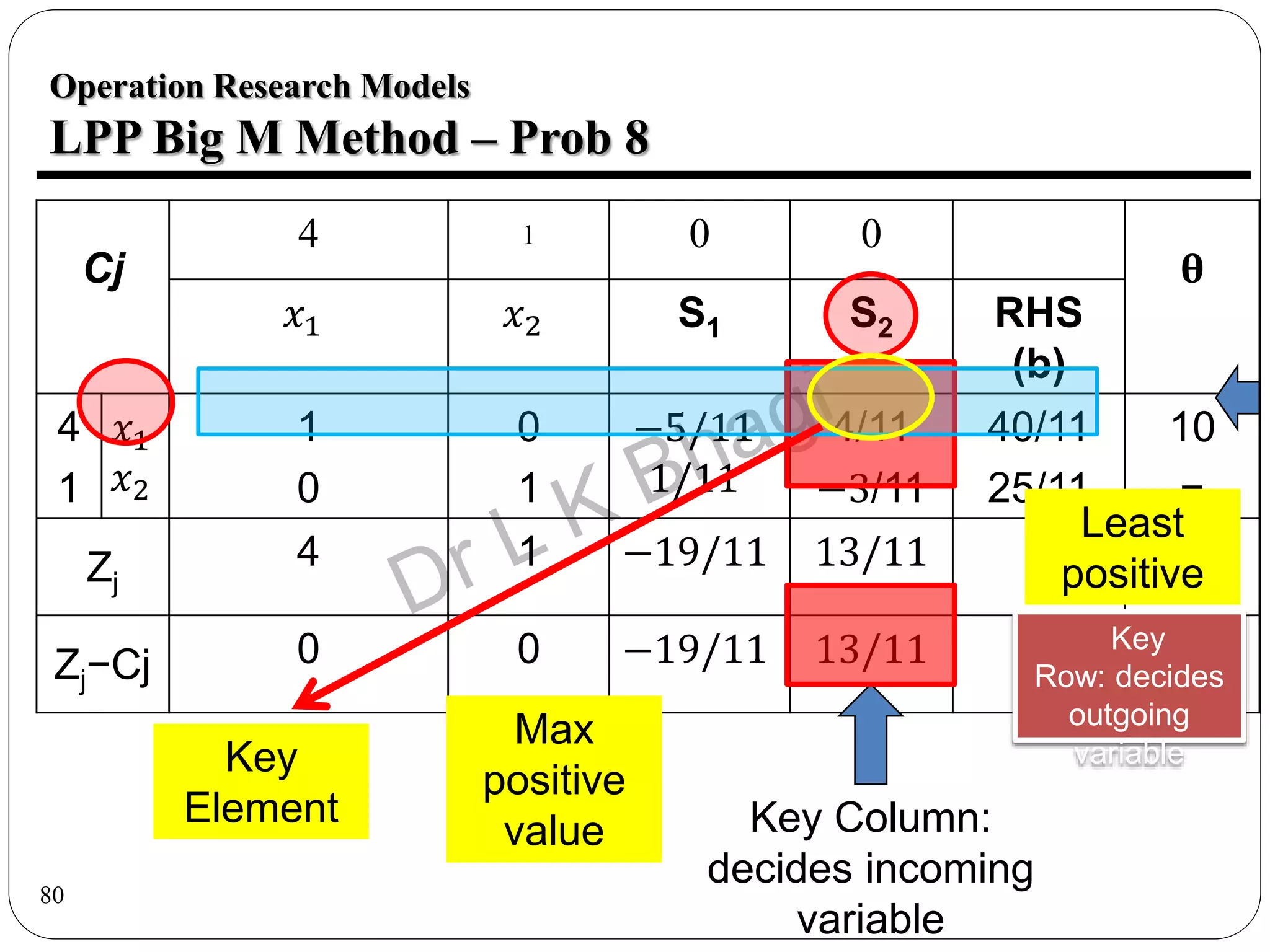

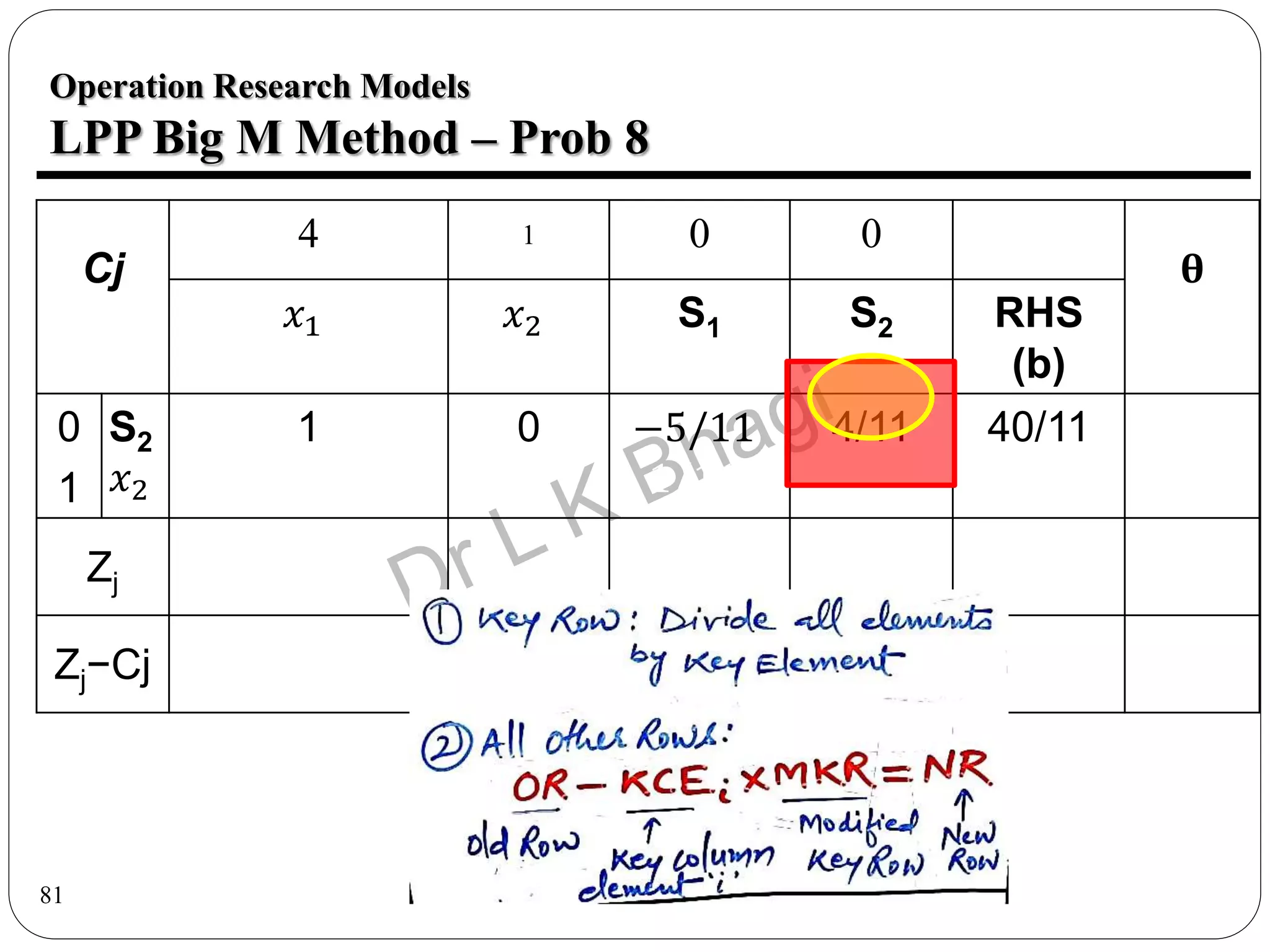

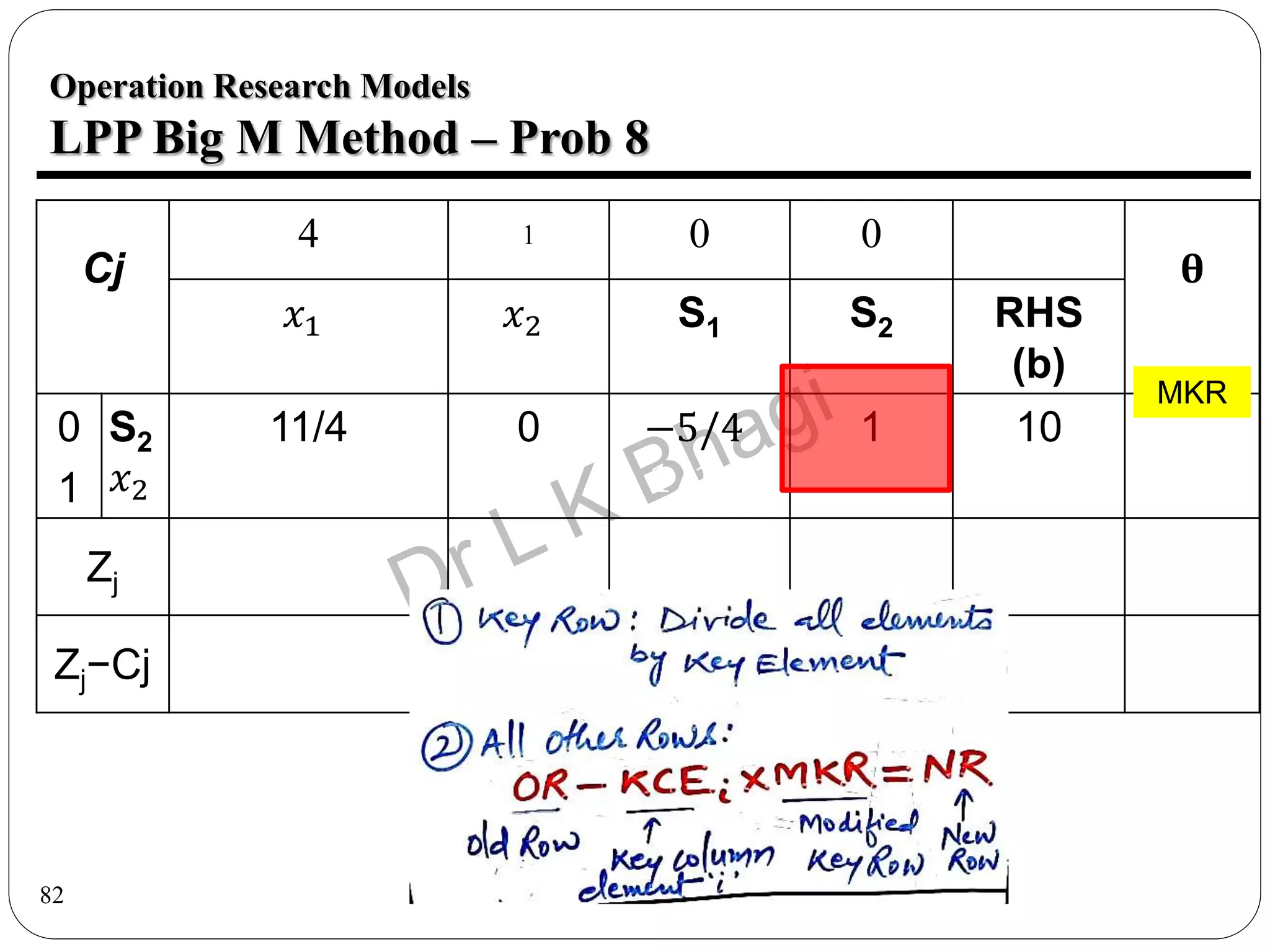

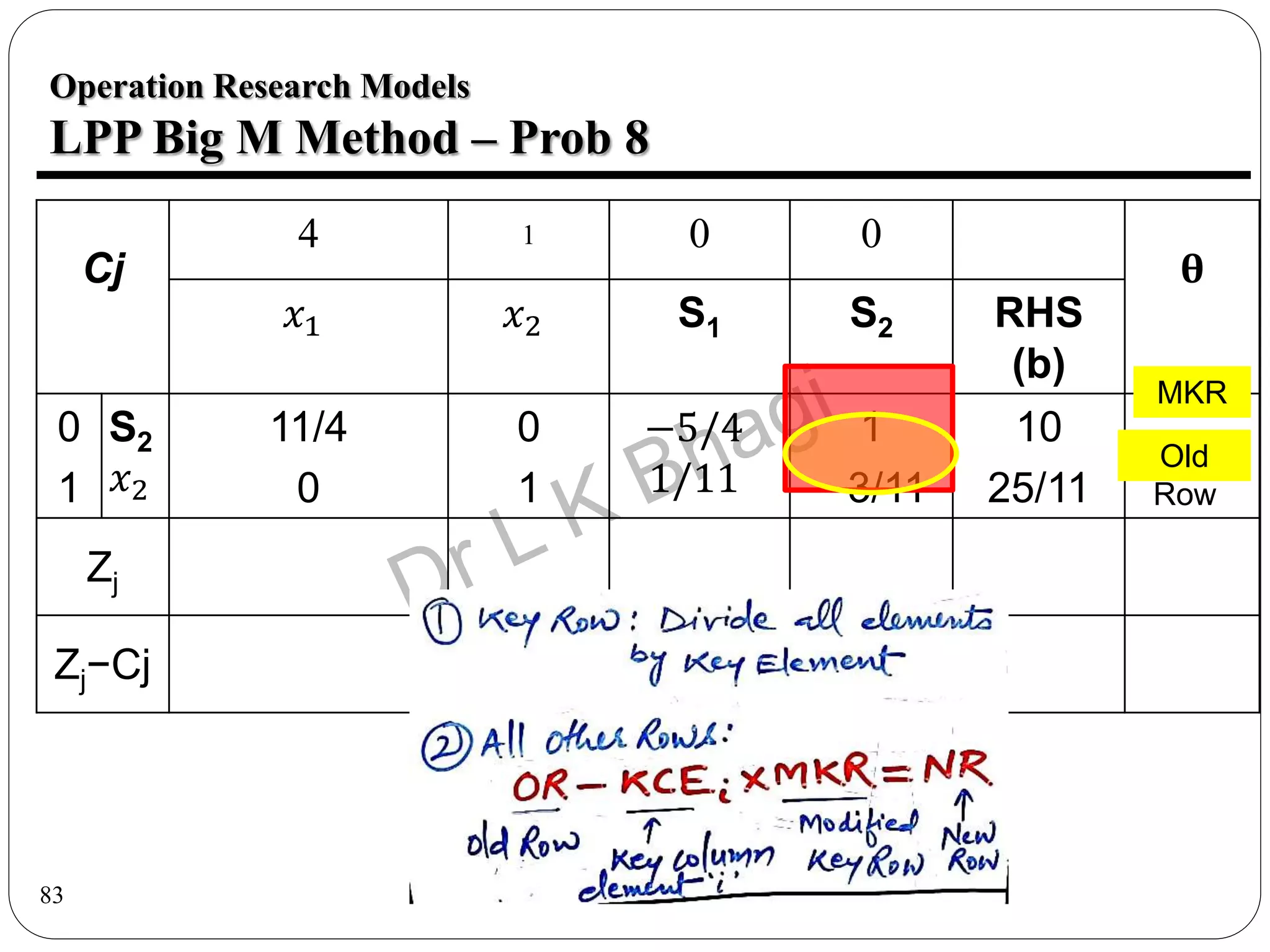

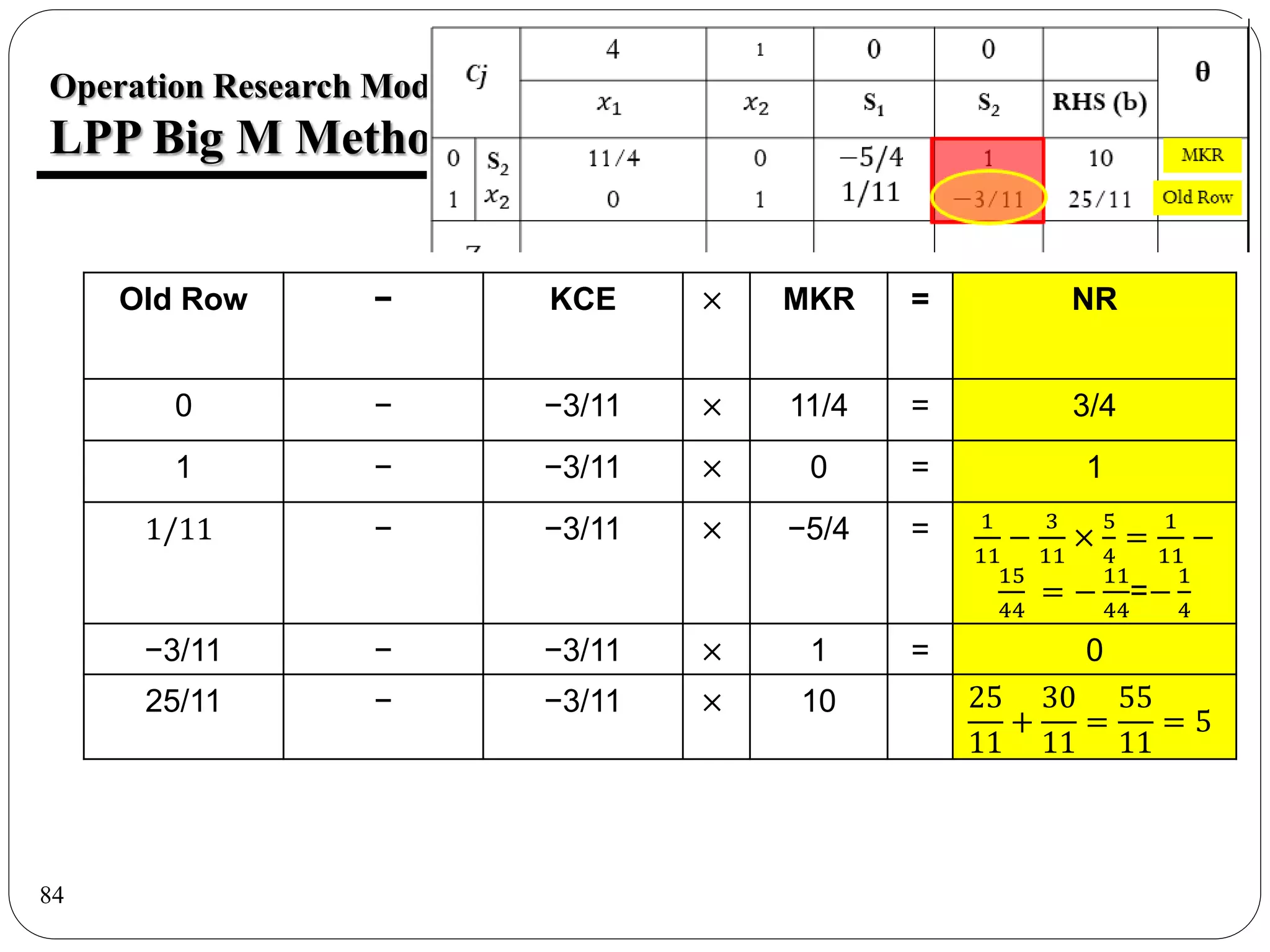

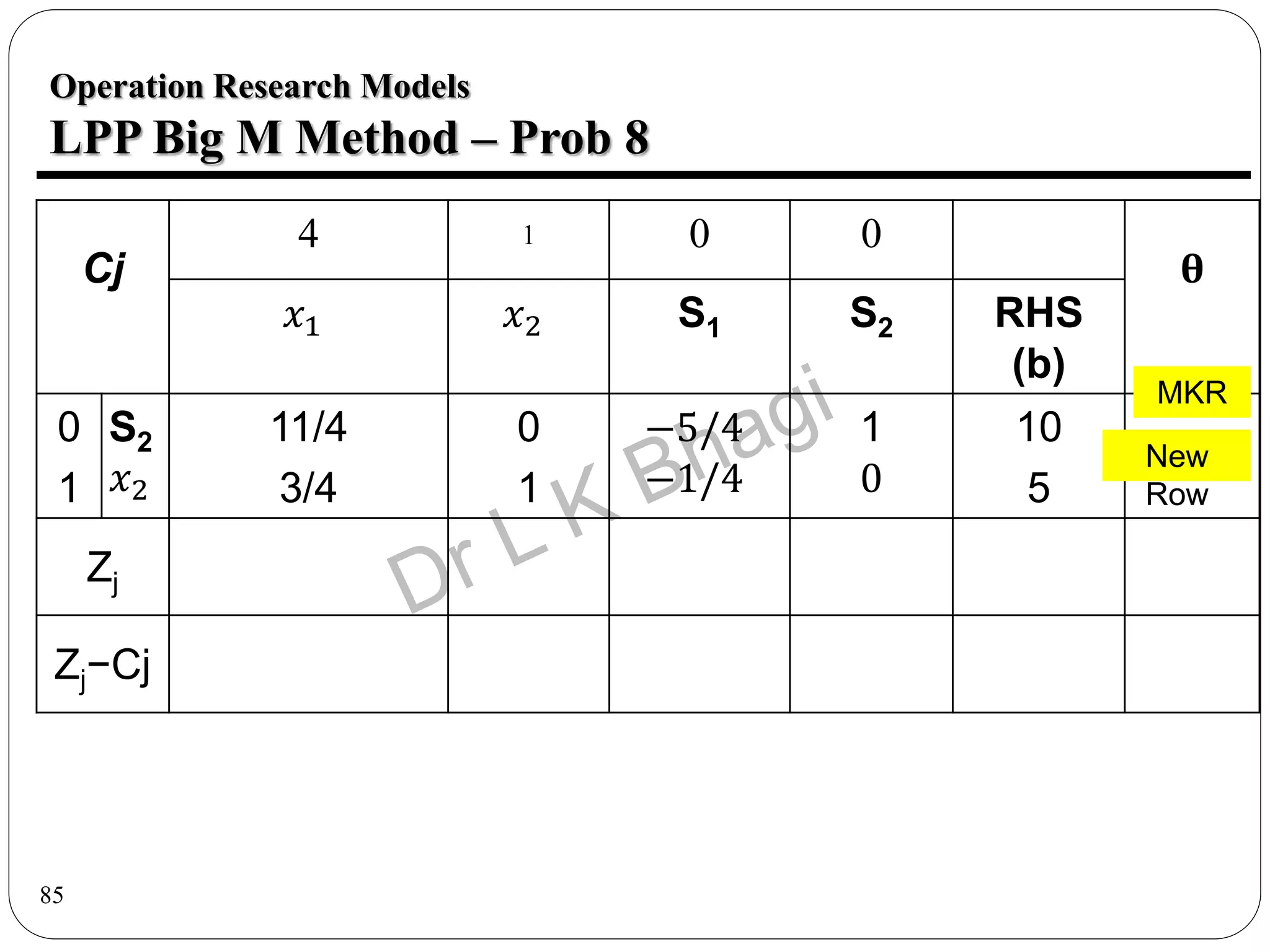

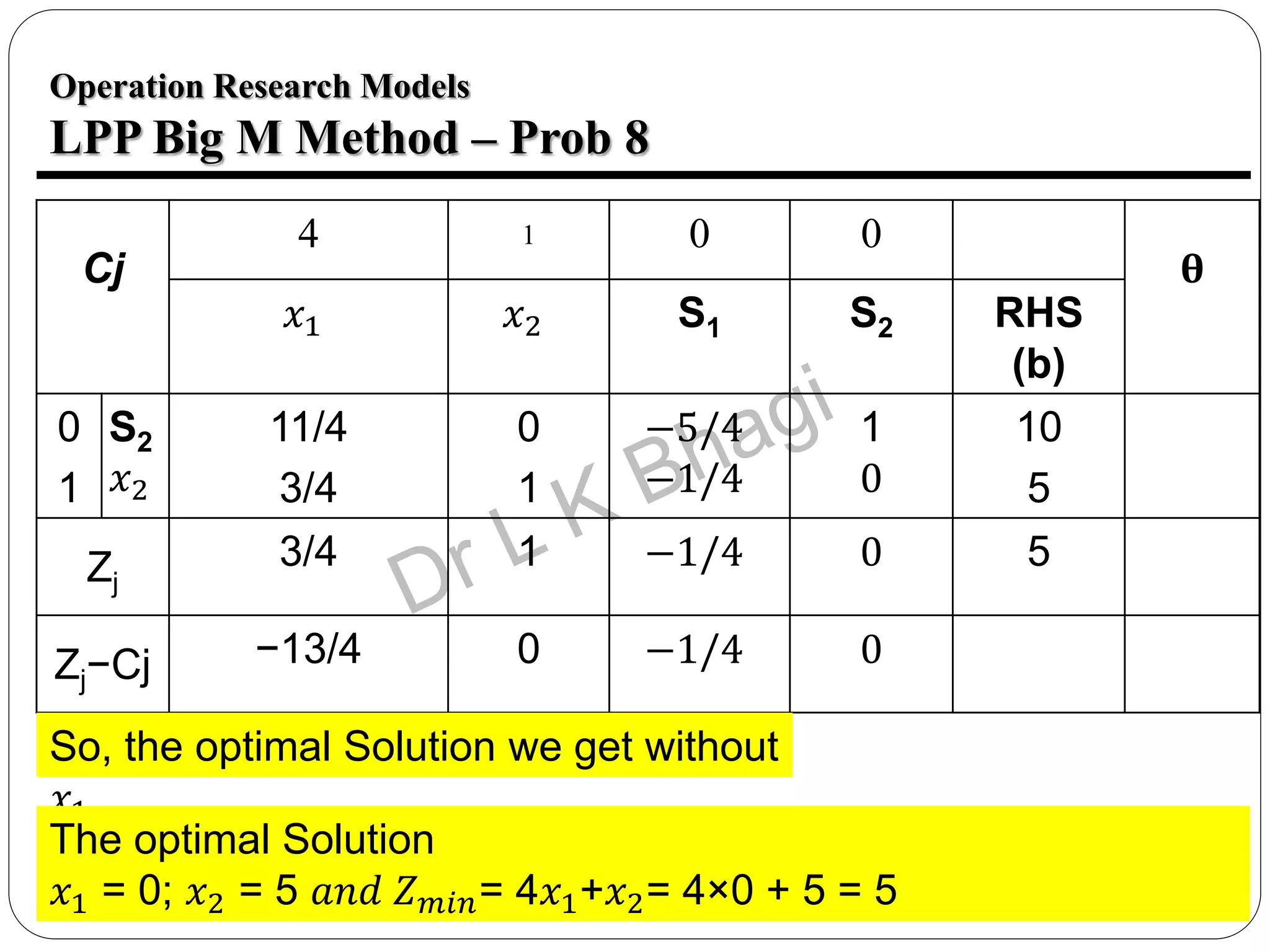

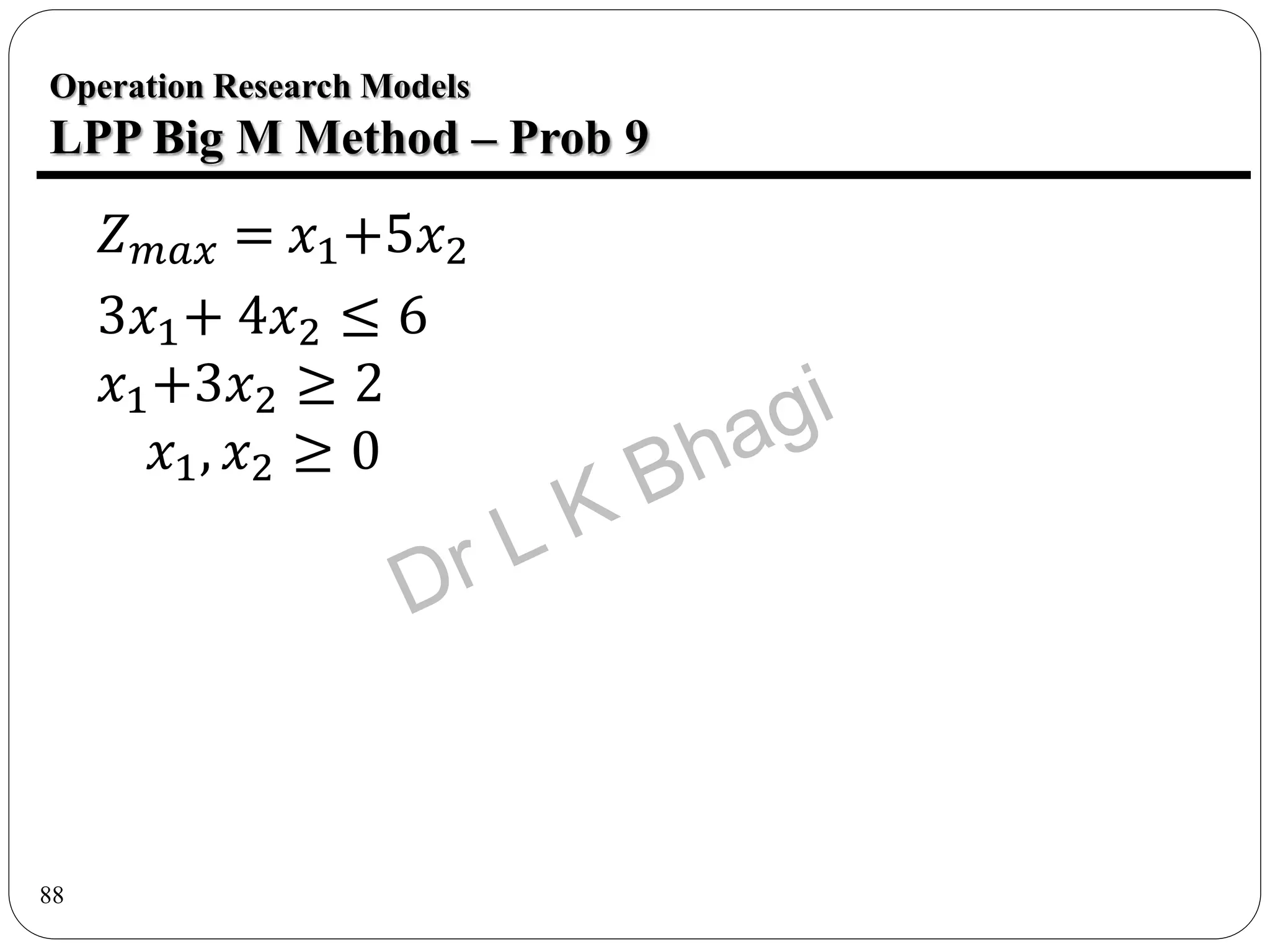

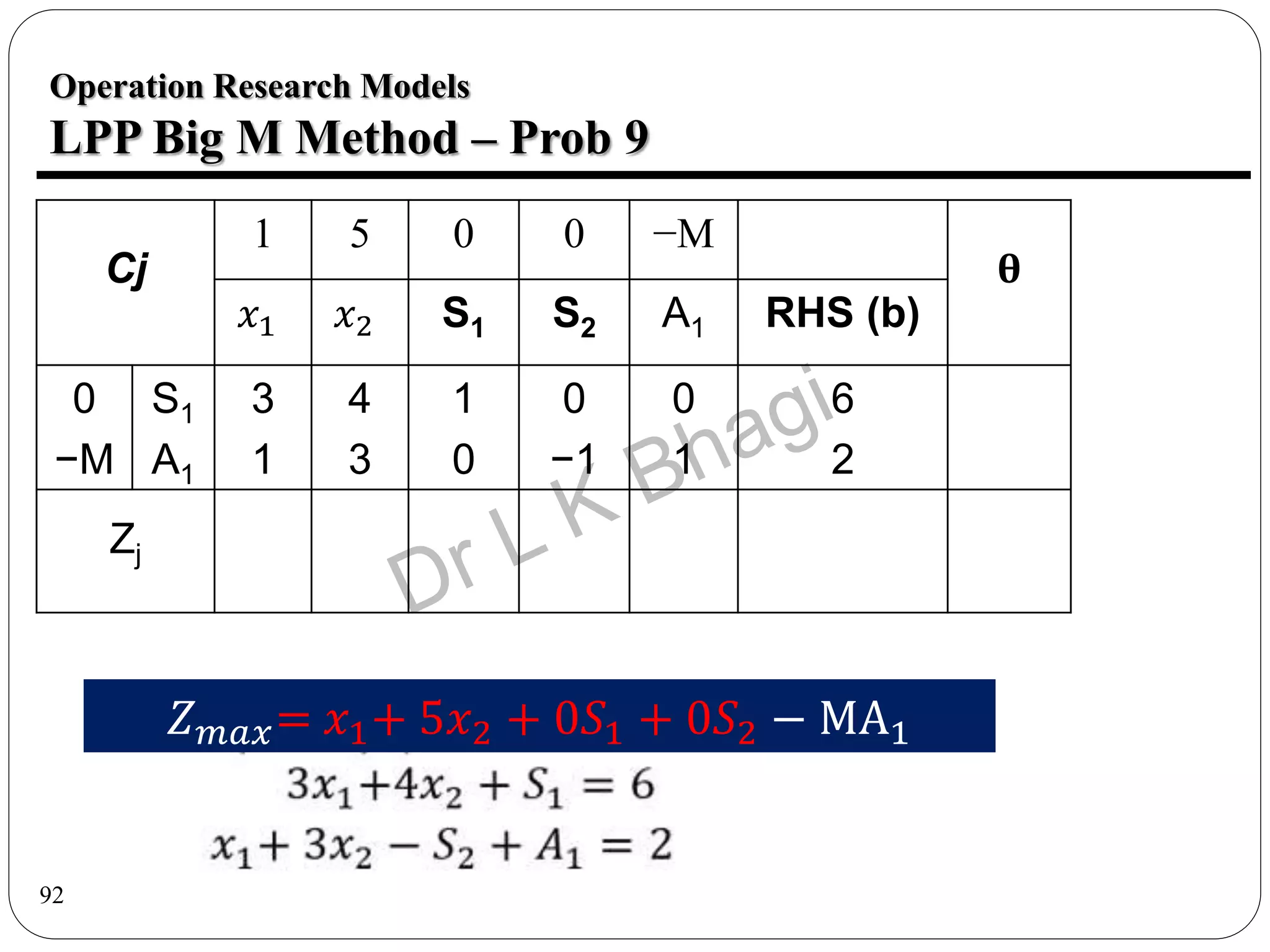

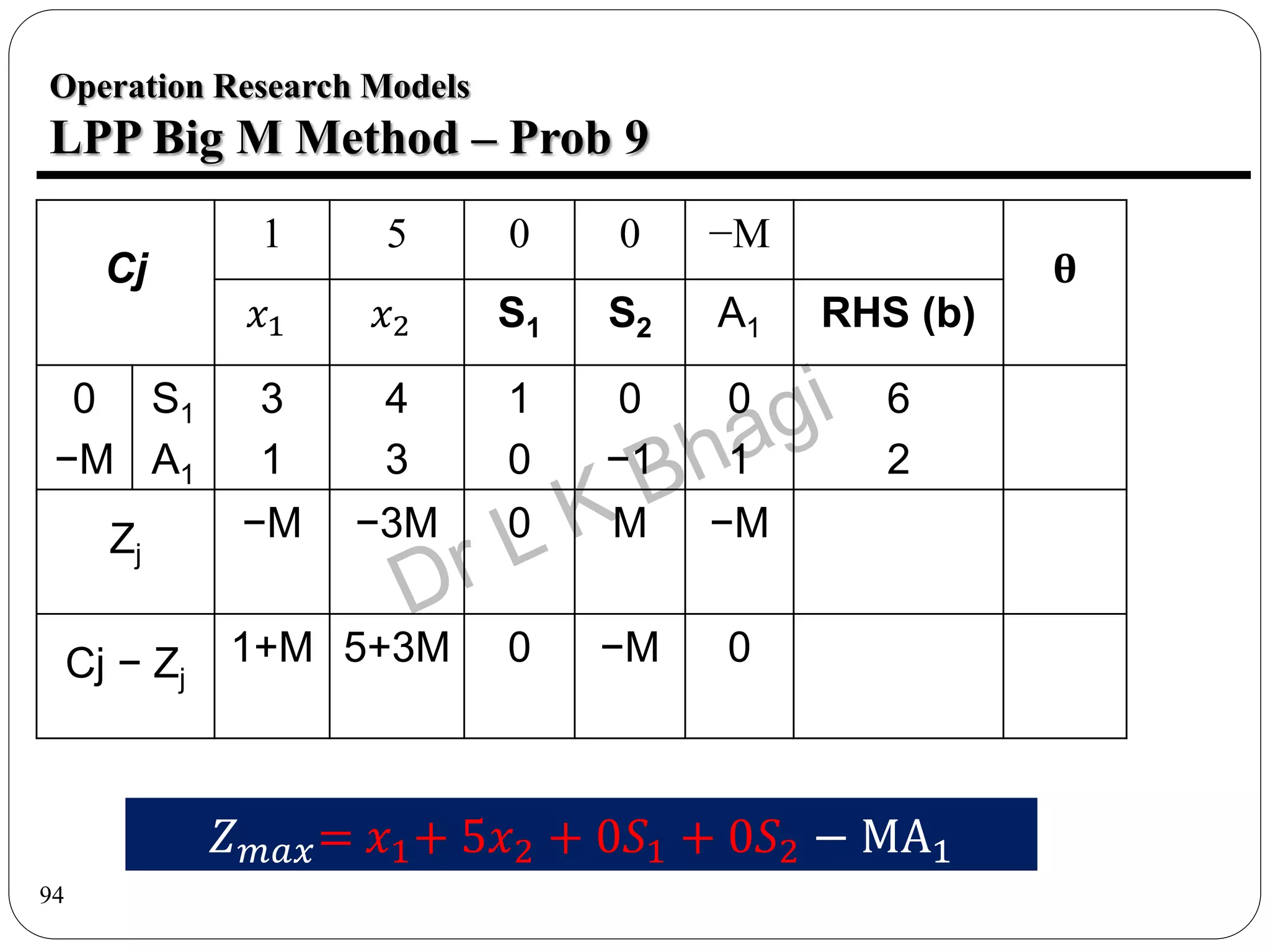

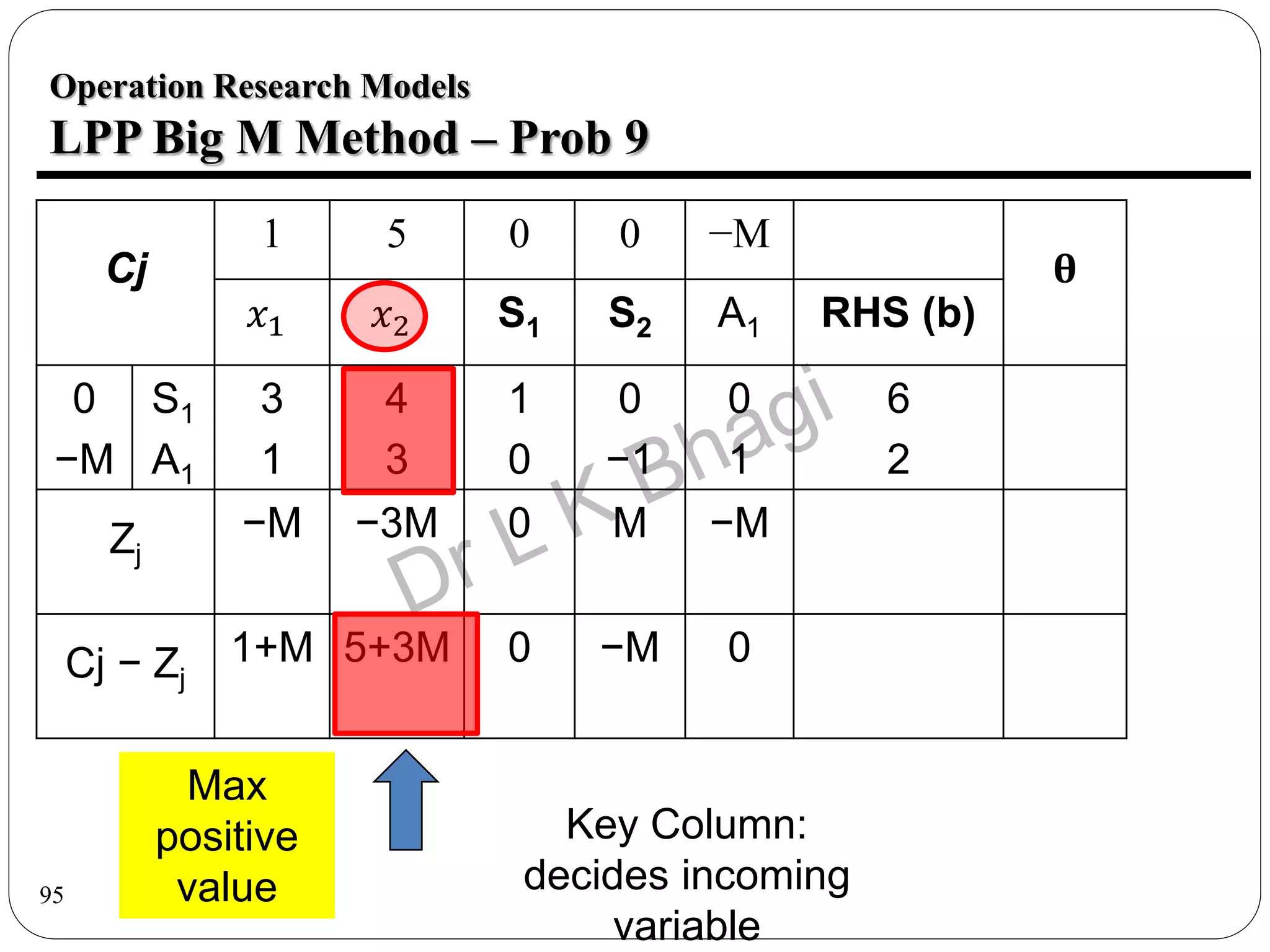

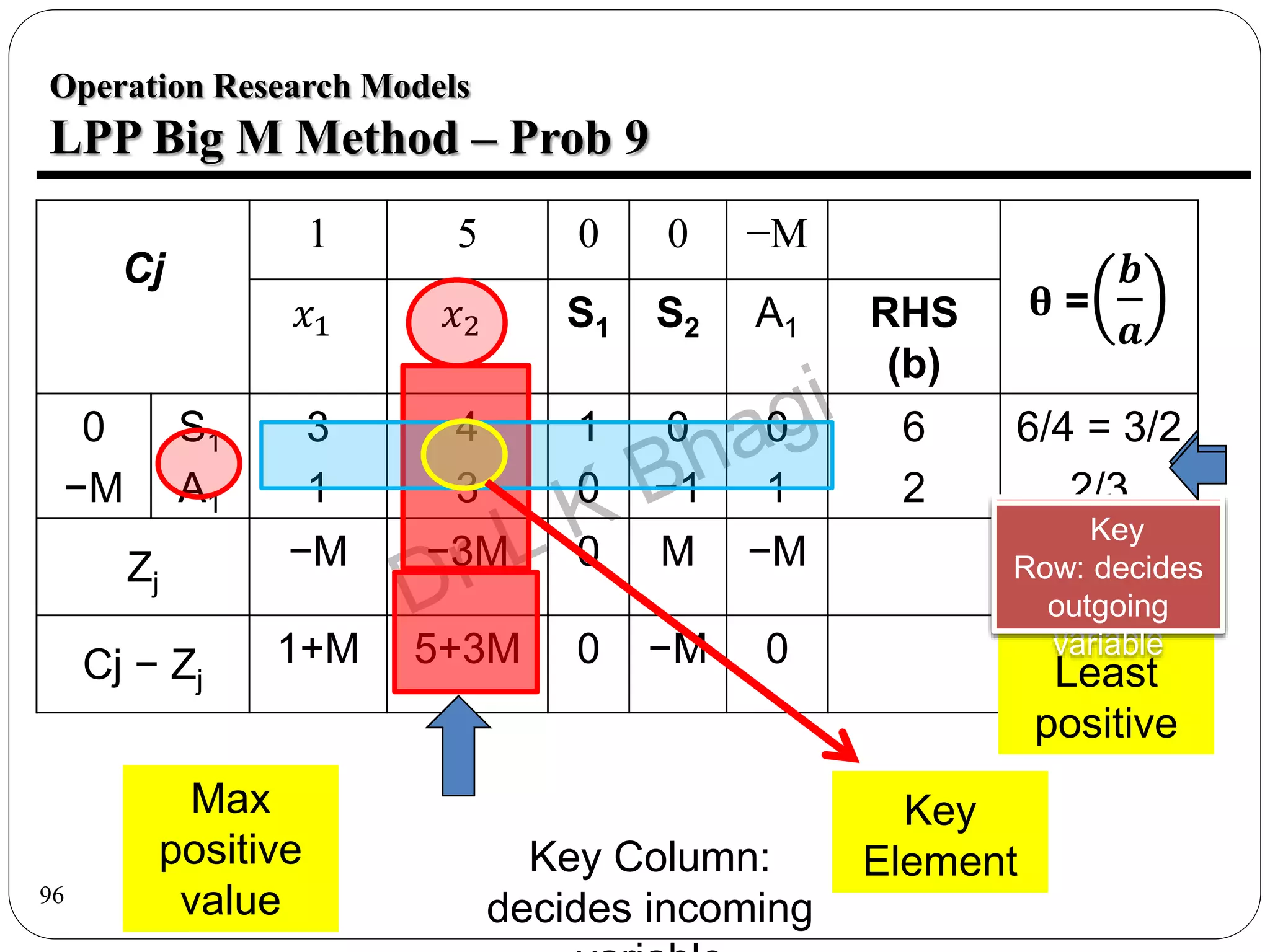

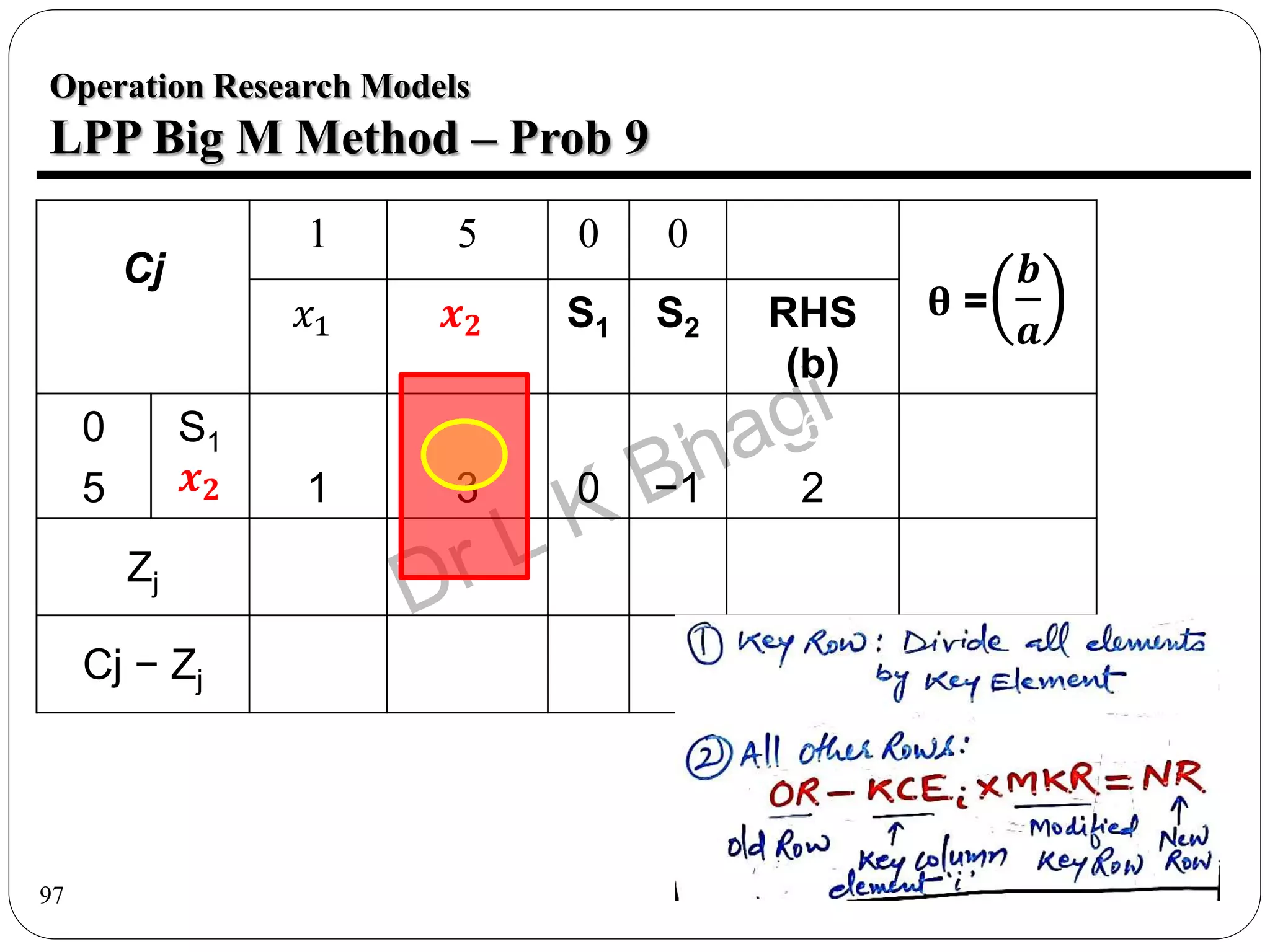

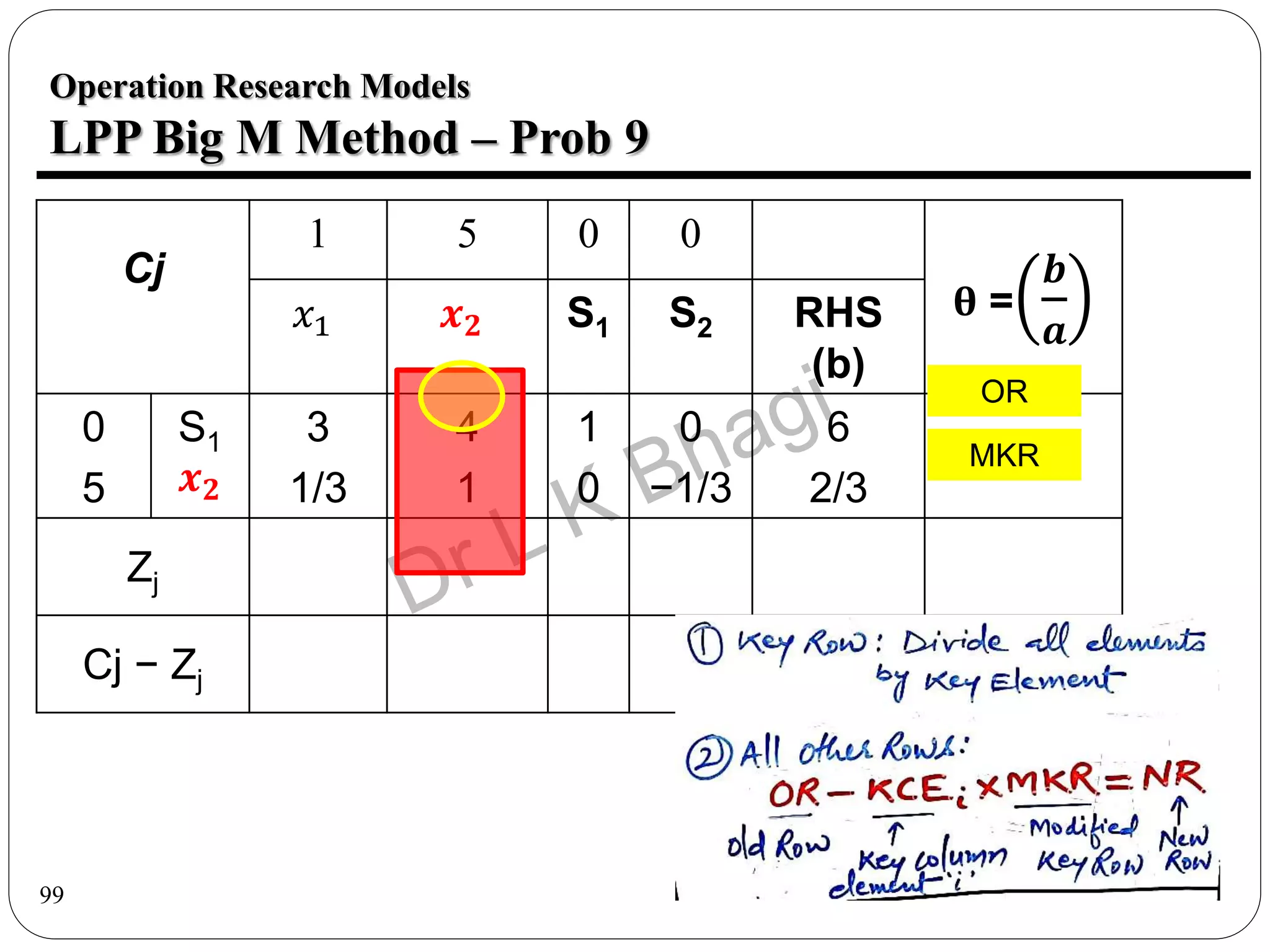

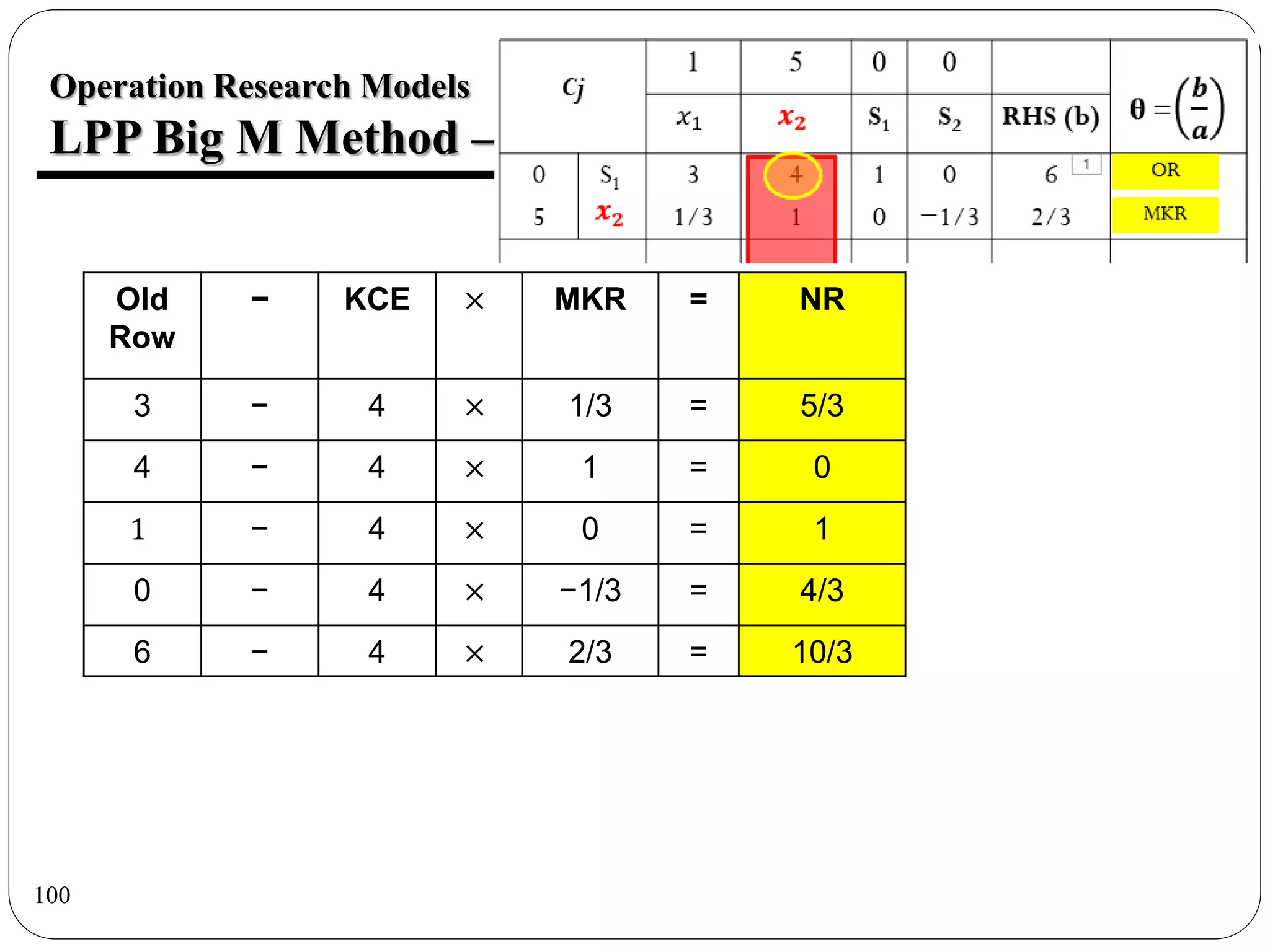

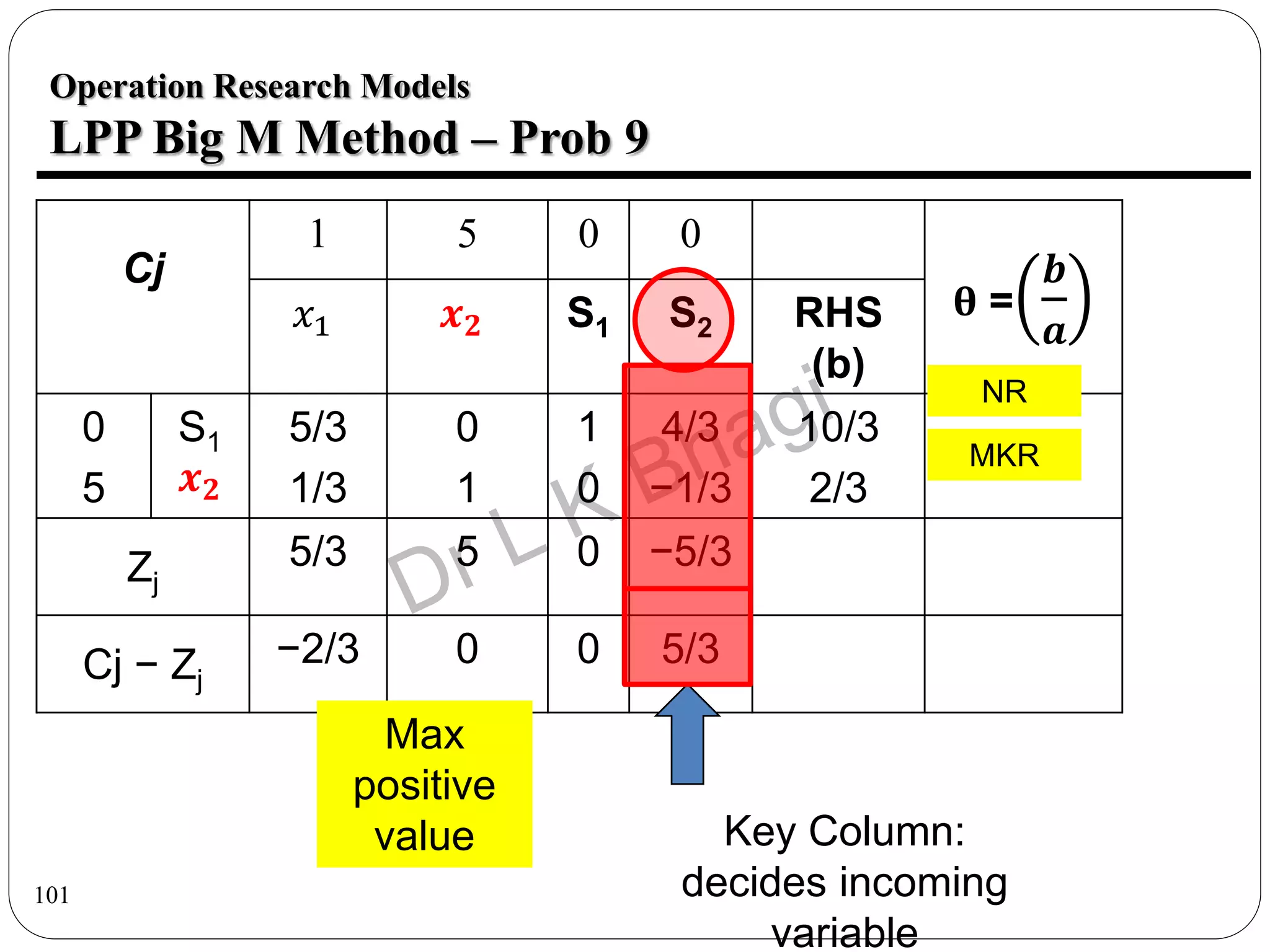

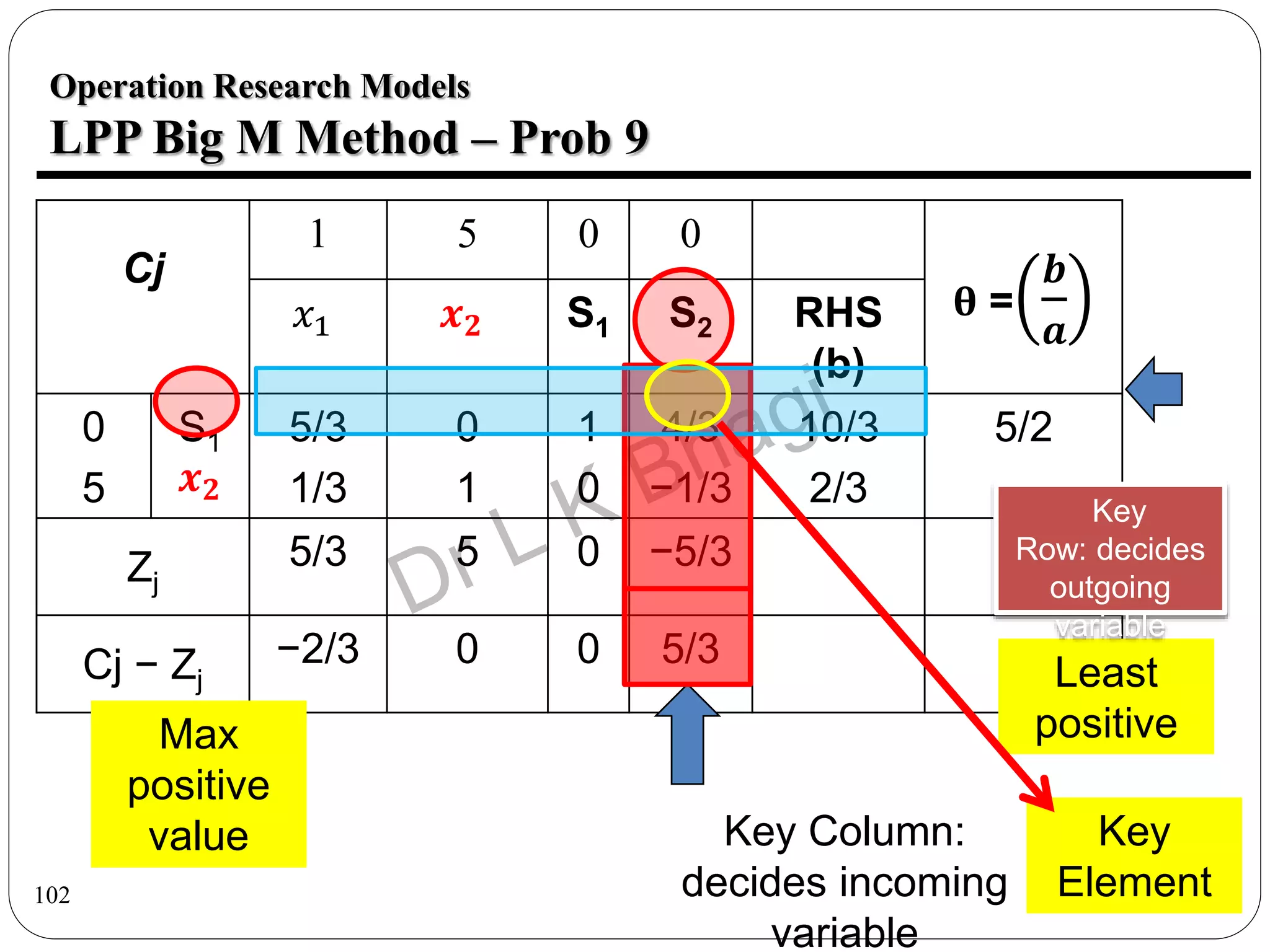

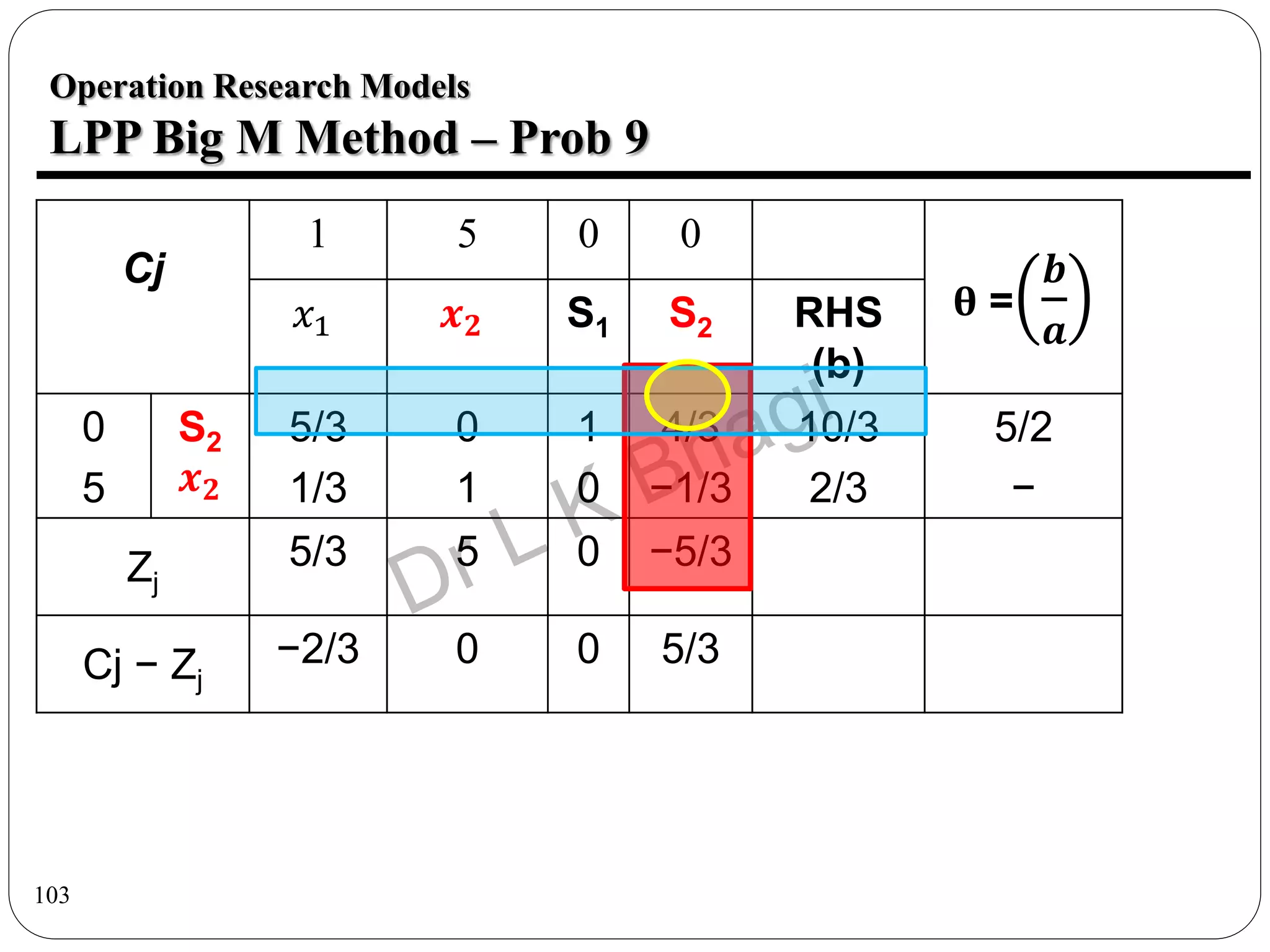

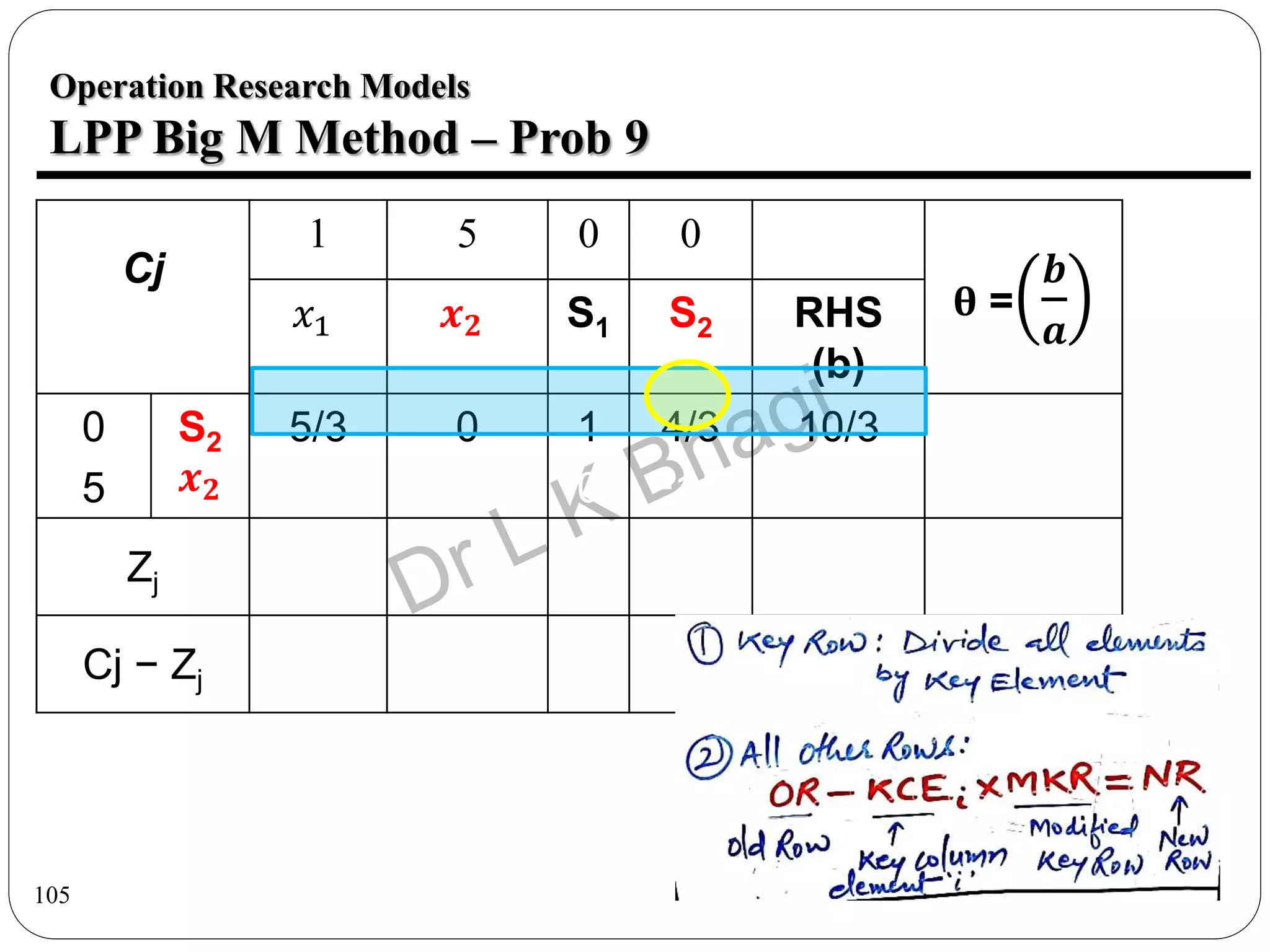

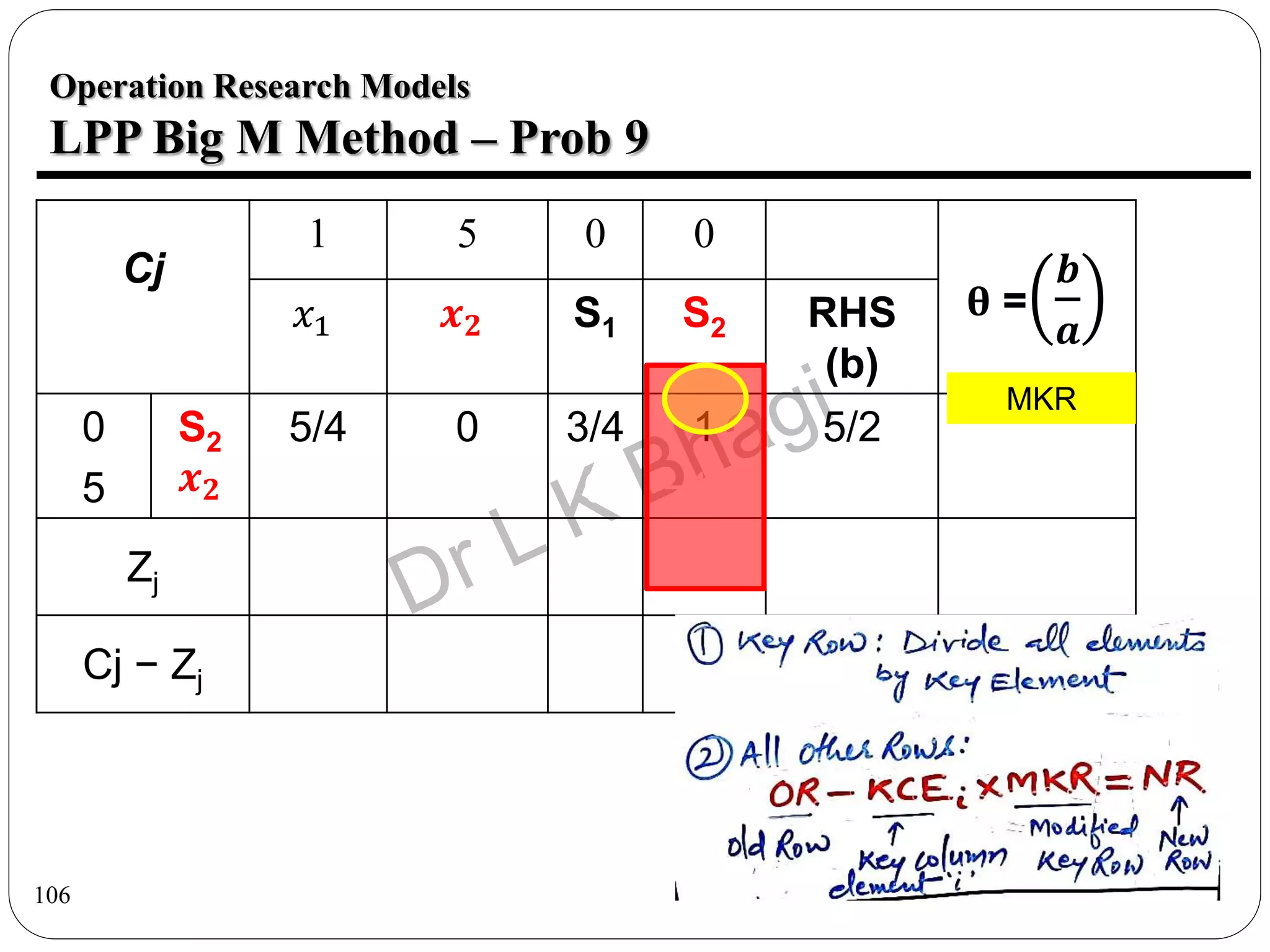

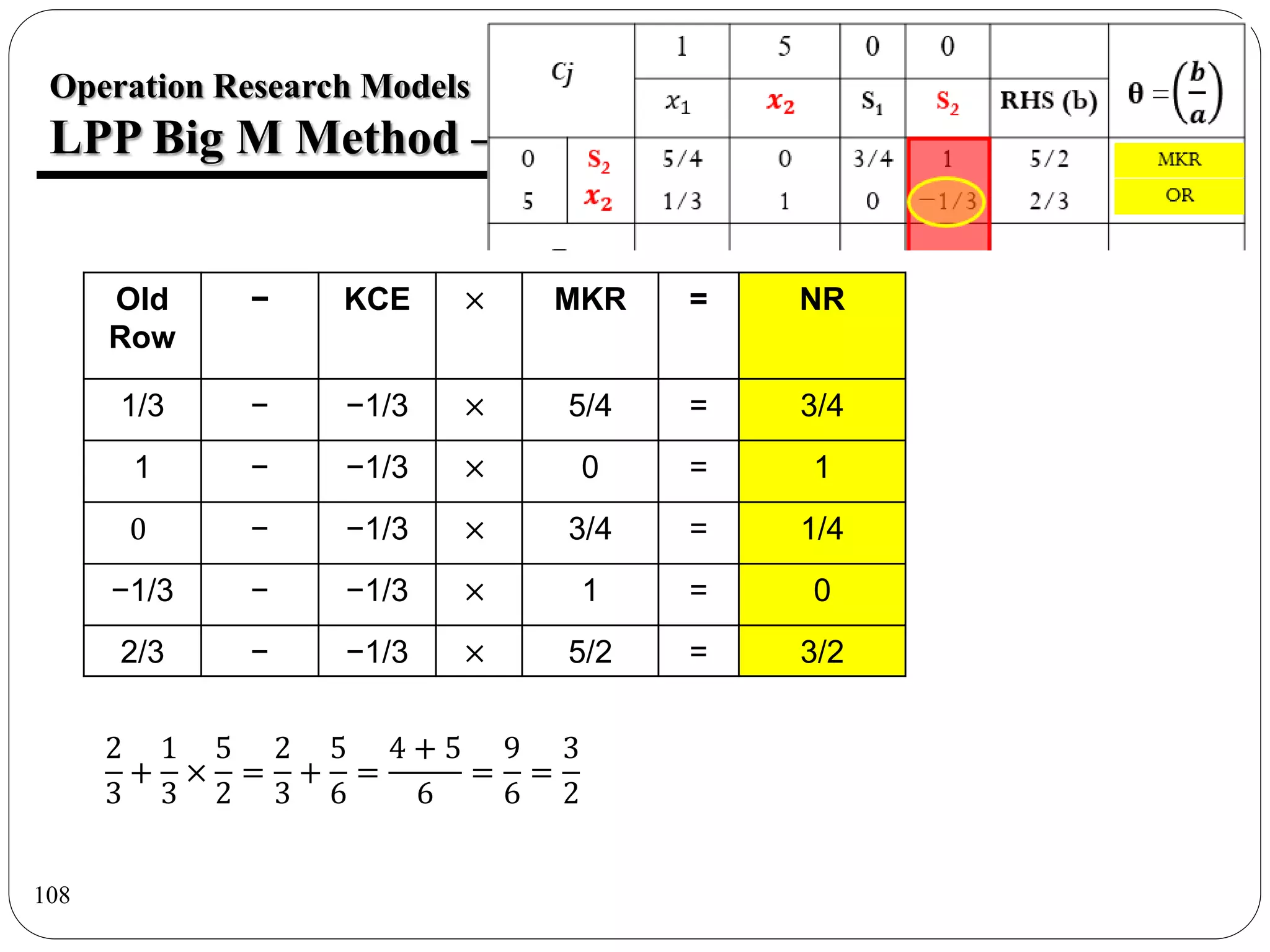

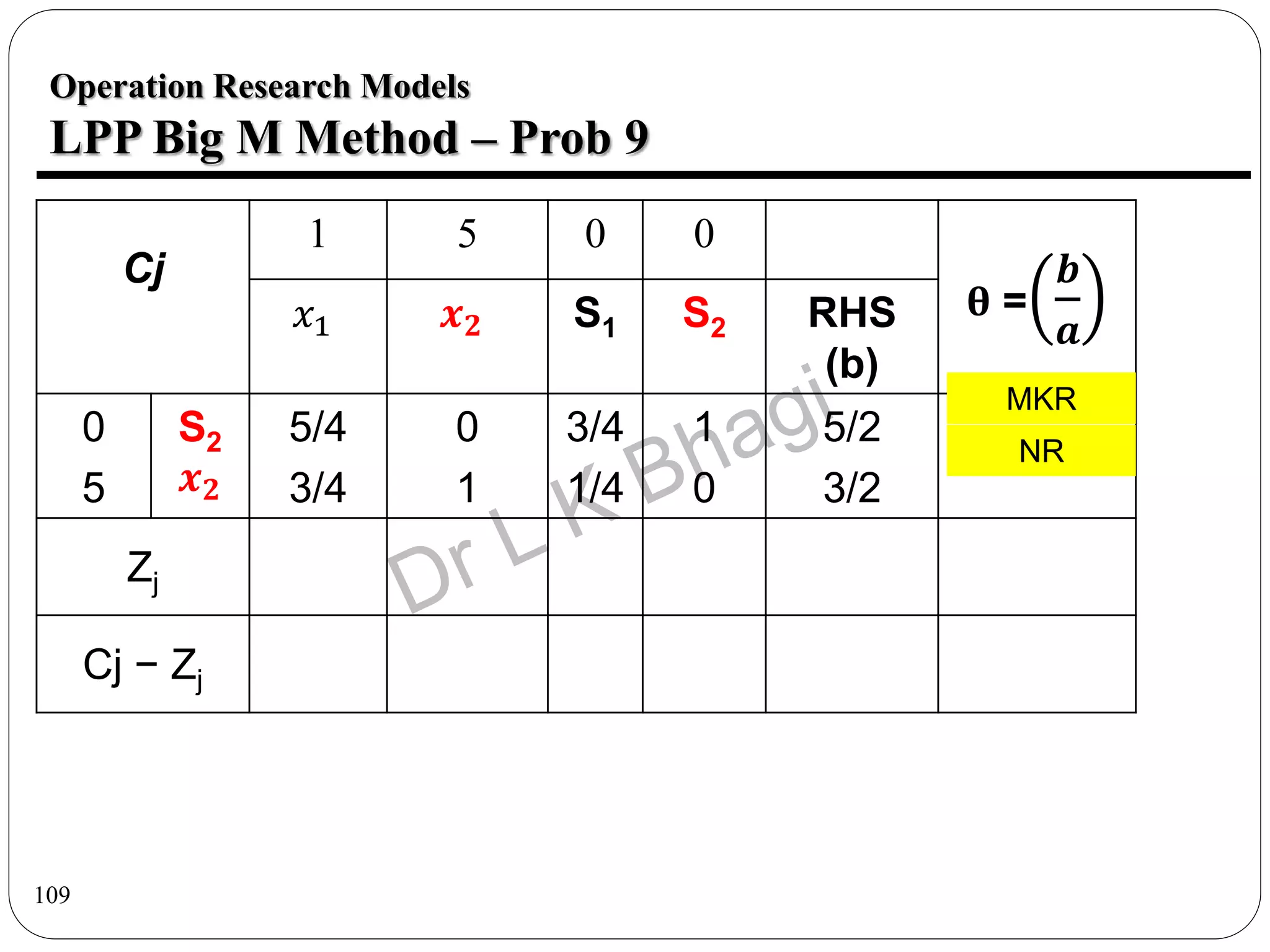

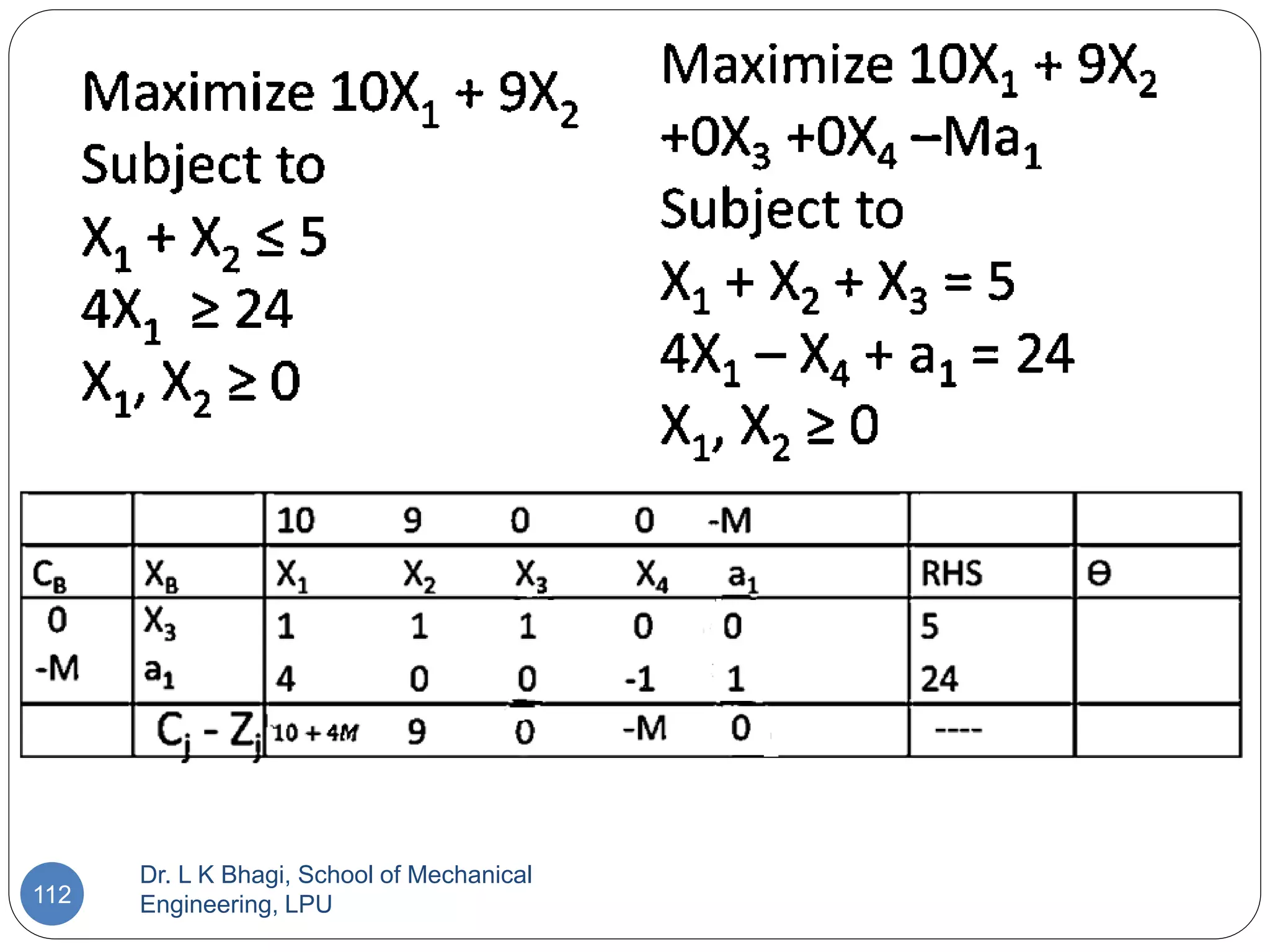

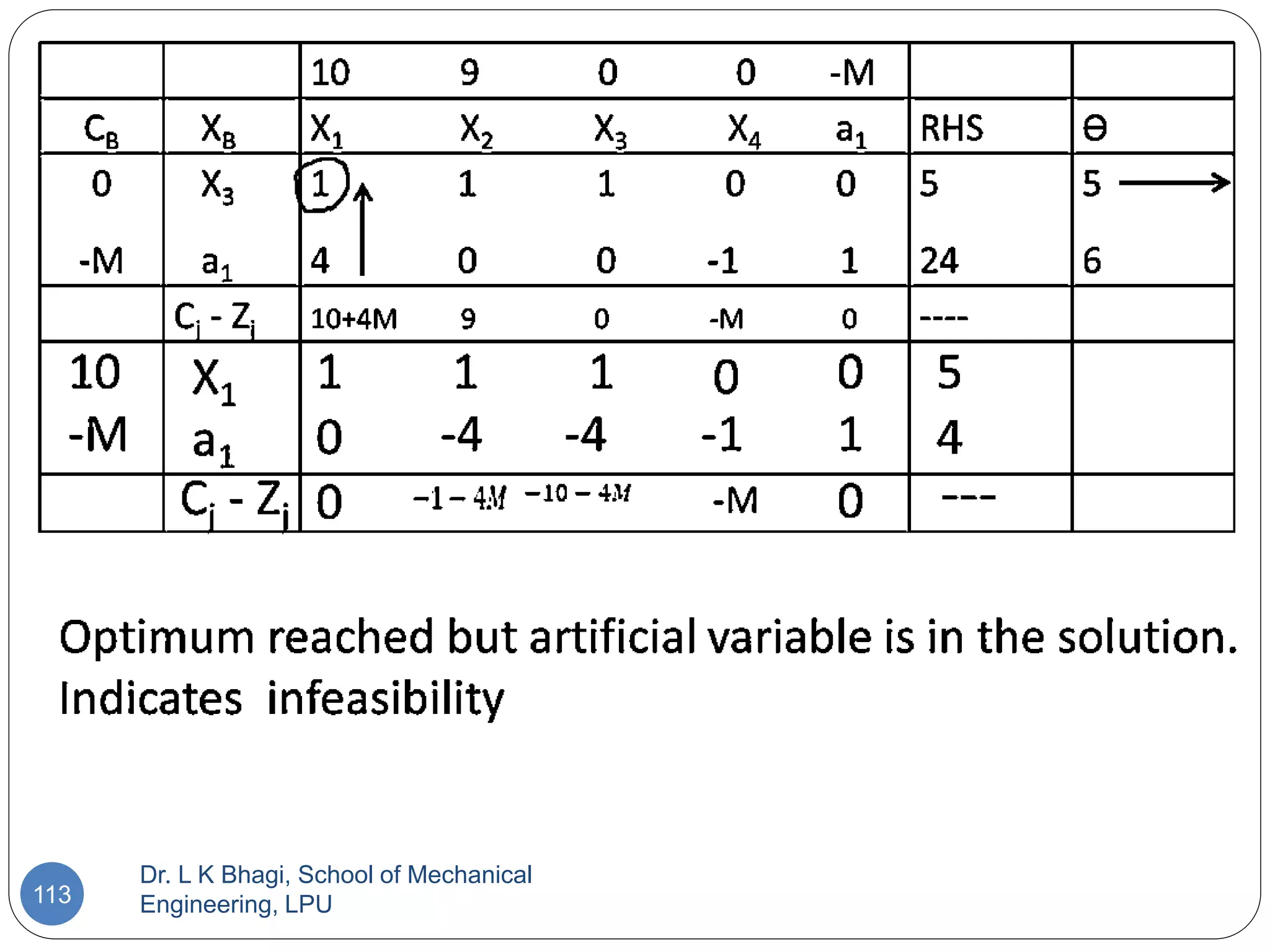

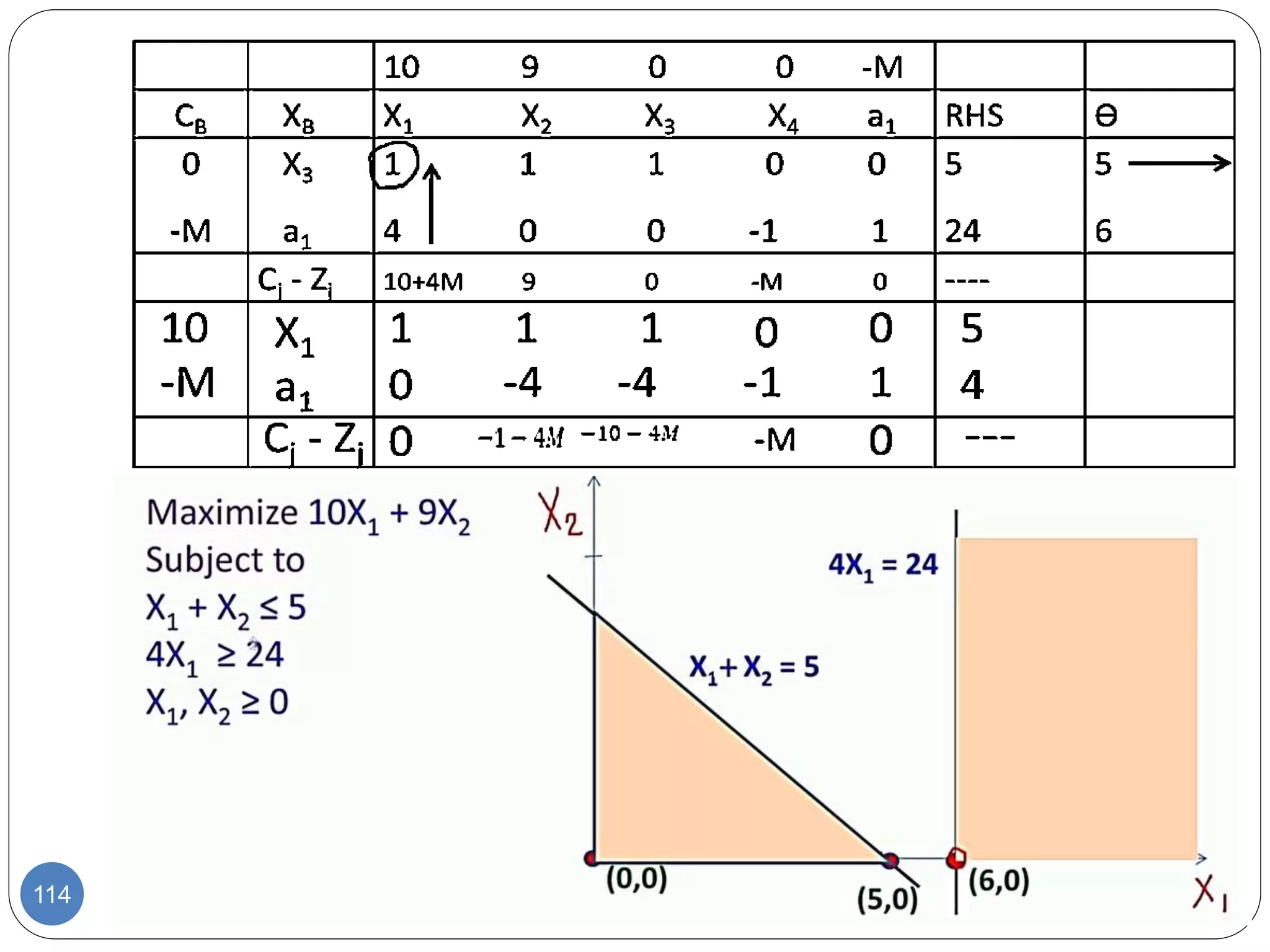

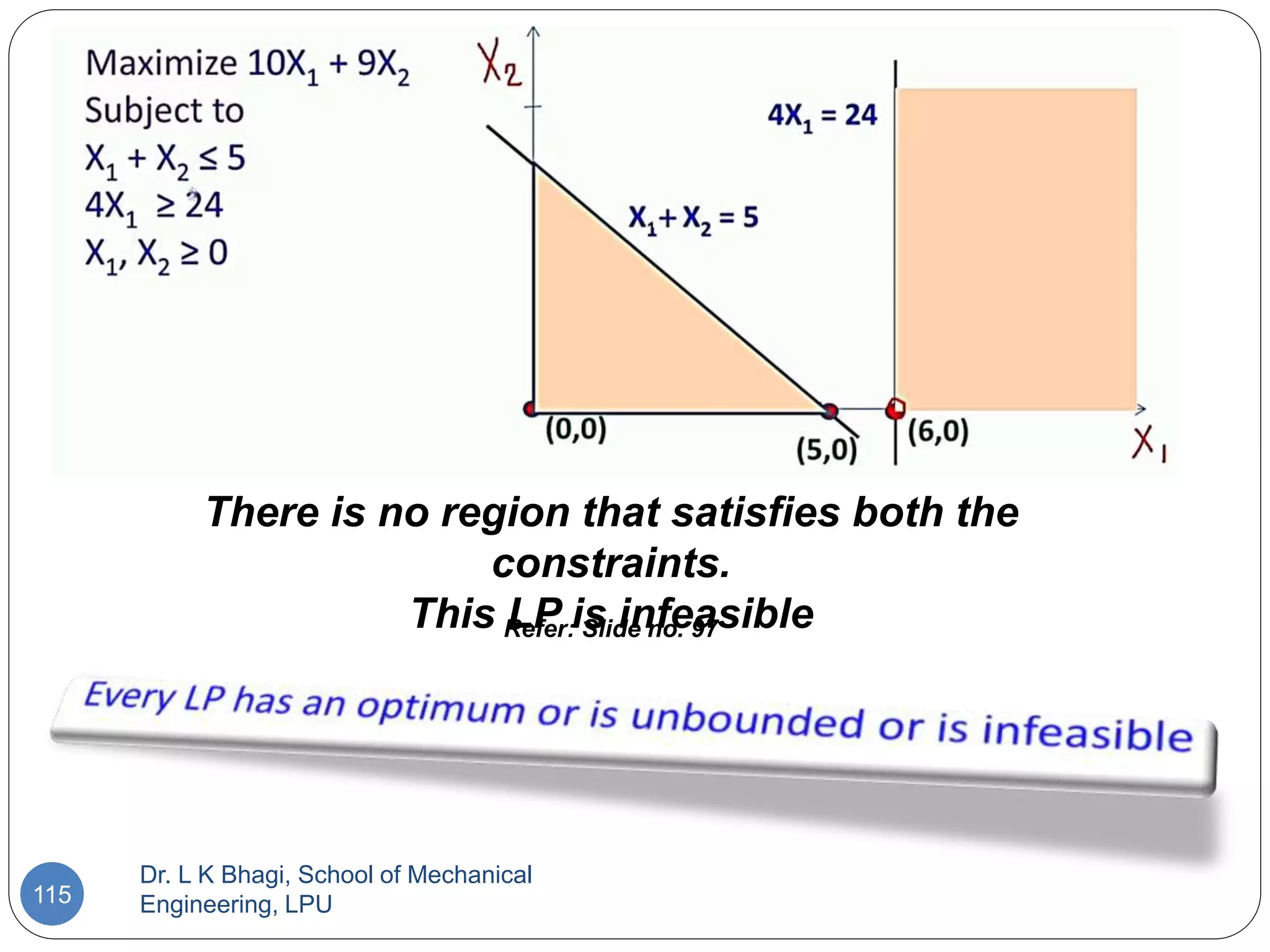

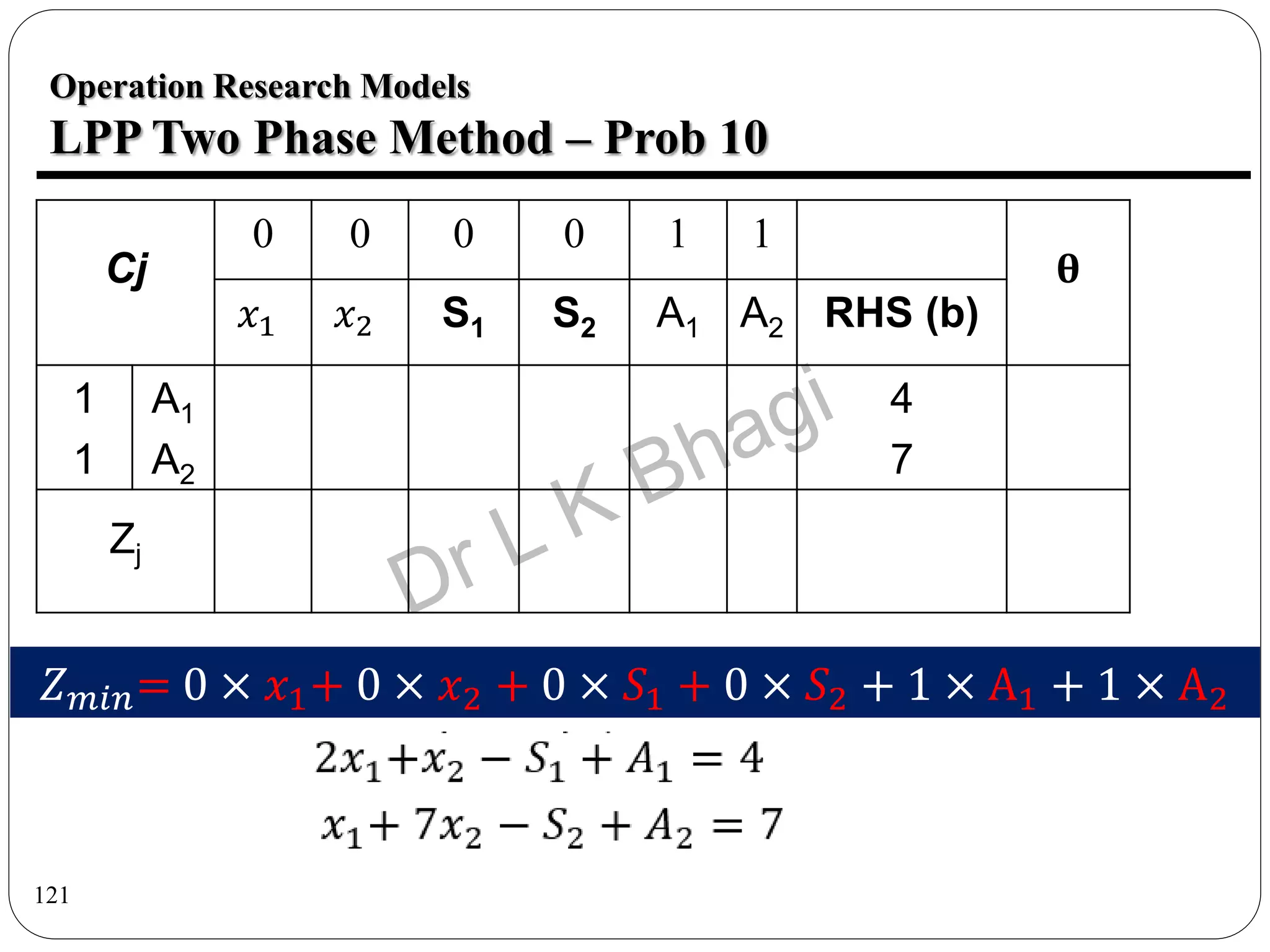

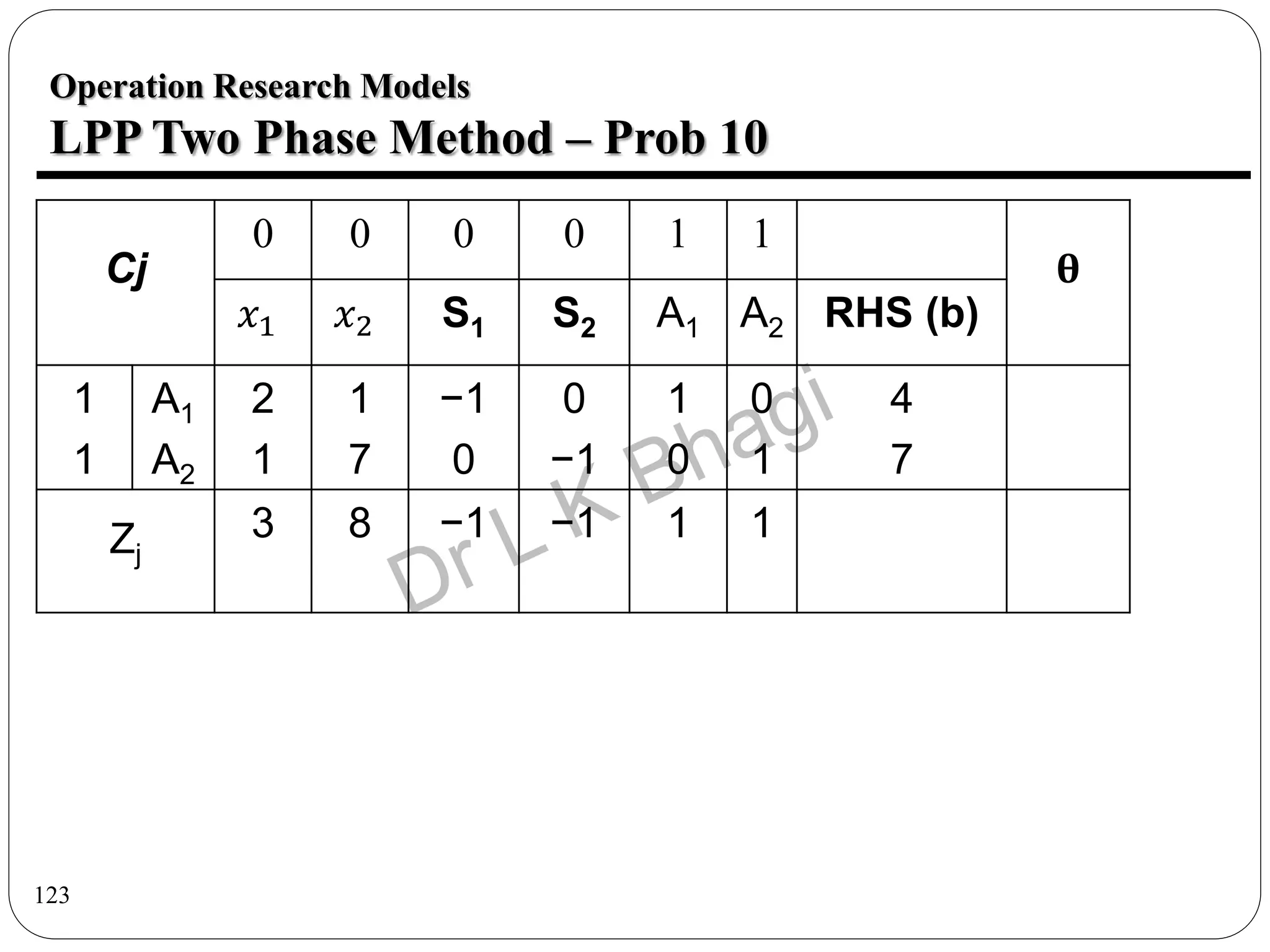

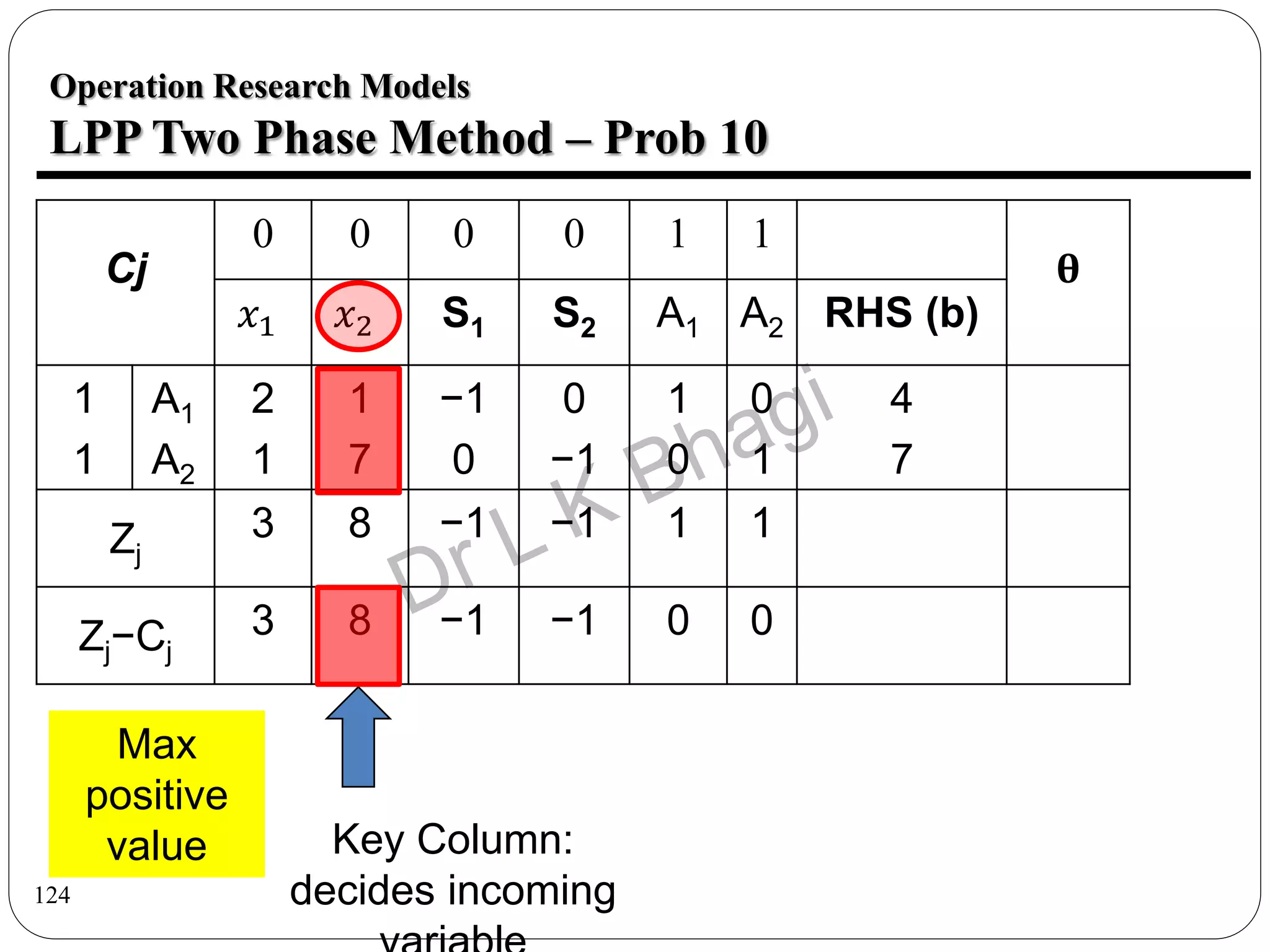

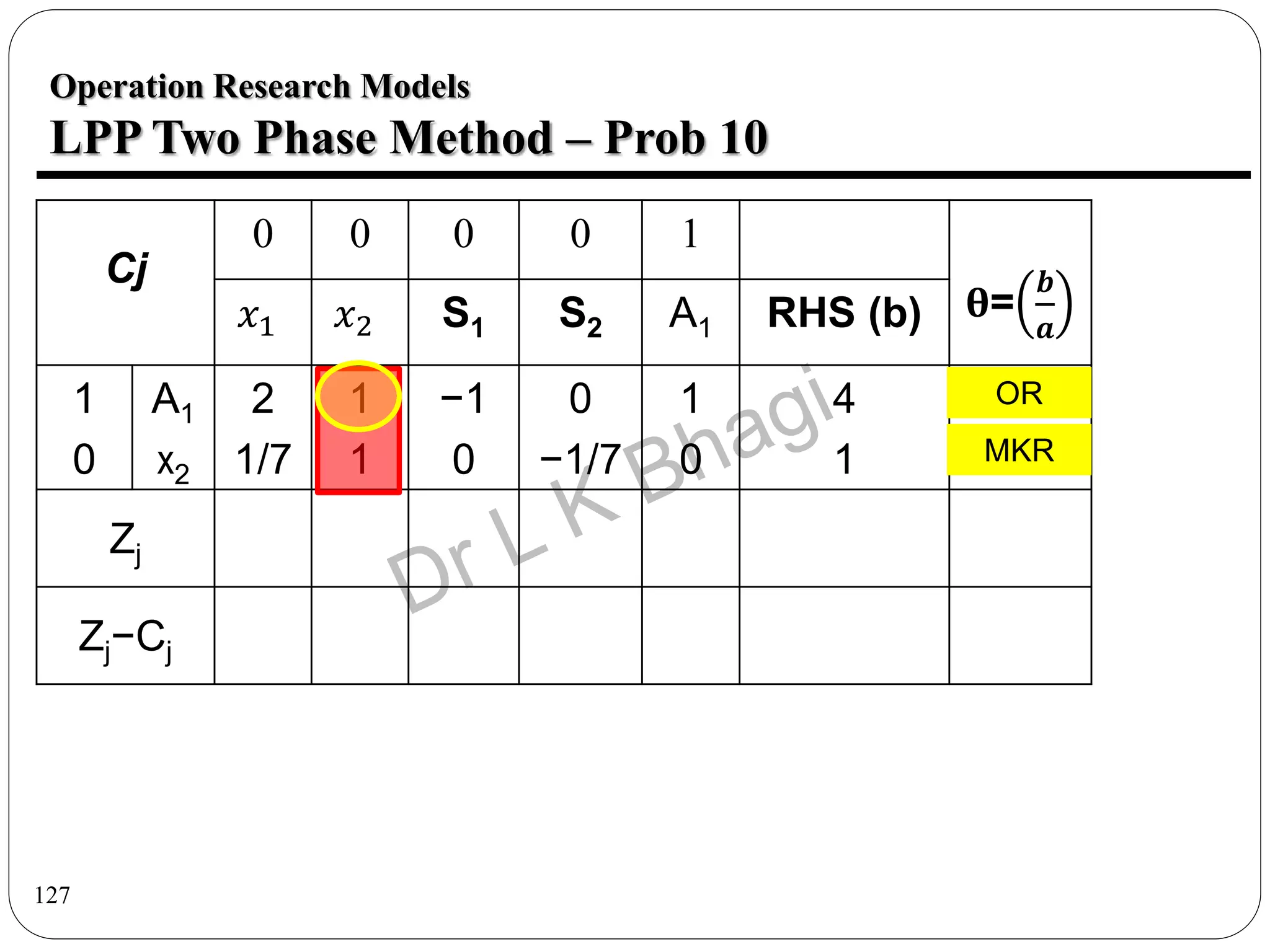

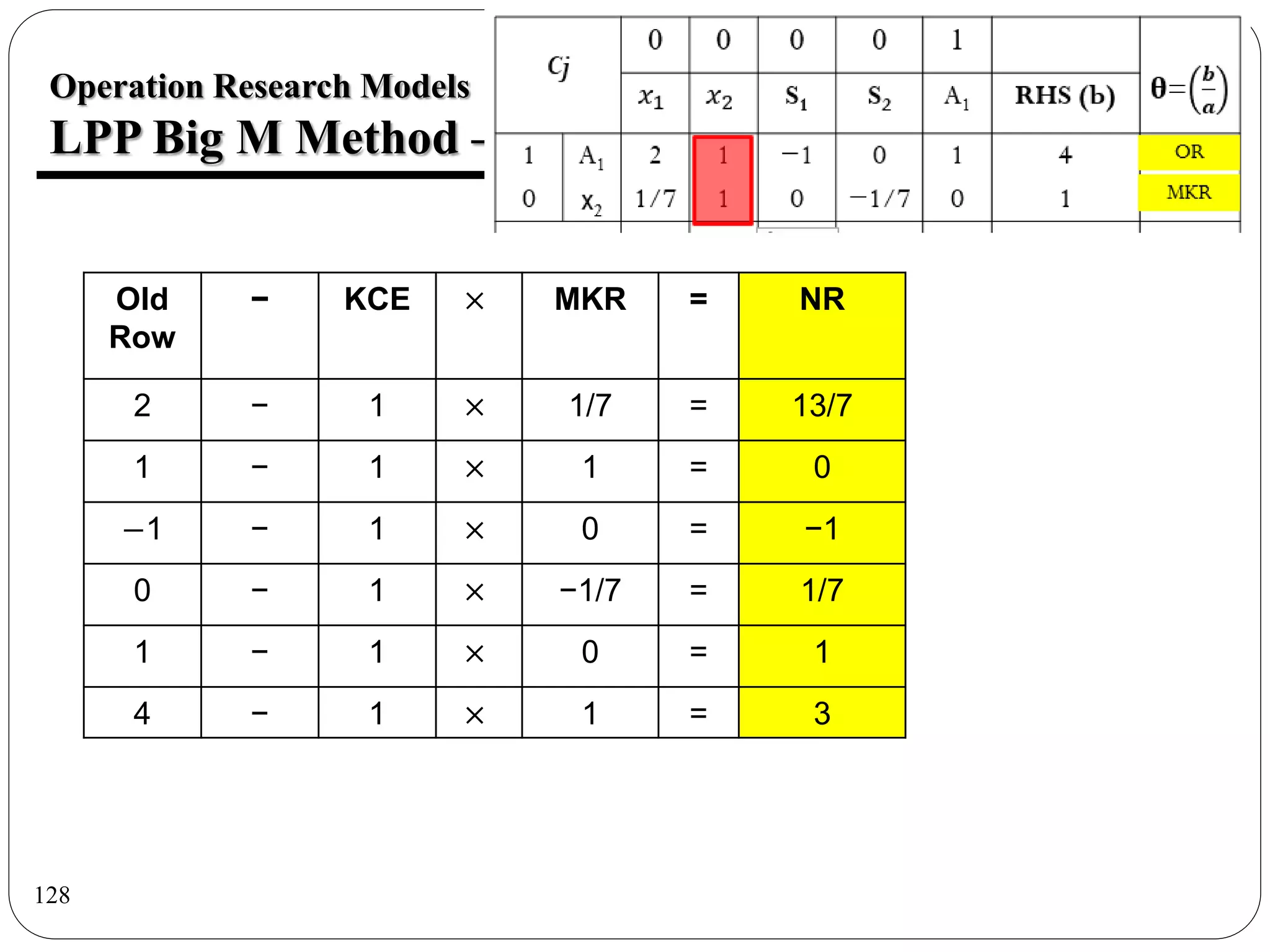

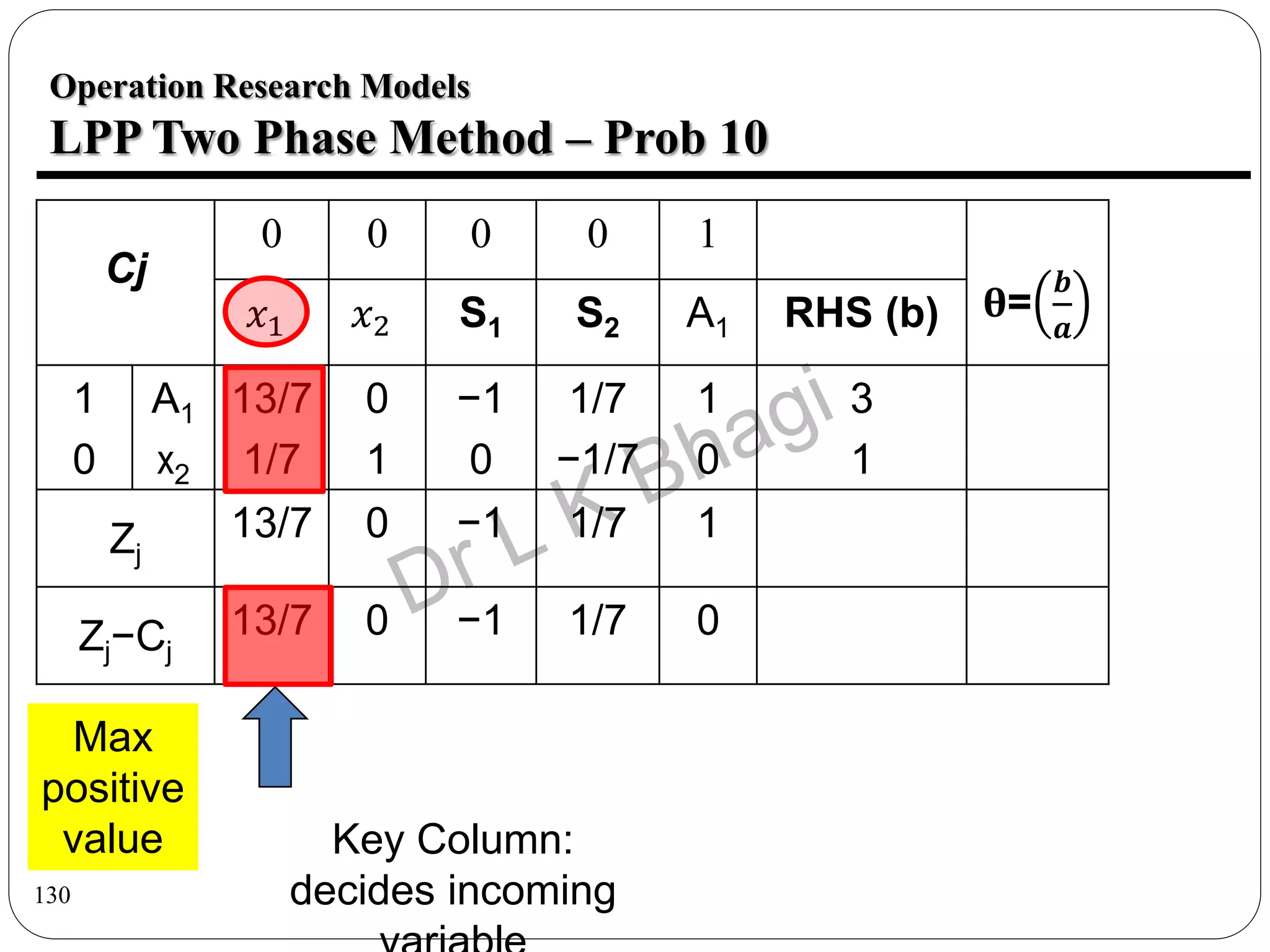

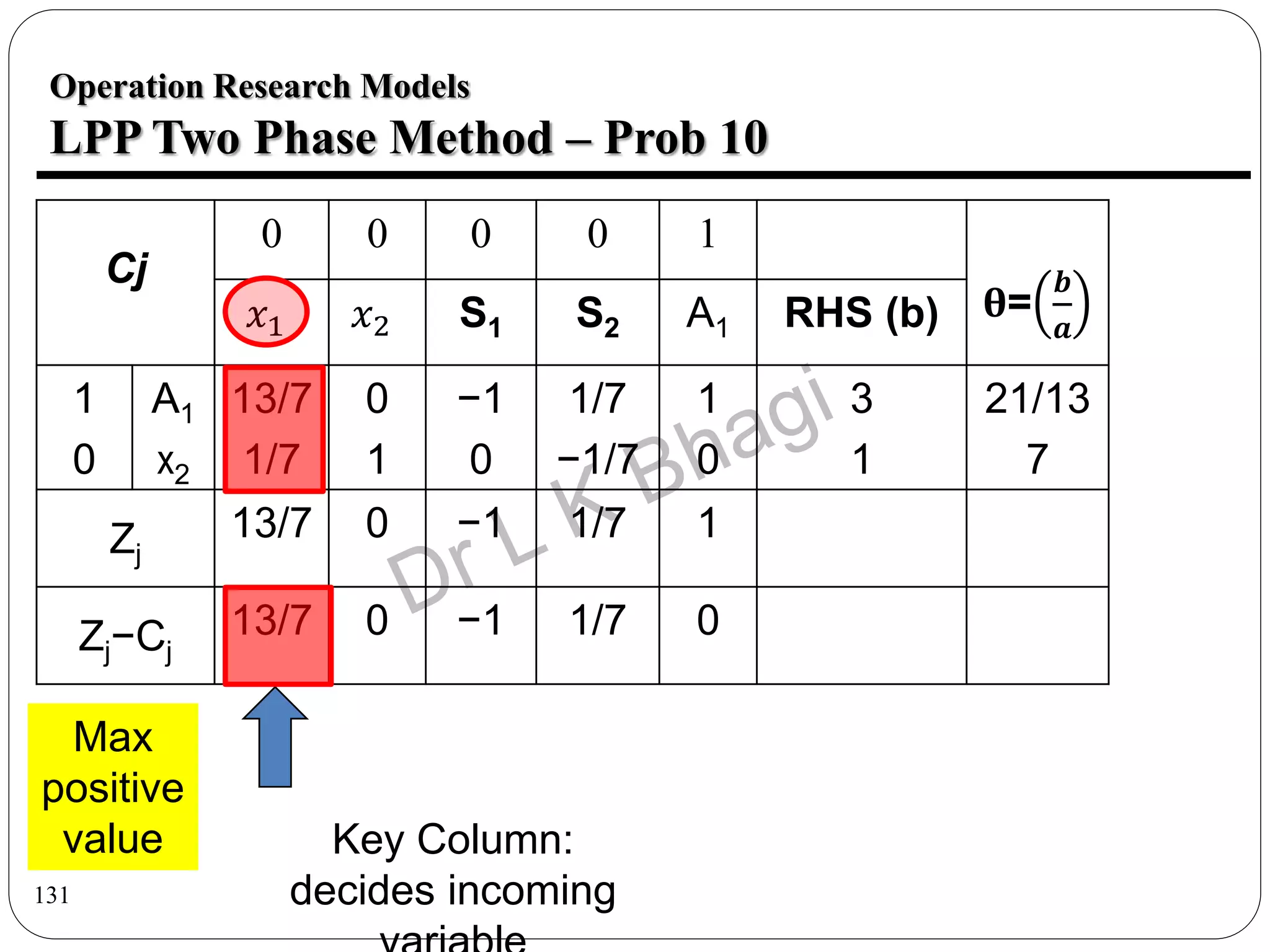

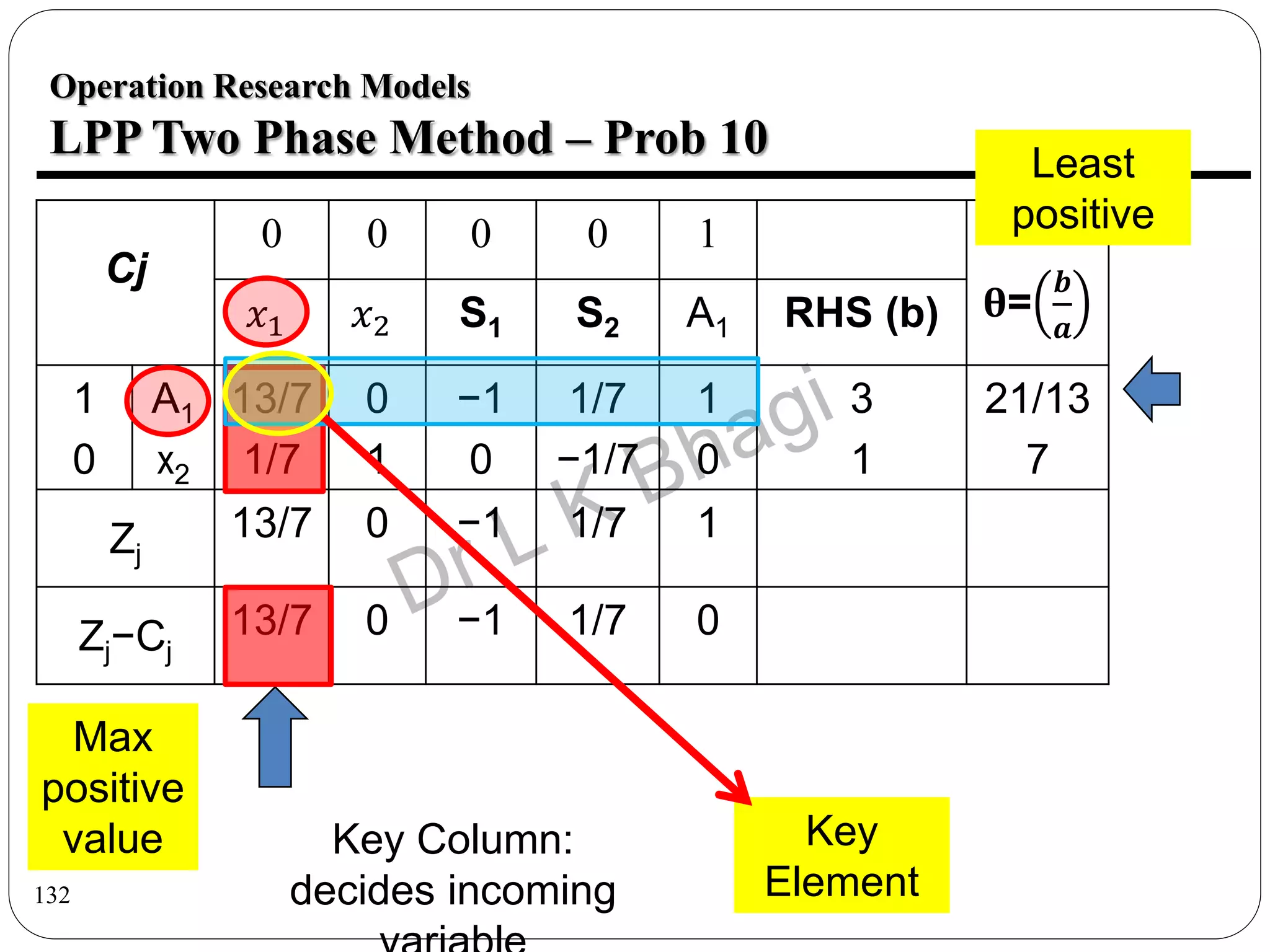

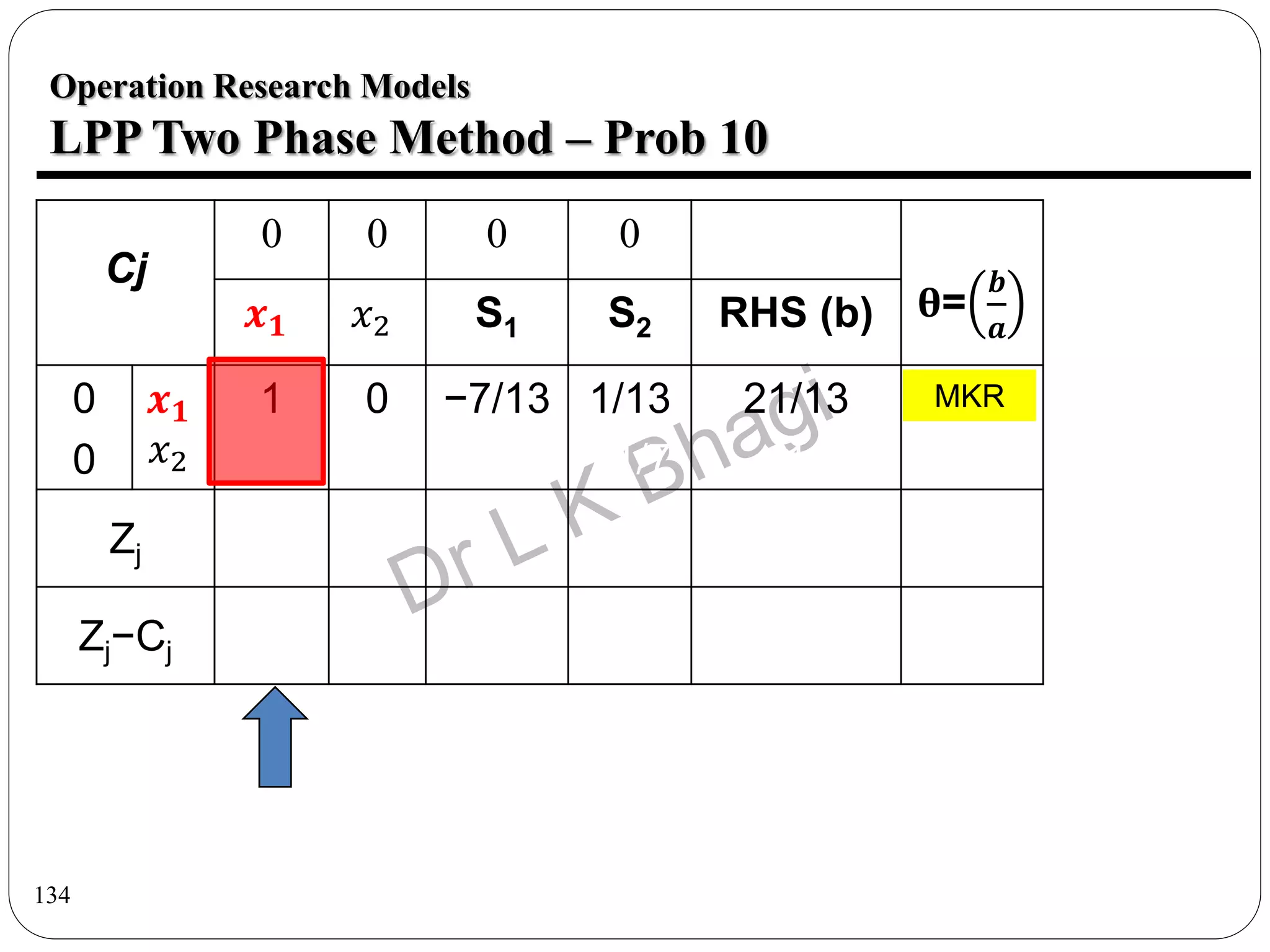

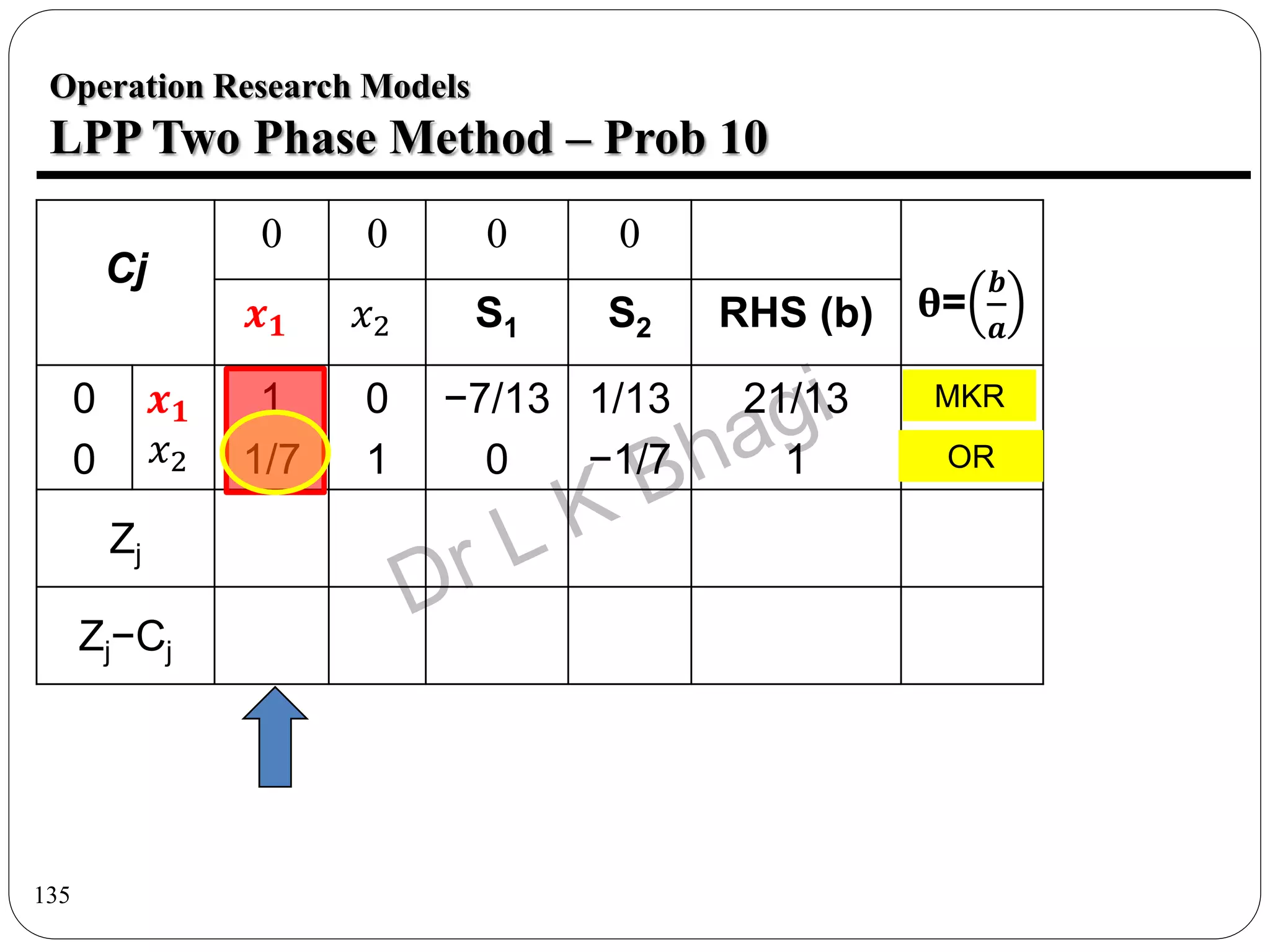

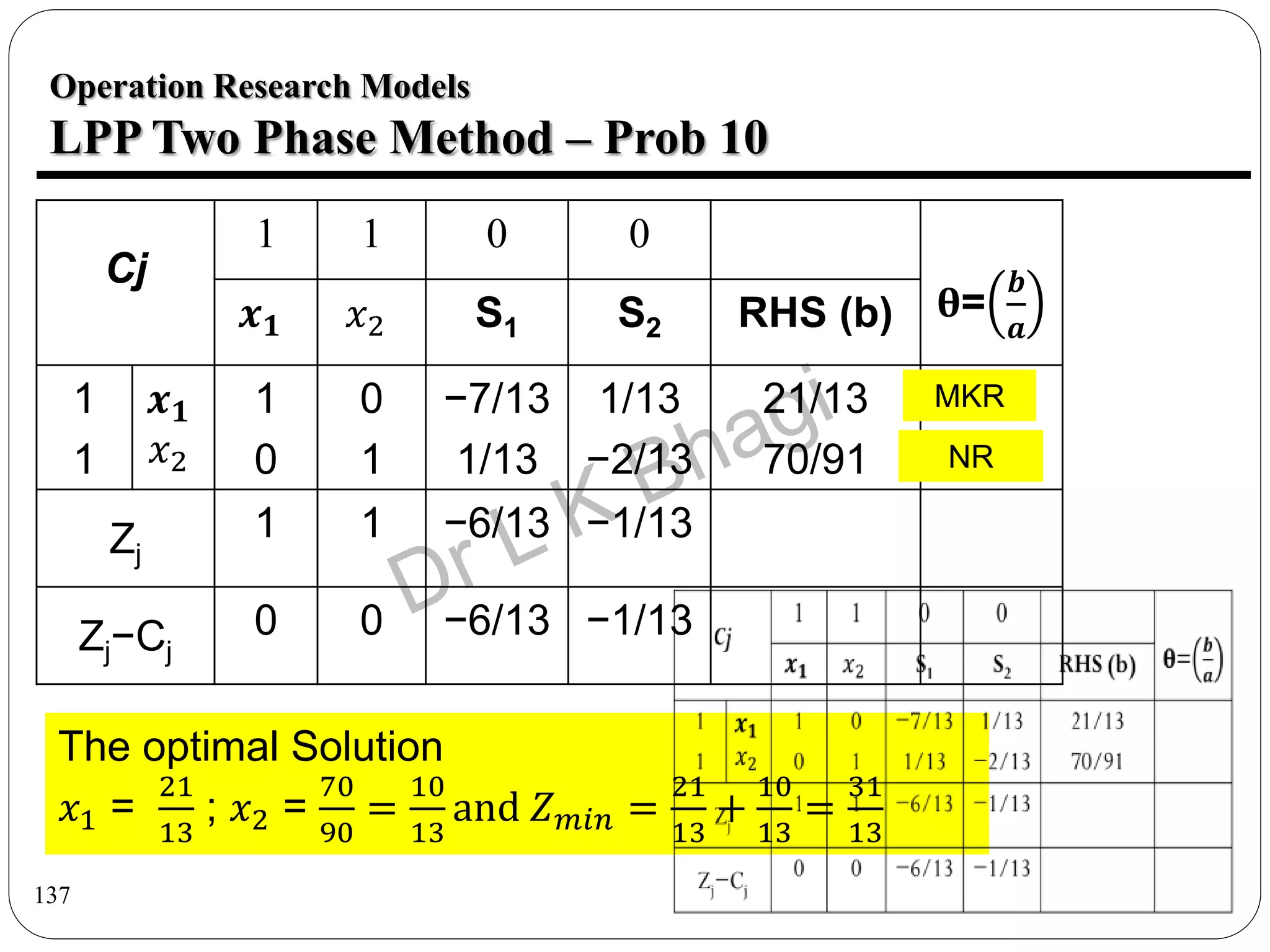

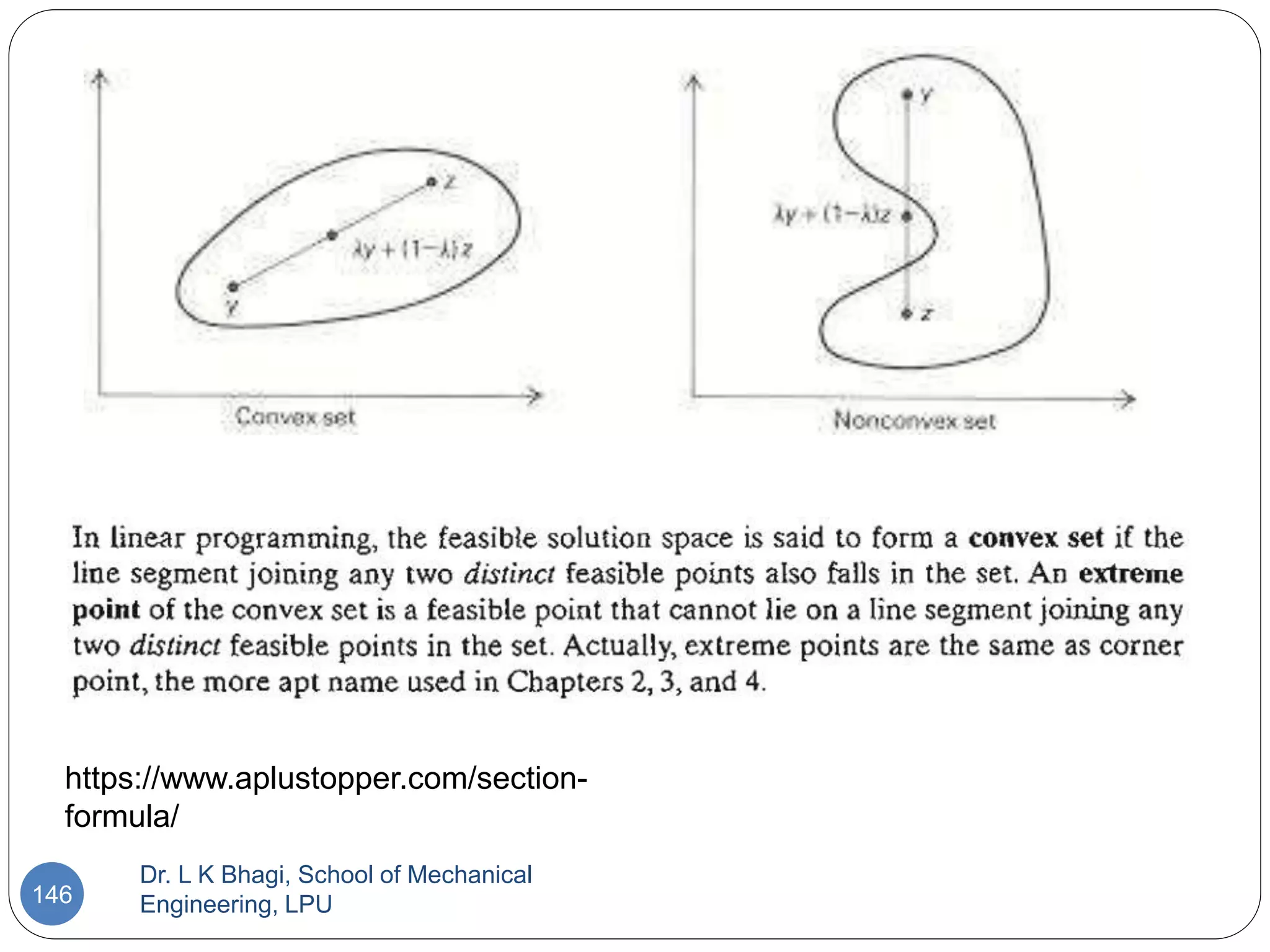

The document discusses the application of the Big M method in linear programming. Constraints are first written as equations by introducing slack variables (for ≤ constraints), surplus variables (for ≥ constraints), and artificial variables (for ≥ and = constraints). Each artificial variable is given a very large penalty M in the objective function so that the simplex method drives it to zero. Multiple examples with step-by-step solutions show how the method reaches the optimal solution. Overall, the document serves as a guide to applying the Big M approach to operational research models.
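As a rough illustration of the procedure summarized above, the Python sketch below solves a small linear program with a Big M simplex tableau. It is a minimal sketch and not the document's own method. Several choices are assumptions made here:

* the function name `big_m_simplex`;
* a numeric penalty `M = 1e6` rather than a symbolic M;
* a maximization convention;
* the requirement that every right-hand side b is non-negative.

It uses NumPy and selects the entering column by the most negative reduced cost, with no anti-cycling safeguard.

```python
import numpy as np


def big_m_simplex(c, A, b, senses, M=1e6, tol=1e-7, max_iter=100):
    """Maximize c @ x subject to A @ x (senses) b and x >= 0 via the Big M method.

    senses holds one of "<=", ">=", "=" per constraint row; b must be >= 0.
    Returns (x, objective value). M is an assumed large numeric penalty.
    """
    c = np.asarray(c, dtype=float)
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    m, n = A.shape
    columns = [A[:, j] for j in range(n)]  # decision-variable columns
    cost = list(c)                          # objective coefficient per column
    basis, artificial = [], []
    for i, sense in enumerate(senses):
        if sense not in ("<=", ">=", "="):
            raise ValueError(f"unknown constraint sense {sense!r}")
        unit = np.zeros(m)
        unit[i] = 1.0
        if sense == "<=":                   # slack variable: +1, cost 0
            columns.append(unit)
            cost.append(0.0)
        if sense == ">=":                   # surplus variable: -1, cost 0
            columns.append(-unit)
            cost.append(0.0)
        if sense in (">=", "="):            # artificial variable penalized by -M
            columns.append(unit)
            cost.append(-M)
            artificial.append(len(columns) - 1)
        basis.append(len(columns) - 1)      # slack or artificial starts basic

    T = np.column_stack(columns + [b])      # tableau rows: [B^-1 A | B^-1 b]
    cost = np.array(cost)
    for _ in range(max_iter):
        reduced = cost[basis] @ T[:, :-1] - cost  # z_j - c_j for every column
        j = int(np.argmin(reduced))
        if reduced[j] >= -tol:              # no improving column: optimal
            break
        col = T[:, j]
        ratios = np.full(m, np.inf)
        positive = col > tol
        ratios[positive] = T[positive, -1] / col[positive]  # minimum ratio test
        r = int(np.argmin(ratios))
        if np.isinf(ratios[r]):
            raise ValueError("problem is unbounded")
        T[r] /= T[r, j]                     # pivot: make entry (r, j) equal to 1
        for i in range(m):
            if i != r:
                T[i] -= T[i, j] * T[r]      # eliminate column j from other rows
        basis[r] = j
    else:
        raise RuntimeError("iteration limit reached")

    values = np.zeros(len(cost))
    values[basis] = T[:, -1]
    if any(values[a] > tol for a in artificial):
        raise ValueError("problem is infeasible: an artificial variable stays positive")
    x = values[:n]
    return x, float(c @ x)
```

Usage on an illustrative problem (not taken from the document): minimize Z = 4x₁ + x₂ subject to 3x₁ + x₂ = 3, 4x₁ + 3x₂ ≥ 6 and x₁ + 2x₂ ≤ 4. Because the sketch maximizes, pass the negated objective: `big_m_simplex([-4, -1], [[3, 1], [4, 3], [1, 2]], [3, 6, 4], ["=", ">=", "<="])`. It should give x₁ = 0.4 and x₂ = 1.8, with a returned value of -3.4, so the minimum is Z = 3.4.

If an artificial variable is still positive at the optimum, no feasible solution exists, and the sketch reports this by raising an error. A symbolic treatment of M, which many textbook tableaux use, avoids picking a numeric value. The trade-off is more bookkeeping.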