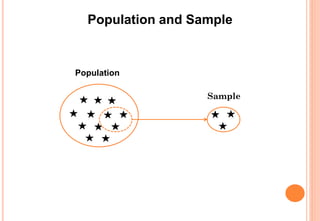

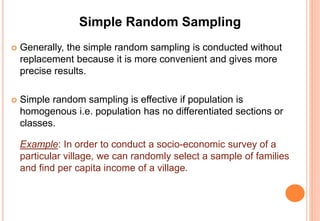

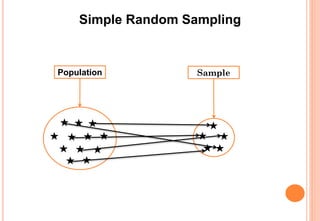

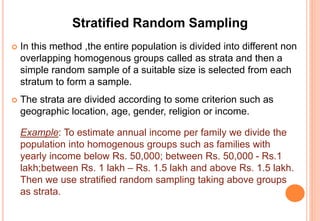

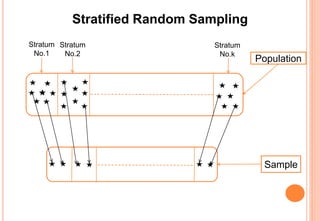

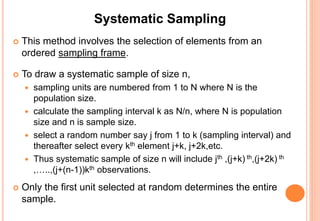

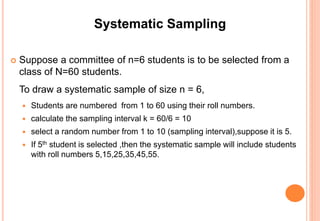

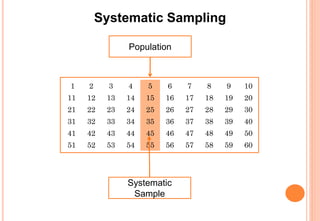

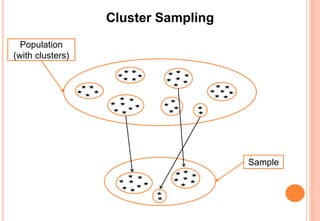

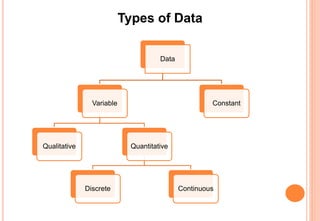

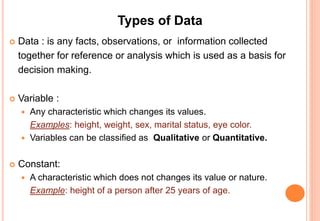

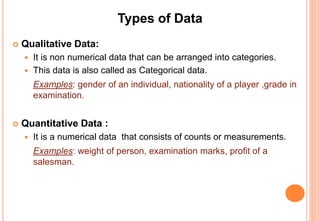

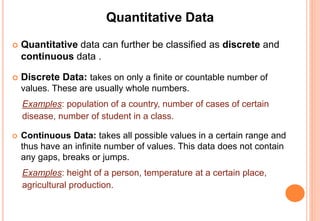

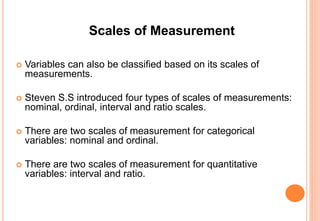

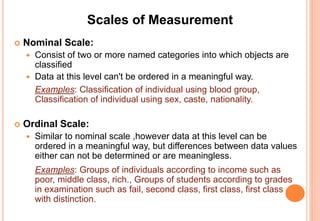

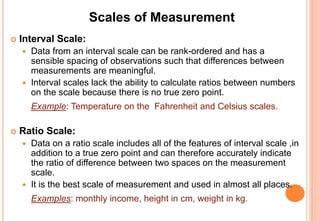

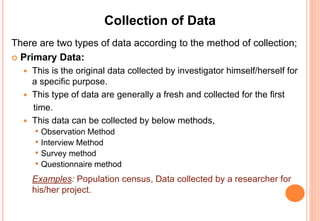

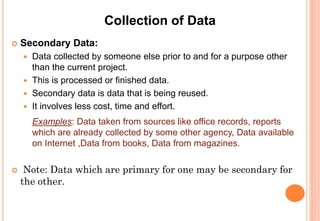

The document introduces statistics, covering its definition, importance, and applications, as well as its branches, such as descriptive and inferential statistics. It then details the concepts of population and sample, data sampling techniques, types of data, scales of measurement, and data collection methods. Throughout, it emphasizes the usefulness of statistical methods across many fields and the importance of sound data analysis for informed decision-making.