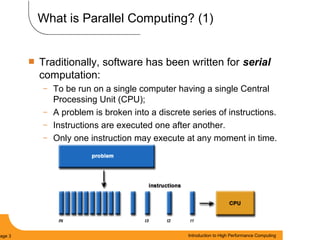

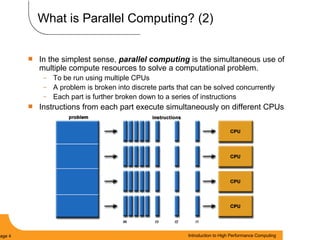

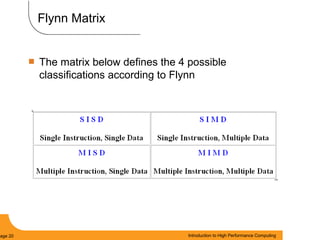

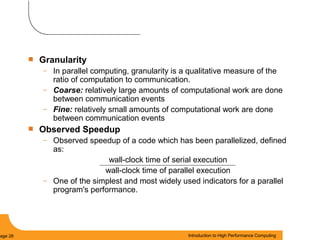

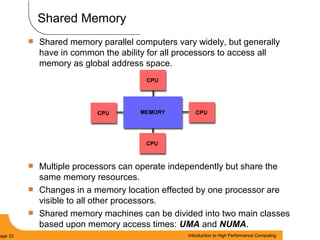

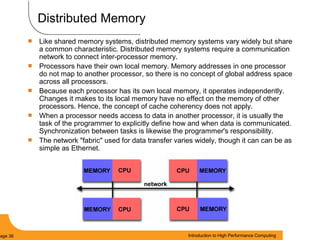

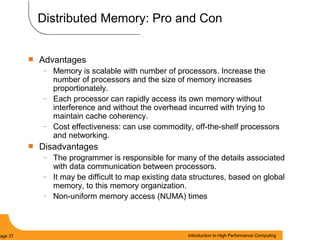

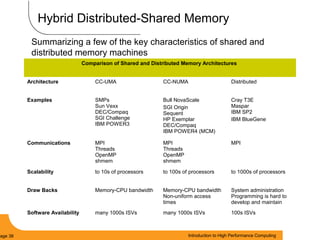

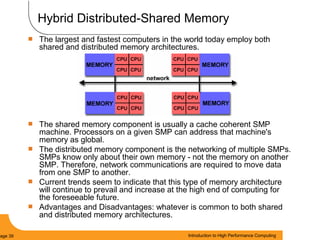

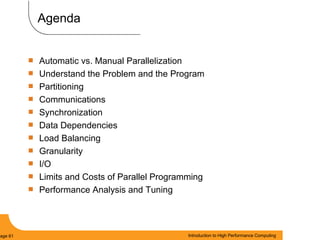

This document introduces parallel computing. It starts by defining parallel computing as the simultaneous use of multiple compute resources to solve a problem. It then describes two popular parallel architectures: shared memory, in which all processors can access a common memory, and distributed memory, in which each processor has its own local memory and processors communicate over a network. Key concepts and terminology covered include Flynn's taxonomy, parallel overhead, scalability, and the memory models: uniform memory access (UMA), non-uniform memory access (NUMA), and distributed memory. The goal is to give background on parallel computing before examining how to parallelize different types of programs.
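The difference between the shared-memory and distributed-memory models can be illustrated with a minimal Python sketch. It is not taken from the slides: the function names `shared_memory_sum` and `message_passing_sum` are hypothetical, and the second function only simulates distributed memory on one machine, with a `queue.Queue` standing in for the network. A real distributed-memory program would typically use a message-passing library such as MPI.

```python
import queue
import threading


def shared_memory_sum(data, n_workers=4):
    """Shared-memory style: every worker writes into one common list."""
    partial = [0] * n_workers  # memory visible to all threads

    def work(i):
        # Each thread writes only its own slot, so no lock is needed here.
        partial[i] = sum(data[i::n_workers])

    threads = [threading.Thread(target=work, args=(i,)) for i in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(partial)


def message_passing_sum(data, n_workers=4):
    """Distributed-memory style (simulated): workers share nothing
    and report results only by sending messages."""
    inbox = queue.Queue()  # assumption: stands in for the network

    def work(chunk):
        # The worker sees only its private copy of the chunk.
        inbox.put(sum(chunk))

    threads = [
        threading.Thread(target=work, args=(list(data[i::n_workers]),))
        for i in range(n_workers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # Collect exactly one message per worker.
    return sum(inbox.get() for _ in range(n_workers))


if __name__ == "__main__":
    numbers = list(range(100))
    print(shared_memory_sum(numbers))
    print(message_passing_sum(numbers))
```

Both functions compute the same total. What differs is how partial results reach the caller: through a common data structure in the first case, through explicit messages in the second. Because of CPython's global interpreter lock, this sketch shows the programming model only and is not expected to run faster than a serial sum.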