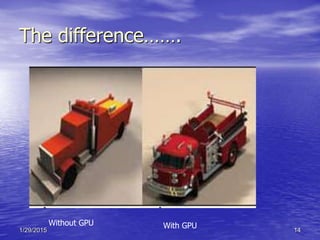

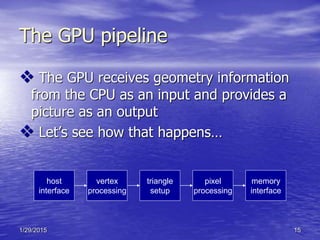

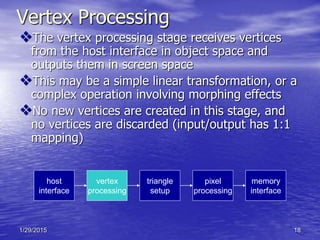

The document provides an overview of graphics processing units (GPUs). It defines a GPU as a dedicated graphics processor containing hundreds of parallel execution units tailored to graphics workloads. It contrasts GPUs with CPUs: a GPU spreads work across many parallel units, while a CPU executes largely serially. It outlines the typical GPU architecture as a pipeline running from vertex processing through pixel processing to storage in memory. It also covers how the GPU interacts with the CPU and how it uses dedicated video memory.
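As a rough illustration of the pipeline described above, the following Python sketch walks a few points through a vertex stage, a pixel stage, and a write into a framebuffer. It is a toy model under stated assumptions, not how any real GPU or graphics API is implemented: the names `vertex_stage`, `pixel_stage`, `write_framebuffer`, and `render`, the 8x8 framebuffer size, and the gradient coloring are all hypothetical. It handles isolated points only and skips triangle rasterization. The loops run serially here; on a GPU, each vertex and each pixel could be handled by a separate execution unit because the iterations do not depend on one another.

```python
from typing import List, Tuple

Vertex = Tuple[float, float]   # position in normalized coordinates, each in [-1, 1]
Pixel = Tuple[int, int]        # integer framebuffer coordinates (x, y)

WIDTH, HEIGHT = 8, 8           # hypothetical tiny framebuffer size


def vertex_stage(vertices: List[Vertex]) -> List[Pixel]:
    """Map each vertex from [-1, 1] space to framebuffer coordinates.

    Each vertex is processed independently of the others.
    """
    out = []
    for x, y in vertices:
        px = int((x + 1.0) / 2.0 * (WIDTH - 1))
        py = int((y + 1.0) / 2.0 * (HEIGHT - 1))
        out.append((px, py))
    return out


def pixel_stage(pixels: List[Pixel]) -> List[Tuple[Pixel, int]]:
    """Assign an intensity to each pixel (assumed: a gradient along x)."""
    return [((px, py), px * 255 // (WIDTH - 1)) for px, py in pixels]


def write_framebuffer(shaded: List[Tuple[Pixel, int]]) -> List[List[int]]:
    """Store shaded pixels in a 2D array standing in for video memory."""
    framebuffer = [[0] * WIDTH for _ in range(HEIGHT)]
    for (px, py), intensity in shaded:
        framebuffer[py][px] = intensity
    return framebuffer


def render(vertices: List[Vertex]) -> List[List[int]]:
    """Run the three stages in order: vertex, pixel, memory."""
    return write_framebuffer(pixel_stage(vertex_stage(vertices)))


if __name__ == "__main__":
    image = render([(-1.0, -1.0), (0.0, 0.0), (1.0, 1.0)])
    for row in image:
        print(row)
```

Each stage consumes only the output of the previous one, which is the property that lets real hardware overlap stages and run many items per stage at once.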