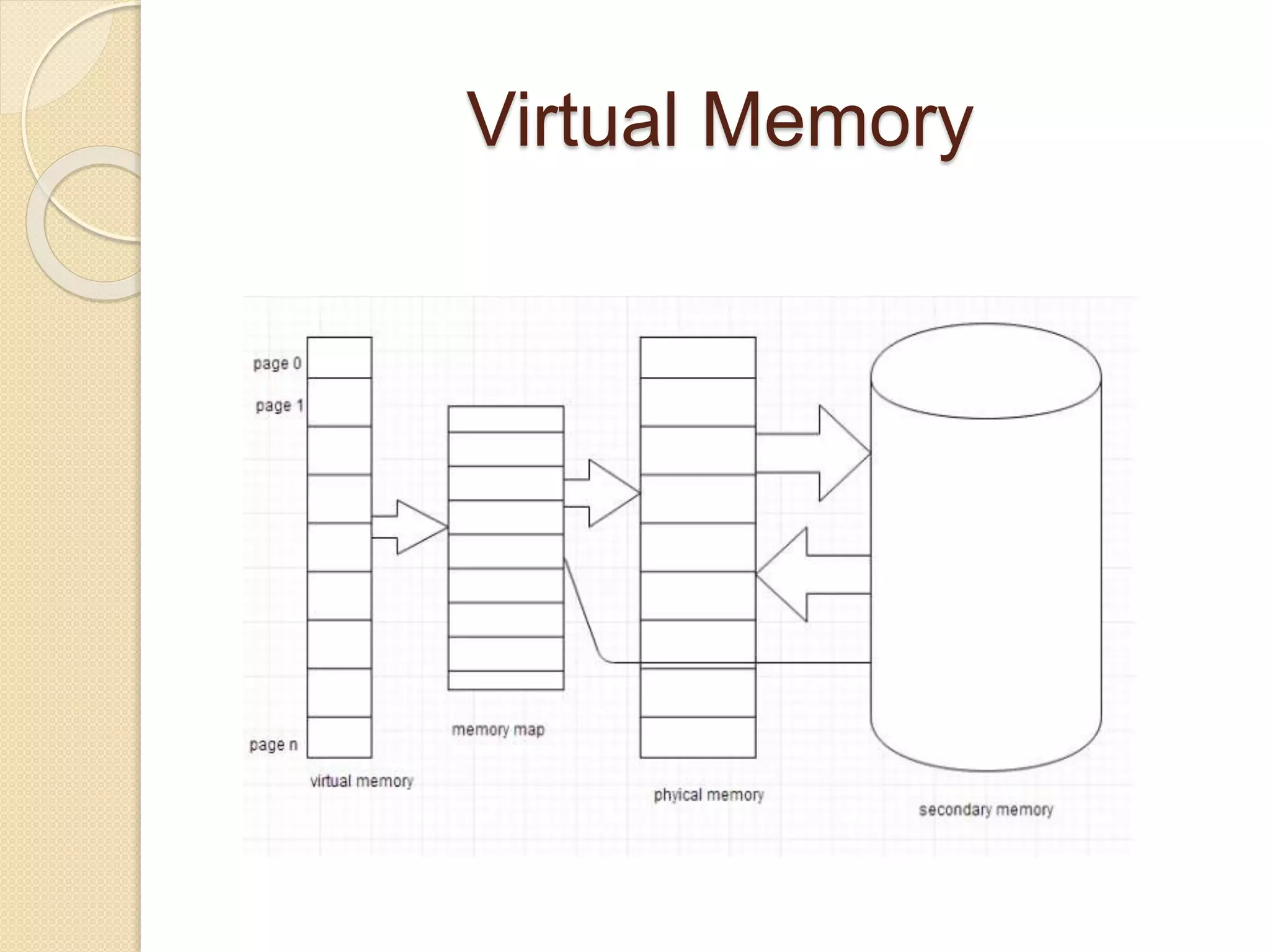

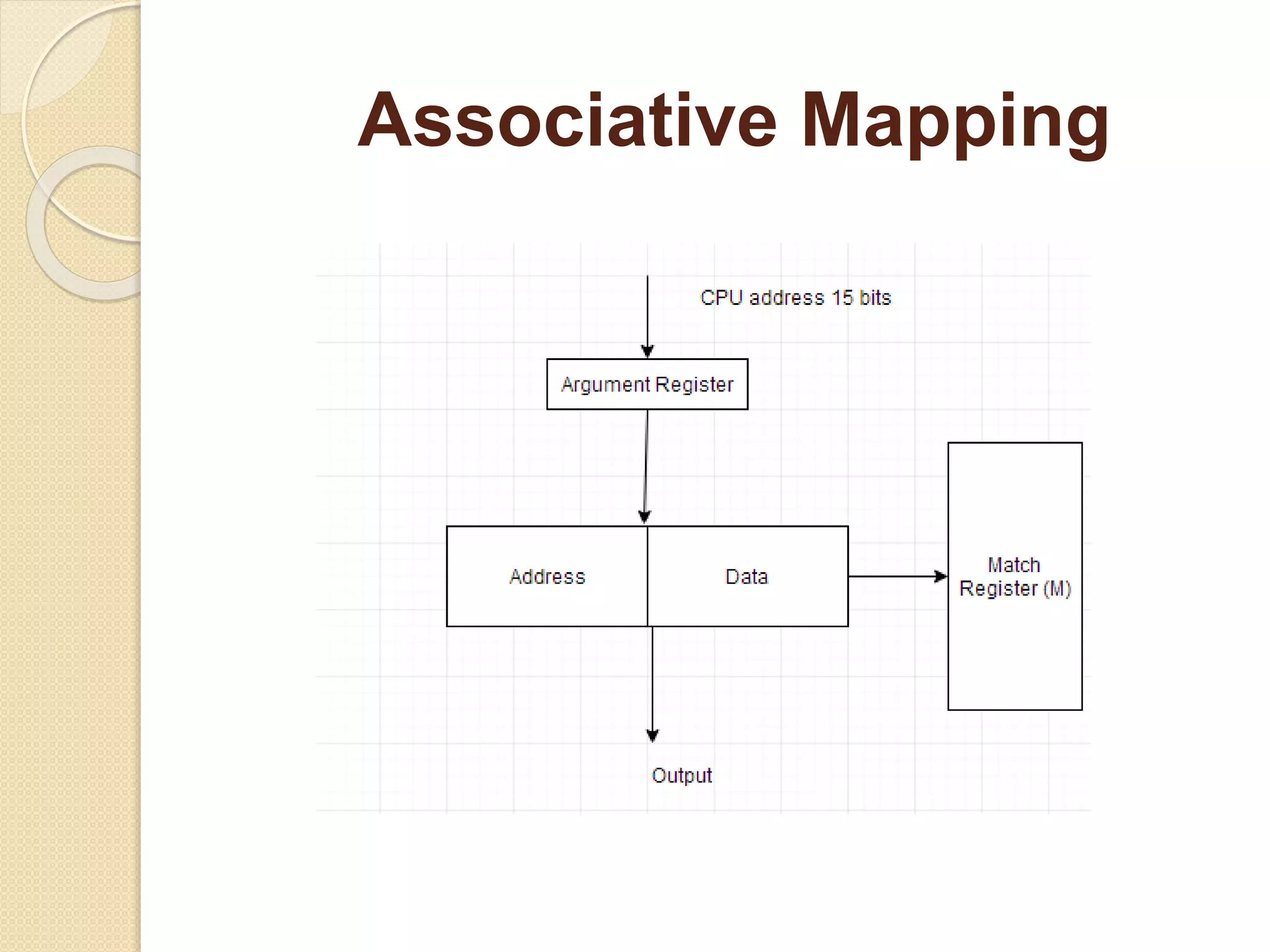

The document explains the concept of memory in computers, covering physical memory (such as RAM and ROM) and virtual memory, a technique that lets programs use more memory than is physically installed. It discusses the mapping schemes (associative, direct, and set-associative) and the replacement algorithms (FIFO and LRU) used to manage cache memory. It also highlights the advantages and disadvantages of virtual memory: it enables multiprogramming but can slow applications down.
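
To make the mapping schemes and replacement algorithms named above concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not code from the document: the function names (`set_index`, `count_misses_fifo`, `count_misses_lru`), the block-number reference string, and the cache sizes are all hypothetical. The cache is modeled as a small collection of block numbers, and only misses are counted.

```python
from collections import OrderedDict, deque


def set_index(block_number, num_lines, ways):
    """Return the cache set a memory block maps to.

    ways == 1          -> direct mapping (one candidate line per block)
    ways == num_lines  -> fully associative (a single set, any line)
    anything between   -> set-associative
    """
    if ways <= 0 or num_lines % ways != 0:
        raise ValueError("num_lines must be a positive multiple of ways")
    num_sets = num_lines // ways
    return block_number % num_sets


def count_misses_fifo(refs, capacity):
    """Count misses when the oldest-loaded block is evicted first (FIFO)."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    resident = set()       # blocks currently in the cache
    load_order = deque()   # same blocks, in the order they were loaded
    misses = 0
    for block in refs:
        if block in resident:
            continue  # hit: FIFO does not reorder on access
        misses += 1
        if len(resident) == capacity:
            resident.remove(load_order.popleft())
        resident.add(block)
        load_order.append(block)
    return misses


def count_misses_lru(refs, capacity):
    """Count misses when the least recently used block is evicted (LRU)."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    cache = OrderedDict()  # keys ordered from least to most recently used
    misses = 0
    for block in refs:
        if block in cache:
            cache.move_to_end(block)  # hit: mark as most recently used
            continue
        misses += 1
        if len(cache) == capacity:
            cache.popitem(last=False)  # evict the least recently used block
        cache[block] = True
    return misses


if __name__ == "__main__":
    refs = [1, 2, 3, 1, 4, 1, 2]  # hypothetical sequence of block accesses
    print("FIFO misses:", count_misses_fifo(refs, capacity=3))
    print("LRU misses:", count_misses_lru(refs, capacity=3))
    print("direct-mapped line for block 13:", set_index(13, num_lines=8, ways=1))
    print("2-way set for block 13:", set_index(13, num_lines=8, ways=2))
```

On this particular reference string, FIFO evicts block 1 just before it is reused, while LRU keeps it because it was accessed recently. The result is 6 misses for FIFO against 5 for LRU. The gap depends entirely on the access pattern, so this should not be read as a general ranking of the two policies.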