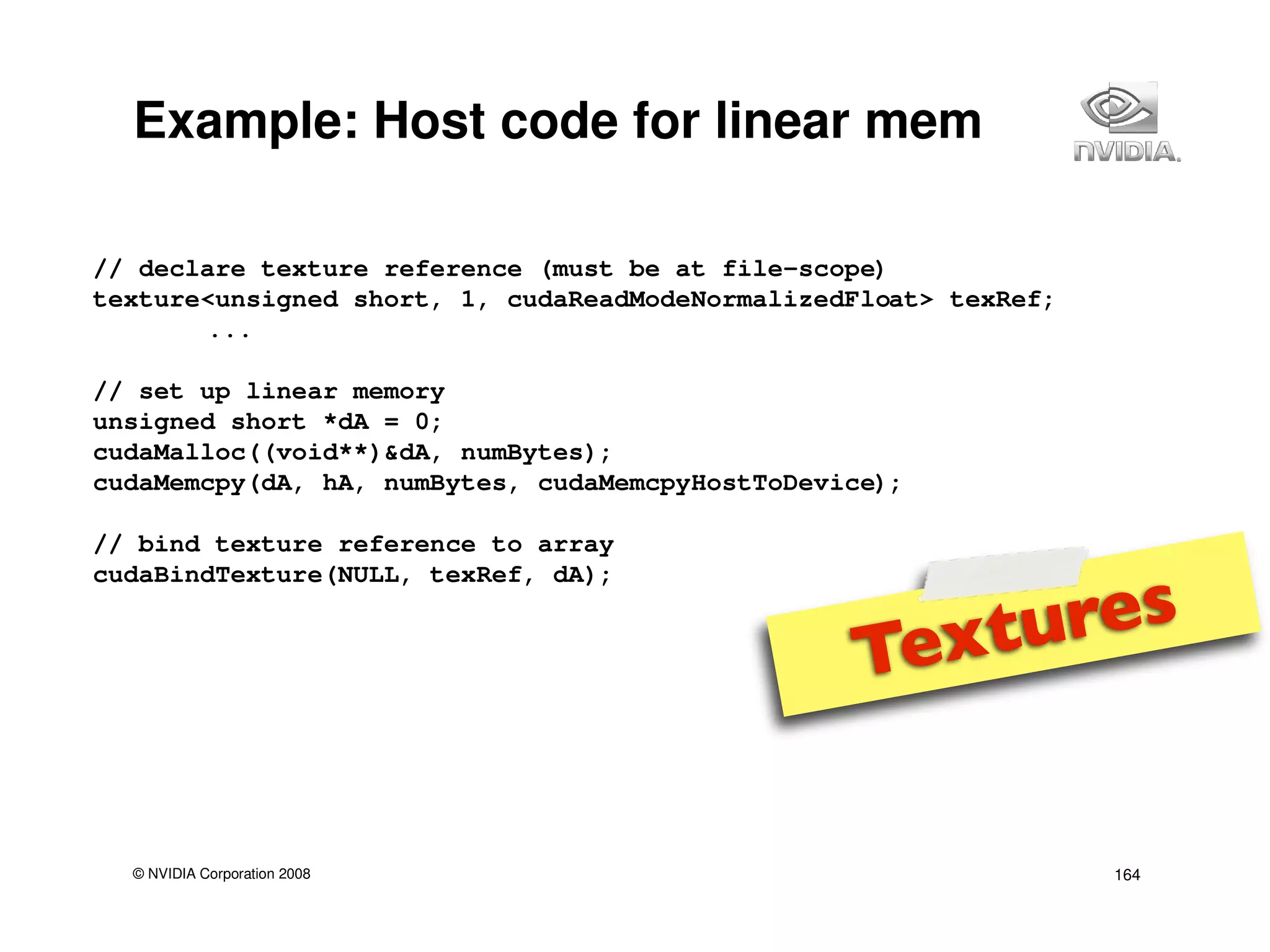

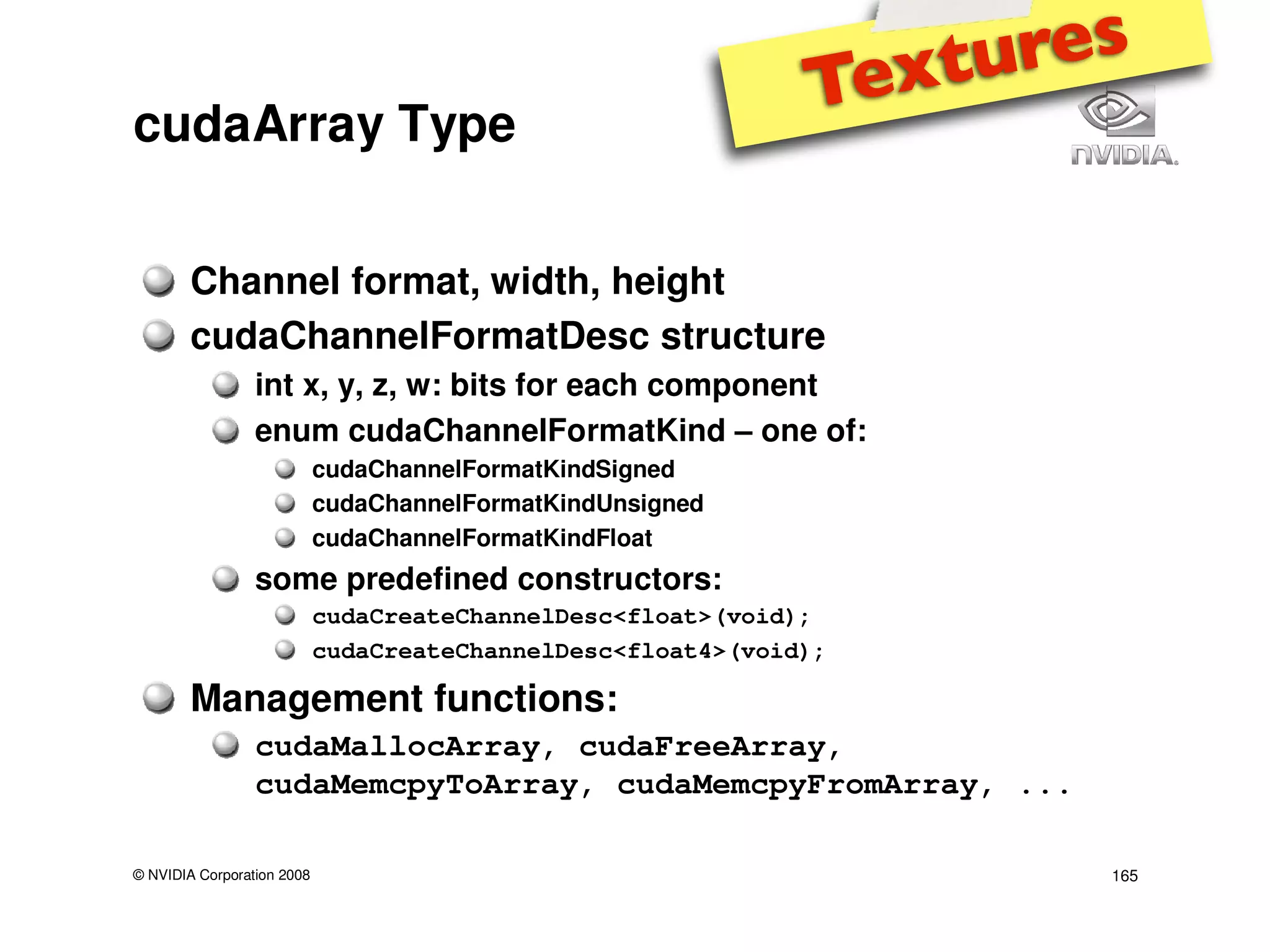

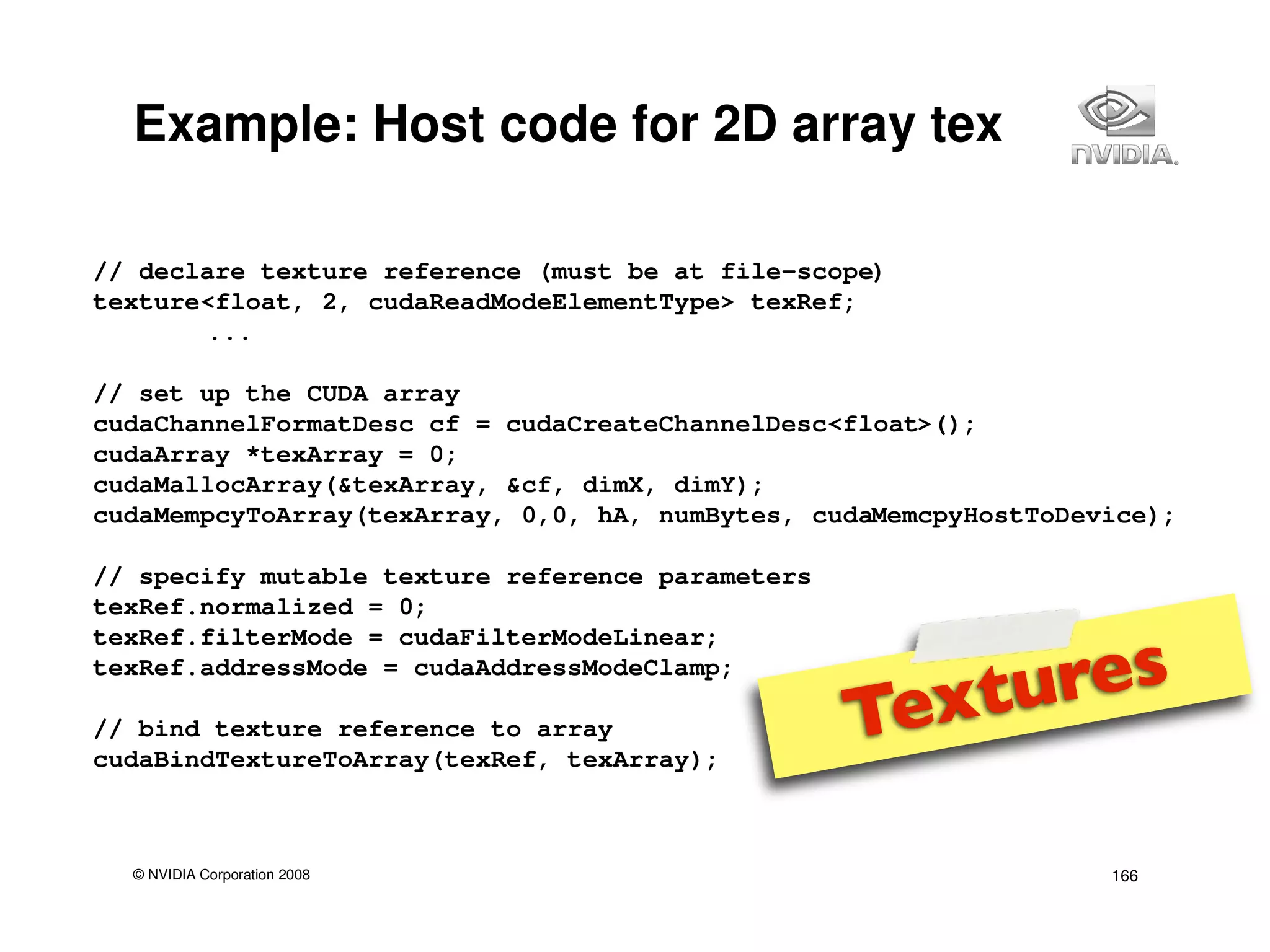

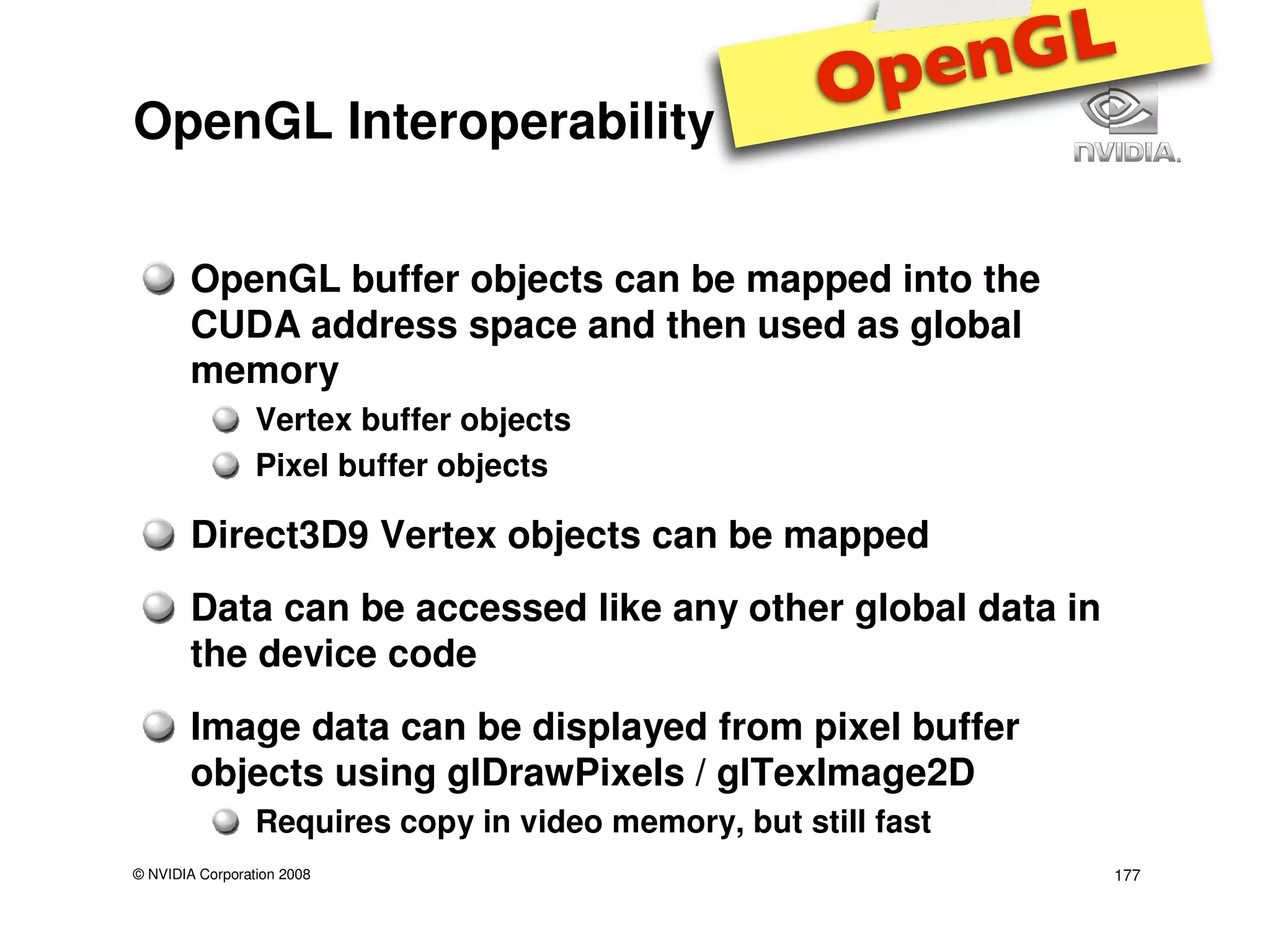

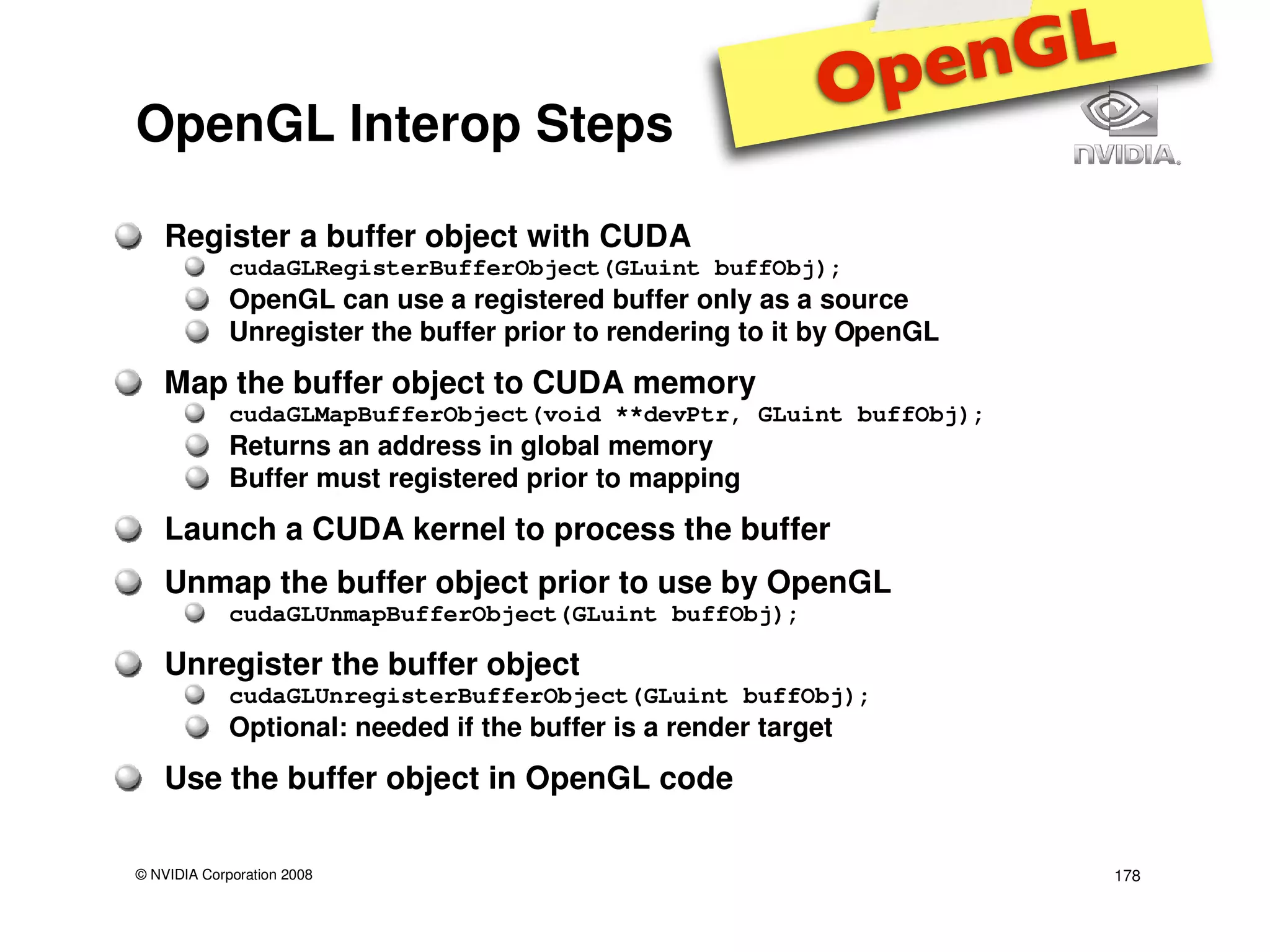

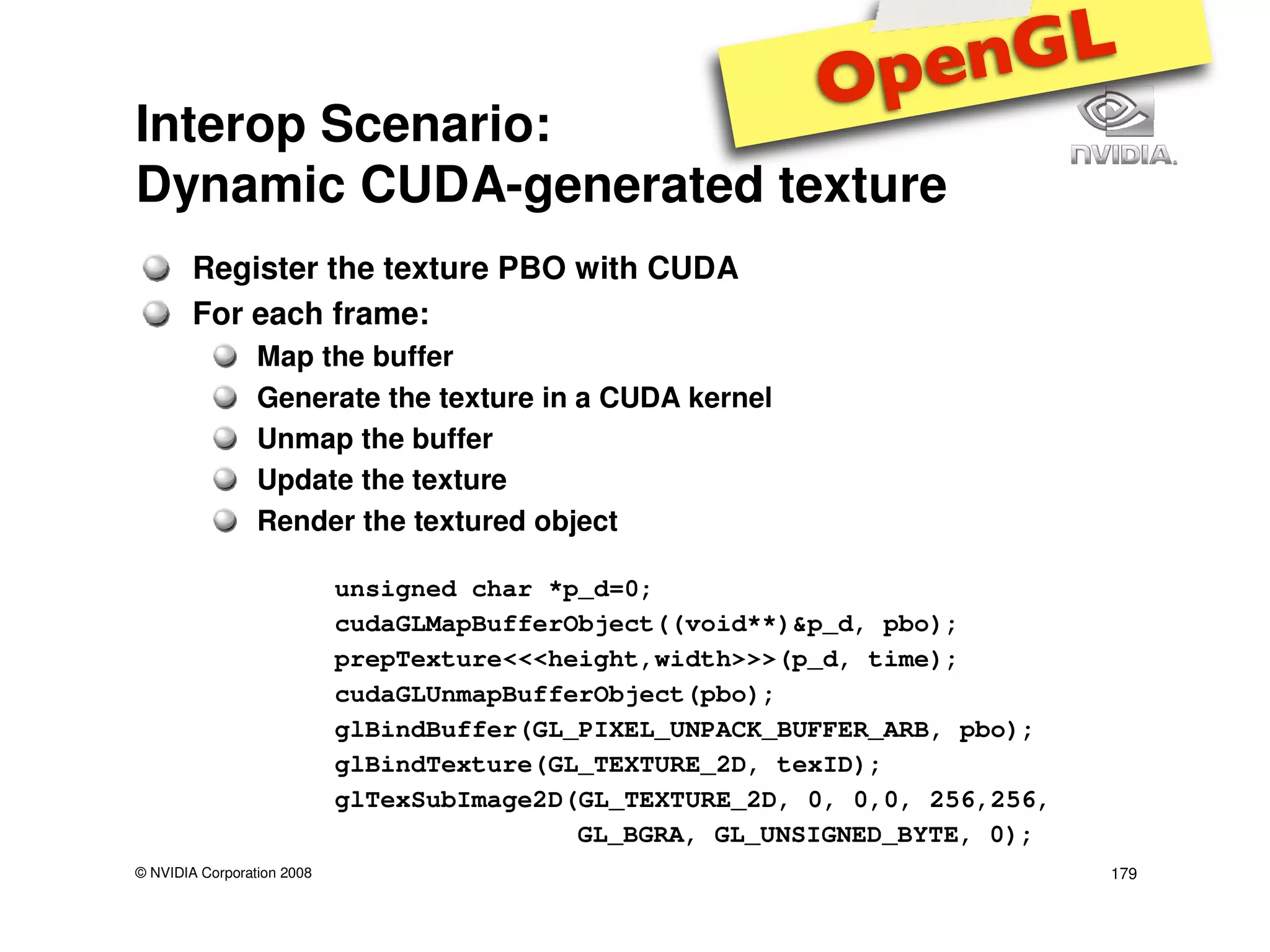

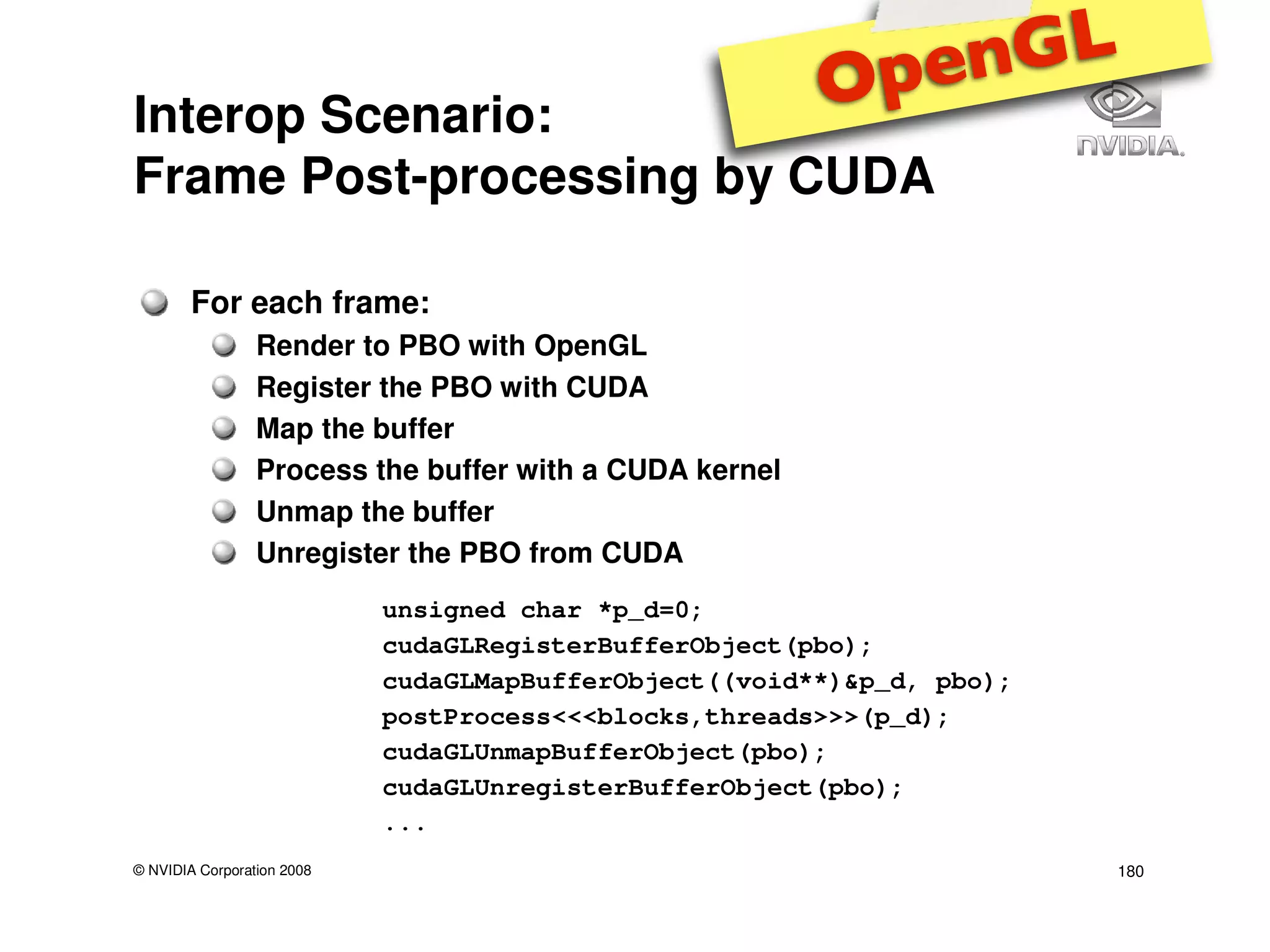

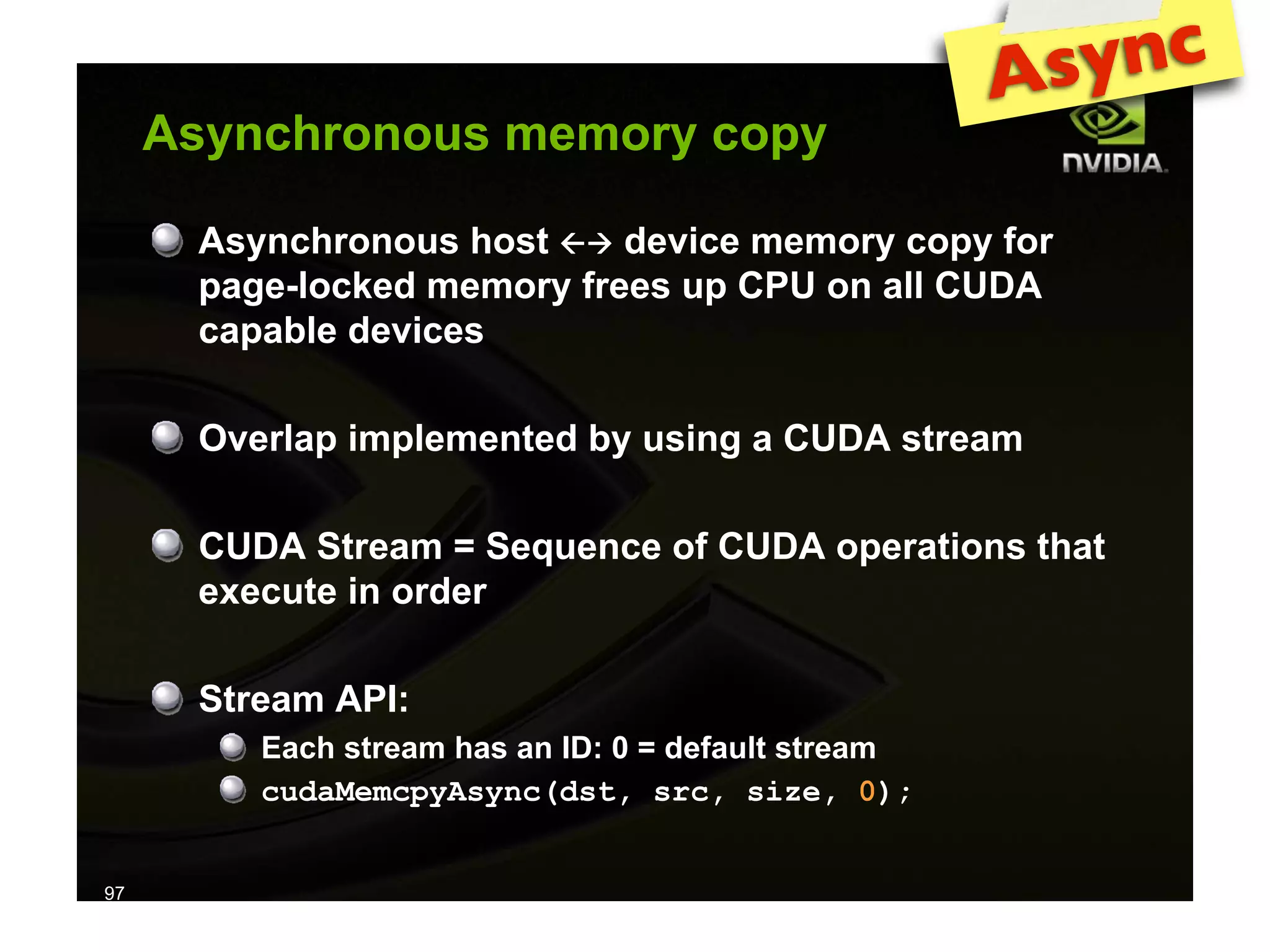

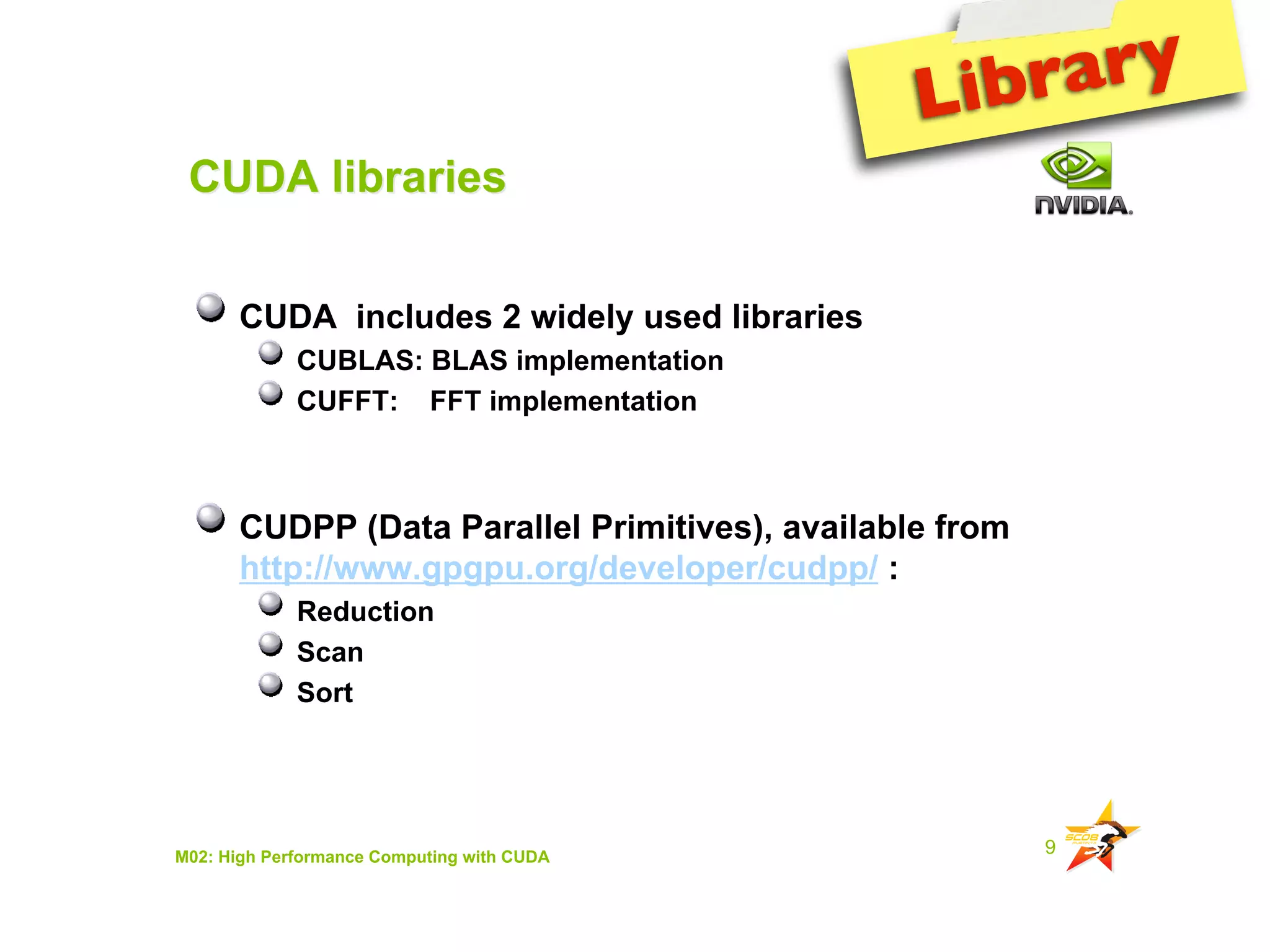

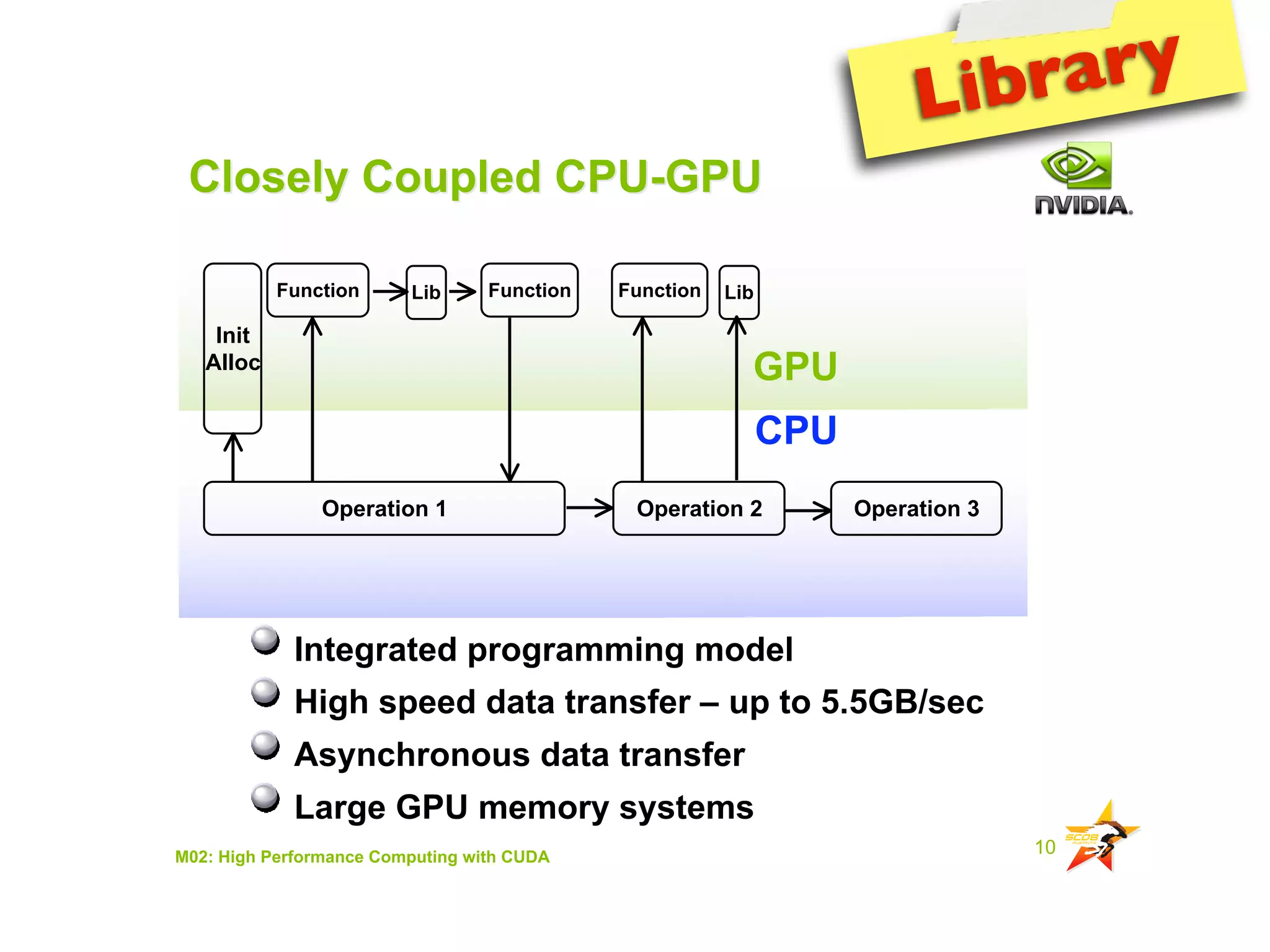

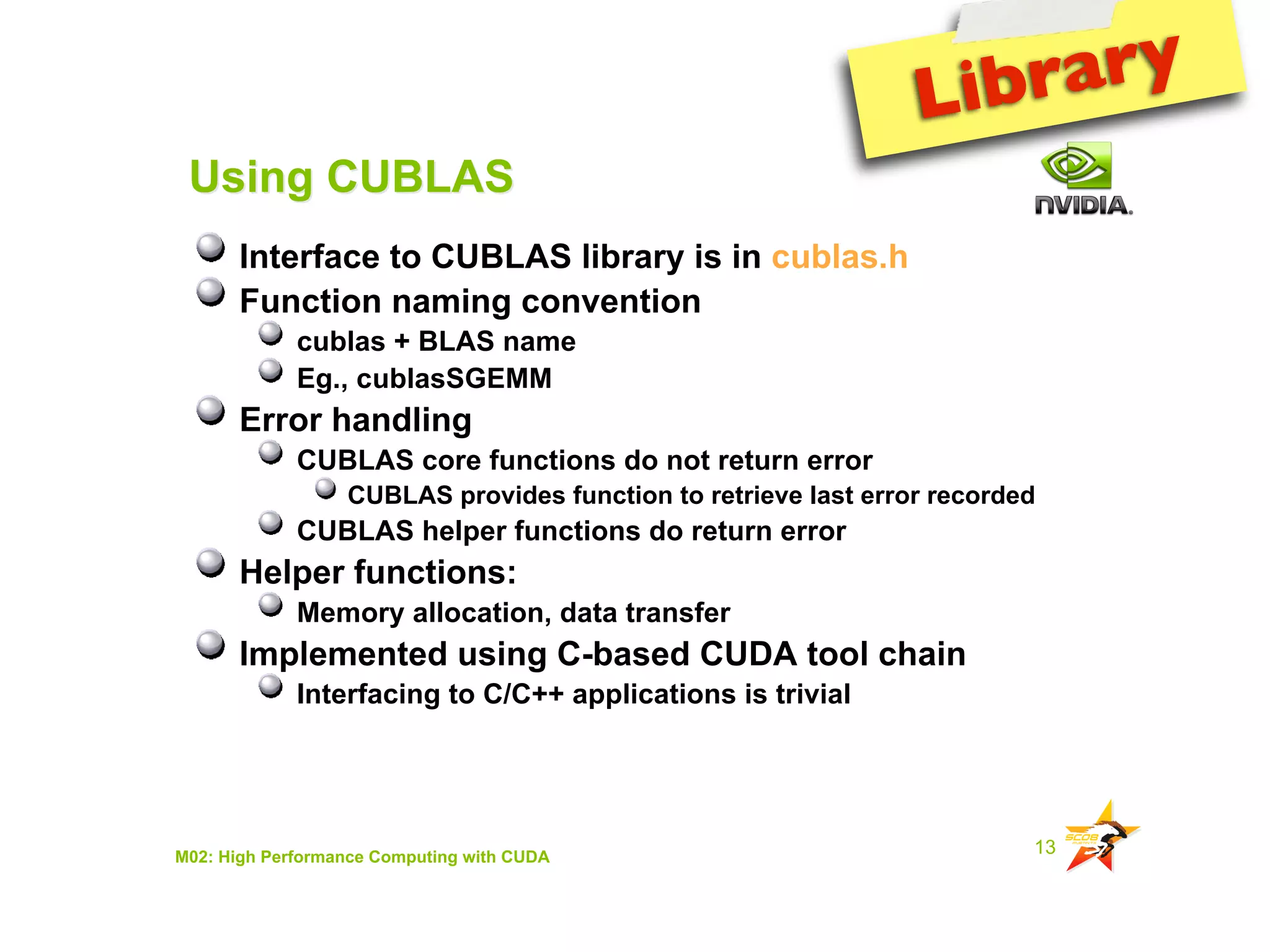

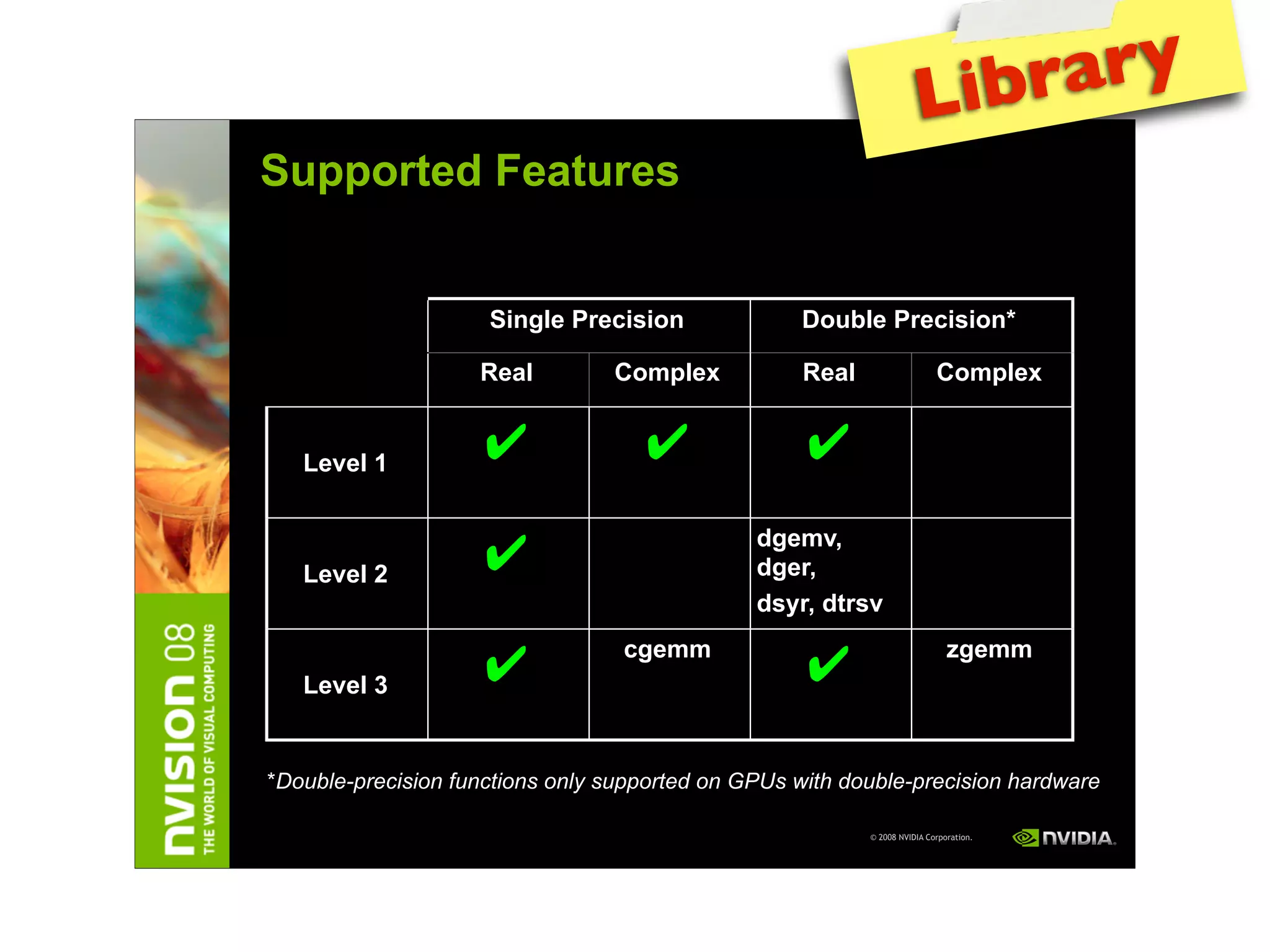

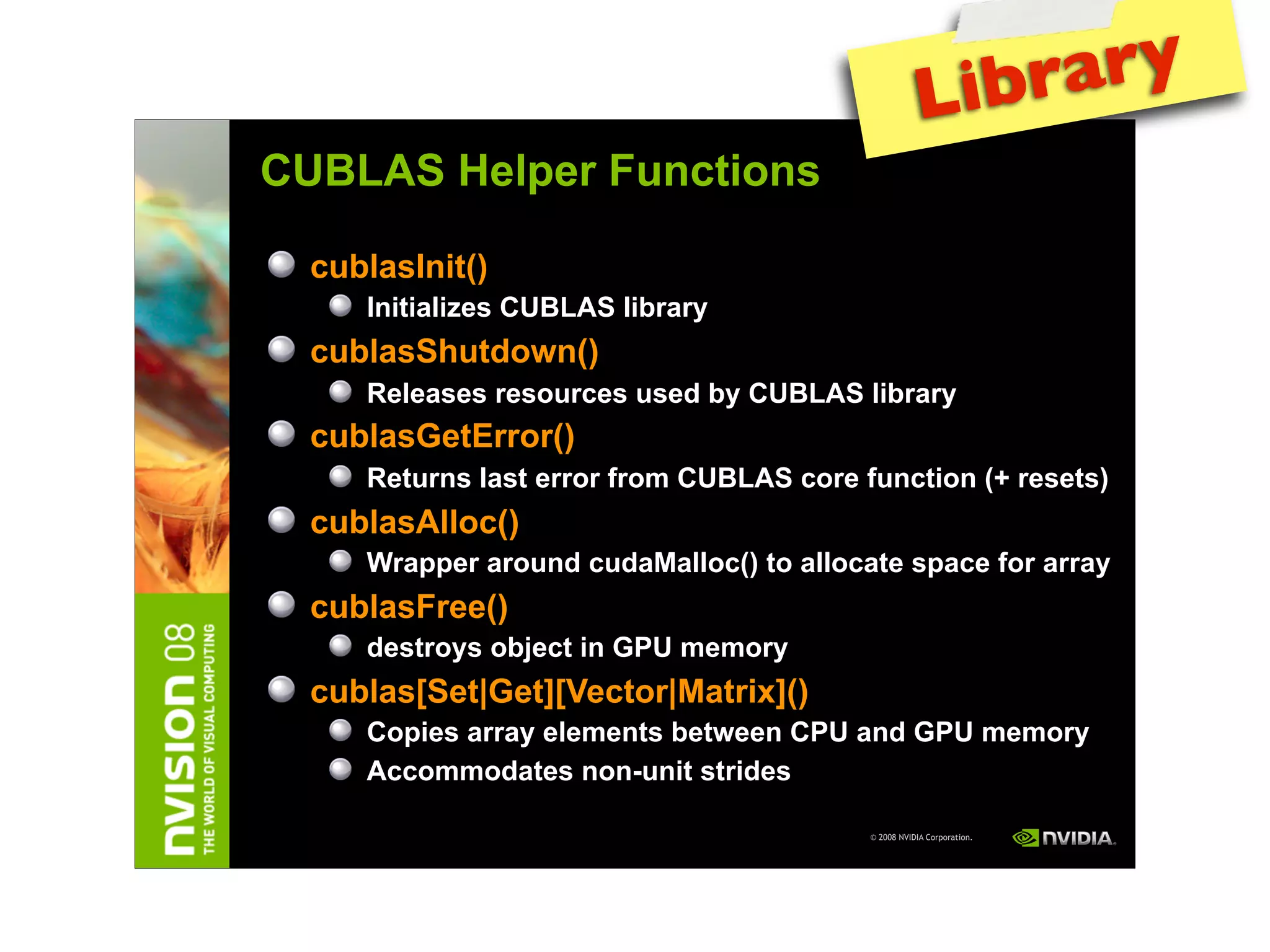

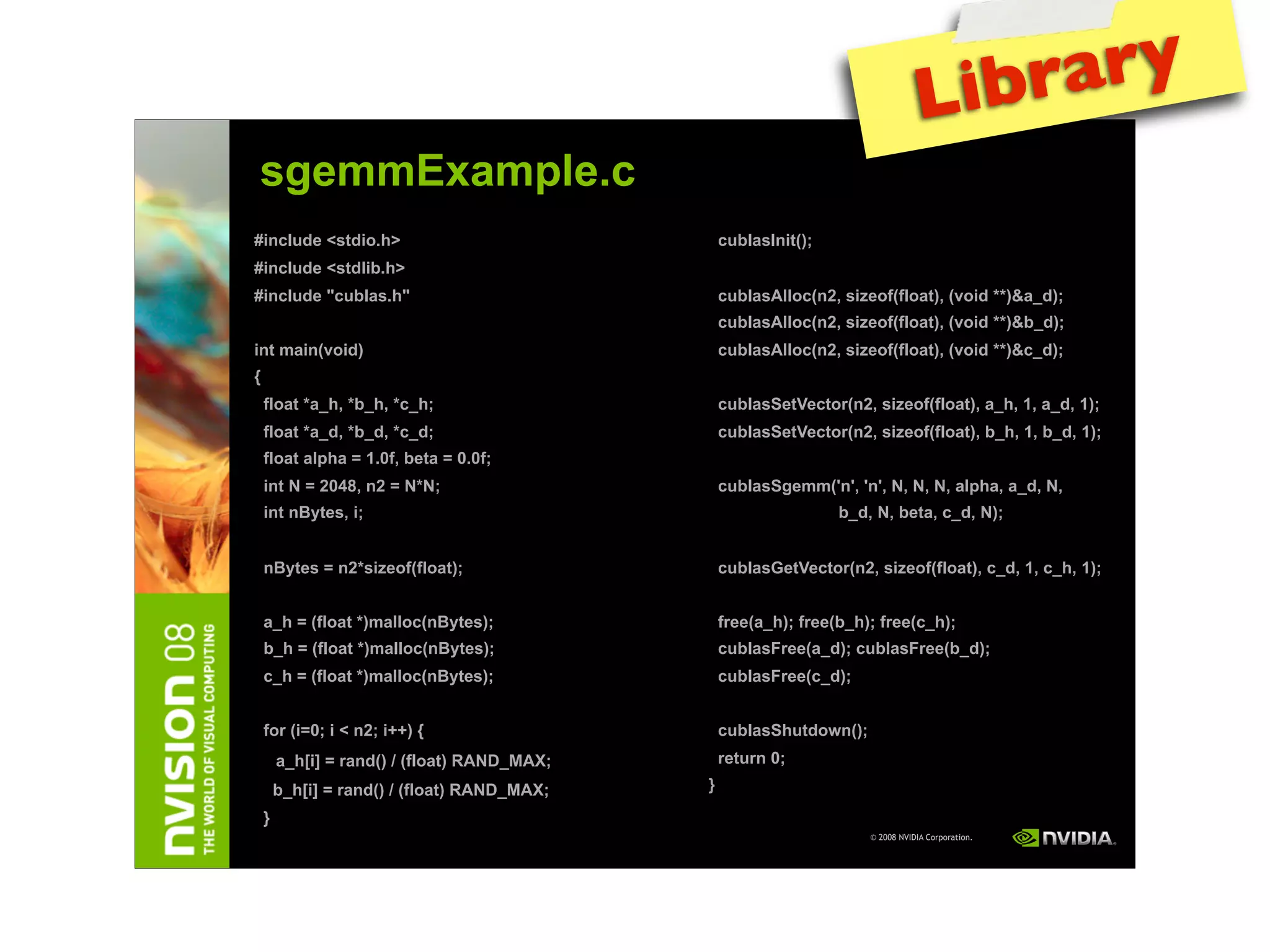

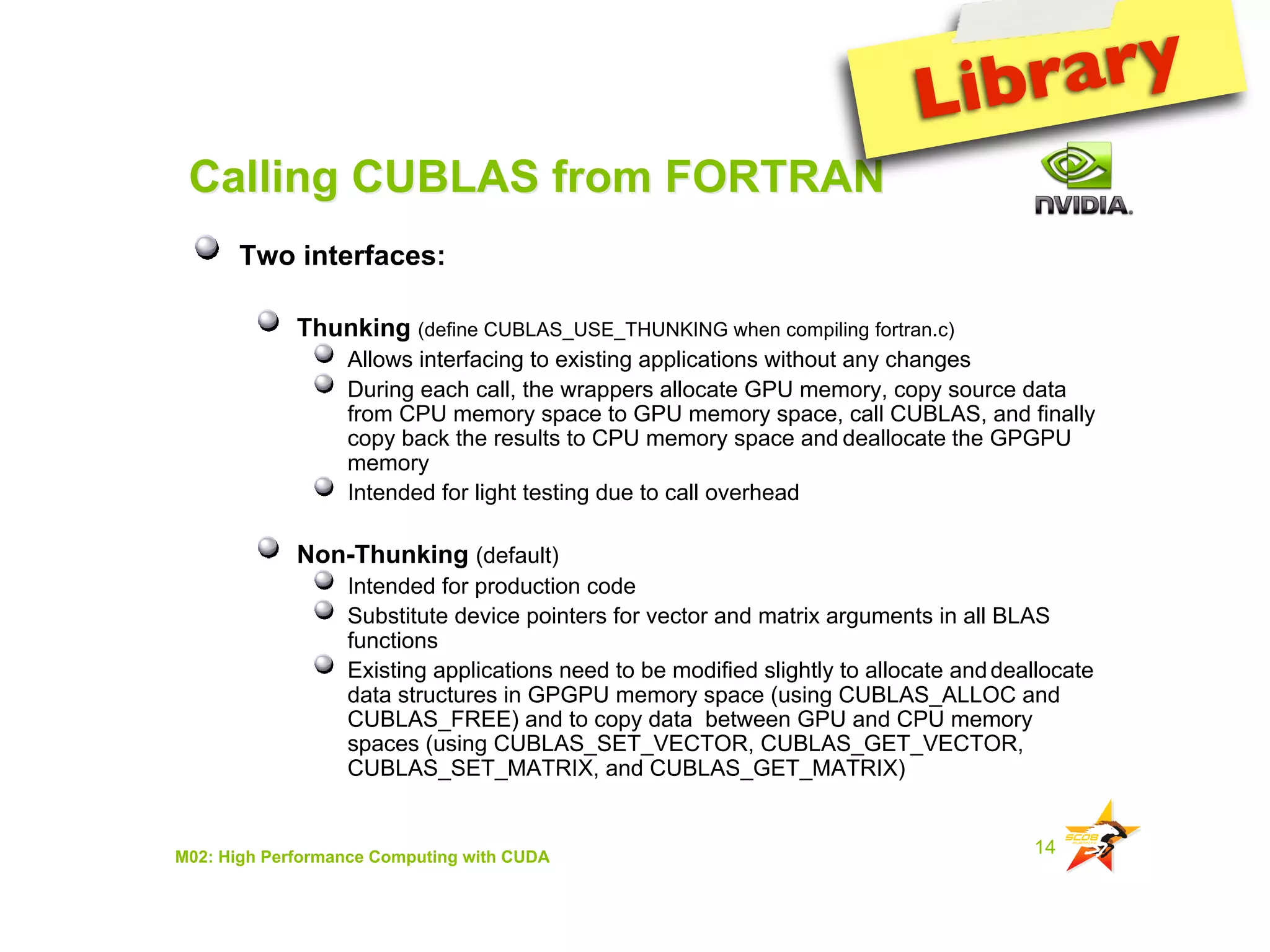

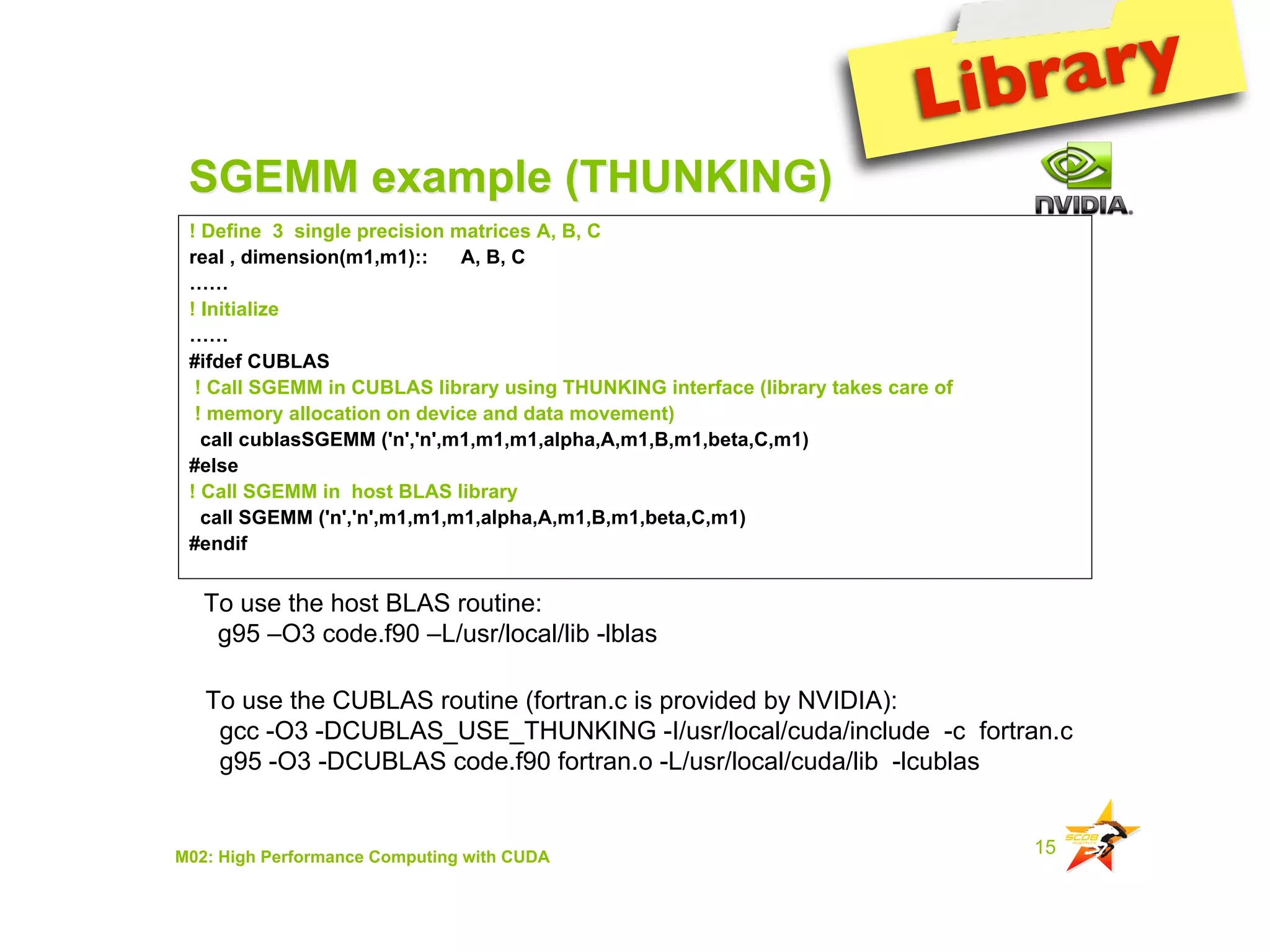

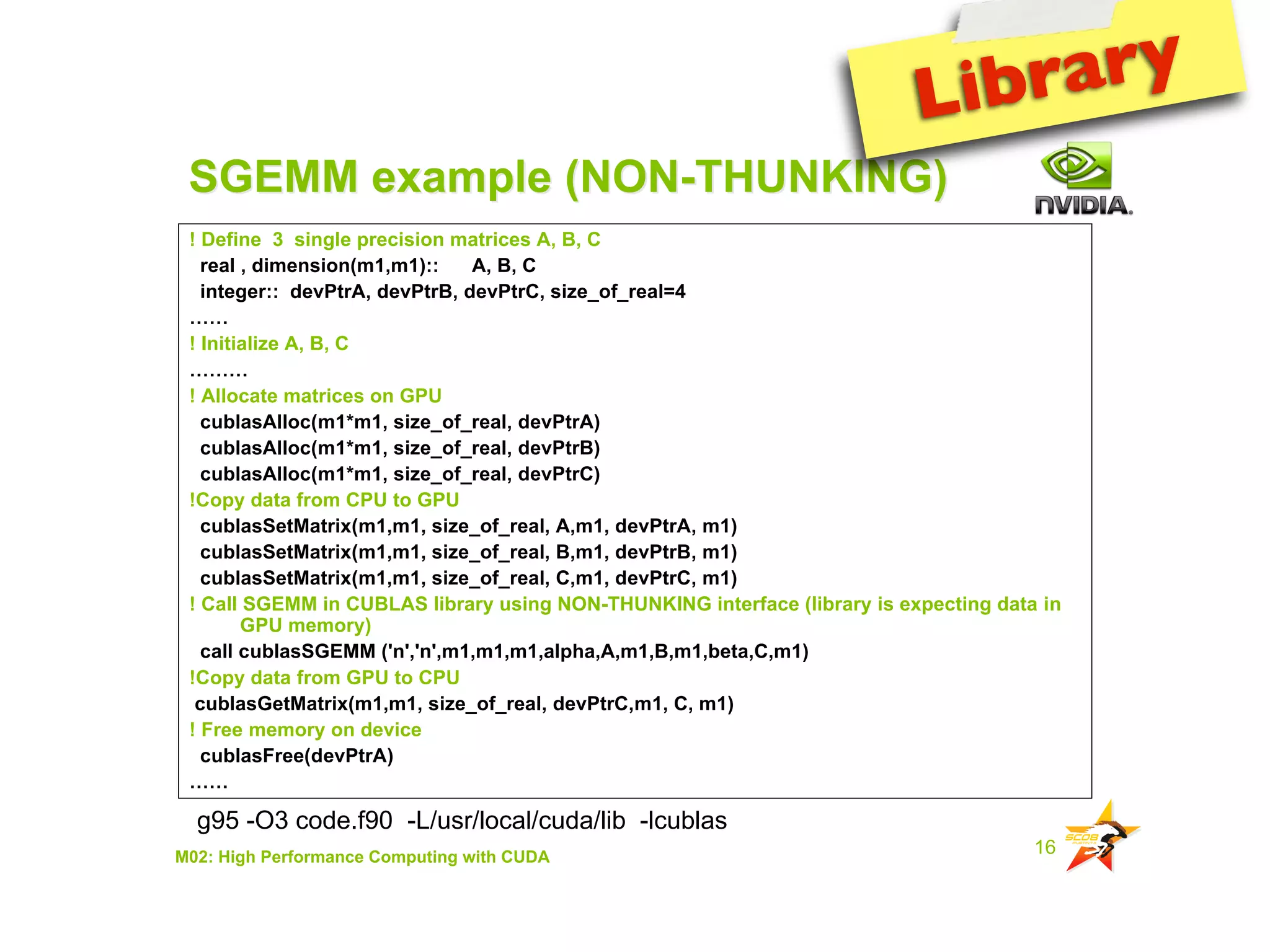

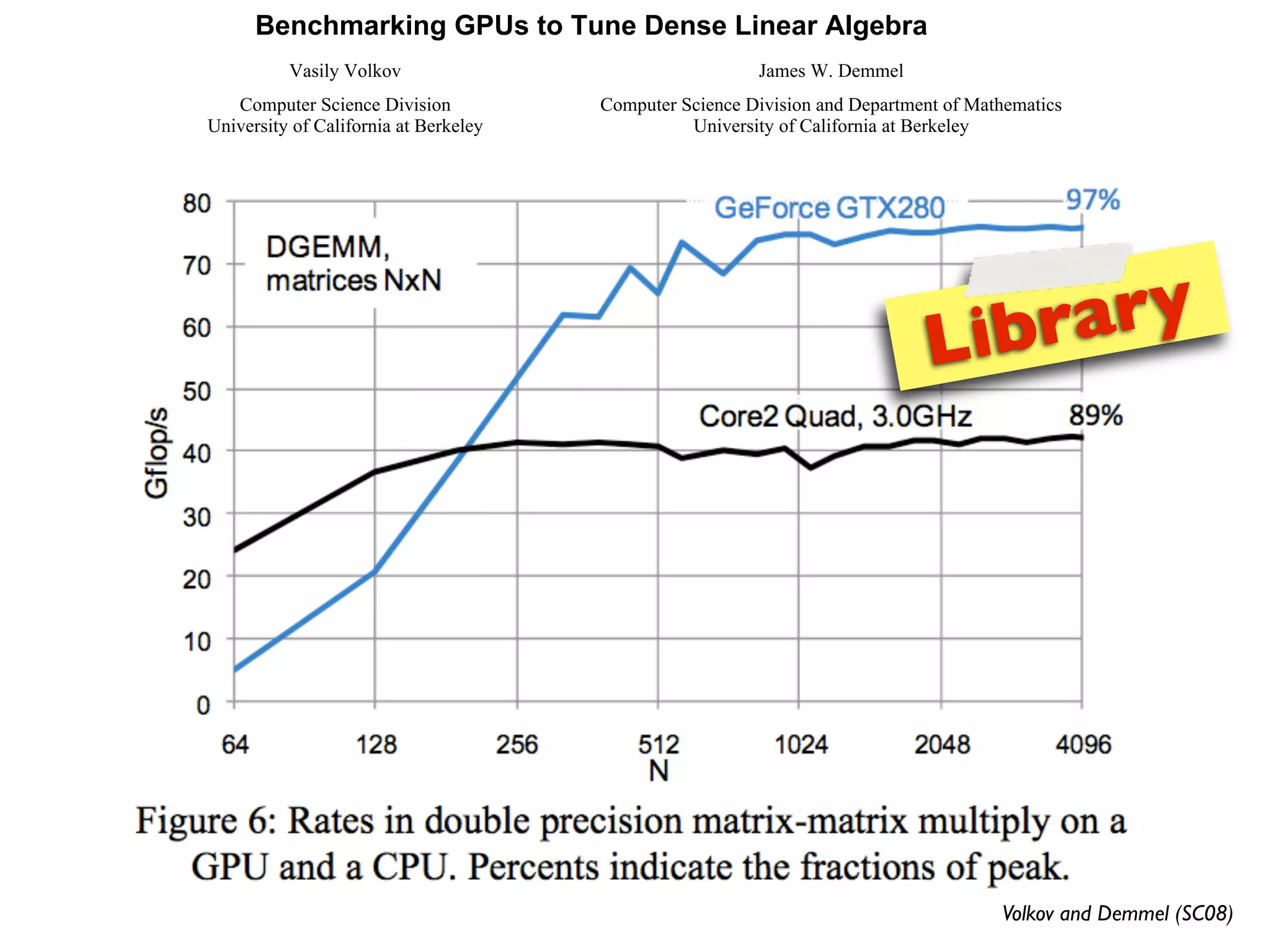

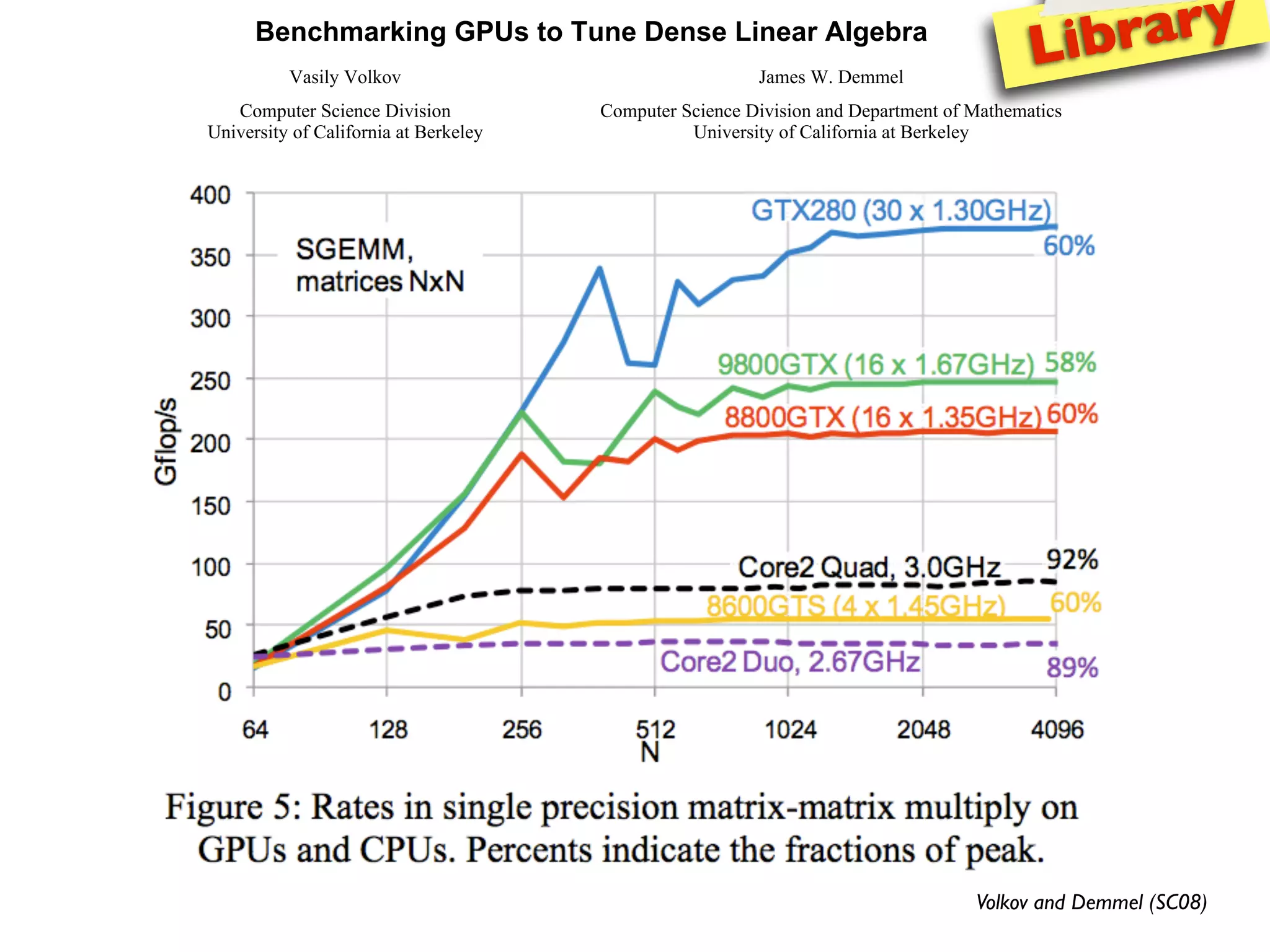

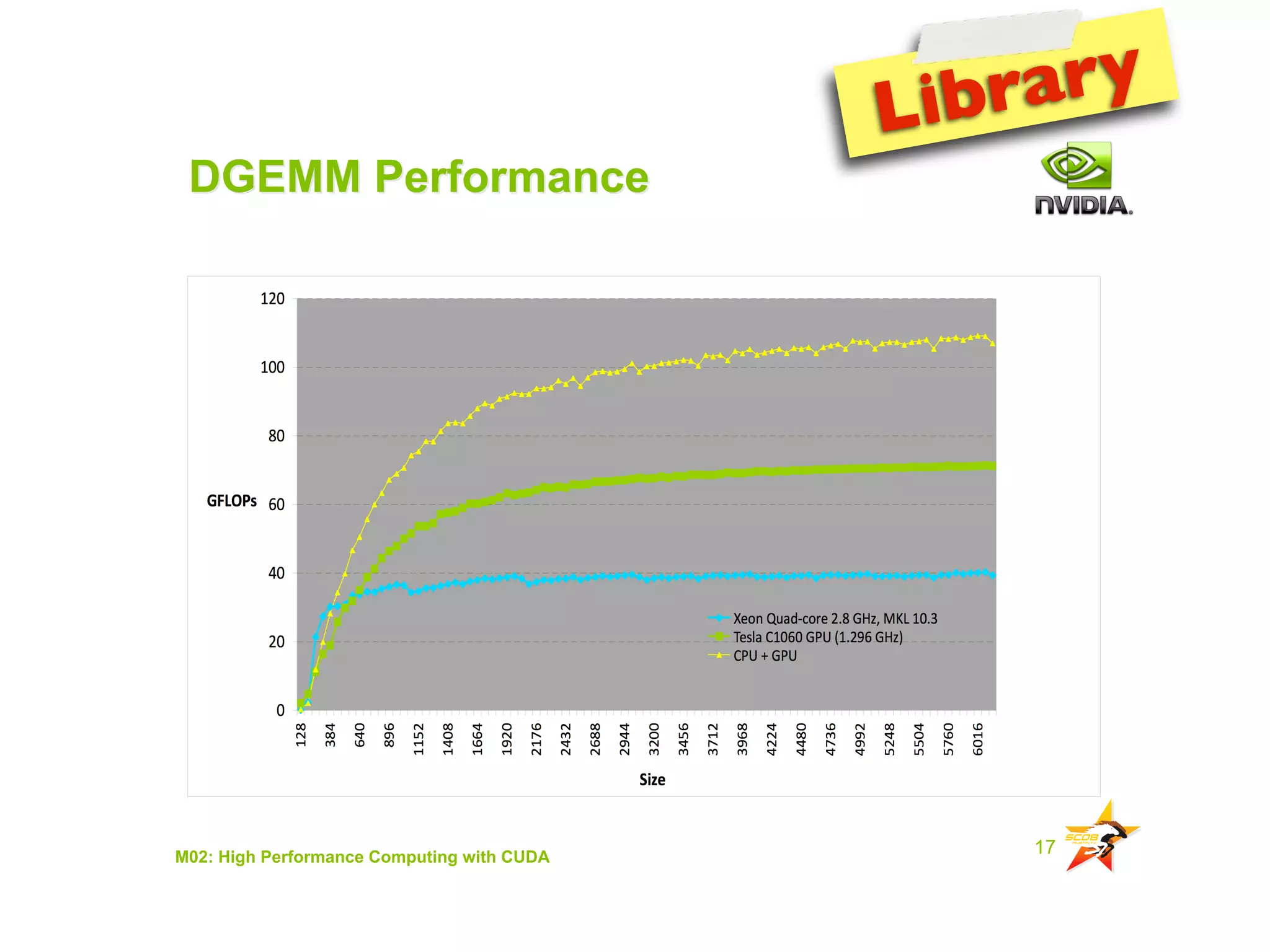

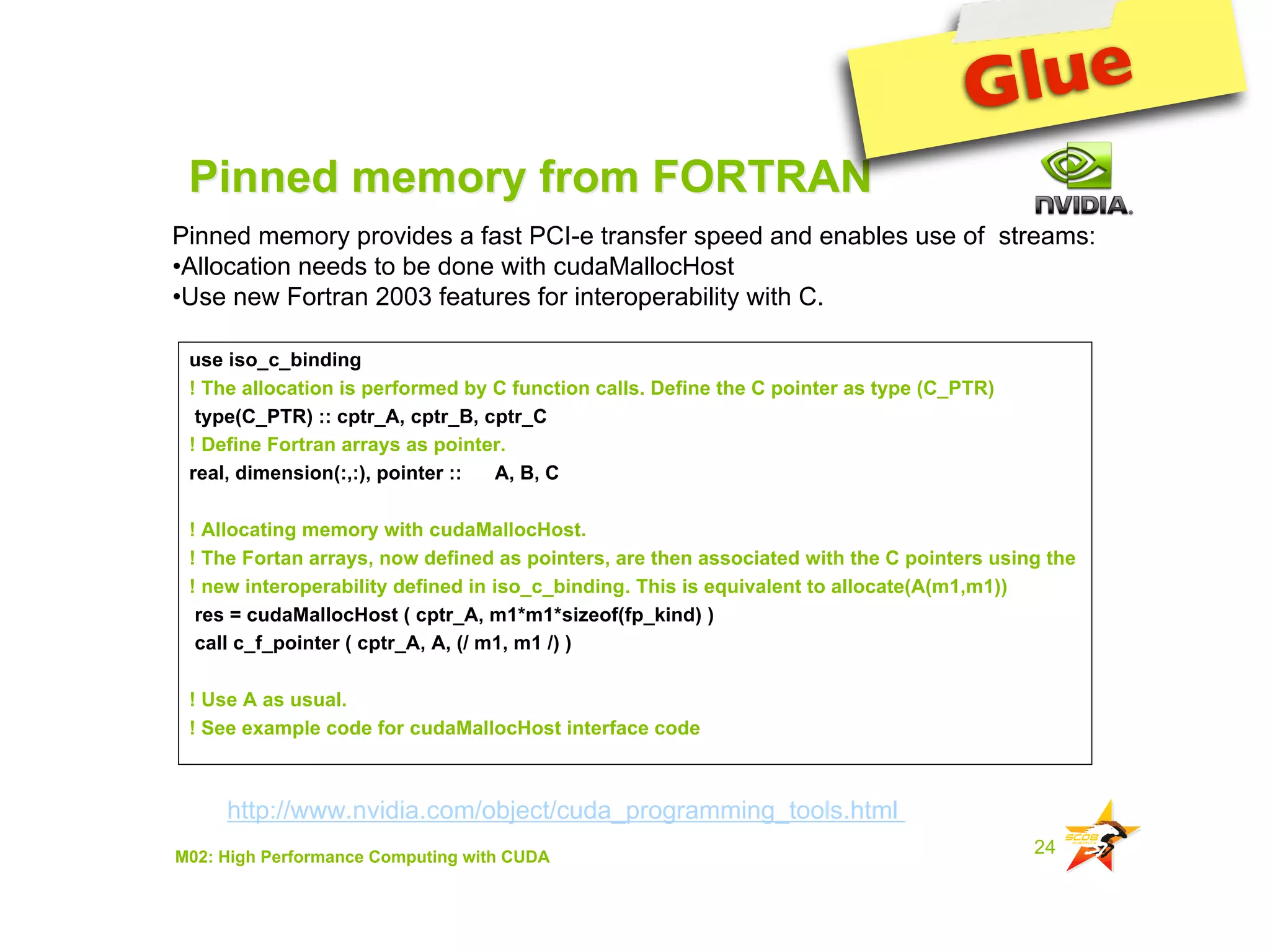

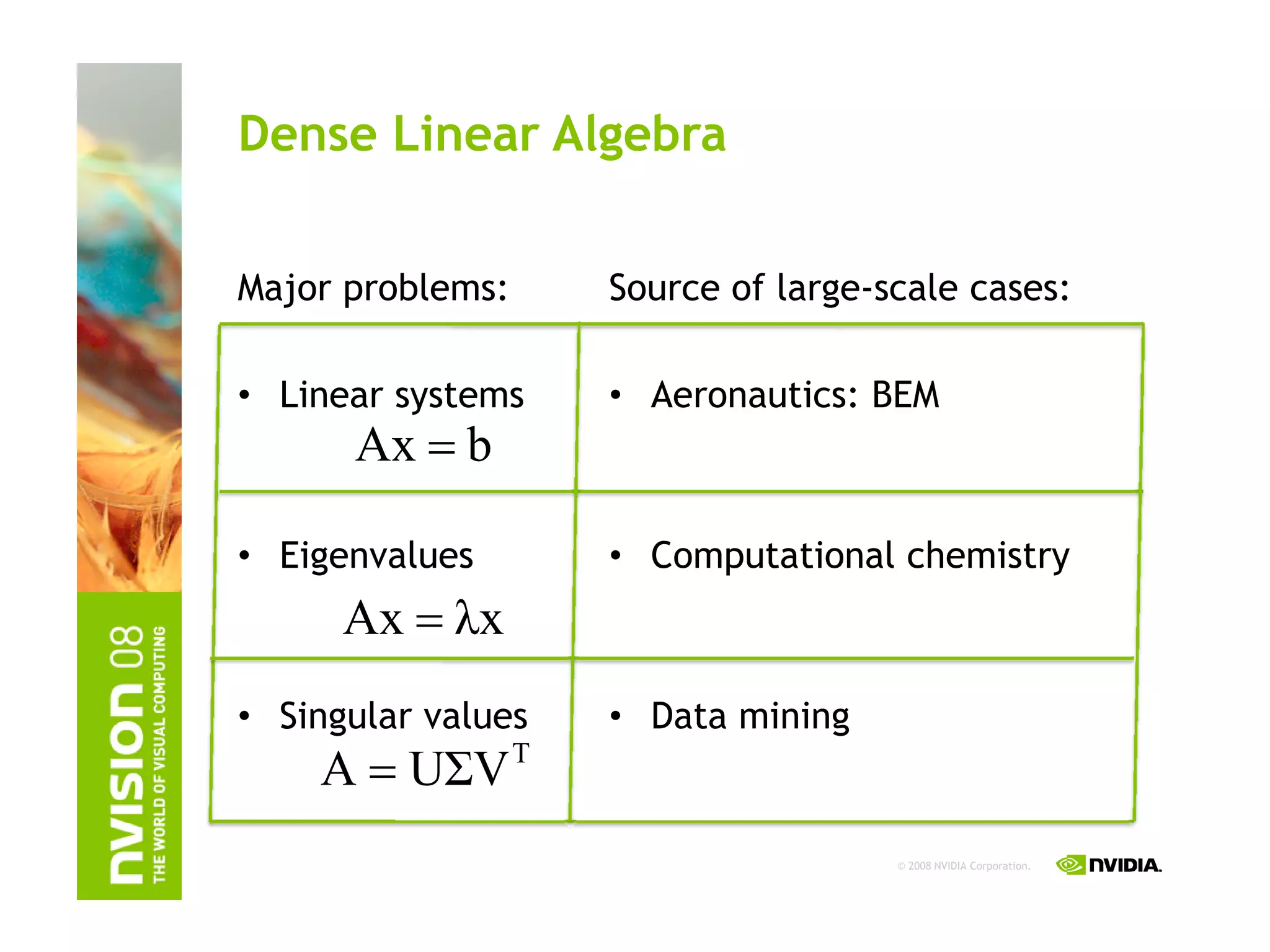

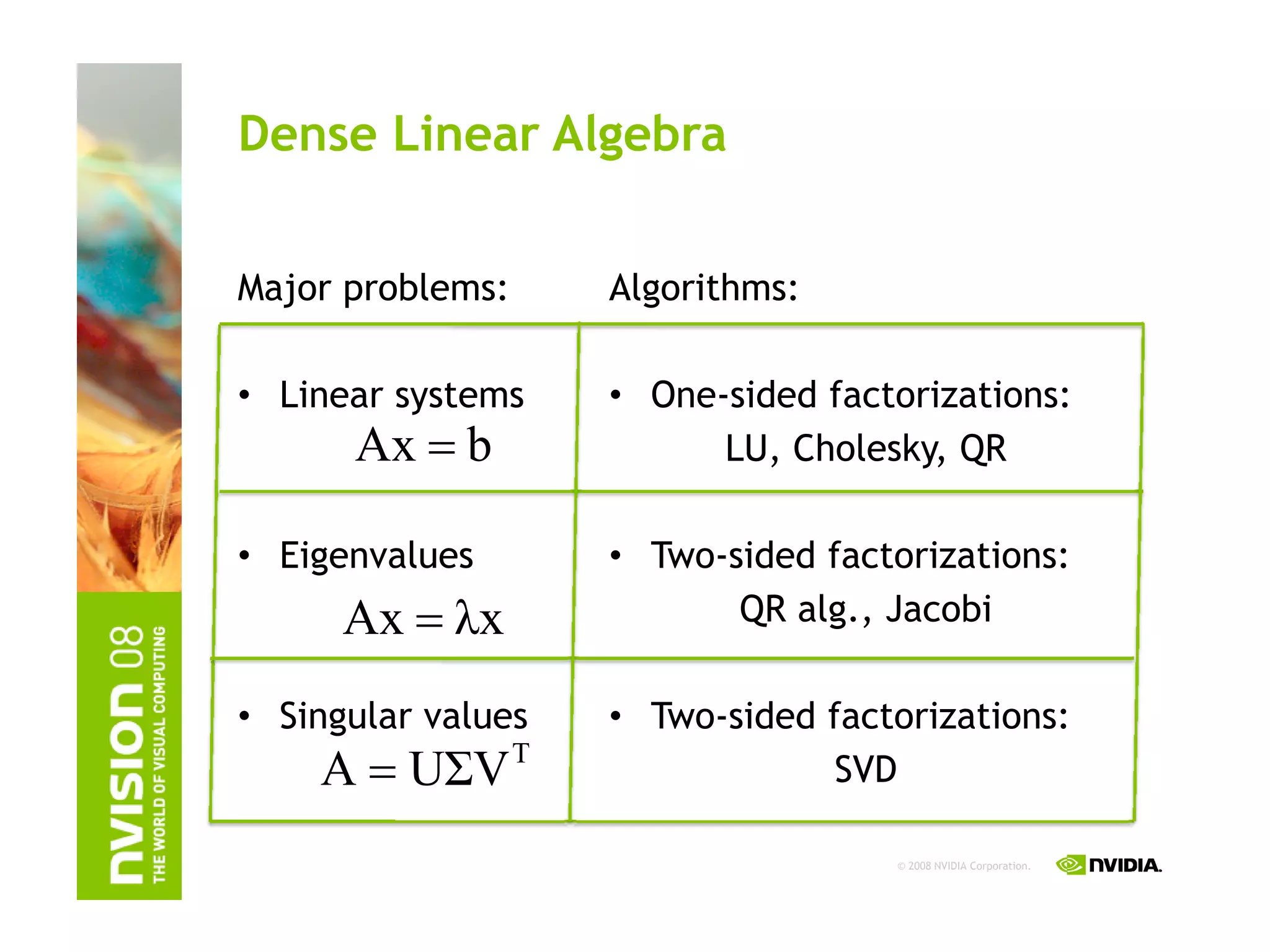

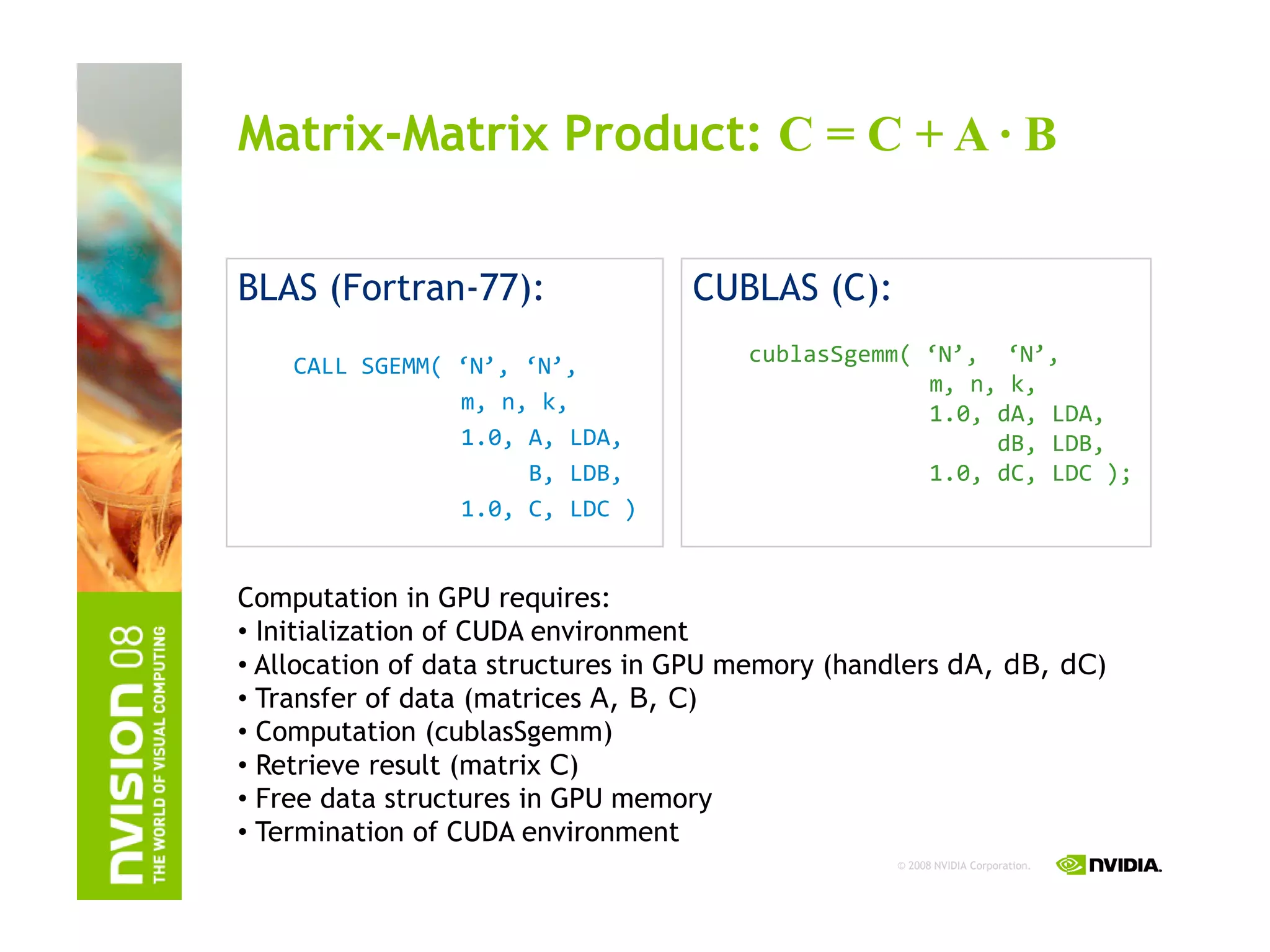

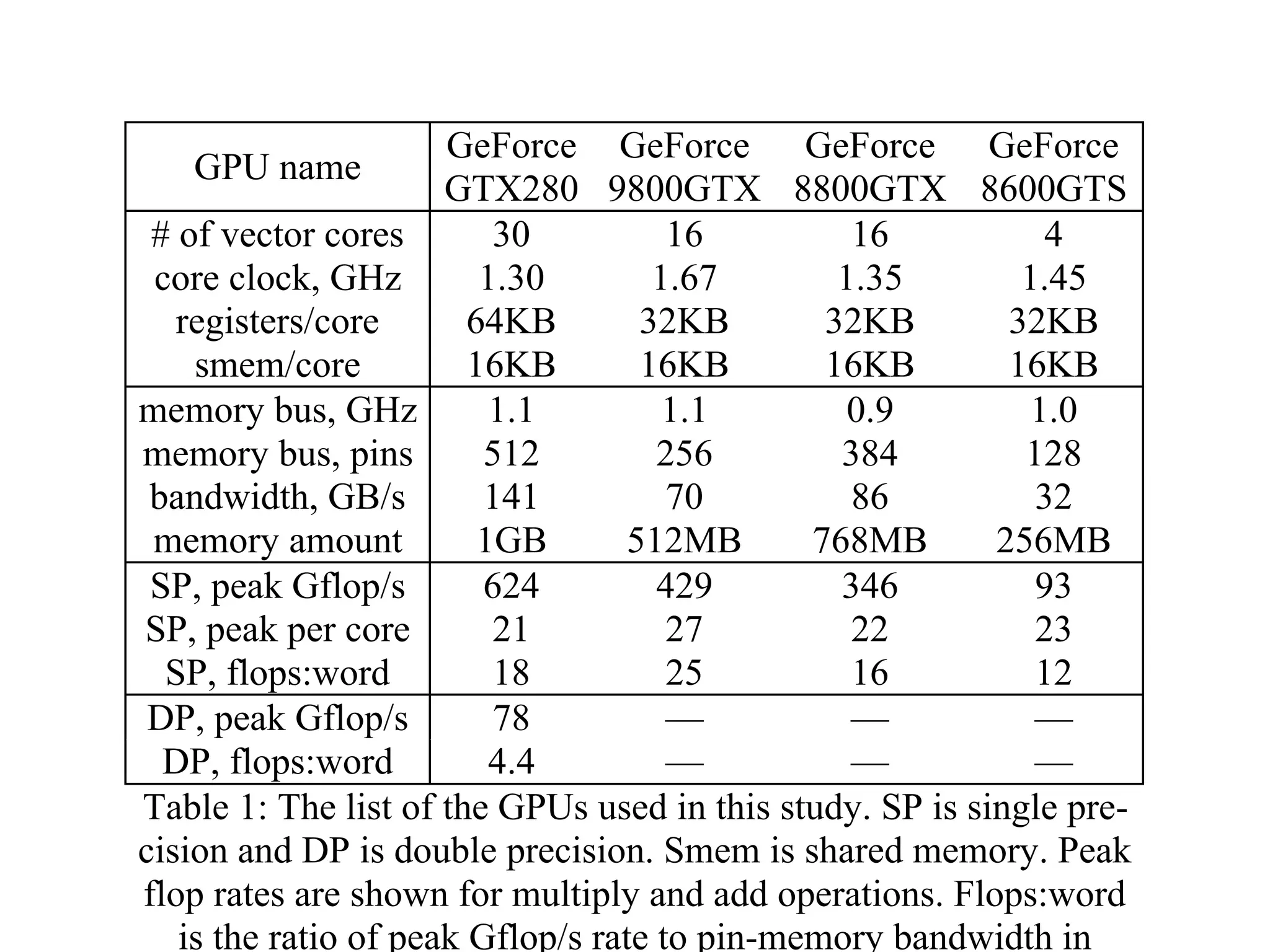

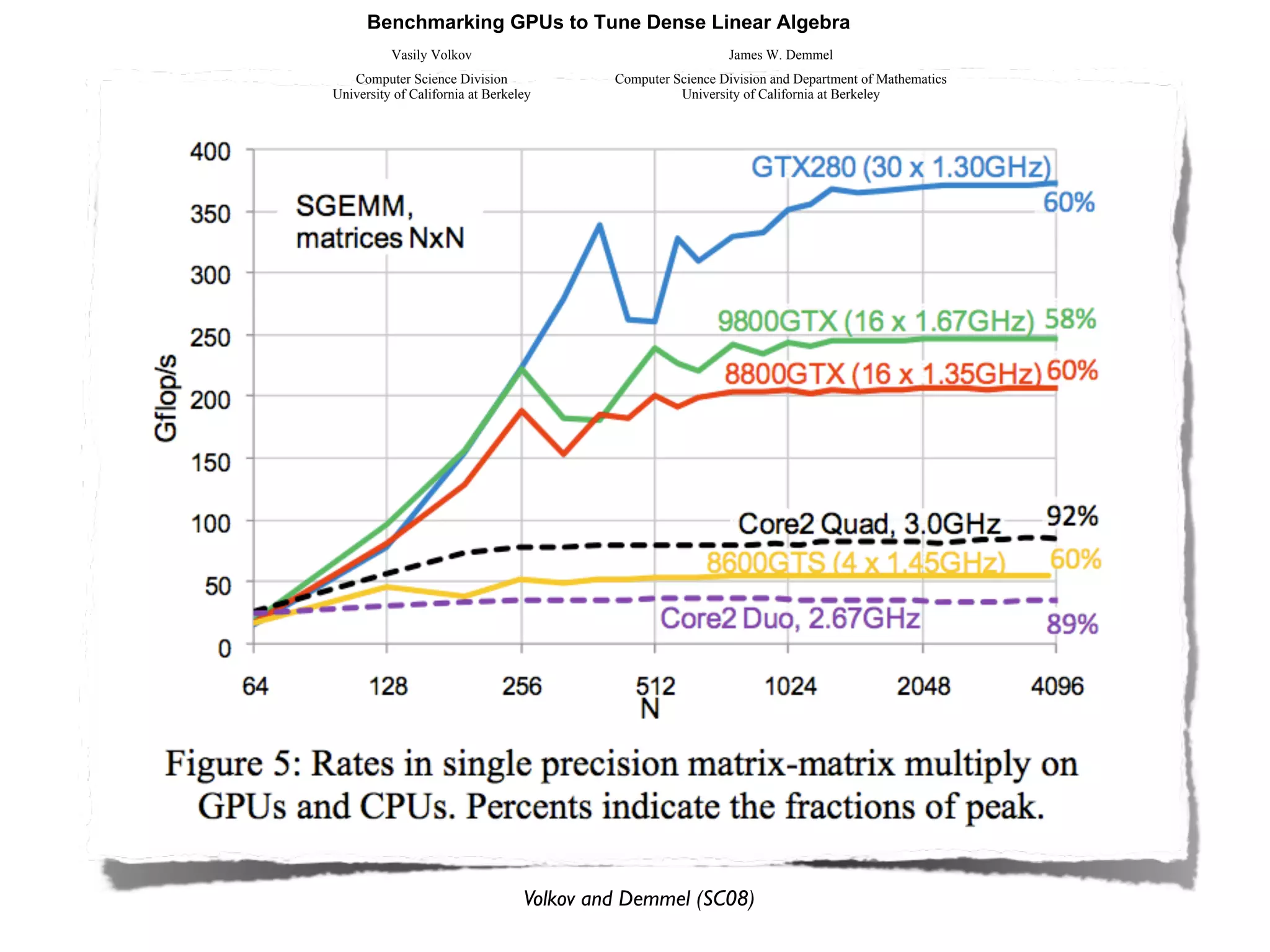

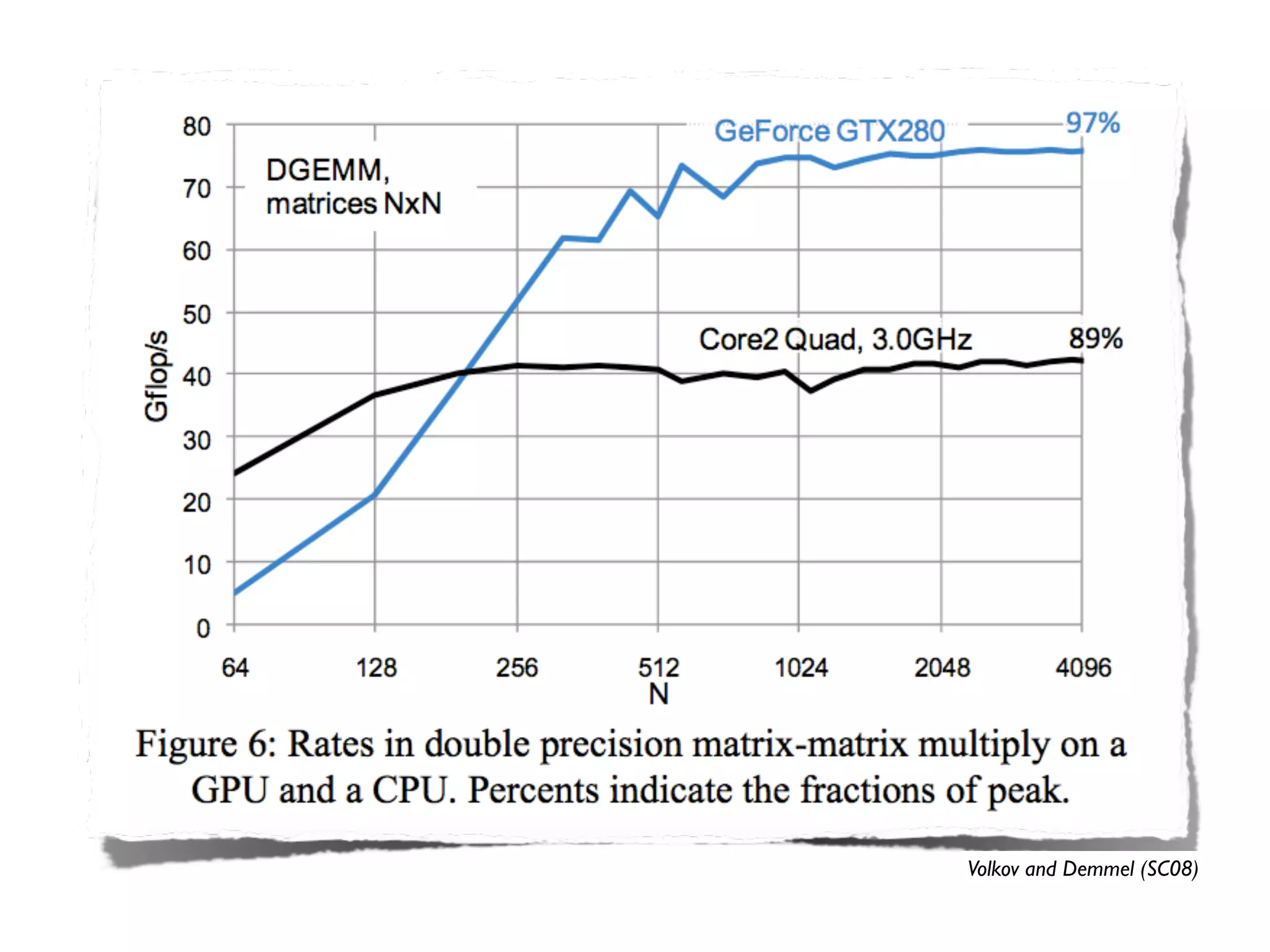

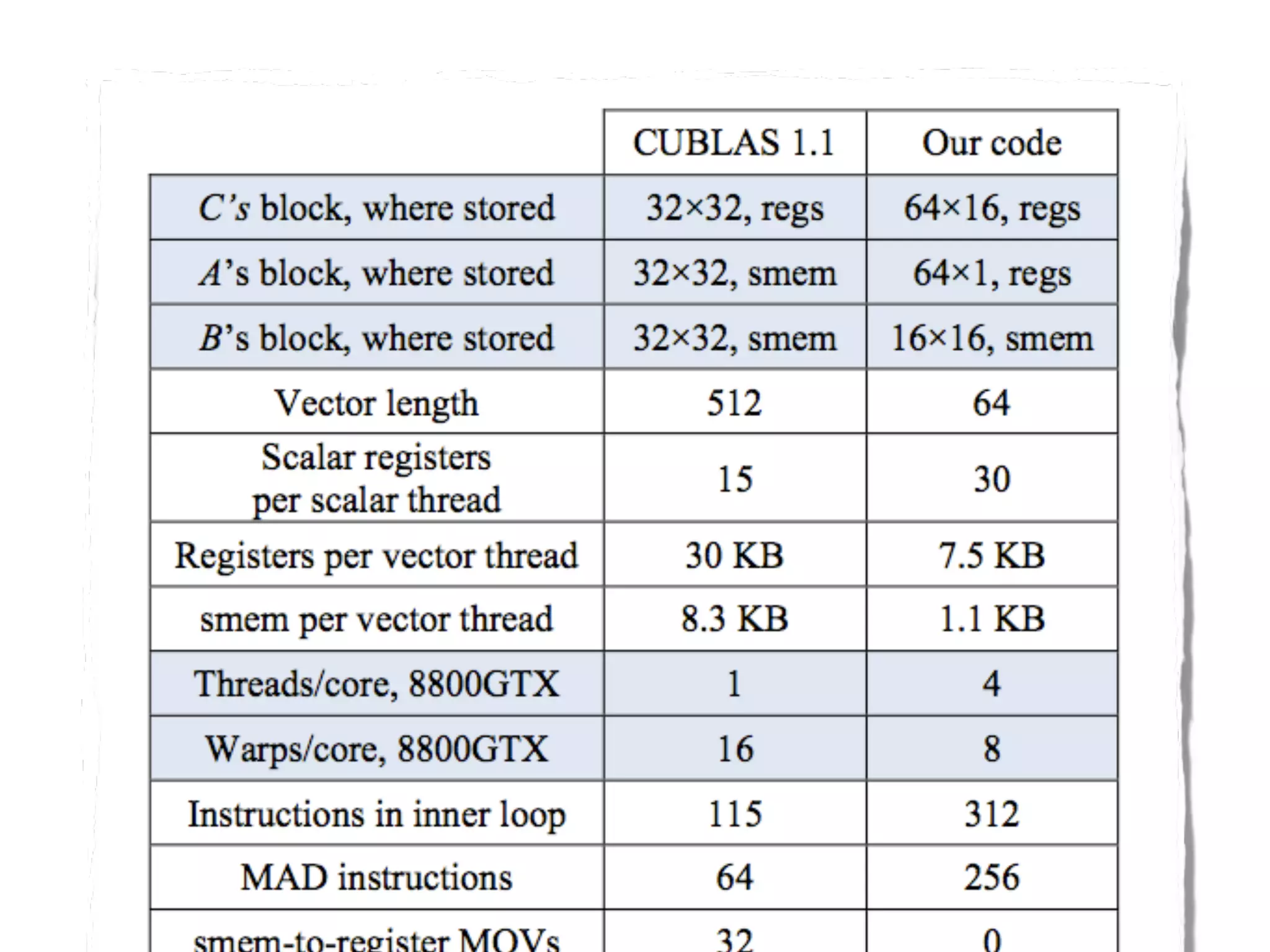

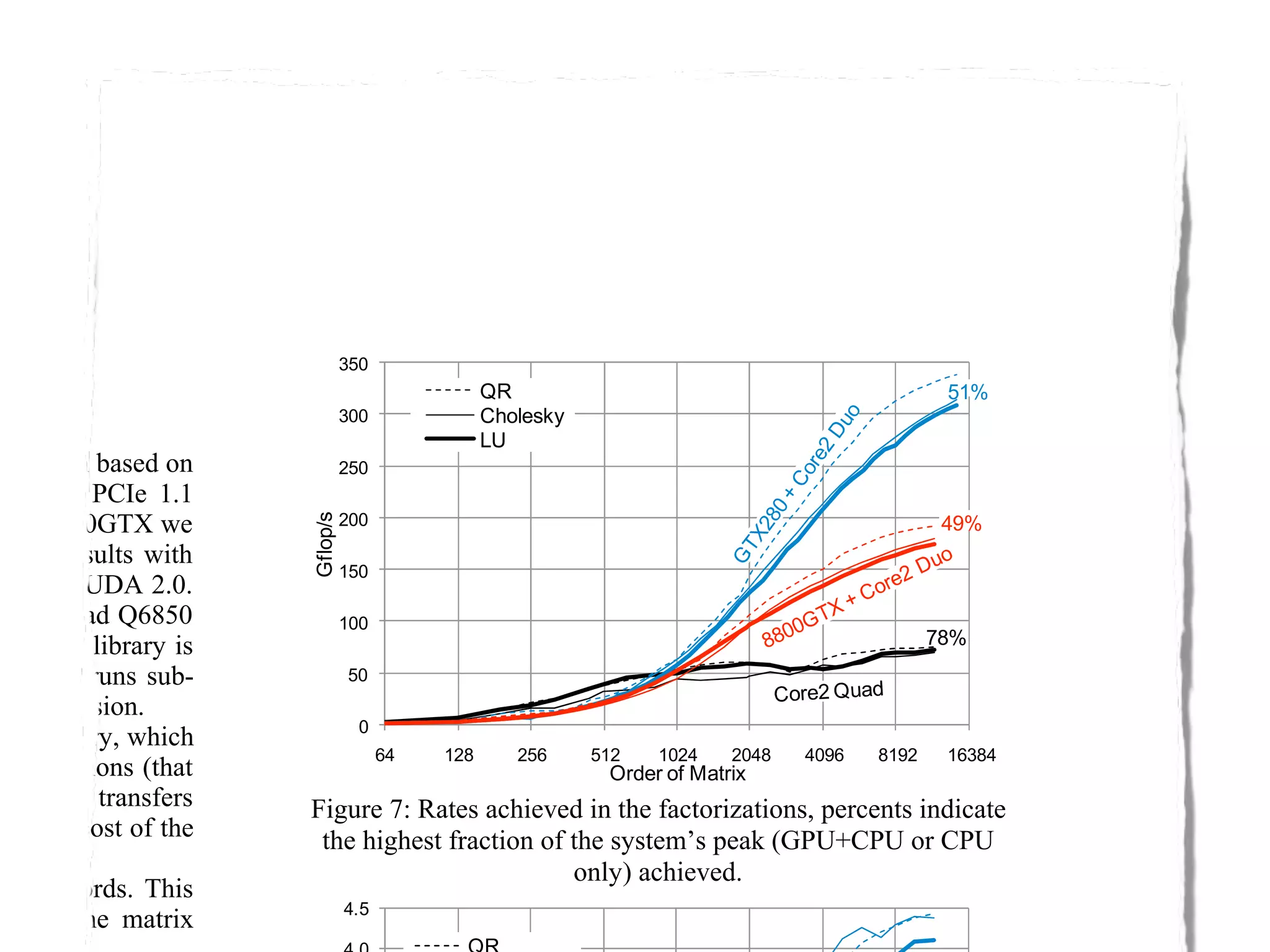

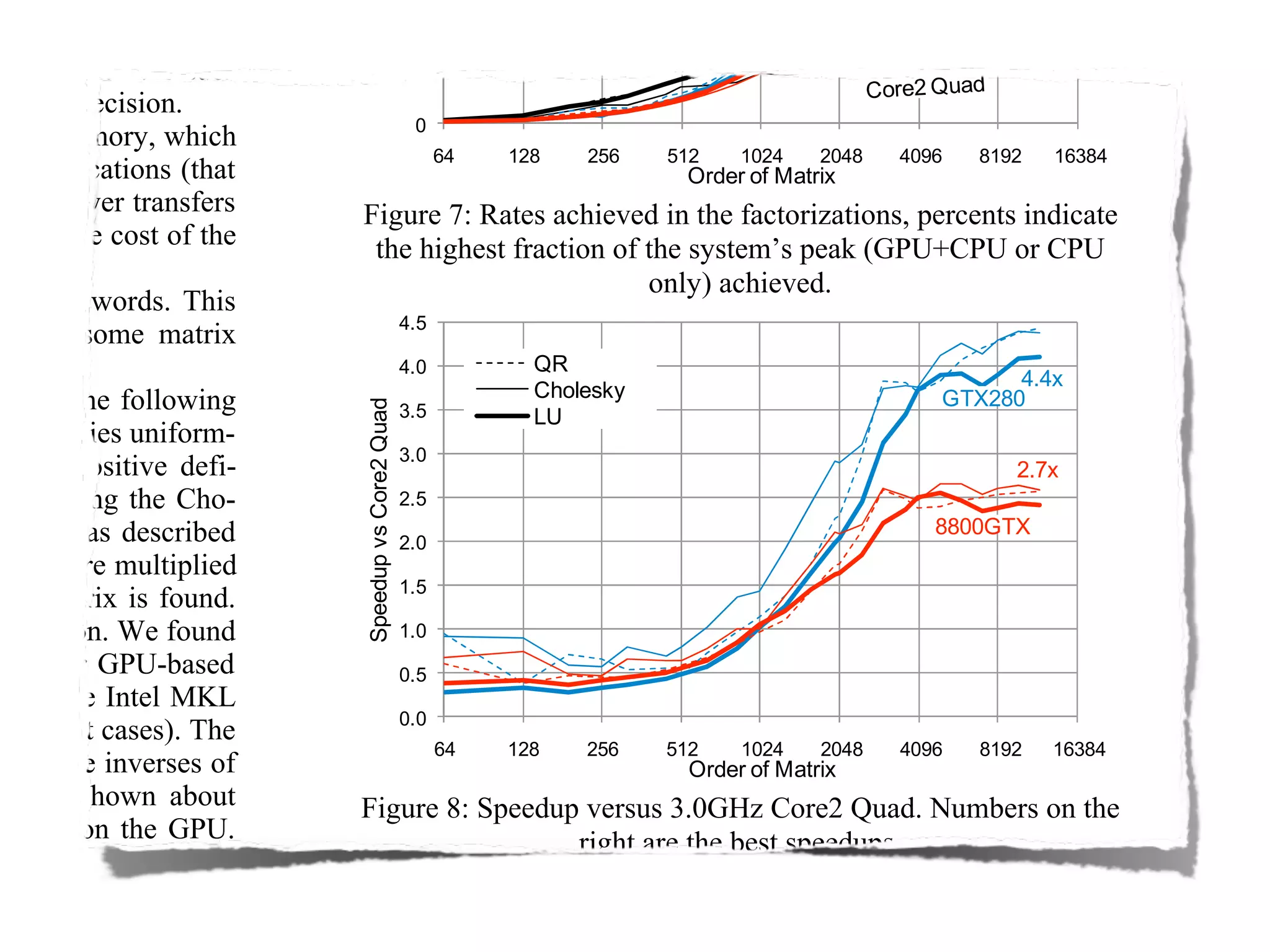

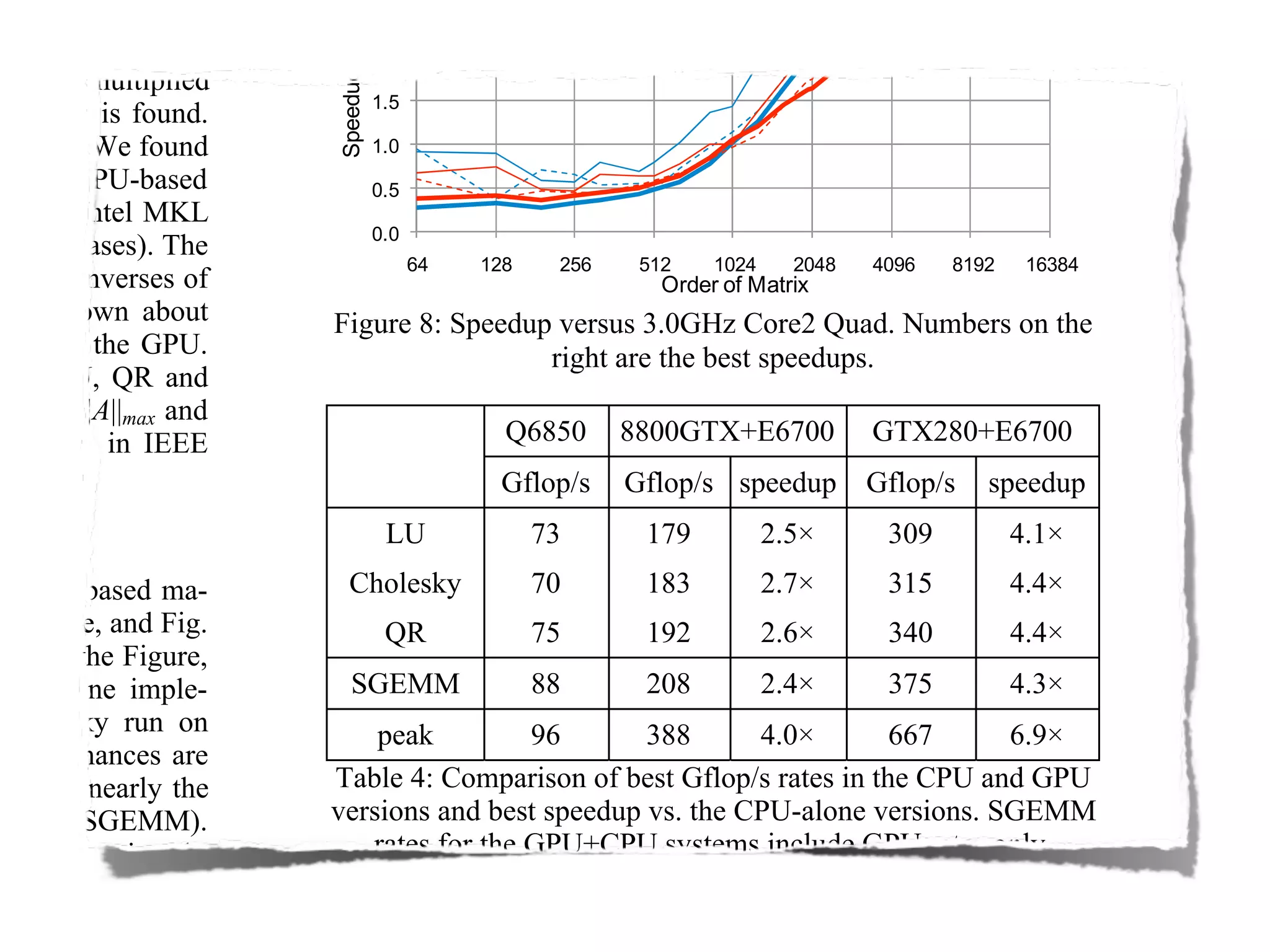

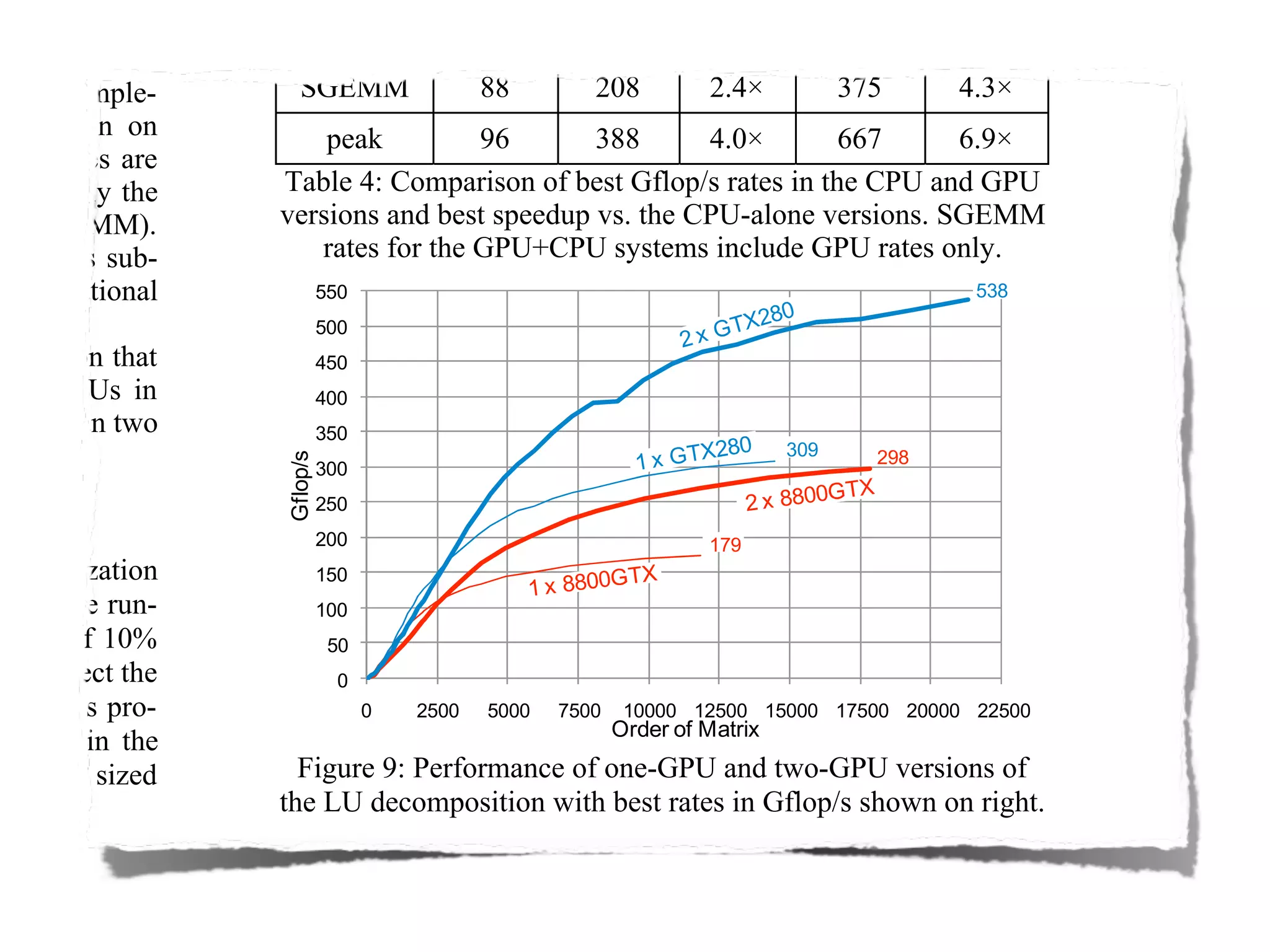

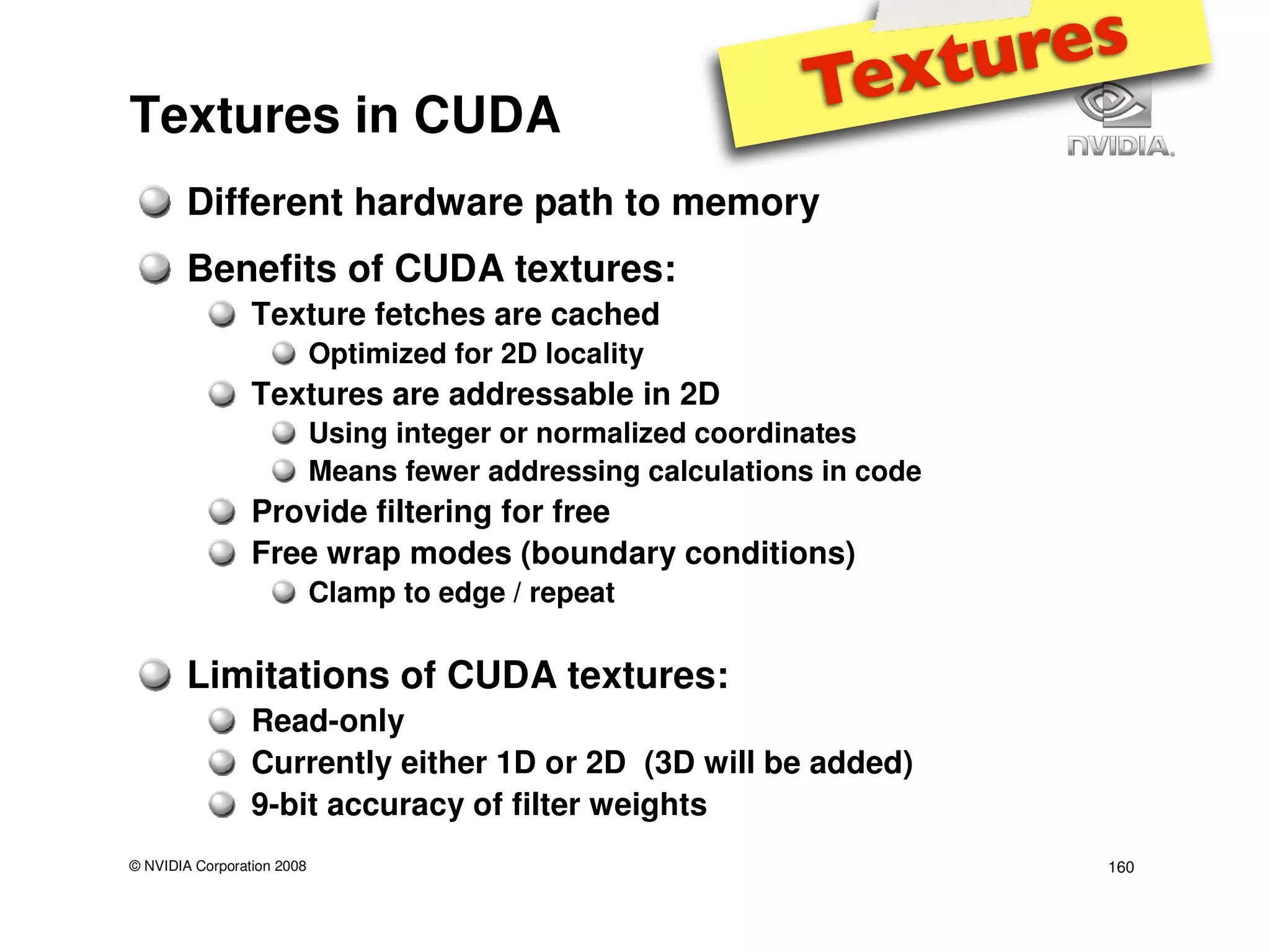

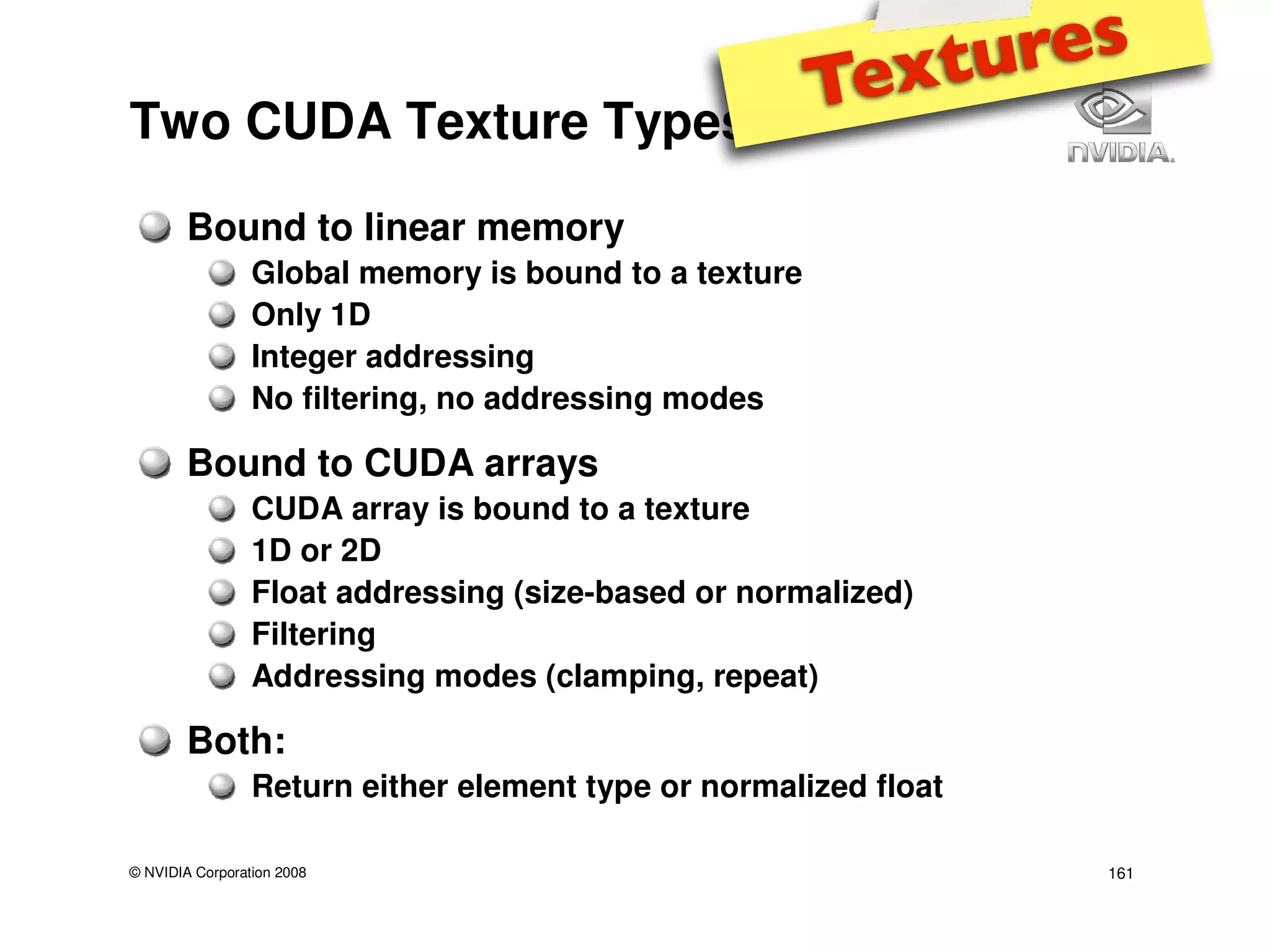

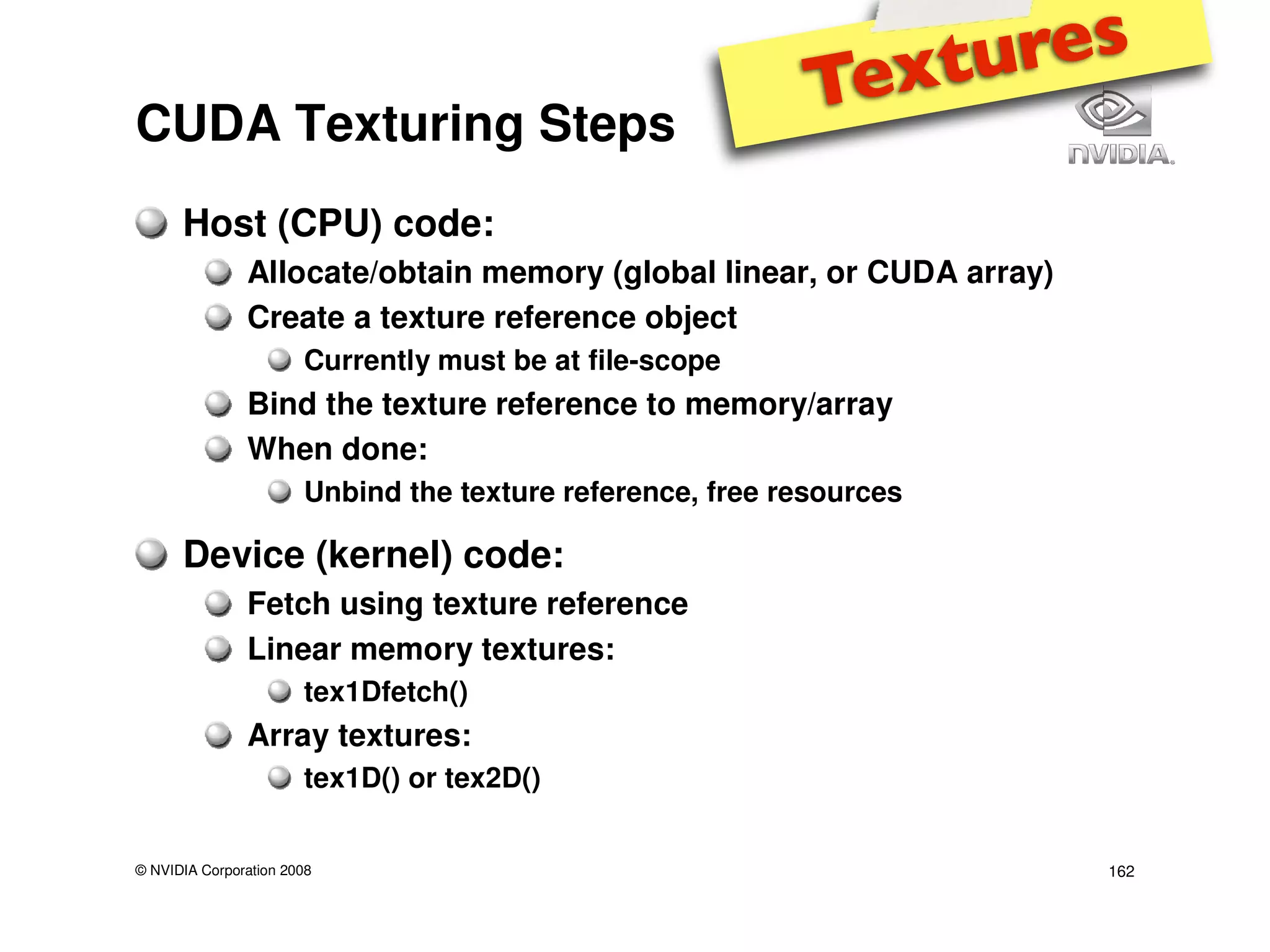

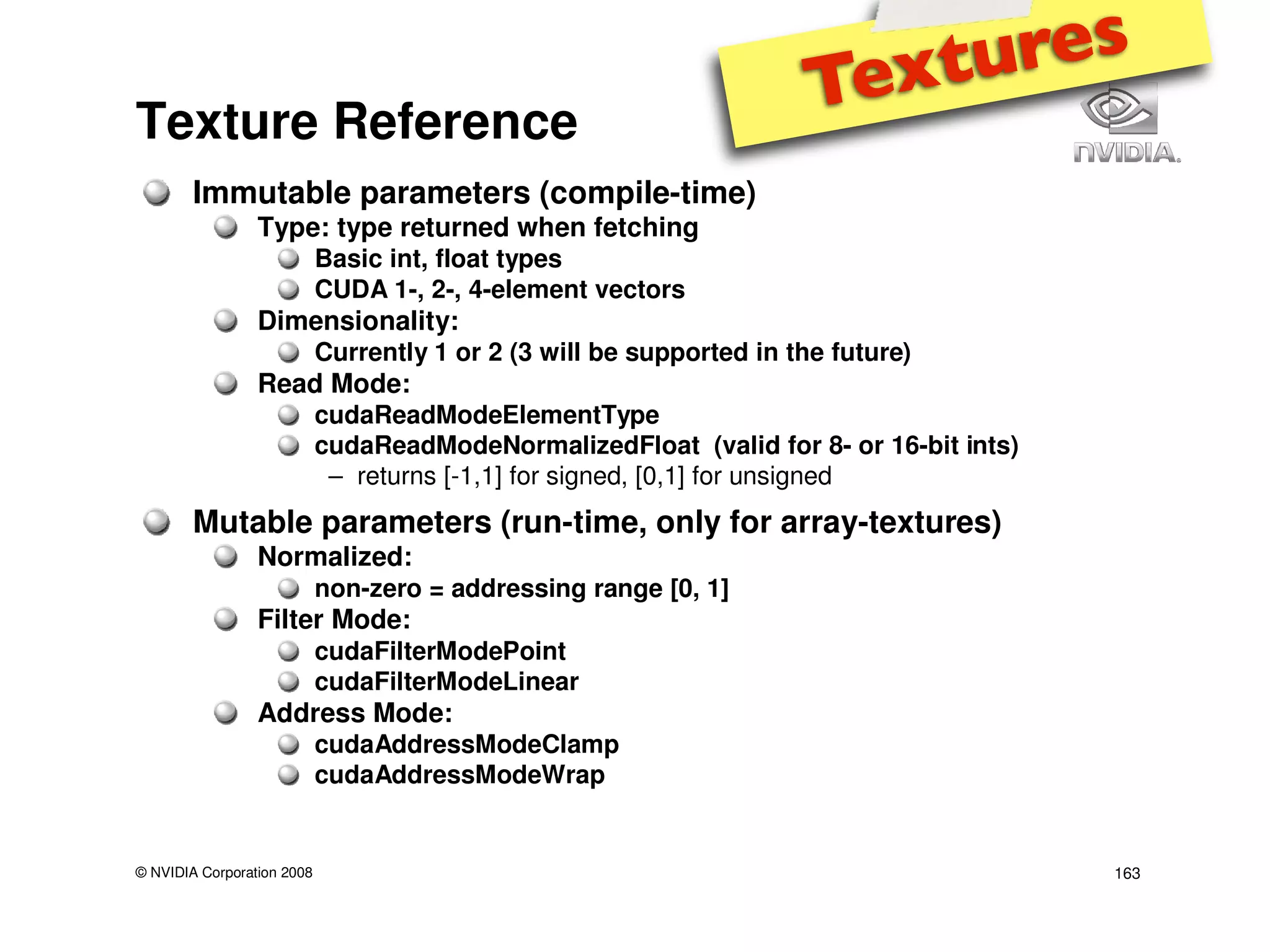

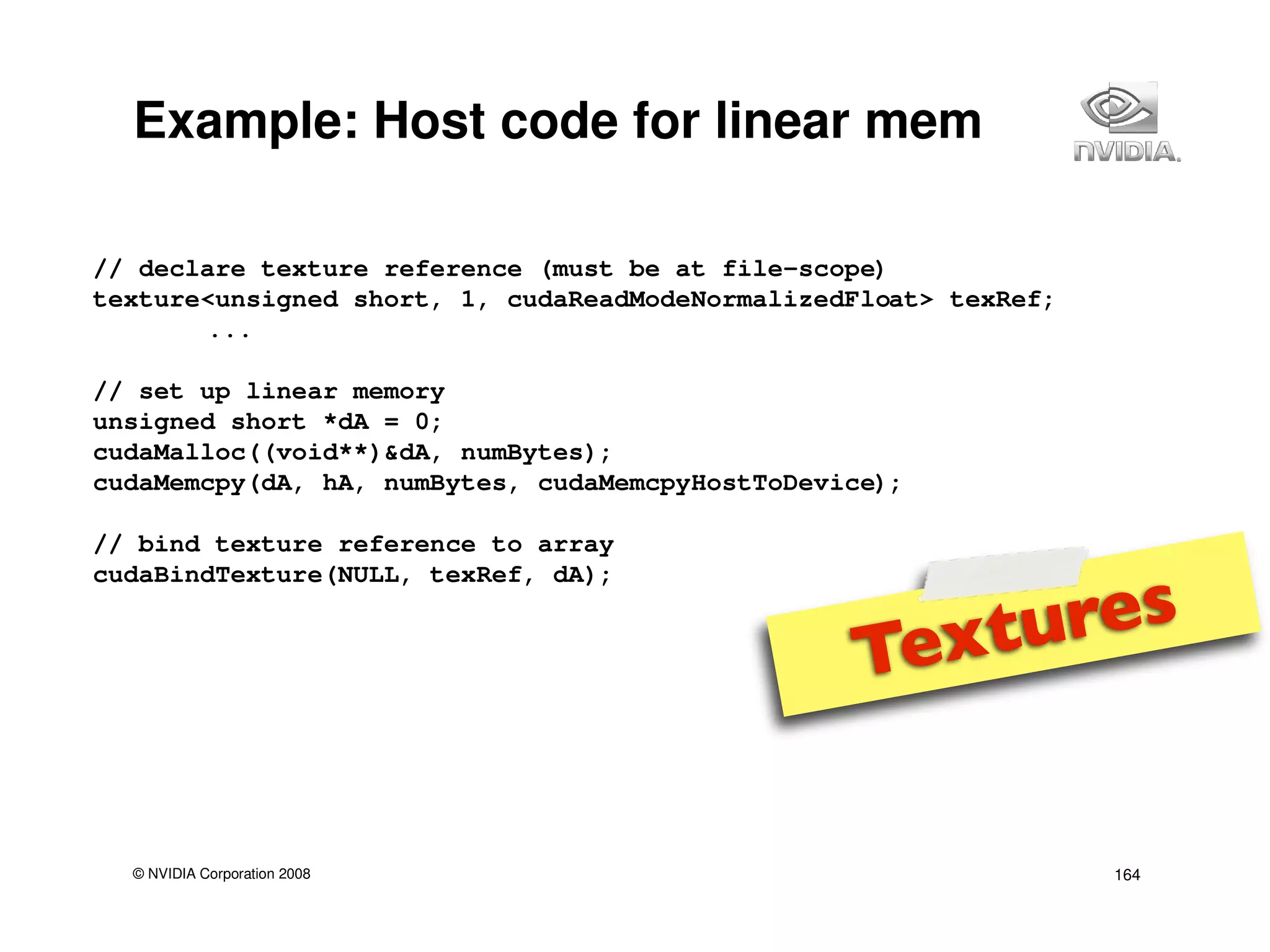

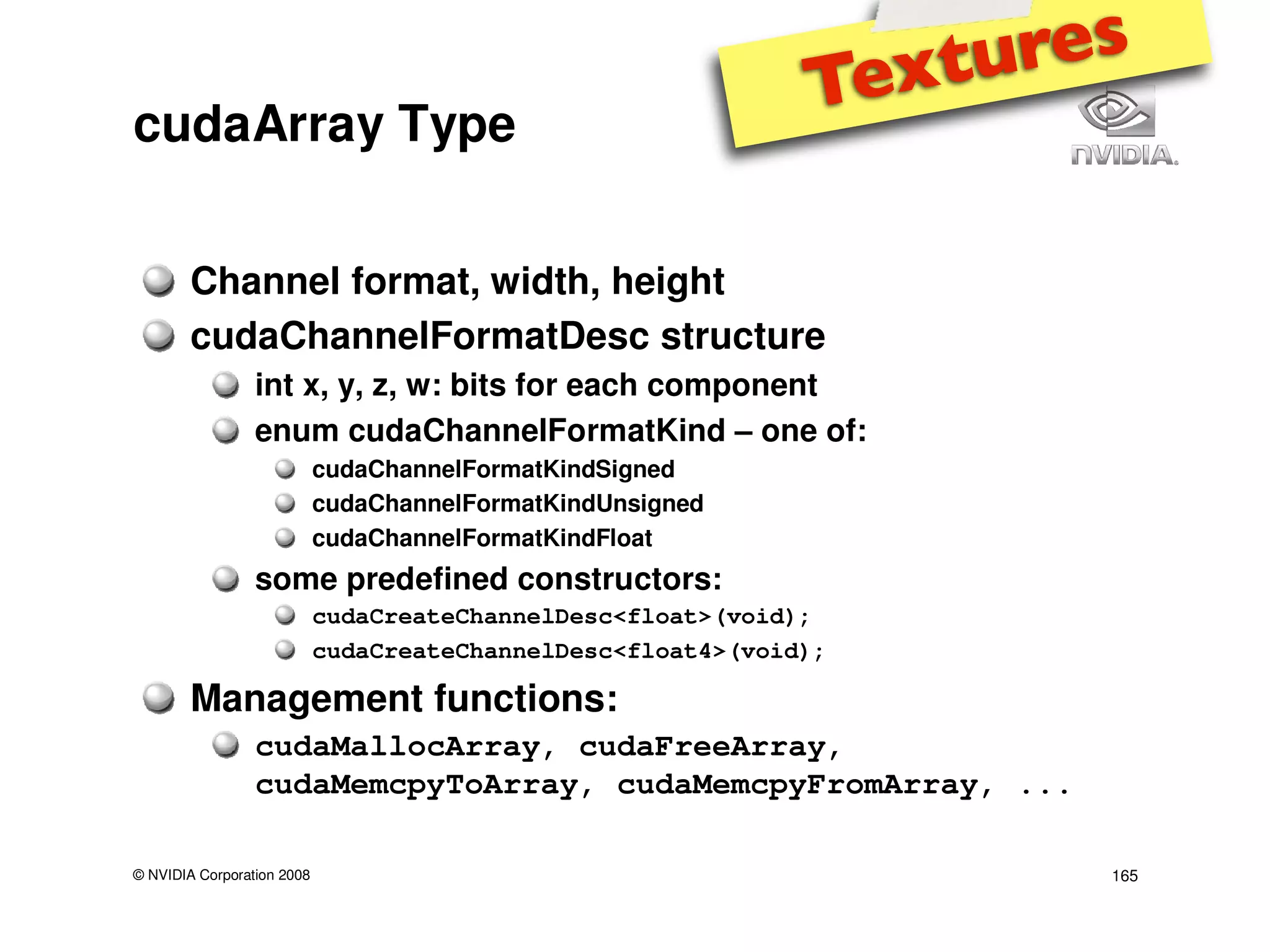

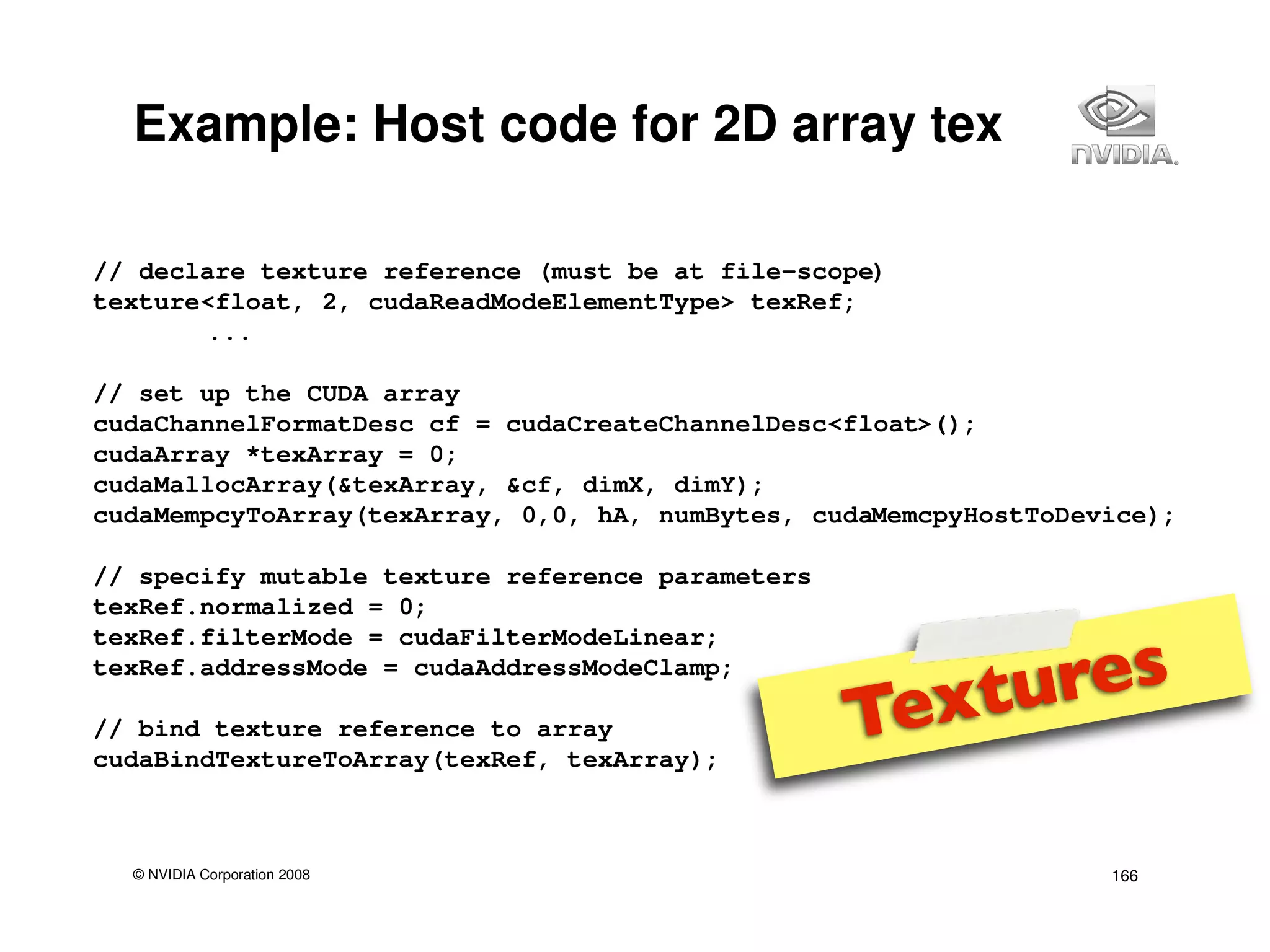

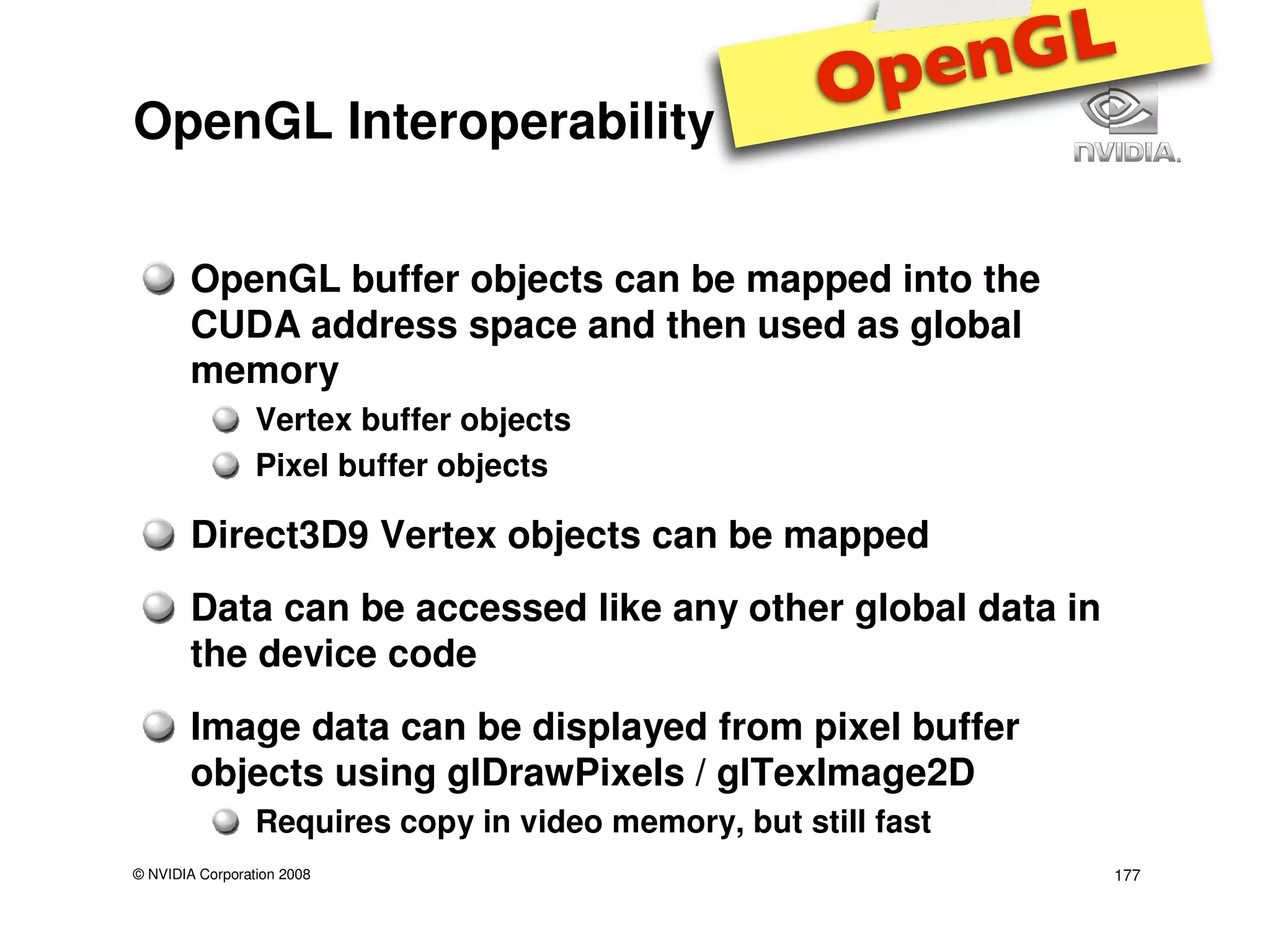

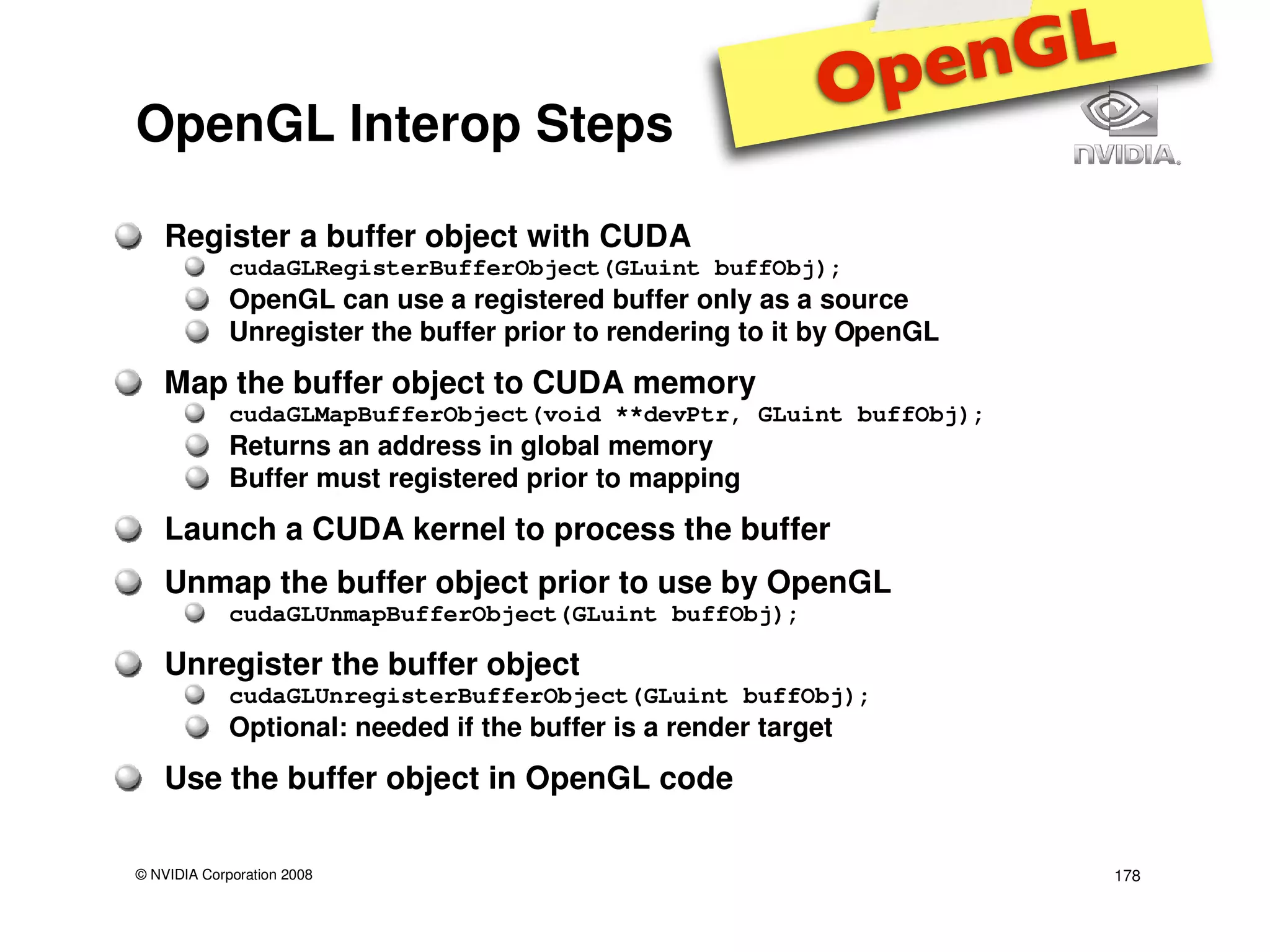

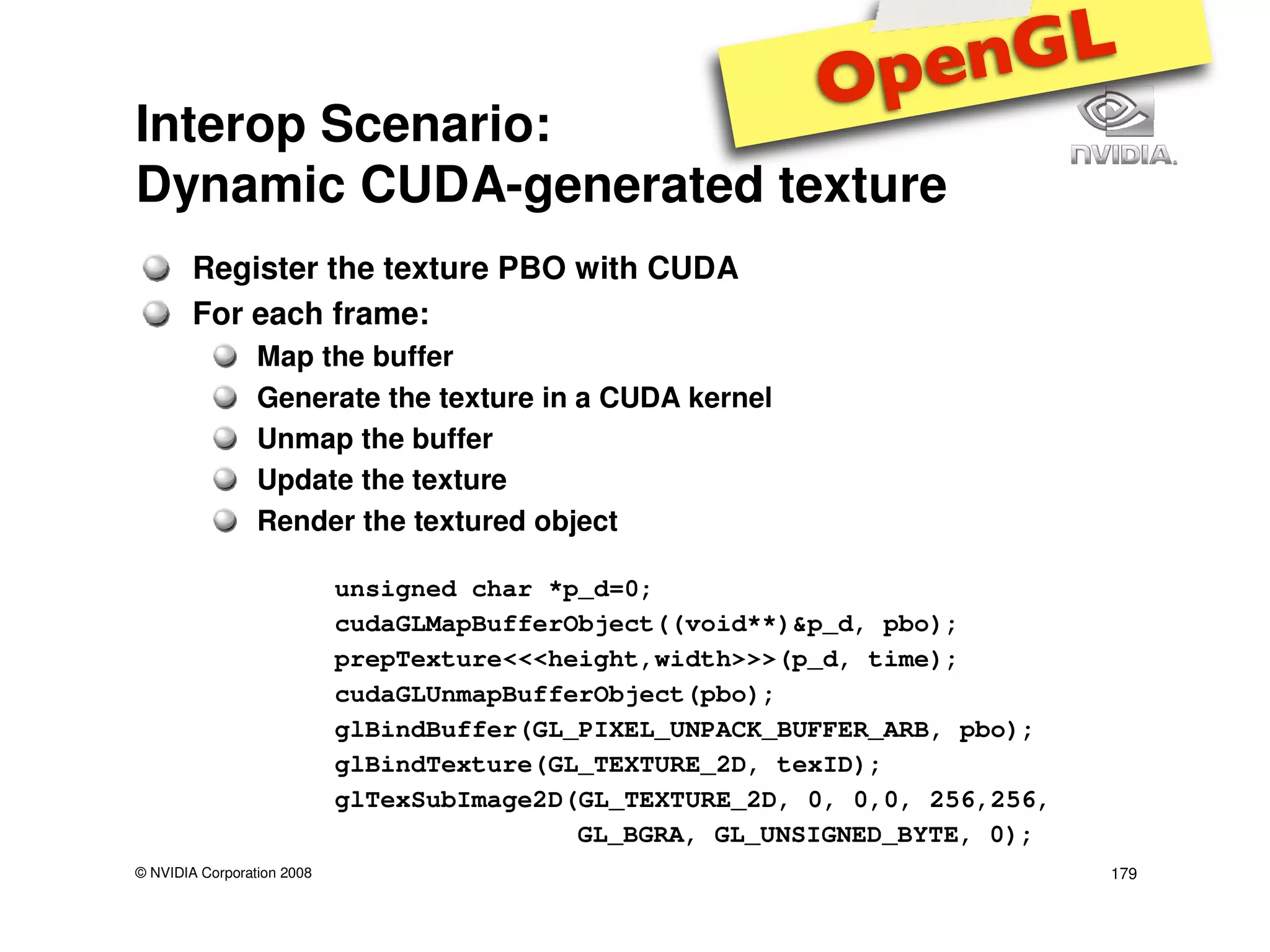

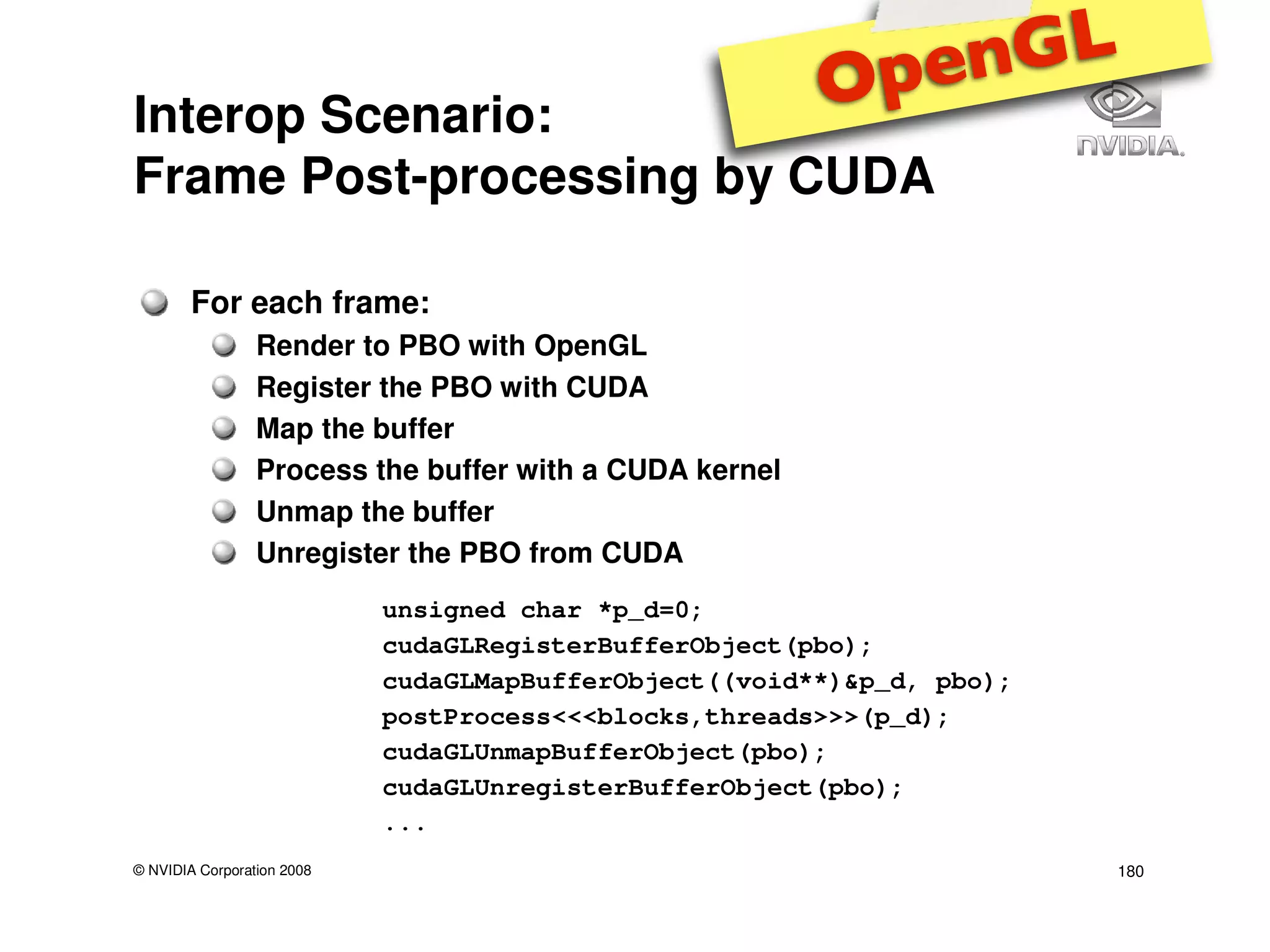

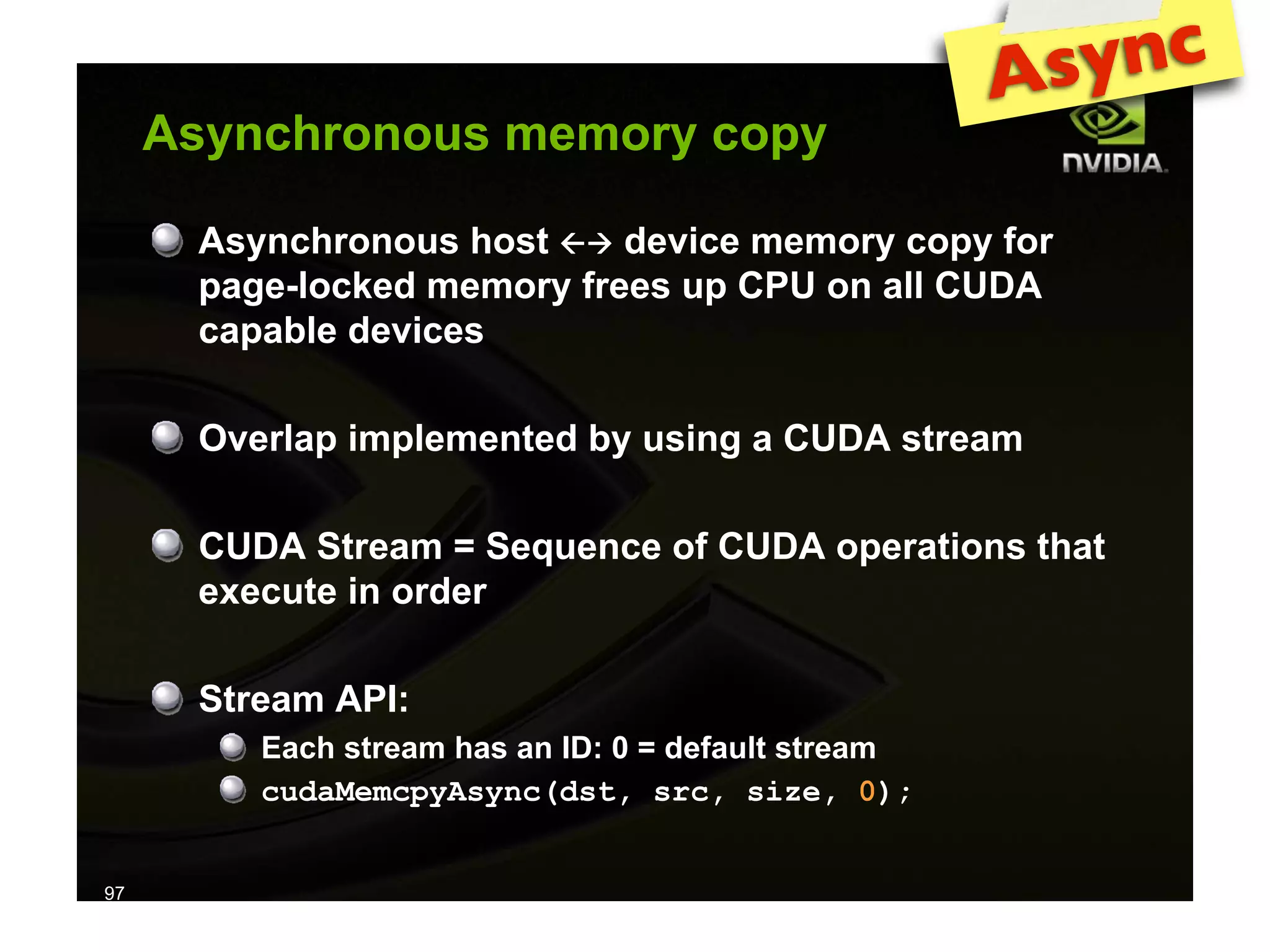

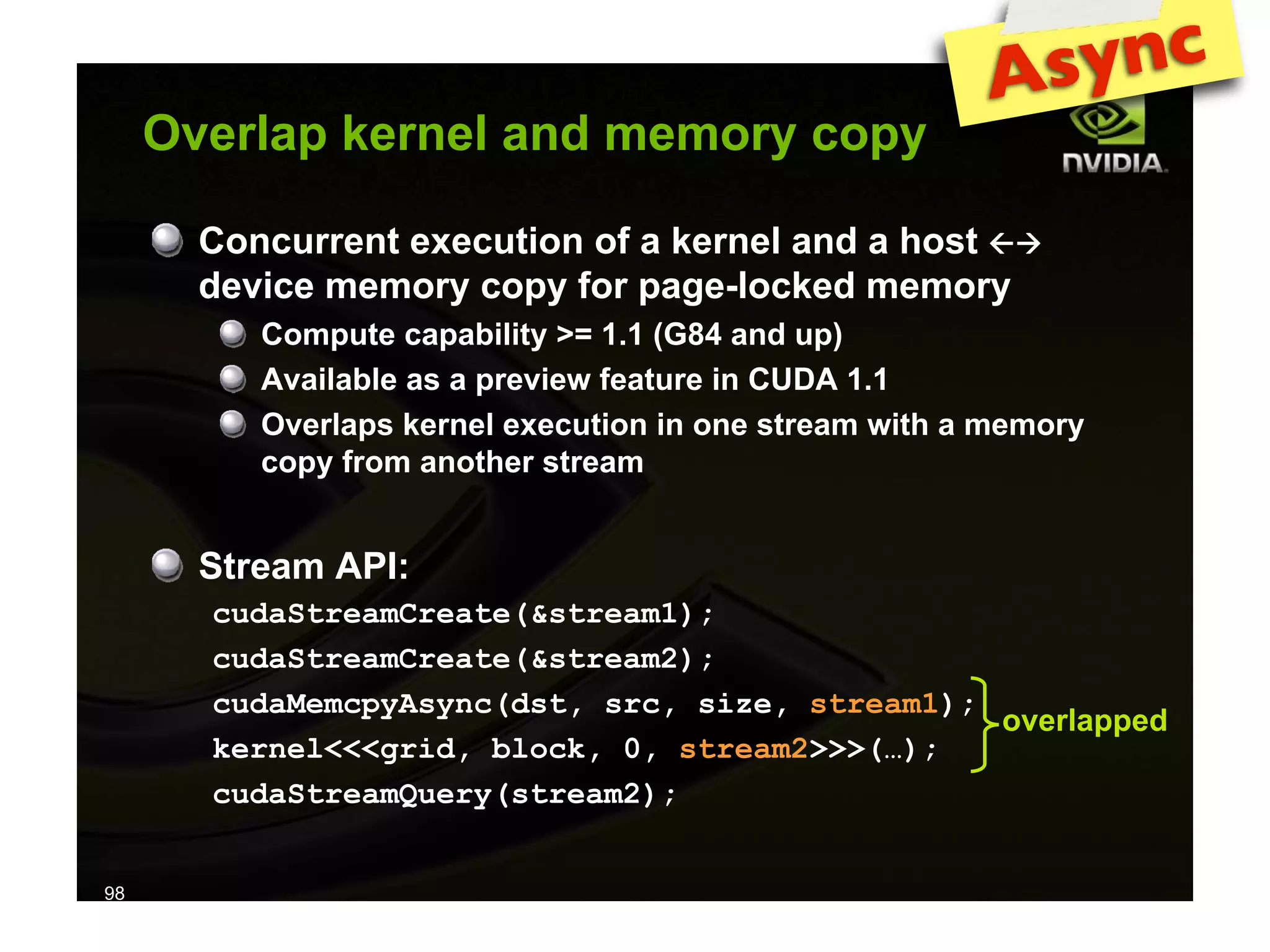

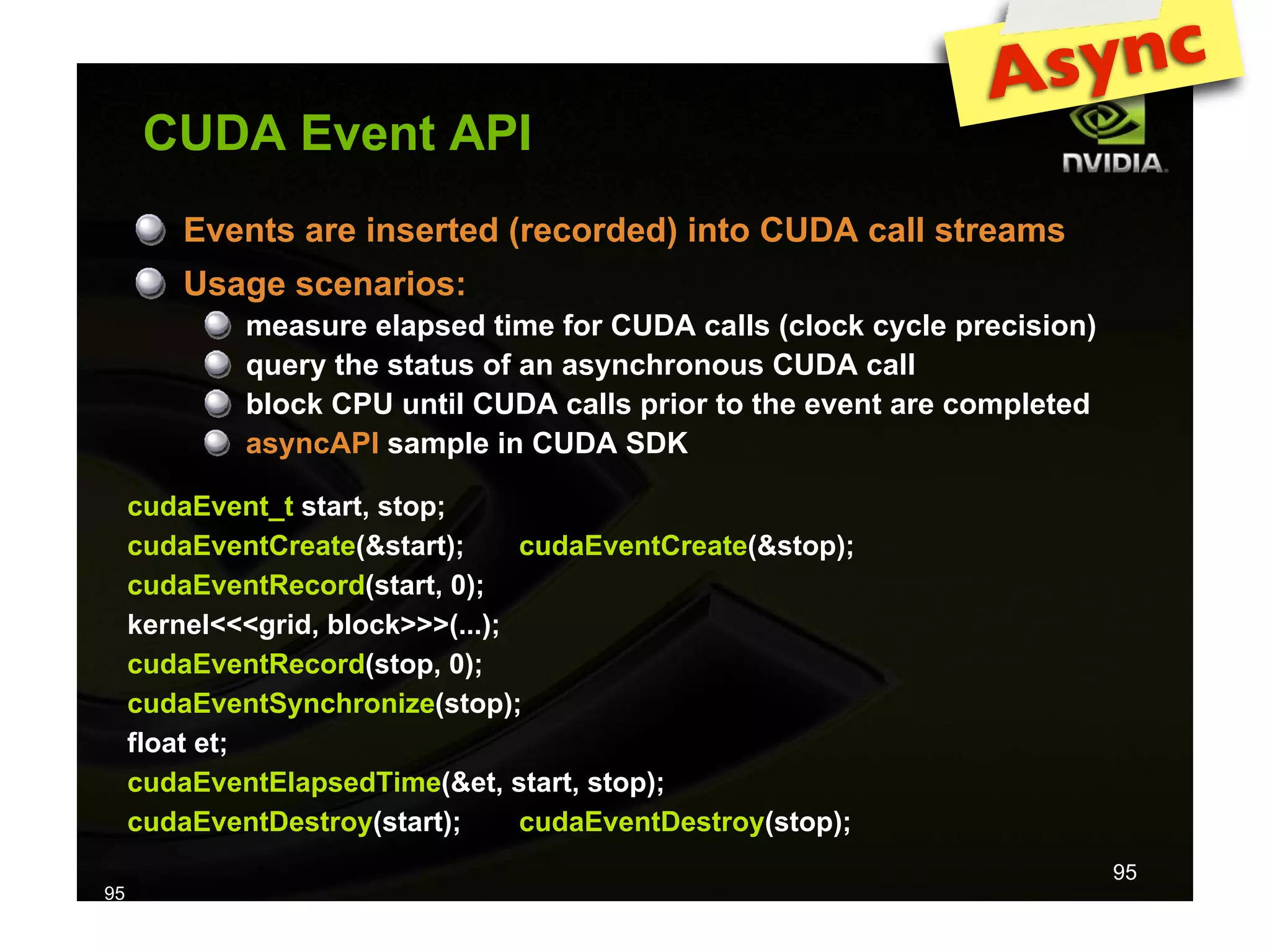

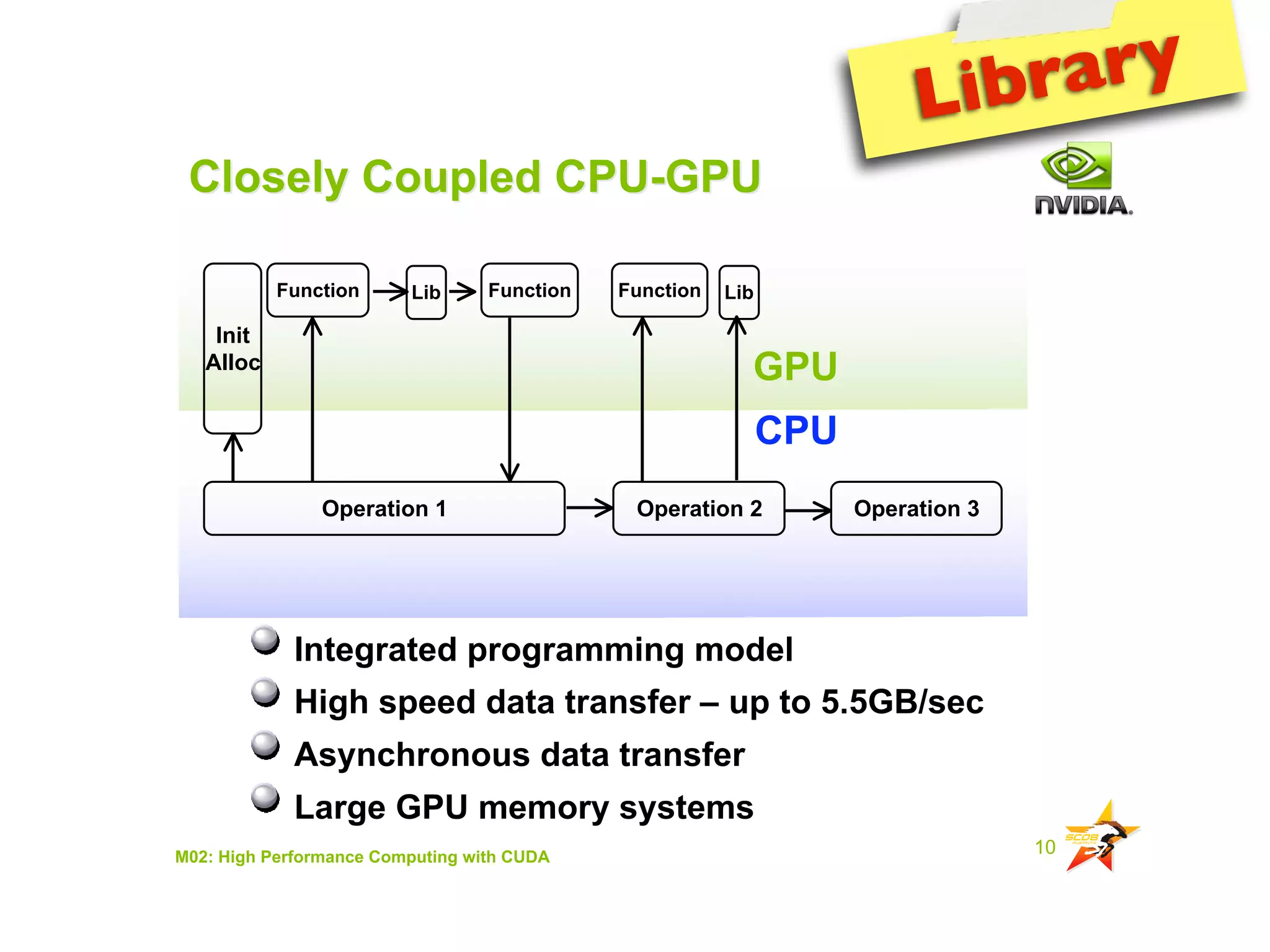

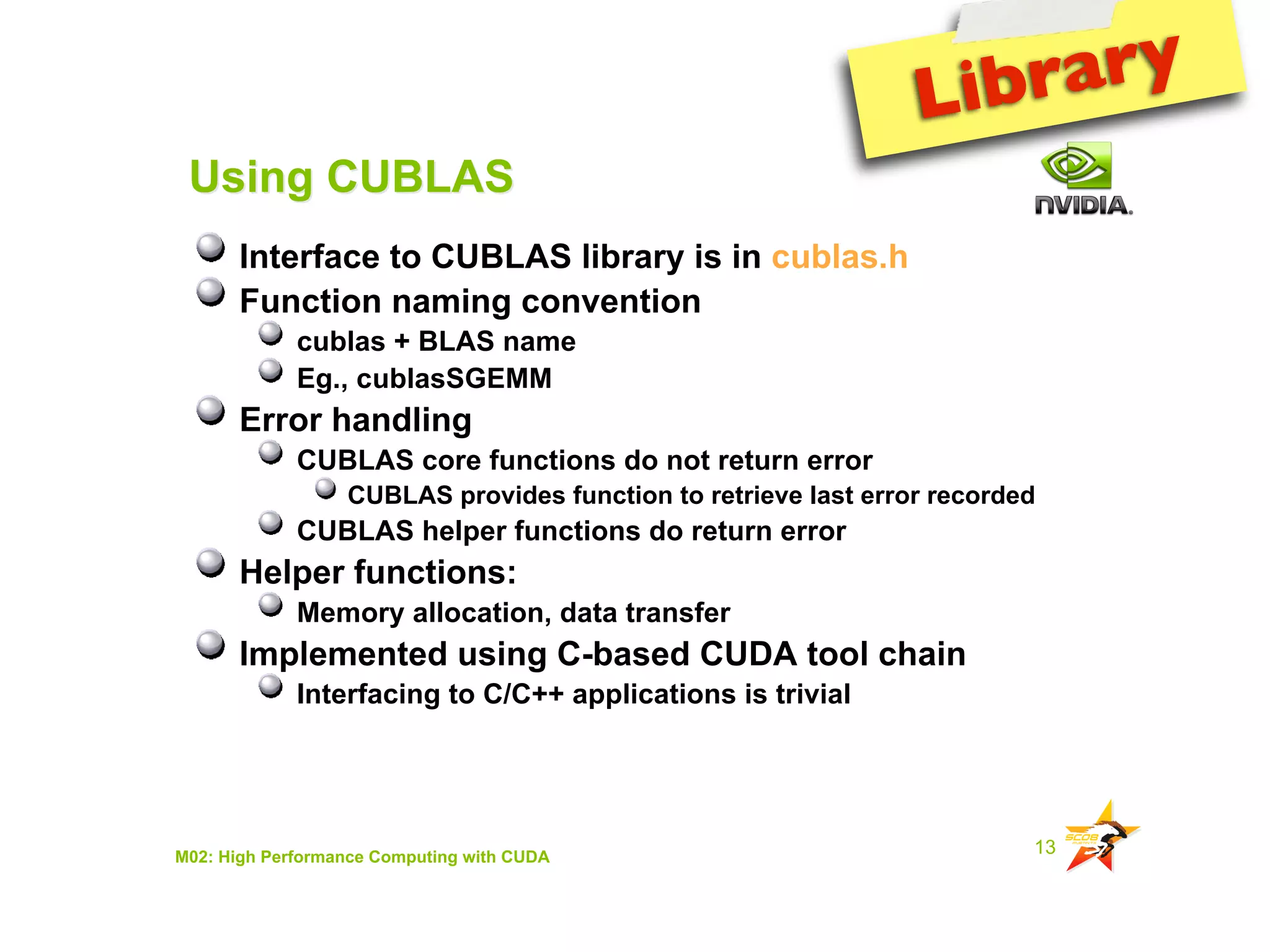

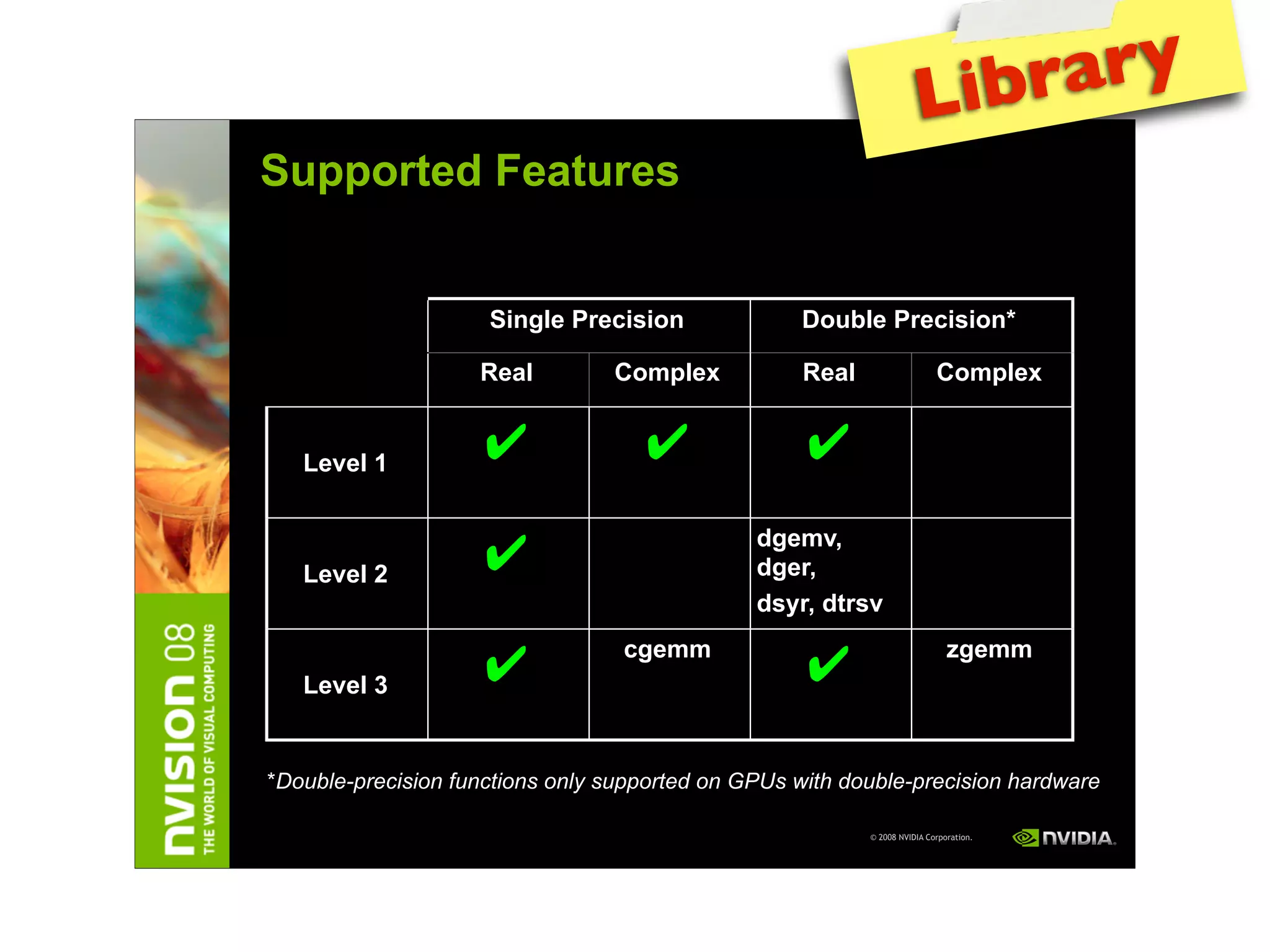

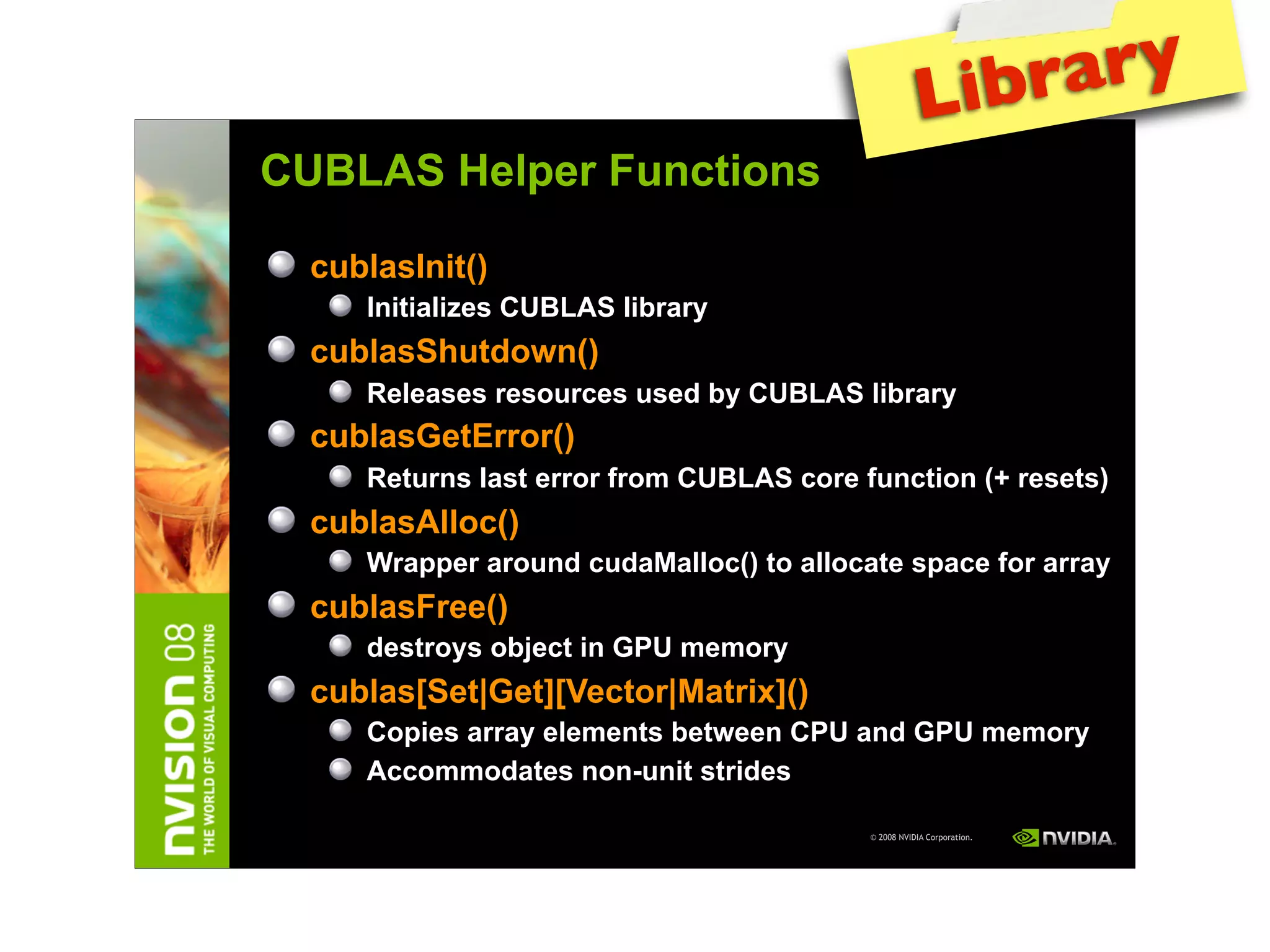

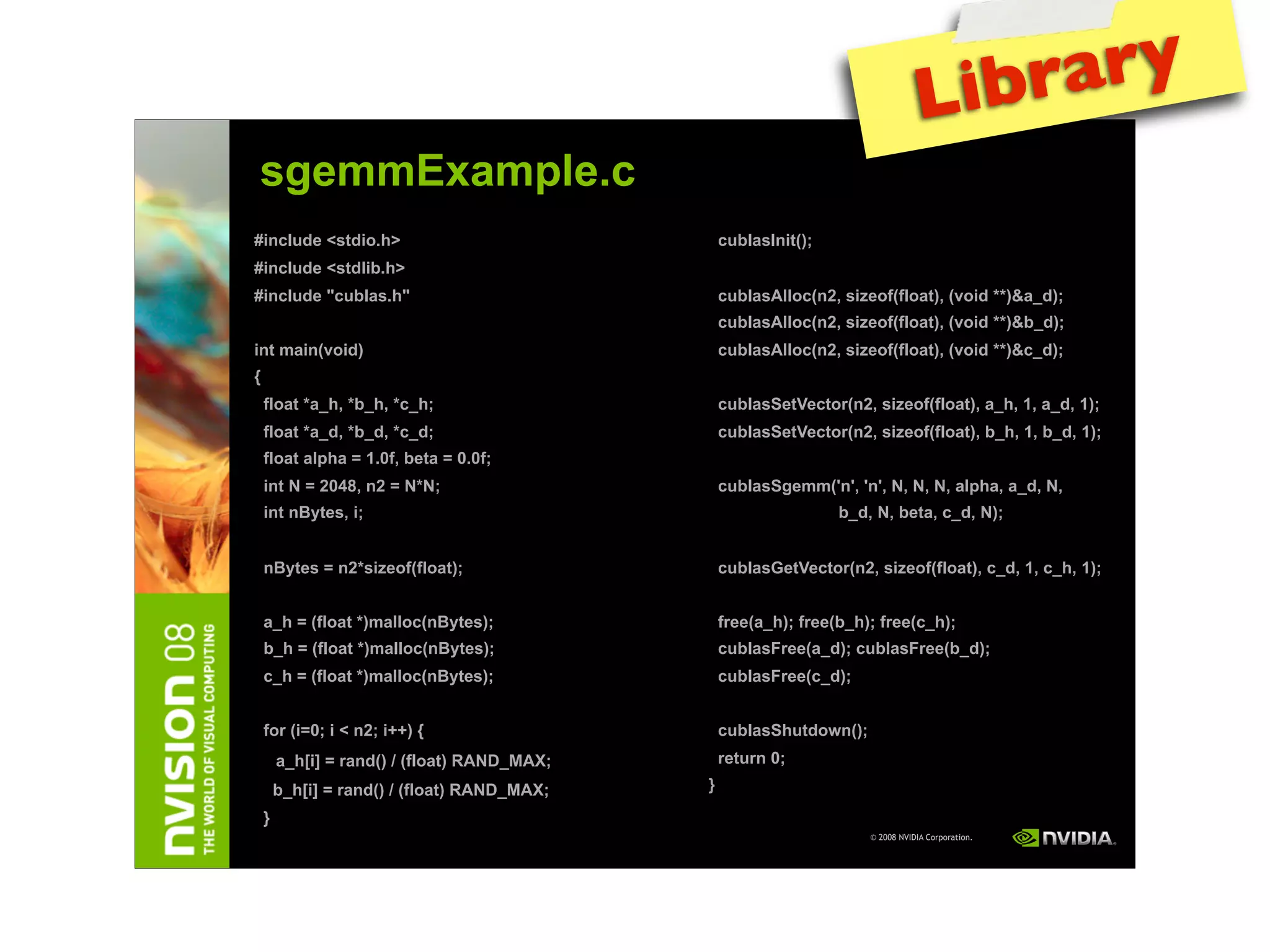

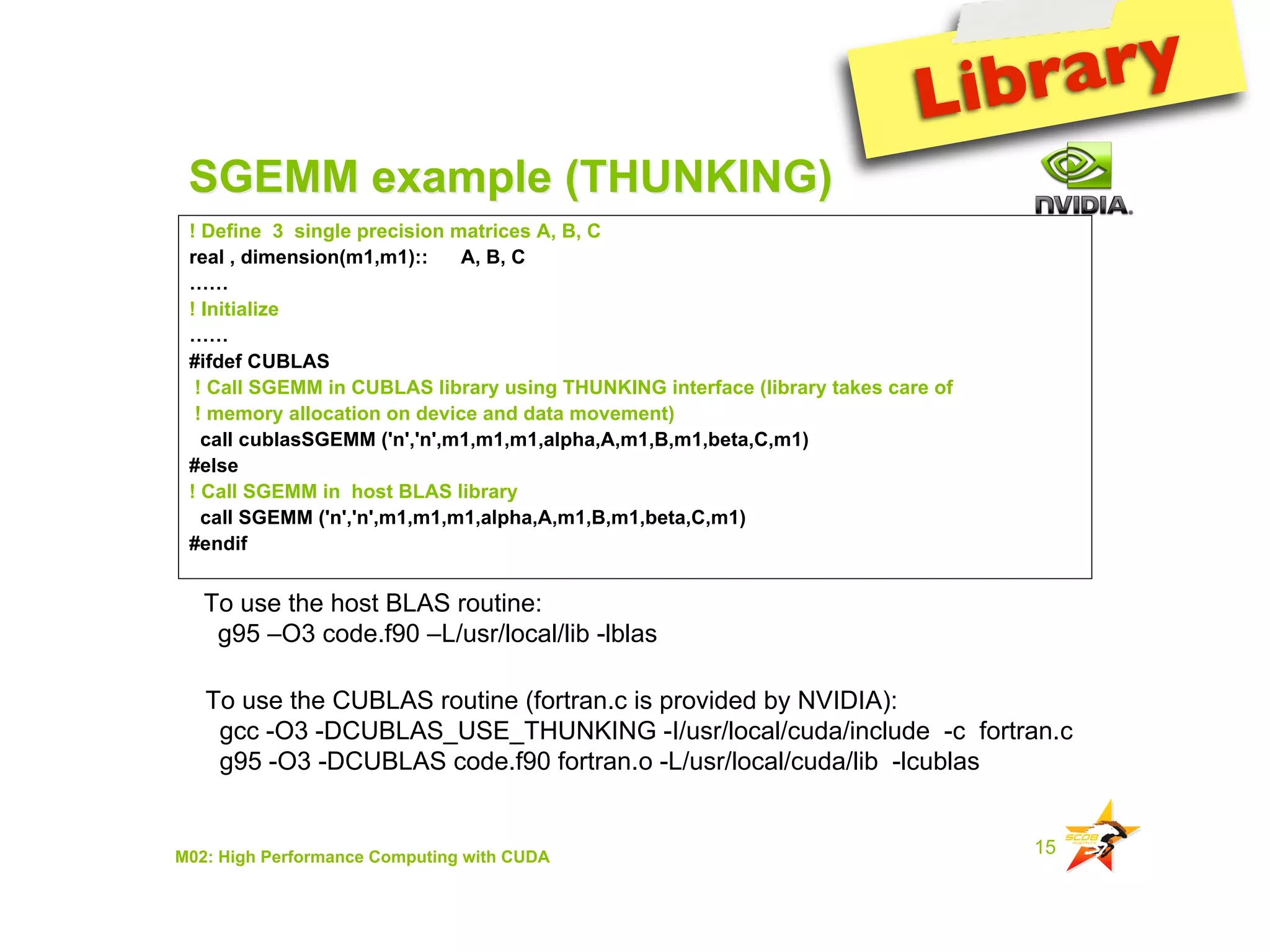

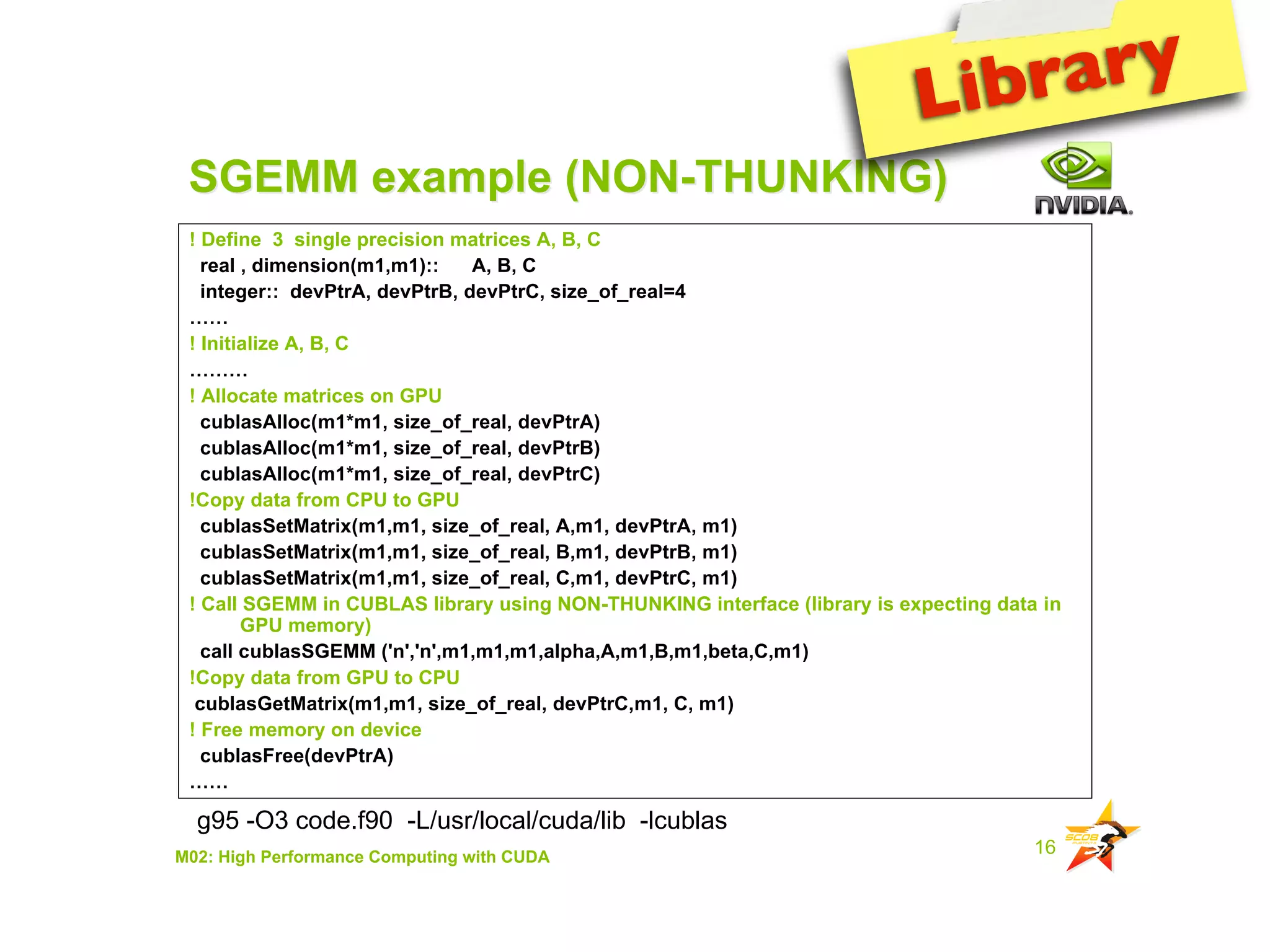

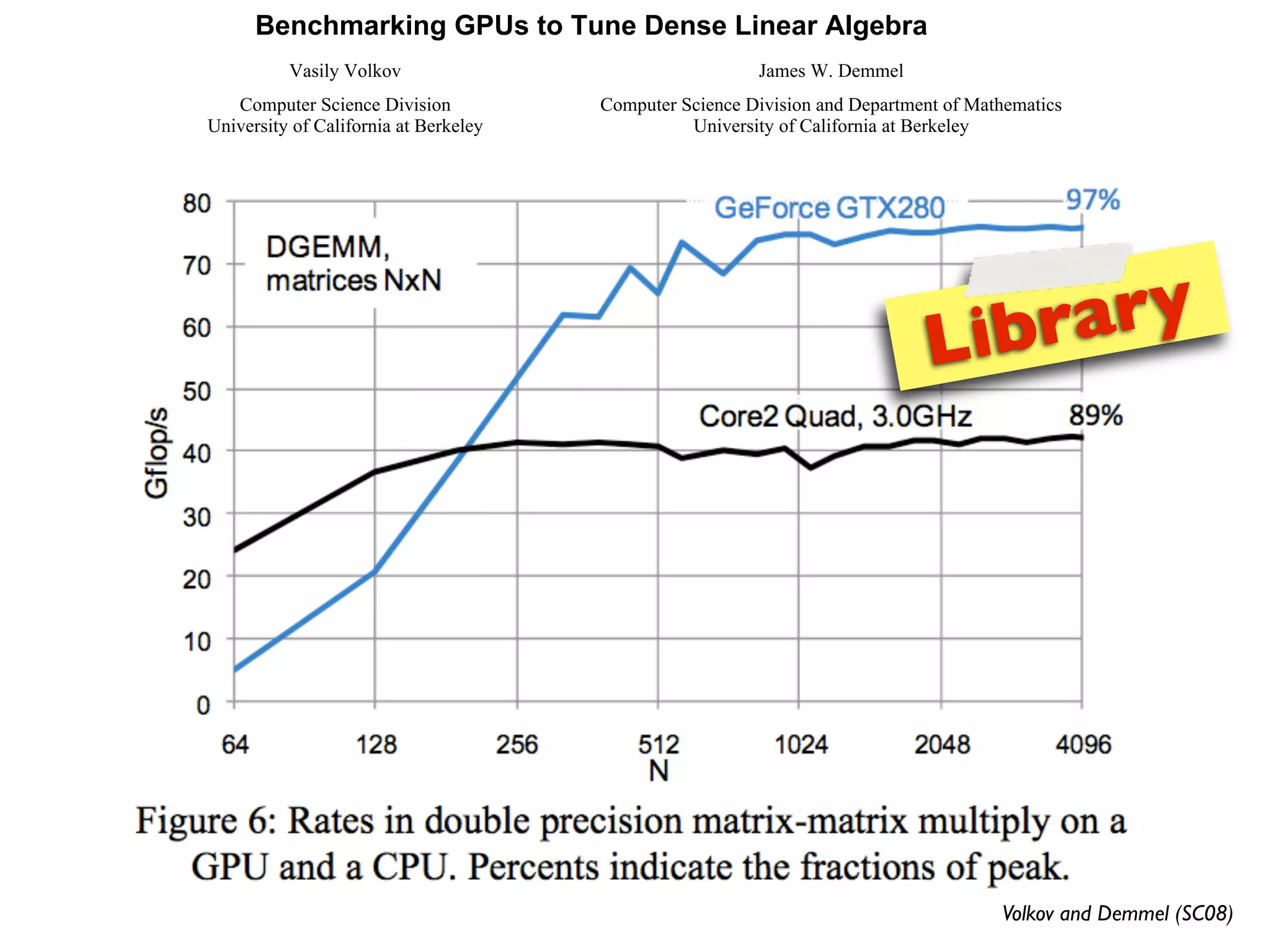

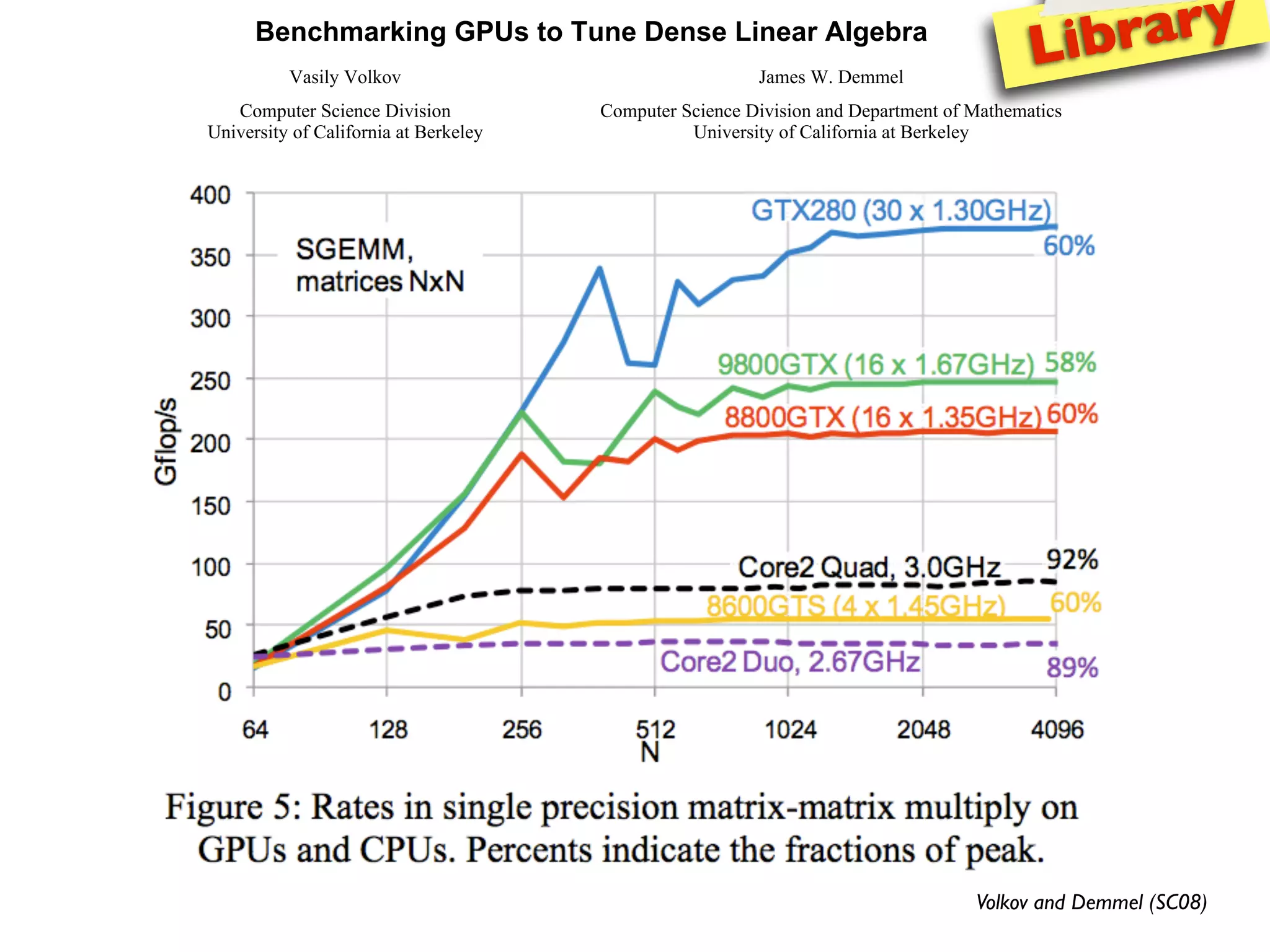

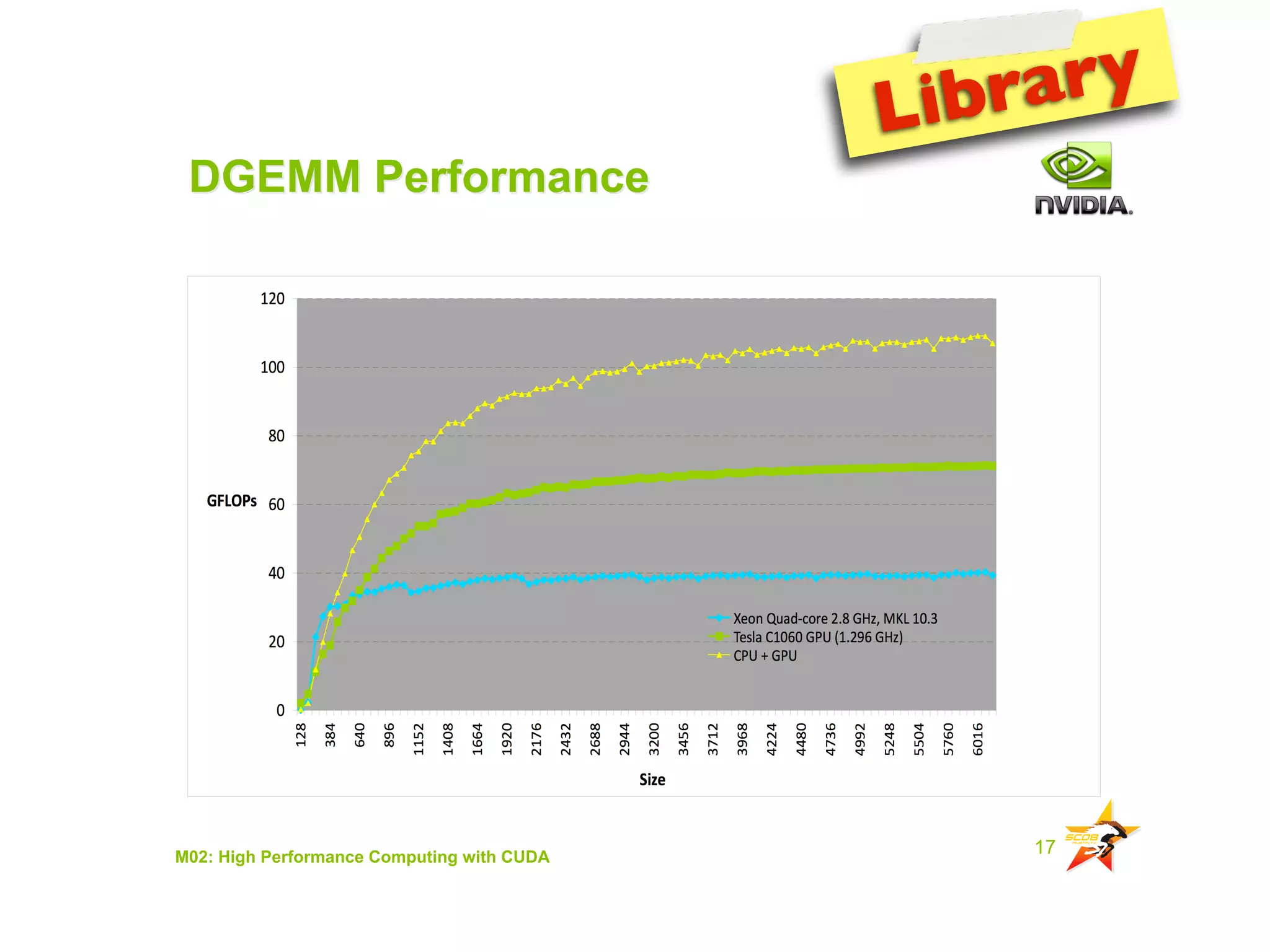

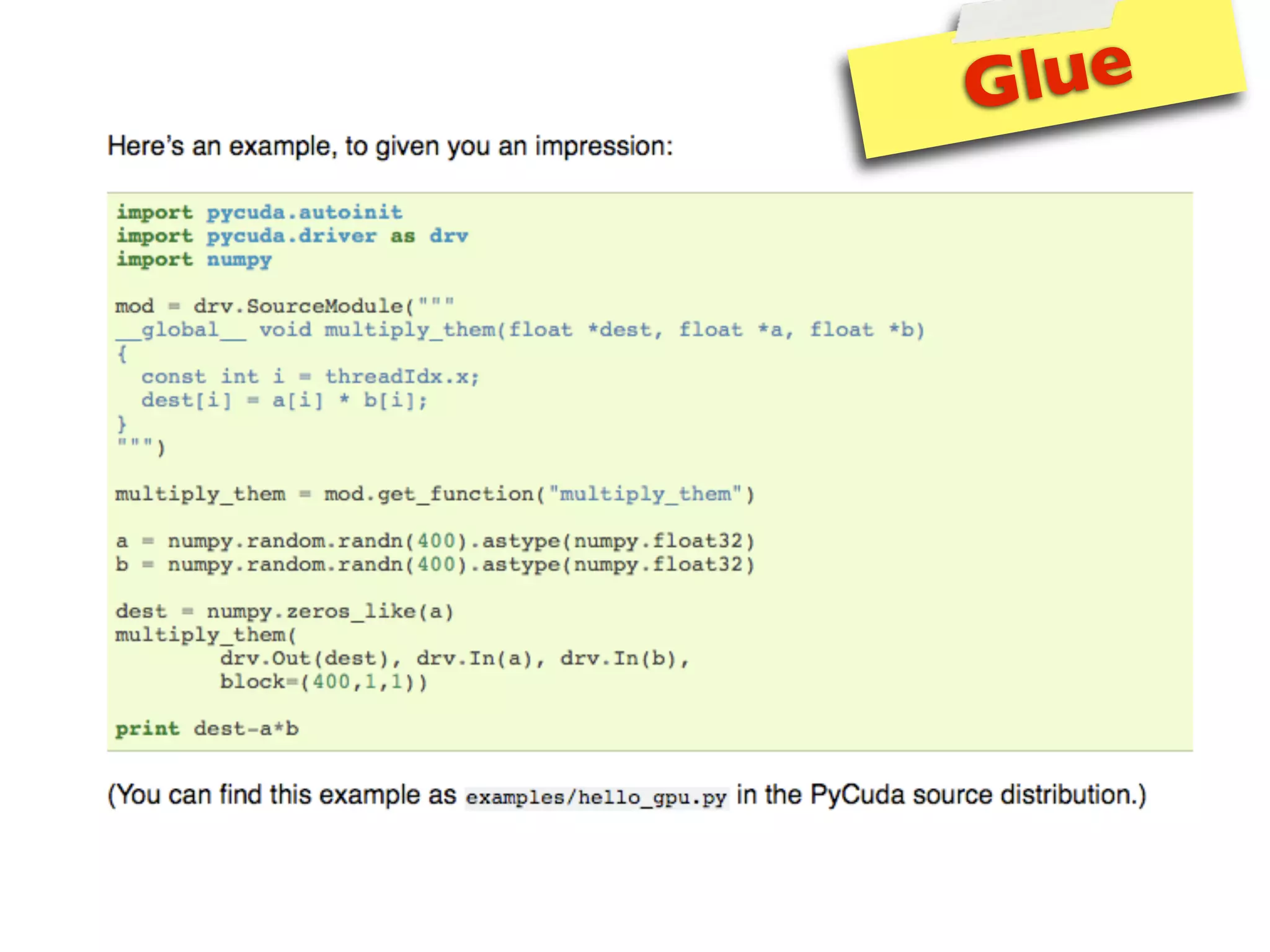

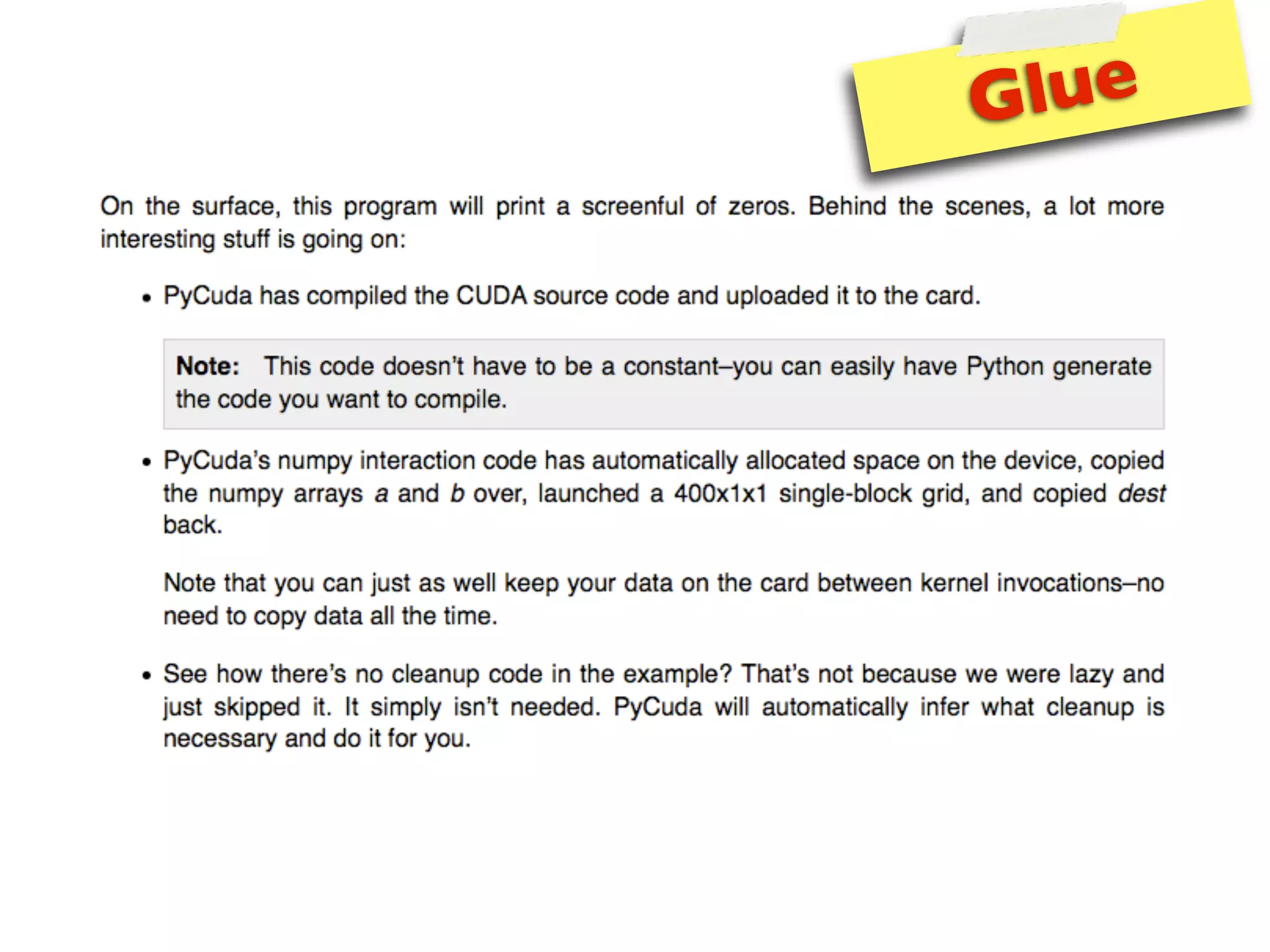

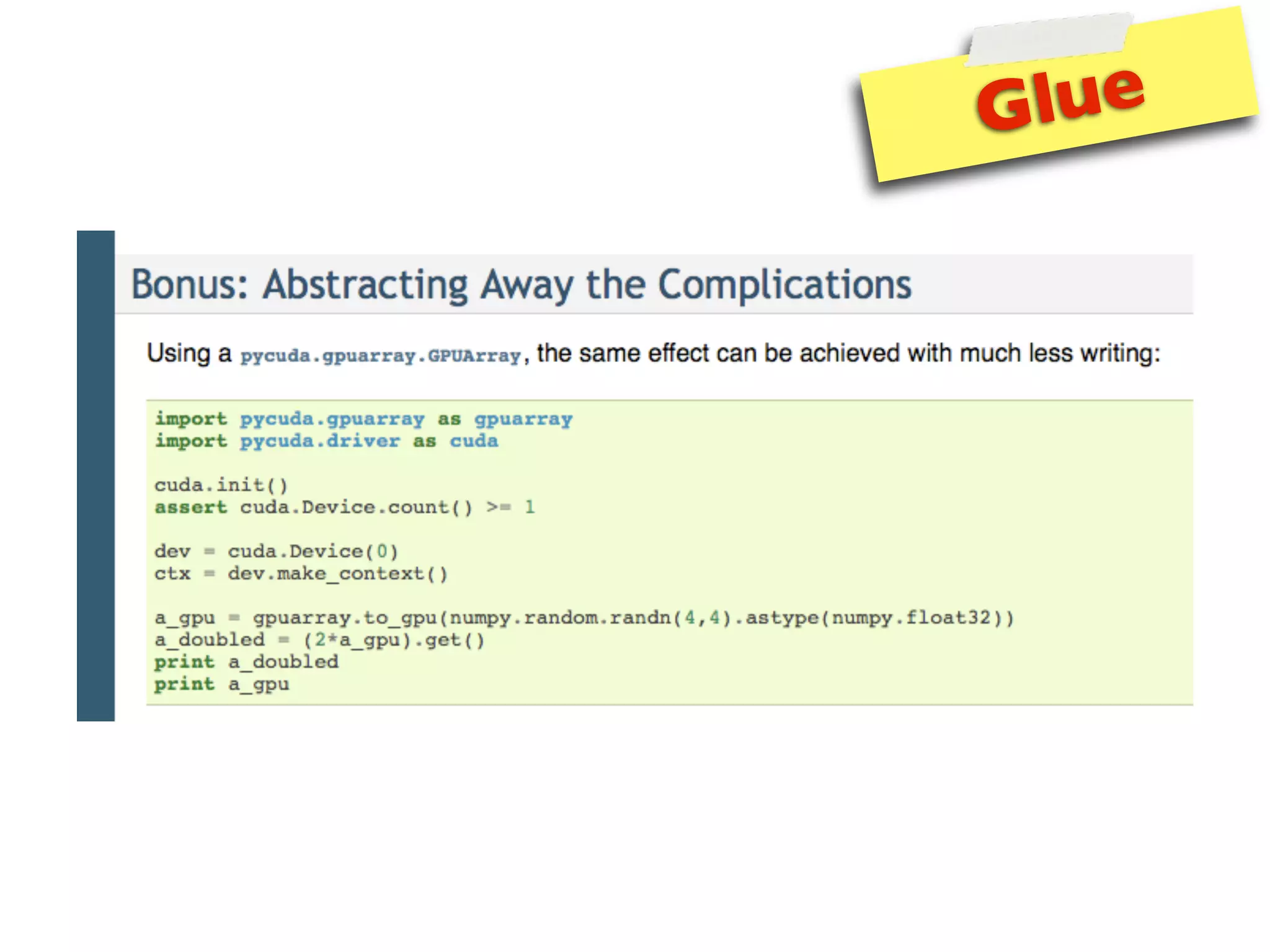

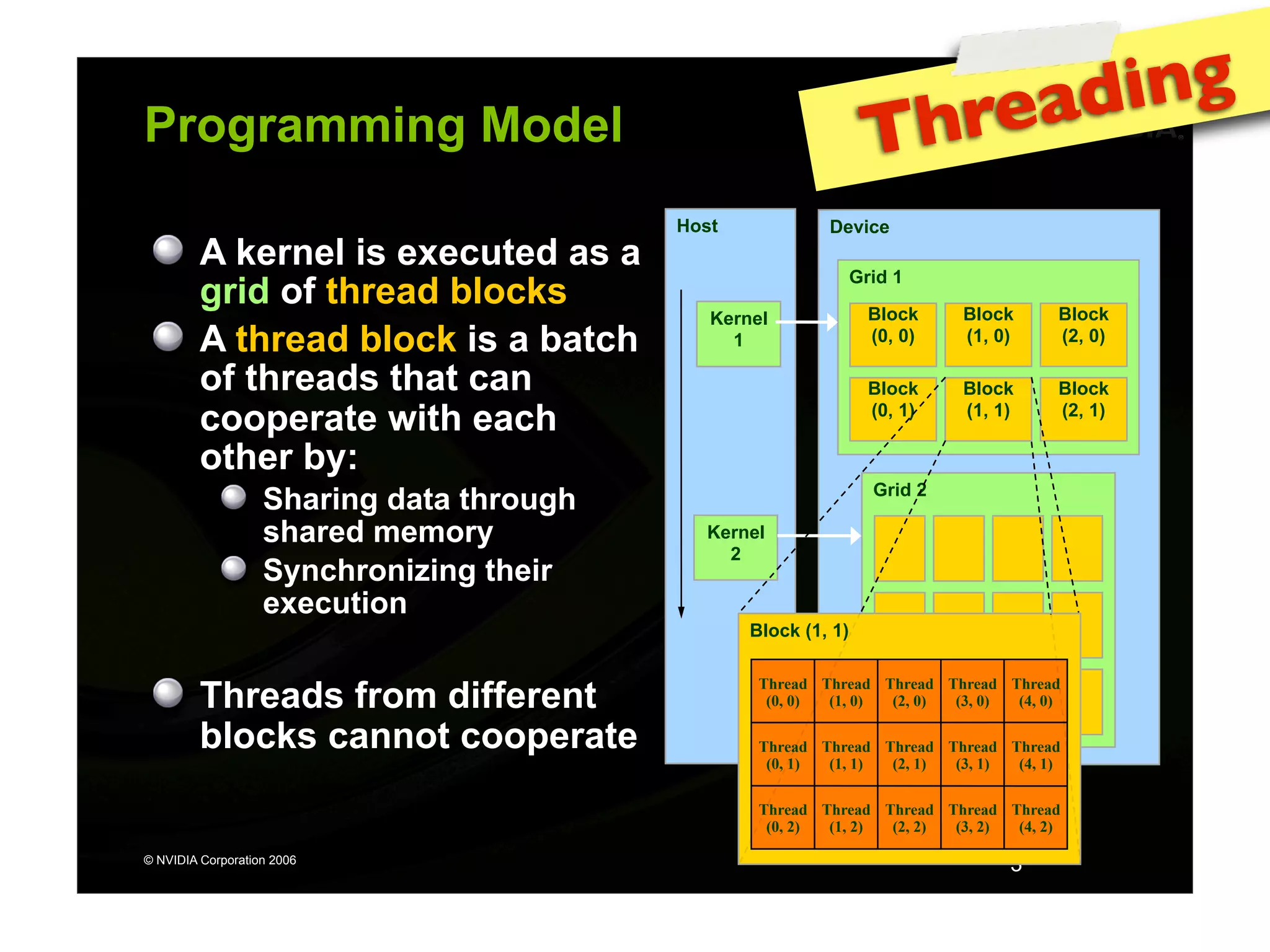

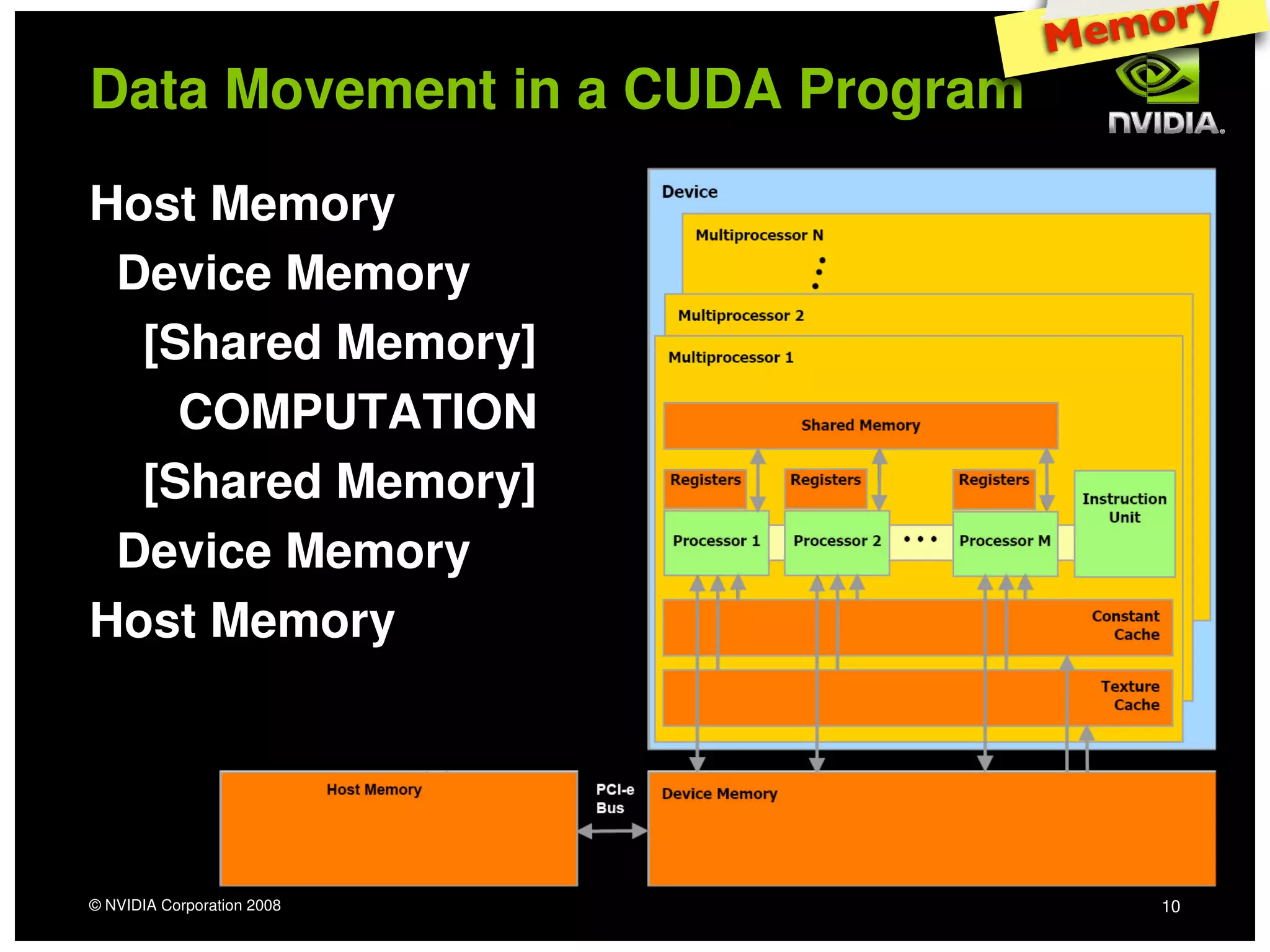

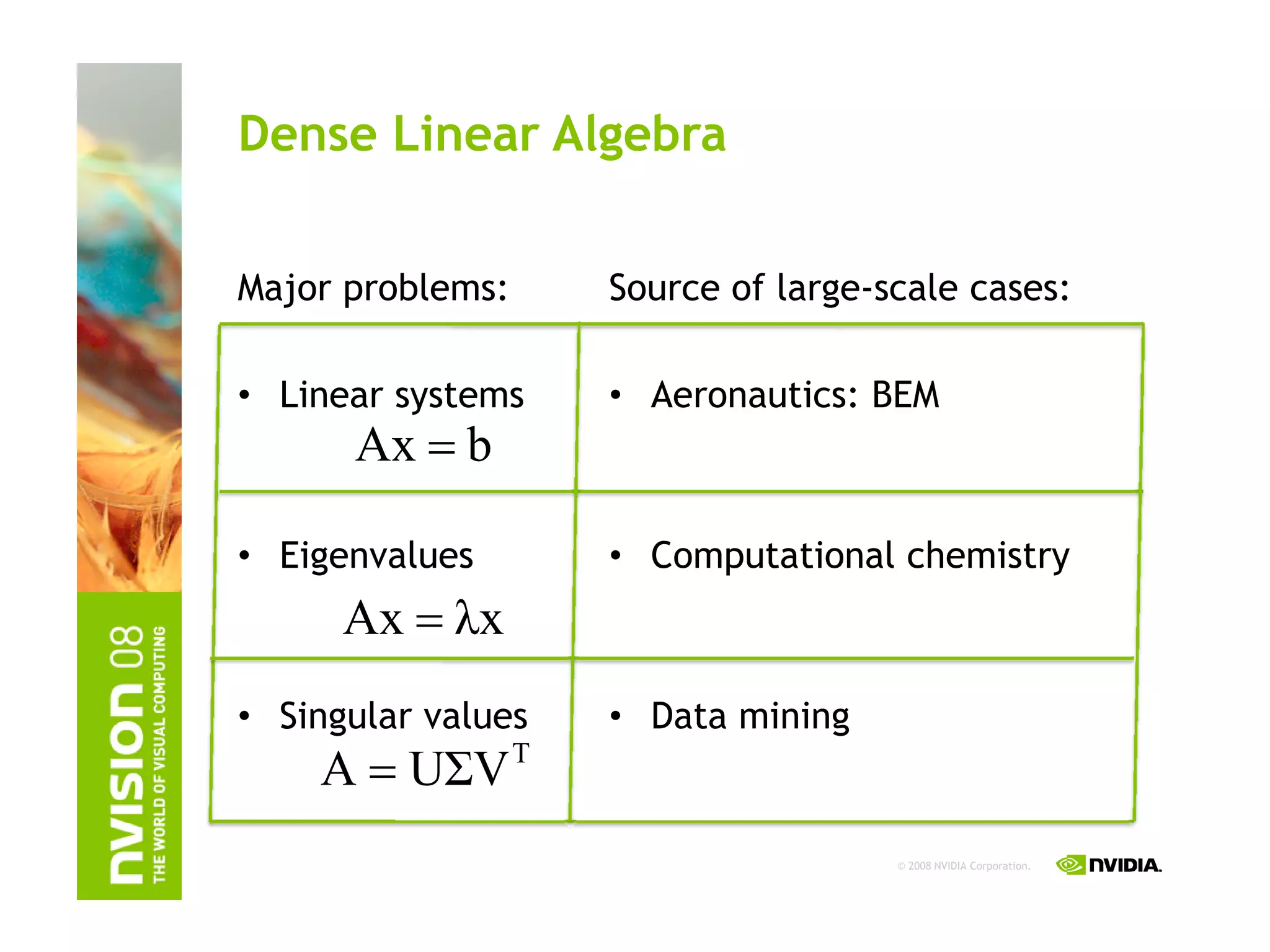

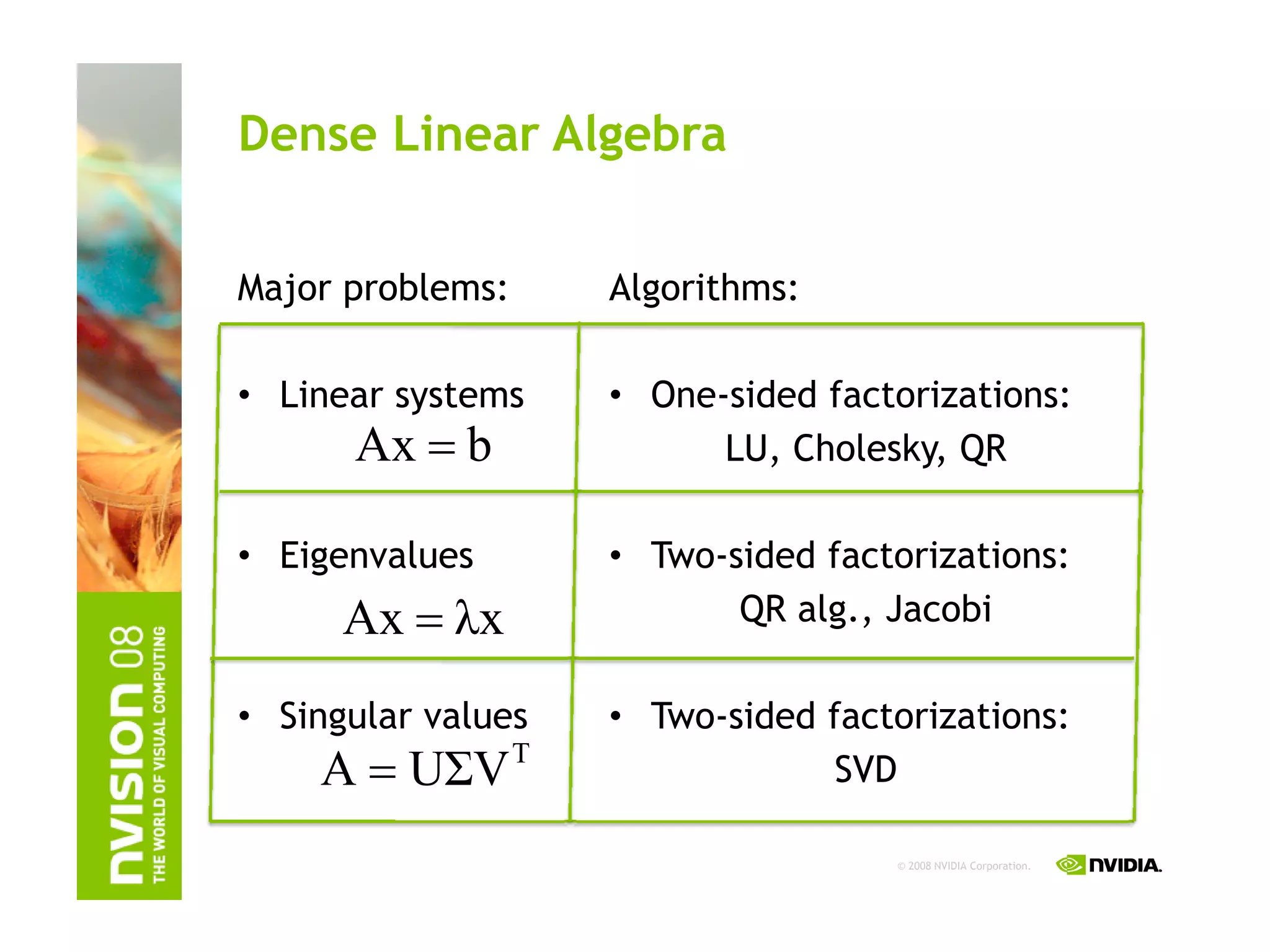

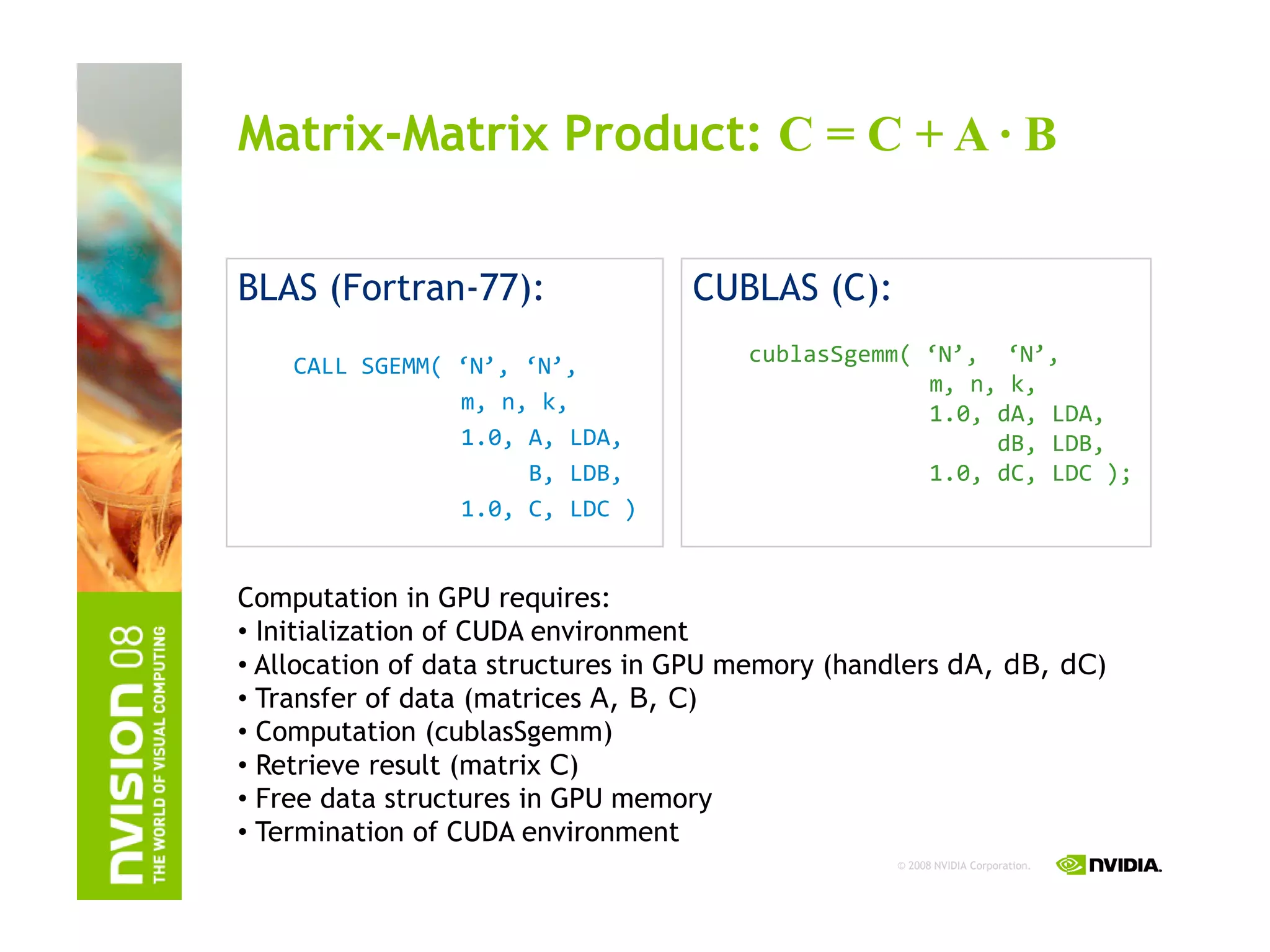

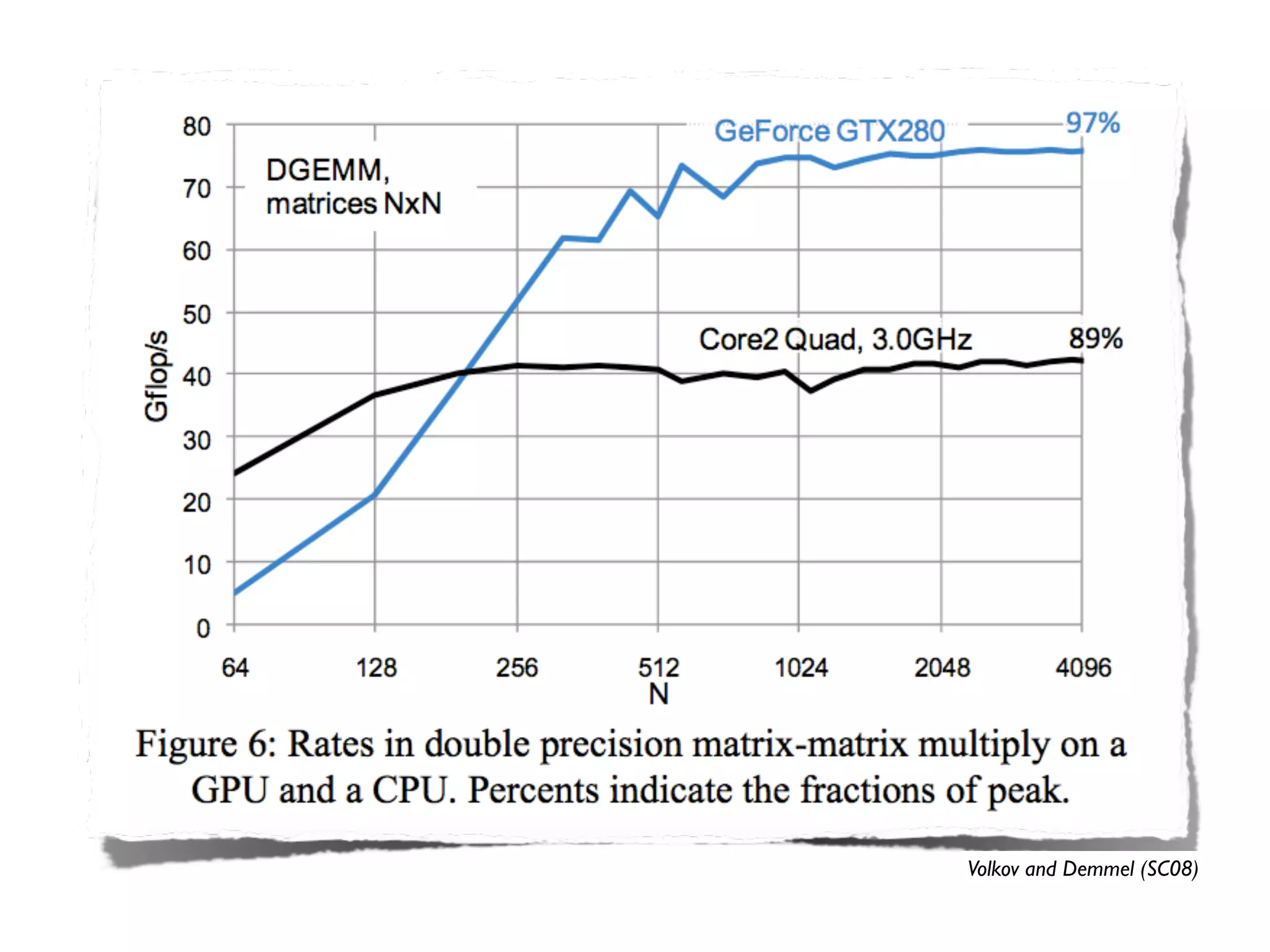

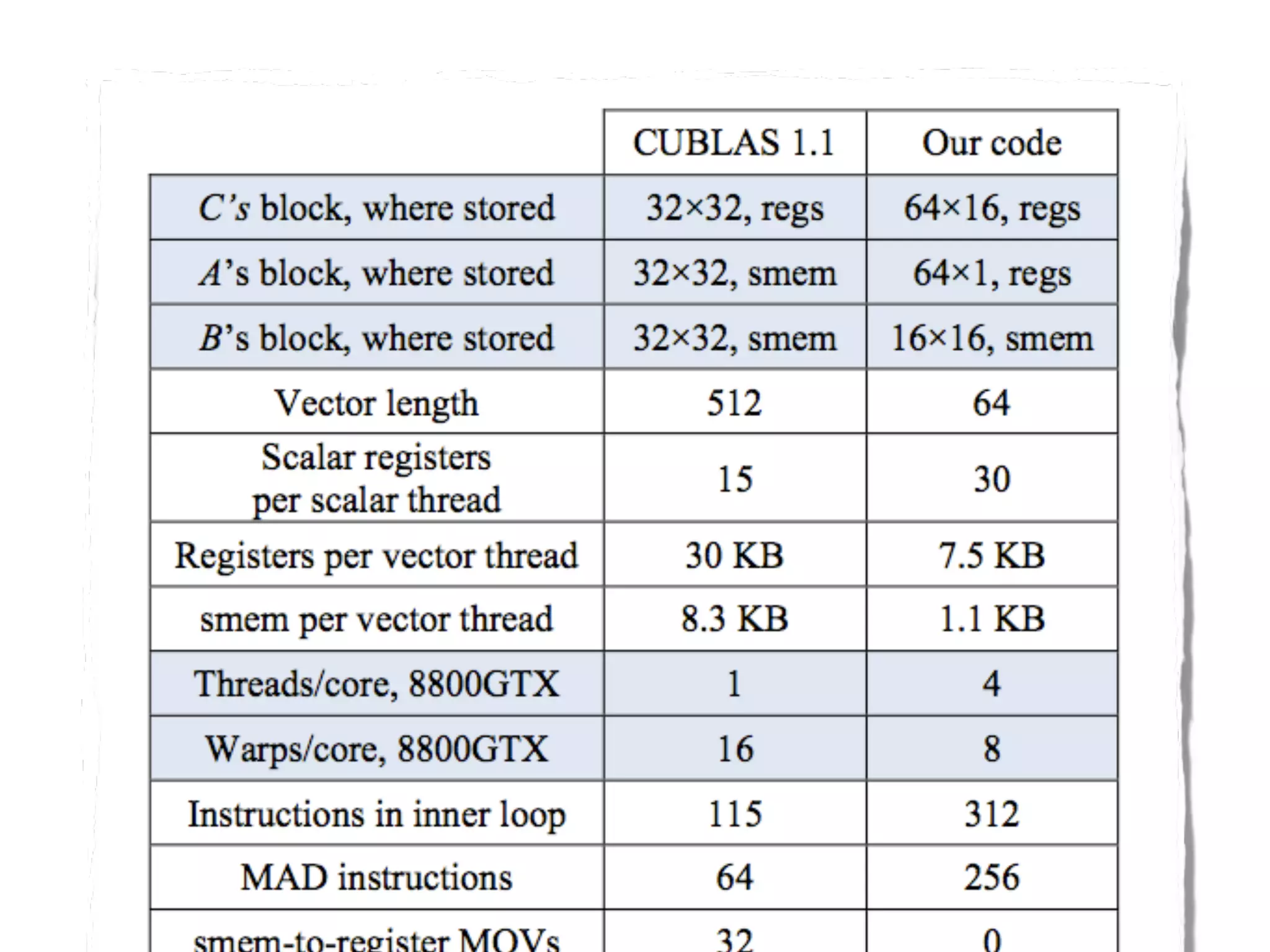

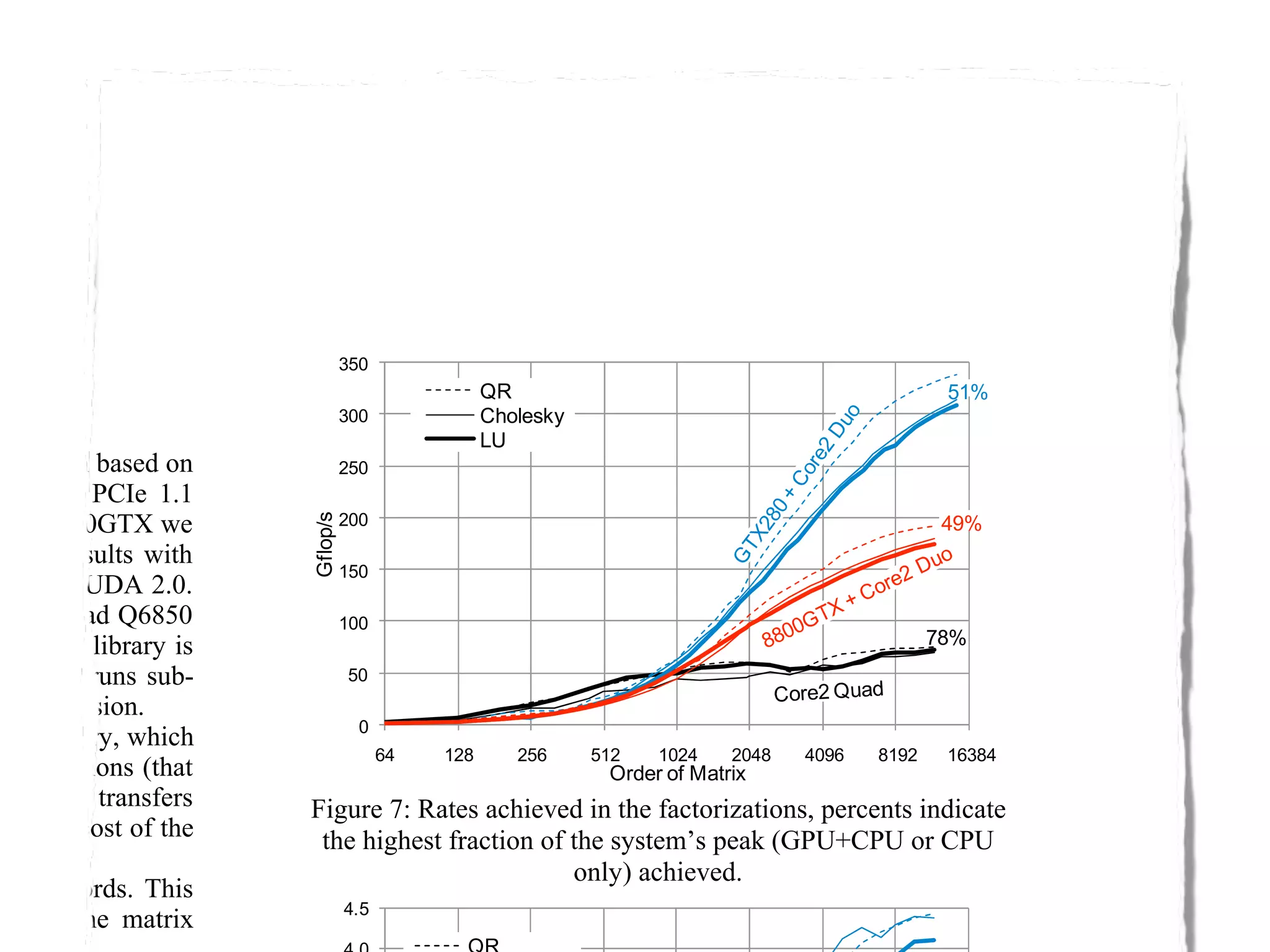

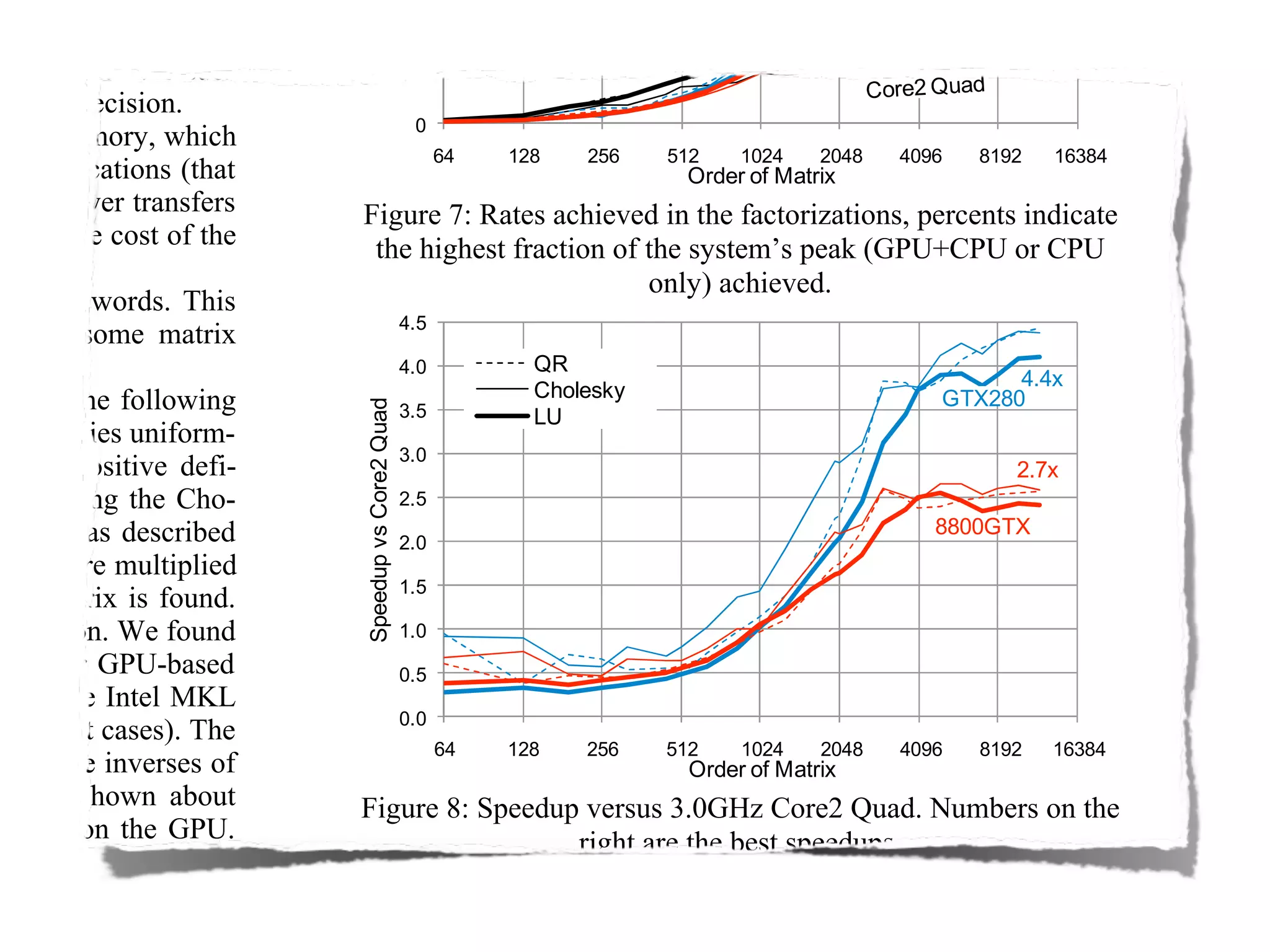

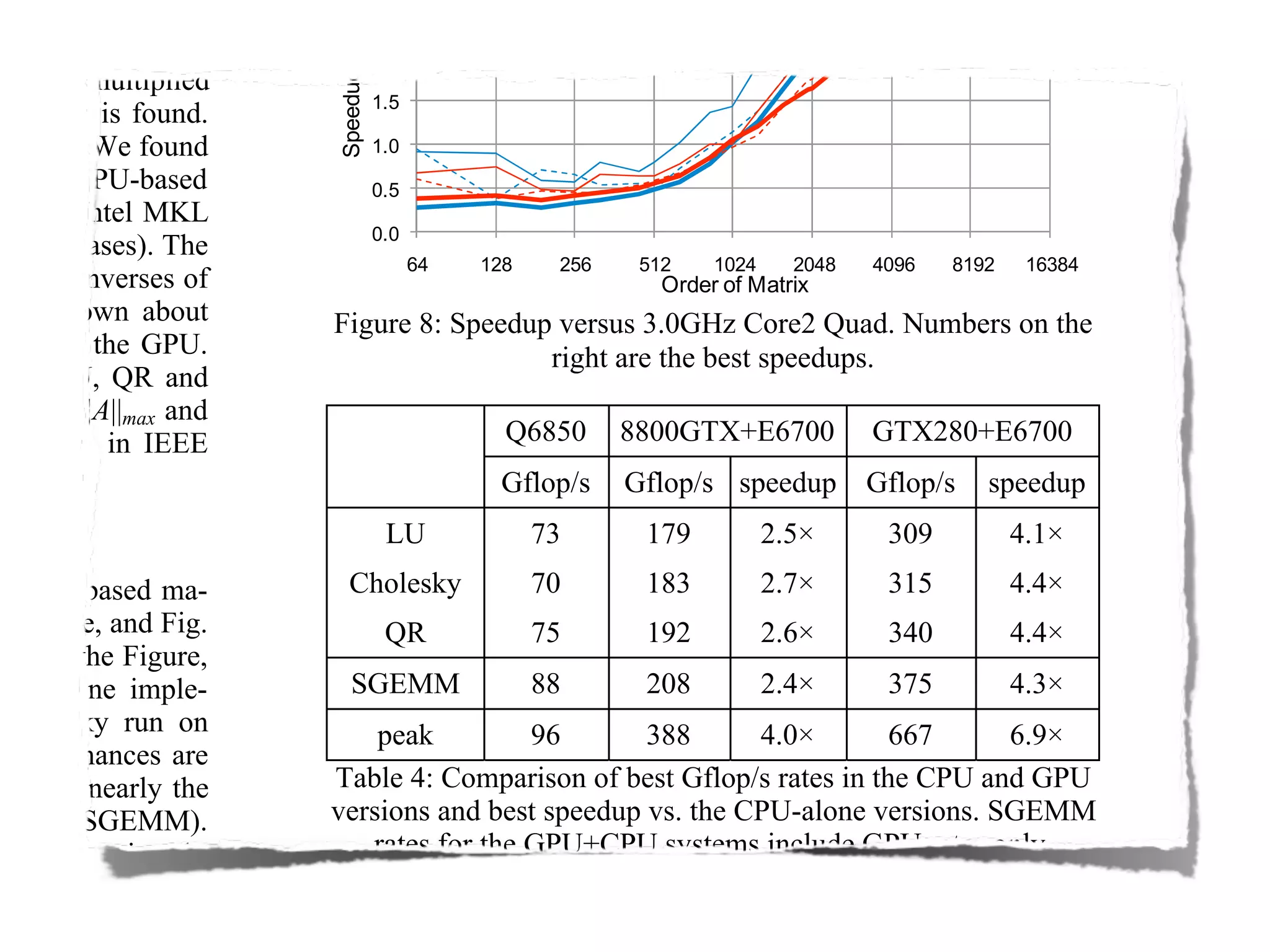

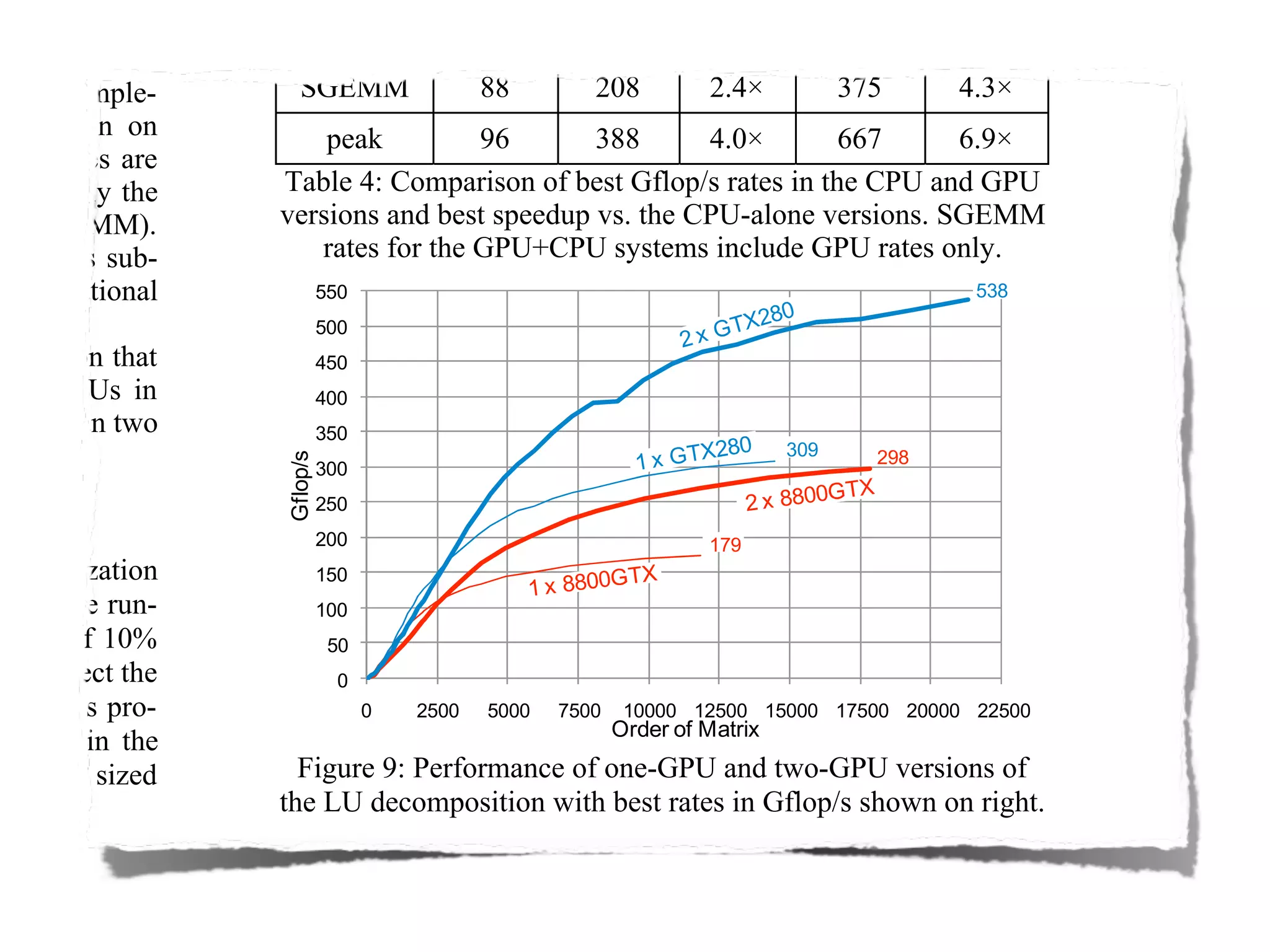

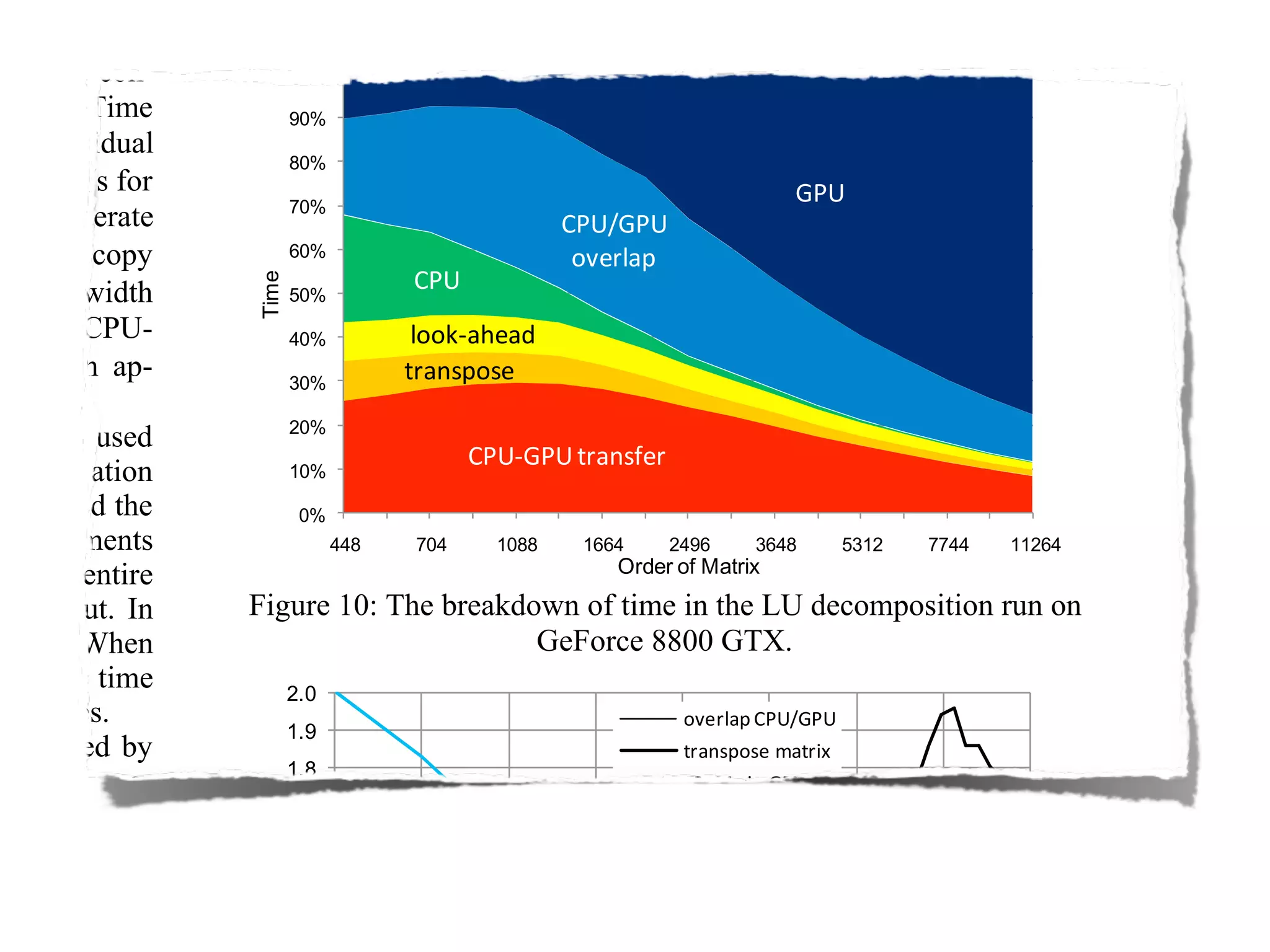

The document provides an overview of programming with CUDA, particularly focusing on texture management and interoperability with OpenGL. It discusses different types of CUDA textures, the steps required to allocate and bind textures, and the integration of CUDA with OpenGL for dynamic texture generation and frame post-processing. Additionally, it covers the use of CUDA libraries like cuBLAS for efficient linear algebra computations on GPUs.
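
As a rough illustration of the allocate-and-bind steps mentioned above, here is a minimal sketch of reading a 2D array through a CUDA texture. It assumes the texture object API (`cudaCreateTextureObject`) rather than the legacy texture-reference binding calls the slides may have used, since texture references were removed in CUDA 12. The kernel name `copyFromTexture` and the helper `readThroughTexture` are hypothetical names chosen for this example, not taken from the presentation.

```cuda
#include <cuda_runtime.h>
#include <vector>

// Each thread fetches one texel through the texture unit and stores it.
__global__ void copyFromTexture(cudaTextureObject_t tex, float* out,
                                int width, int height)
{
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;
    // With unnormalized coordinates, texel centers sit at integer + 0.5.
    out[y * width + x] = tex2D<float>(tex, x + 0.5f, y + 0.5f);
}

// Hypothetical helper: fills a width x height image with 0, 1, 2, ...,
// reads it back through a texture, and returns the result.
// Returns an empty vector if any CUDA call fails.
std::vector<float> readThroughTexture(int width, int height)
{
    const size_t count = static_cast<size_t>(width) * height;
    const size_t rowBytes = width * sizeof(float);
    std::vector<float> host(count);
    for (size_t i = 0; i < count; ++i) host[i] = static_cast<float>(i);

    cudaArray_t array = nullptr;
    cudaTextureObject_t tex = 0;
    float* dOut = nullptr;
    std::vector<float> result(count);

    // 1. Allocate a CUDA array with a single 32-bit float channel.
    cudaChannelFormatDesc channel = cudaCreateChannelDesc<float>();
    bool ok = cudaMallocArray(&array, &channel, width, height) == cudaSuccess;

    // 2. Copy the host data into the array (row width is given in bytes).
    ok = ok && cudaMemcpy2DToArray(array, 0, 0, host.data(), rowBytes,
                                   rowBytes, height,
                                   cudaMemcpyHostToDevice) == cudaSuccess;

    // 3. Describe the resource and the sampling mode, then create the
    //    texture object that plays the role of the "bound" texture.
    cudaResourceDesc resDesc = {};
    resDesc.resType = cudaResourceTypeArray;
    resDesc.res.array.array = array;

    cudaTextureDesc texDesc = {};
    texDesc.addressMode[0] = cudaAddressModeClamp;
    texDesc.addressMode[1] = cudaAddressModeClamp;
    texDesc.filterMode = cudaFilterModePoint;      // no interpolation
    texDesc.readMode = cudaReadModeElementType;    // return raw floats
    texDesc.normalizedCoords = 0;                  // coordinates in texels

    ok = ok && cudaCreateTextureObject(&tex, &resDesc, &texDesc,
                                       nullptr) == cudaSuccess;
    ok = ok && cudaMalloc(&dOut, count * sizeof(float)) == cudaSuccess;

    // 4. Launch the kernel and copy the output back to the host.
    if (ok) {
        dim3 block(16, 16);
        dim3 grid((width + block.x - 1) / block.x,
                  (height + block.y - 1) / block.y);
        copyFromTexture<<<grid, block>>>(tex, dOut, width, height);
        ok = cudaGetLastError() == cudaSuccess;
        ok = ok && cudaMemcpy(result.data(), dOut, count * sizeof(float),
                              cudaMemcpyDeviceToHost) == cudaSuccess;
    }

    // 5. Release resources in reverse order of creation.
    if (dOut) cudaFree(dOut);
    if (tex) cudaDestroyTextureObject(tex);
    if (array) cudaFreeArray(array);

    if (!ok) result.clear();
    return result;
}
```

Calling `readThroughTexture(8, 4)` from a `main` function and compiling with `nvcc` should return the same 32 values that were uploaded, since point filtering with clamped addressing reads each texel unchanged. Switching `filterMode` to `cudaFilterModeLinear` would instead return hardware-interpolated values at non-center coordinates.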