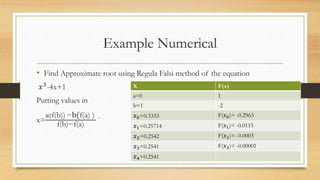

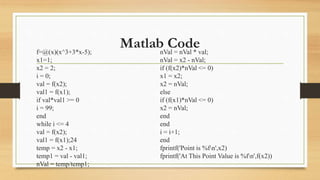

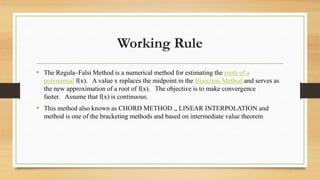

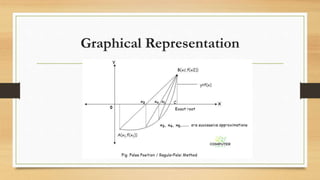

The regula falsi (false position) method is a numerical technique for finding a root of a continuous function, such as a polynomial, by linear interpolation between two points where the function changes sign. Each iteration improves the approximation of the root without requiring derivatives, although convergence can be slow when one endpoint of the bracketing interval stays fixed over many iterations. An example application and MATLAB code are provided to illustrate the method's use and advantages.

![Algorithm

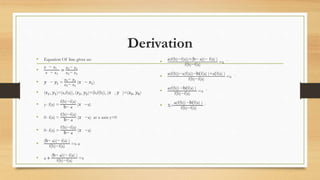

1.Find points a and b such that a < b and f(a) * f(b) < 0.

2.Take the interval [a, b] and determine the next value of x1.

3.If f(x1) = 0 then x1 is an exact root, else if f(x1) * f(b) < 0 then let a = x1,

else if f(a) * f(x1) < 0 then let b = x1.

4.Repeat steps 2 & 3 until f(xi) = 0 or |f(xi)| tolerance](https://image.slidesharecdn.com/lecture11regularfalsemethod-210328062818/85/False-Point-Method-Regula-falsi-method-5-320.jpg)
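The four steps can be sketched in Python as follows. This is a minimal illustration, not the MATLAB code from the slides. The function name `regula_falsi`, the default `tol`, and the `max_iter` safety cap are assumptions made for this example, and the test function x^3 - x - 2 is likewise chosen only for illustration.

```python
def regula_falsi(f, a, b, tol=1e-8, max_iter=100):
    """Approximate a root of f in [a, b] by the false position method.

    tol and max_iter are illustrative defaults, not values from the slides.
    """
    fa, fb = f(a), f(b)
    # Step 1: the interval must bracket a sign change.
    if not (a < b and fa * fb < 0):
        raise ValueError("need a < b and f(a) * f(b) < 0")

    x = a
    for _ in range(max_iter):
        # Step 2: x-intercept of the chord through (a, f(a)) and (b, f(b)).
        x = (a * fb - b * fa) / (fb - fa)
        fx = f(x)
        # Step 4: stop on an exact root or once |f(x)| is within tolerance.
        if abs(fx) <= tol:
            return x
        # Step 3: keep the sub-interval on which the sign change occurs.
        if fx * fb < 0:
            a, fa = x, fx
        else:
            b, fb = x, fx
    # Iteration cap reached: return the latest estimate.
    return x


if __name__ == "__main__":
    # x^3 - x - 2 changes sign on [1, 2]; its real root is near 1.5214.
    print(regula_falsi(lambda x: x**3 - x - 2, 1.0, 2.0))
```

On this convex example the endpoint b = 2 never moves and only a advances toward the root. That is the fixed-endpoint behaviour that can slow the method down on other functions.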