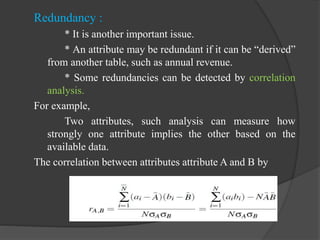

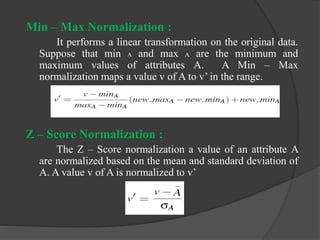

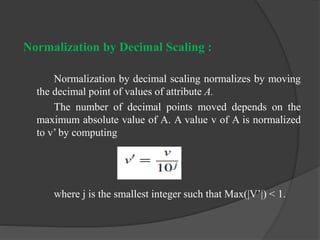

This document summarizes key aspects of data integration and transformation in data mining. Data integration combines data from multiple sources into a unified view; its key issues are schema integration, redundancy, and resolving data conflicts. Data transformation prepares the data for mining and can include smoothing, aggregation, generalization, normalization, and attribute construction. The document also outlines specific normalization techniques.
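
The summary does not name the normalization techniques it refers to. As a minimal sketch, assuming they are the three commonly taught ones (min-max, z-score, and decimal scaling), normalization could look like the following. The function names and sample values are hypothetical and chosen only for illustration.

```python
from statistics import mean, pstdev


def min_max(values, new_min=0.0, new_max=1.0):
    """Linearly rescale values into the range [new_min, new_max]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # All values are identical; map everything to the lower bound.
        return [new_min for _ in values]
    scale = (new_max - new_min) / (hi - lo)
    return [new_min + (v - lo) * scale for v in values]


def z_score(values):
    """Center values on the mean and divide by the population standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # No spread in the data; every value sits exactly at the mean.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def decimal_scaling(values):
    """Divide by the smallest power of ten that brings every |value| below 1."""
    max_abs = max(abs(v) for v in values)
    j = 0
    while max_abs / (10 ** j) >= 1:
        j += 1
    return [v / (10 ** j) for v in values]


if __name__ == "__main__":
    incomes = [12000, 35000, 58000, 98000]  # hypothetical attribute values
    print(min_max(incomes))
    print(z_score(incomes))
    print(decimal_scaling(incomes))
```

Min-max scaling preserves the relative spacing of the values but is sensitive to outliers, because the observed minimum and maximum define the range. Z-score normalization is often preferred when those bounds are unknown or unstable. Decimal scaling only shifts the decimal point, so `[-986, 917]` would become `[-0.986, 0.917]`.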