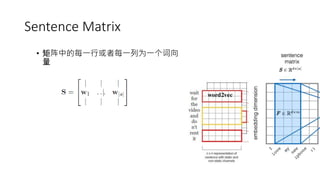

This document discusses techniques for sentiment classification with convolutional neural networks (CNNs). Word embeddings are treated as network parameters that are either learned from scratch during training or initialized from pre-trained models. Each sentence is represented as a matrix of word vectors, to which different types of convolutional and pooling layers are applied. The models covered use multiple embedding channels [1], dynamic k-max pooling [2], semantic clustering [3], one-hot inputs treated as multichannel images [4], enriched word vectors [5][6], and multichannel variable-size convolution [7]. The corresponding papers are listed in the references at the end.
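The sentence-matrix pipeline summarized above can be sketched in plain Python. This is a minimal illustration under stated assumptions: the helper names (`embed`, `conv_narrow`, `max_over_time`), the toy vocabulary, and the random-initialization range are hypothetical. Each token selects one row of an embedding table, the rows form an s × d sentence matrix, a filter spanning h words slides over it, and max pooling keeps the strongest response.

```python
import random


def embed(tokens, table, dim, rng):
    """Stack one d-dimensional vector per token into an s x d sentence matrix.

    Tokens missing from `table` get a small random vector that is stored, so a
    repeated word always maps to the same row. In a trained model `table` would
    be a learnable parameter (randomly initialized or pre-trained).
    """
    matrix = []
    for tok in tokens:
        if tok not in table:
            # Illustrative range only; not taken from any of the cited papers.
            table[tok] = [rng.uniform(-0.25, 0.25) for _ in range(dim)]
        matrix.append(table[tok])
    return matrix


def conv_narrow(matrix, filt, bias=0.0):
    """Slide an h x d filter over the sentence matrix one word at a time.

    A narrow convolution only uses full windows, giving s - h + 1 values.
    """
    h = len(filt)
    feature_map = []
    for i in range(len(matrix) - h + 1):
        total = bias
        for r in range(h):
            total += sum(w * x for w, x in zip(filt[r], matrix[i + r]))
        feature_map.append(max(0.0, total))  # ReLU nonlinearity
    return feature_map


def max_over_time(feature_map):
    """Max pooling over the whole feature map: one value per filter."""
    return max(feature_map)


rng = random.Random(0)
dim = 4
table = {"good": [0.5, 0.1, 0.0, 0.2]}  # stands in for a pre-trained vector
S = embed(["a", "good", "movie"], table, dim, rng)
fmap = conv_narrow(S, [[0.1] * dim, [0.2] * dim])  # one filter, window h = 2
feature = max_over_time(fmap)
```

With several filters of different window sizes, the pooled values would be concatenated into the sentence representation fed to the classifier.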

![CNN for Sentence Classification [1]
• Two channels
• CNN-rand
• CNN-non-static
• CNN-static
• CNN-multichannel](https://image.slidesharecdn.com/convolutionalneuralnetworksforsentimentclassification-151027163337-lva1-app6892/85/Convolutional-neural-networks-for-sentiment-classification-8-320.jpg)
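The variants in [1] differ mainly in how the embedding channels are initialized and whether they are updated: CNN-rand learns randomly initialized vectors, CNN-static keeps pre-trained word2vec vectors fixed, CNN-non-static fine-tunes them, and CNN-multichannel keeps one static and one fine-tuned copy, applying each filter to both channels and adding the results. The sketch below encodes that description; the names `VARIANTS`, `multichannel_feature_map` and `sgd_step`, the toy vectors, and the placeholder gradients are hypothetical, and a real implementation would obtain gradients from an autodiff framework.

```python
import copy

# Embedding channels per variant, as (initialization, fine-tuned during training?).
VARIANTS = {
    "CNN-rand": [("random", True)],
    "CNN-static": [("pretrained", False)],
    "CNN-non-static": [("pretrained", True)],
    "CNN-multichannel": [("pretrained", False), ("pretrained", True)],
}


def response(channel, filt, i):
    """Dot product of an h x d filter with the window starting at word i."""
    return sum(
        w * x
        for r, row in enumerate(filt)
        for w, x in zip(row, channel[i + r])
    )


def multichannel_feature_map(channels, filt):
    """Apply the same filter to every channel, add the results, then ReLU."""
    n = len(channels[0]) - len(filt) + 1
    return [max(0.0, sum(response(ch, filt, i) for ch in channels)) for i in range(n)]


def sgd_step(channels, trainable, grads, lr=0.1):
    """Gradient step that changes only fine-tuned channels; frozen ones are kept."""
    updated = []
    for ch, train, grad in zip(channels, trainable, grads):
        if train:
            ch = [[x - lr * g for x, g in zip(row, grow)] for row, grow in zip(ch, grad)]
        updated.append(ch)
    return updated


pretrained = [[0.2, 0.1], [0.0, 0.3], [0.4, 0.4]]  # toy 3-word sentence, d = 2
spec = VARIANTS["CNN-multichannel"]
channels = [copy.deepcopy(pretrained) for _ in spec]
trainable = [tuned for _, tuned in spec]
fmap = multichannel_feature_map(channels, [[1.0, 1.0], [1.0, 1.0]])
fake_grads = [[[1.0, 1.0] for _ in pretrained] for _ in channels]  # placeholder gradients
channels = sgd_step(channels, trainable, fake_grads)
```

After the step, the static channel still equals the pre-trained vectors while the second channel has moved, which is the intended effect of the multichannel design.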

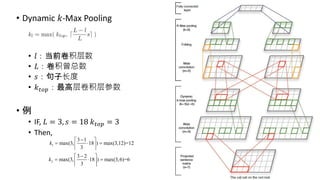

![DCNN Overview [2]
• Convolutional Neural Networks with Dynamic k-Max Pooling
• Wide Convolution
• Dynamic k-Max Pooling
• l: index of the current convolutional layer
• L: total number of convolutional layers
• s: sentence length
• k_top: fixed pooling parameter of the topmost convolutional layer](https://image.slidesharecdn.com/convolutionalneuralnetworksforsentimentclassification-151027163337-lva1-app6892/85/Convolutional-neural-networks-for-sentiment-classification-9-320.jpg)
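With the symbols defined above, DCNN [2] sets the pooling parameter of layer l to

$$k_l = \max\left(k_{top}, \left\lceil \frac{L - l}{L}\, s \right\rceil\right)$$

so shallow layers keep many values and the top layer keeps exactly k_top. A wide convolution pads the input so that every filter weight reaches every word (output length s + m - 1 for a filter of width m), and k-max pooling keeps the k largest values in their original order. The sketch below works on a single row of the sentence matrix; the function names and toy numbers are hypothetical, and the folding layers and nonlinearities of the full model are omitted.

```python
def dynamic_k(l, L, s, k_top):
    """k_l = max(k_top, ceil((L - l) / L * s)), using integer ceiling."""
    return max(k_top, ((L - l) * s + L - 1) // L)


def wide_conv1d(seq, weights):
    """Wide 1-D convolution: pad m - 1 zeros on each side so every weight
    meets every input; the output has s + m - 1 values. (Computed without
    flipping the kernel, i.e. as cross-correlation, as CNN code usually does.)"""
    m = len(weights)
    padded = [0.0] * (m - 1) + list(seq) + [0.0] * (m - 1)
    return [sum(w * padded[i + j] for j, w in enumerate(weights))
            for i in range(len(seq) + m - 1)]


def k_max_pool(seq, k):
    """Keep the k largest values, preserving their original order."""
    top = sorted(range(len(seq)), key=lambda i: seq[i], reverse=True)[:k]
    return [seq[i] for i in sorted(top)]


# Toy setting: L = 3 layers, k_top = 3, sentence length s = 18.
ks = [dynamic_k(l, 3, 18, 3) for l in (1, 2, 3)]  # -> [12, 6, 3]

row = [0.5, -1.0, 2.0, 0.0, 1.5]  # one row (dimension) of a 5-word sentence matrix
conv = wide_conv1d(row, [1.0, 0.5])
pooled = k_max_pool(conv, dynamic_k(1, 2, len(row), 3))  # -> [2.0, 0.75, 1.5]
```

Because k depends on s, sentences of different lengths are reduced gradually, while the fixed k_top gives the top layer a constant-size output.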

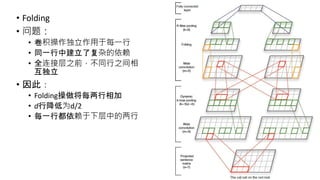

![Semantic Clustering [3]
Sentence Matrix
Semantic Candidate Units
Semantic Units
window size m = 2, 3, …, sentence length/2
Semantic Cliques](https://image.slidesharecdn.com/convolutionalneuralnetworksforsentimentclassification-151027163337-lva1-app6892/85/Convolutional-neural-networks-for-sentiment-classification-12-320.jpg)
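One plausible reading of the slide's pipeline is: slide windows of size m = 2, 3, …, s/2 over the sentence matrix to get semantic candidate units, then keep only those candidates that lie close to a semantic clique. The sketch below is a loose illustration under loud assumptions, not the procedure of [3]: representing a unit by the mean of its word vectors, the Euclidean-distance threshold, and the function names are hypothetical, and the clique centroids are taken as given rather than found by clustering word embeddings.

```python
import math


def candidate_units(matrix, m):
    """All windows of m consecutive word vectors.
    Assumption: each window is represented by the mean of its word vectors."""
    units = []
    for i in range(len(matrix) - m + 1):
        window = matrix[i:i + m]
        units.append([sum(col) / m for col in zip(*window)])
    return units


def semantic_units(matrix, centroids, threshold):
    """Keep candidate units (window sizes m = 2 .. s // 2) whose Euclidean
    distance to the nearest clique centroid is below `threshold` (assumed rule)."""
    kept = []
    for m in range(2, len(matrix) // 2 + 1):
        for unit in candidate_units(matrix, m):
            if min(math.dist(unit, c) for c in centroids) < threshold:
                kept.append((m, unit))
    return kept


S = [[0.0, 0.0], [0.0, 0.0], [5.0, 5.0], [5.0, 5.0]]  # toy 4-word sentence, d = 2
cliques = [[0.0, 0.0], [5.0, 5.0]]  # assumed clique centroids
units = semantic_units(S, cliques, threshold=1.0)
```

Here the mixed window [2.5, 2.5] is far from both centroids and is discarded, while the two homogeneous windows are kept.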

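![seq-CNN [4]
• Inspired by the multiple channels of images (RGB, CMYK), the sentence is treated as an image and each word in it as a pixel, so a d-dimensional word vector can be seen as a pixel with d channels.
• Example (figure): vocabulary, sentence, sentence vector, multiple channels … [0 0 0] [0 0 0] [1 0 0] [0 0 1] [0 1 0]](https://image.slidesharecdn.com/convolutionalneuralnetworksforsentimentclassification-151027163337-lva1-app6892/85/Convolutional-neural-networks-for-sentiment-classification-14-320.jpg)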
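The example vectors on the slide are one-hot, so each word is a pixel whose channels are the vocabulary entries. A minimal sketch of seq-CNN style inputs, assuming a toy three-word vocabulary and all-zero vectors for out-of-vocabulary words (both assumptions; the function names are hypothetical): the one-hot vectors of p consecutive words are concatenated into a region vector, and a filter is one weight per (position, channel) pair.

```python
def one_hot(tokens, vocab):
    """One |V|-dimensional one-hot vector per token; out-of-vocabulary tokens
    become all-zero vectors (an assumption made for this sketch)."""
    index = {w: i for i, w in enumerate(vocab)}
    vectors = []
    for tok in tokens:
        v = [0] * len(vocab)
        if tok in index:
            v[index[tok]] = 1
        vectors.append(v)
    return vectors


def seq_regions(vectors, p):
    """Region vectors: concatenate the one-hot vectors of p consecutive words
    (stride 1), giving p * |V| values that keep word order."""
    return [[x for v in vectors[i:i + p] for x in v]
            for i in range(len(vectors) - p + 1)]


def filter_response(region, weights, bias=0.0):
    """A convolution filter on a region: one weight per (position, channel), then ReLU."""
    return max(0.0, bias + sum(w * x for w, x in zip(weights, region)))


vocab = ["I", "love", "it"]
X = one_hot(["I", "love", "it"], vocab)
regions = seq_regions(X, p=2)
# regions == [[1, 0, 0, 0, 1, 0], [0, 1, 0, 0, 0, 1]]
```

Concatenating rather than summing the one-hot vectors is what lets the filter distinguish "I love" from "love I".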

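![Enrich word vectors
• Character-level embeddings are used: the word vector and the character-level vector are concatenated to form the word's representation. [5]
• Traditional text features are used to extend the word vectors, mainly: number of all-caps words, emoticons, elongated words (elongated units), number of sentiment words, negation words, punctuation, clusters, and n-grams. [6]](https://image.slidesharecdn.com/convolutionalneuralnetworksforsentimentclassification-151027163337-lva1-app6892/85/Convolutional-neural-networks-for-sentiment-classification-15-320.jpg)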
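Both enrichment ideas amount to concatenating extra values onto a vector. The sketch below is a simplified illustration, not the models of [5] or [6]: the sizes, random initialization, toy word lists, and function names are hypothetical, and the text features are computed over the whole token list (an assumption) rather than per word. The character-level vector is built by convolving over windows of characters and max-pooling each output dimension; clusters and n-grams are omitted.

```python
import random
import re
from collections import defaultdict

CHAR_DIM, WINDOW, OUT_DIM = 2, 3, 4  # illustrative sizes, not from [5]
rng = random.Random(0)
# Lazily created random character embeddings (a trainable table in a real model).
char_emb = defaultdict(lambda: [rng.uniform(-0.1, 0.1) for _ in range(CHAR_DIM)])
char_weights = [[rng.uniform(-0.1, 0.1) for _ in range(WINDOW * CHAR_DIM)]
                for _ in range(OUT_DIM)]


def char_vector(word):
    """Character-level vector: convolve over windows of WINDOW characters,
    then max-pool each output dimension over all windows of the word."""
    chars = list(word) + ["<pad>"] * max(0, WINDOW - len(word))
    windows = [[x for c in chars[i:i + WINDOW] for x in char_emb[c]]
               for i in range(len(chars) - WINDOW + 1)]
    return [max(sum(w * x for w, x in zip(row, win)) for win in windows)
            for row in char_weights]


def enrich(word_vec, word):
    """Concatenate the word-level and character-level vectors."""
    return word_vec + char_vector(word)


NEGATIONS = {"not", "no", "never", "don't"}  # hypothetical toy list
EMOTICONS = {":)", ":(", ":D"}  # hypothetical toy list


def surface_features(tokens, sentiment_lexicon):
    """Counts for some of the features listed above (clusters, n-grams omitted)."""
    return [
        sum(t.isalpha() and t.isupper() and len(t) > 1 for t in tokens),  # all-caps words
        sum(bool(re.search(r"(\w)\1\1", t)) for t in tokens),  # elongated, e.g. "sooo"
        sum(t in EMOTICONS for t in tokens),  # emoticons
        sum(t.lower() in sentiment_lexicon for t in tokens),  # sentiment words
        sum(t.lower() in NEGATIONS for t in tokens),  # negation words
        sum(bool(re.fullmatch(r"[!?]+", t)) for t in tokens),  # runs of ! or ?
    ]


tokens = ["this", "movie", "is", "sooo", "GOOD", "!!!", ":)"]
word_vec = [0.3, -0.2, 0.1]  # stands in for a pre-trained vector of "GOOD"
enriched = enrich(word_vec, "GOOD")
features = surface_features(tokens, {"good"})  # -> [1, 1, 1, 1, 0, 1]
```

Character-level vectors help with rare or misspelled words that have no good word vector, while the surface counts capture cues such as emphasis that word embeddings may miss.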

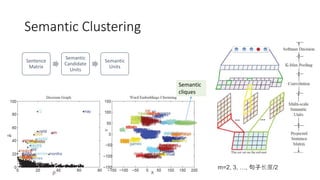

![MVCNN: Multichannel Variable-Size Convolution [7]
• Different word embeddings cover different vocabularies:
  • HLBL
  • Huang
  • GloVe
  • SENNA
  • Word2vec
• Handling words that are unknown to some of the embeddings:
  • Randomly initialized
  • Projection (mutual learning): argmin ||ŵ_j - w_j||², where ŵ_j is the word's vector projected from another embedding version i into version j](https://image.slidesharecdn.com/convolutionalneuralnetworksforsentimentclassification-151027163337-lva1-app6892/85/Convolutional-neural-networks-for-sentiment-classification-16-320.jpg)
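The projection option can be sketched as fitting a map from embedding version i to version j on the words both versions share, minimizing the squared error ||ŵ_j - w_j||², and then using it to fill in version-j vectors for words only version i knows. This sketch assumes a linear map trained with plain batch gradient descent on toy 2-d data; the function names, learning rate, and data are hypothetical and not taken from [7].

```python
import random


def project(W, v):
    """Apply a linear map W (d_j x d_i) to a version-i vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def projection_loss(W, pairs):
    """Mean of ||W w_i - w_j||^2 over words present in both embedding versions."""
    return sum(
        sum((p - t) ** 2 for p, t in zip(project(W, src), tgt))
        for src, tgt in pairs
    ) / len(pairs)


def fit_projection(pairs, d_i, d_j, lr=0.05, steps=500, seed=0):
    """Plain batch gradient descent on projection_loss (assumed linear map)."""
    rng = random.Random(seed)
    W = [[rng.uniform(-0.1, 0.1) for _ in range(d_i)] for _ in range(d_j)]
    n = len(pairs)
    for _ in range(steps):
        grad = [[0.0] * d_i for _ in range(d_j)]
        for src, tgt in pairs:
            err = [p - t for p, t in zip(project(W, src), tgt)]
            for r in range(d_j):
                for c in range(d_i):
                    grad[r][c] += 2.0 * err[r] * src[c] / n
        W = [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W, grad)]
    return W


# Toy shared vocabulary: version-j vectors are an exact linear function of version-i ones.
pairs = [
    ([1.0, 0.0], [1.0, 1.0]),
    ([0.0, 1.0], [1.0, -1.0]),
    ([1.0, 1.0], [2.0, 0.0]),
    ([0.5, -0.5], [0.0, 1.0]),
]
W = fit_projection(pairs, d_i=2, d_j=2)
# A word known only in version i gets an estimated version-j vector (about [3, 1]).
filled = project(W, [2.0, 1.0])
```

Compared with random initialization, a projected vector carries information learned by the other embedding, at the cost of fitting one map per pair of versions.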

![References
[1] Kim, Y. (2014). Convolutional neural networks for sentence classification. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP).
[2] Kalchbrenner, N., Grefenstette, E., & Blunsom, P. (2014). A convolutional neural network for modelling sentences. In Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers).
[3] Wang, P., Xu, J., Xu, B., Liu, C. L., Zhang, H., Wang, F., & Hao, H. (2015). Semantic clustering and convolutional neural network for short text categorization. In Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Vol. 2, pp. 352-357).
[4] Johnson, R., & Zhang, T. (2015). Effective use of word order for text categorization with convolutional neural networks. In Proceedings of the 2015 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies.
[5] dos Santos, C. N., & Gatti, M. (2014). Deep convolutional neural networks for sentiment analysis of short texts. In Proceedings of the 25th International Conference on Computational Linguistics (COLING), Dublin, Ireland.
[6] Tang, D., Wei, F., Qin, B., Liu, T., & Zhou, M. (2014). Coooolll: A deep learning system for Twitter sentiment classification. In Proceedings of the 8th International Workshop on Semantic Evaluation (SemEval 2014) (pp. 208-212).
[7] Yin, W., & Schütze, H. (2015). Multichannel variable-size convolution for sentence classification. In Proceedings of the 19th SIGNLL Conference on Computational Natural Language Learning (CoNLL 2015, long paper), July 30-31, Beijing, China.](https://image.slidesharecdn.com/convolutionalneuralnetworksforsentimentclassification-151027163337-lva1-app6892/85/Convolutional-neural-networks-for-sentiment-classification-18-320.jpg)