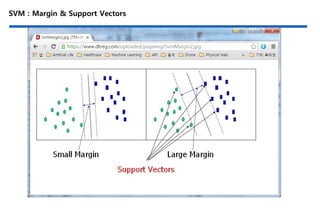

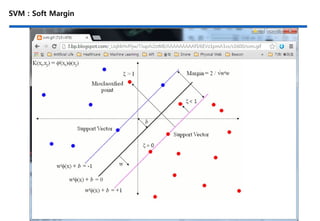

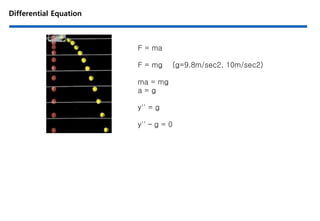

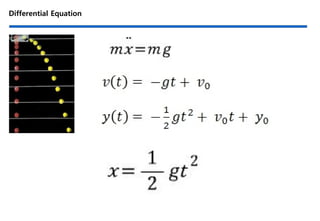

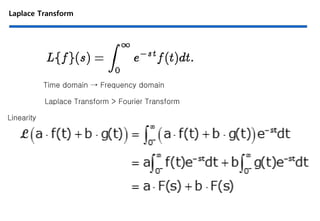

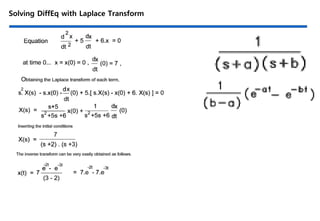

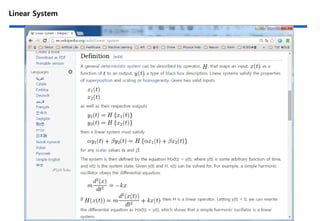

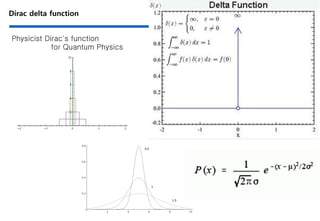

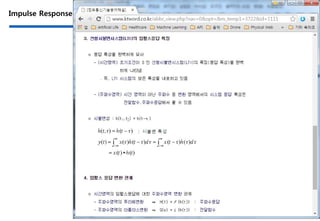

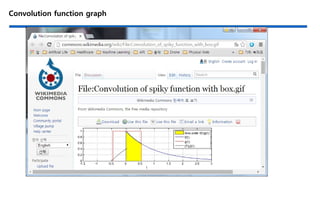

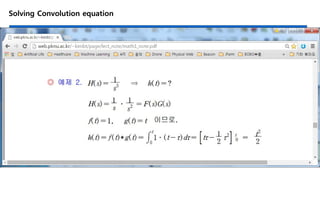

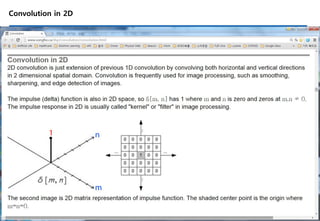

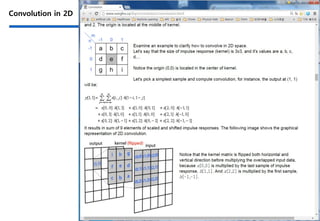

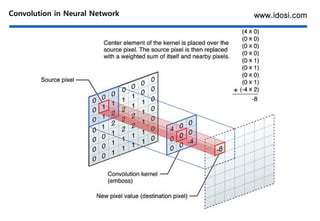

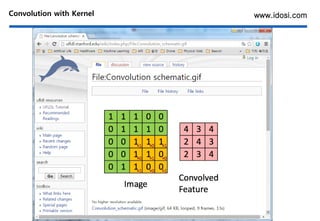

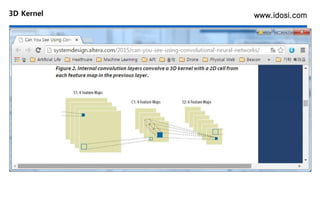

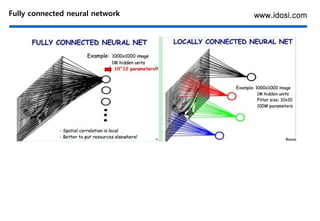

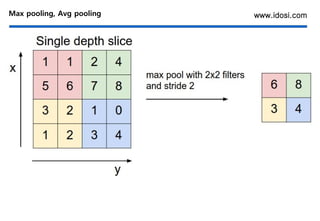

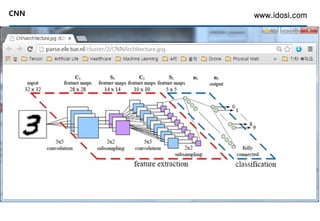

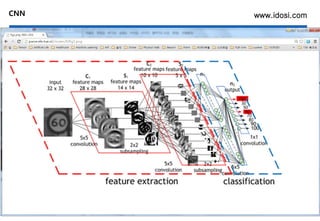

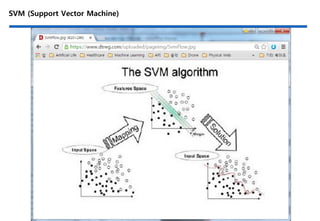

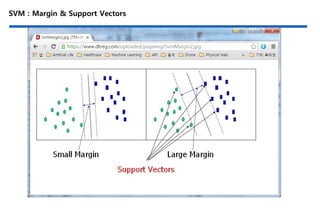

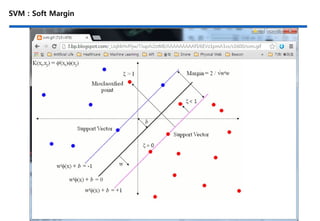

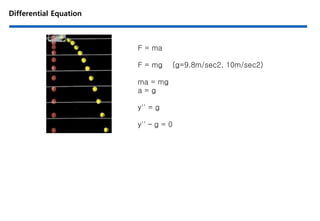

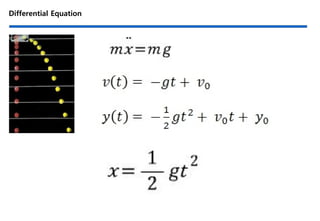

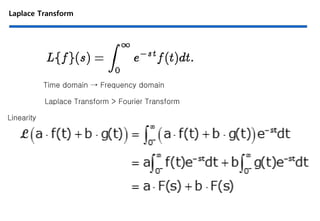

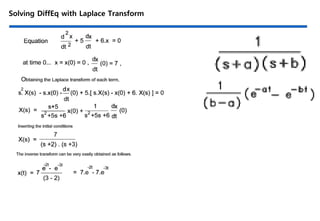

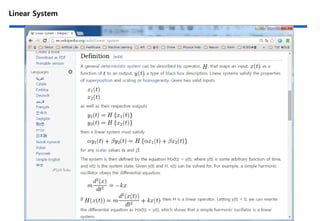

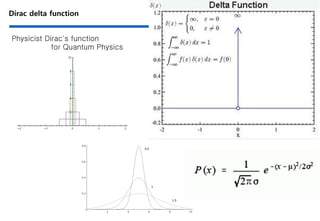

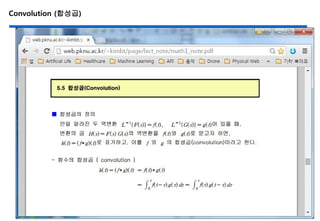

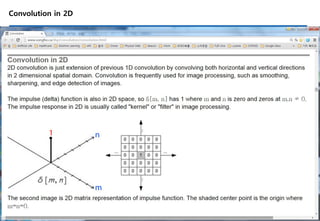

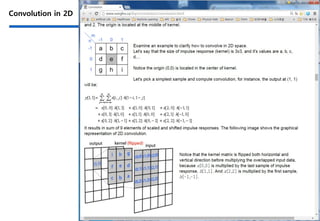

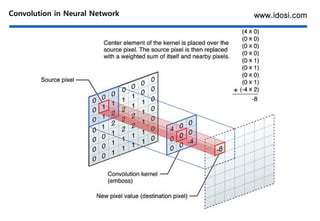

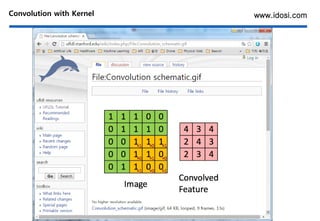

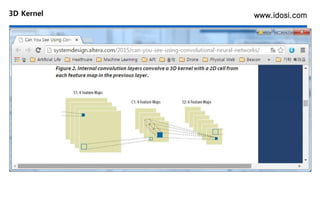

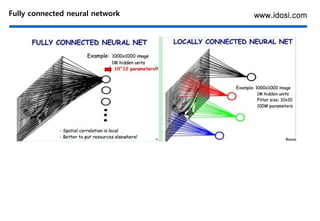

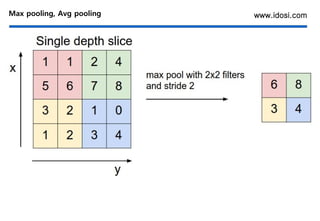

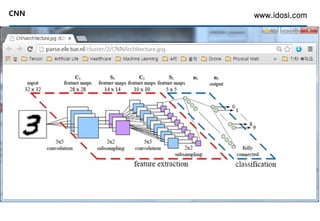

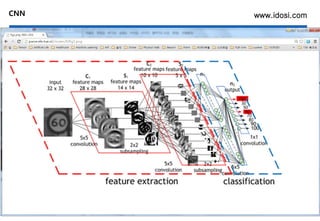

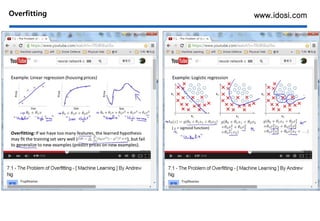

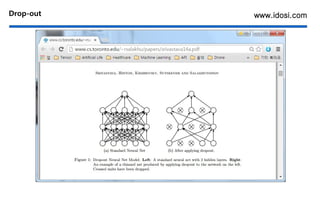

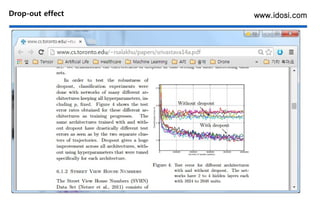

This document discusses deep learning concepts, focusing on convolutional neural networks (CNNs) and related mathematical foundations such as differential equations and the Laplace transform. It covers feature addition in support vector machines (SVMs), convolution operations in 2D and 3D, pooling techniques, and methods to address overfitting in neural networks. It also references websites and tools related to deep learning research and applications.