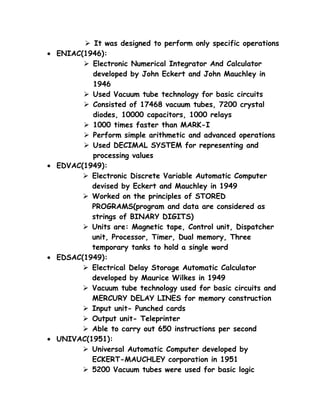

The document classifies computers into five generations according to their underlying technology. The first generation used vacuum tubes, the second transistors, the third integrated circuits (ICs), and the fourth microprocessors. The fifth generation aims to give computers human-like reasoning capabilities, drawing on ultra-large-scale integration (ULSI) and artificial intelligence. The document also covers other ways of classifying computers: by purpose, by size, and by operating principle.