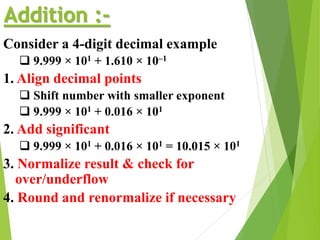

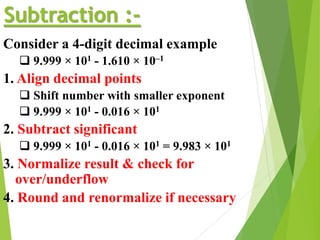

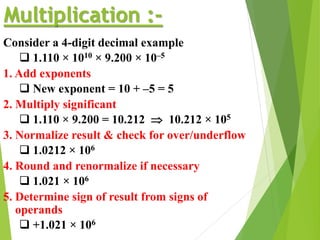

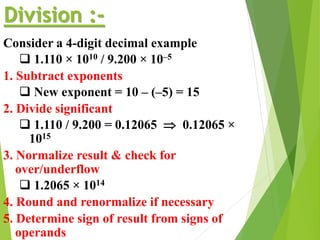

The document discusses floating point arithmetic, including its representation, operations, and pitfalls. It outlines the structure of 32-bit floating point numbers as per the IEEE standard and explains normalization, which ensures the mantissa is non-zero. Additionally, it details how to perform arithmetic operations like addition, subtraction, multiplication, and division with floating point numbers, emphasizing the steps for aligning, normalizing, and adjusting exponents.