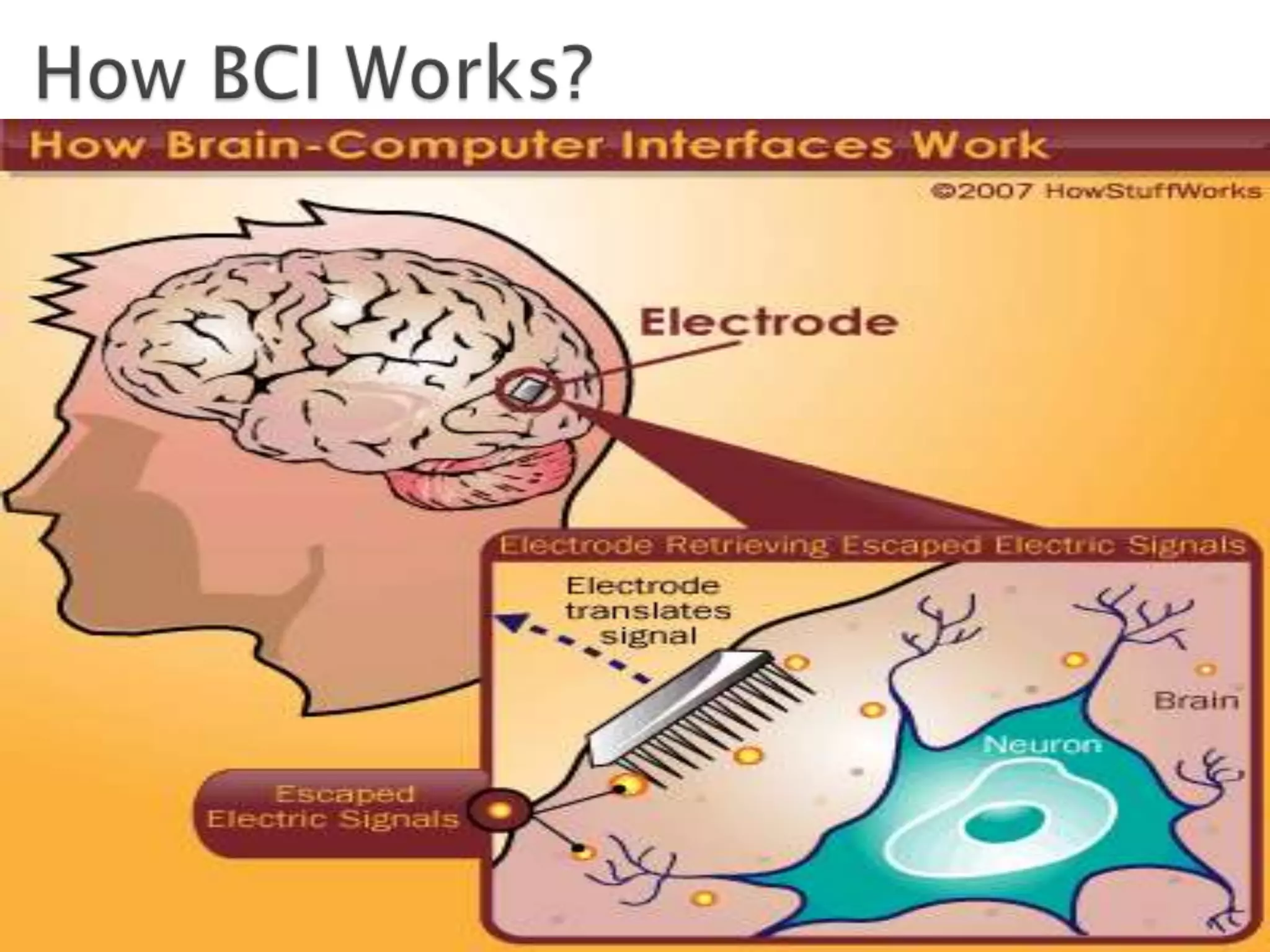

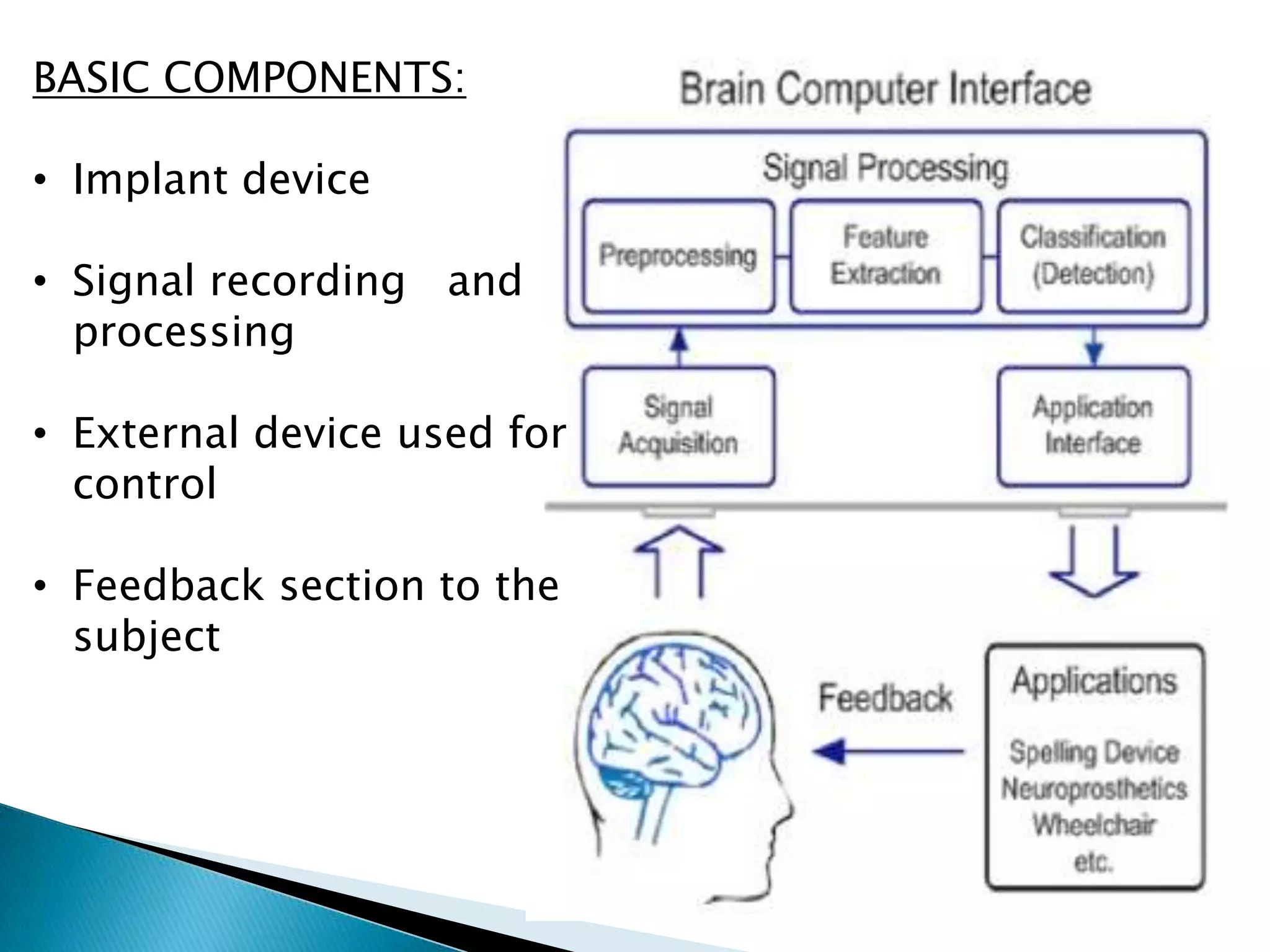

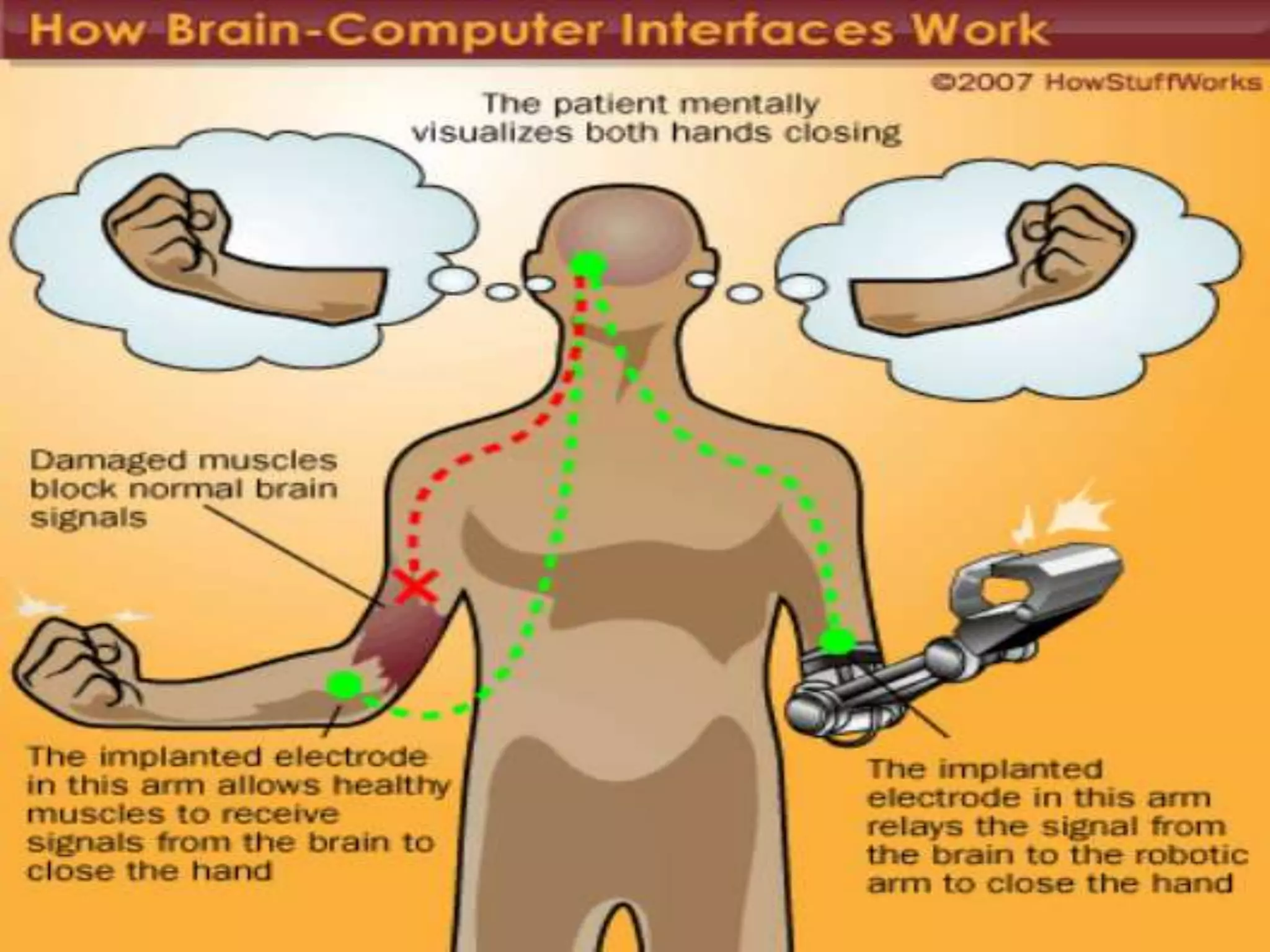

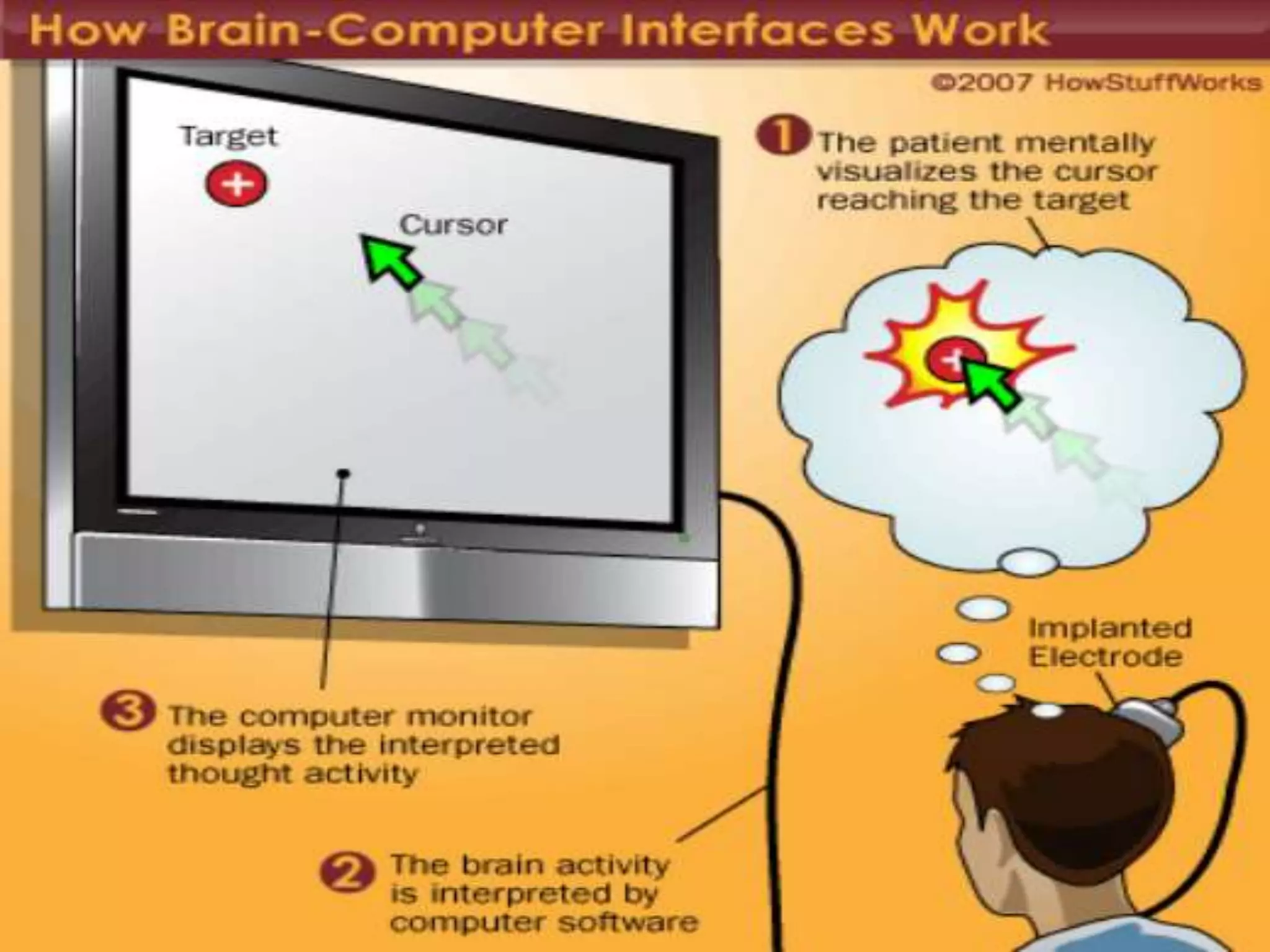

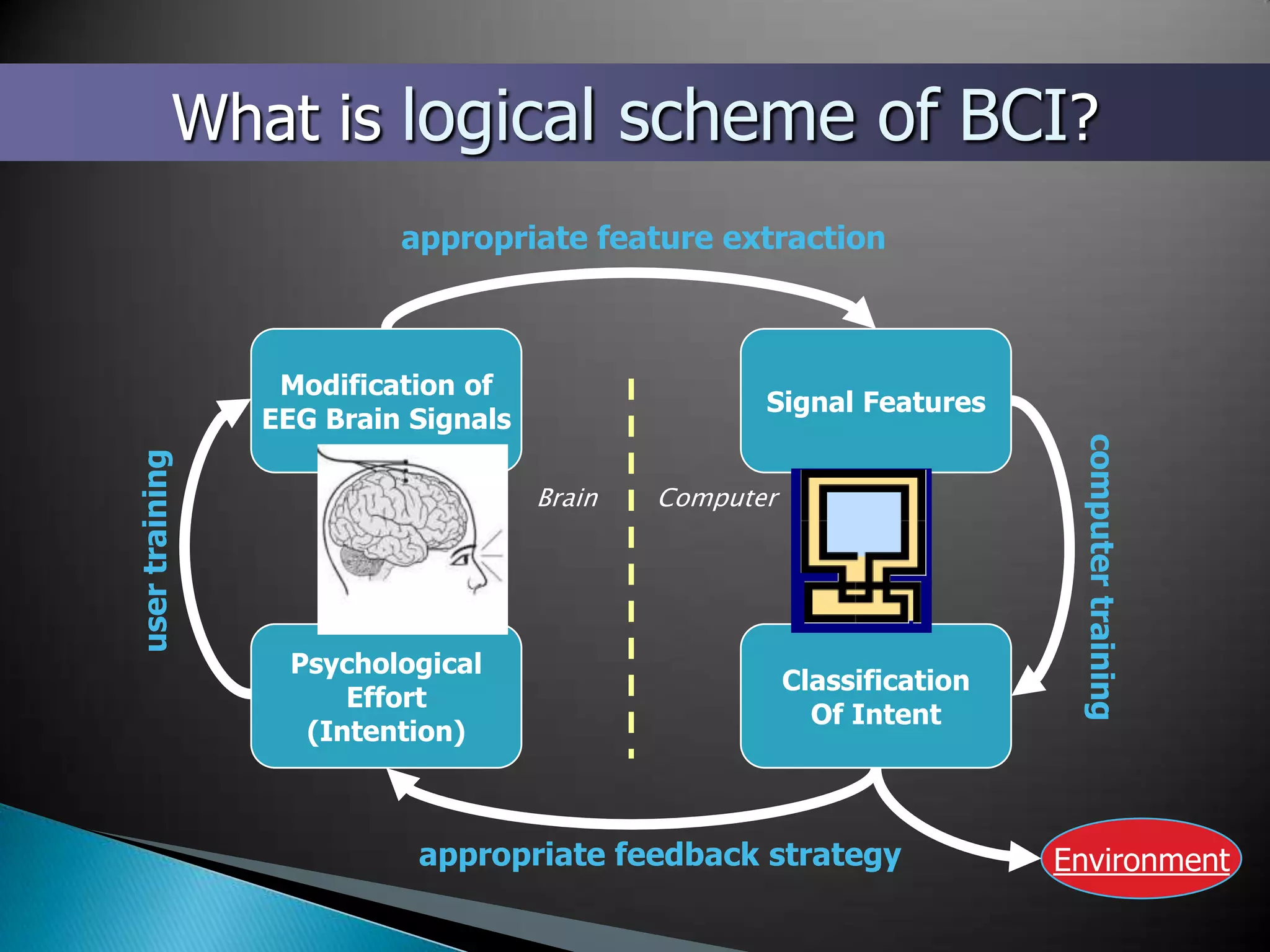

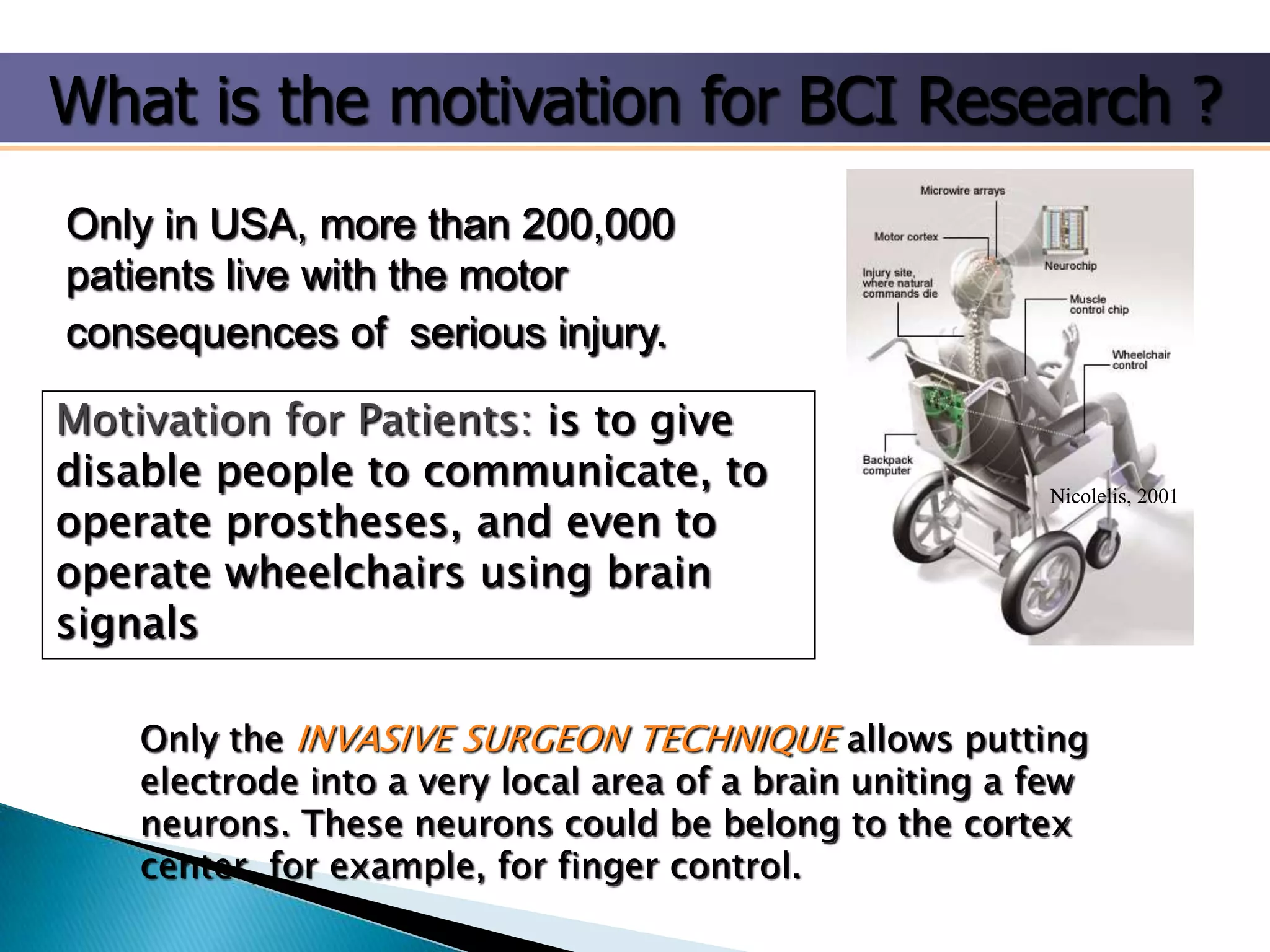

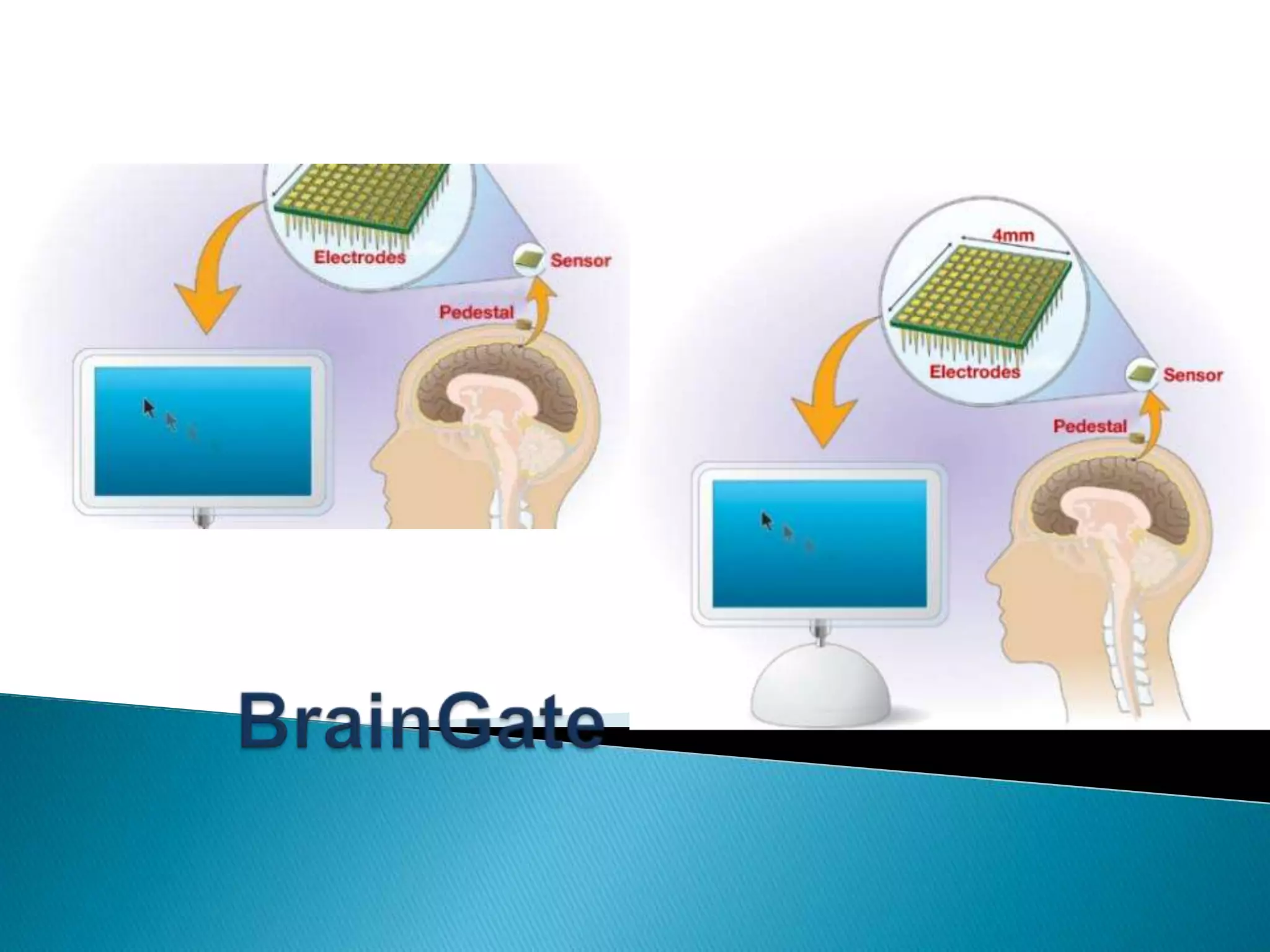

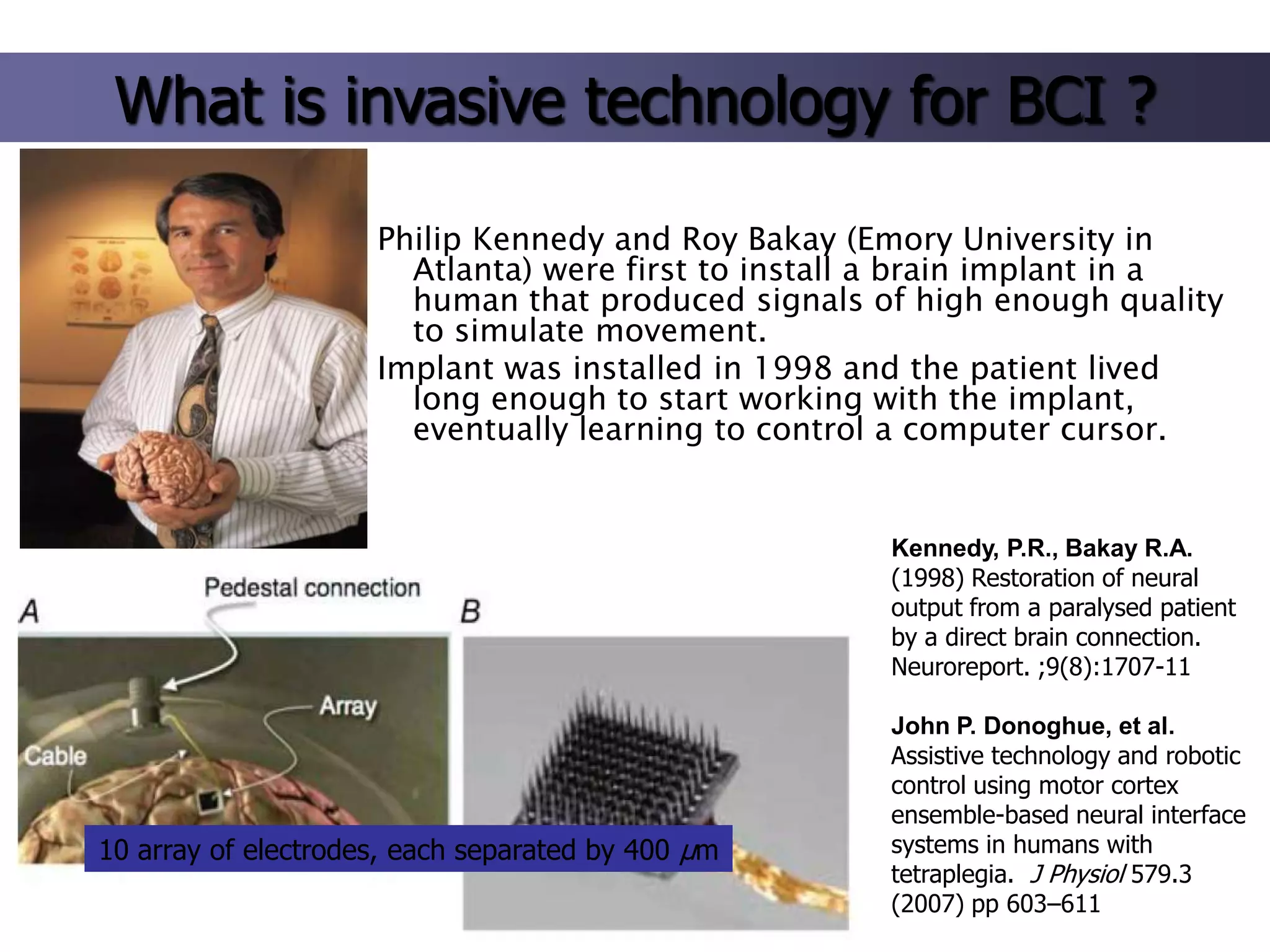

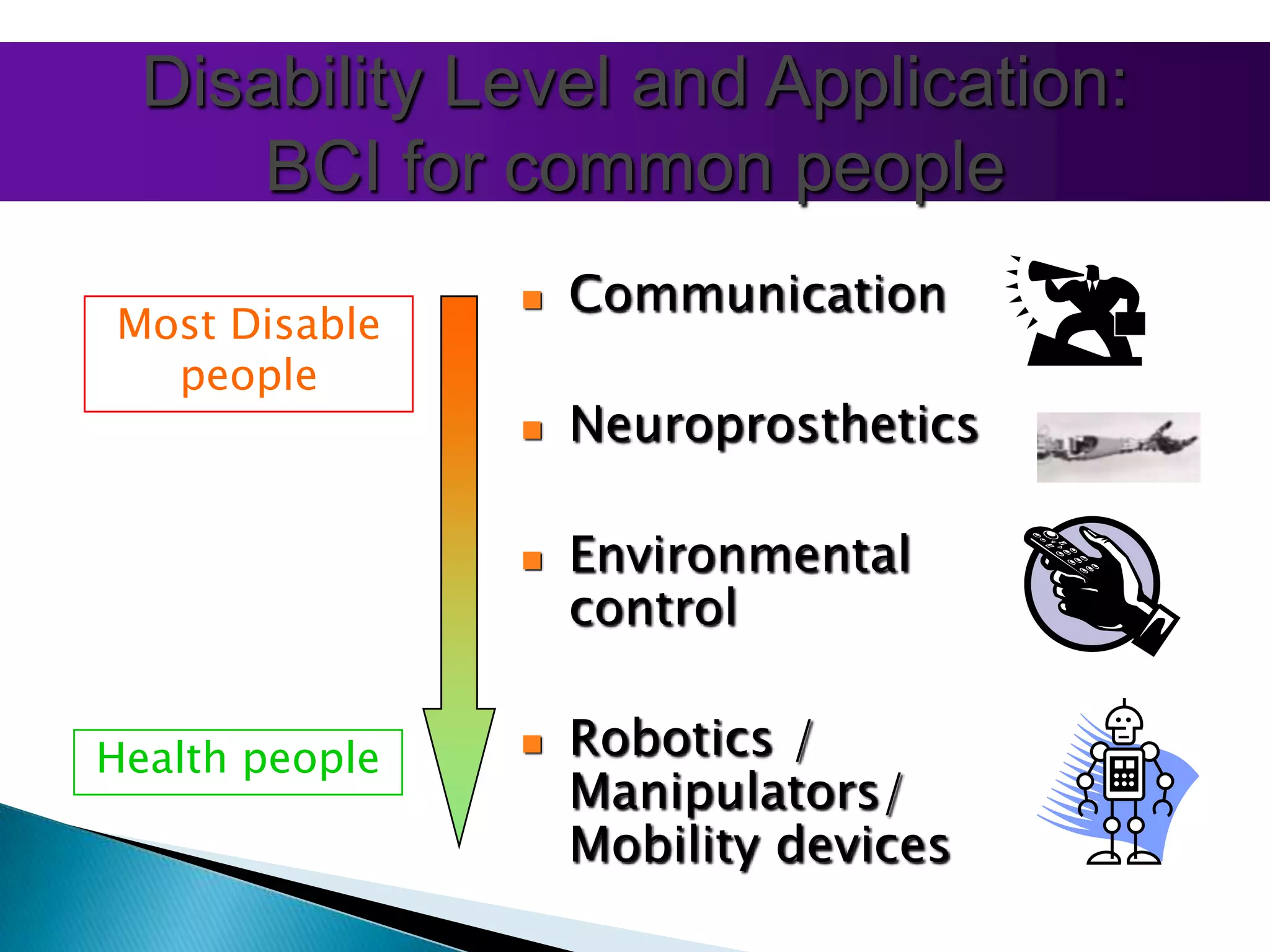

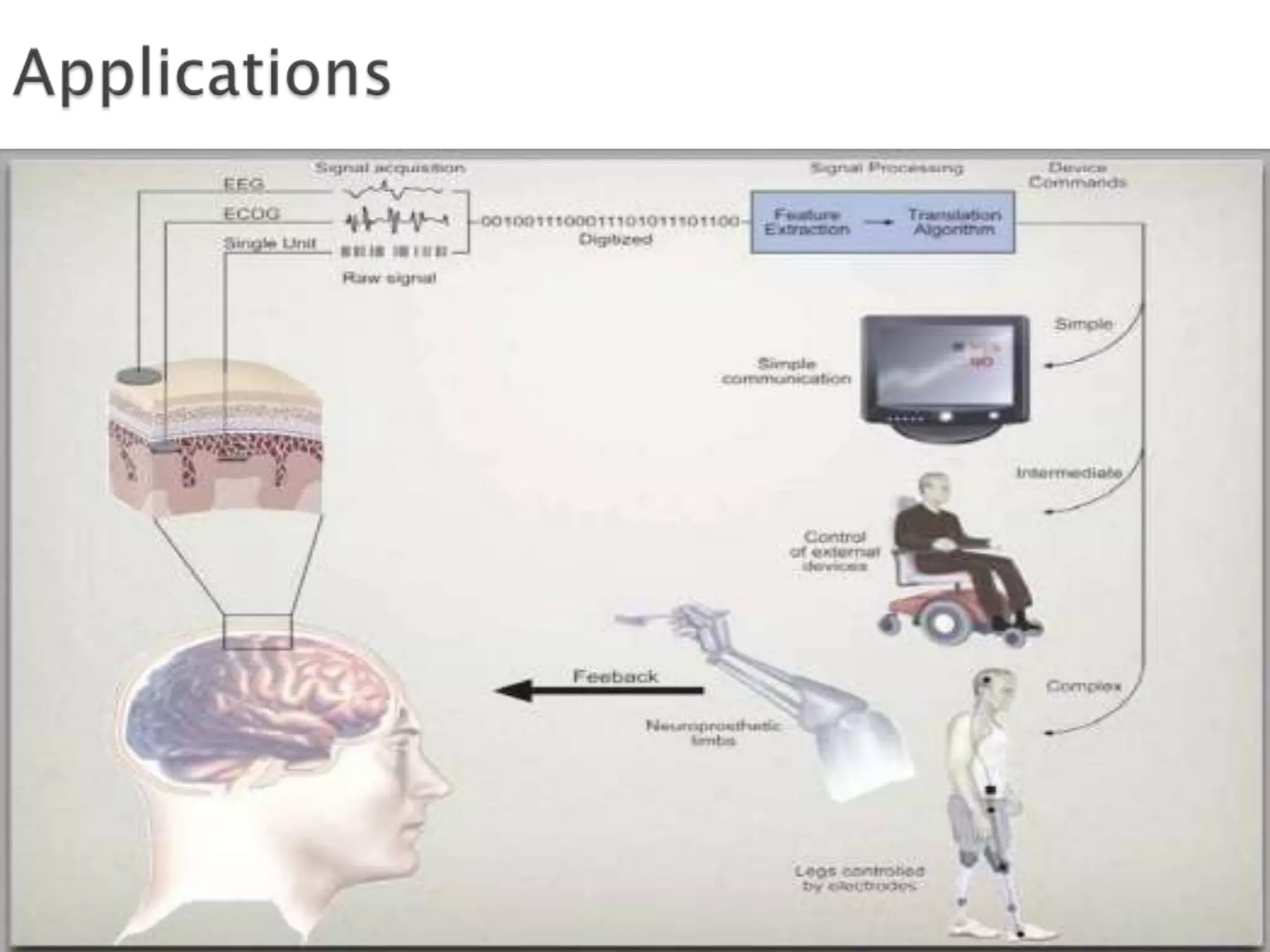

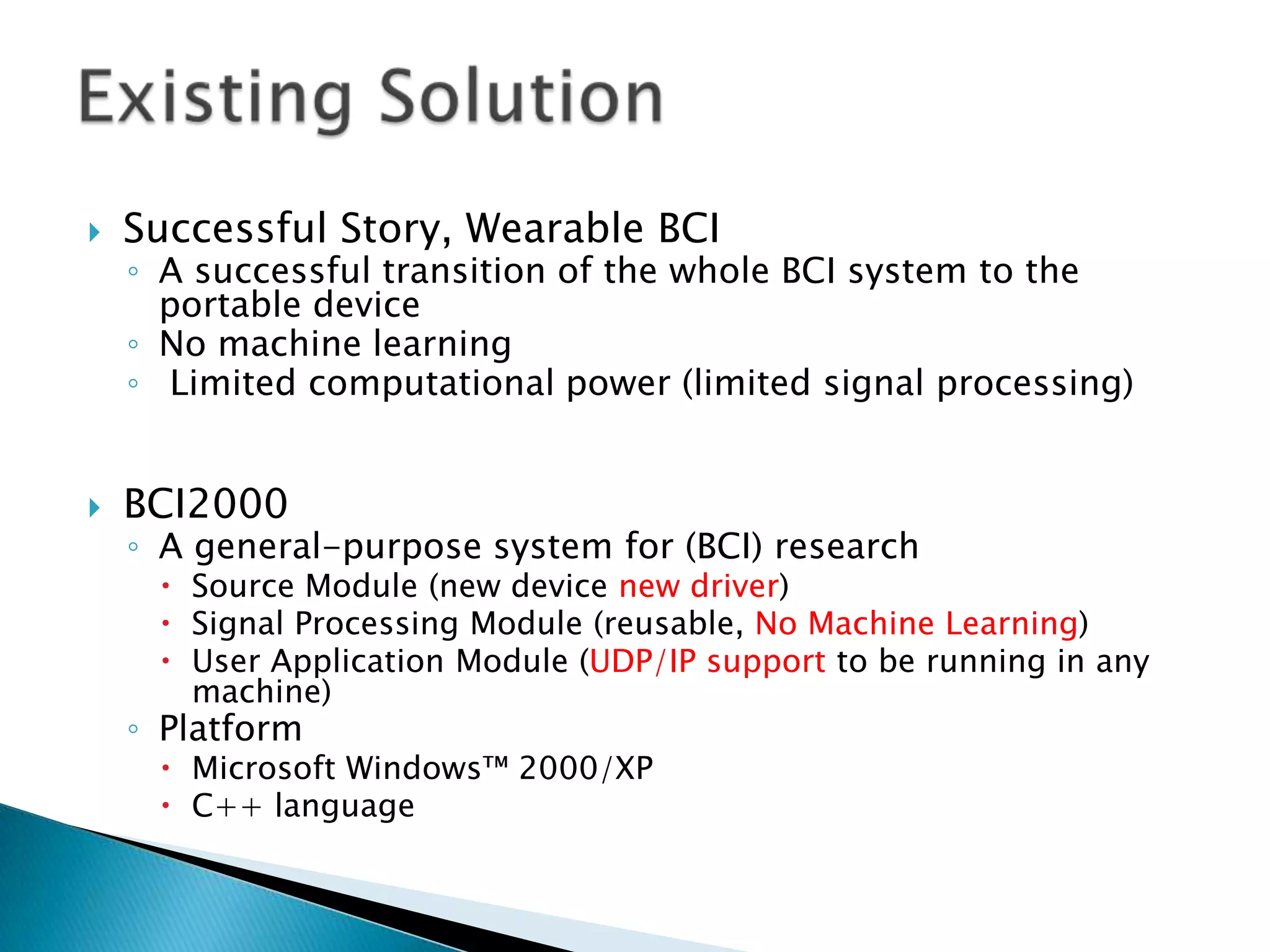

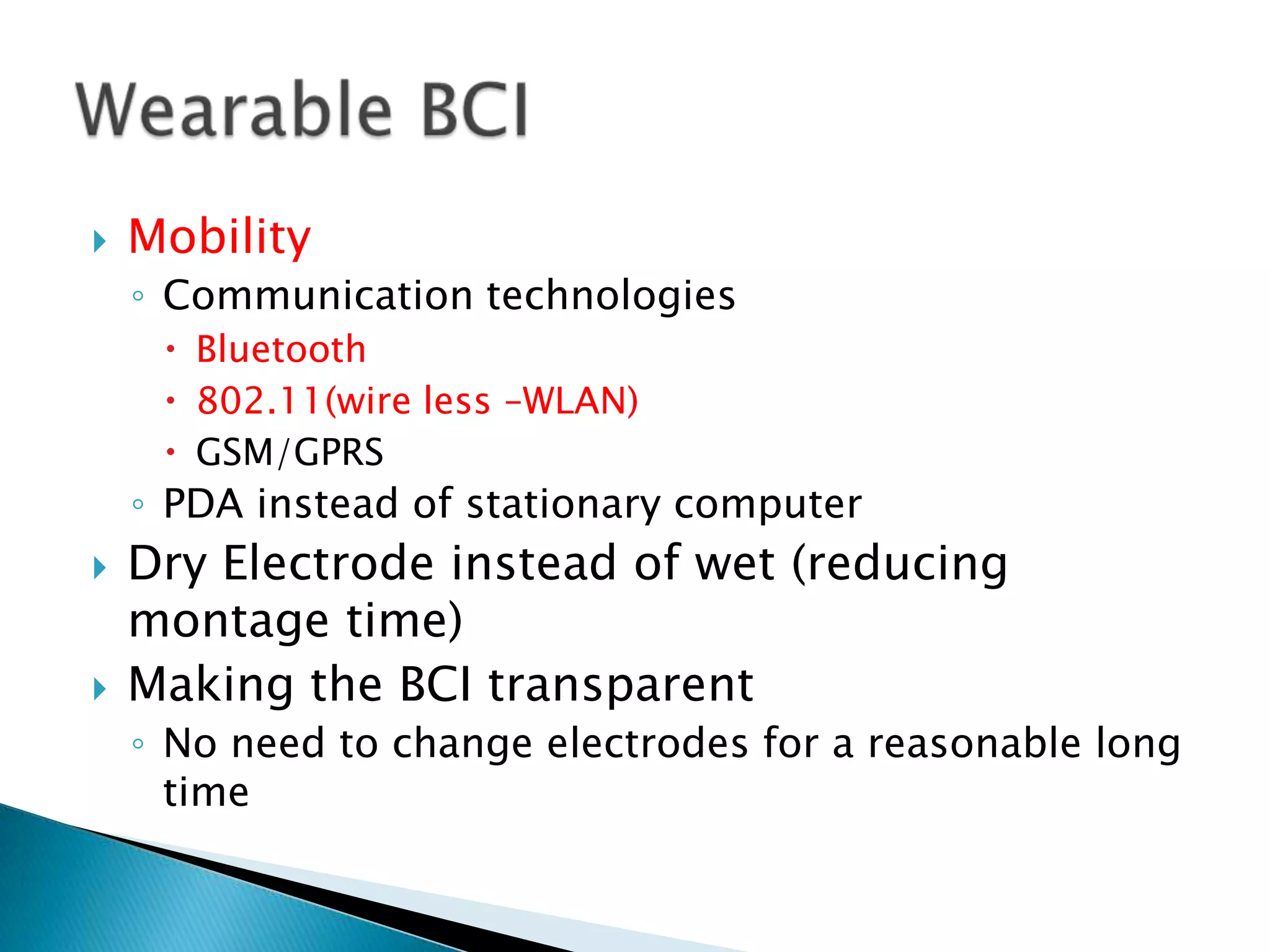

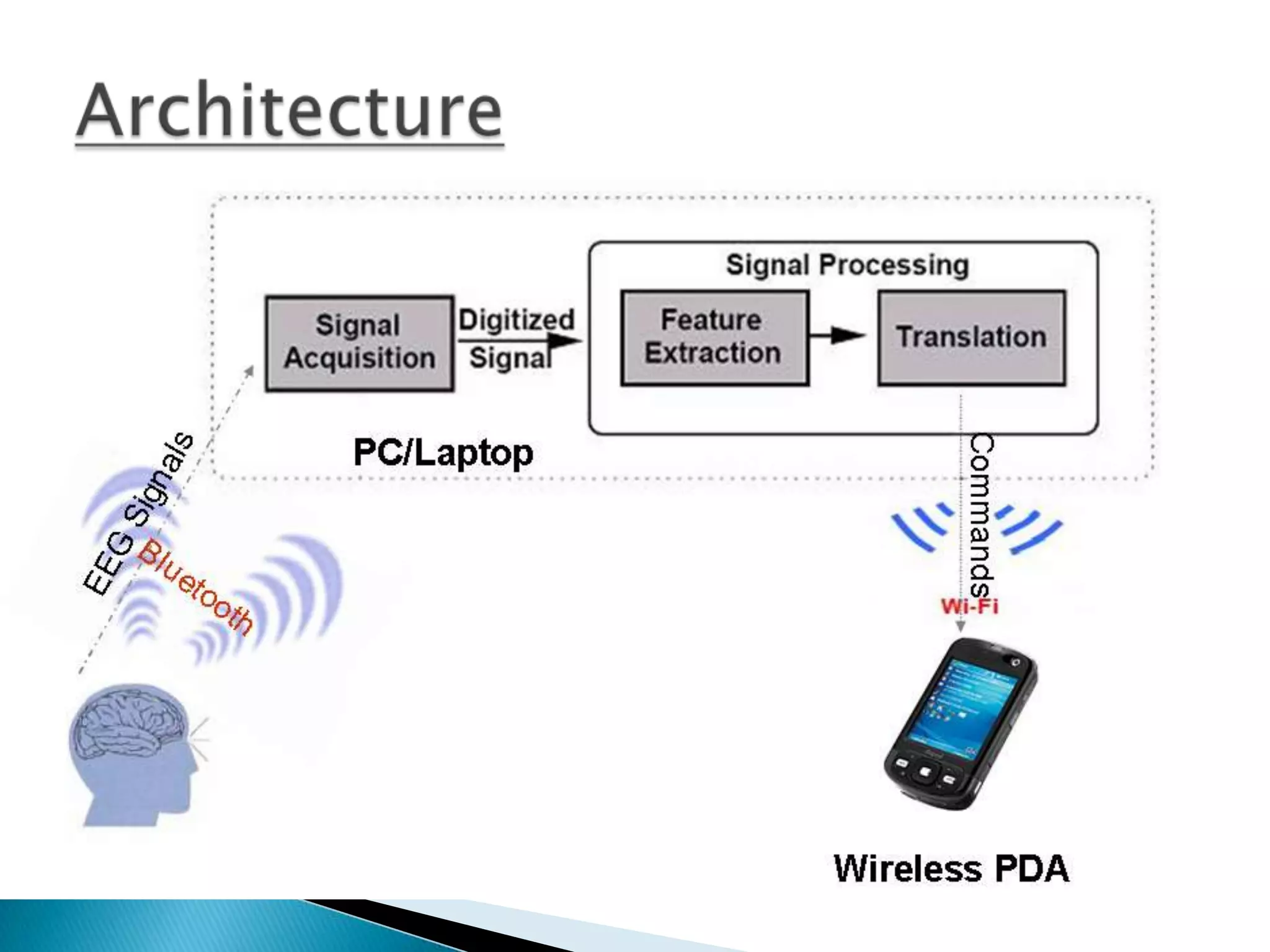

The document discusses brain-computer interfaces (BCIs), which connect the human brain to external devices and enable communication and control through thought. It covers the history, types (invasive and non-invasive), components, and potential applications of BCI technology, as well as the challenges and advancements in the field. BCIs are portrayed as a promising way to improve the lives of individuals with disabilities, with implications for technologies such as robotics and virtual reality.

![“A Brain-Computer Interface is a communication system that does not depend on peripheral nerves and muscles.” [J. R. Wolpaw et al., “Brain-computer interface technology: A review of the first international meeting,” IEEE Trans. Rehab. Eng., vol. 8, no. 2, pp. 164–173, 2000]](https://image.slidesharecdn.com/braincomputerinterfacesbyudaymcgill-131013022609-phpapp01/75/Brain-Computer-Interfaces-BCI-4-2048.jpg)

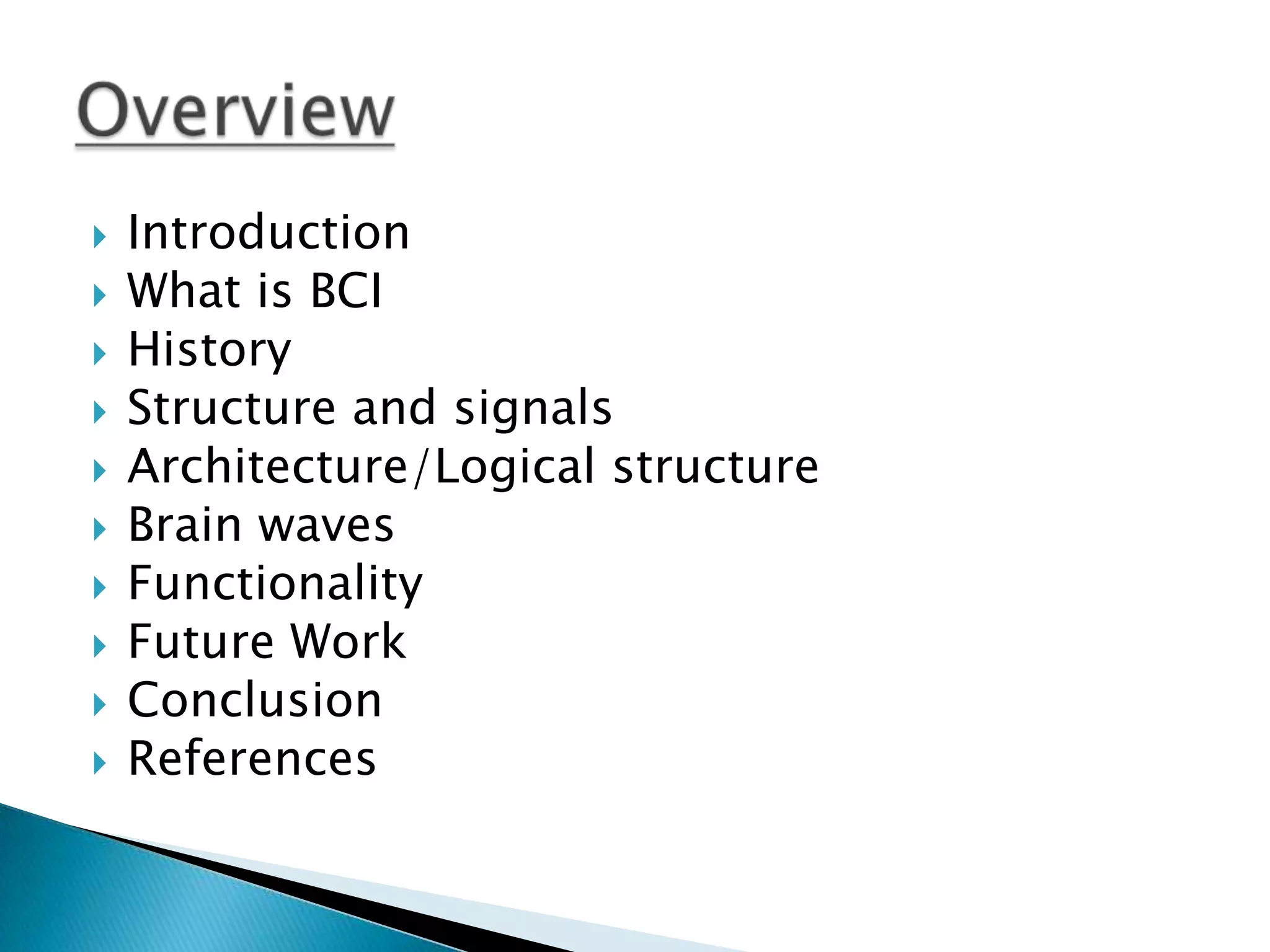

![Theta waves [4, 7.5] Hz are associated with reverie, daydreaming, meditation, and creative ideas. Delta waves [0, 4] Hz are associated with deep sleep; in the awake state they were thought to indicate physical defects in the brain.](https://image.slidesharecdn.com/braincomputerinterfacesbyudaymcgill-131013022609-phpapp01/75/Brain-Computer-Interfaces-BCI-28-2048.jpg)
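The frequency bands named on the slide above can be illustrated with a small sketch that estimates which band carries the most power in a signal. This is only an illustration under stated assumptions, not a method taken from the deck: the function names `band_power` and `dominant_band` are hypothetical, the test signals are synthetic sine waves rather than real EEG, the shared 4 Hz boundary is assigned to theta, and a naive discrete Fourier transform is used to keep the example dependency-free (a real pipeline would more likely use an FFT library and proper filtering).

```python
import cmath
import math

# Band edges in Hz as given on the slide. Assumption: each band is treated
# as half-open [low, high), so the shared 4 Hz boundary falls in theta.
BANDS = {"delta": (0.0, 4.0), "theta": (4.0, 7.5)}


def band_power(signal, fs, low, high):
    """Sum the squared DFT magnitudes of the bins whose frequency is in [low, high)."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # remove the constant (DC) offset
    total = 0.0
    # Start at k = 1 to skip the DC bin; stop at the Nyquist bin.
    for k in range(1, n // 2 + 1):
        freq = k * fs / n
        if low <= freq < high:
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(centered)
            )
            total += abs(coeff) ** 2
    return total


def dominant_band(signal, fs):
    """Return the name of the band in BANDS with the largest power."""
    powers = {
        name: band_power(signal, fs, low, high)
        for name, (low, high) in BANDS.items()
    }
    return max(powers, key=powers.get)


# Synthetic stand-ins for EEG: 4 seconds sampled at 128 Hz.
fs = 128
n_samples = 4 * fs
theta_like = [math.sin(2 * math.pi * 6.0 * i / fs) for i in range(n_samples)]
delta_like = [math.sin(2 * math.pi * 2.0 * i / fs) for i in range(n_samples)]

print(dominant_band(theta_like, fs))
print(dominant_band(delta_like, fs))
```

With a 4-second window the frequency resolution is 0.25 Hz, so the 6 Hz and 2 Hz test tones fall exactly on DFT bins inside the theta and delta ranges respectively.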
