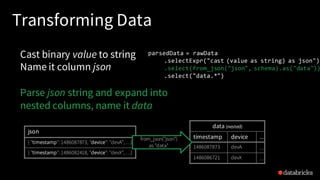

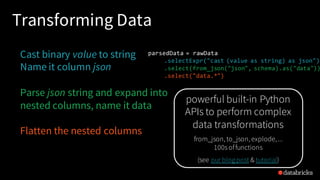

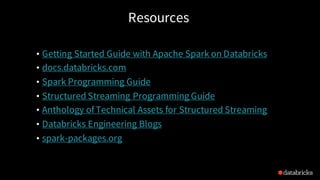

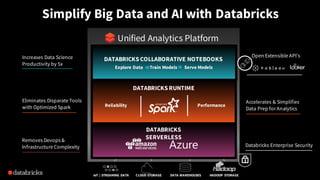

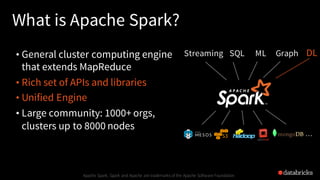

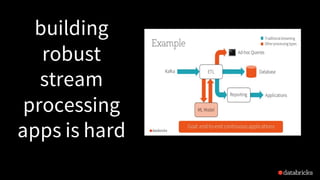

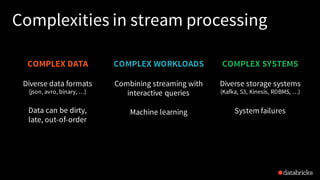

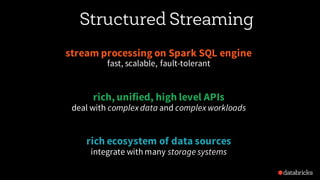

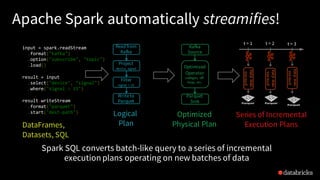

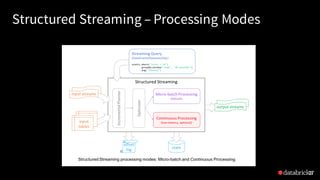

The document discusses the use of Apache Spark for building continuous applications with structured streaming, emphasizing the challenges of stream processing and advantages of using Spark. It covers various components such as data integration, transformation, and real-time analytics, showcasing the power of structured streaming in dealing with complex data. The talk includes a demonstration of continuous applications and resources for further exploration of Spark's capabilities.

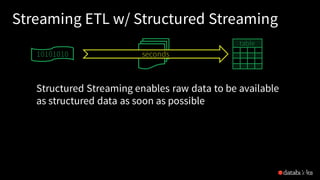

![Traditional ETL

• Raw, dirty, un/semi-structured is data dumped as files

• Periodic jobs run every few hours to convert raw data to structured

data ready for further analytics

• Hours of delay before taking decisions on latest data

• Problem: Unacceptable when time is of essence

• [intrusion detection, anomaly detection,etc.]

38

file

dump

seconds hours

table

10101010](https://image.slidesharecdn.com/pysparkstructuredstreamingfinal-180409215523/85/Writing-Continuous-Applications-with-Structured-Streaming-in-PySpark-38-320.jpg)

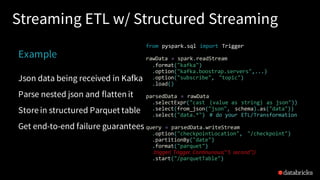

![Reading from Kafka

raw_data_df = spark.readStream

.format("kafka")

.option("kafka.boostrap.servers",...)

.option("subscribe", "topic")

.load()

rawData dataframe has

the following columns

key value topic partition offset timestamp

[binary] [binary] "topicA" 0 345 1486087873

[binary] [binary] "topicB" 3 2890 1486086721](https://image.slidesharecdn.com/pysparkstructuredstreamingfinal-180409215523/85/Writing-Continuous-Applications-with-Structured-Streaming-in-PySpark-41-320.jpg)