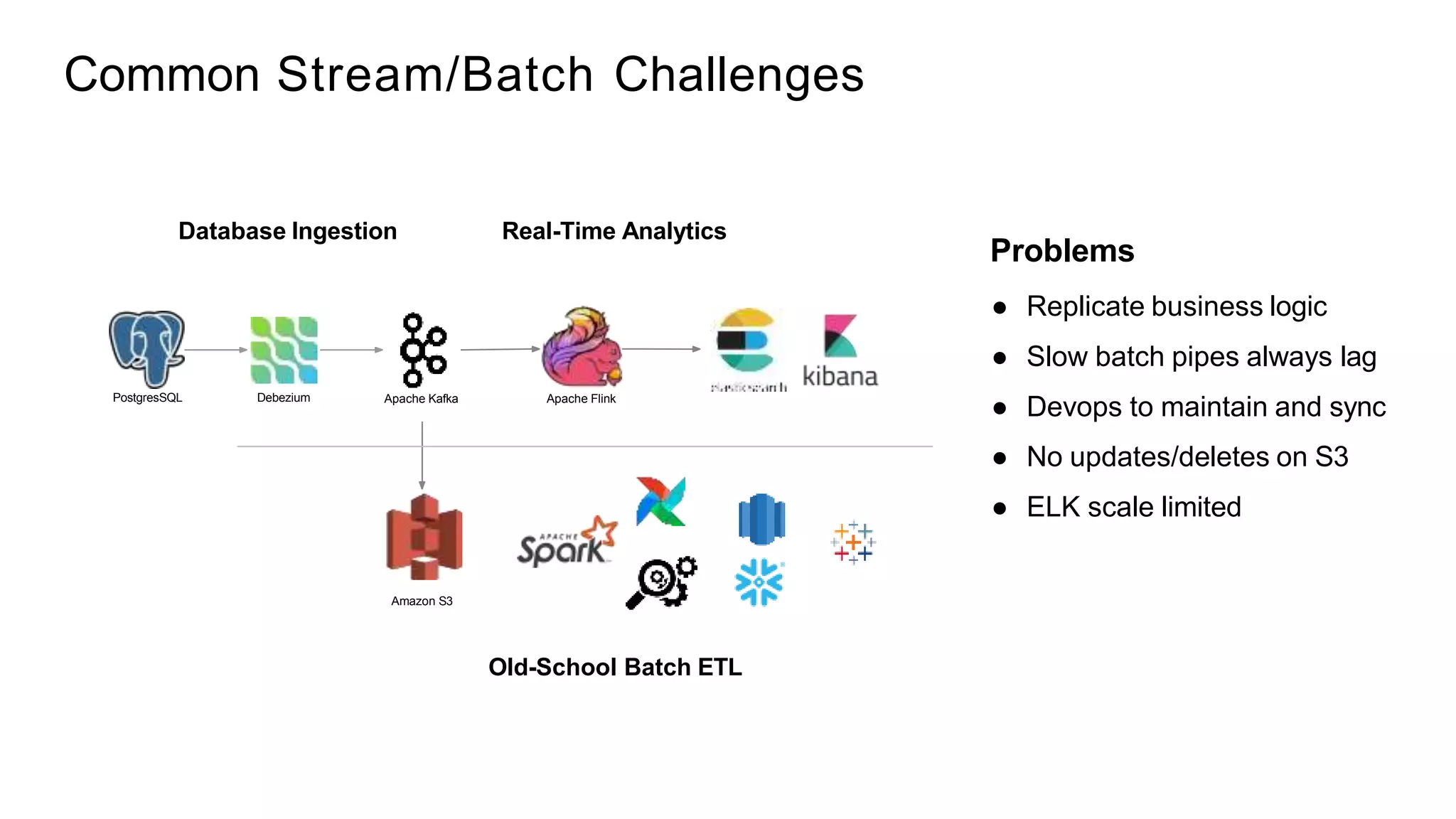

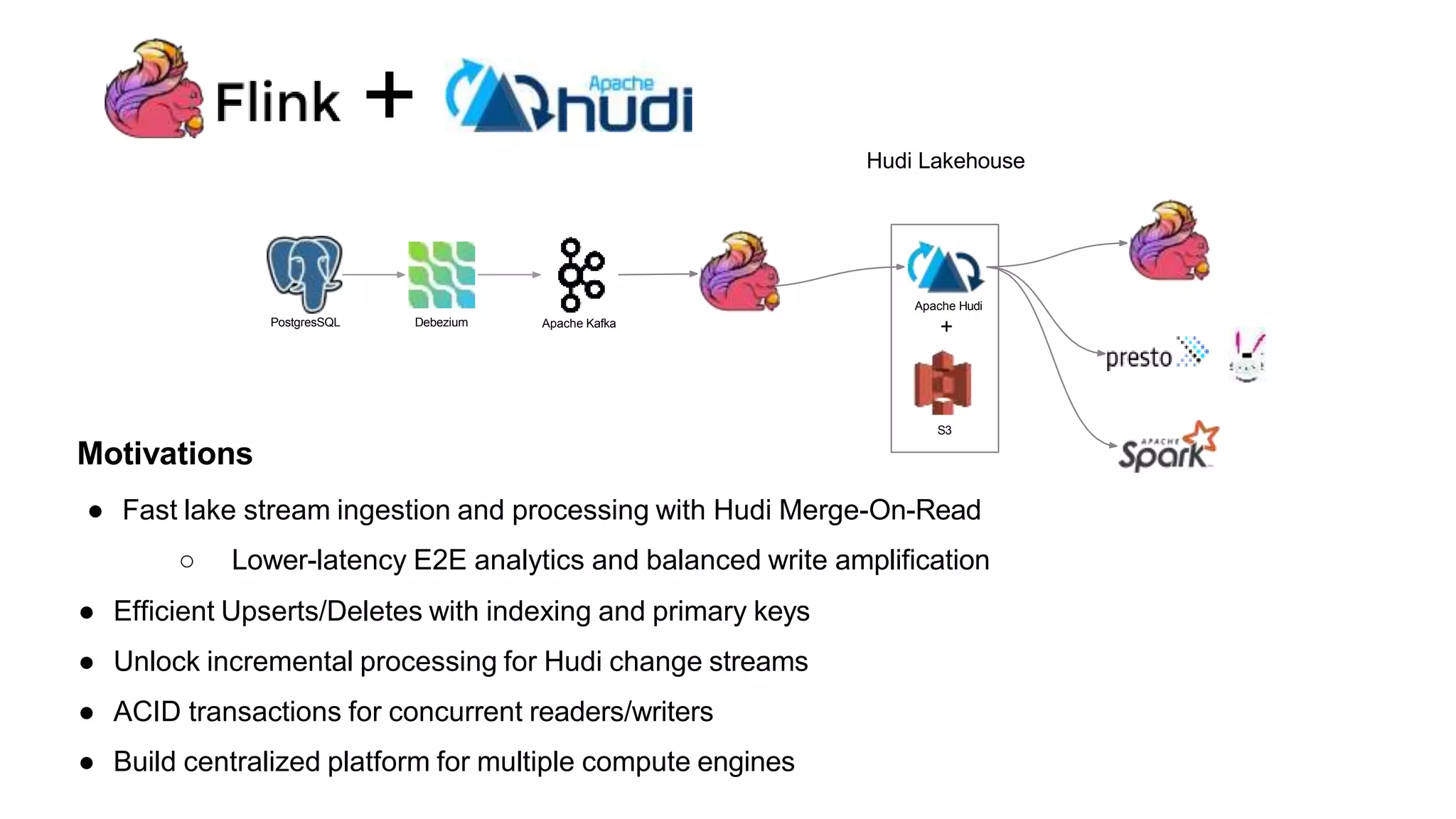

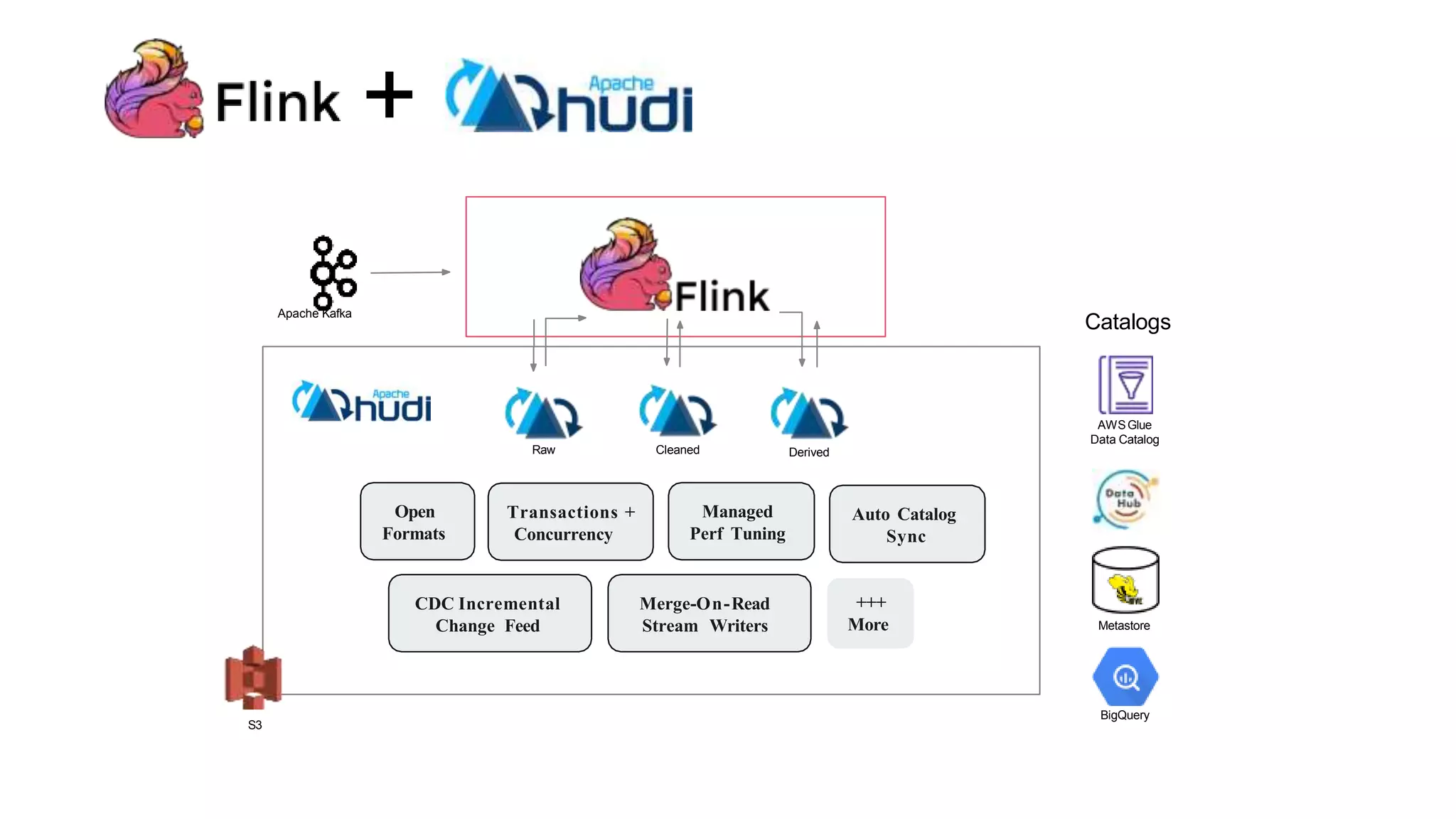

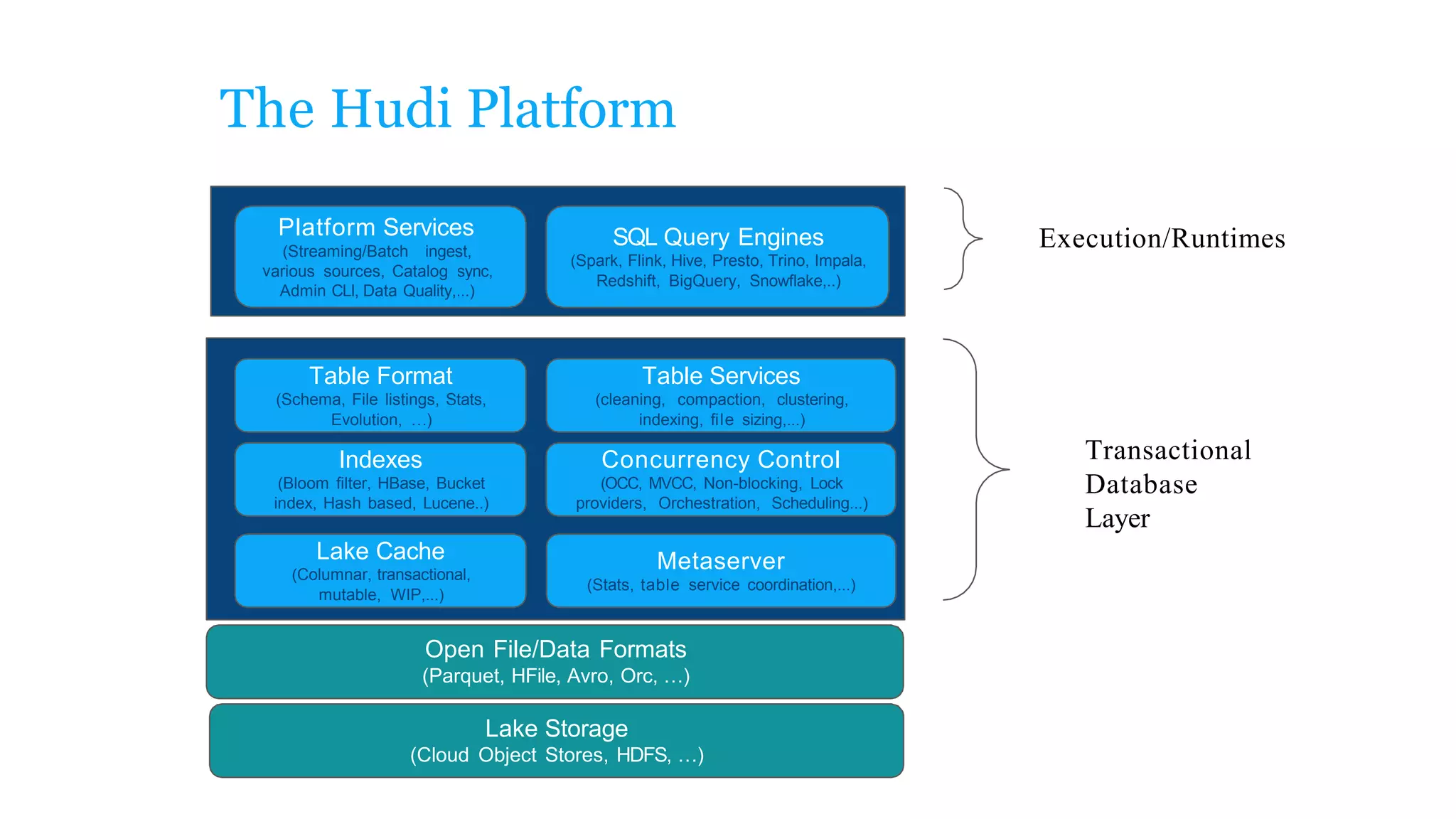

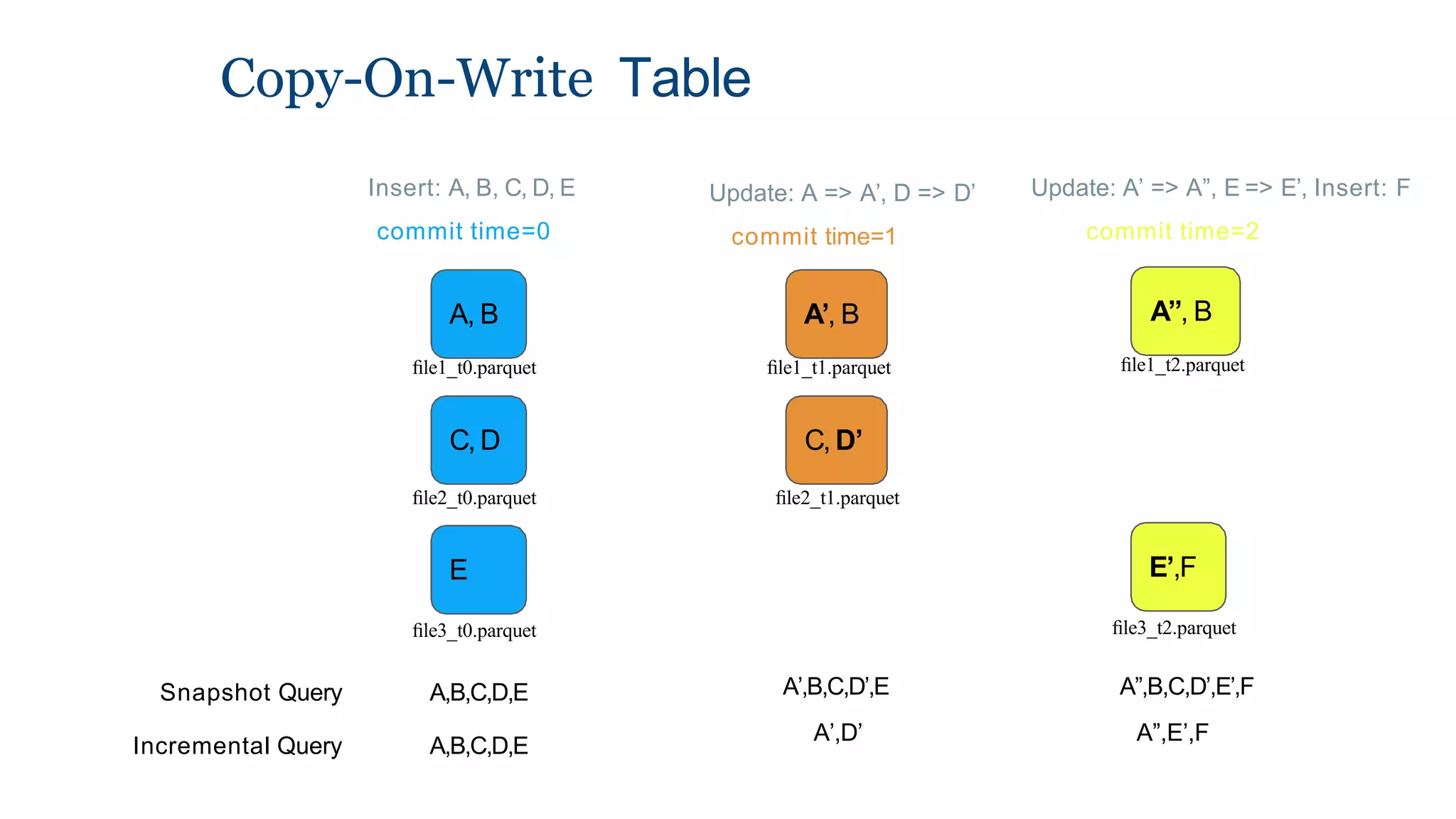

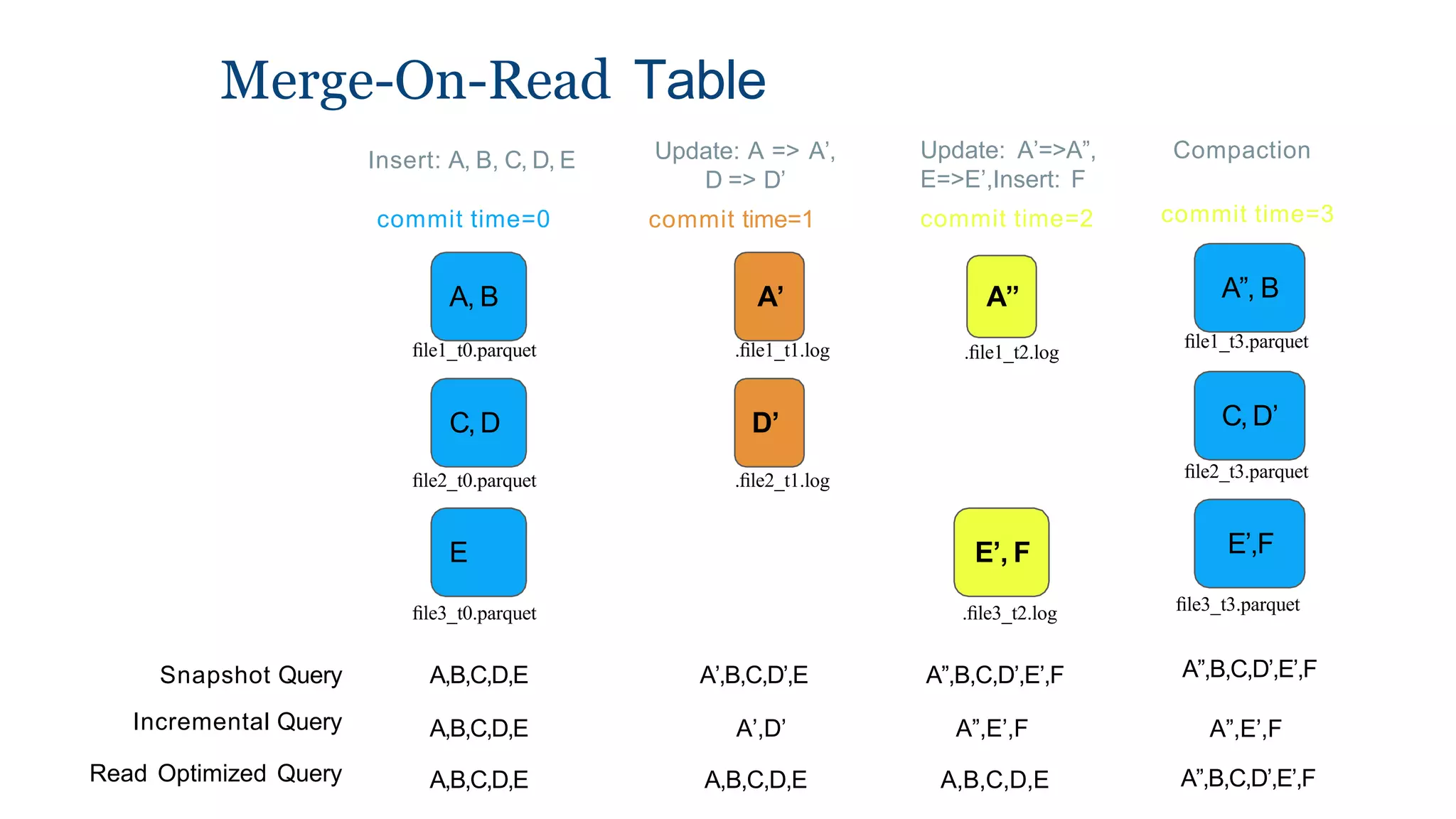

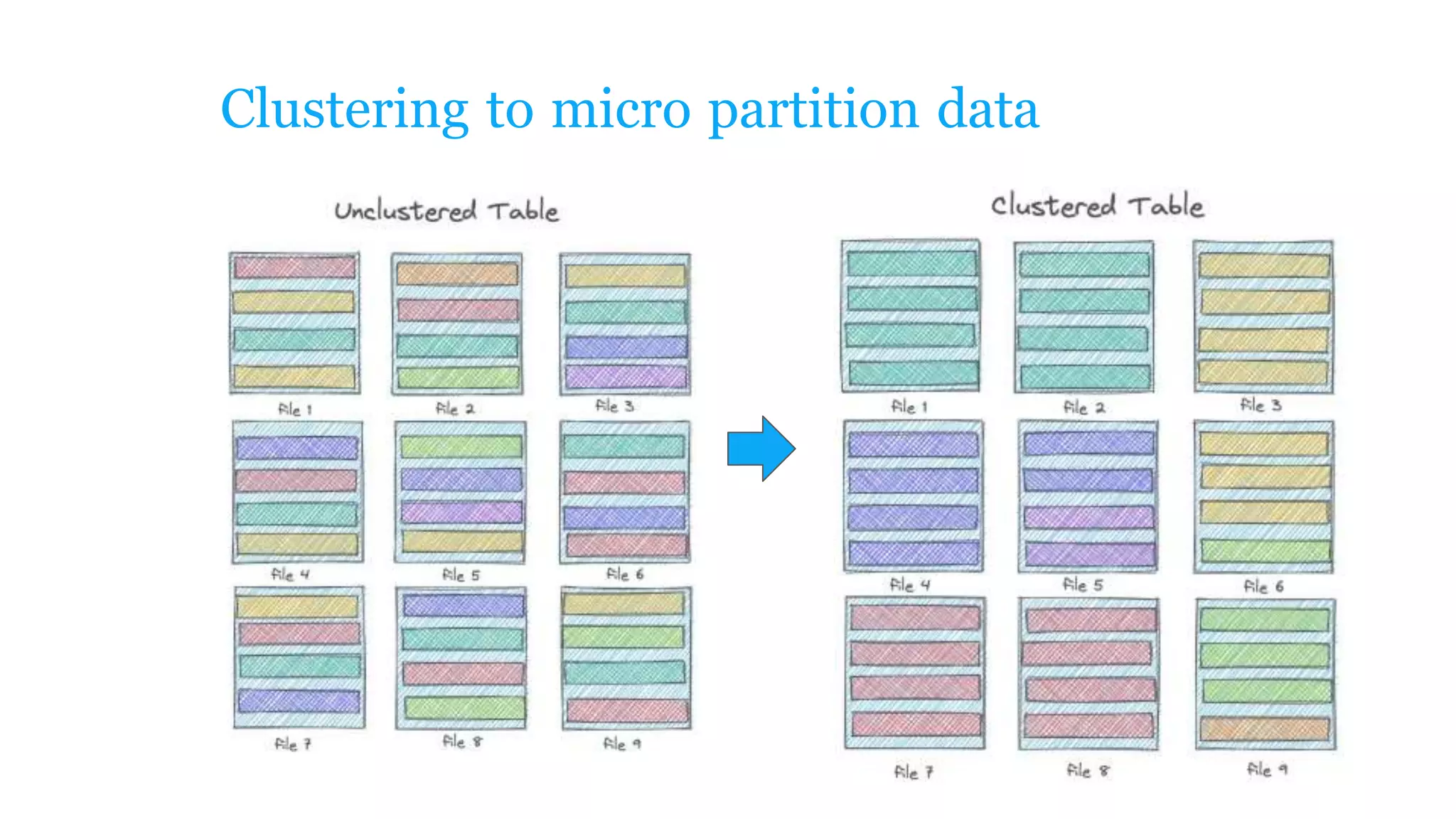

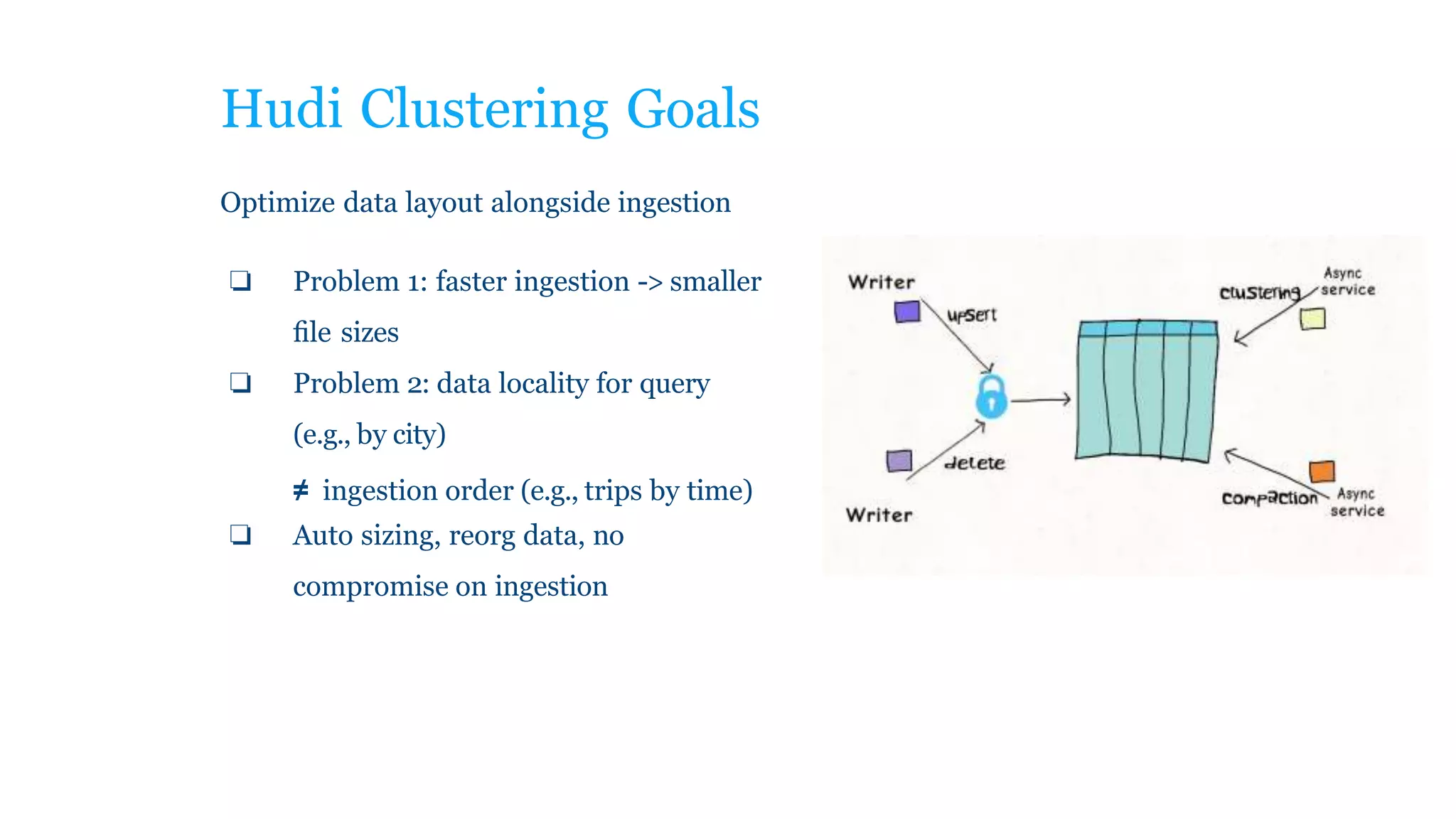

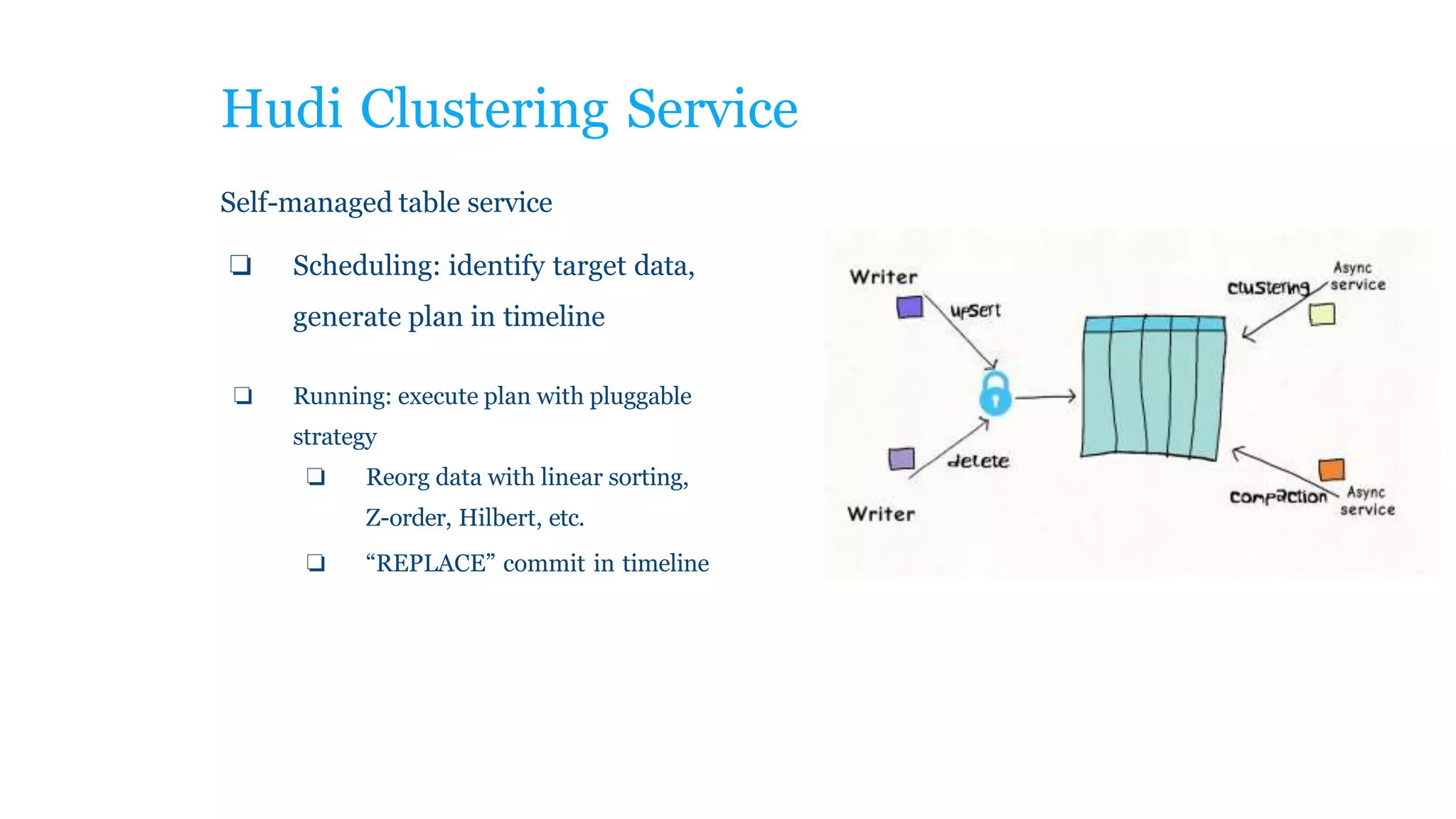

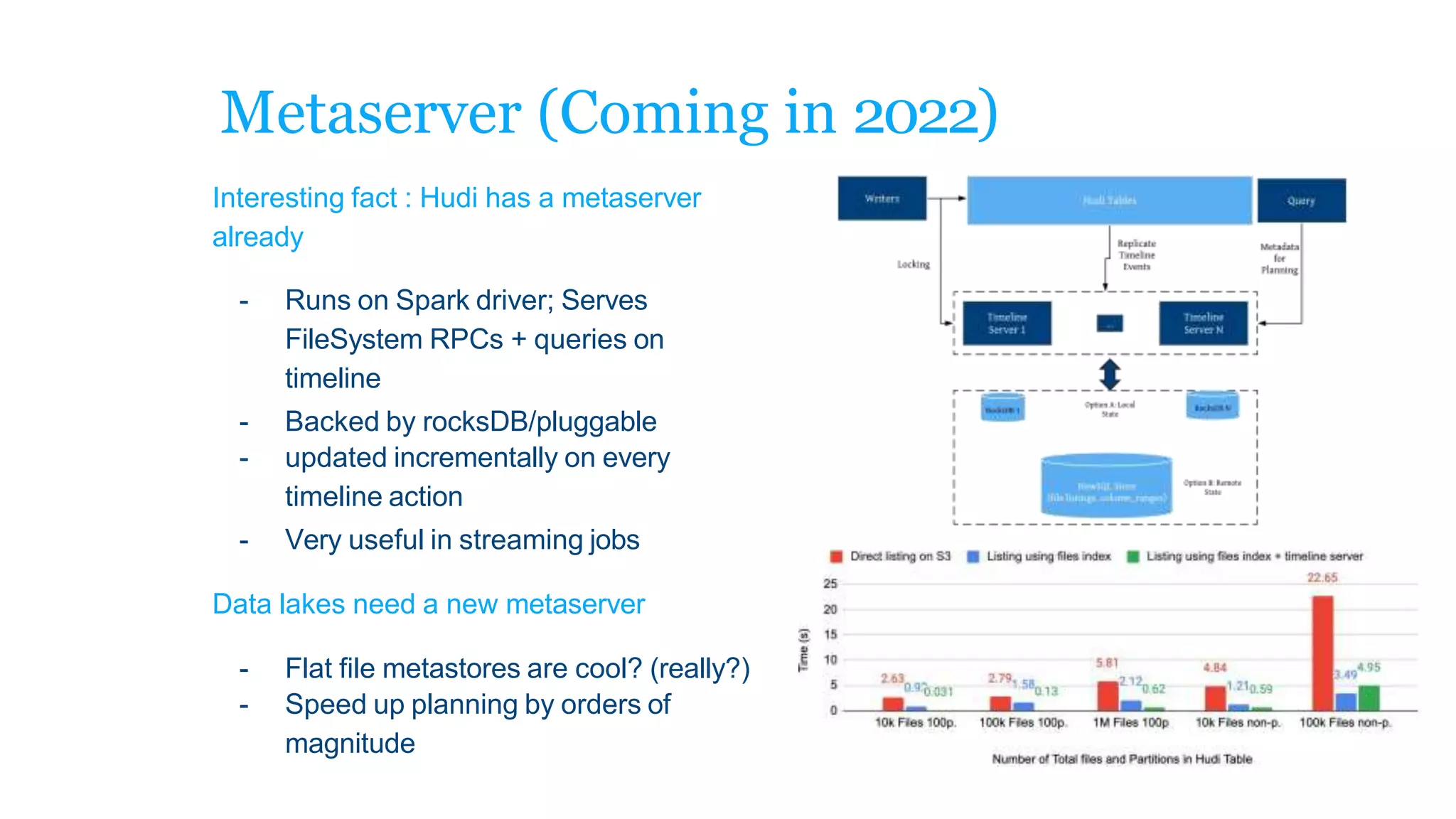

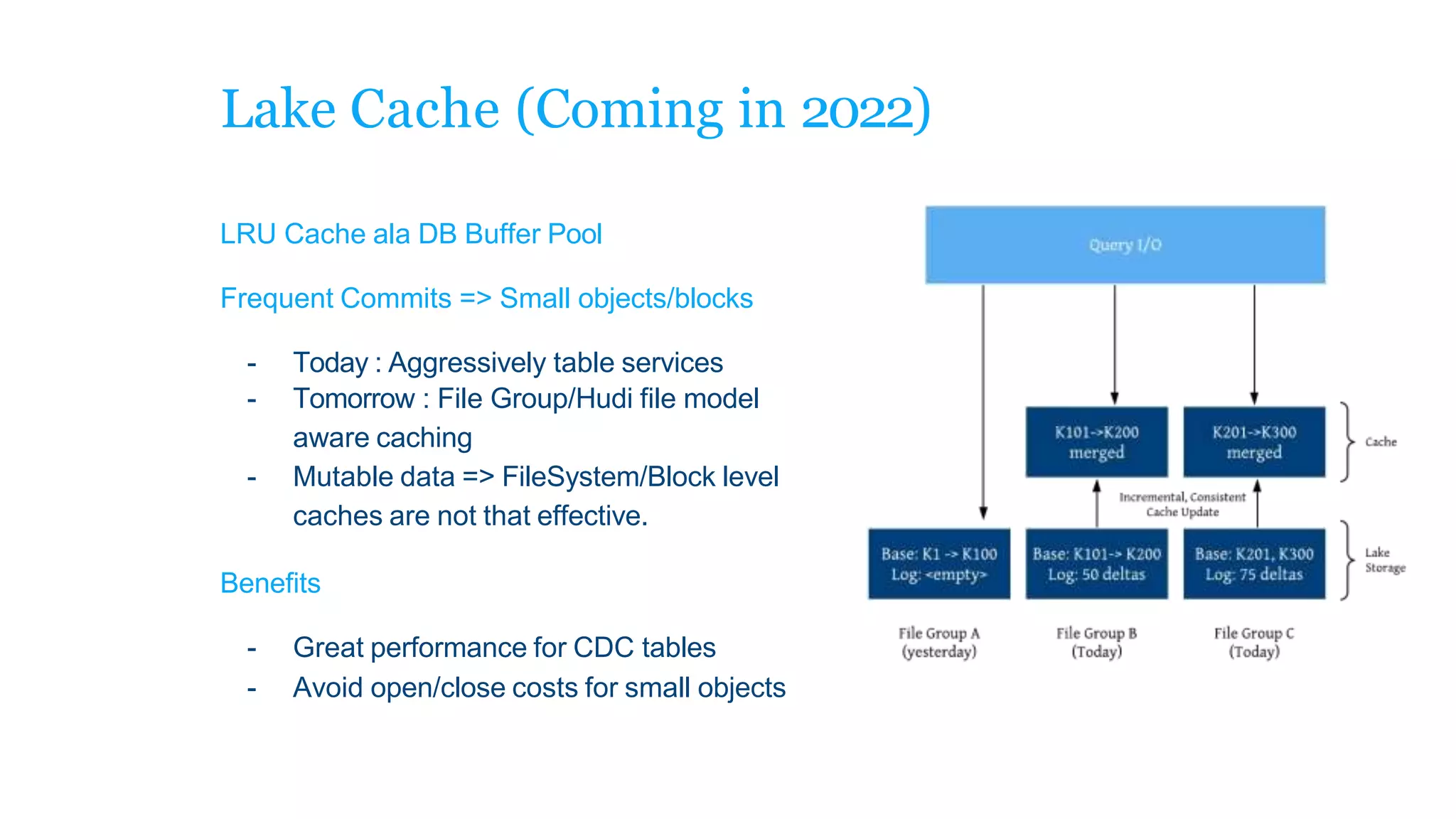

This document explains how to build a streaming lakehouse with Apache Flink and Apache Hudi to address the challenges of real-time analytics and data ingestion. It covers key features of Hudi, including fast stream ingestion, efficient upserts and deletes, and support for ACID transactions, and shows how these combine into composite data management strategies. It also describes supporting components, such as the metaserver and the lake cache, that are aimed at optimizing data layout and ingestion.