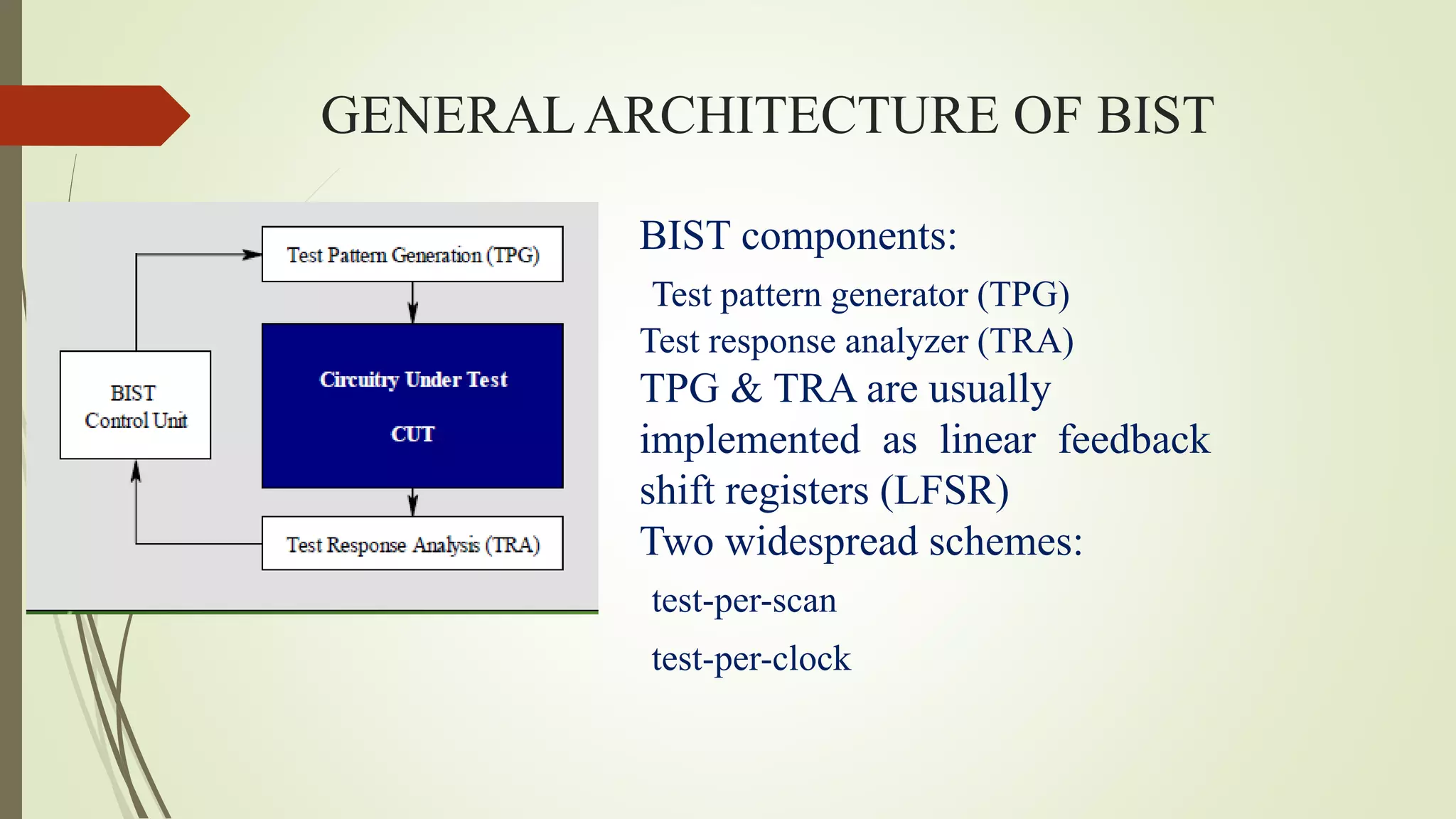

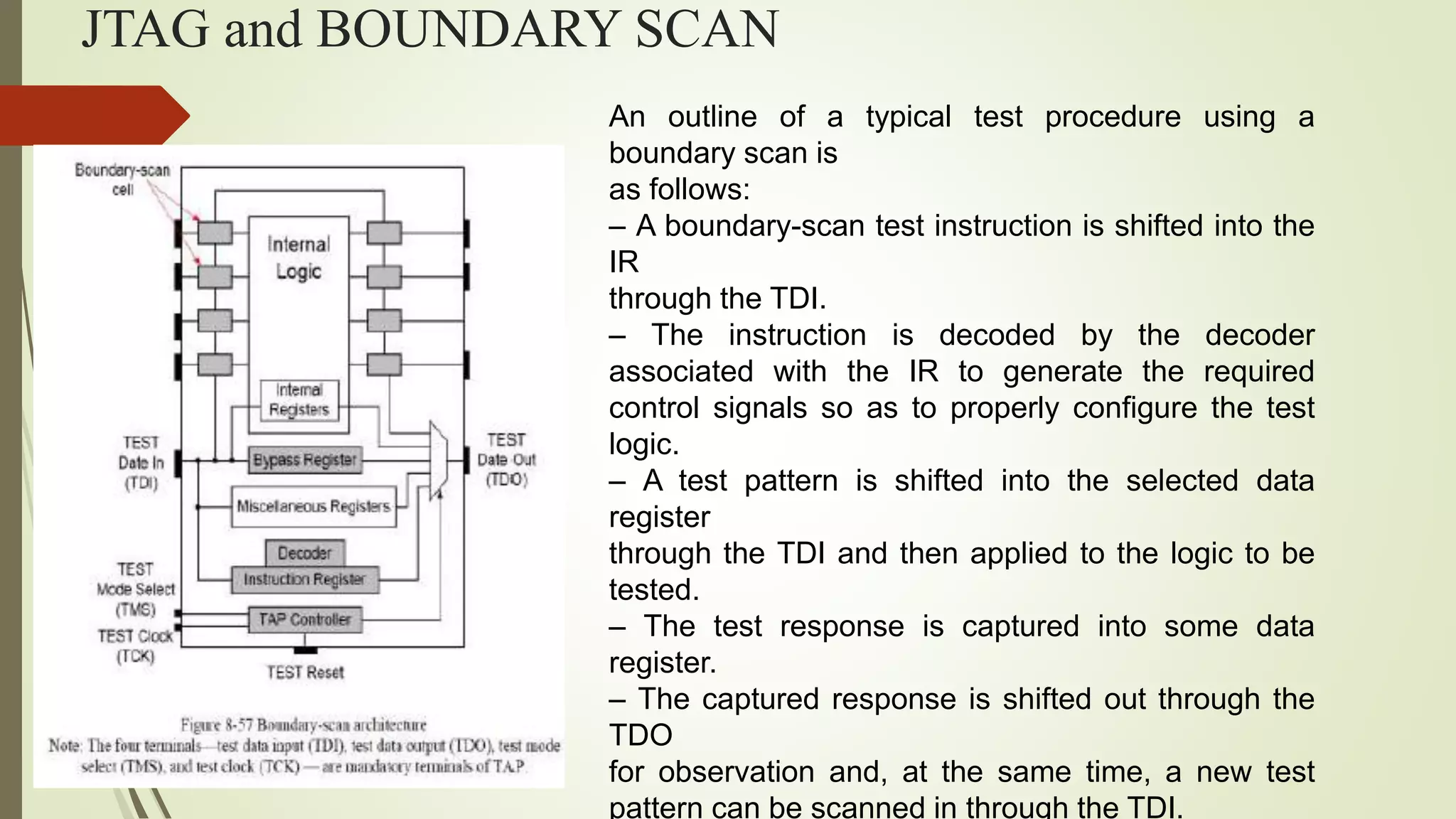

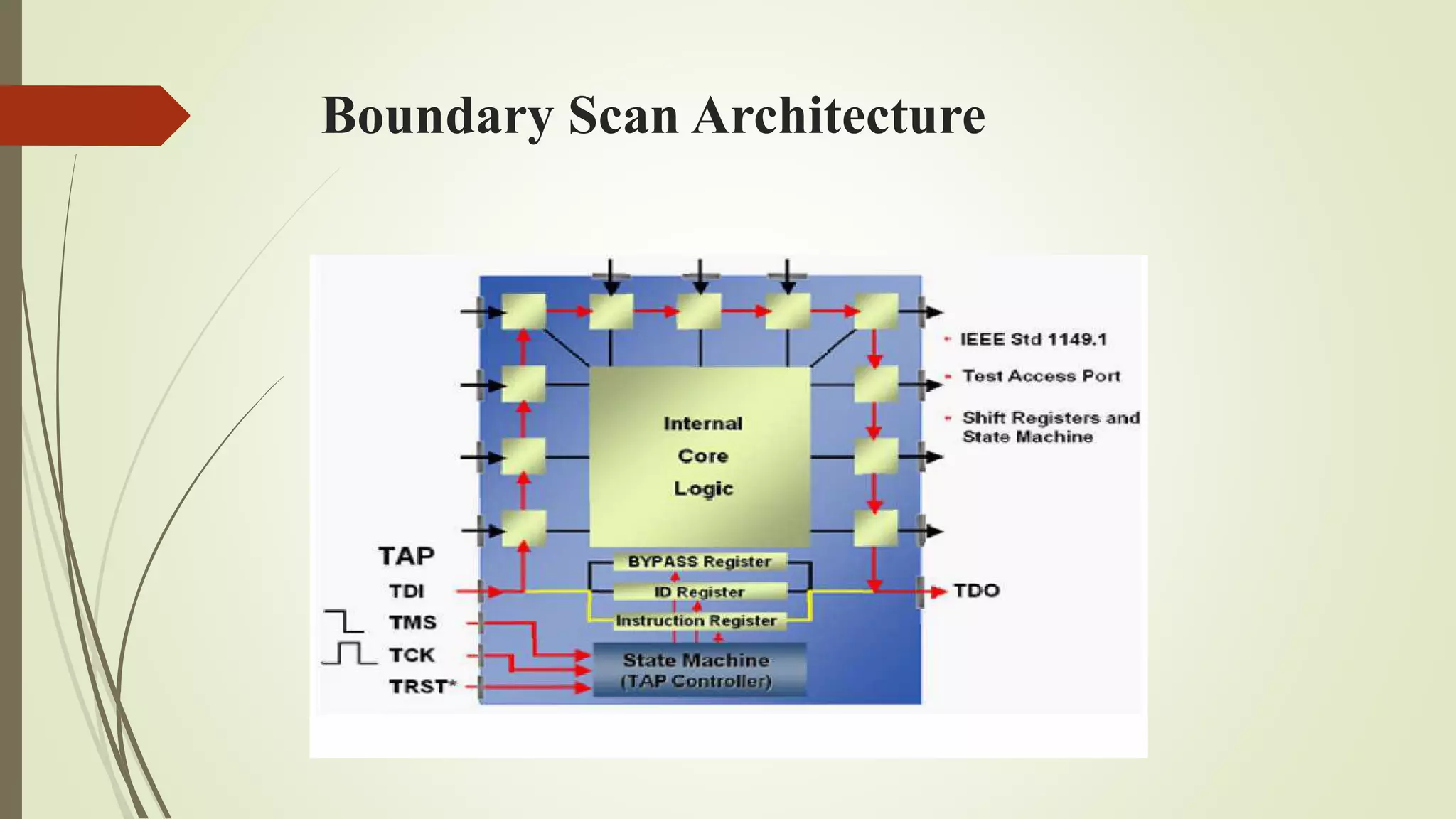

This document covers VLSI testing and analysis. It defines the key terms defect, fault, and error, describes typical types of defects, and discusses logical fault models and the role of testing in quality control. It describes different types of tests, such as production testing and burn-in testing, and summarizes the testing process, fault simulation, design-for-testability techniques, and built-in self-test.