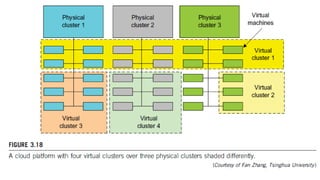

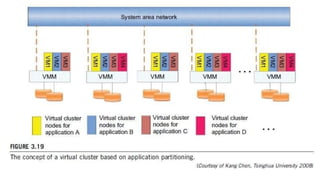

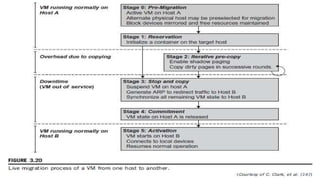

The document discusses virtual clusters and resource management, highlighting their properties and applications. It covers critical design issues, including live migration of virtual machines (VMs), memory and file migration, and dynamic deployment, and compares virtual clusters with physical clusters. It also details management strategies for virtual clusters, emphasizing efficient image storage and dynamic resource allocation in high-performance virtualized computing environments.