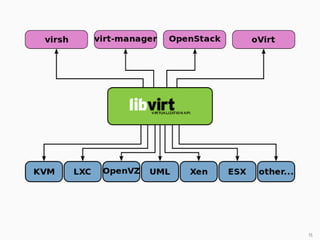

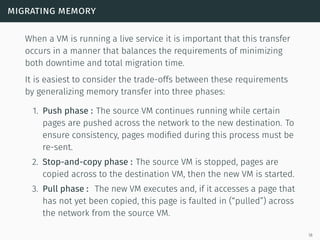

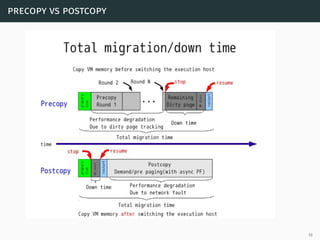

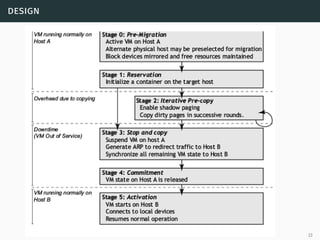

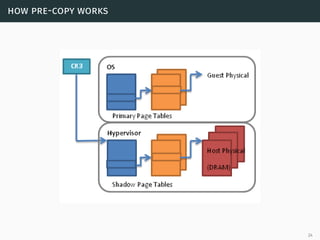

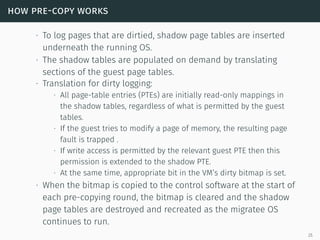

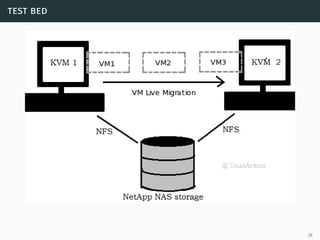

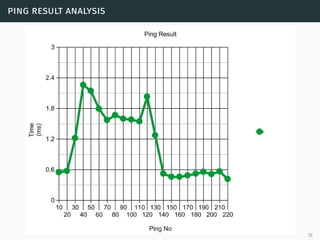

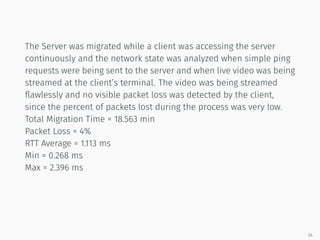

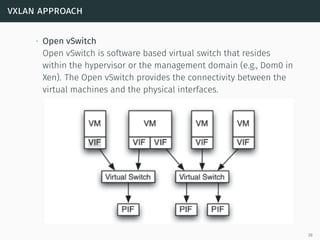

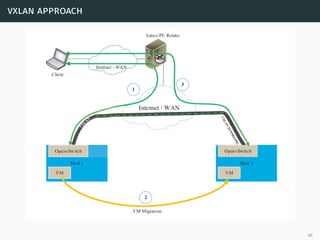

This document presents a detailed overview of live VM migration, covering the fundamentals of virtualization, the types of hypervisors, and various techniques for live migration. It outlines benefits such as load balancing and disaster recovery, and stresses the importance of maintaining network connectivity during migration. It also discusses the technology stack involved, such as KVM and QEMU, and presents experimental results on network performance during VM migration.