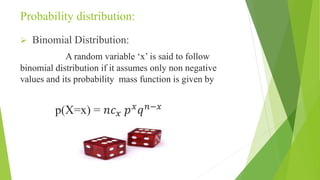

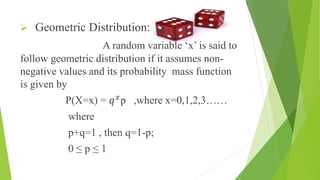

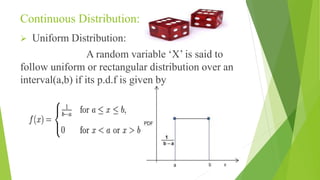

This document discusses probability theory and its applications, detailing key terminologies such as random experiments, sample spaces, and types of events including mutually exclusive and independent events. It explains various probability distributions including binomial, Poisson, and uniform distributions, as well as practical applications such as opinion polls and simulations. The document serves as a comprehensive introduction to the foundational concepts in probability theory and its relevance to statistical analysis.

![TERMINOLOGIES

Random Experiment:

If an experiment or trial is repeated under

the same conditions for any number of times and it is

possible to count the total number of outcomes is called as

“Random Experiment”

Sample Space:

The set of all possible outcomes of a

random experiment is known as “Sample Space” and

denoted by set S. [this is similar to Universal set in Set

Theory] The outcomes of the random experiment are

called sample points or outcomes.](https://image.slidesharecdn.com/pqtpresentation-150226184456-conversion-gate02/85/Probablity-queueing-theory-basic-terminologies-applications-4-320.jpg)