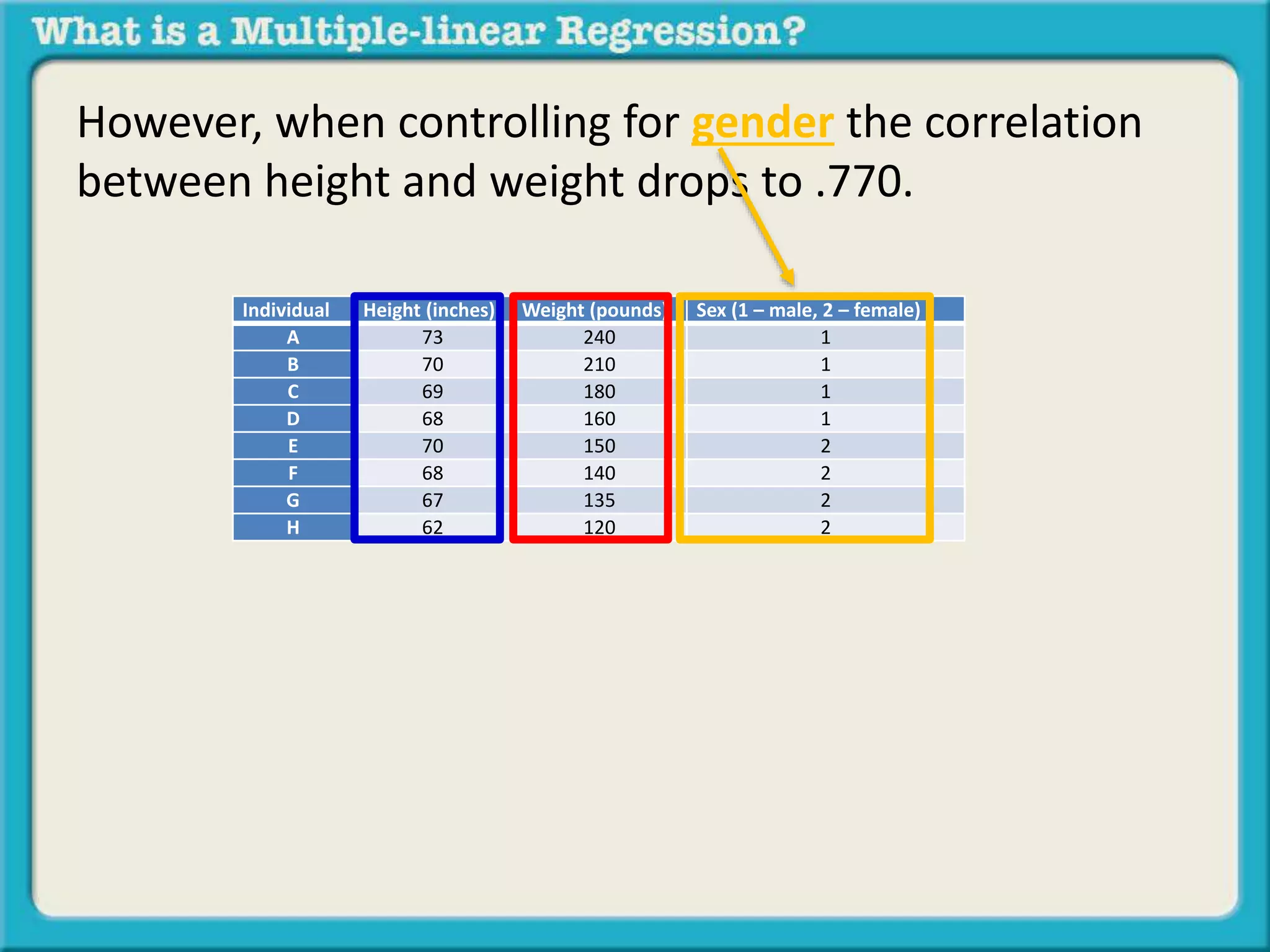

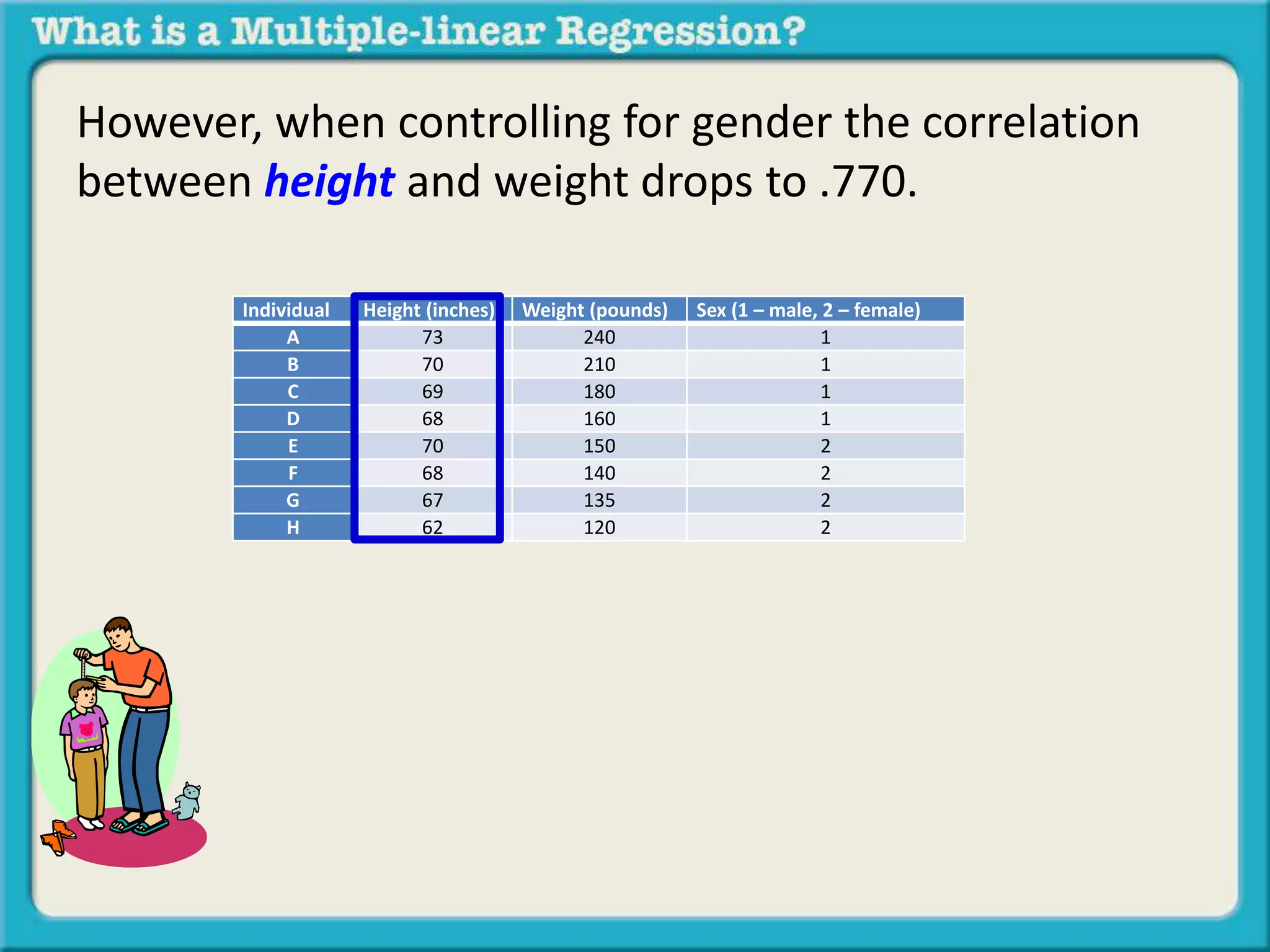

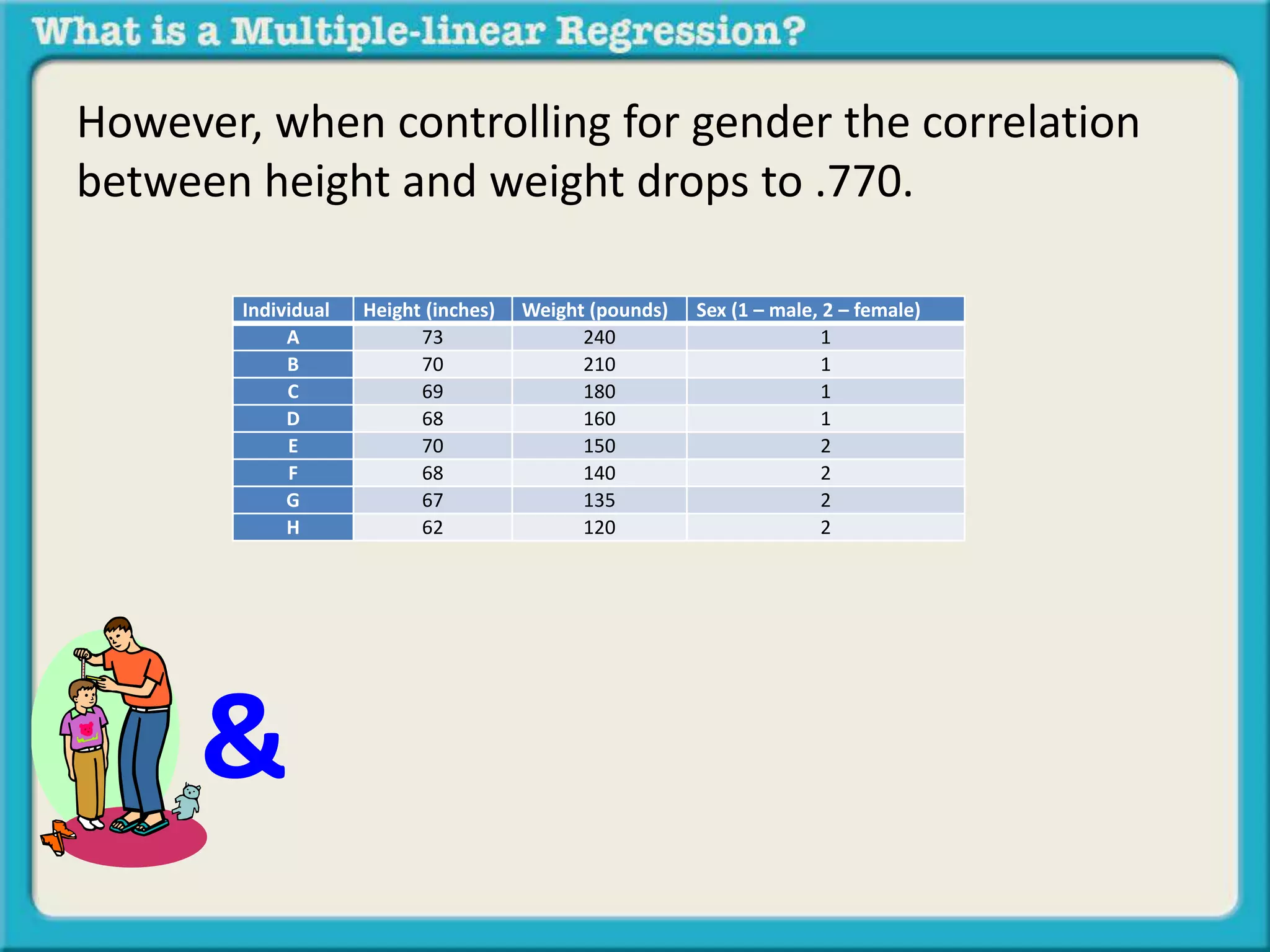

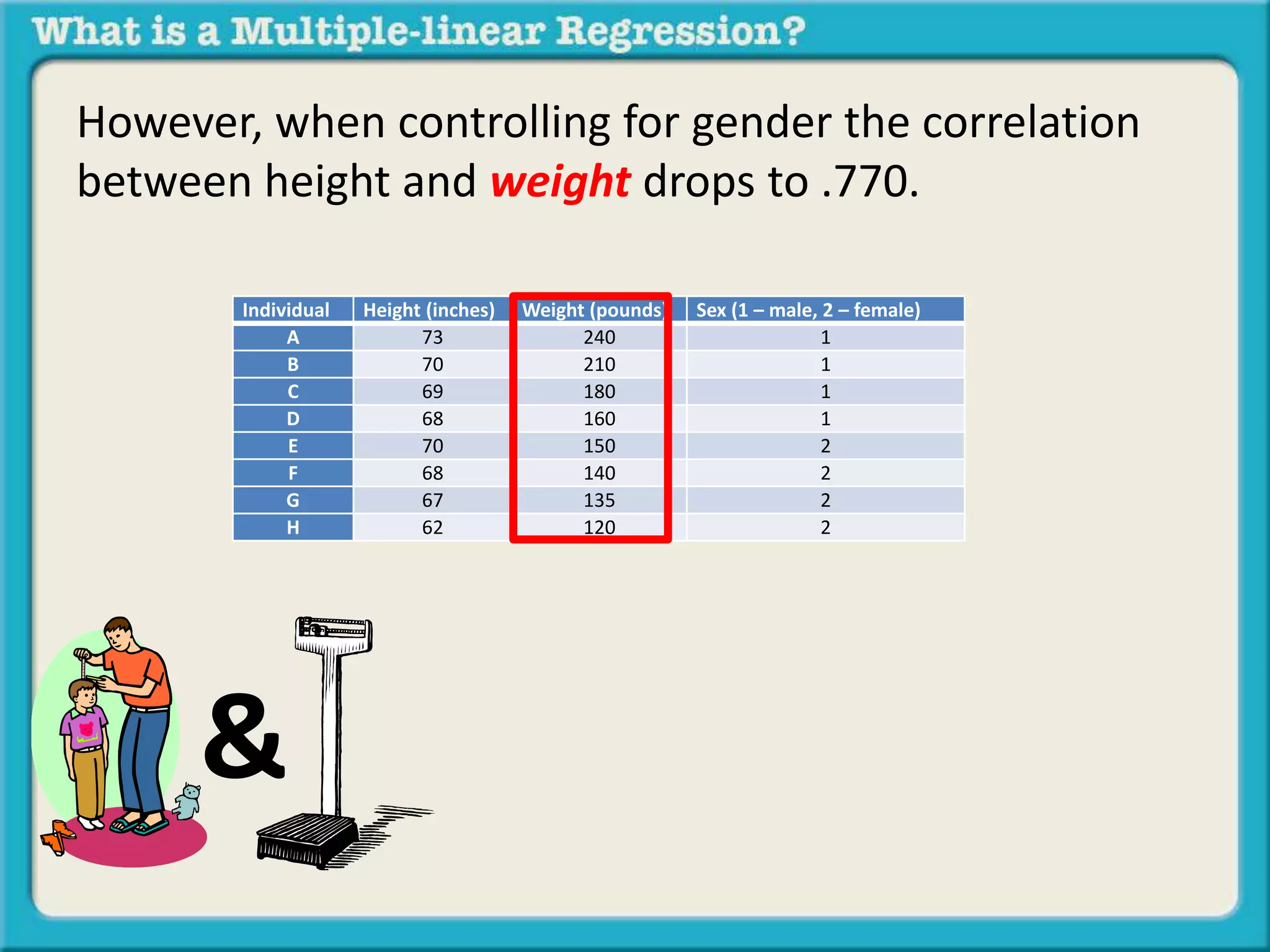

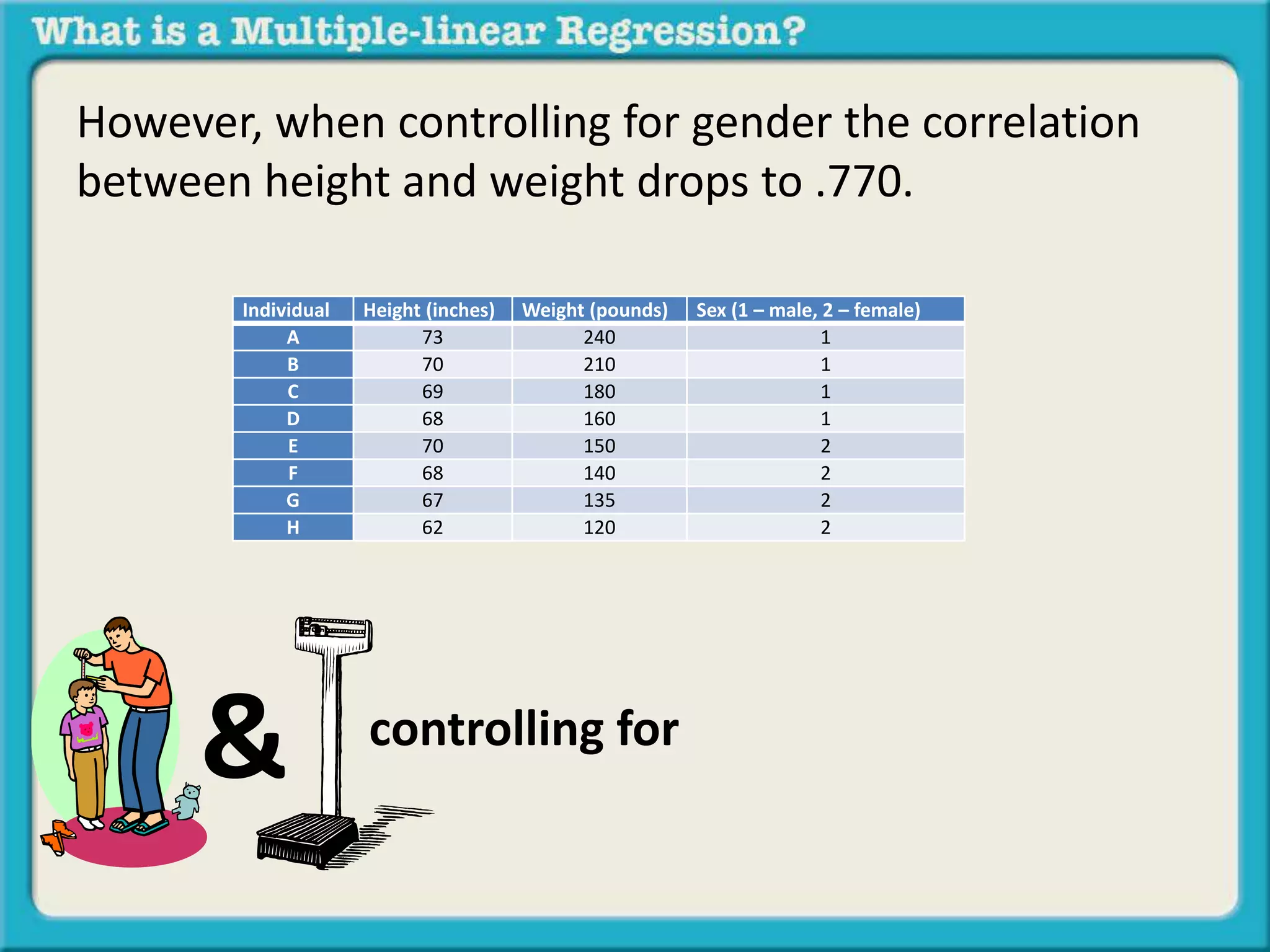

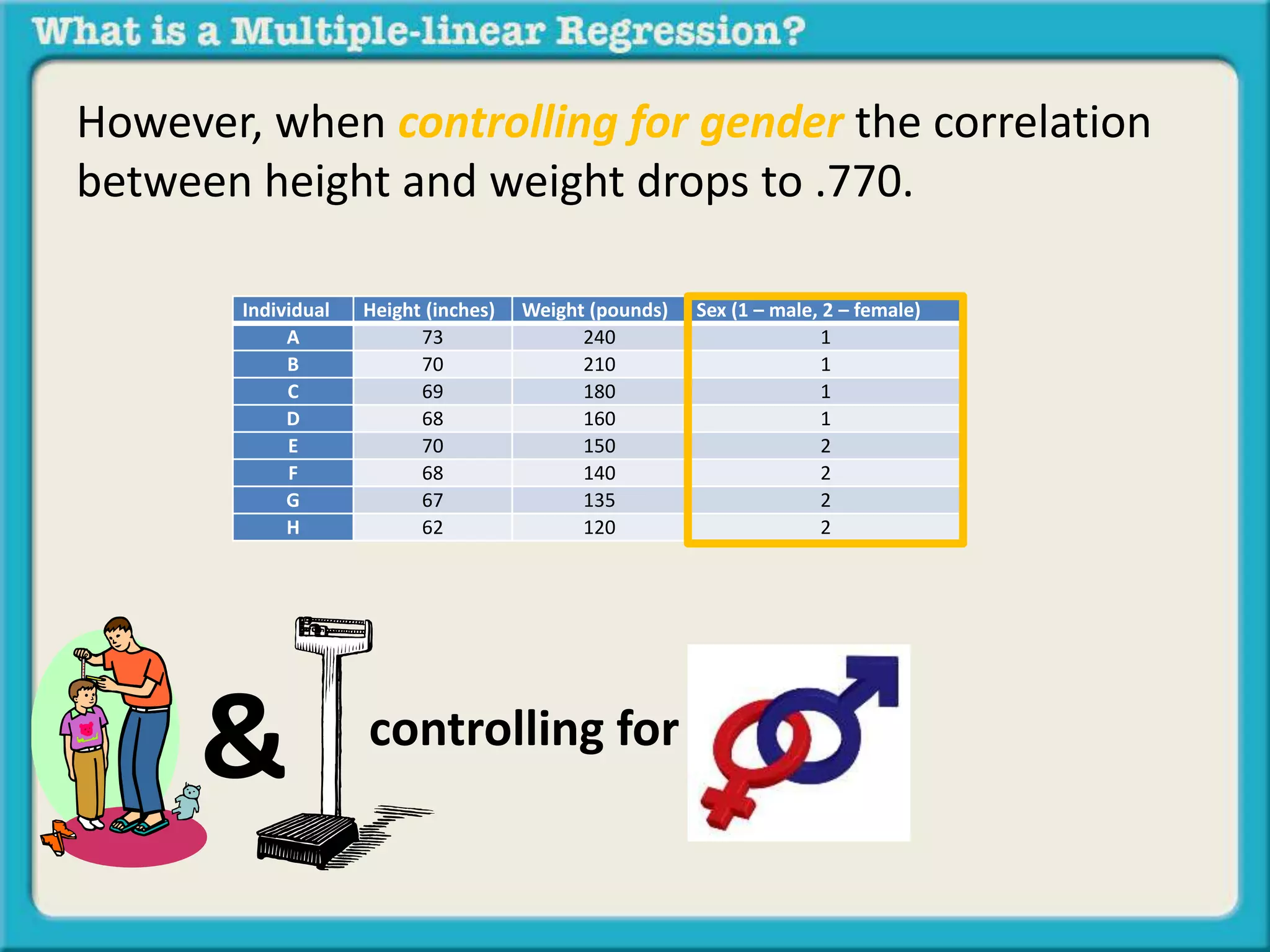

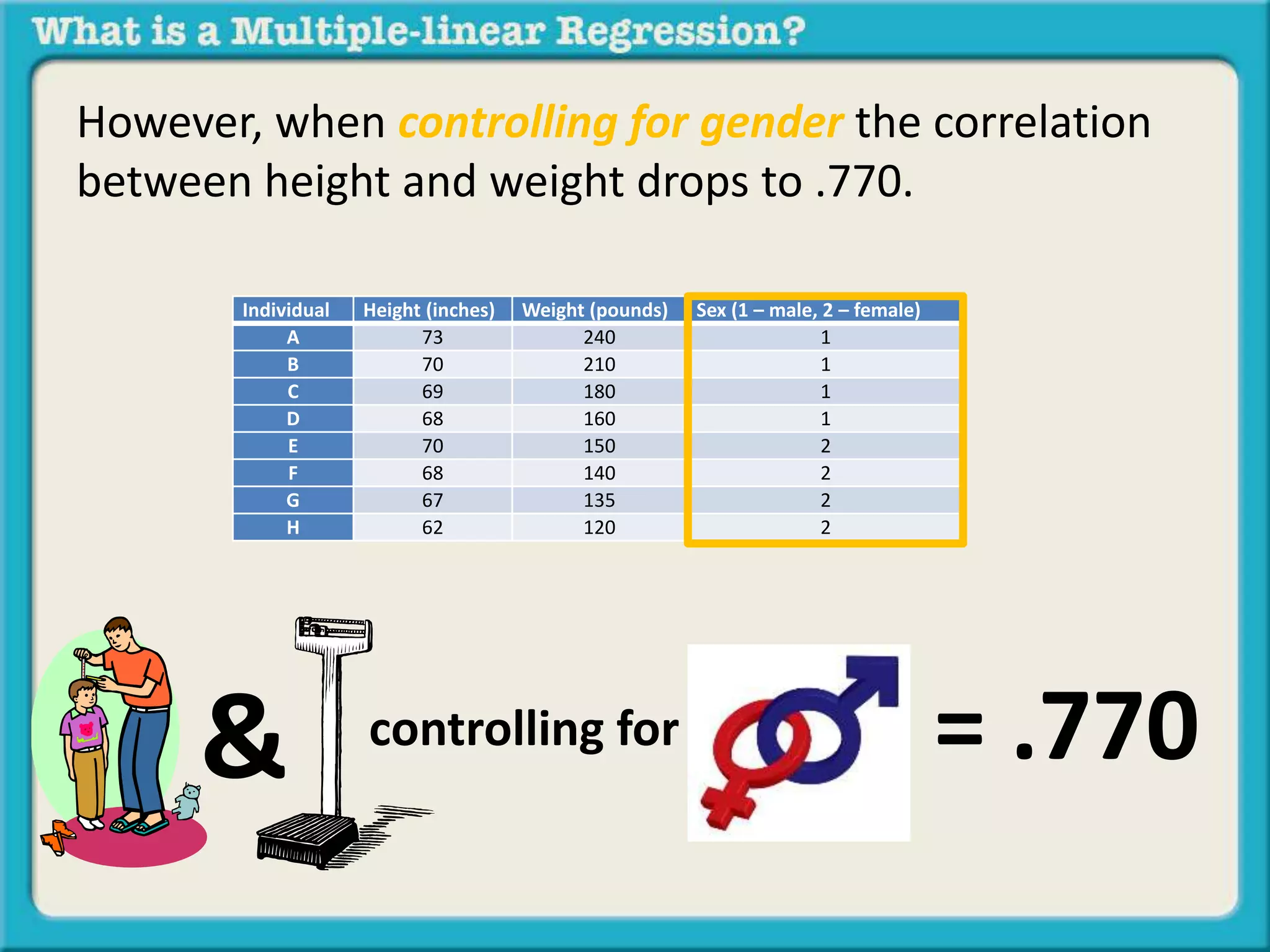

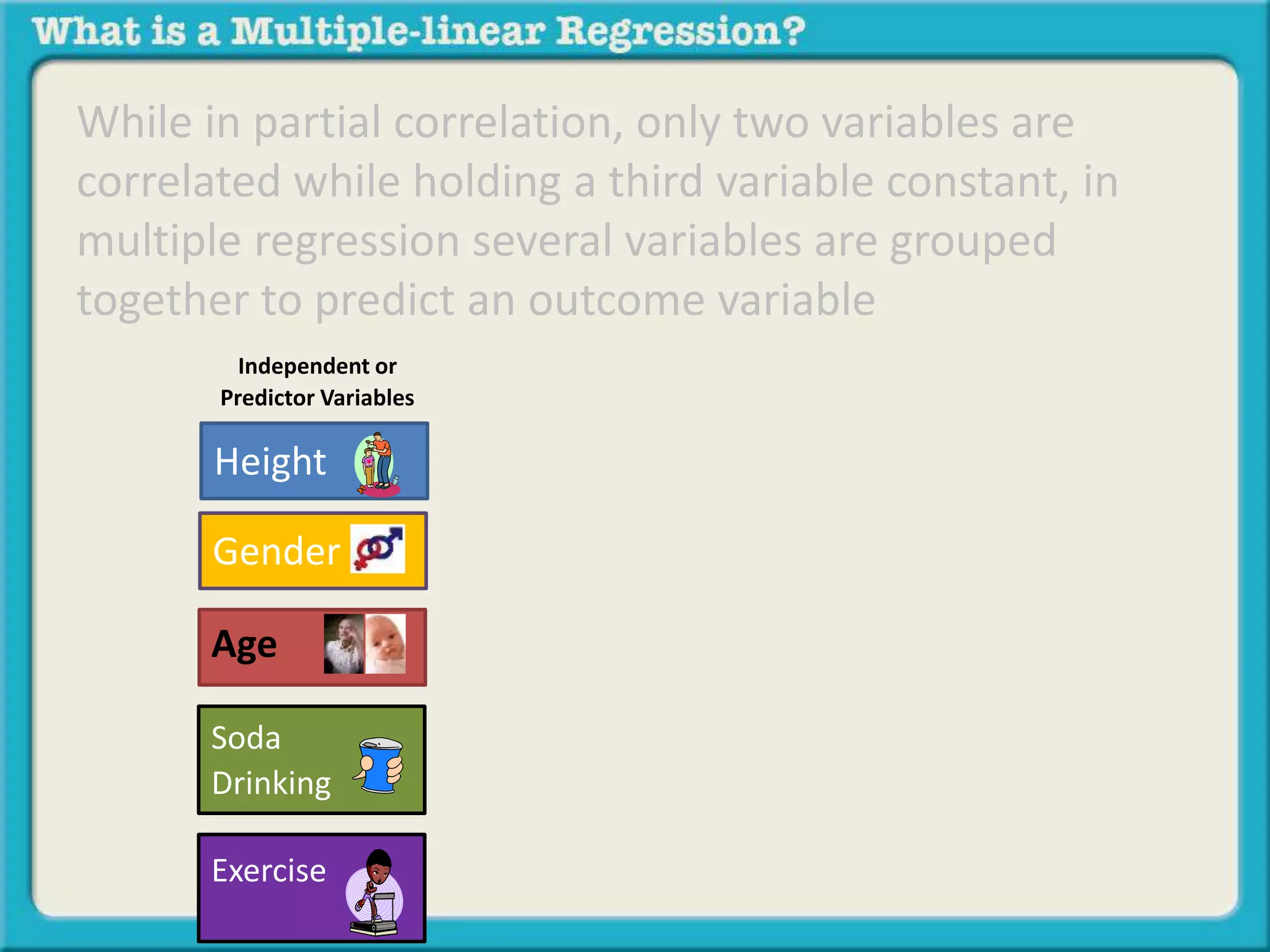

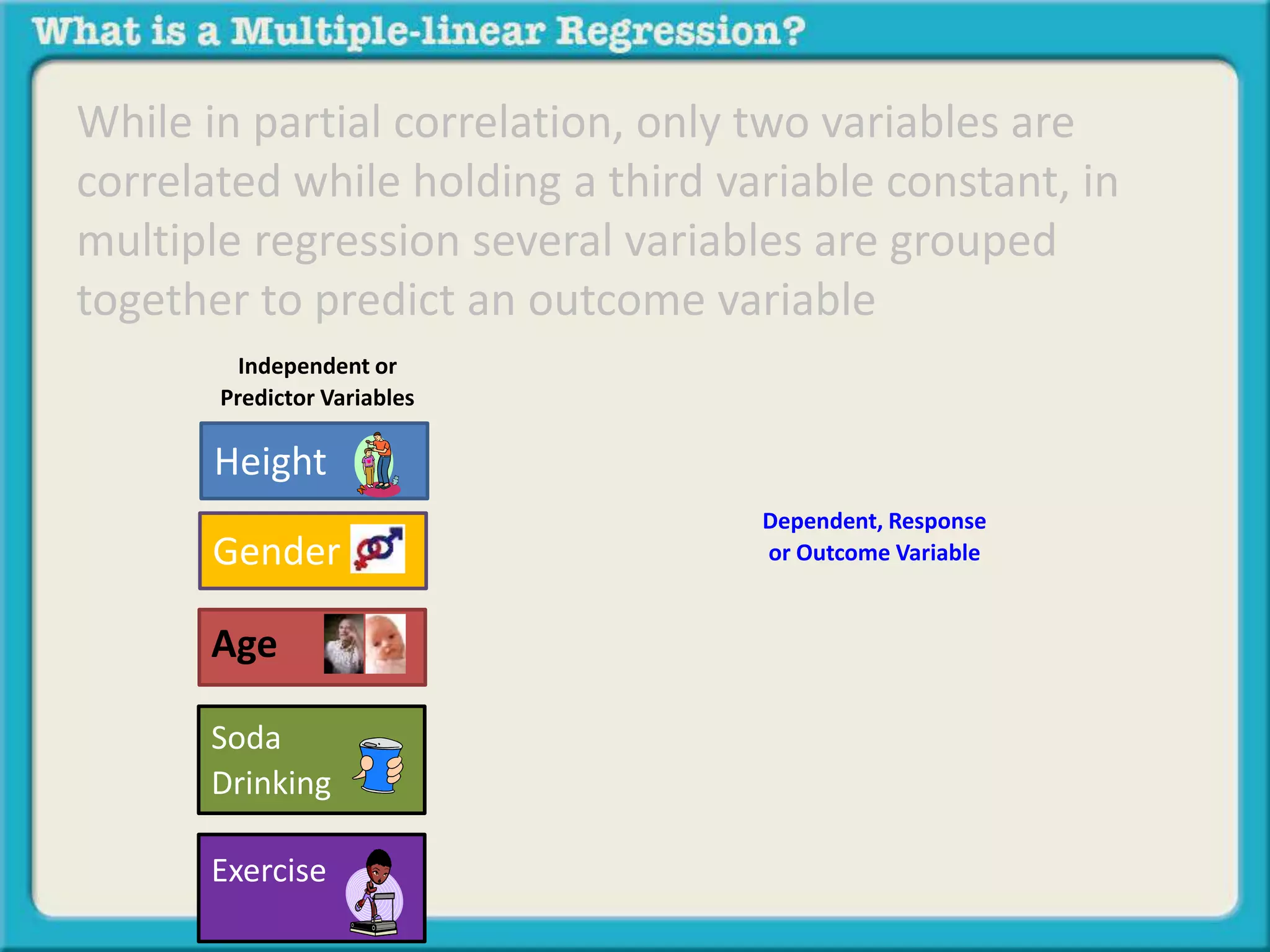

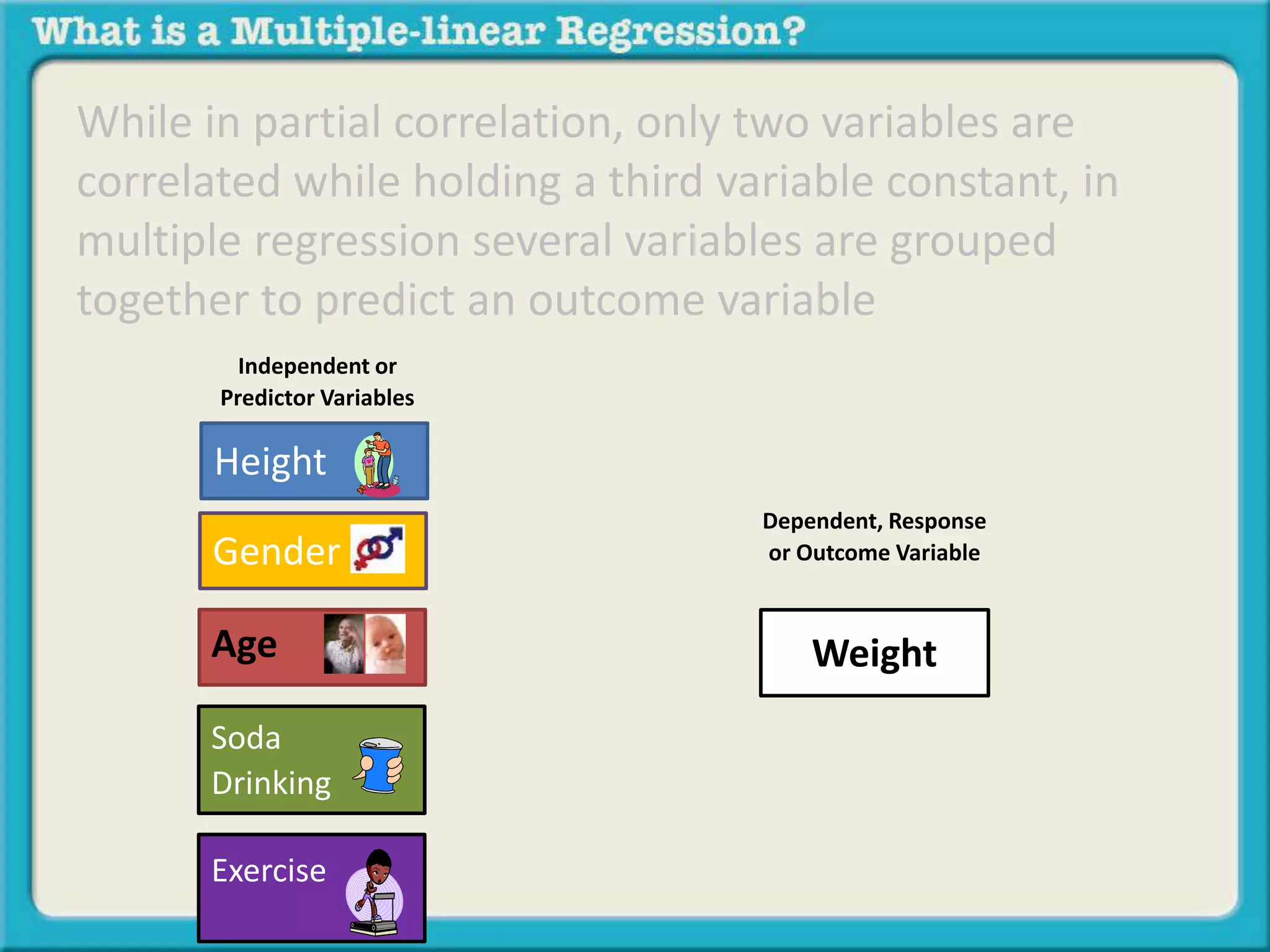

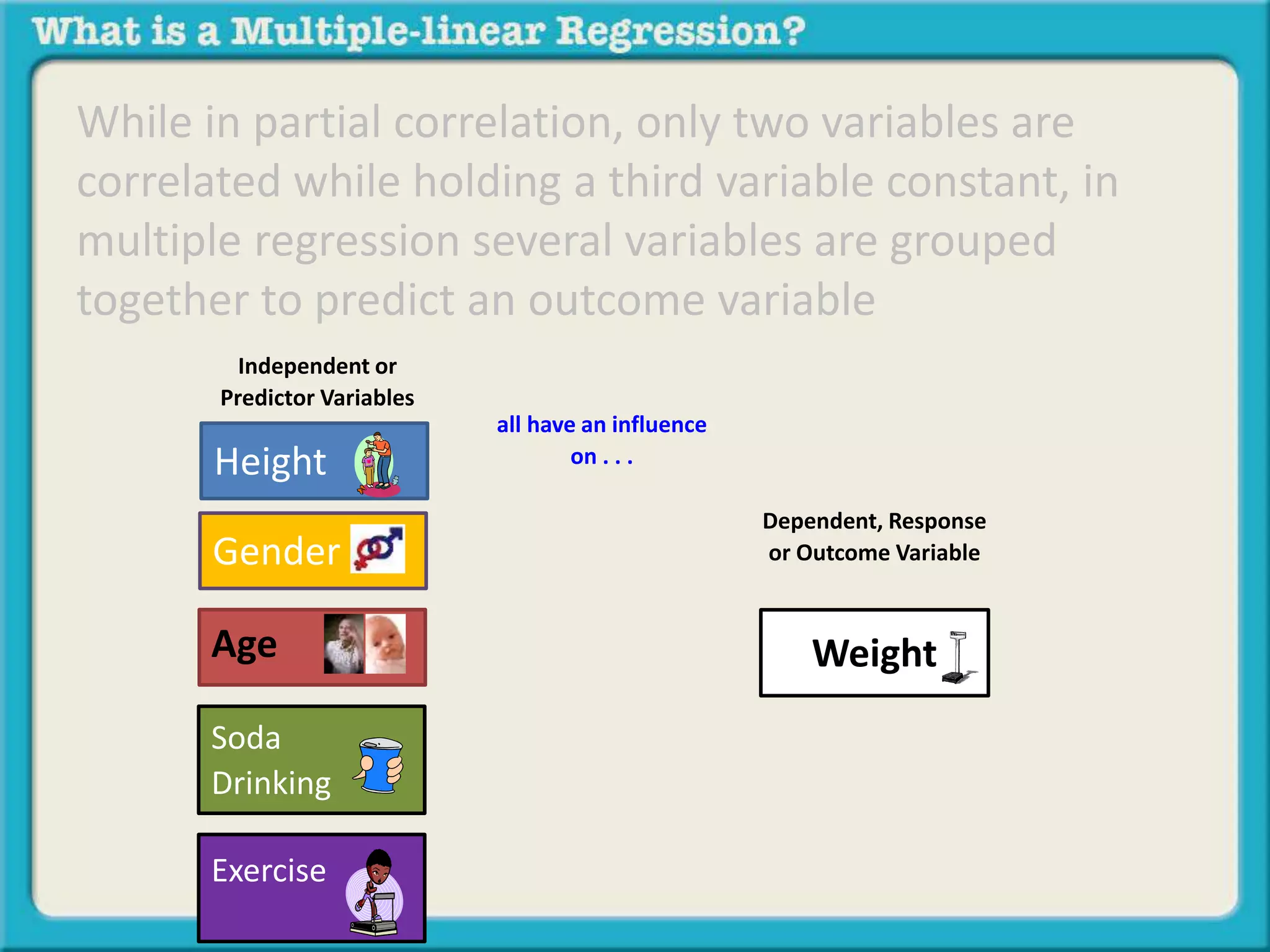

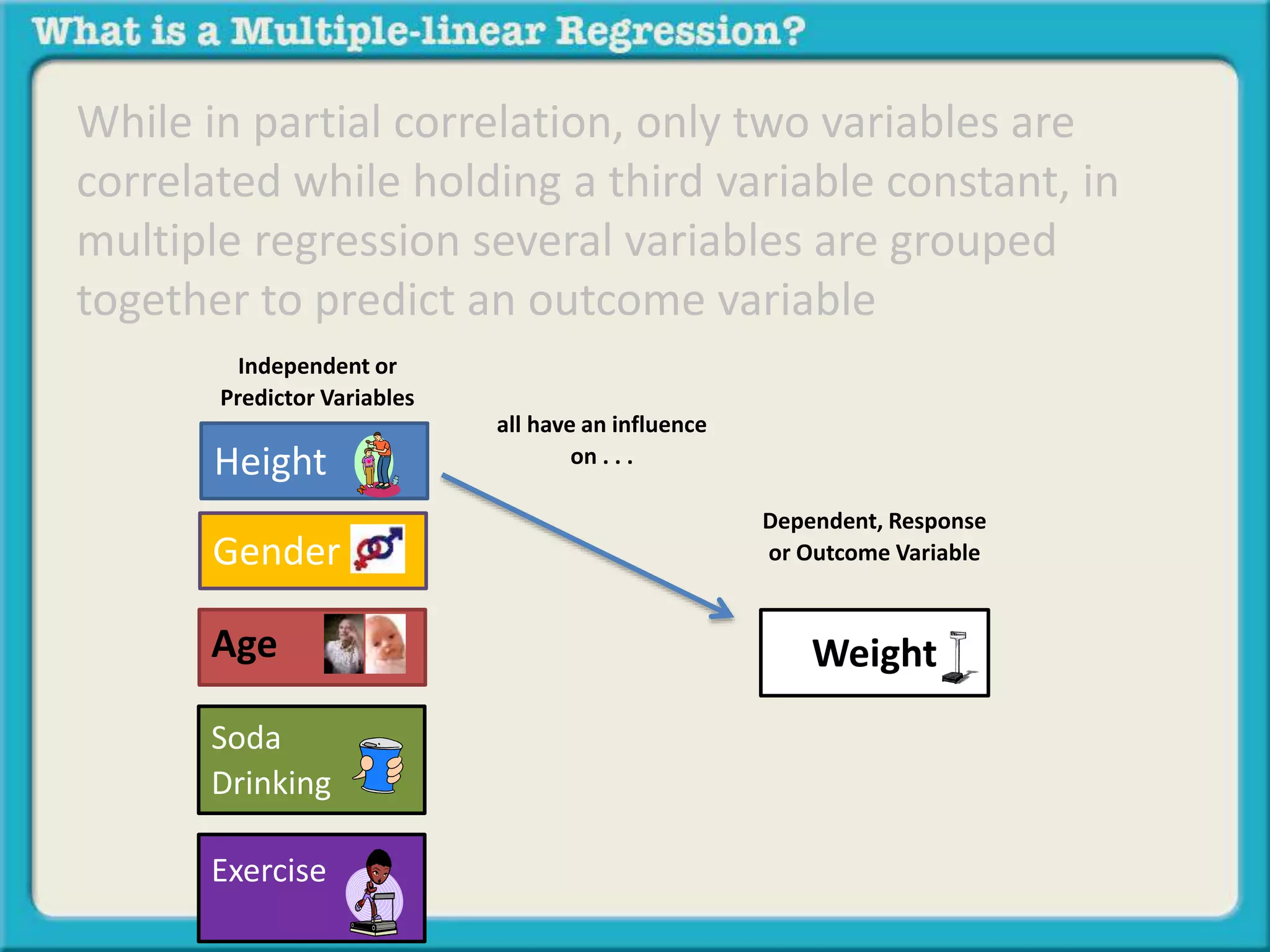

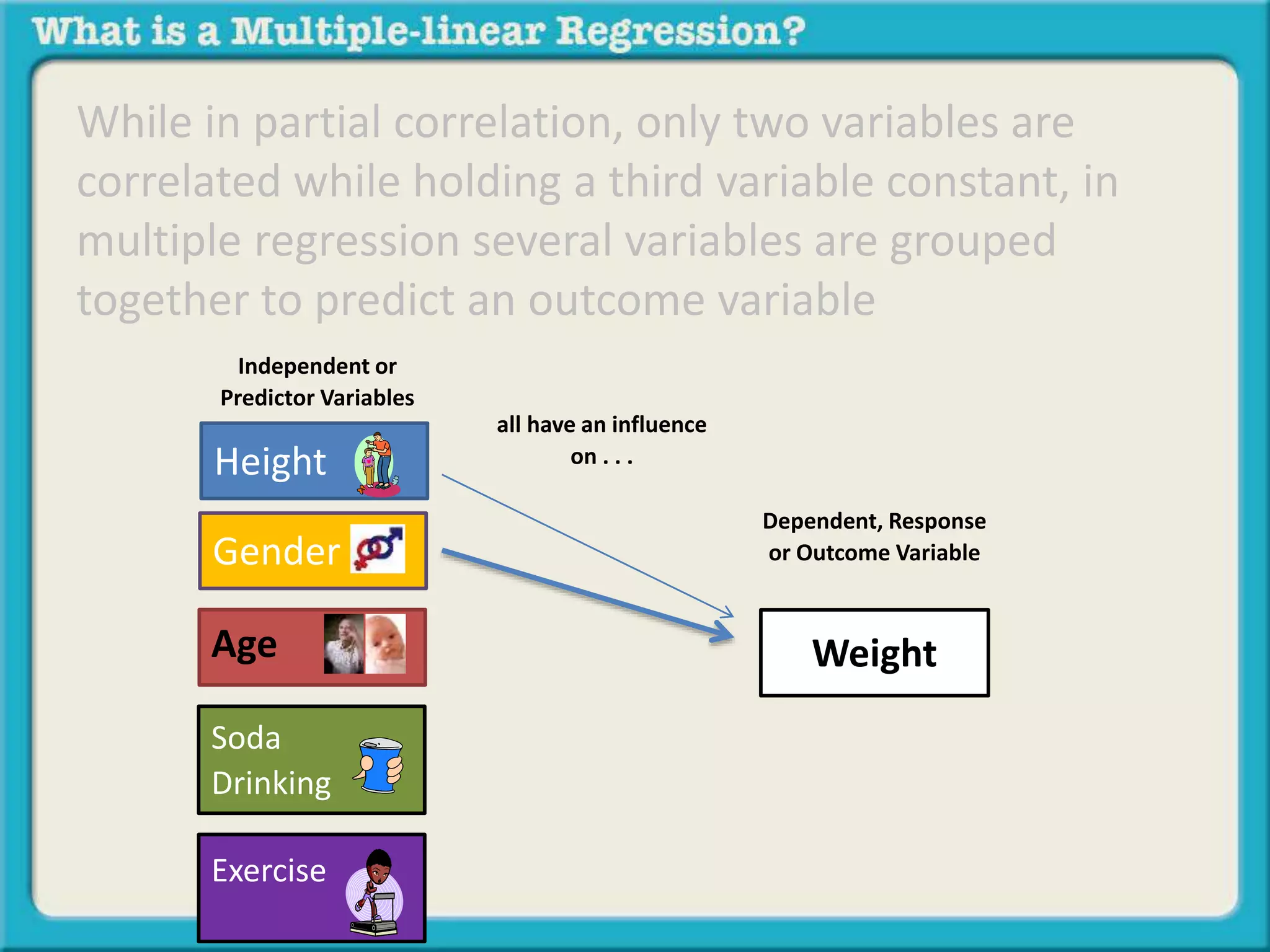

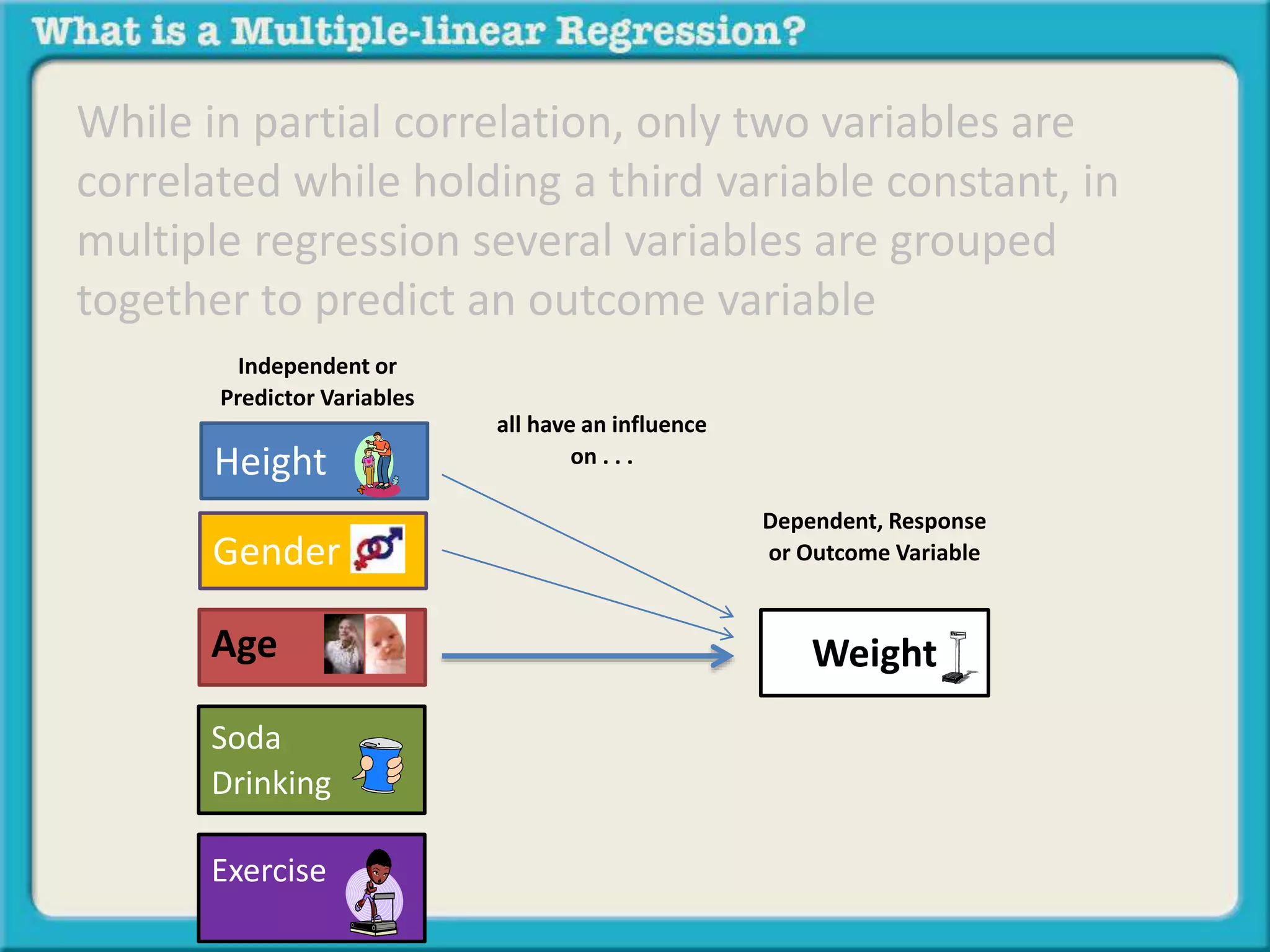

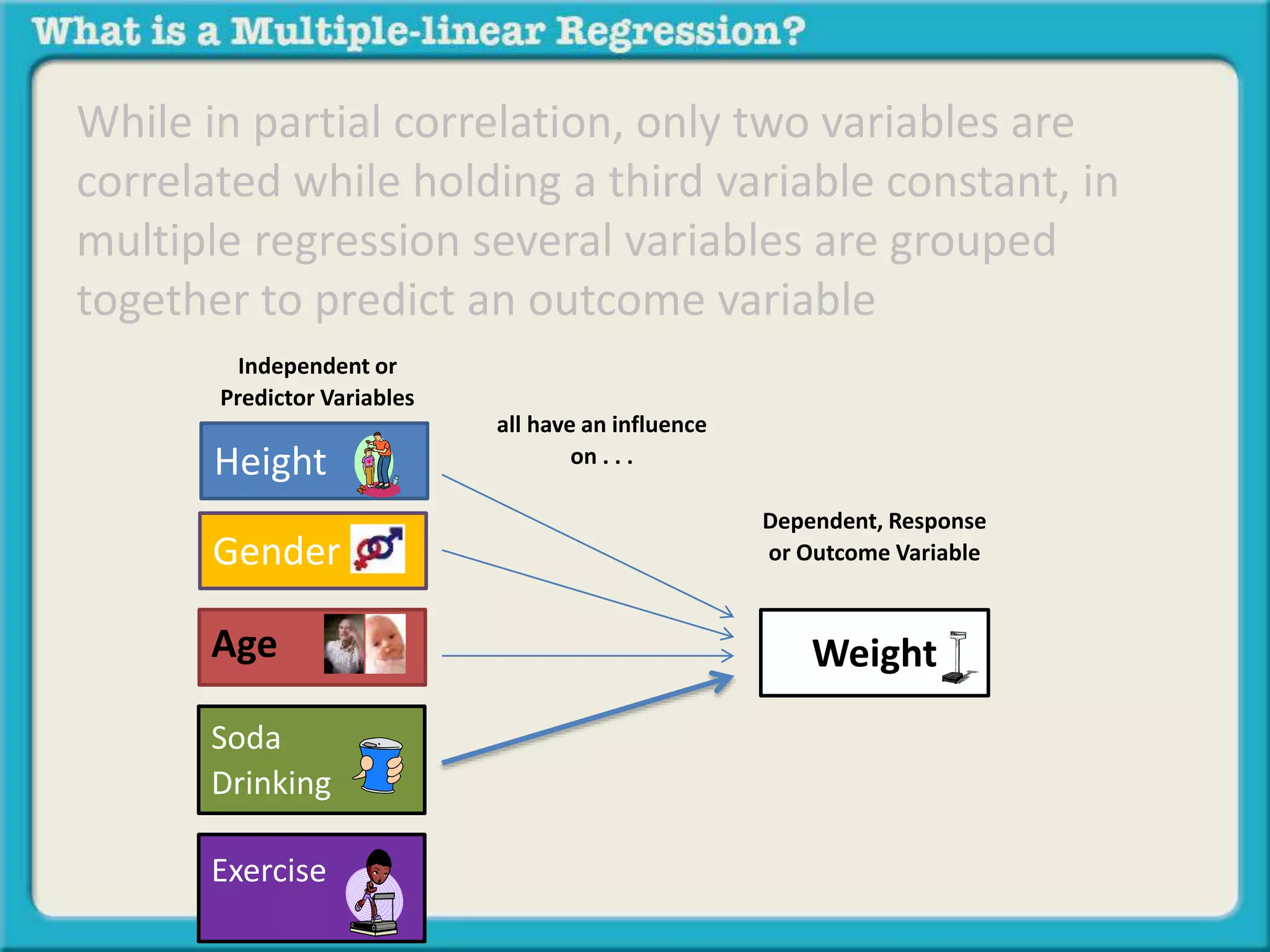

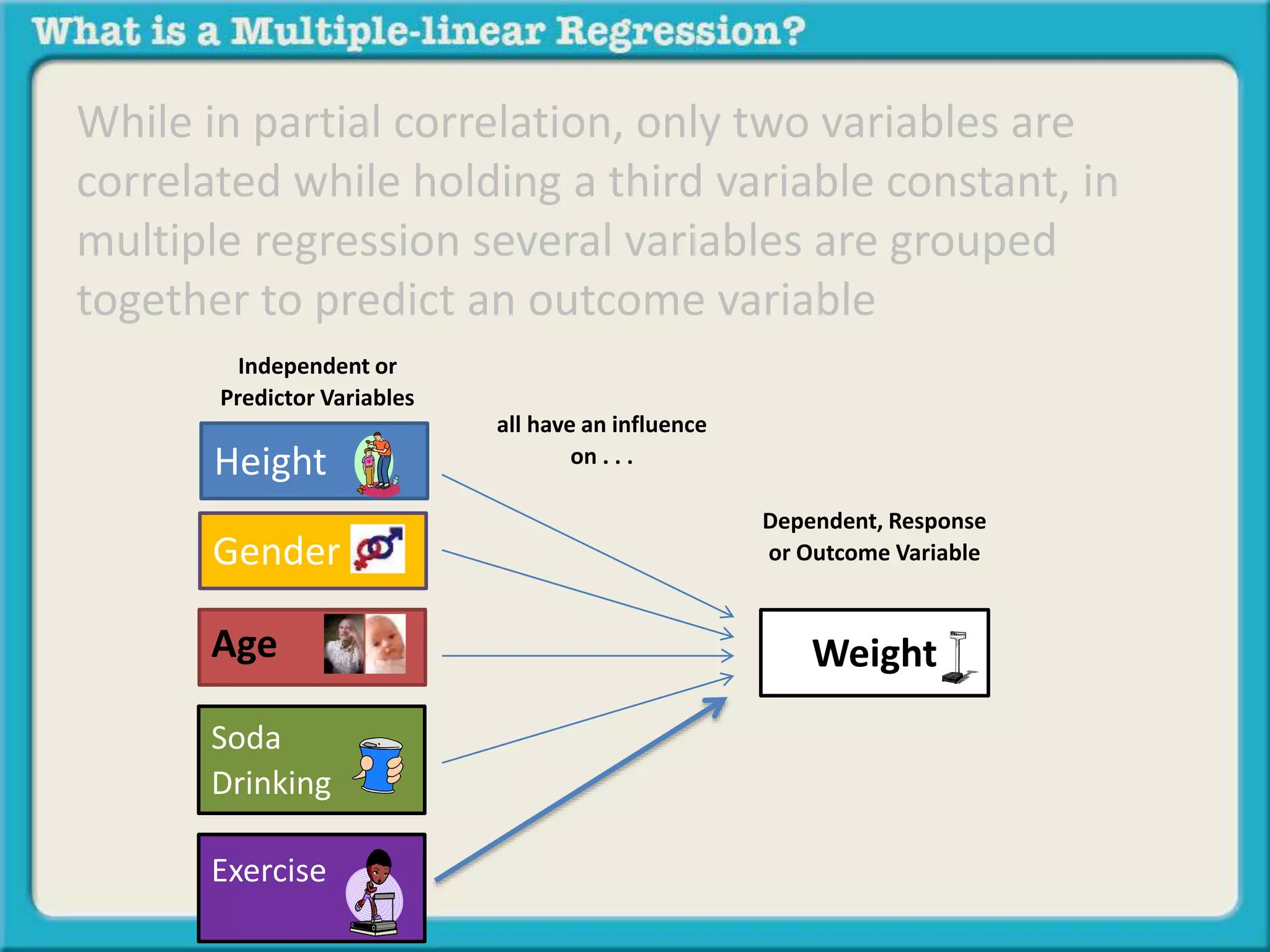

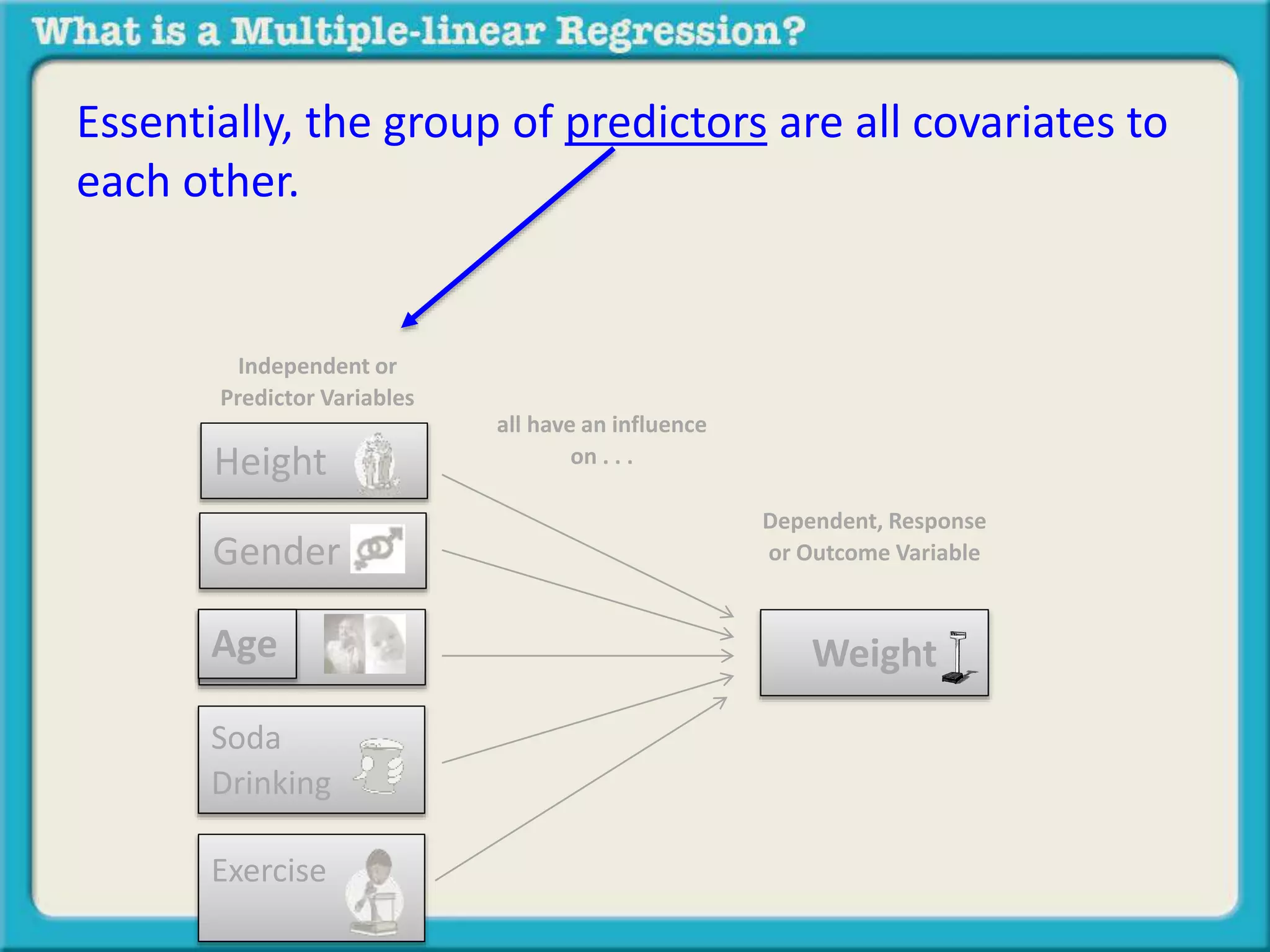

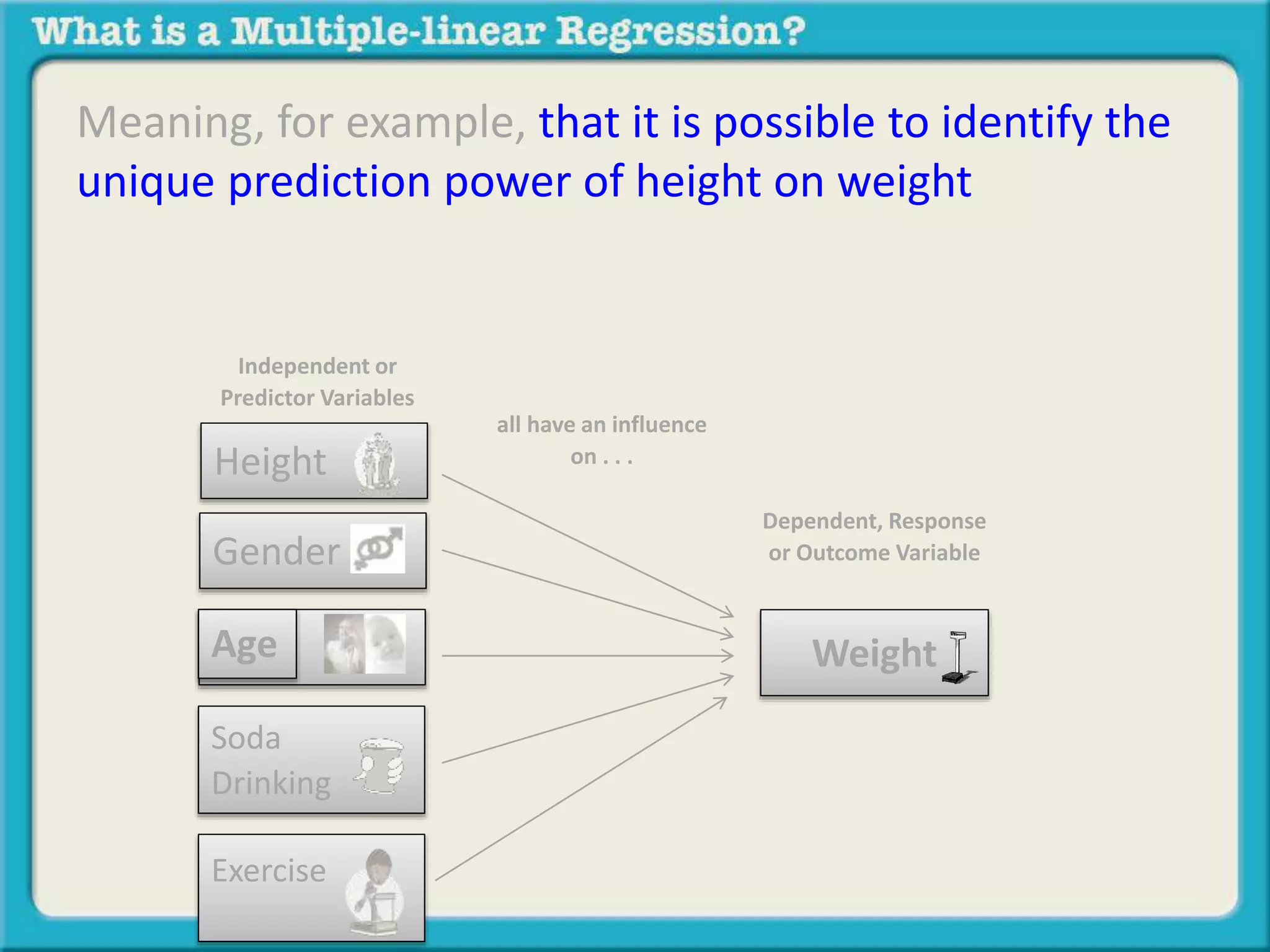

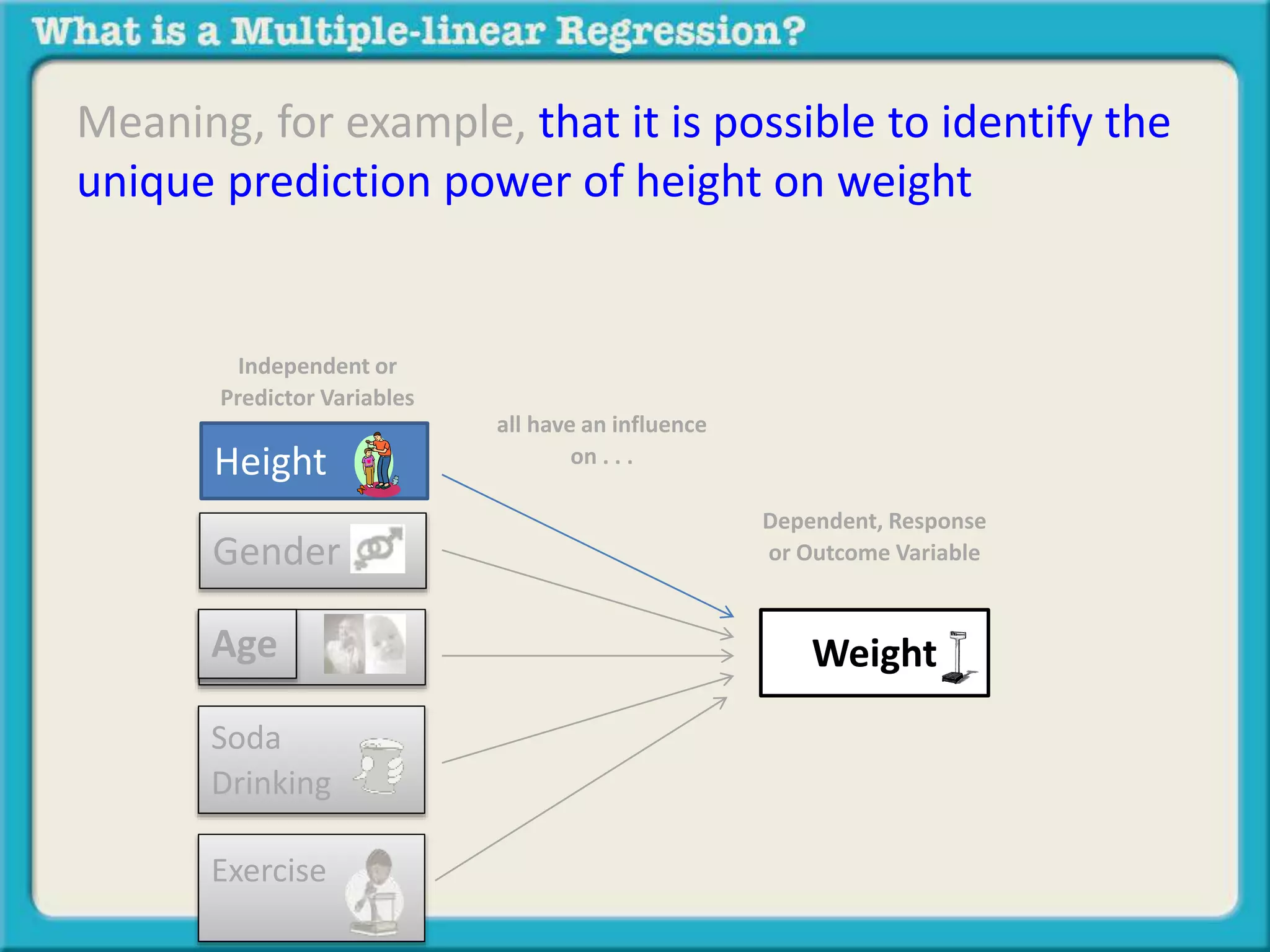

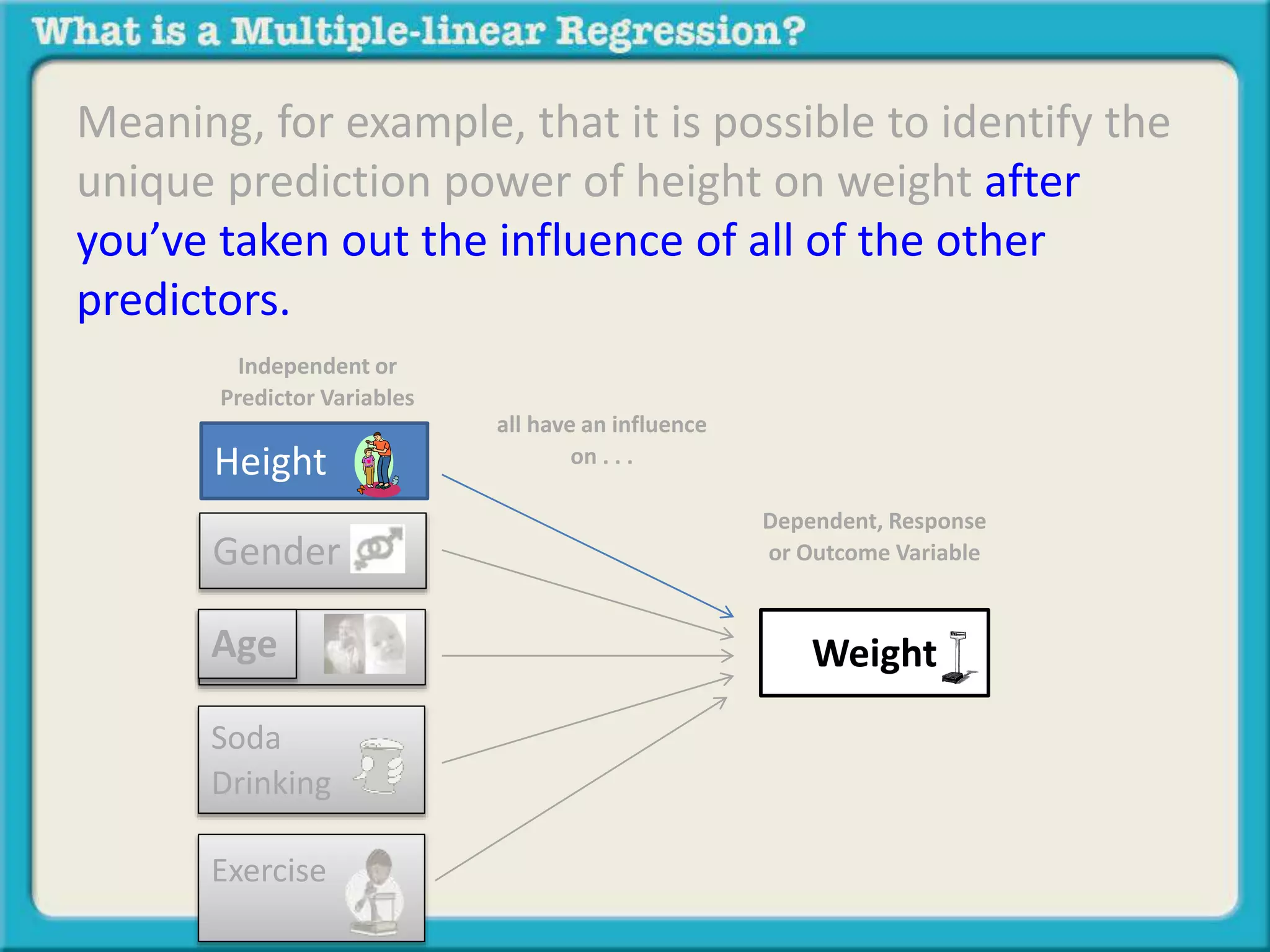

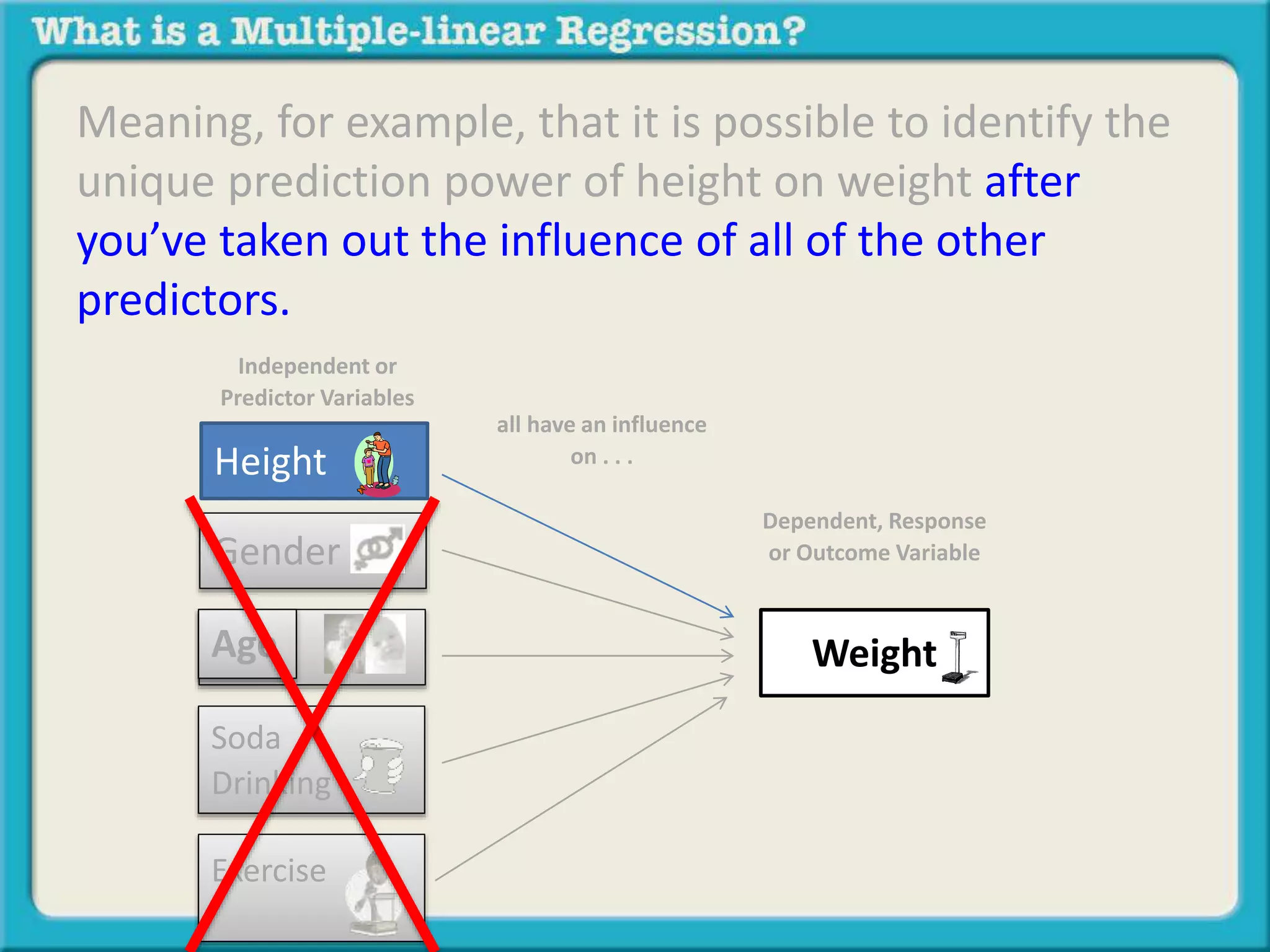

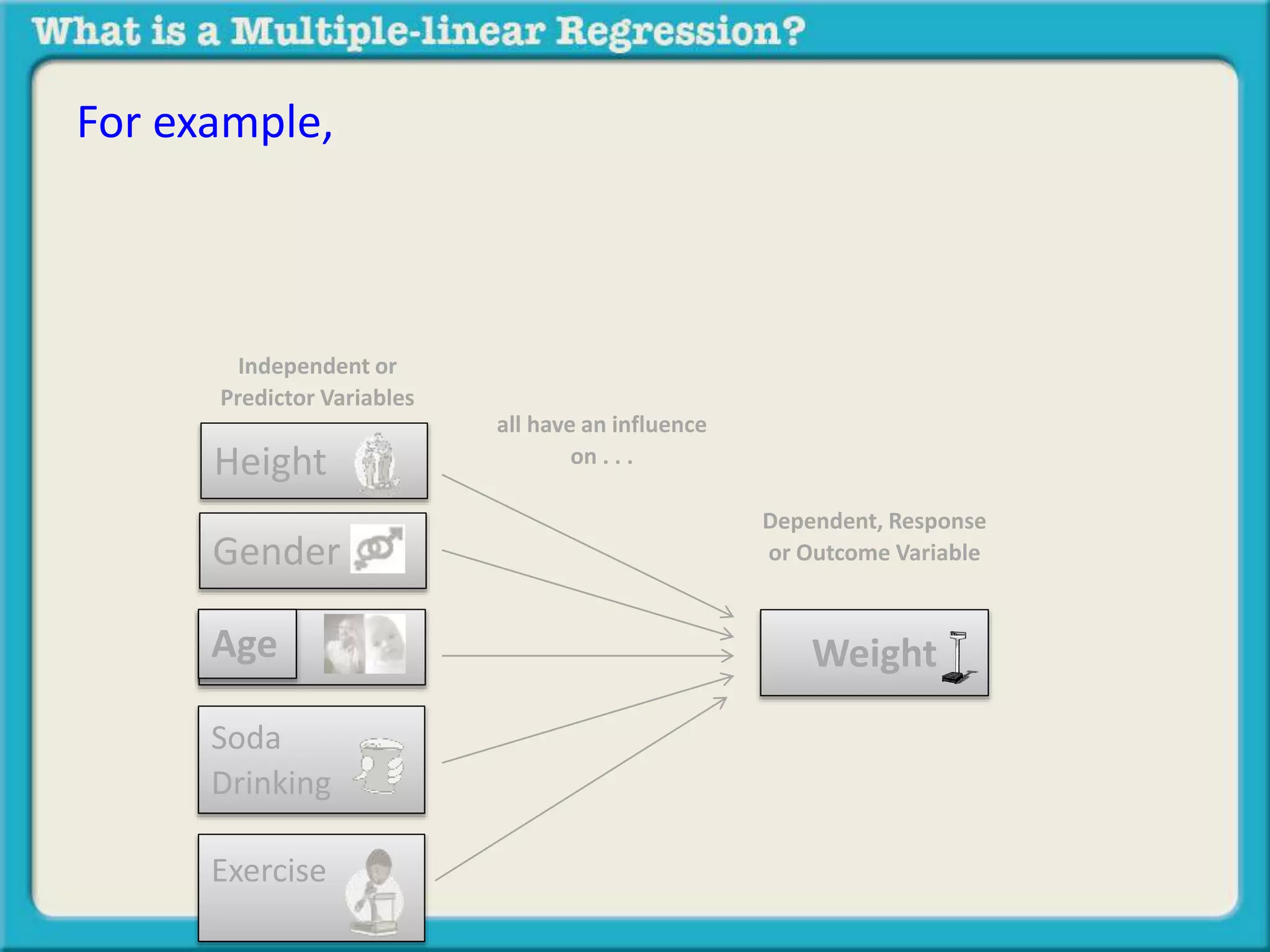

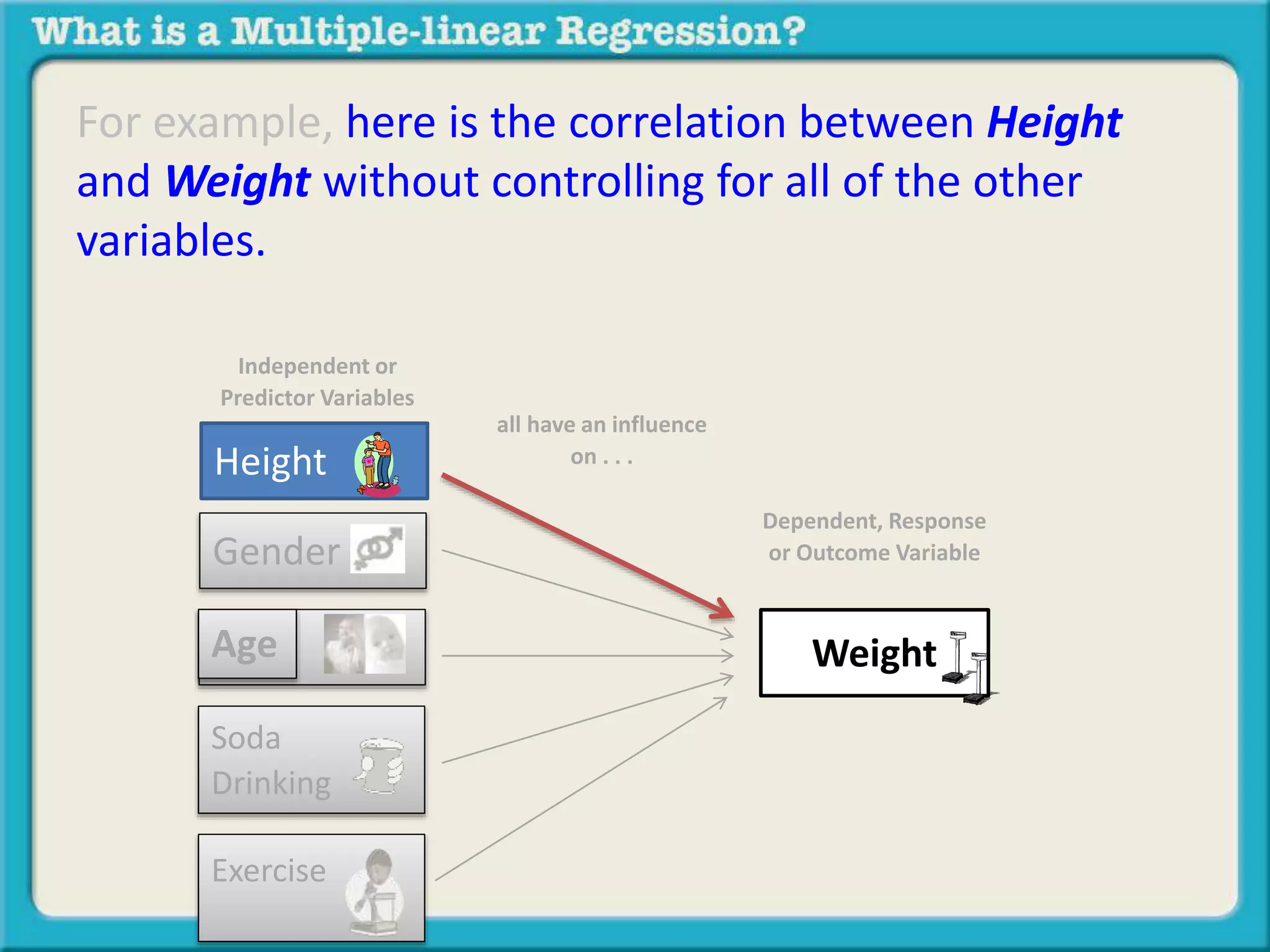

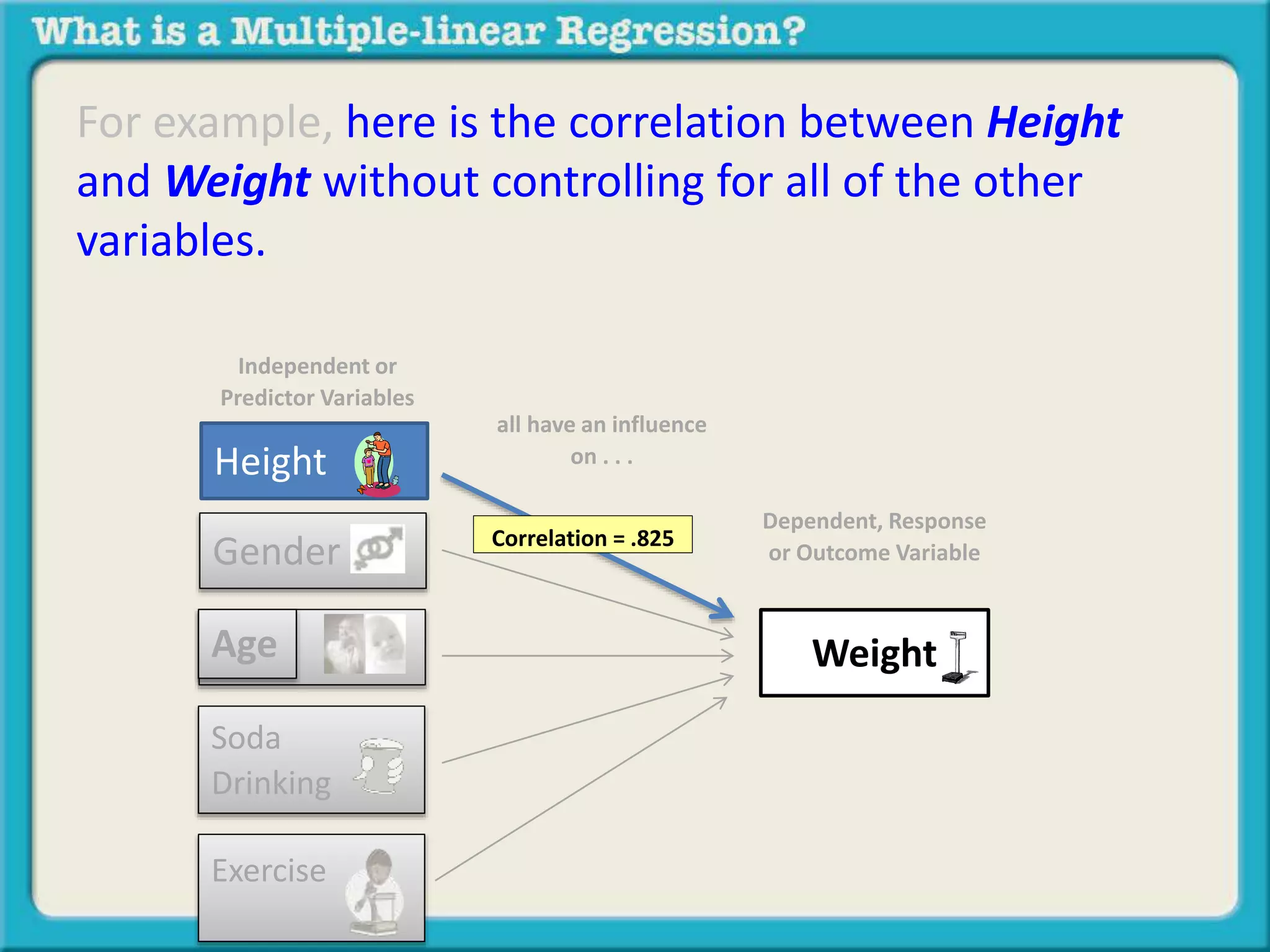

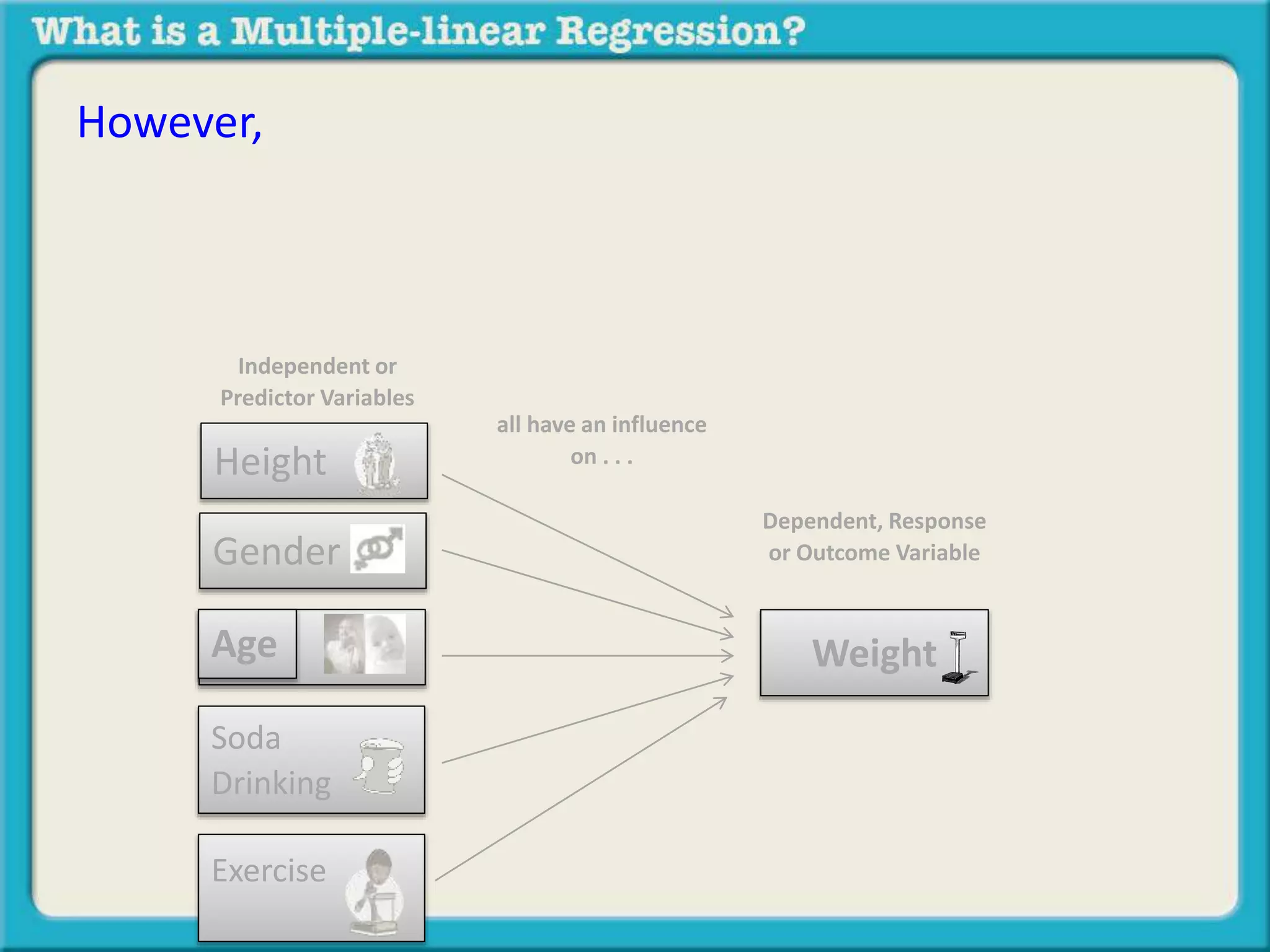

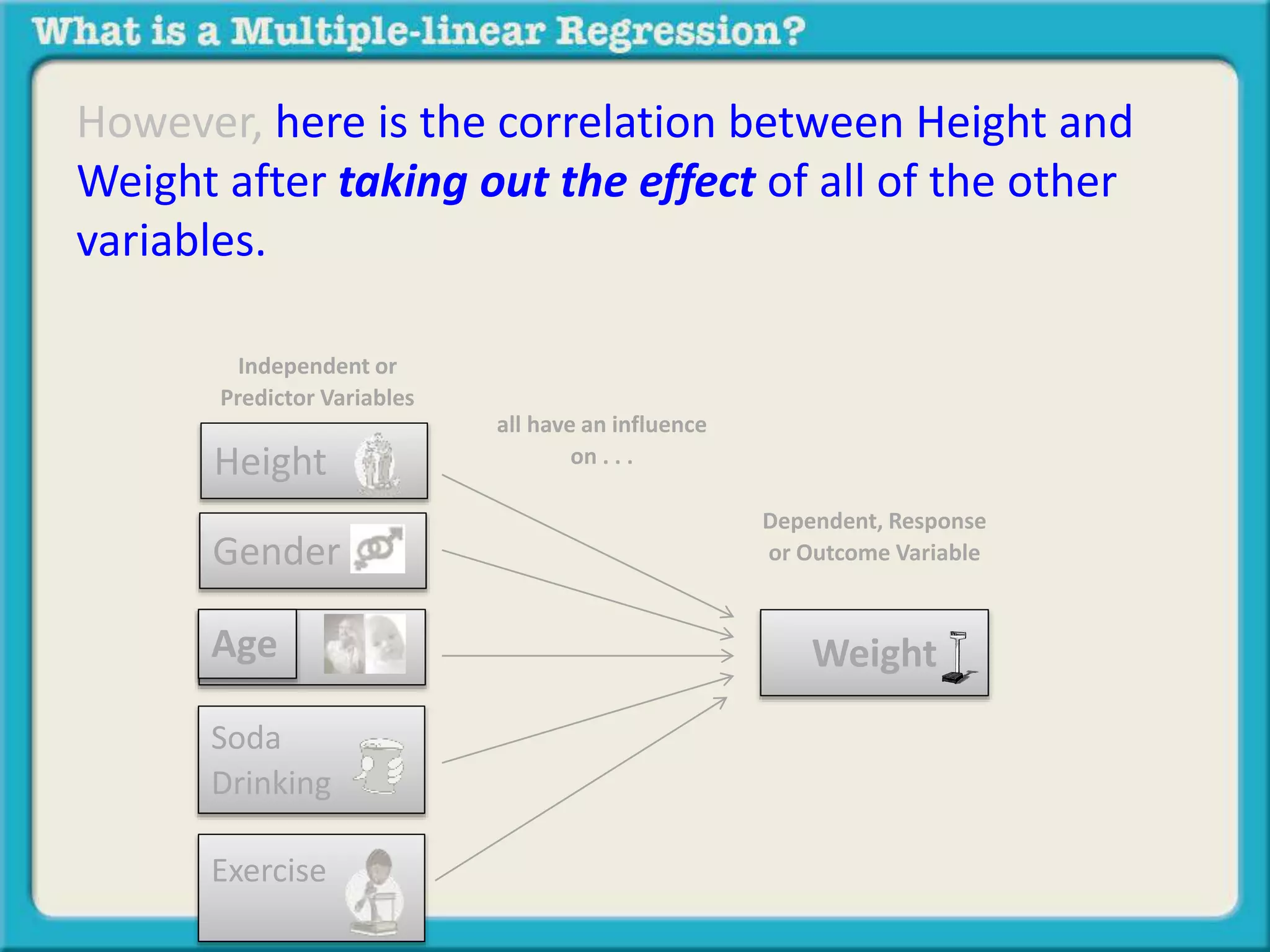

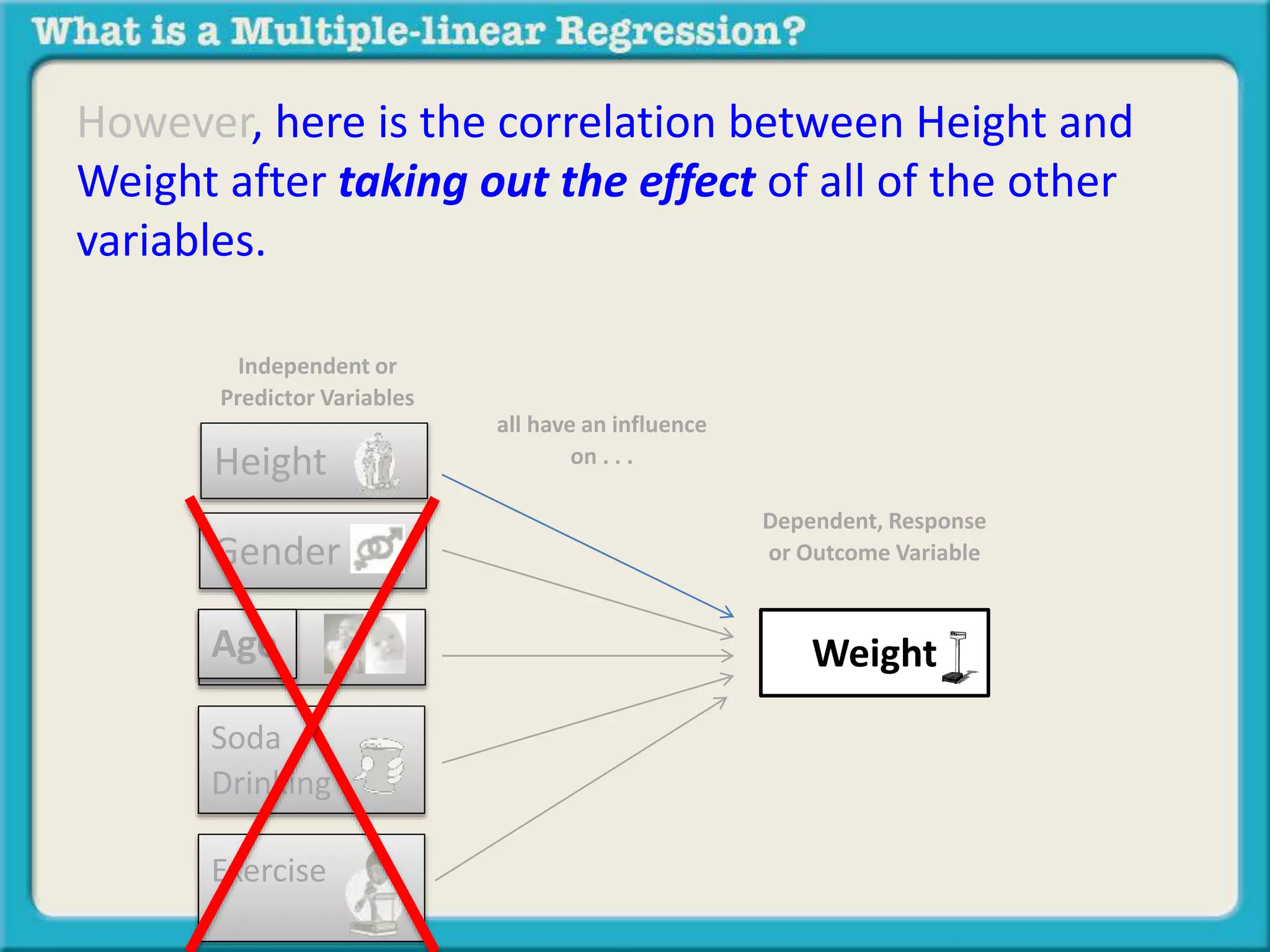

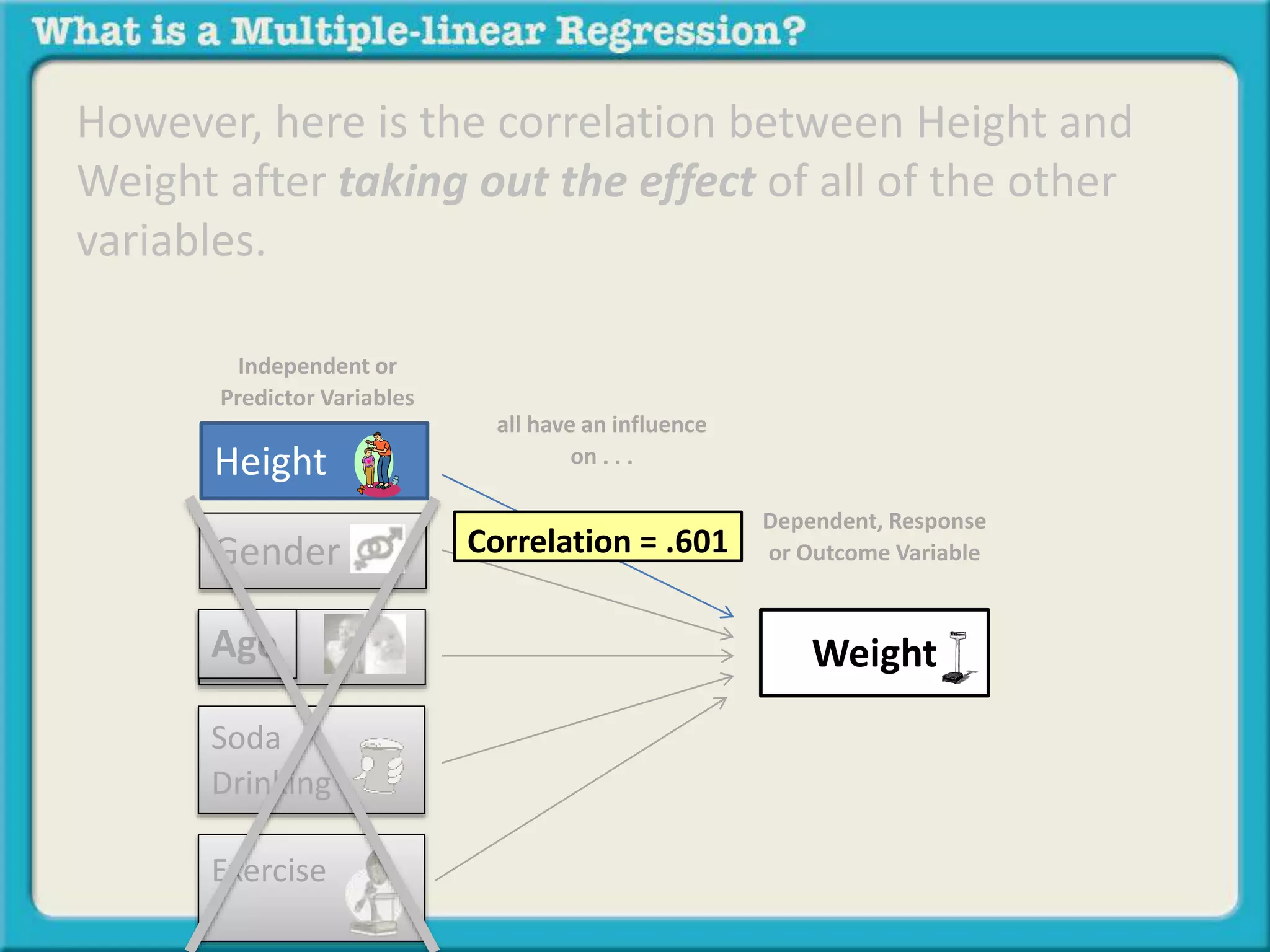

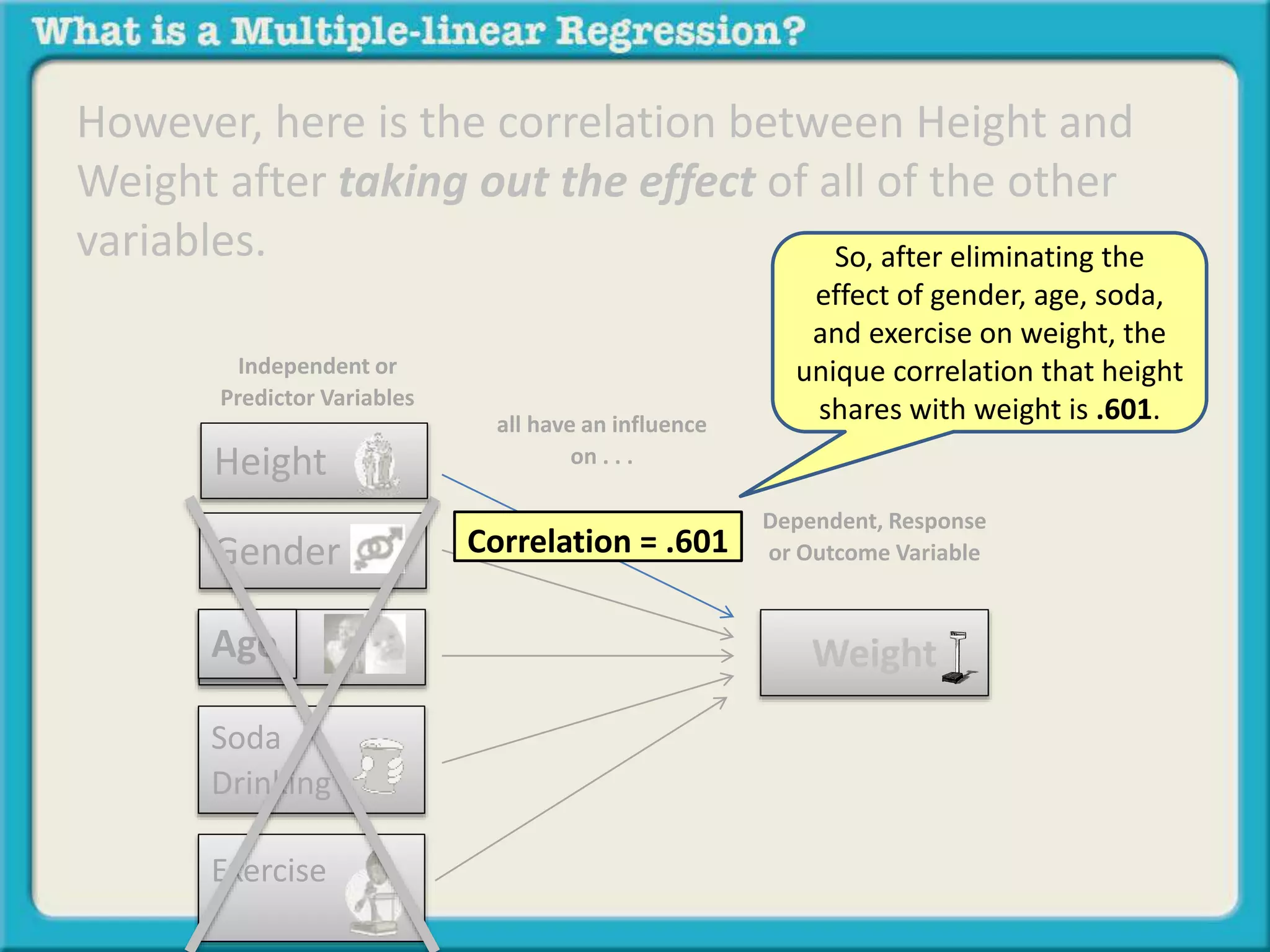

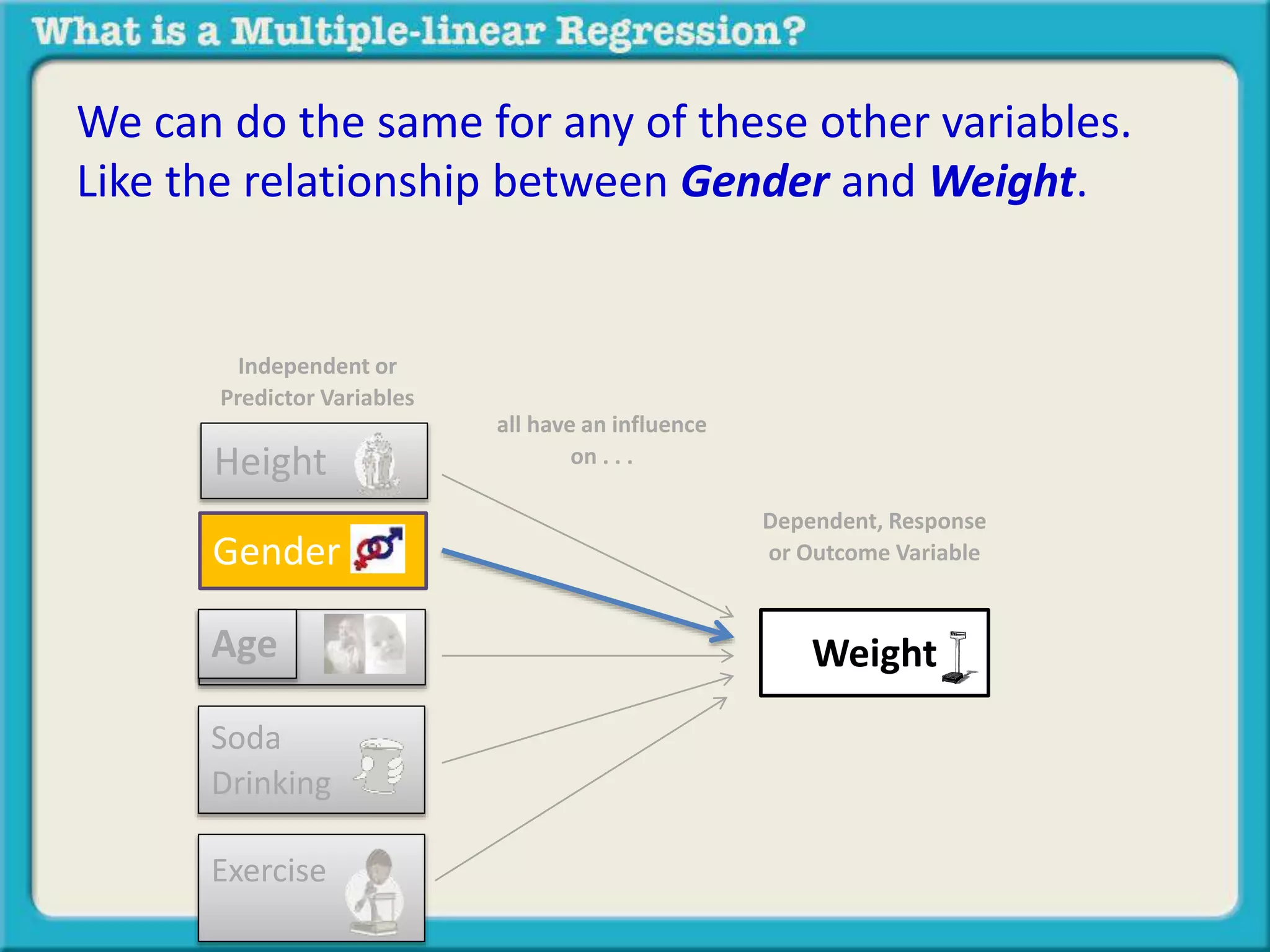

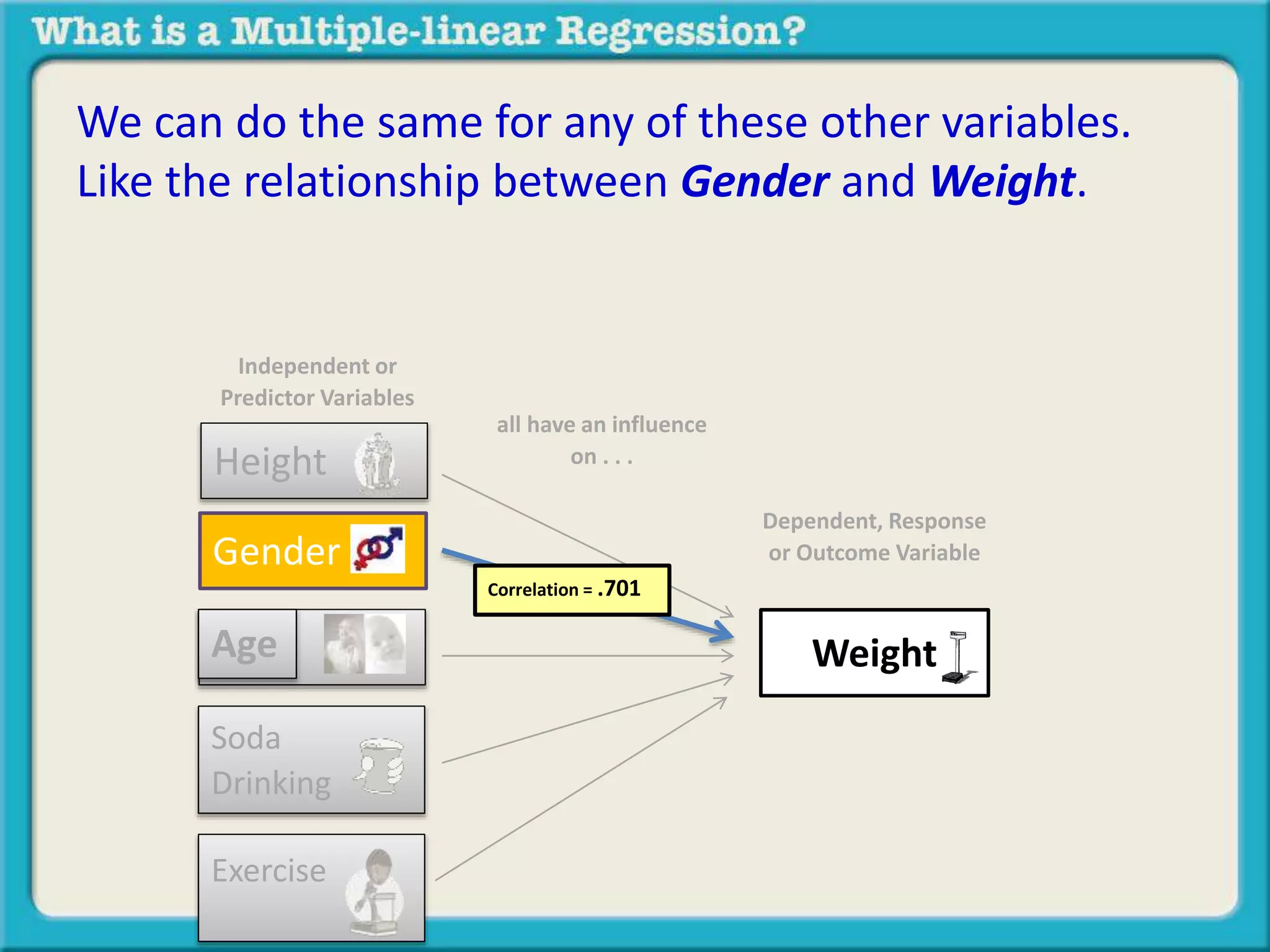

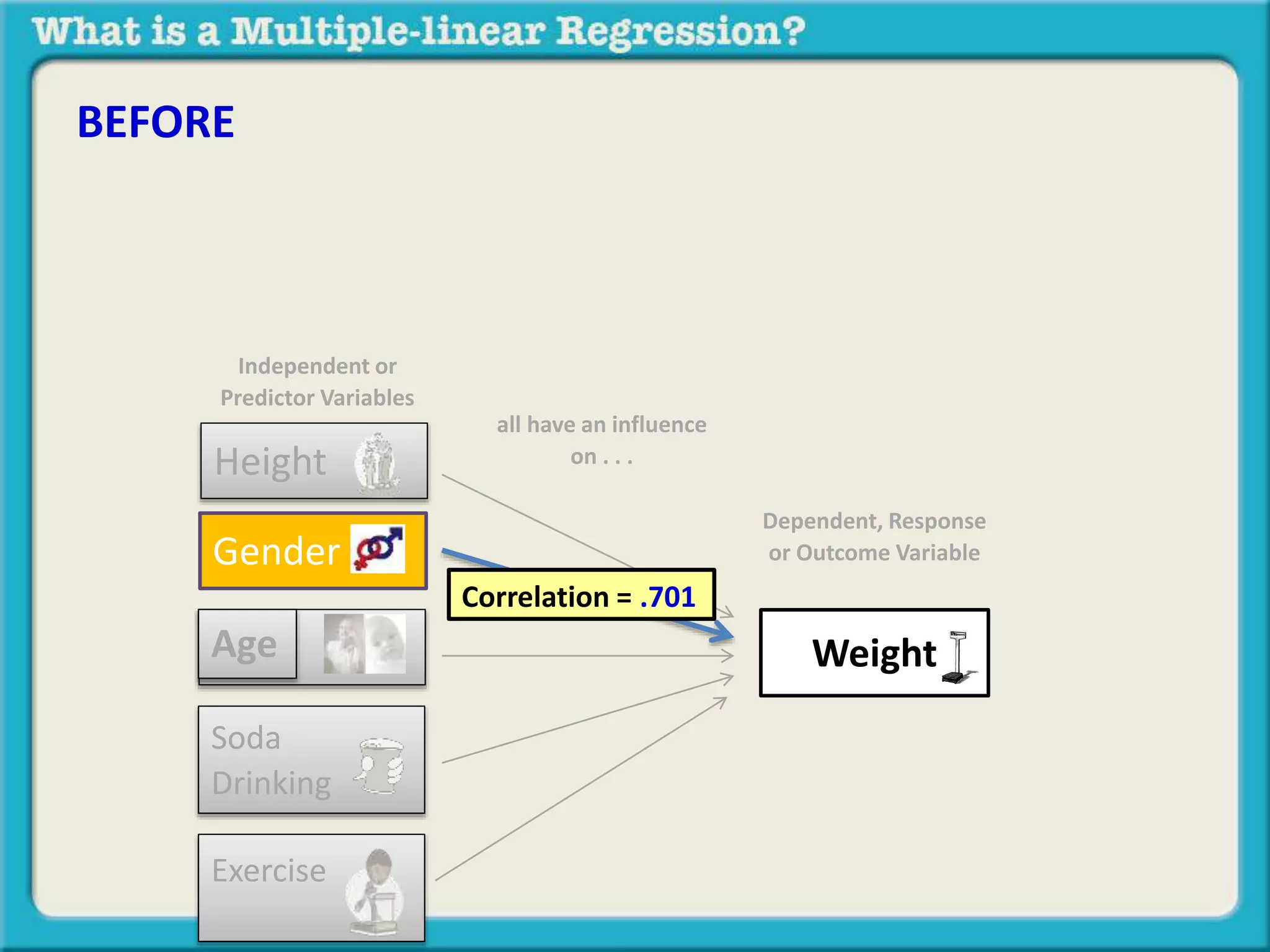

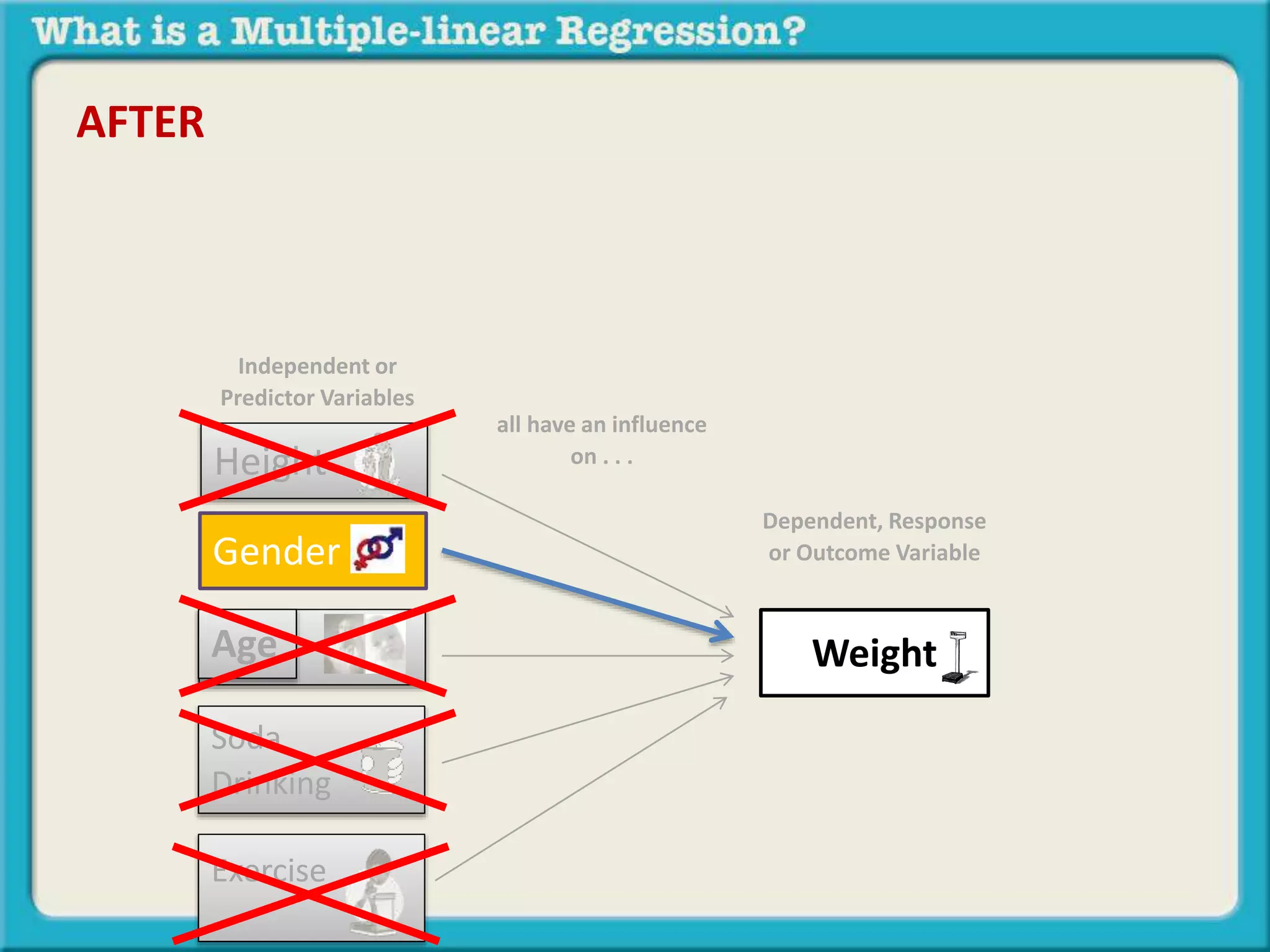

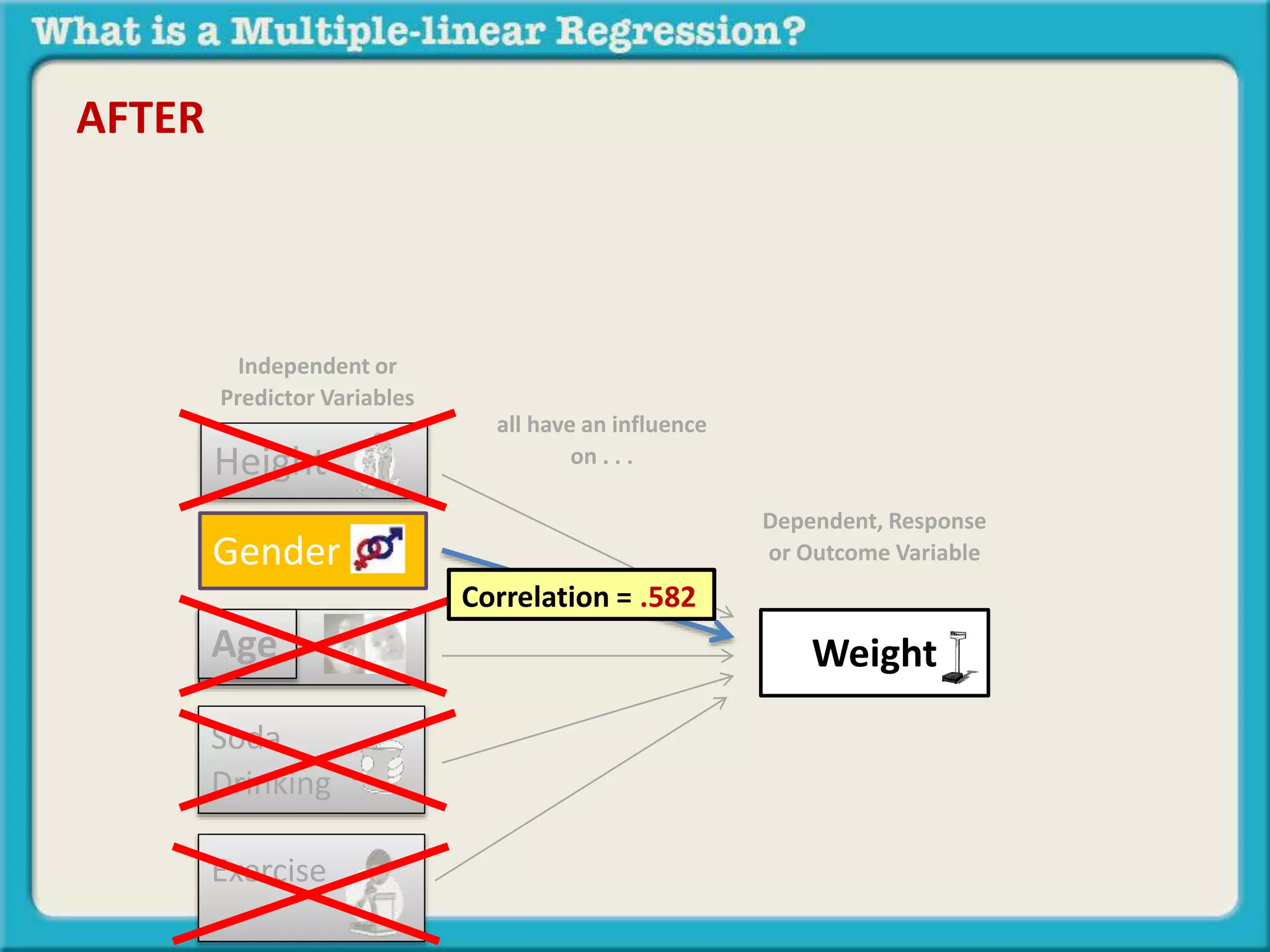

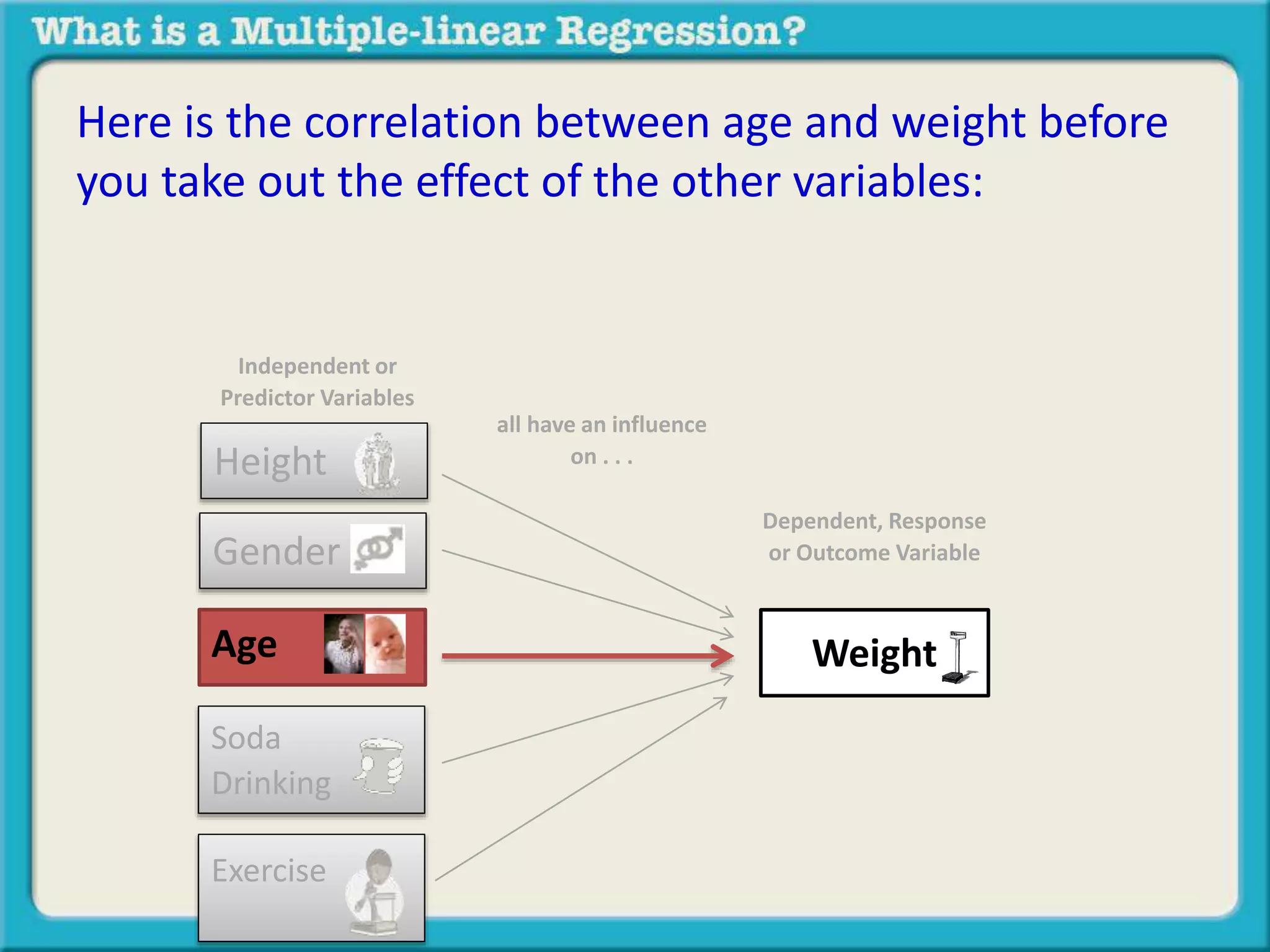

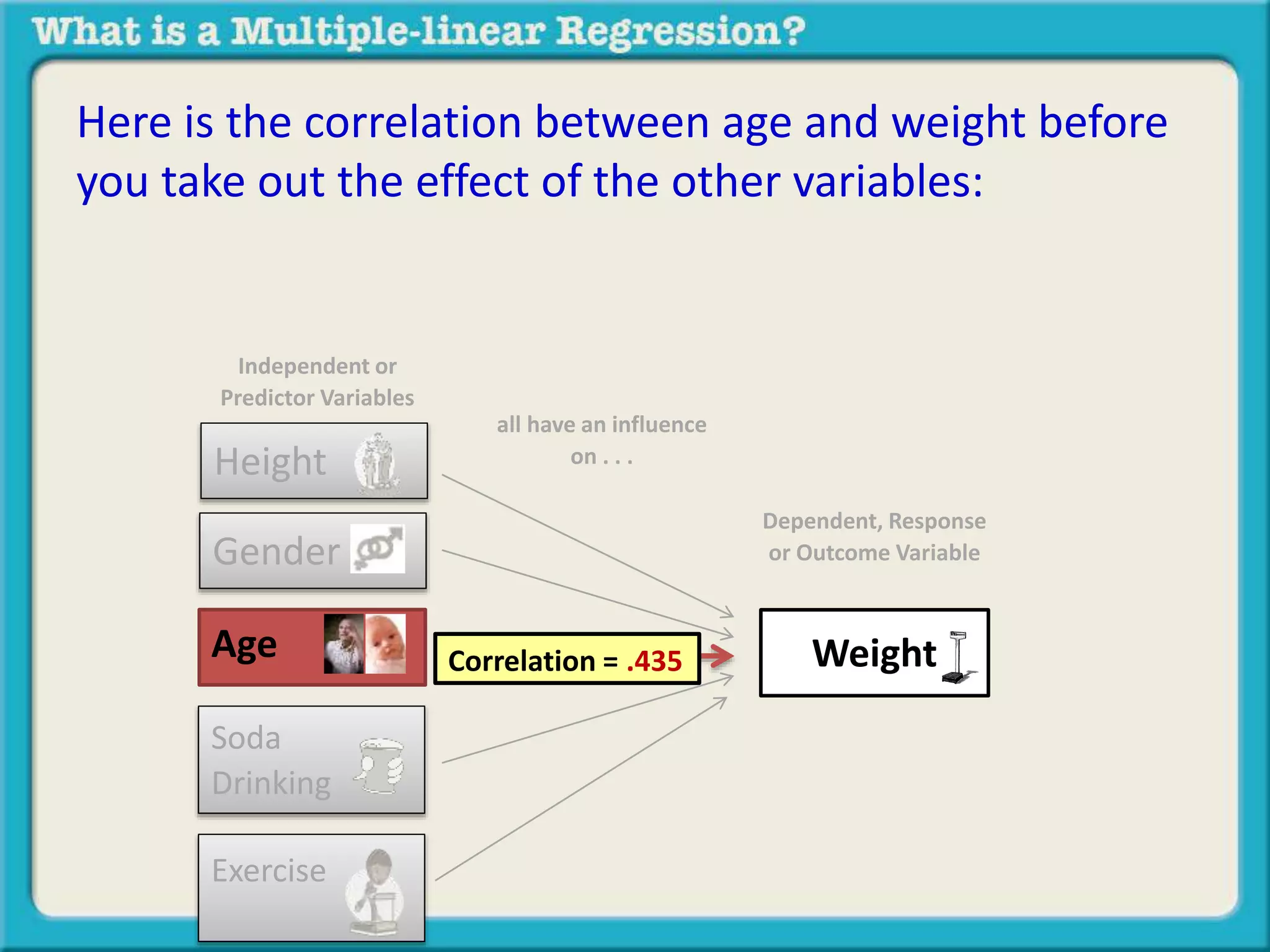

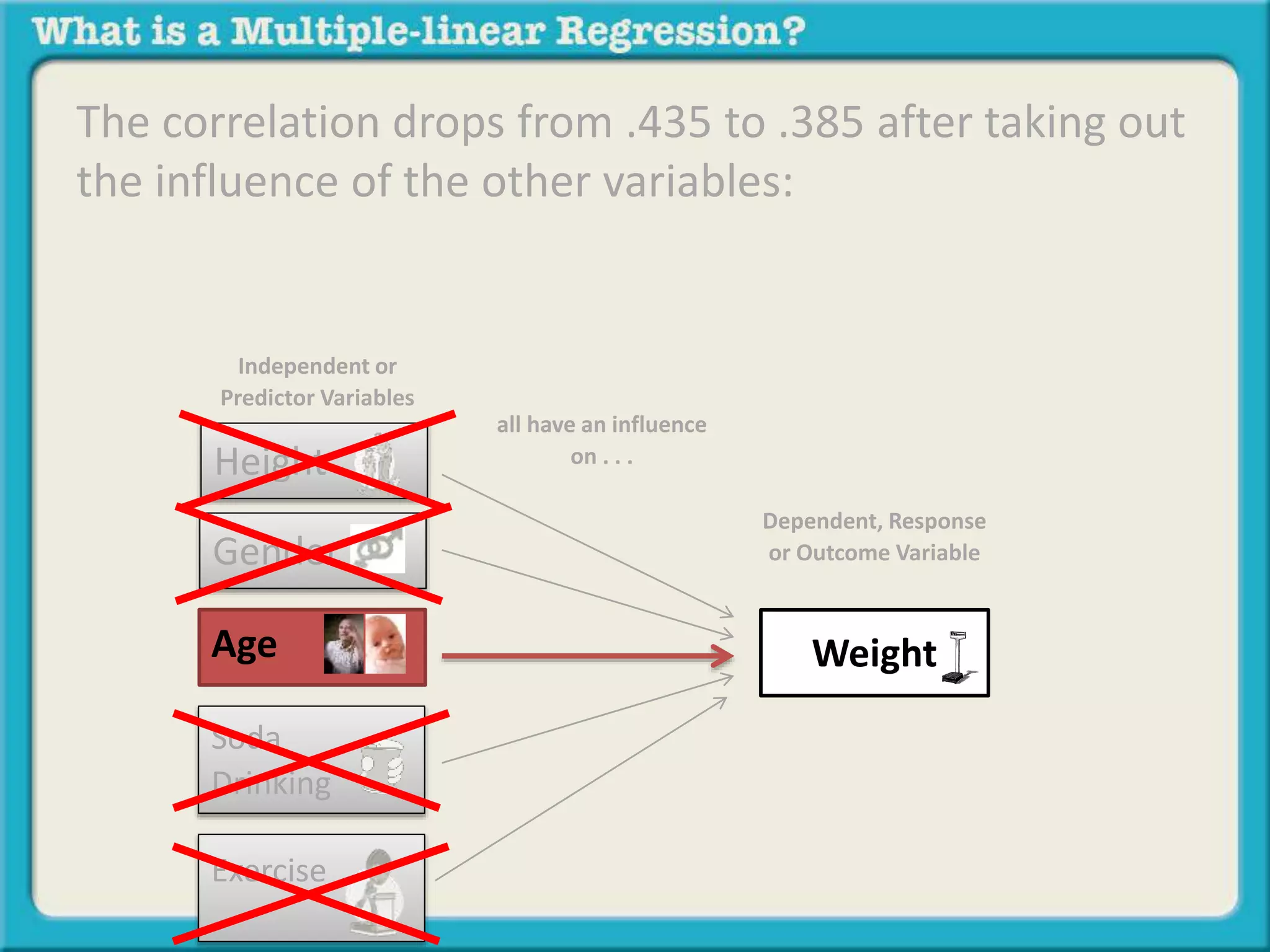

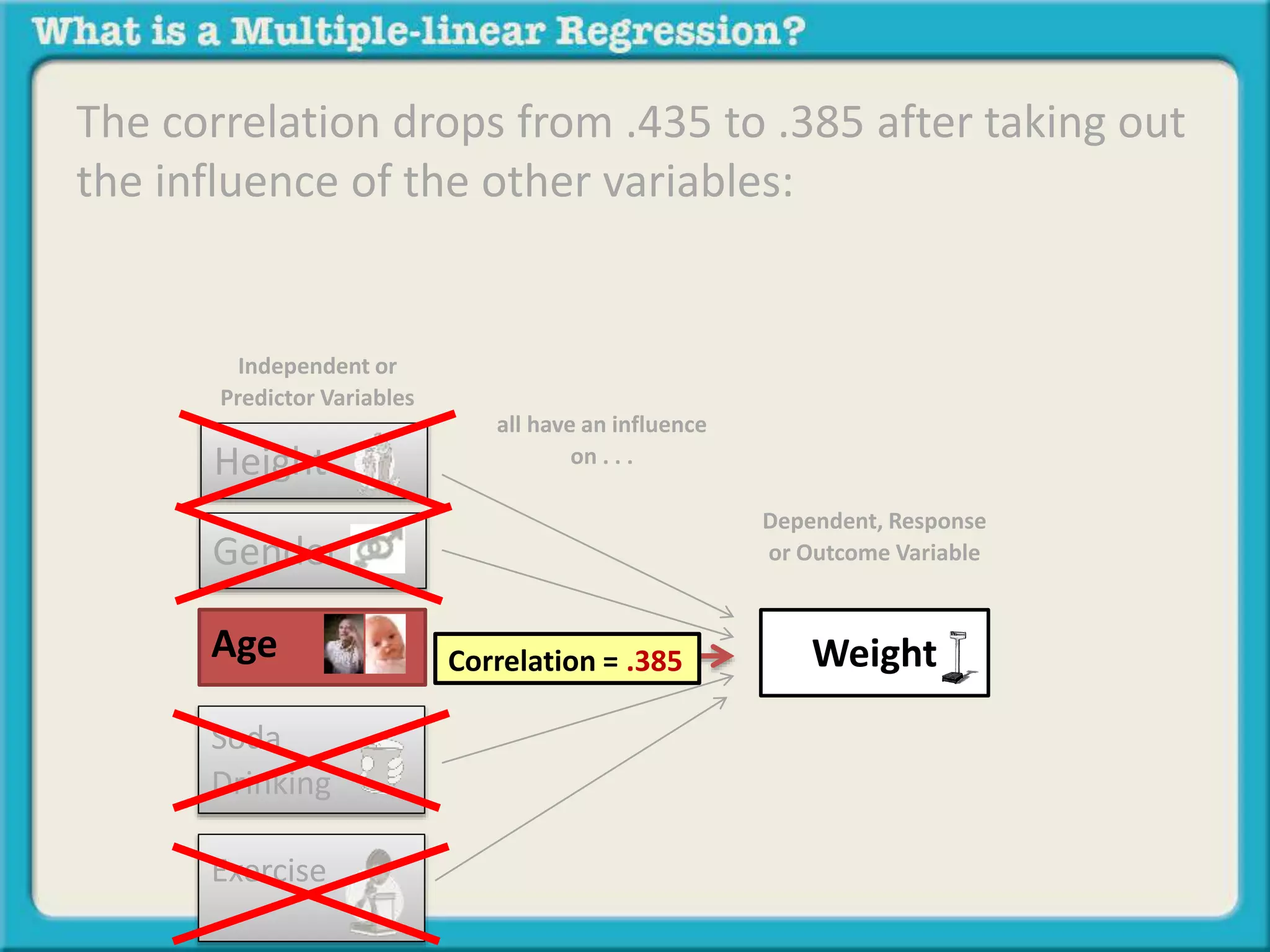

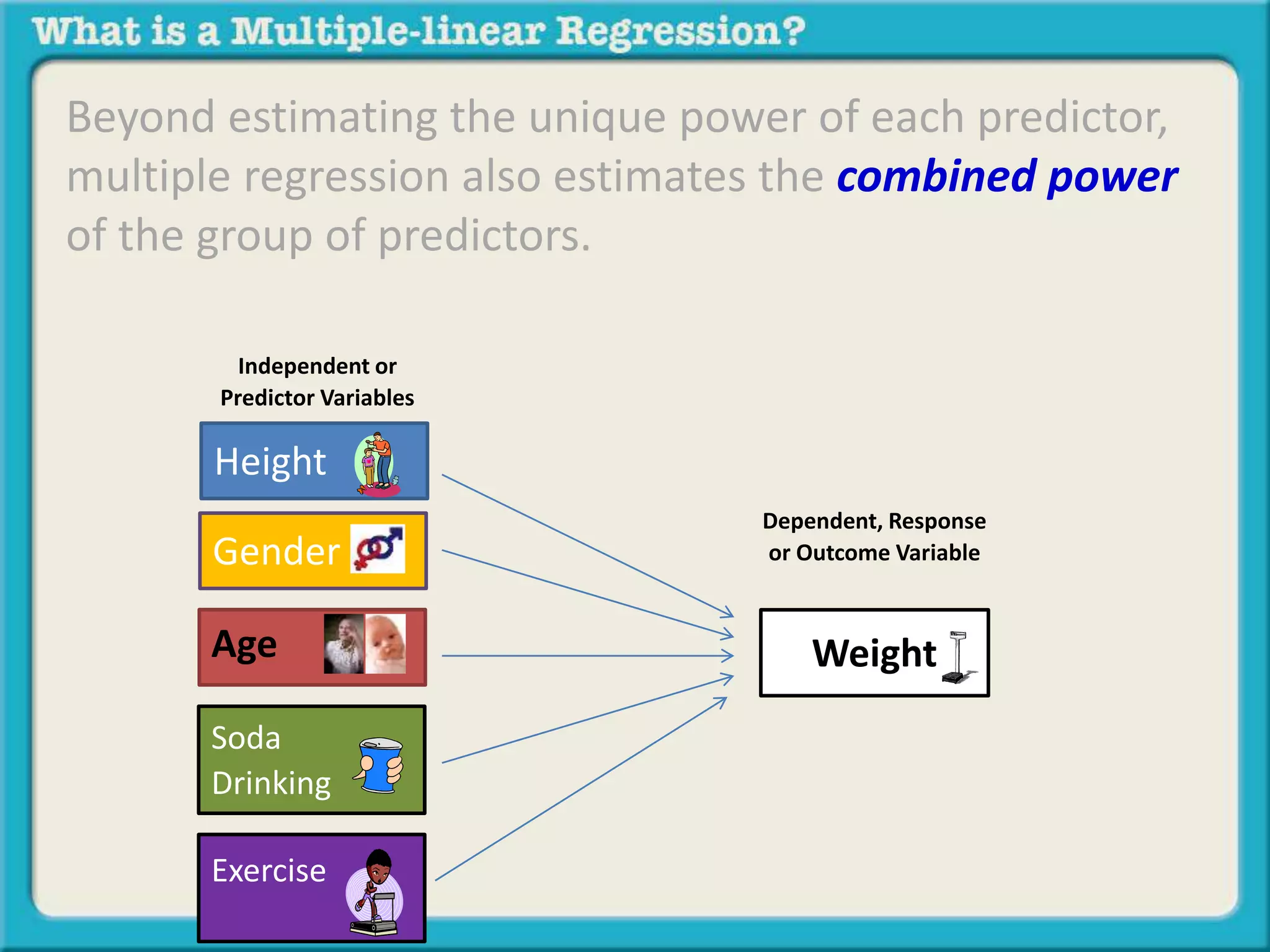

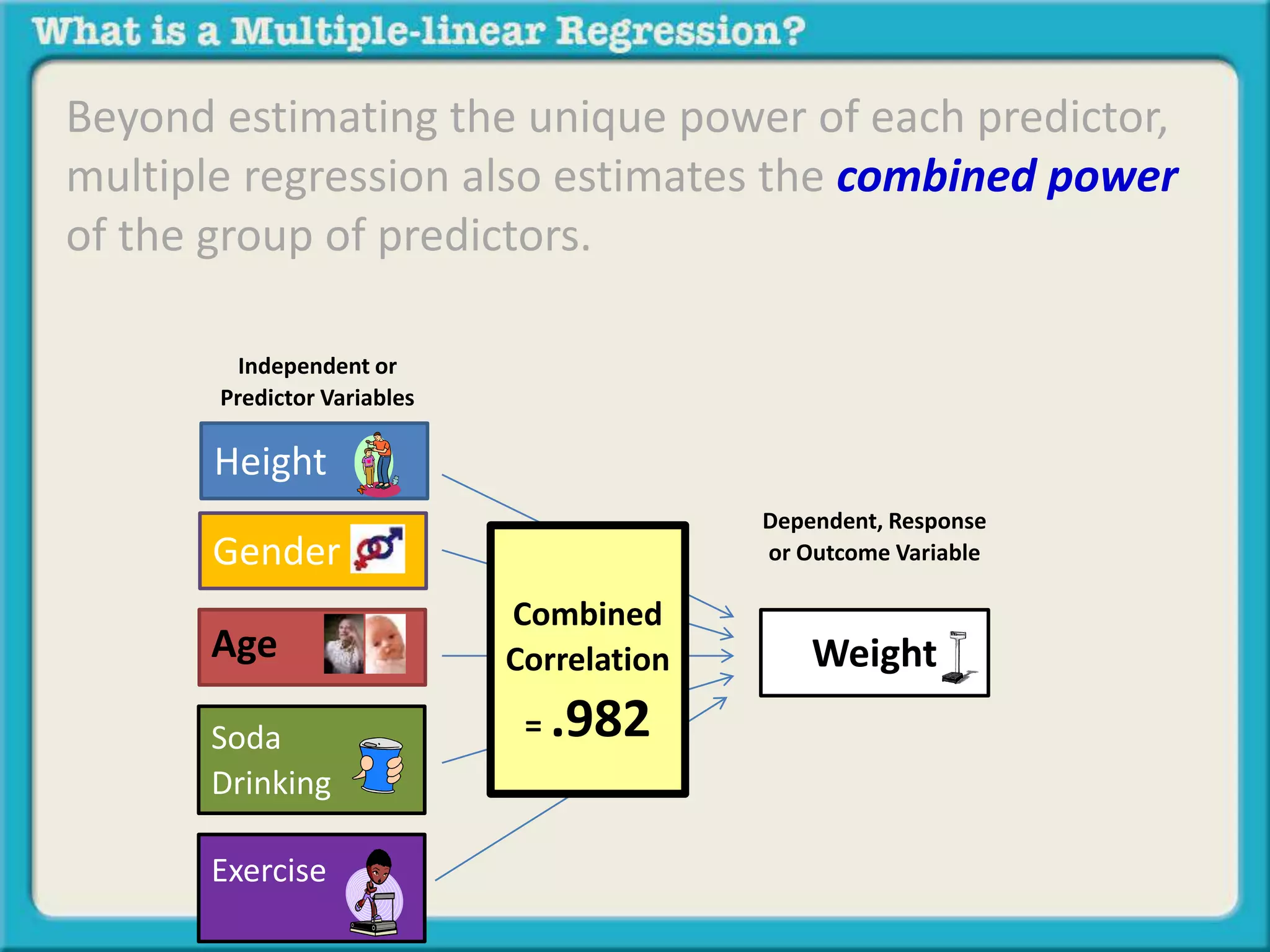

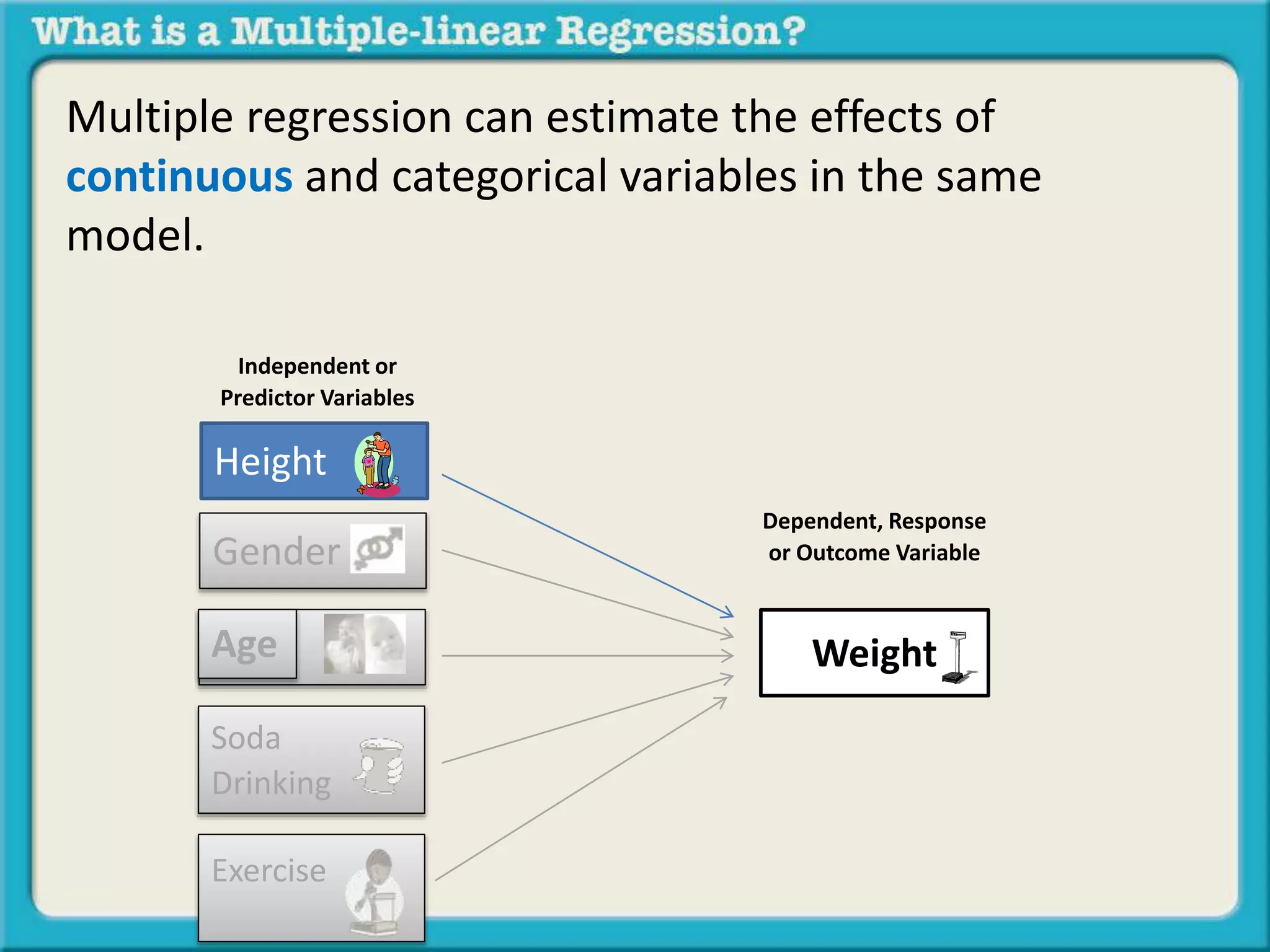

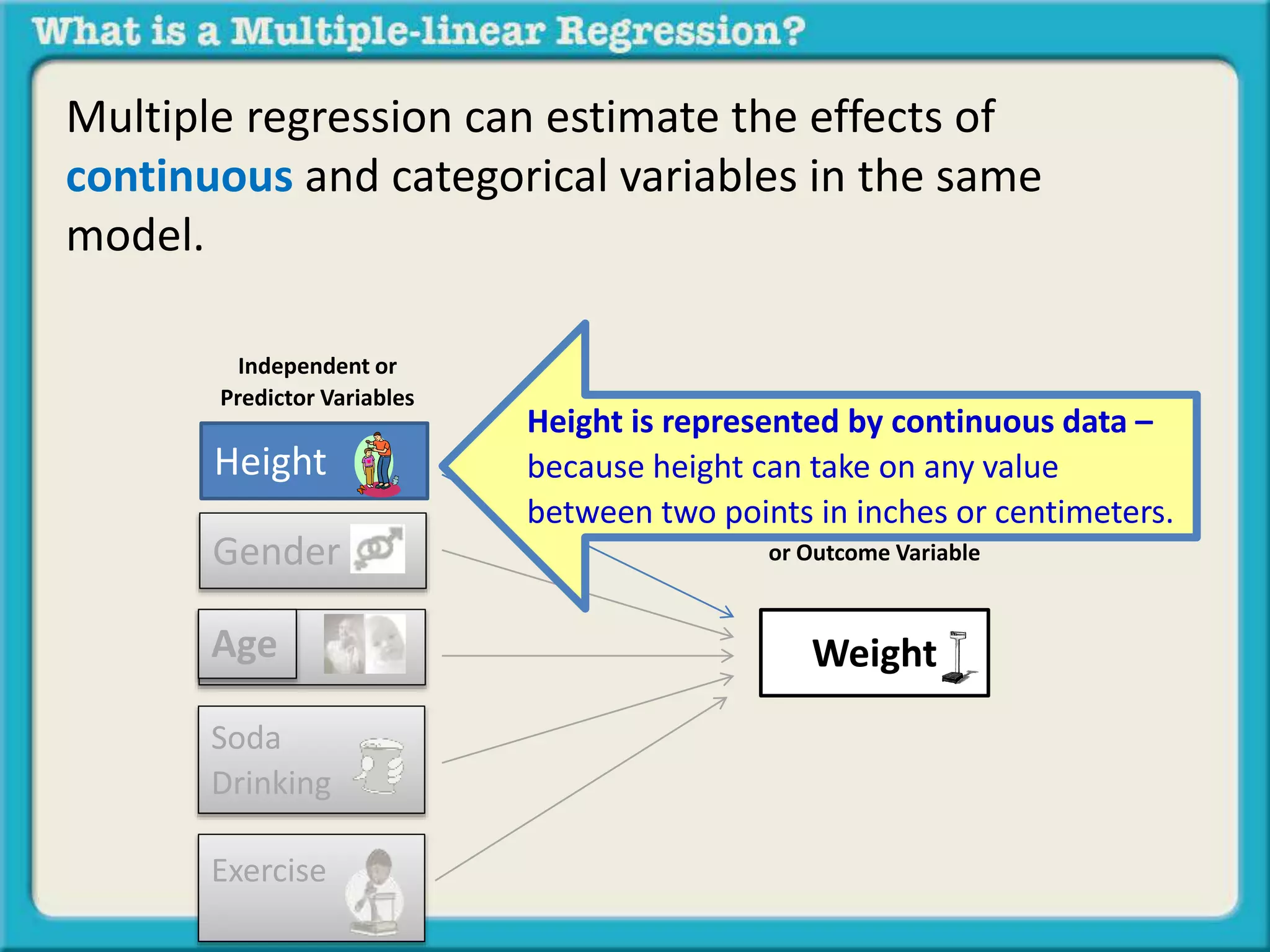

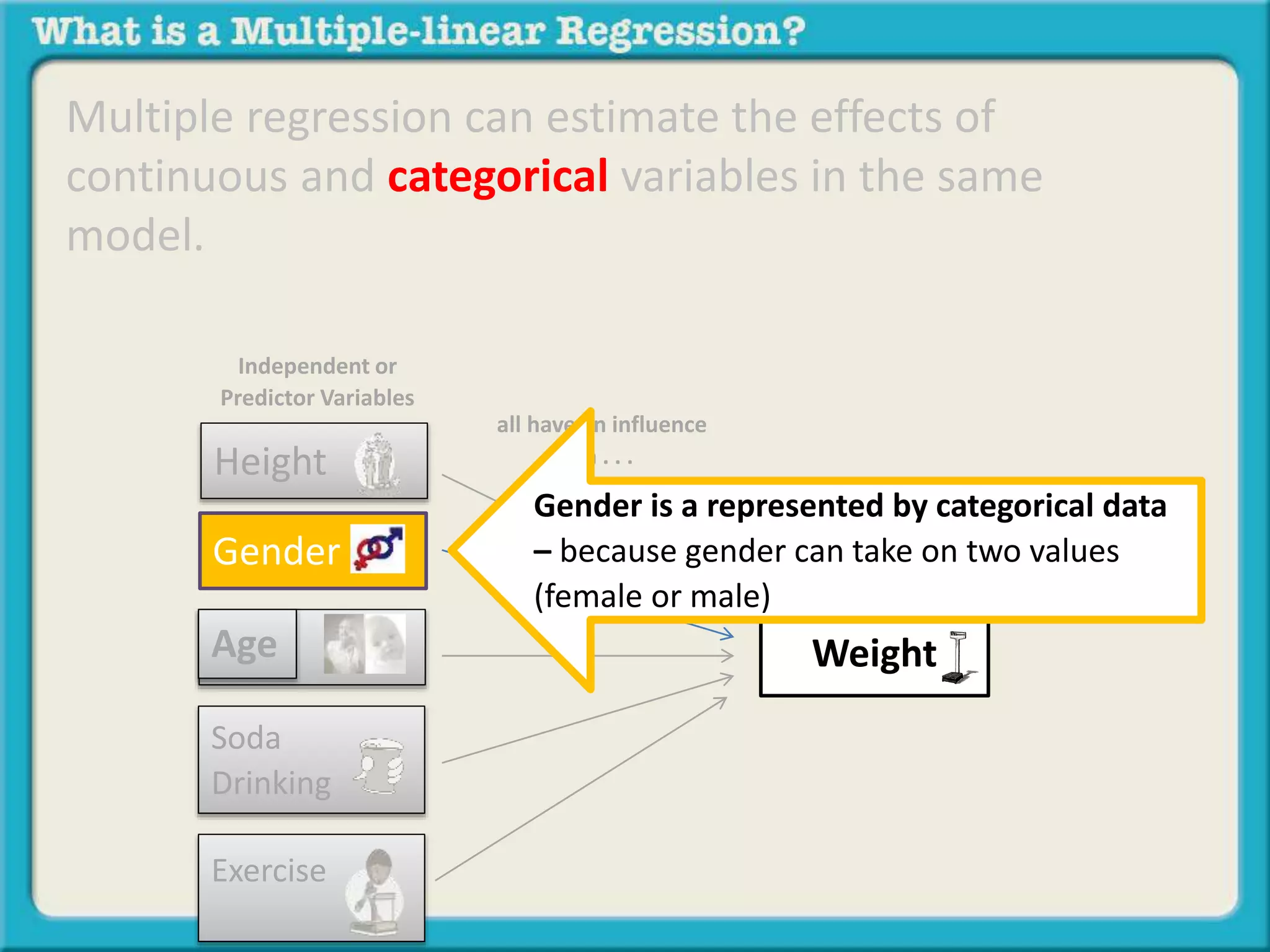

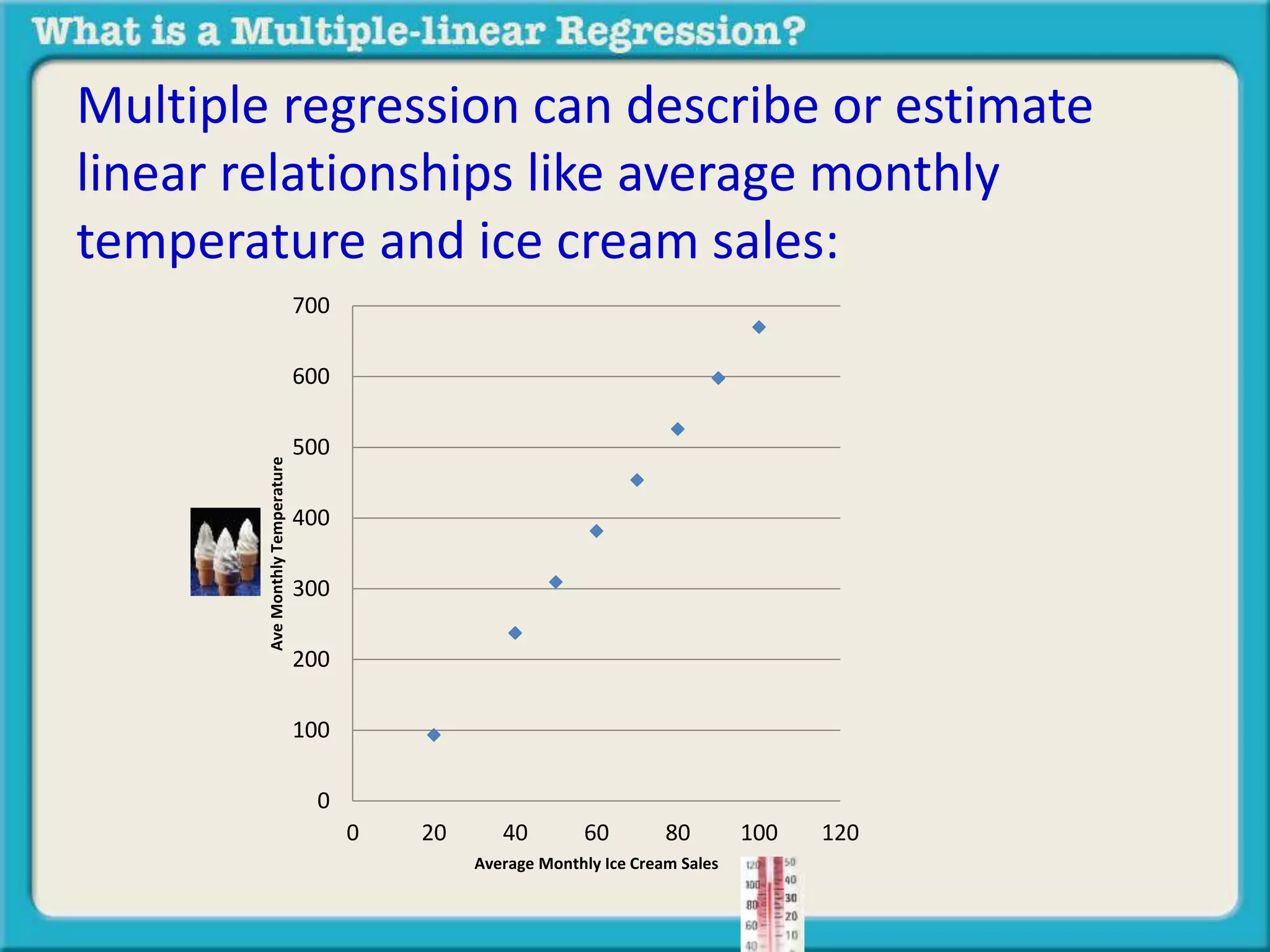

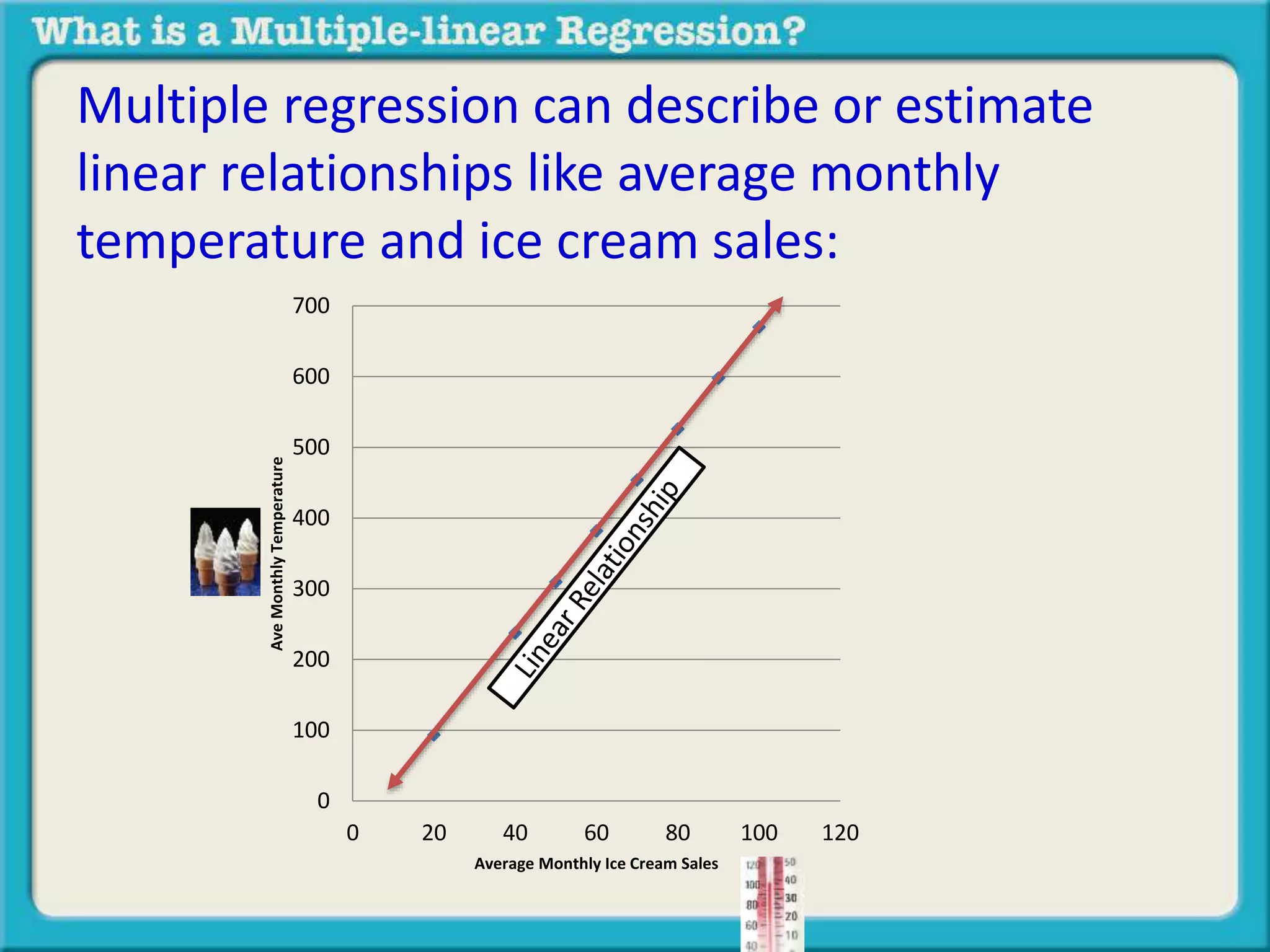

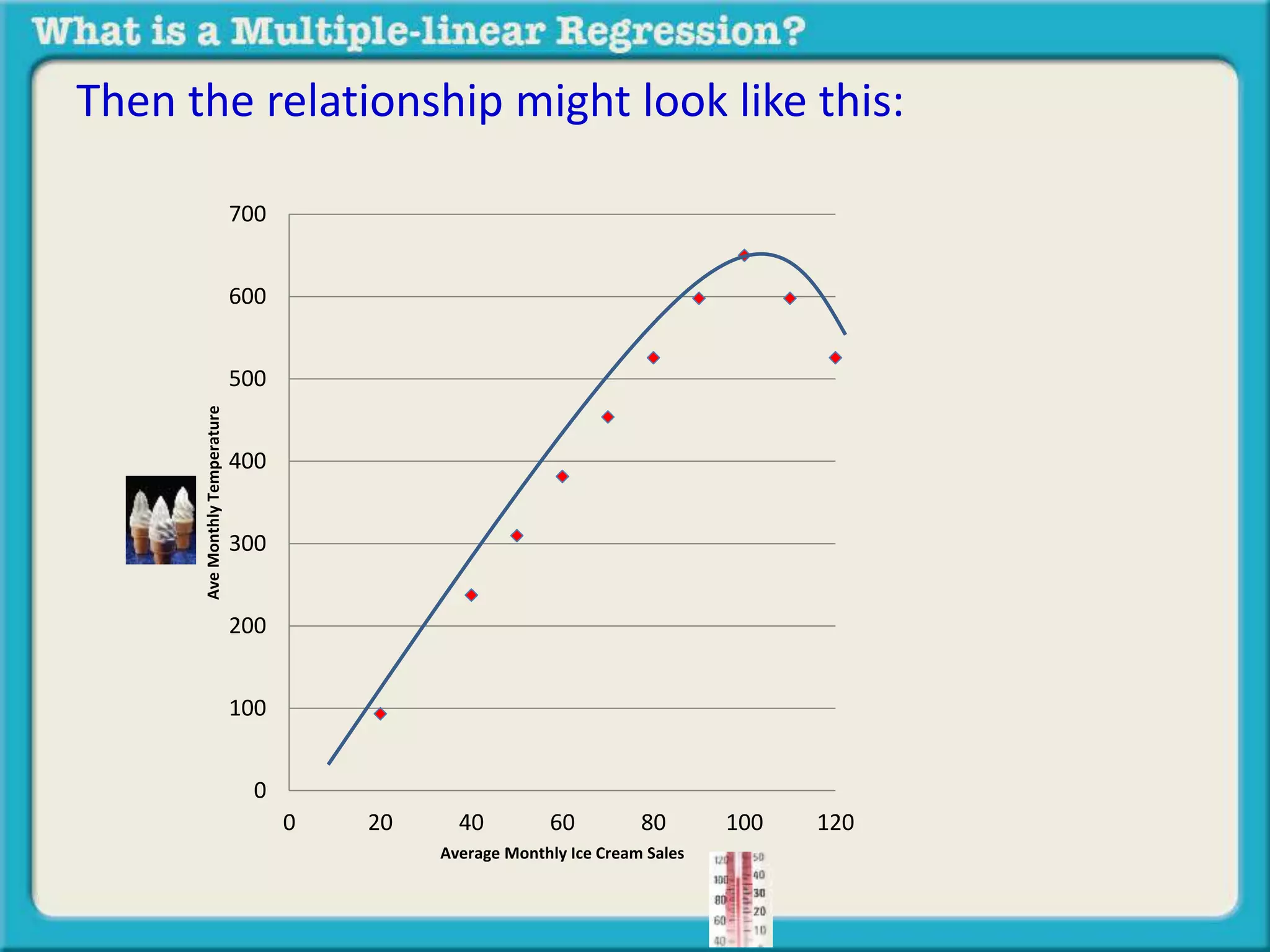

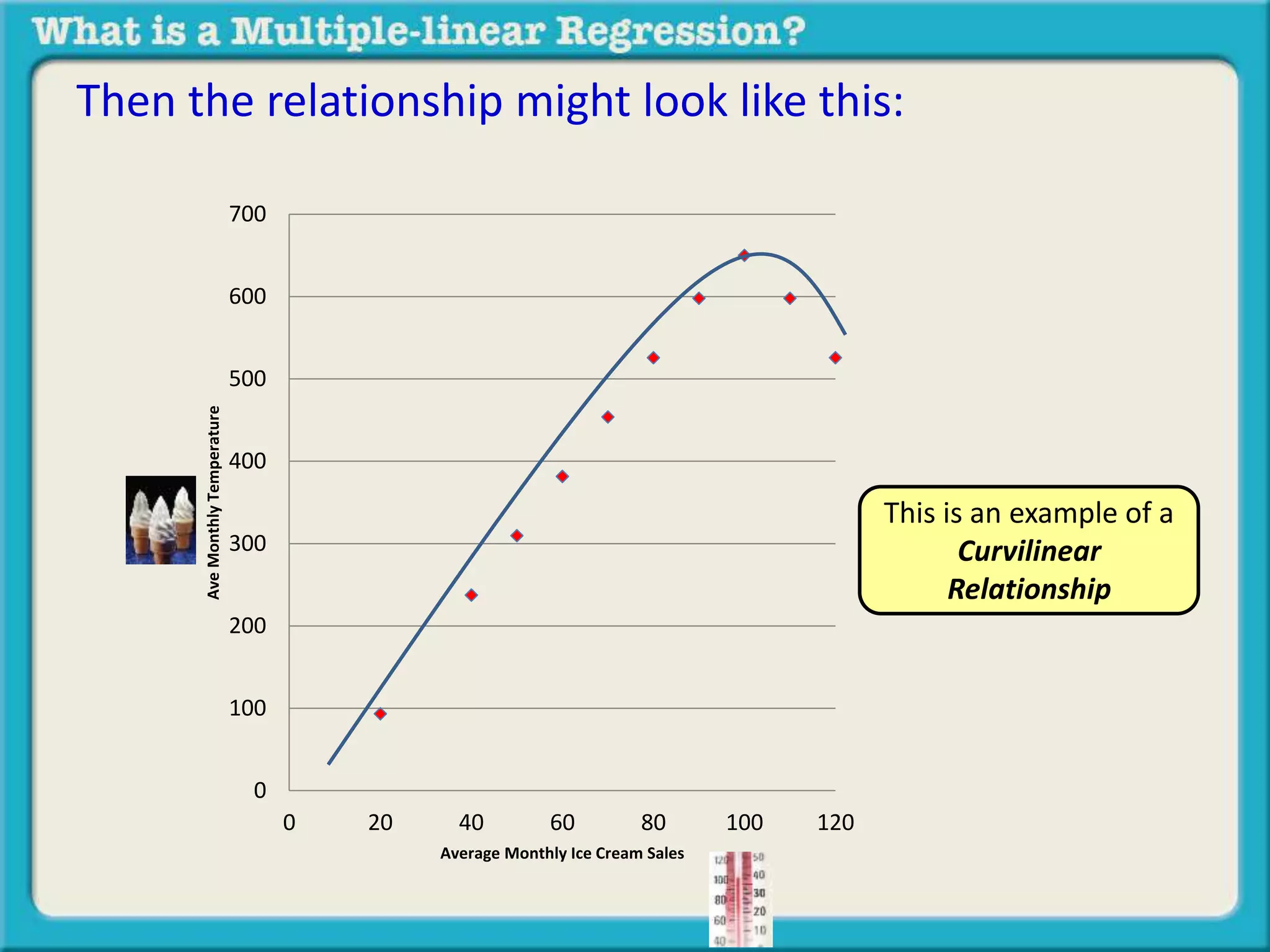

The document discusses multiple linear regression and partial correlation. Multiple regression estimates the unique contribution of each predictor variable to an outcome variable after accounting for the effects of the other predictors. Partial correlation likewise examines the relationship between two variables while controlling for a third variable, but it describes only that one pairwise relationship, whereas multiple regression estimates the effects of several predictors simultaneously. Examples compare the correlation between height and weight with and without controlling for other relevant variables such as gender, age, and exercise habits.
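
As a rough illustration of the height and weight comparison, the sketch below computes a plain correlation, a partial correlation, and a multiple regression on the same data. Everything in it is an assumption made for illustration: the data are synthetic, the effect sizes are invented, and the helper names `partial_corr` and `ols_coefs` are hypothetical and do not come from the slides. The partial correlation is obtained by the residual method, that is, by correlating what is left of height and weight after each has been regressed on the control variables.

```python
import numpy as np


def partial_corr(x, y, controls):
    """Correlation between x and y after removing the linear effect of controls."""
    # Design matrix for the controls, with an intercept column
    Z = np.column_stack([np.ones(len(x)), controls])
    # Residuals of x and y from least-squares fits on the controls
    rx = x - Z @ np.linalg.lstsq(Z, x, rcond=None)[0]
    ry = y - Z @ np.linalg.lstsq(Z, y, rcond=None)[0]
    return np.corrcoef(rx, ry)[0, 1]


def ols_coefs(y, predictors):
    """Least-squares coefficients: intercept first, then one per predictor."""
    X = np.column_stack([np.ones(len(y)), predictors])
    return np.linalg.lstsq(X, y, rcond=None)[0]


# Synthetic data (invented numbers, not taken from the document)
rng = np.random.default_rng(0)
n = 500
gender = rng.integers(0, 2, n).astype(float)   # 0/1 indicator
age = rng.uniform(20, 60, n)                   # years
height = 162 + 13 * gender + rng.normal(0, 6, n)                                 # cm
weight = -40 + 0.6 * height + 8 * gender + 0.15 * age + rng.normal(0, 7, n)      # kg

# Plain correlation ignores gender and age
r_raw = np.corrcoef(height, weight)[0, 1]

# Partial correlation holds gender and age constant
r_partial = partial_corr(height, weight, np.column_stack([gender, age]))

# Multiple regression estimates all predictors' effects at once
coefs = ols_coefs(weight, np.column_stack([height, gender, age]))

print("raw correlation:    ", round(r_raw, 3))
print("partial correlation:", round(r_partial, 3))
print("regression coefficients (intercept, height, gender, age):", np.round(coefs, 3))
```

In this setup gender raises both height and weight, so the plain correlation overstates the height and weight relationship relative to the partial correlation. The height coefficient from the regression always has the same sign as the partial correlation, since both are computed from the same residual relationship; the regression additionally reports coefficients for gender and age.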