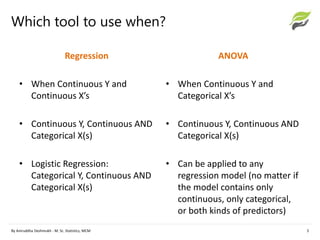

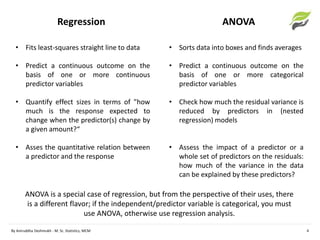

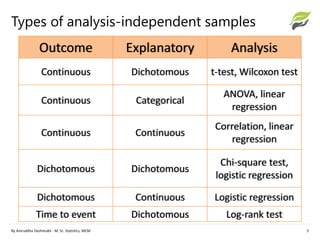

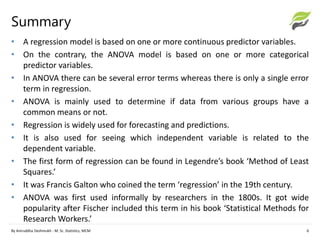

The document compares ANOVA and regression analysis, highlighting their similarities and differences in statistical modeling. ANOVA is suited for categorical predictor variables while regression is used for continuous predictors, with each having distinct applications in data analysis. The document also details the historical context and foundational principles of both methods.