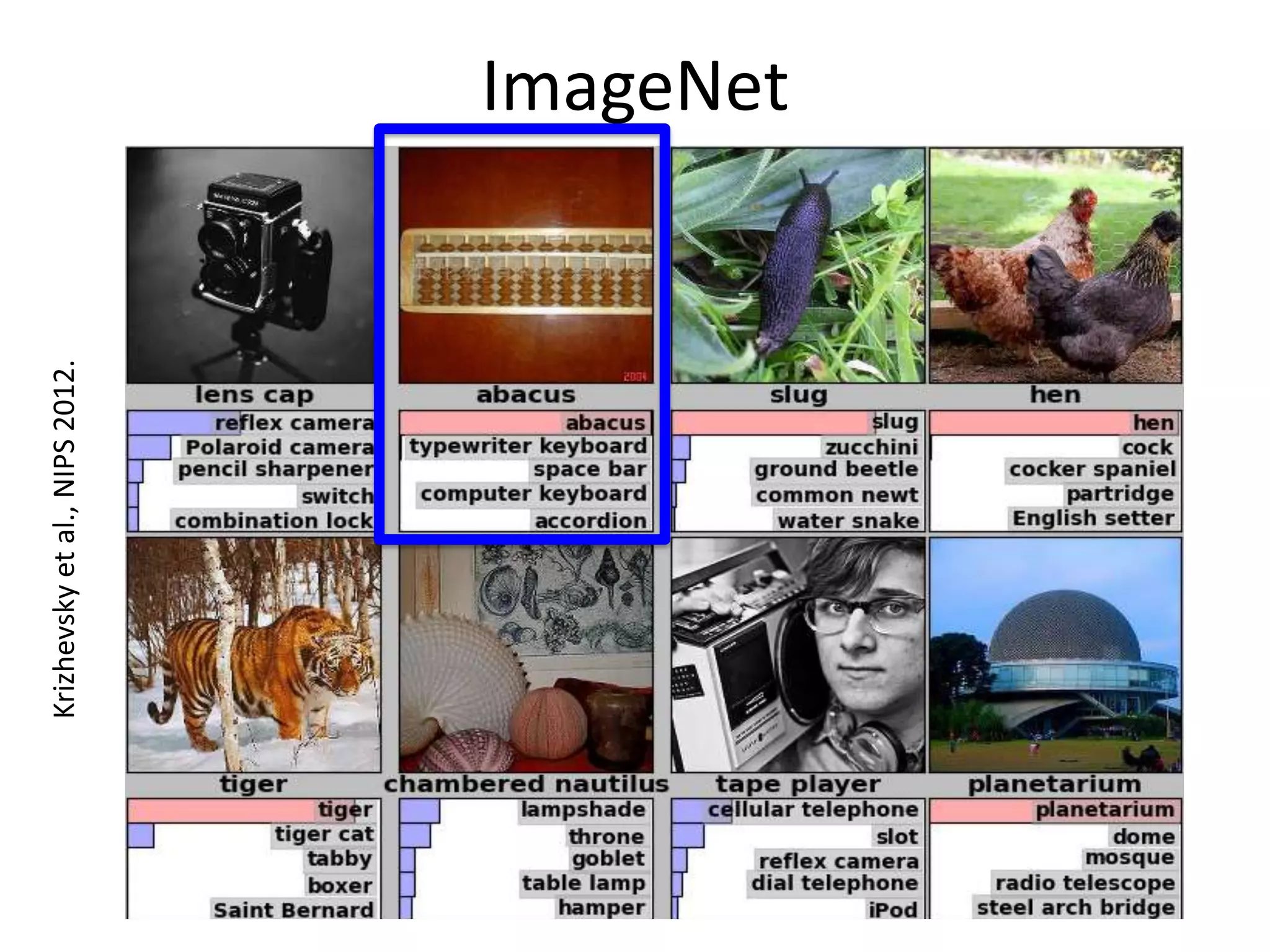

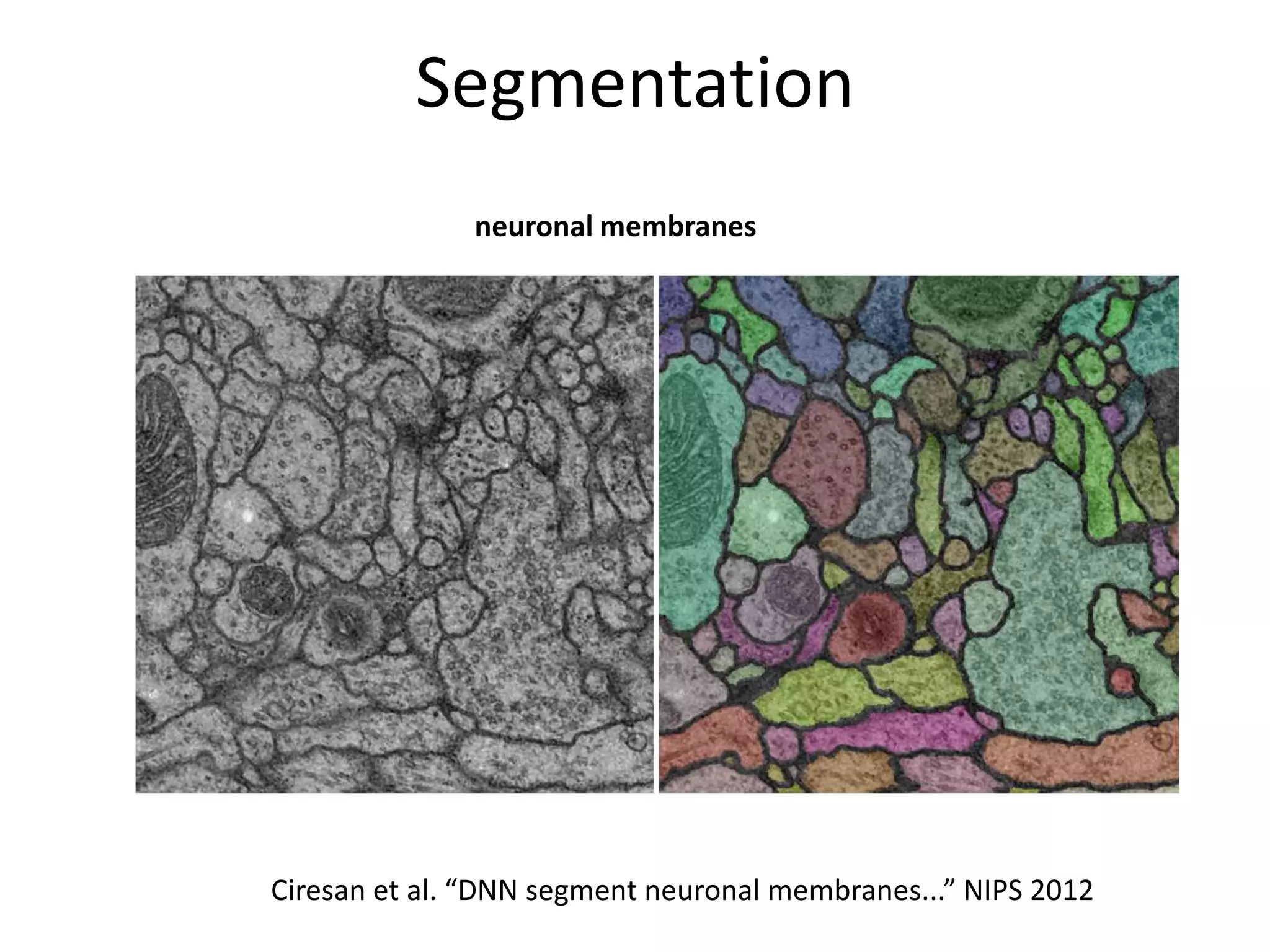

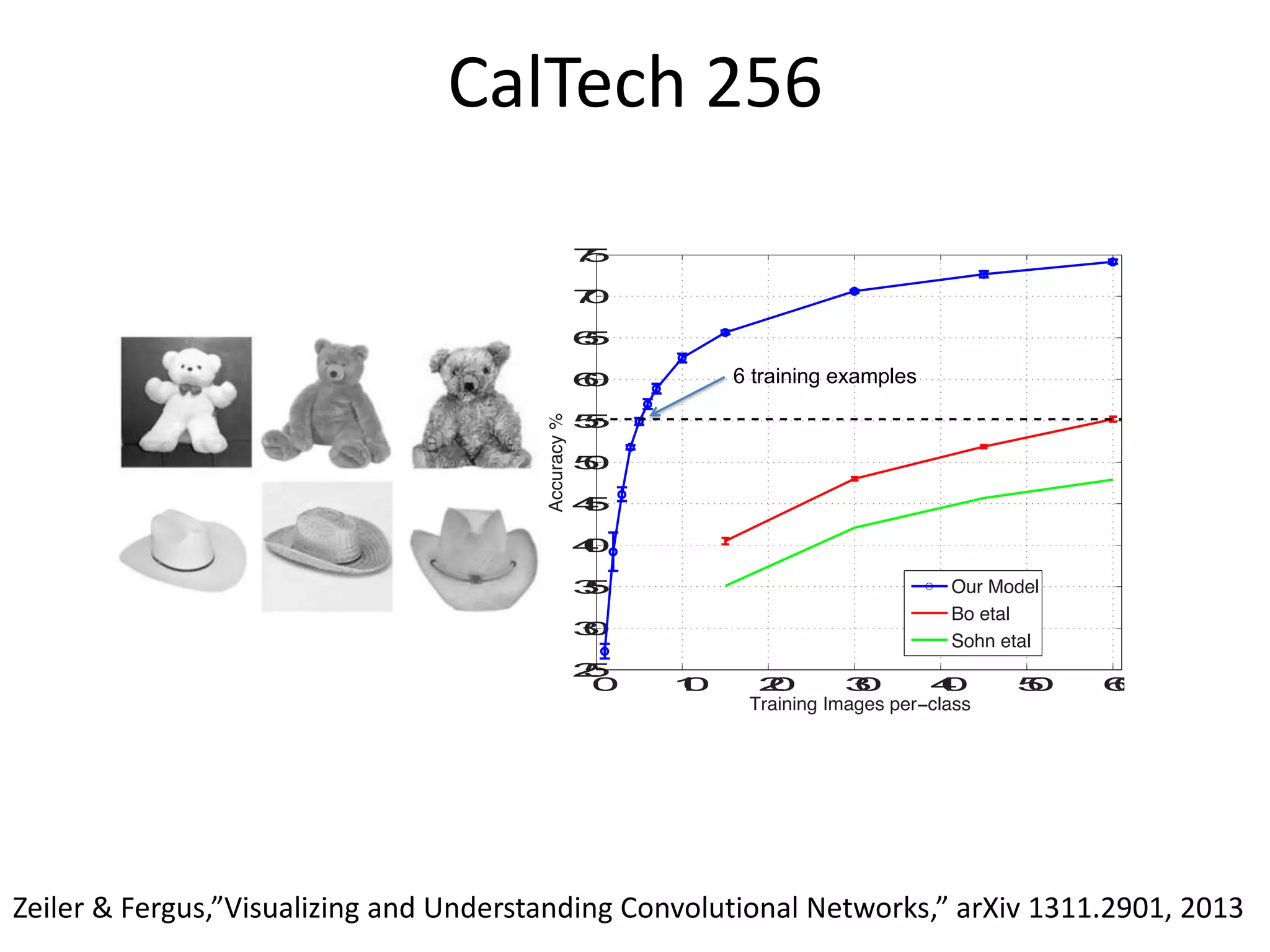

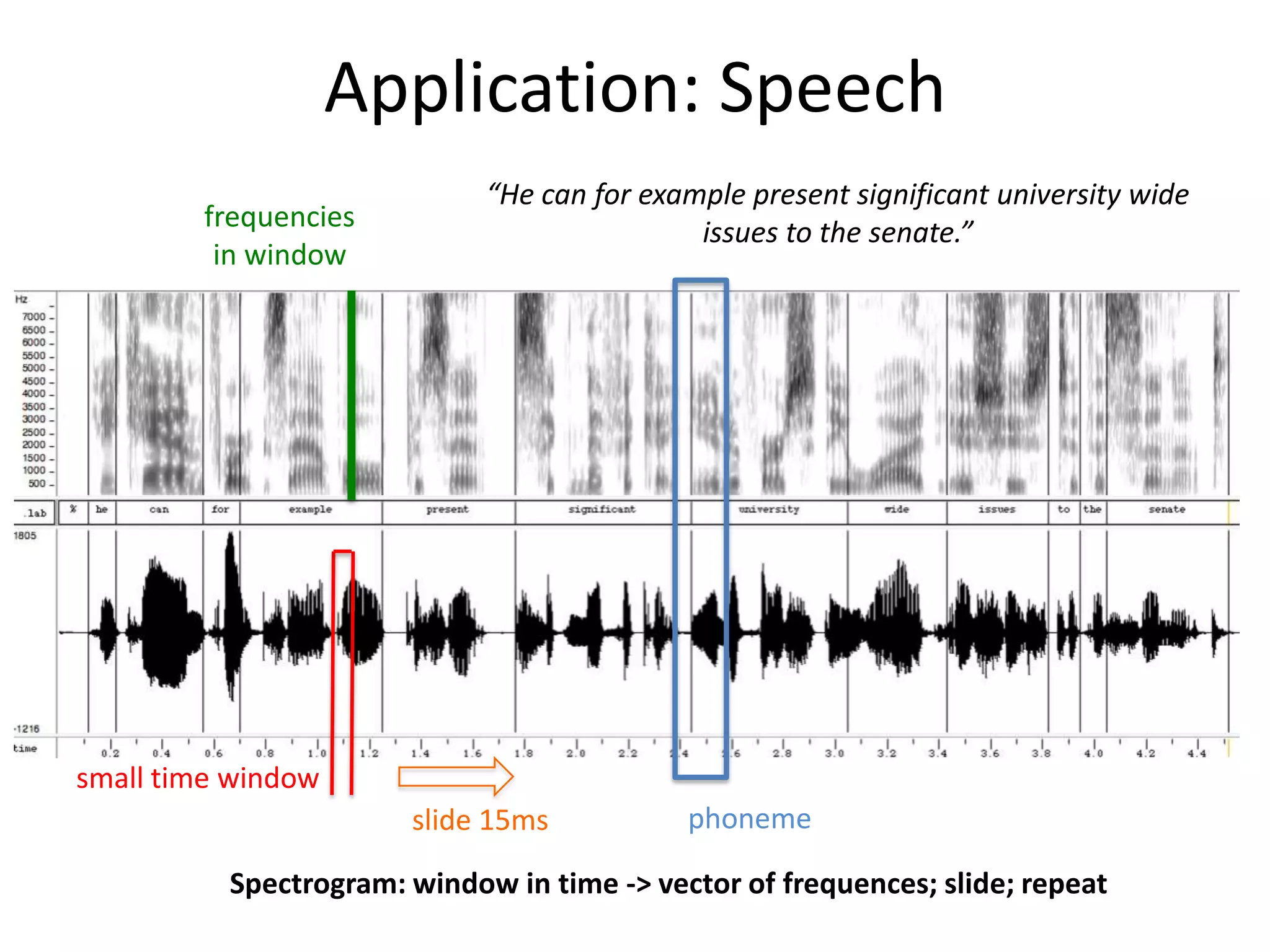

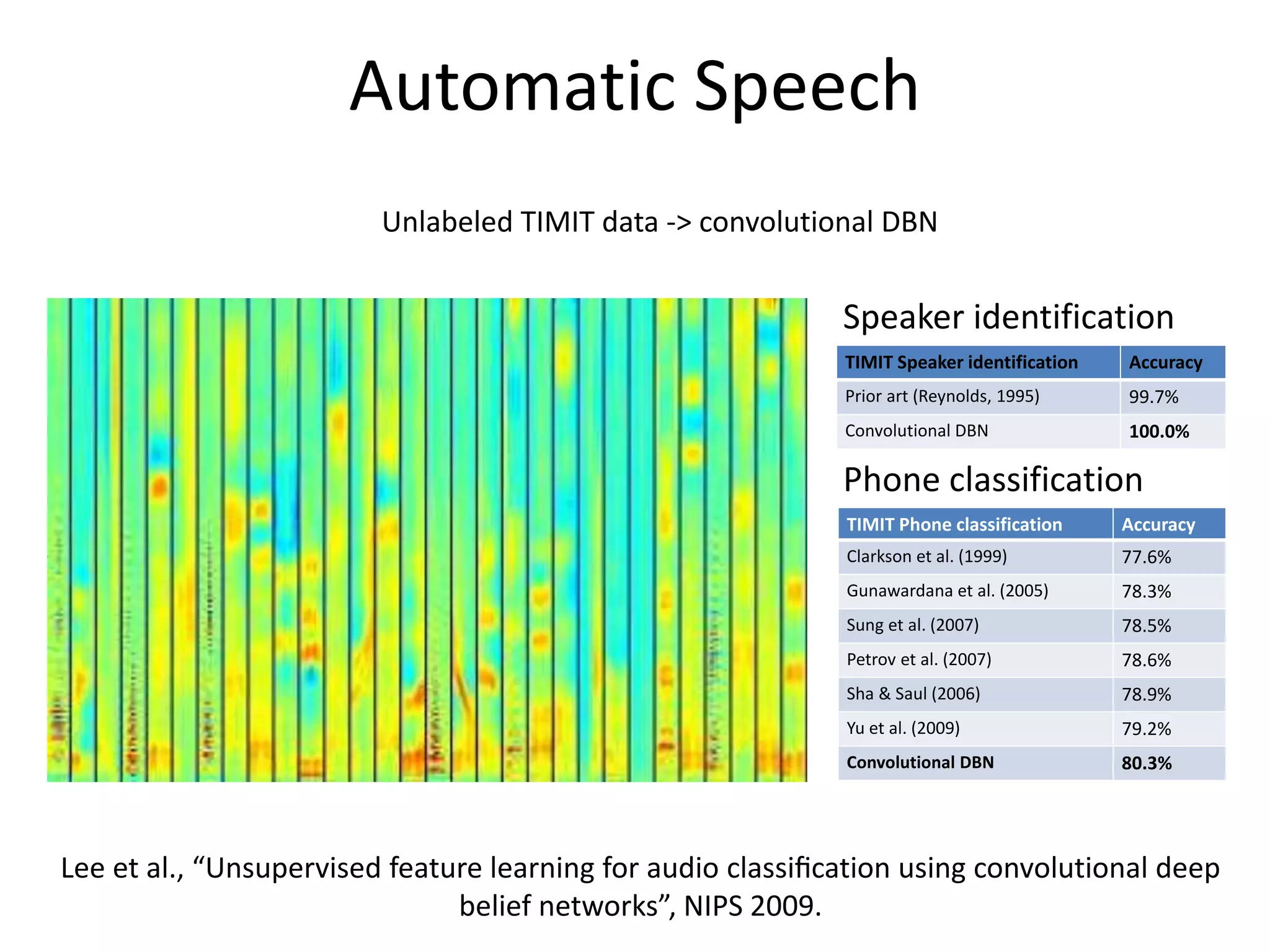

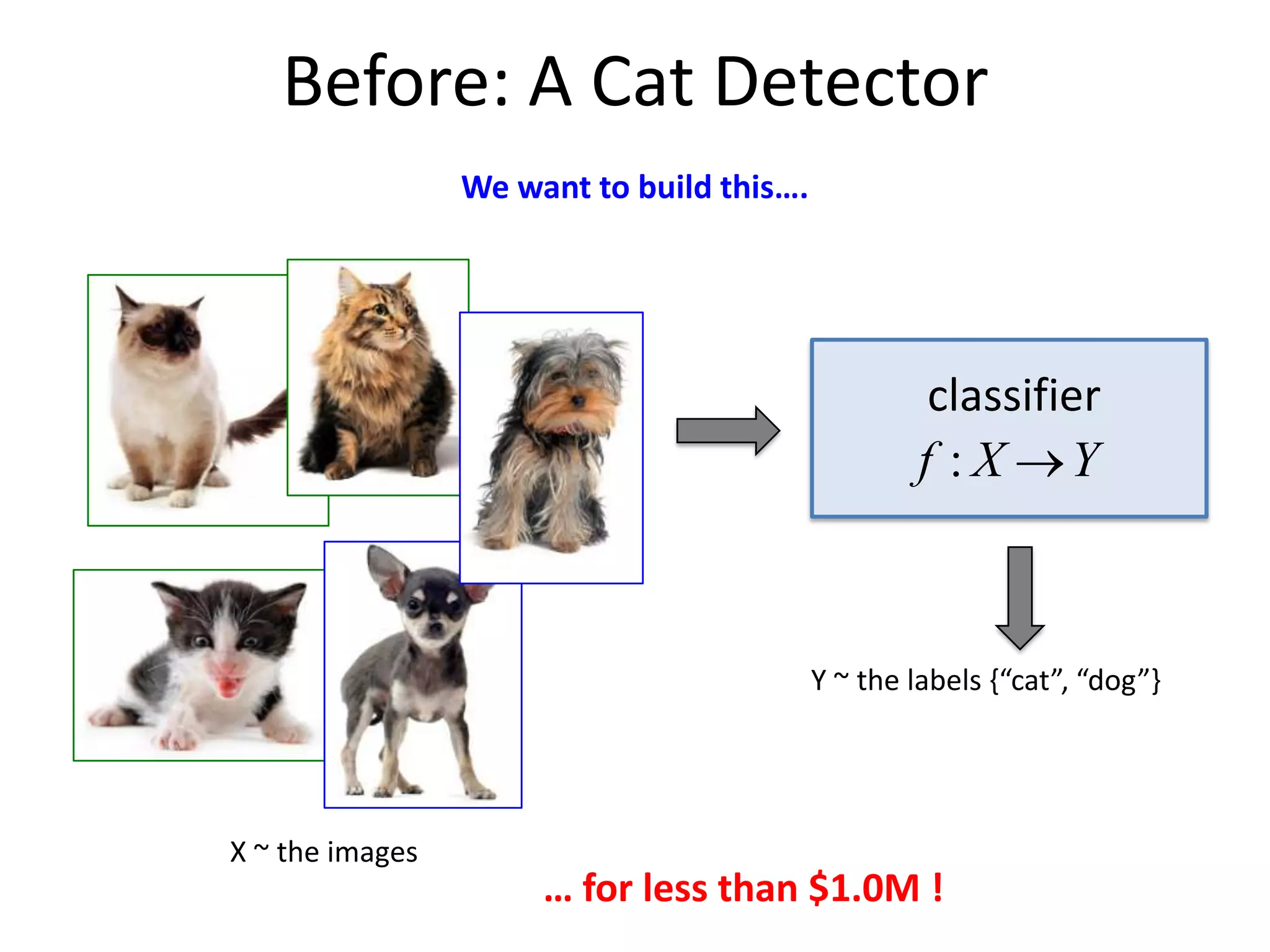

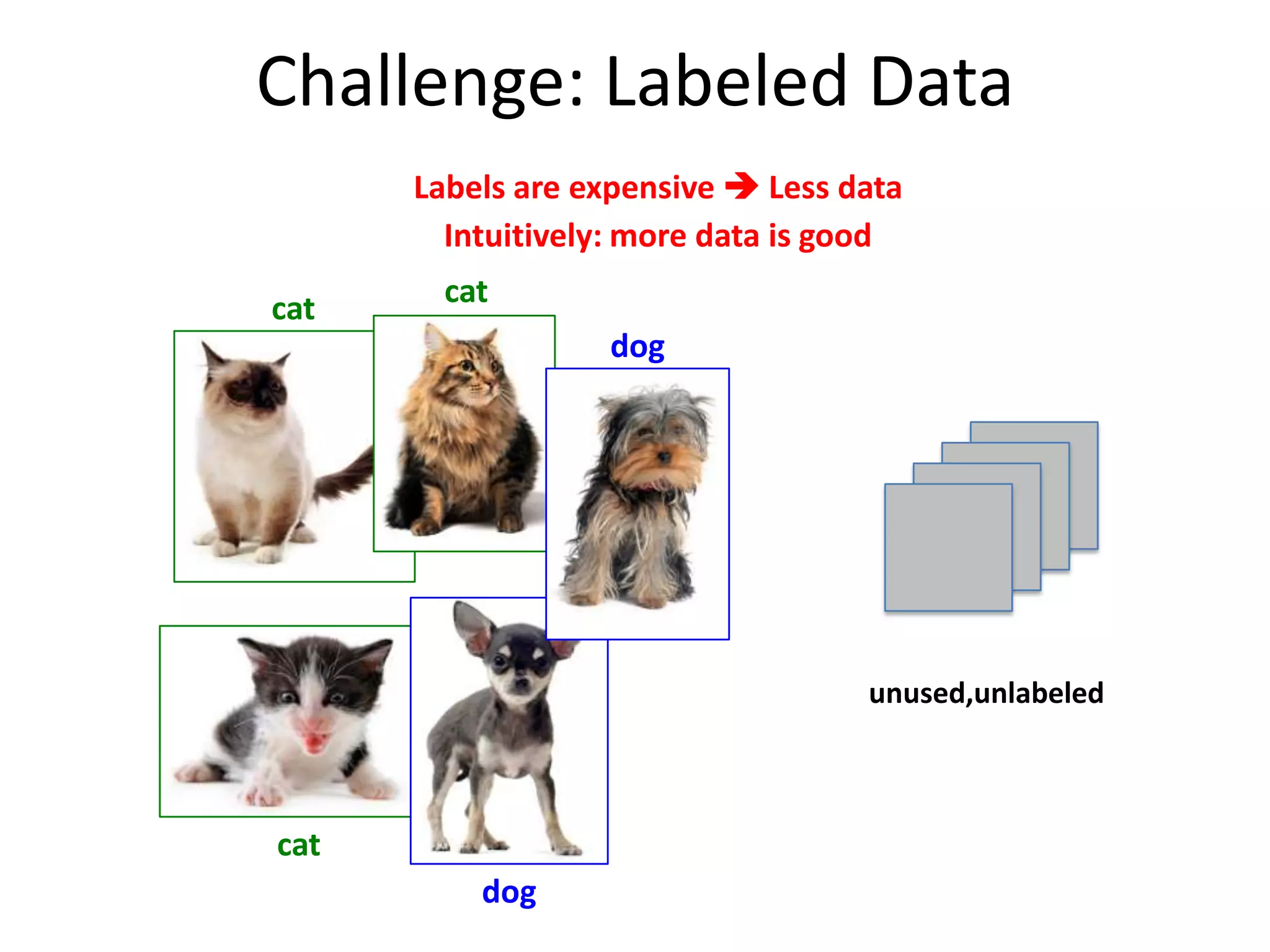

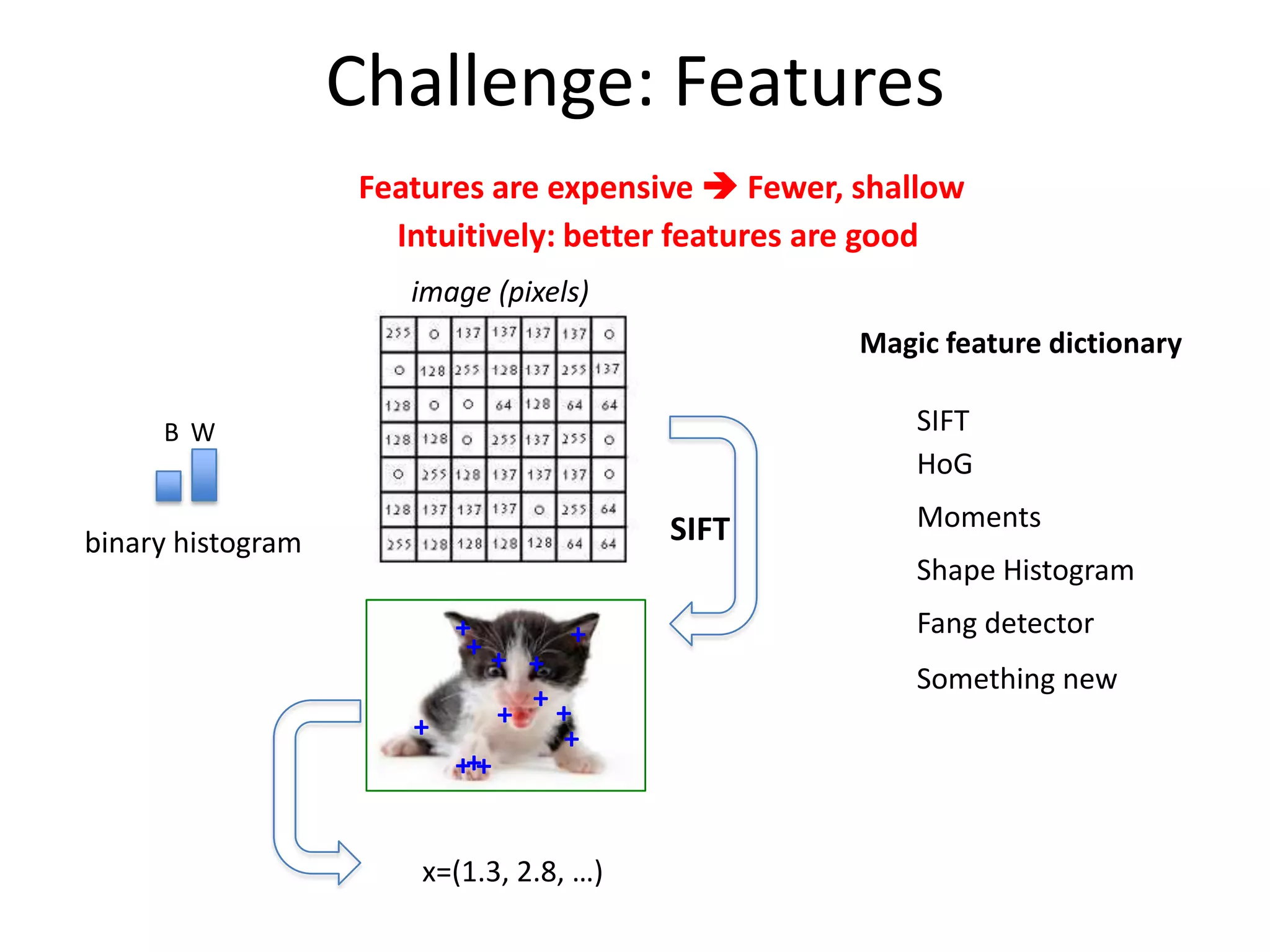

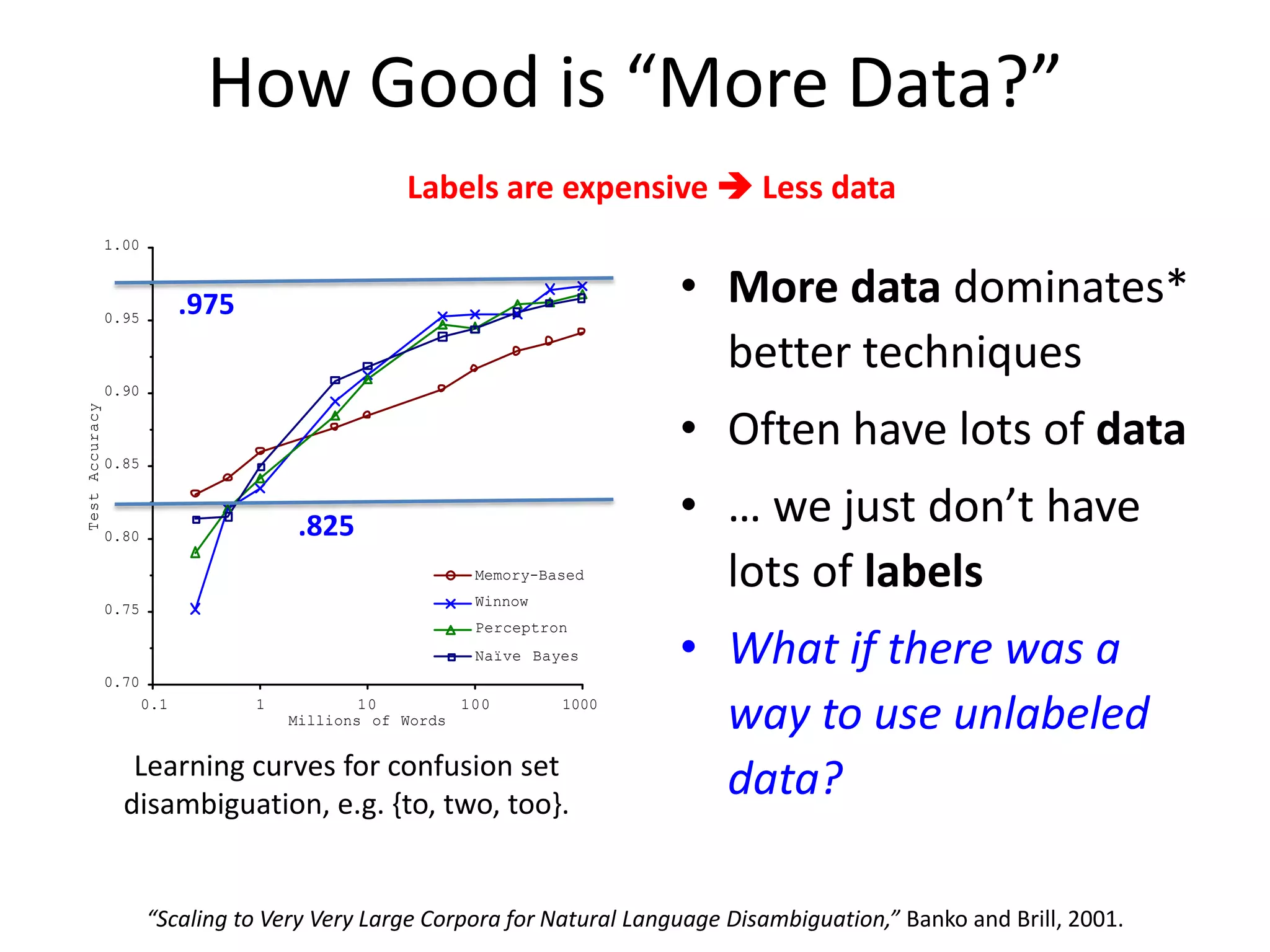

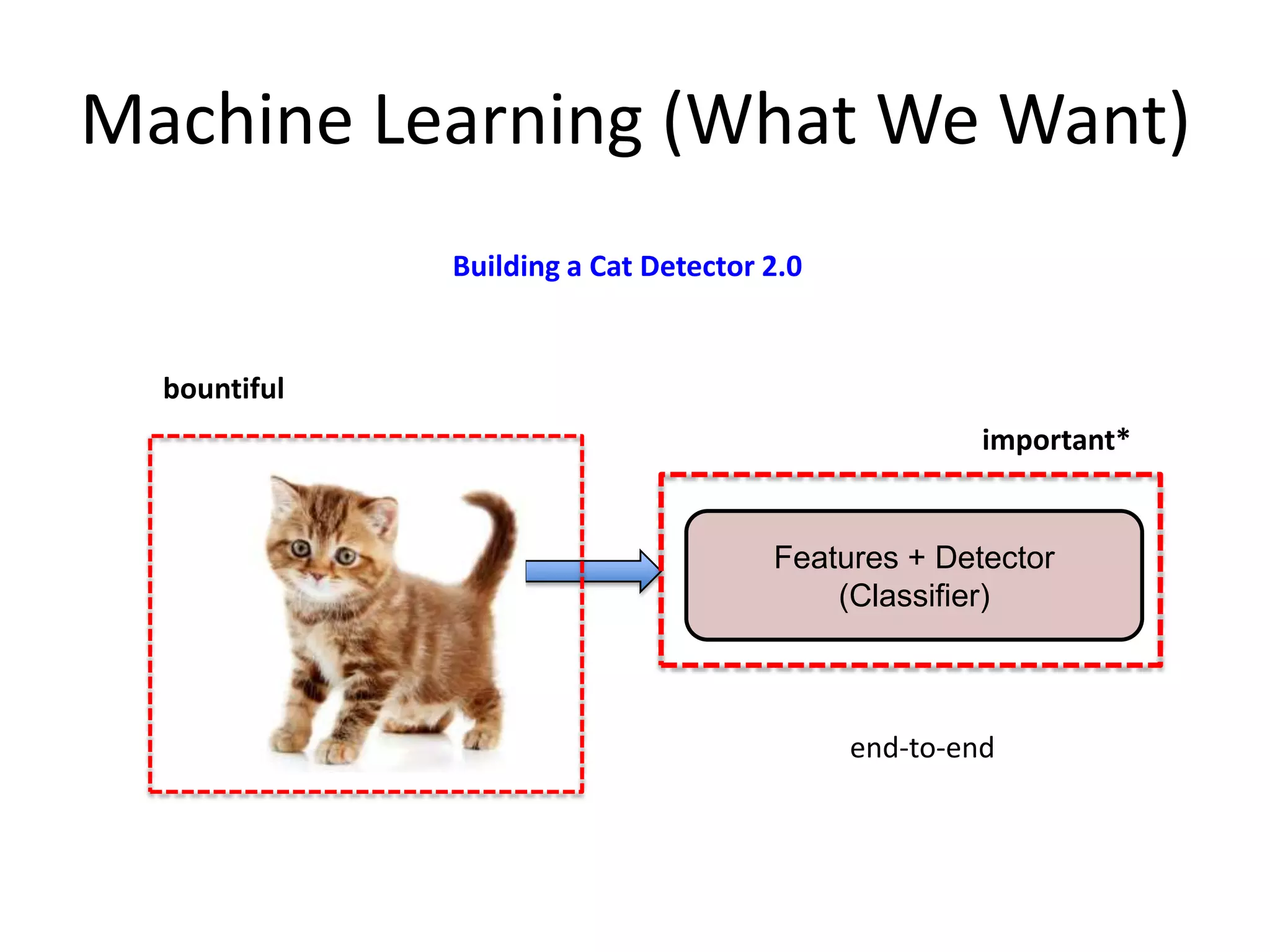

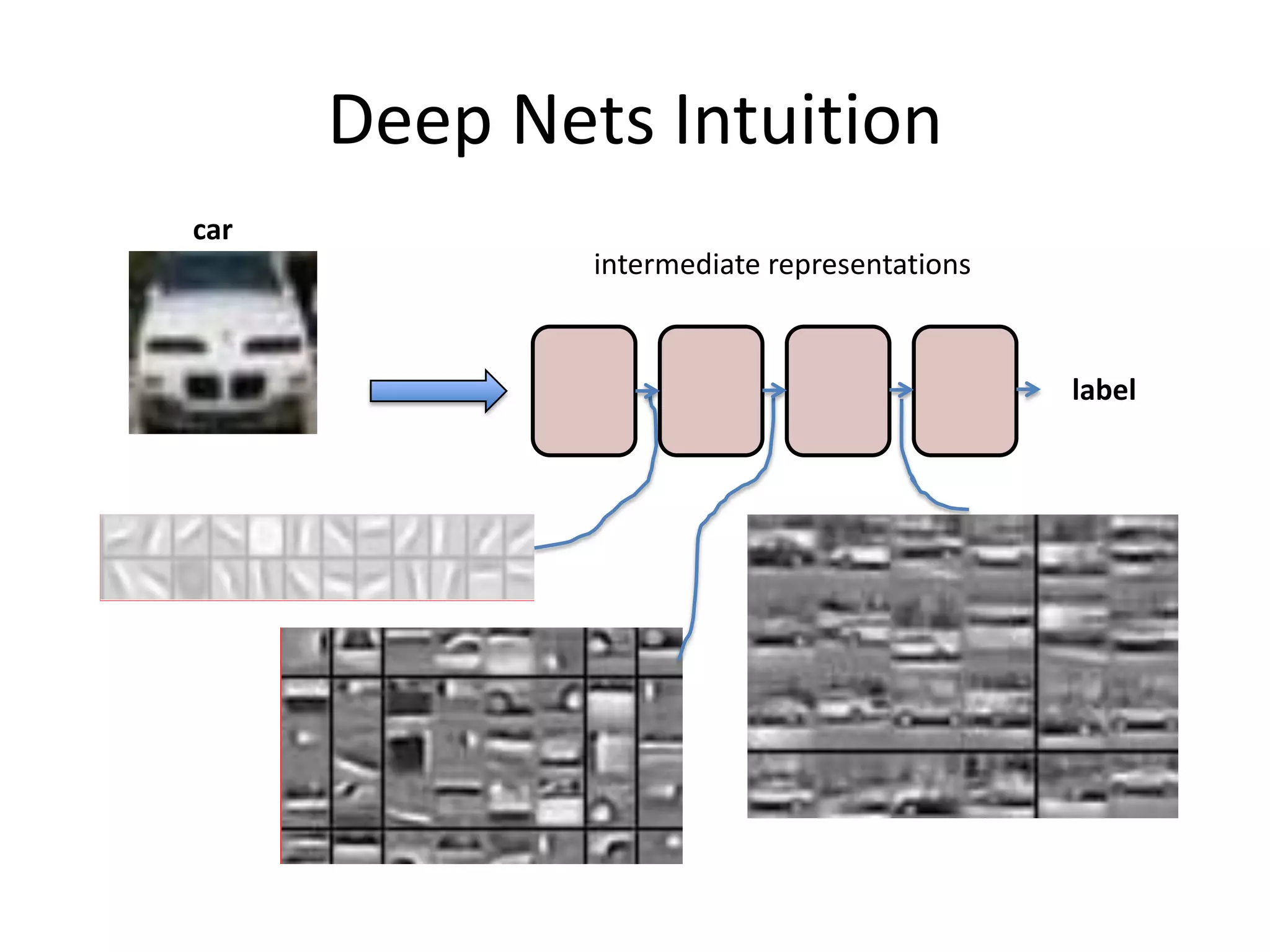

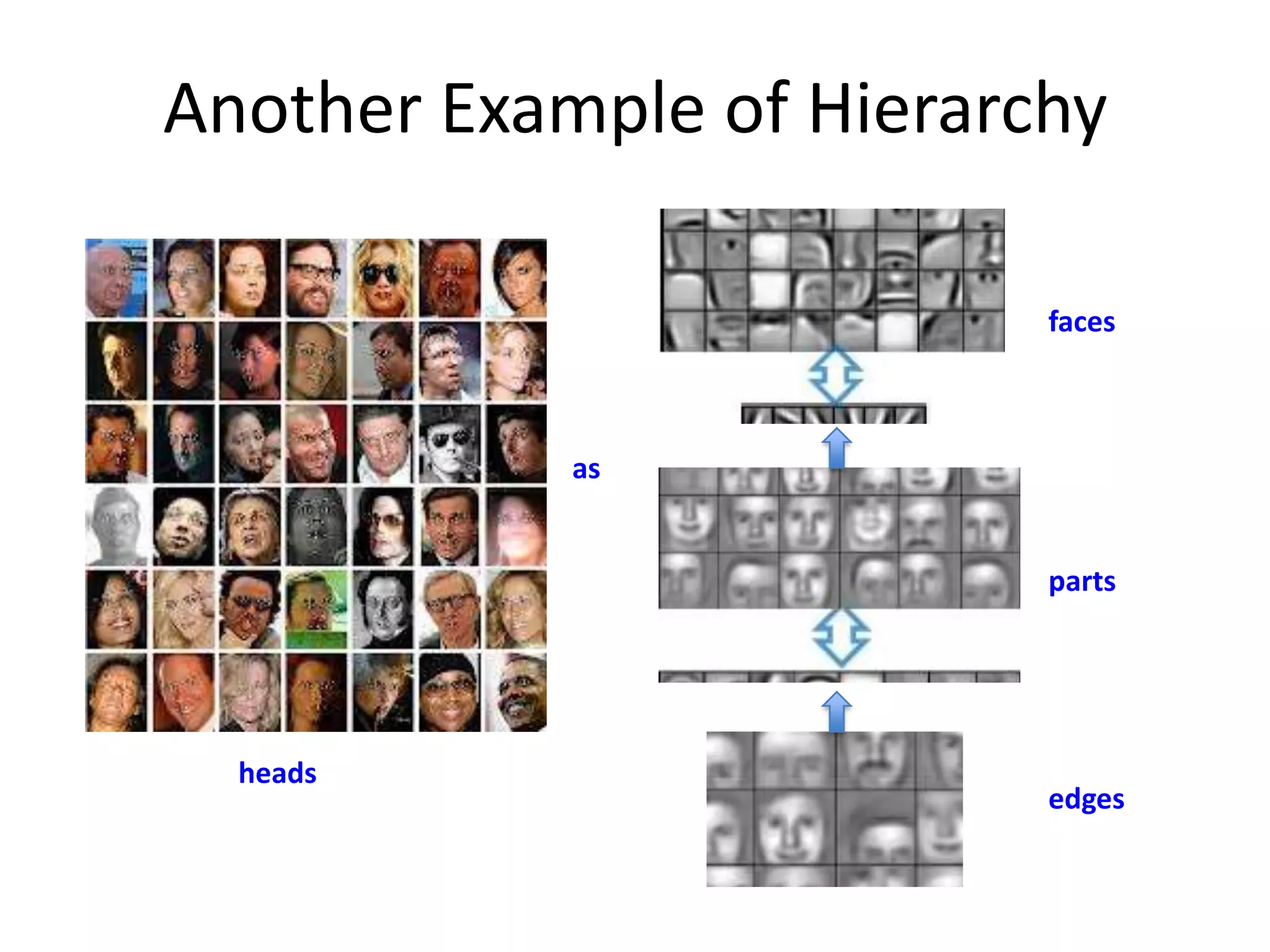

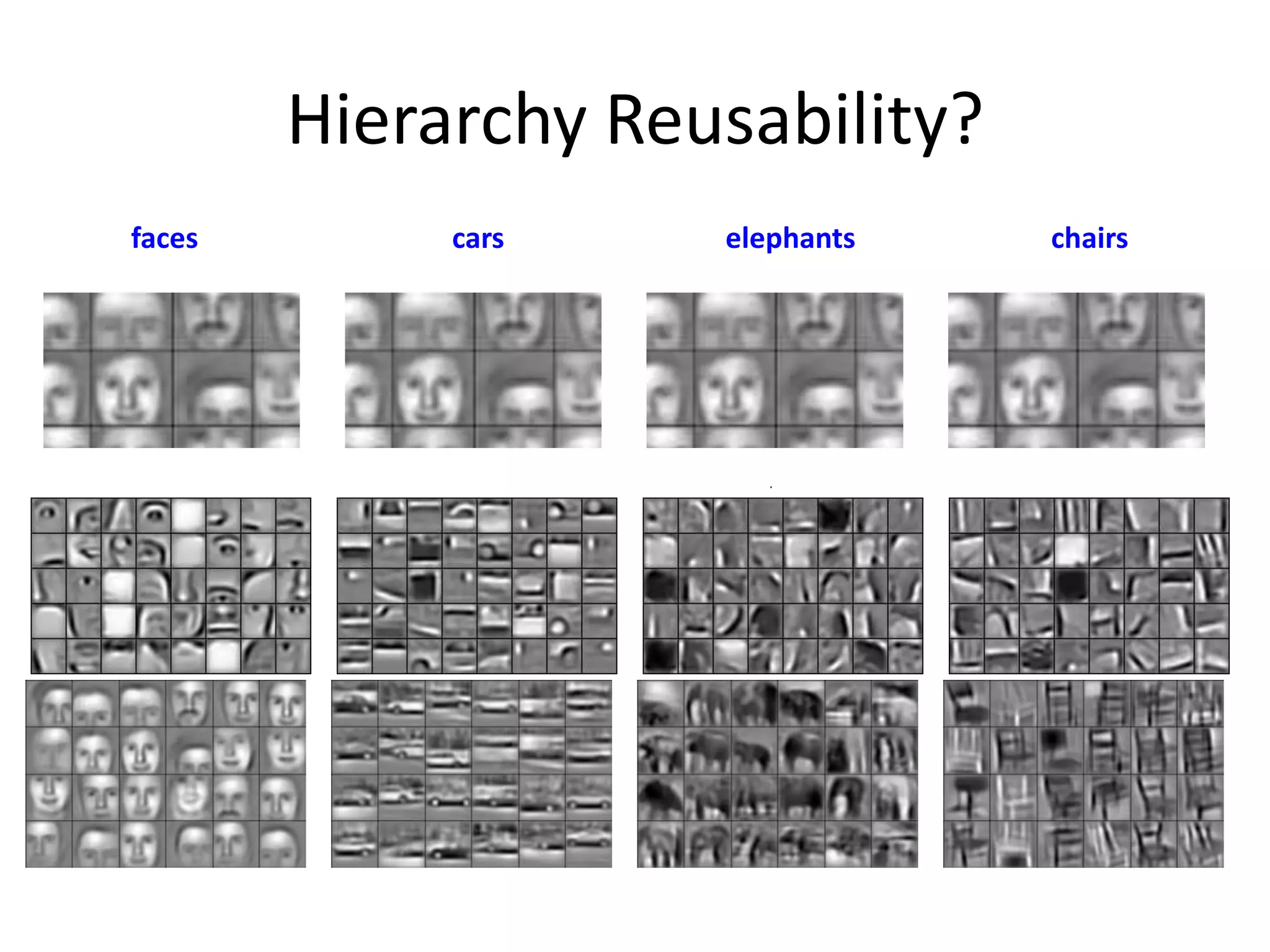

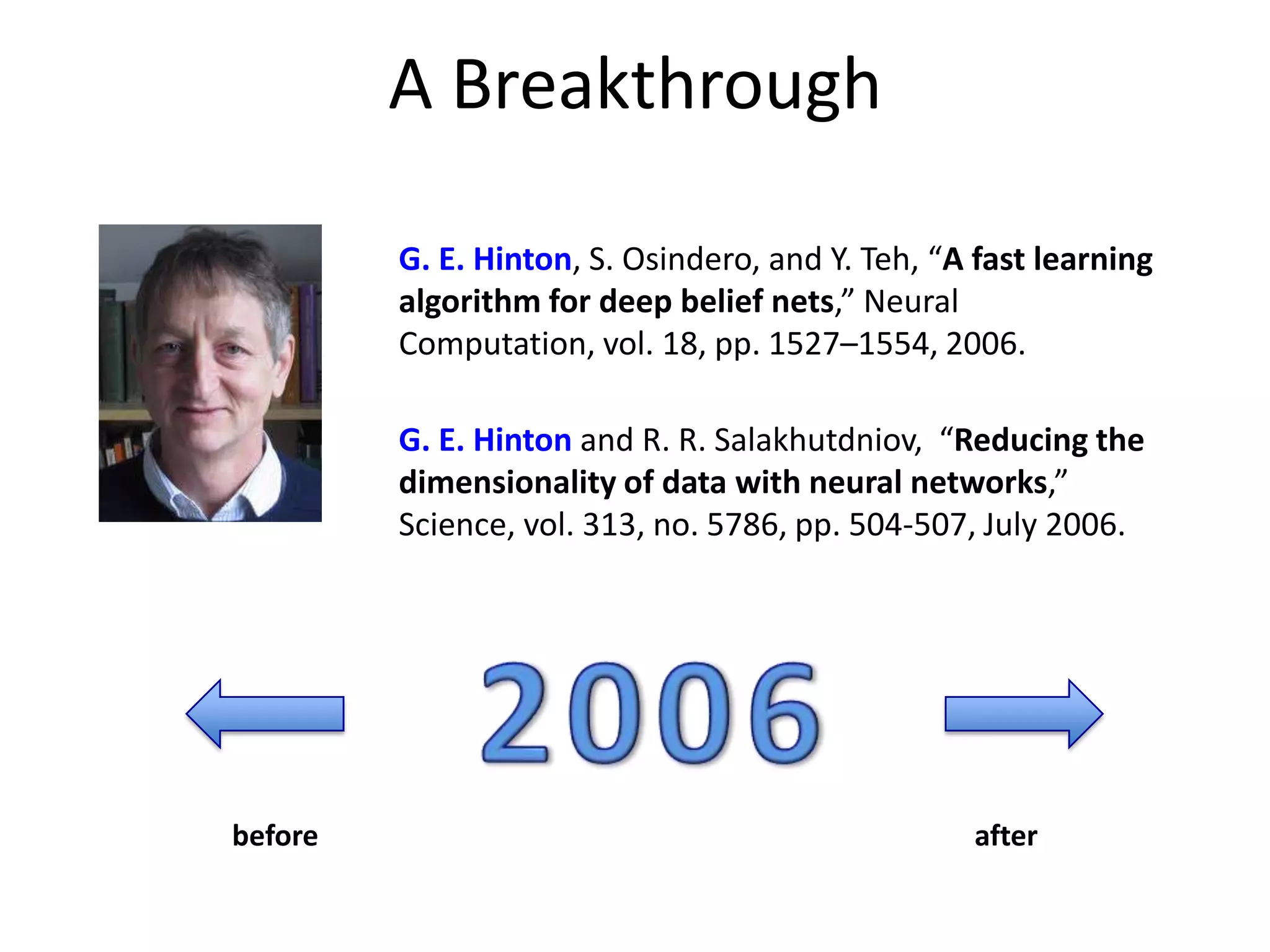

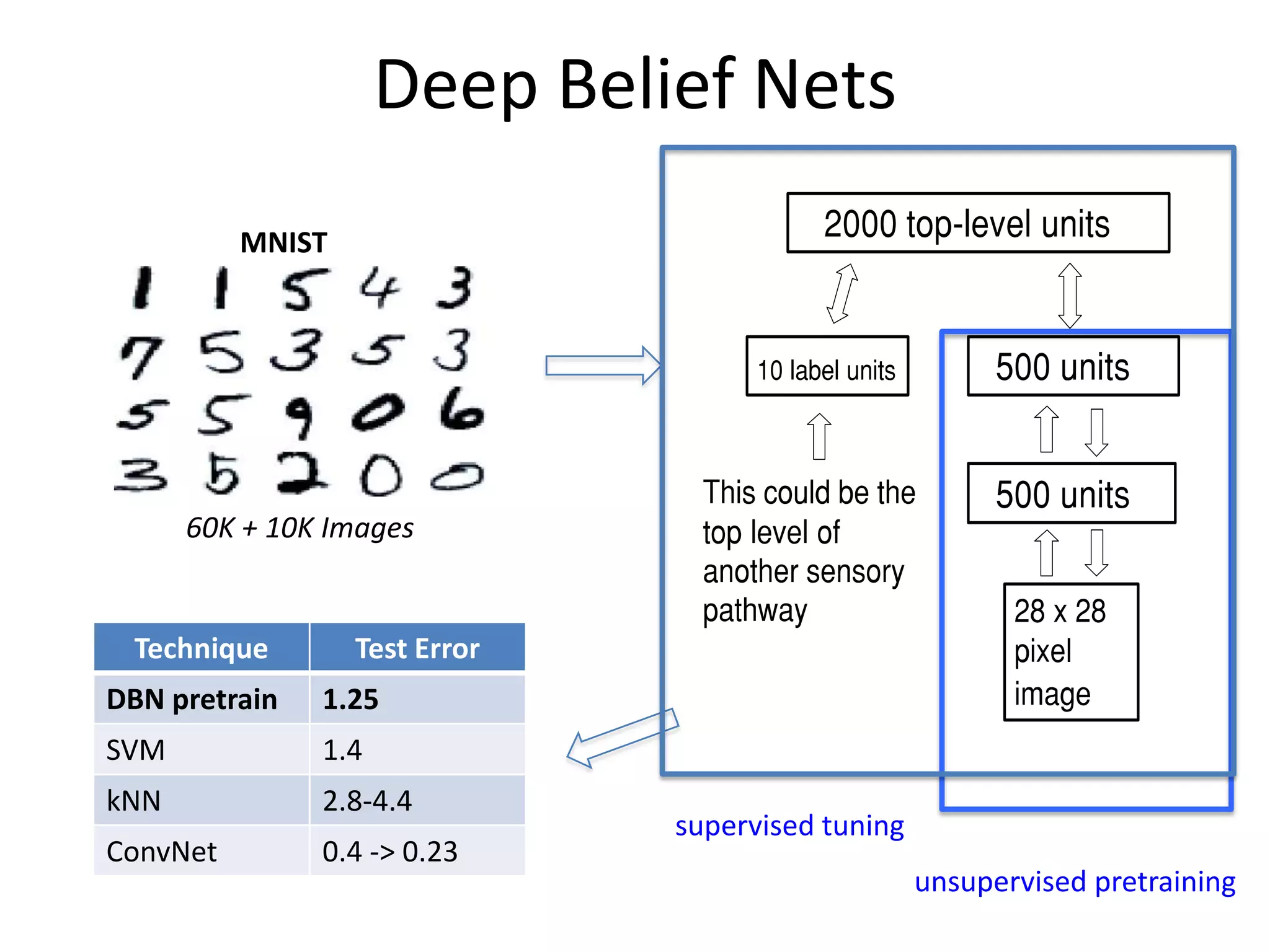

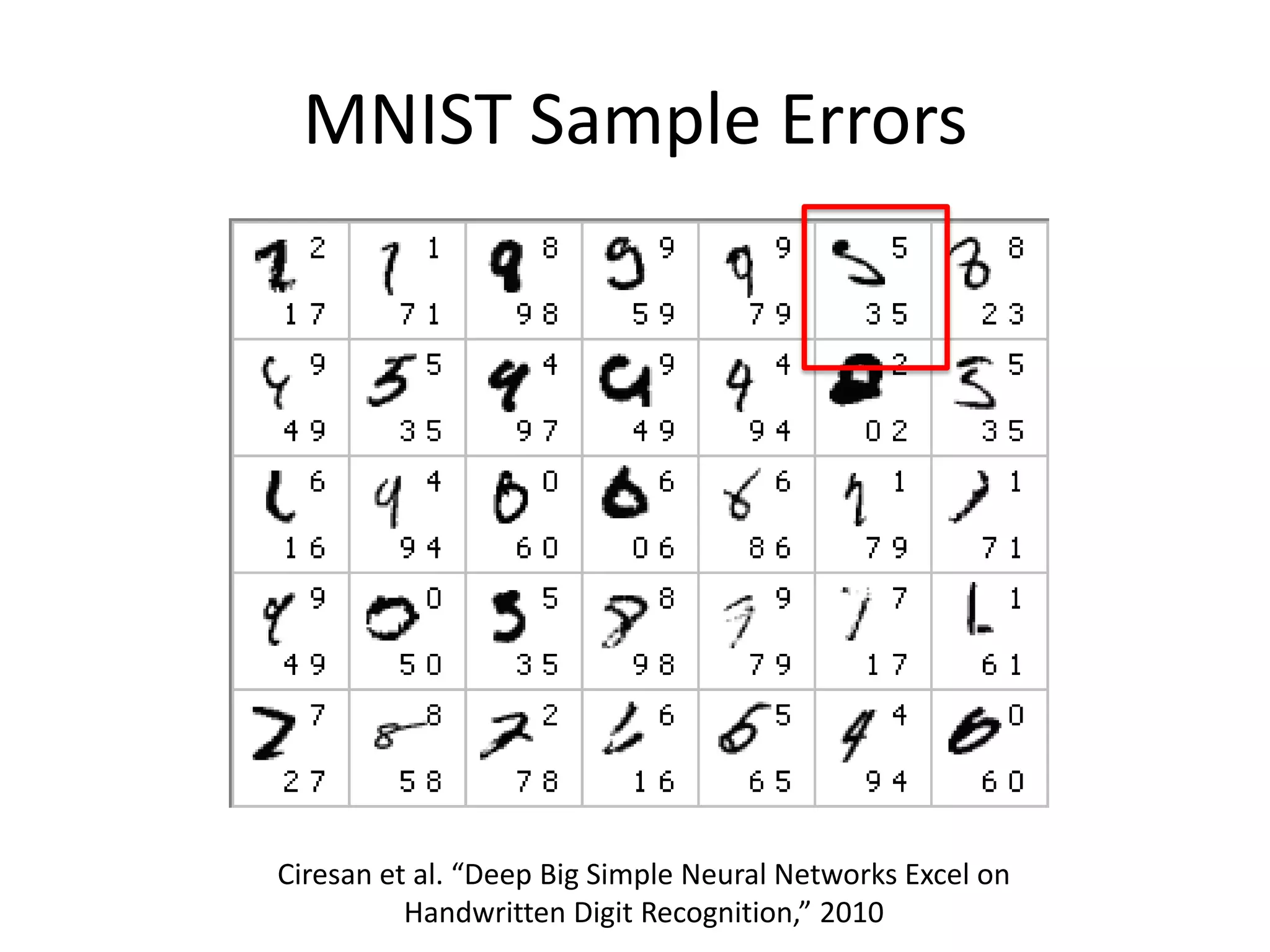

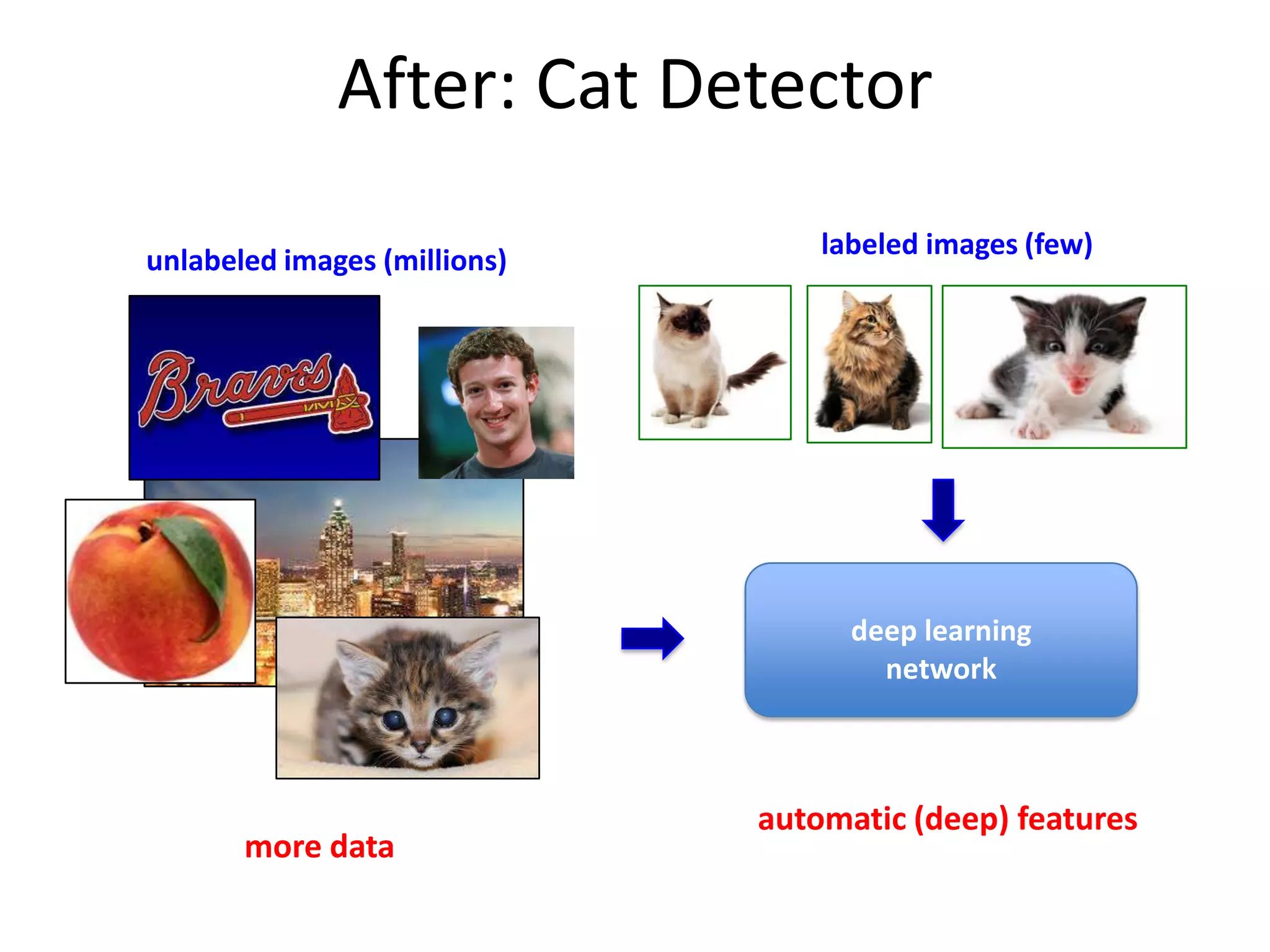

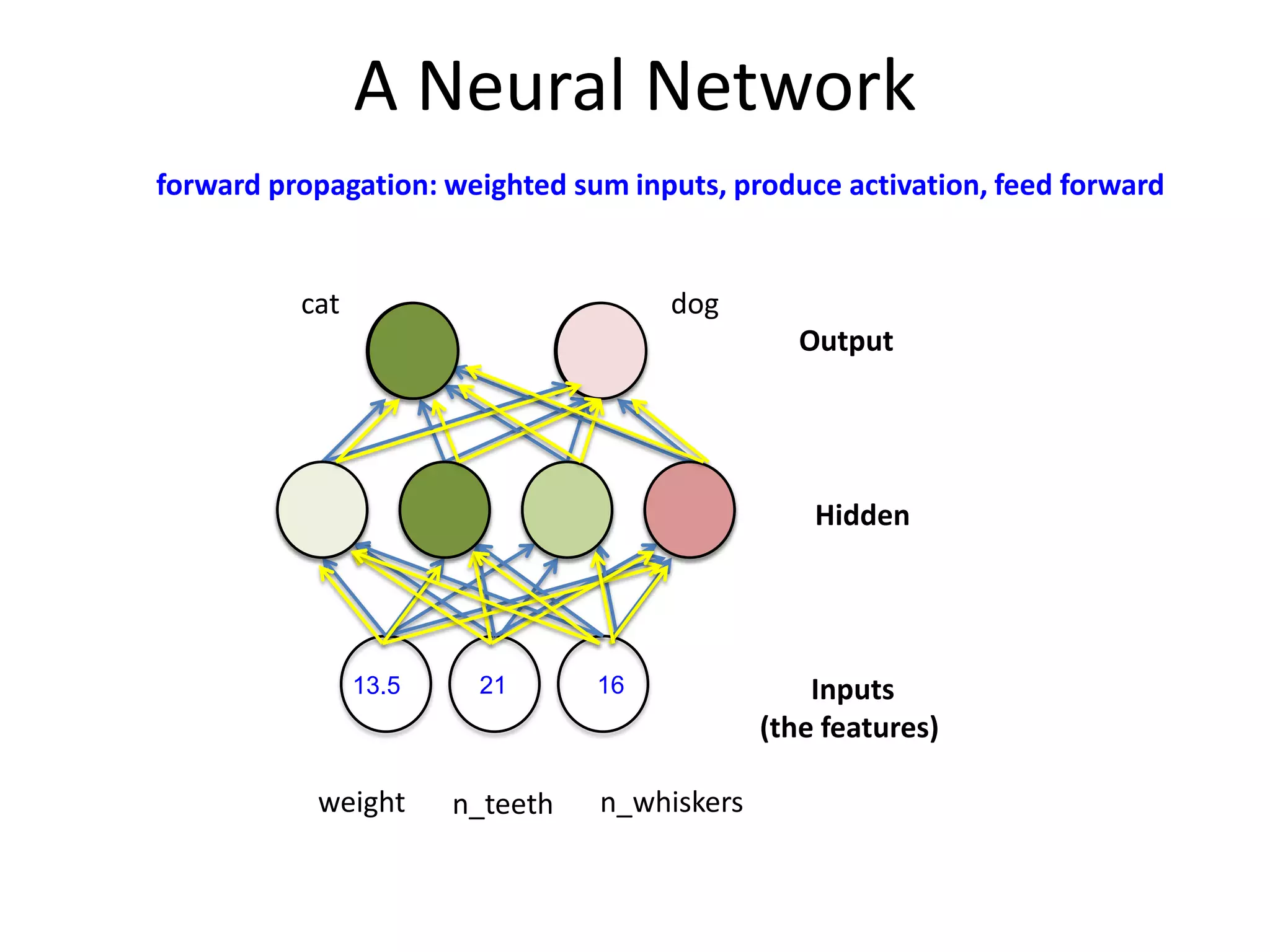

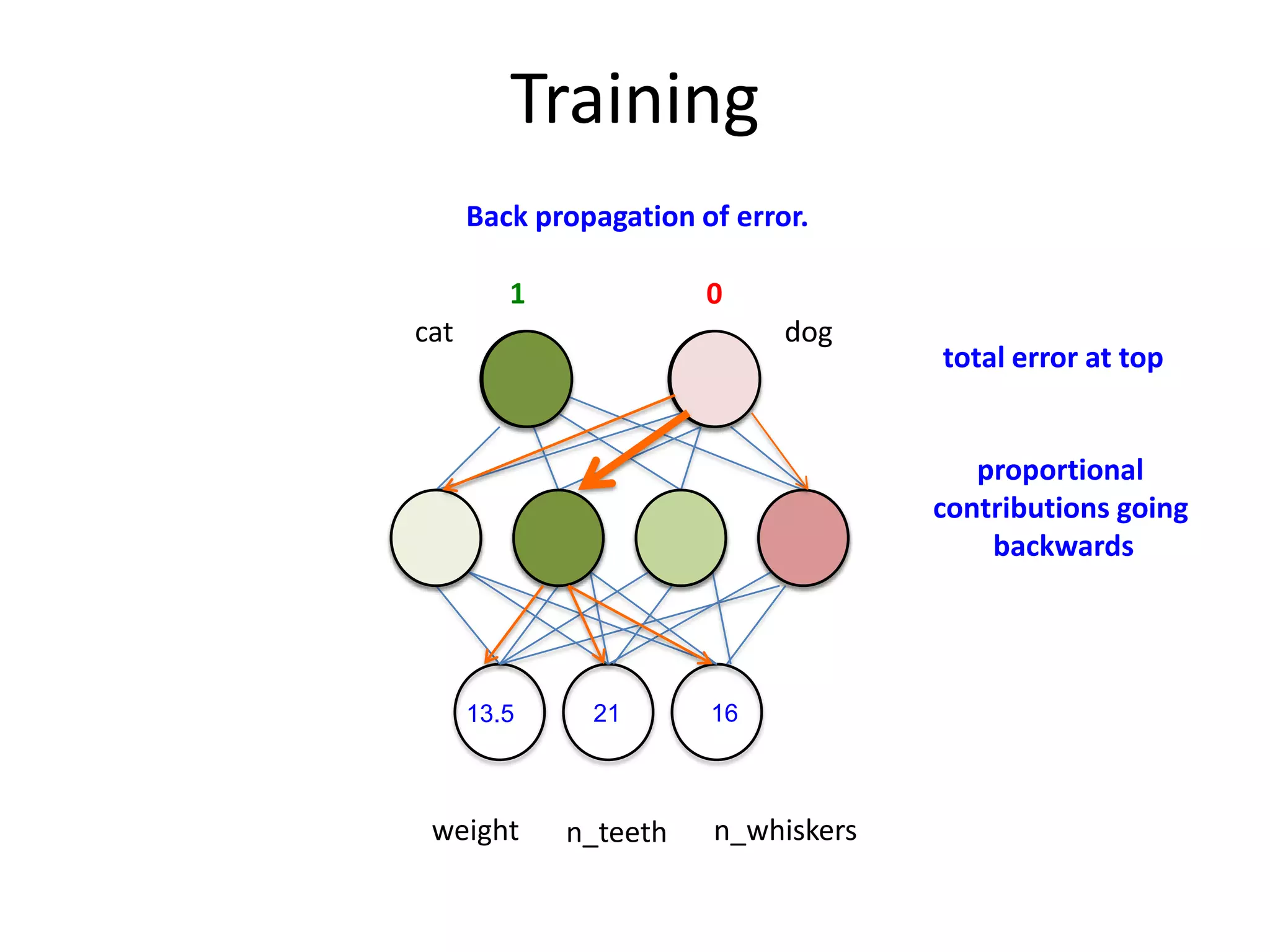

This document discusses the evolution and impact of deep learning in artificial intelligence, highlighting key figures and institutions involved in its development. It reviews the advantages of using unlabeled data to improve model performance and emphasizes the significance of feature learning and deep architectures. Additionally, it covers various applications of deep learning across fields such as speech recognition, image classification, and natural language processing.

![After training: network layer weights, shown as a matrix; we can view the weight matrix as an image … plus performance evaluation & logging](https://image.slidesharecdn.com/dsatl-deeplearning-alpharetta-slideshare-140108235833-phpapp01/75/Deep-Learning-for-Data-Scientists-Data-Science-ATL-Meetup-Presentation-2014-01-08-27-2048.jpg)
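The slide's point that a trained layer's weight matrix can be viewed as an image can be sketched in a few lines. This is a minimal sketch that rests on several assumptions. Each row of the matrix is taken to hold one hidden unit's incoming weights. The row length must be a multiple of a chosen tile width, such as the width of the input images. The helper names `weights_to_image`, `matrix_to_tiles`, and `to_pgm` are hypothetical and do not come from the presentation. Each unit's weights are min-max scaled to 8-bit grayscale, and the result is written as a plain-text PGM (P2) image, a format that many image viewers can open.

```python
from typing import List

Image = List[List[int]]


def weights_to_image(weights: List[float], width: int) -> Image:
    """Min-max scale one unit's incoming weights to 0..255 grayscale and
    reshape them row-major into a 2-D grid `width` pixels wide.
    Assumption: len(weights) is a positive multiple of `width`."""
    if width <= 0 or not weights or len(weights) % width != 0:
        raise ValueError("len(weights) must be a positive multiple of width")
    lo, hi = min(weights), max(weights)
    span = hi - lo
    if span == 0:
        # A constant weight vector has no contrast; render it as mid-grey.
        pixels = [128] * len(weights)
    else:
        pixels = [round(255 * (w - lo) / span) for w in weights]
    # Slice the flat pixel list into rows of `width` pixels.
    return [pixels[i:i + width] for i in range(0, len(pixels), width)]


def matrix_to_tiles(matrix: List[List[float]], width: int) -> List[Image]:
    """Render every row of a layer's weight matrix (one row per hidden unit,
    by assumption) as its own small grayscale tile."""
    return [weights_to_image(row, width) for row in matrix]


def to_pgm(image: Image) -> str:
    """Serialize a grayscale image as a plain-text PGM (P2) file body."""
    height = len(image)
    width = len(image[0]) if height else 0
    lines = ["P2", f"{width} {height}", "255"]
    lines += [" ".join(str(p) for p in row) for row in image]
    return "\n".join(lines) + "\n"
```

As an illustration, consider a hypothetical 784-input layer trained on 28-pixel-wide images. For such a layer, `to_pgm(matrix_to_tiles(layer, width=28)[0])` would render the first unit's weights as a 28×28 image. The scaling is applied per unit, so each tile shows that unit's relative weight pattern rather than absolute magnitudes that could be compared across units.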