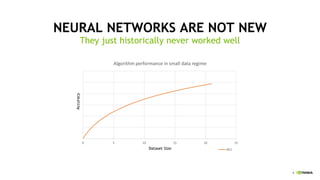

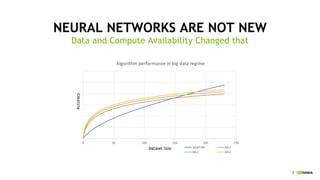

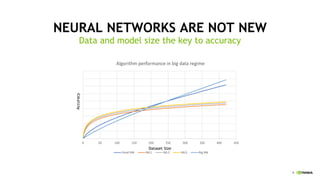

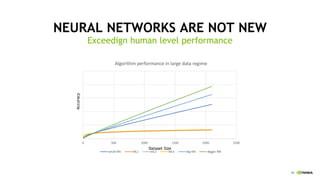

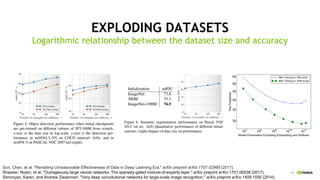

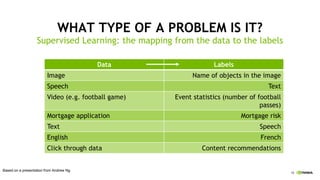

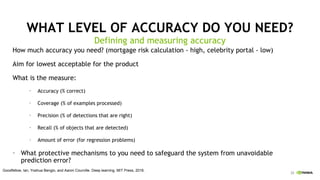

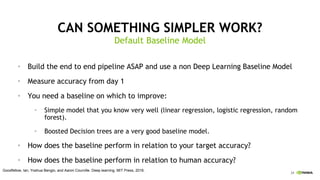

The document traces the evolution of deep learning and its growing effectiveness, emphasizing the importance of large datasets and greater compute power in improving neural network performance. It outlines the criteria for a successful AI project, including access to sufficient labeled data and an accuracy level that is acceptable for the intended use. It also promotes NVIDIA's initiatives in deep learning education and in applying deep learning across various industries.