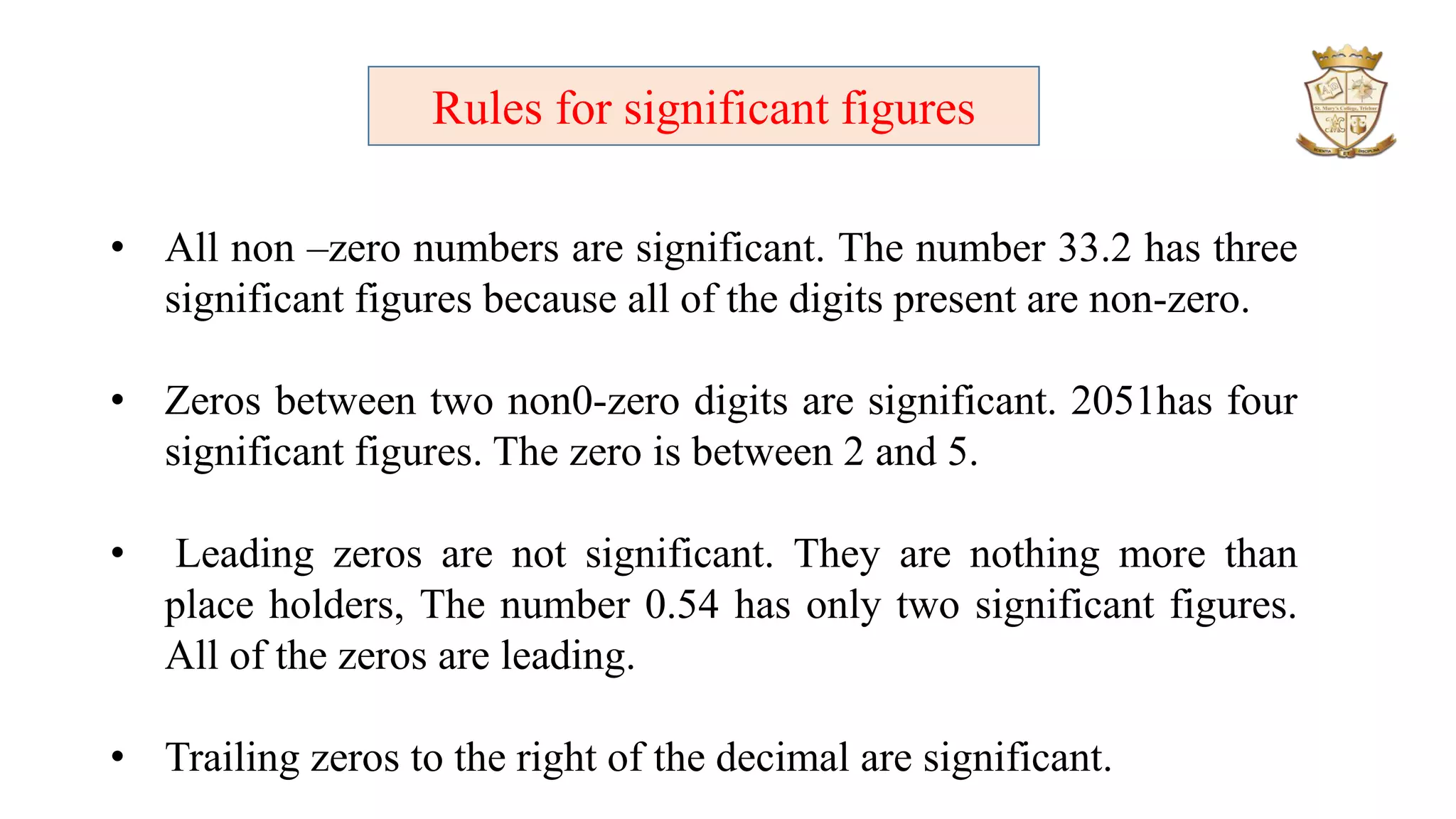

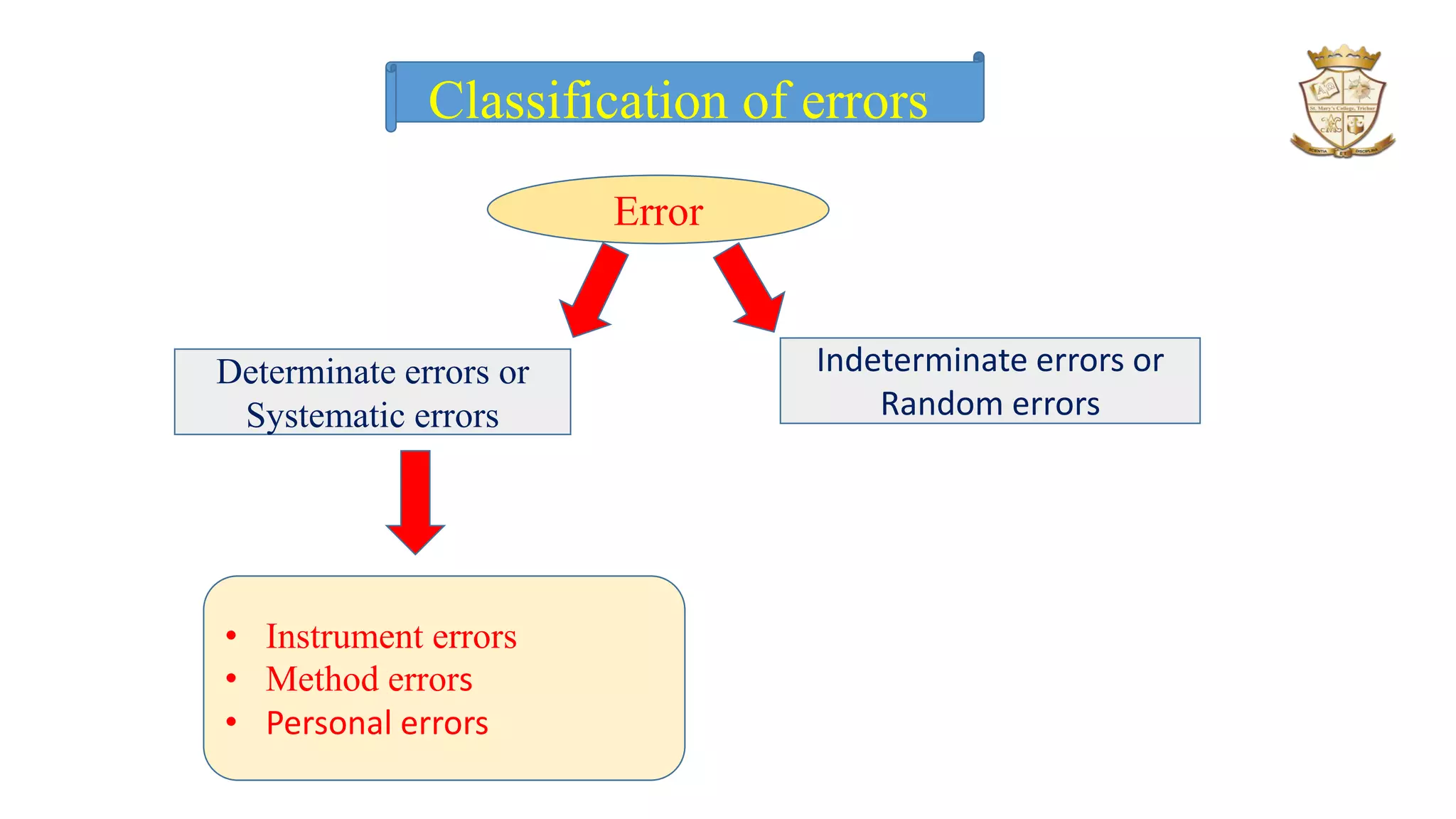

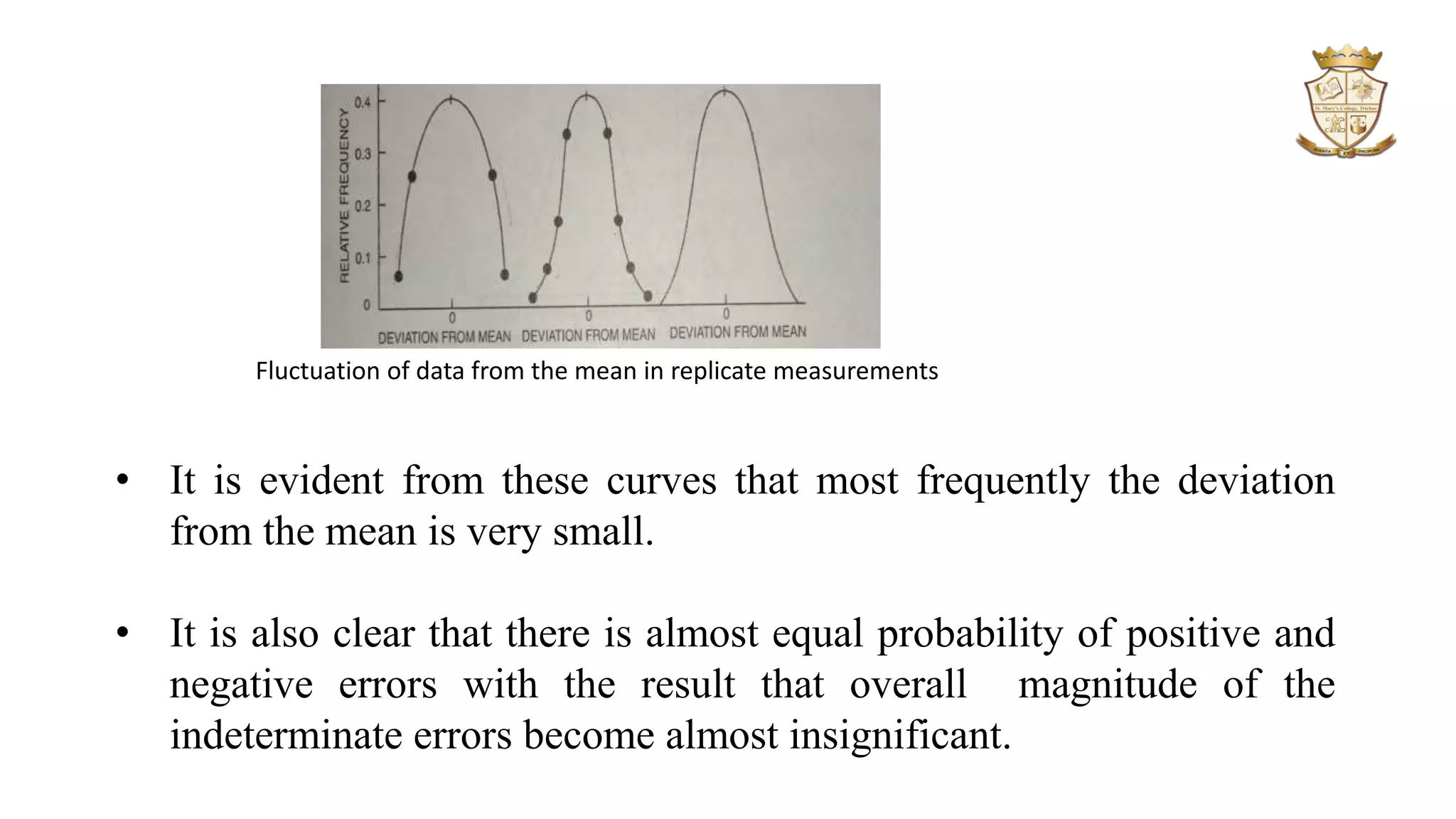

The document covers significant figures and errors in analytical chemistry. It defines and classifies significant figures and the two types of error, determinate and indeterminate. It explains how errors arise from measuring instruments, methods, and personal judgment, and describes how such errors can be identified and corrected. It also distinguishes precision from accuracy, describing how each is quantified in measurements and how both bear on the statistical analysis of results.
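
As a rough illustration of how accuracy and precision might be quantified, the sketch below assumes the common textbook measures: absolute and relative error of the mean for accuracy, and sample standard deviation and relative standard deviation for precision. The summary does not say which measures the document itself uses, so the function name `accuracy_and_precision`, its parameters, and the replicate values are hypothetical and chosen only for illustration.

```python
from statistics import mean, stdev


def accuracy_and_precision(measurements, true_value):
    """Summarize replicate measurements against an accepted true value.

    Assumption: accuracy is expressed as the error of the mean, and
    precision as the sample standard deviation (n - 1 denominator).
    """
    avg = mean(measurements)

    # Accuracy: how far the mean lies from the accepted value.
    absolute_error = avg - true_value
    relative_error_pct = 100 * absolute_error / true_value

    # Precision: how closely the replicates agree with one another.
    std_dev = stdev(measurements)
    rsd_pct = 100 * std_dev / avg

    return {
        "mean": avg,
        "absolute_error": absolute_error,
        "relative_error_pct": relative_error_pct,
        "std_dev": std_dev,
        "rsd_pct": rsd_pct,
    }


if __name__ == "__main__":
    # Illustrative replicate values only, not data from the document.
    replicates = [10.1, 10.2, 10.3]
    summary = accuracy_and_precision(replicates, true_value=10.0)
    for name, value in summary.items():
        print(f"{name}: {value:.4f}")
```

A tight spread with a large error of the mean would suggest a determinate (systematic) error, whereas a wide spread centered on the true value would point to indeterminate (random) error.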