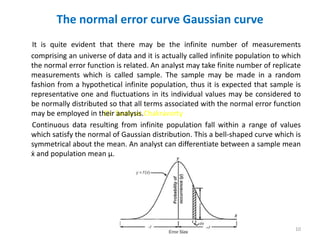

The document discusses analytical chemistry methods and concepts related to errors, precision, accuracy, and statistical analysis of data. It defines types of errors, describes methods to minimize errors, and explains concepts like absolute and relative error, precision, accuracy, and statistical measures including mean, median, mode, standard deviation, and t-tests and F-tests. It also provides an example of calculating average deviation and standard deviation from a set of concentration data and discusses the normal distribution curve.