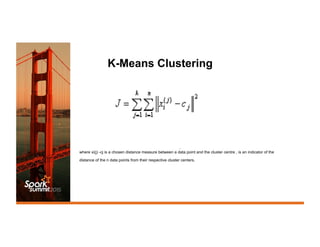

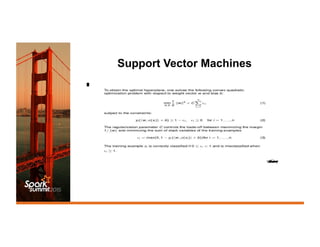

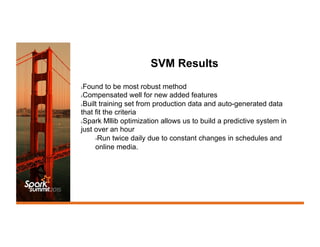

This document discusses using Spark MLlib to predict which digital media files should be offlined from storage to free up space. It describes using k-means clustering, naive Bayes classification, and support vector machines (SVM) on features like file size, age, and airing schedule. SVM performed best and allowed building a predictive system in under an hour. The system is run twice daily on a Spark cluster to select files for purging from a large storage system based on predictions. Some initial issues were addressed and the system is now running robustly in production.