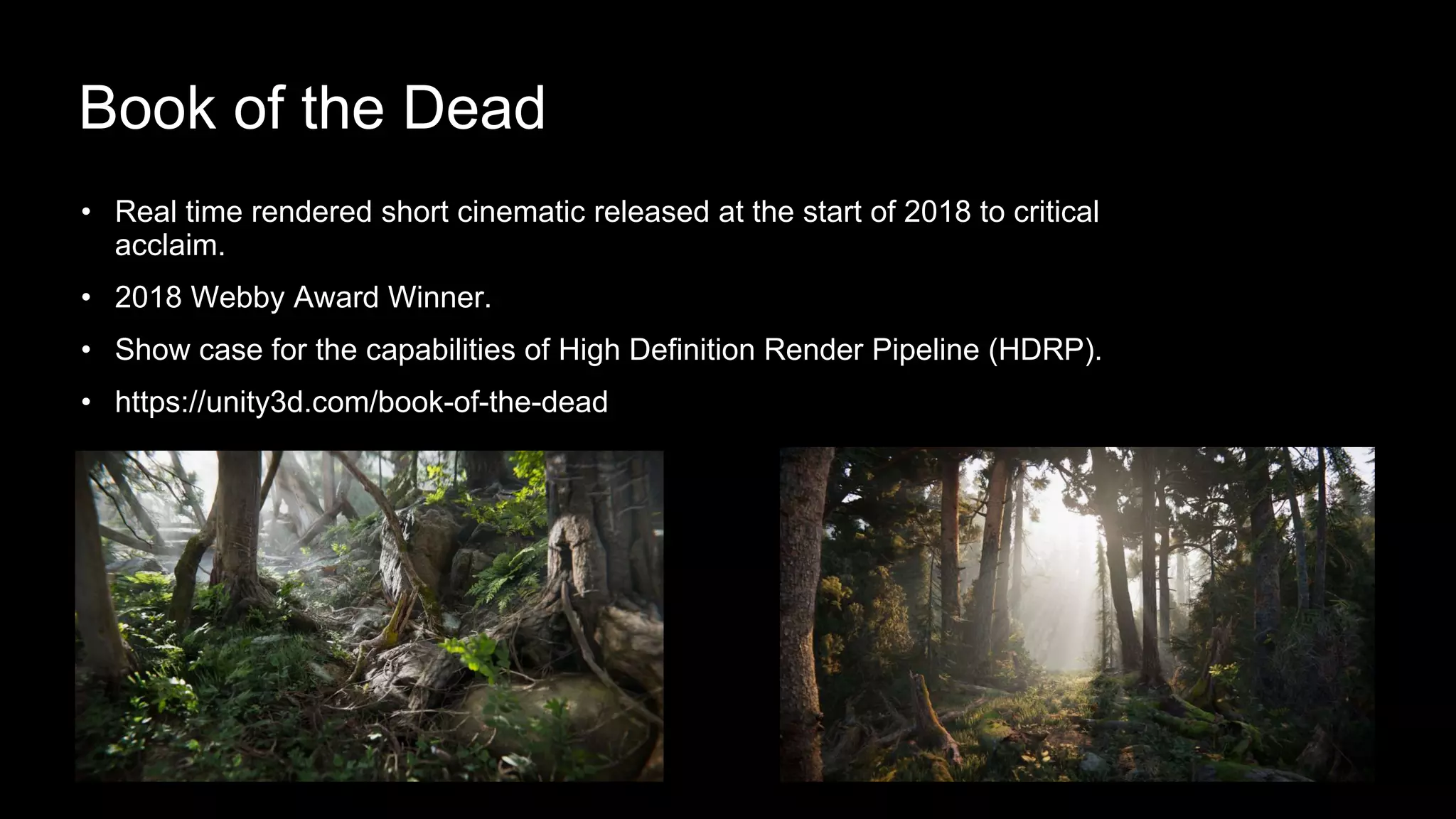

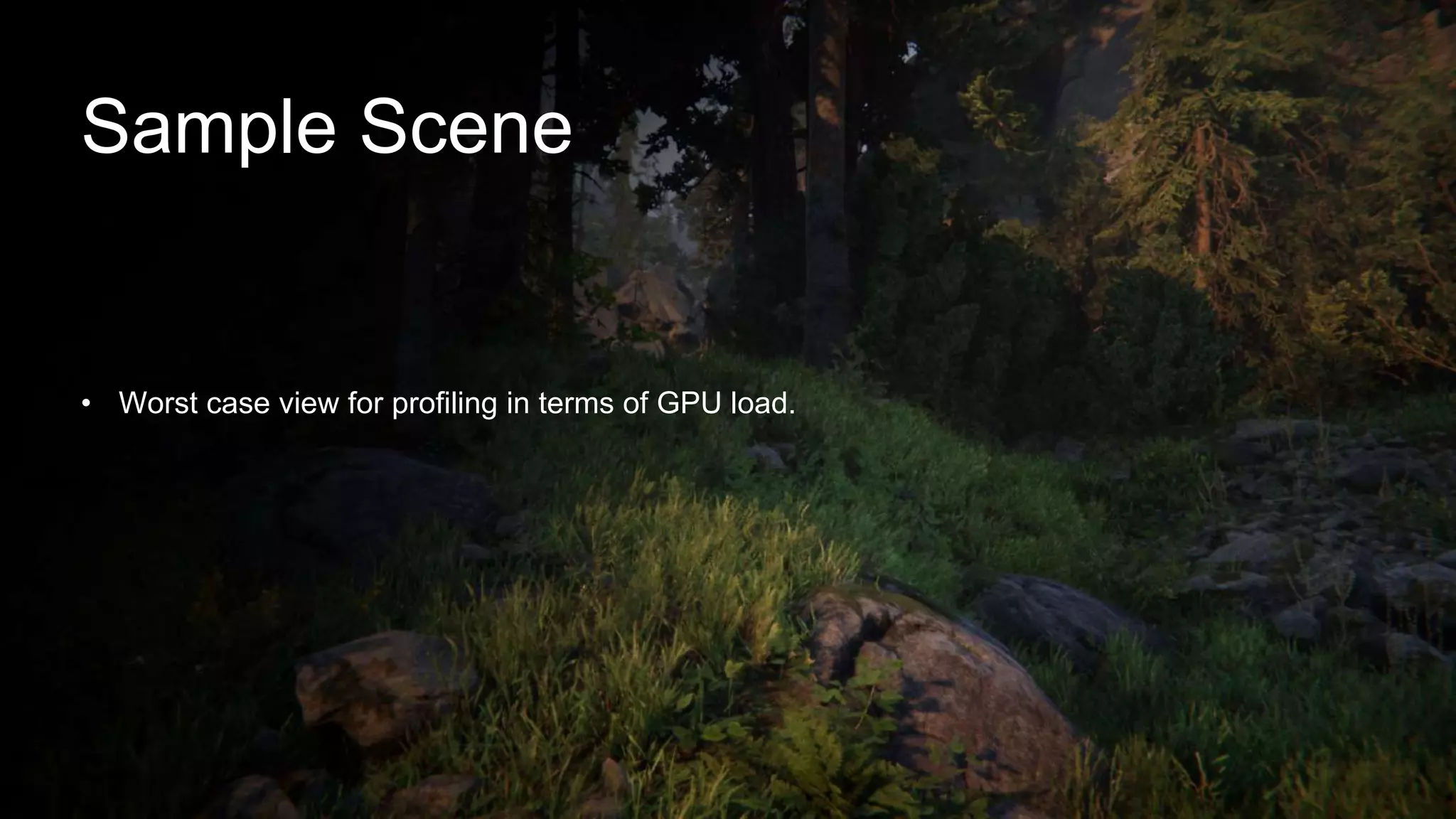

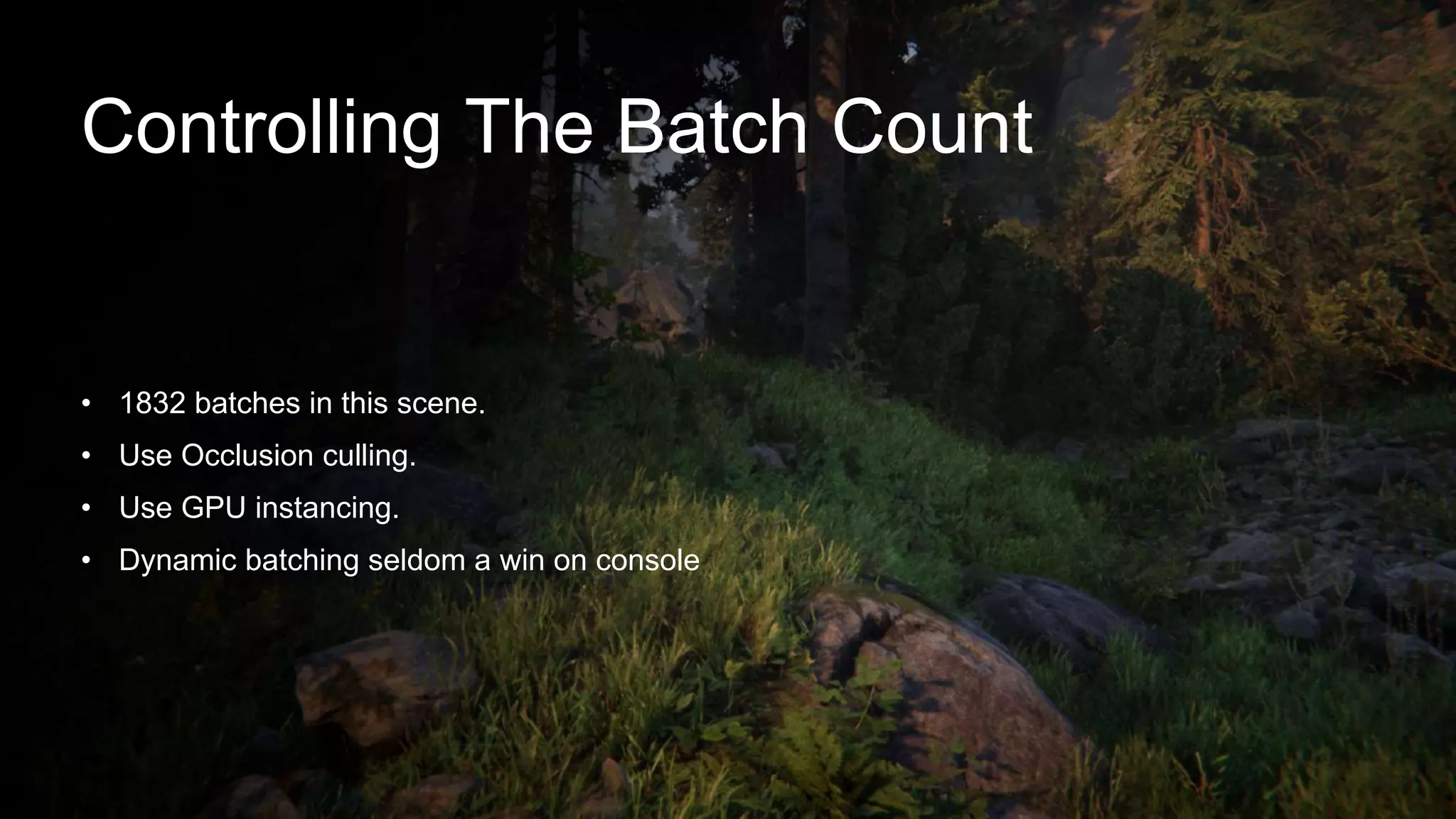

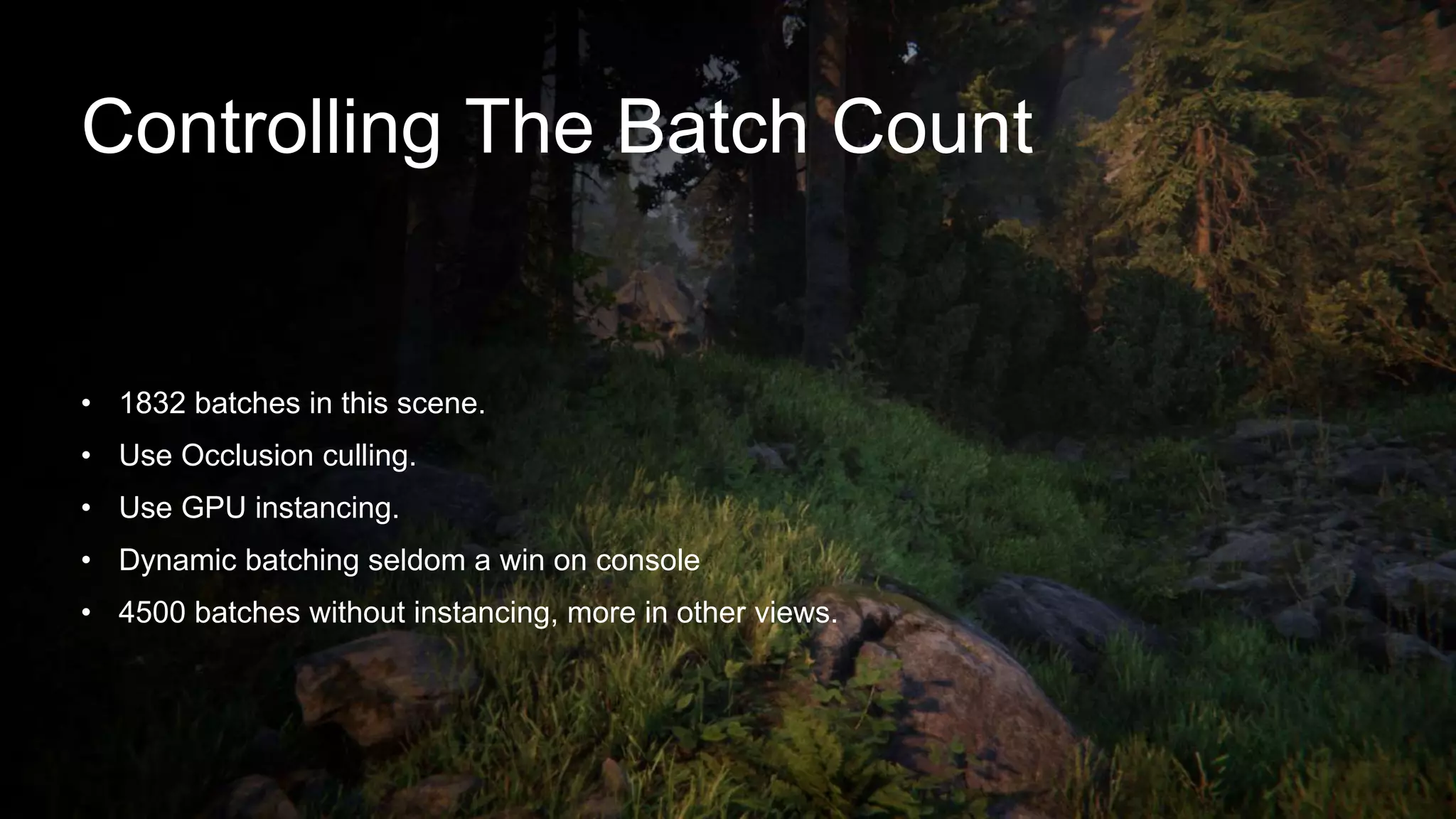

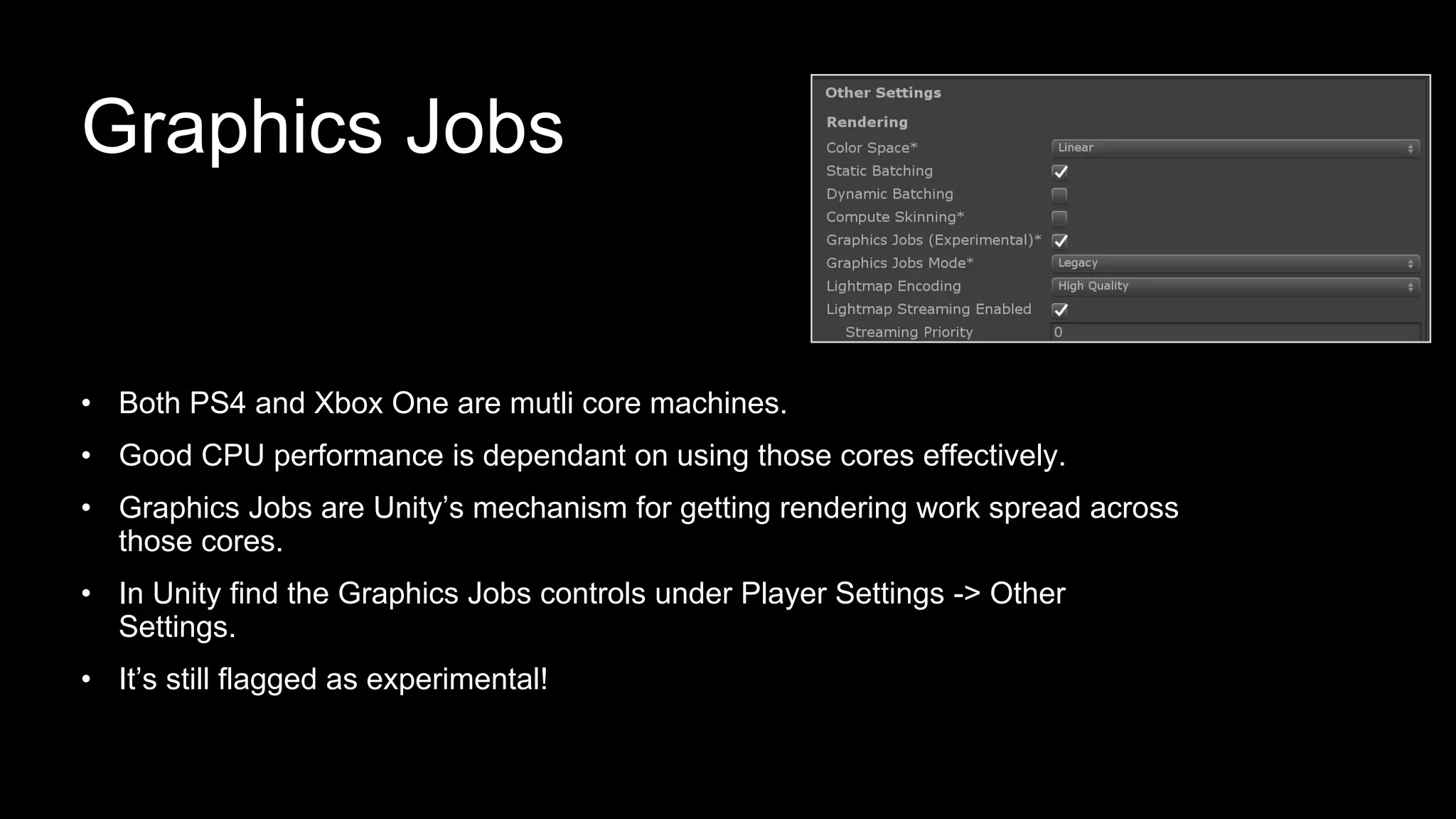

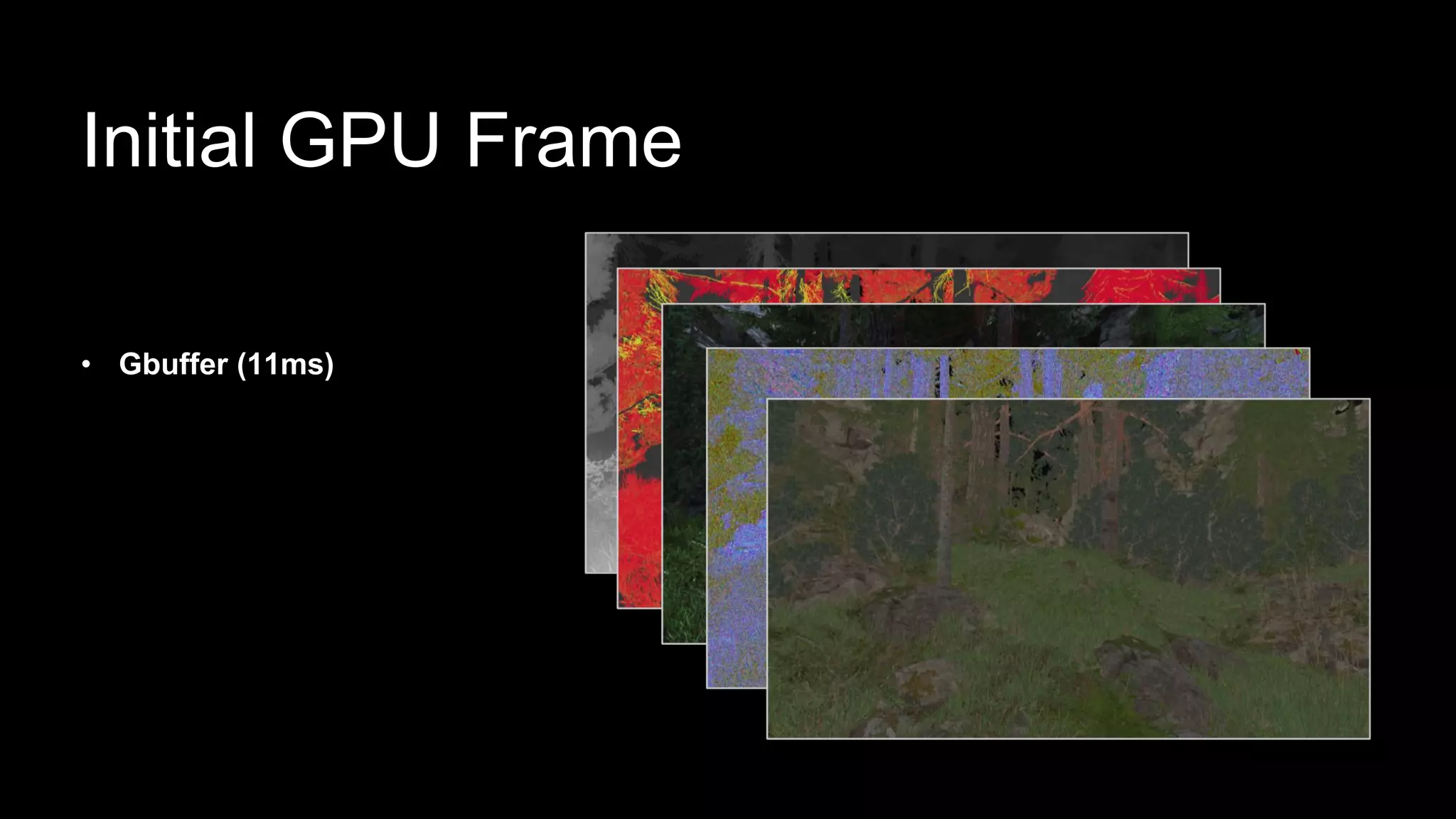

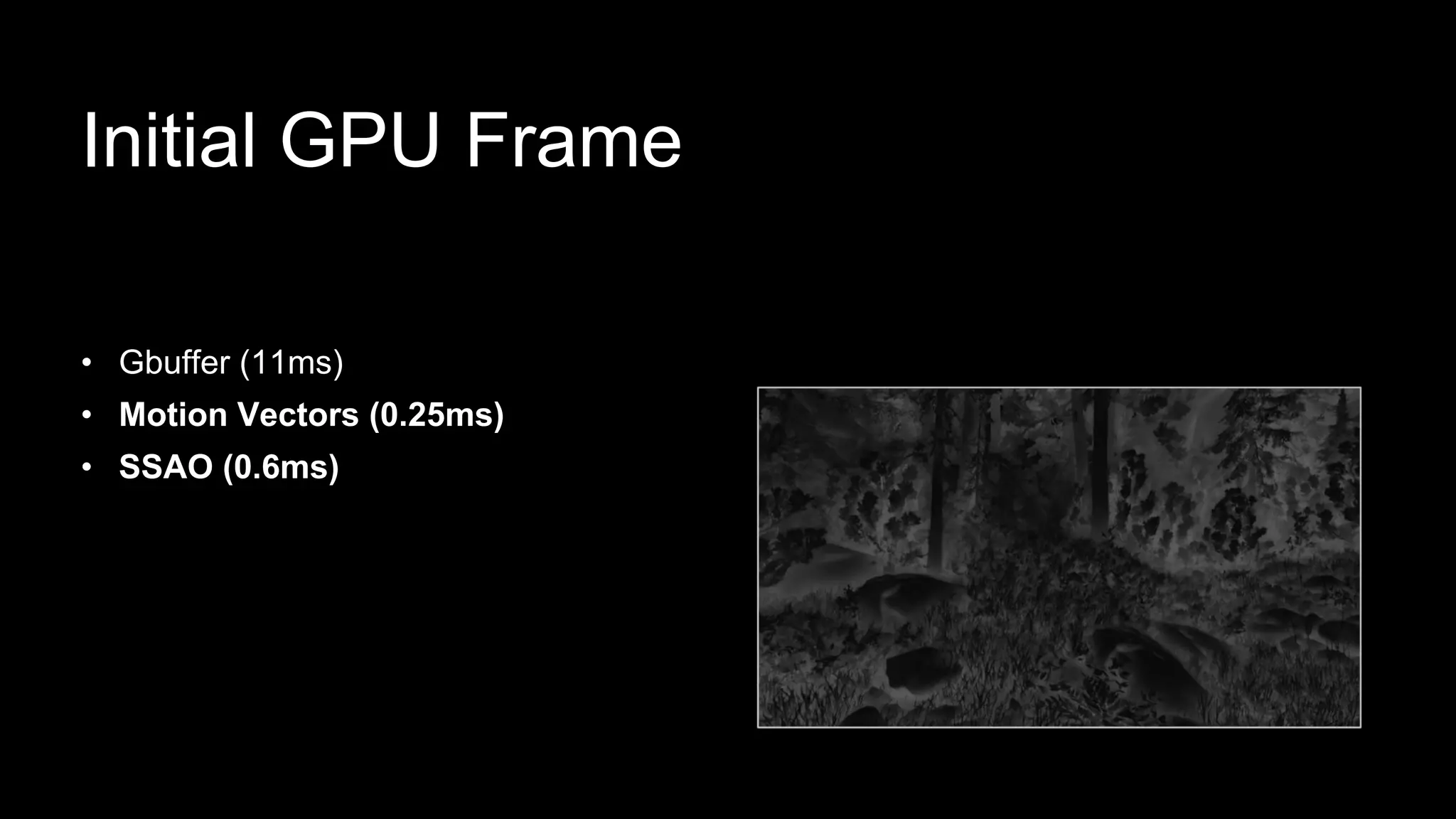

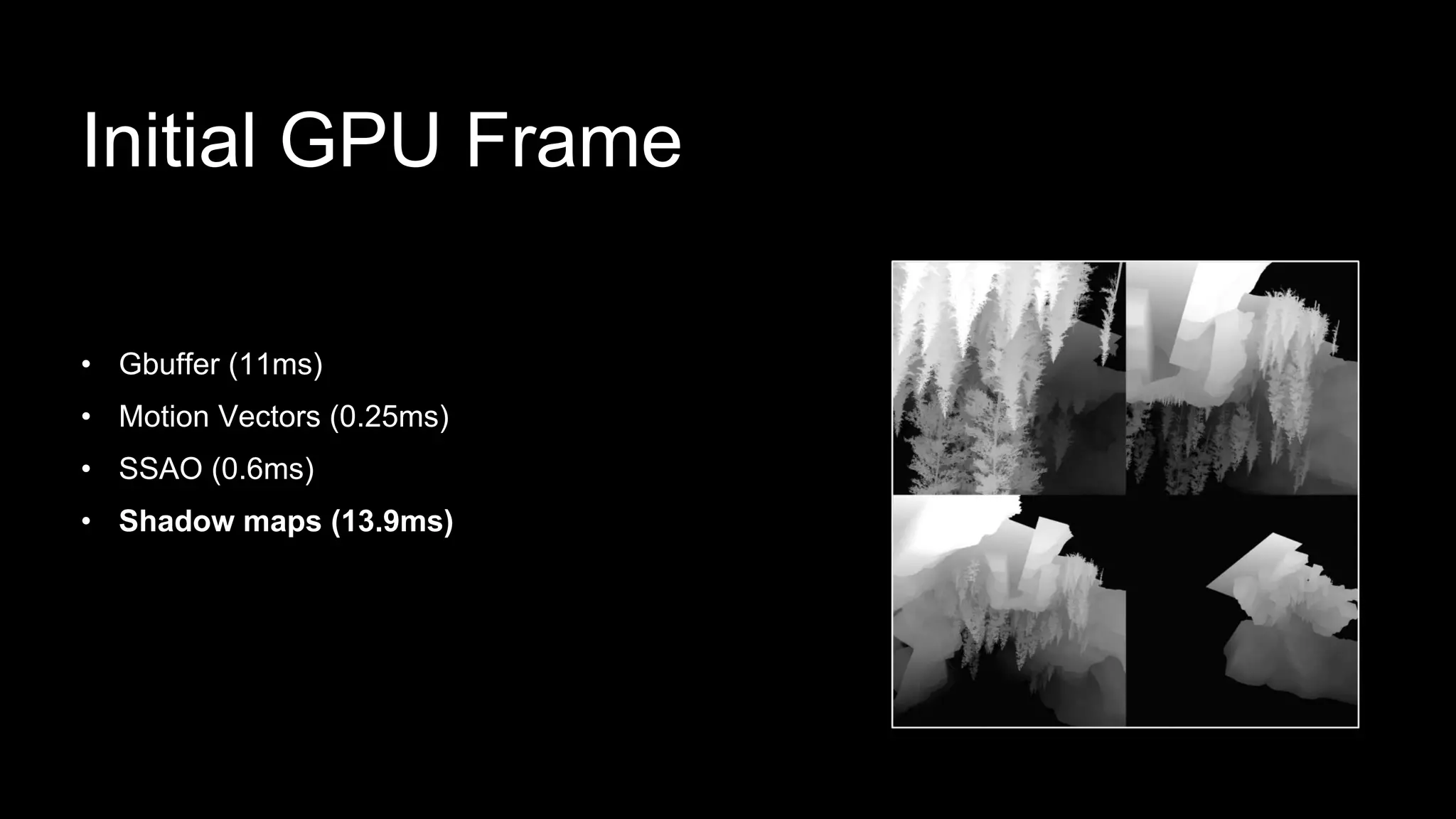

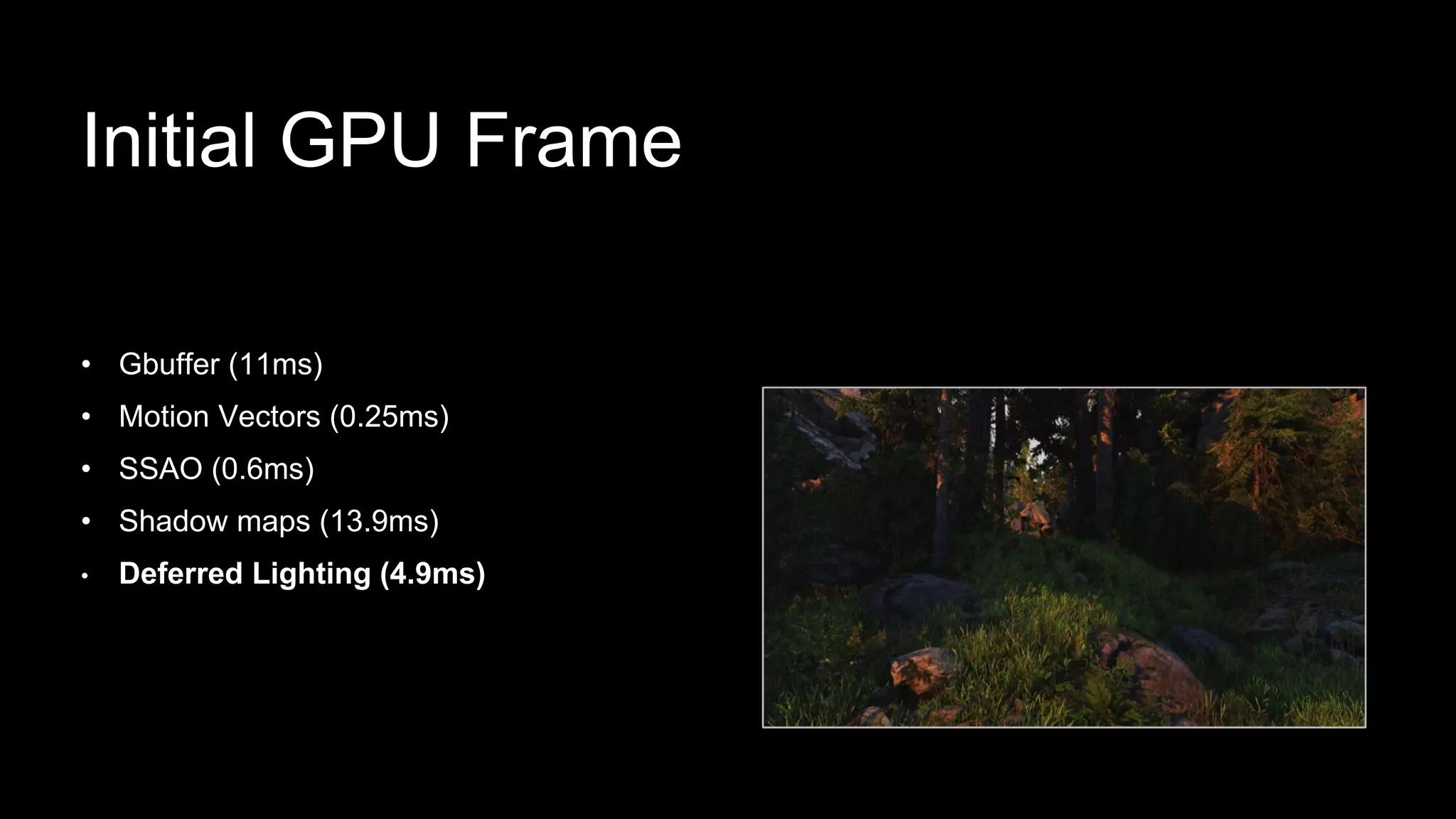

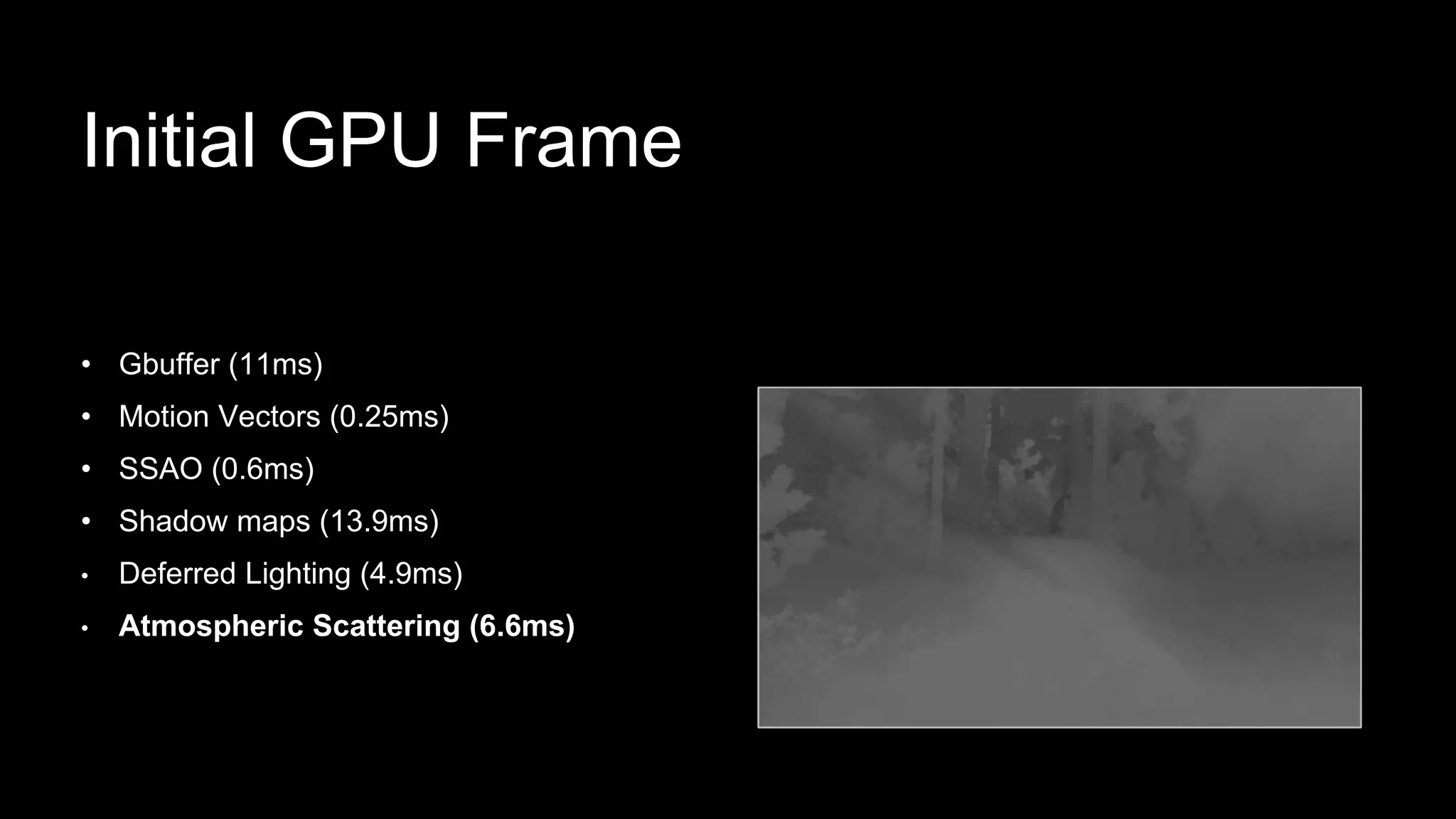

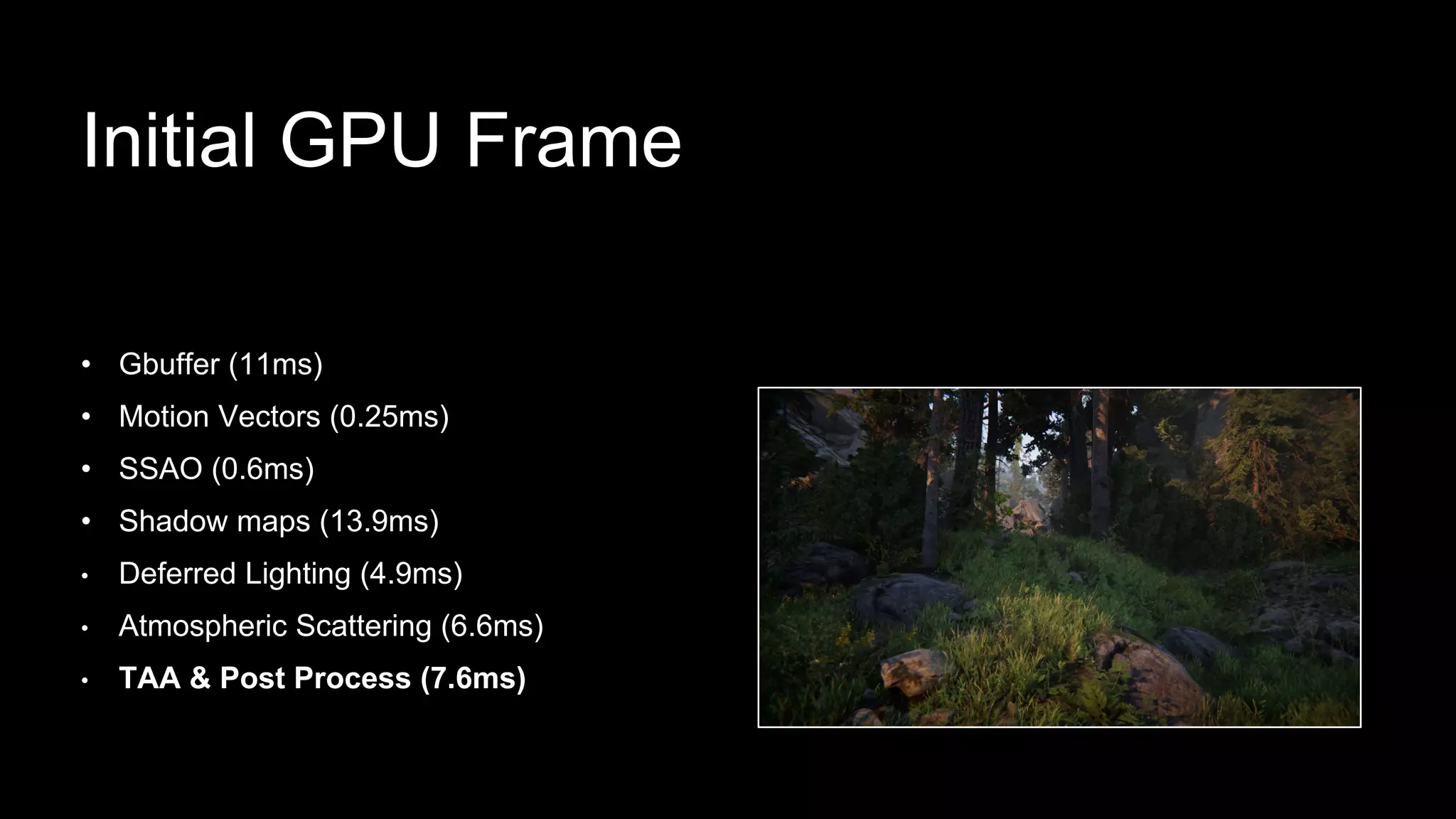

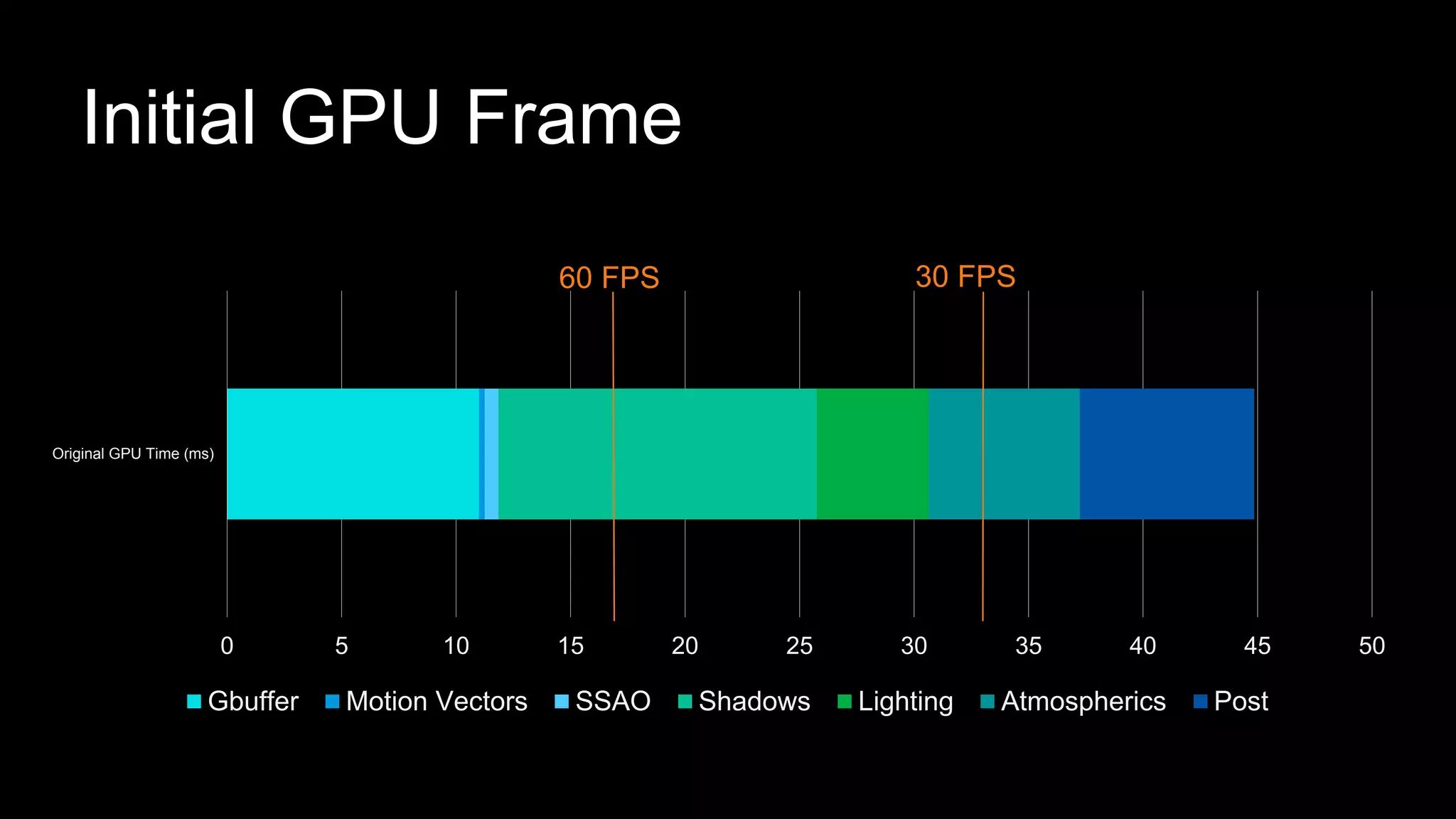

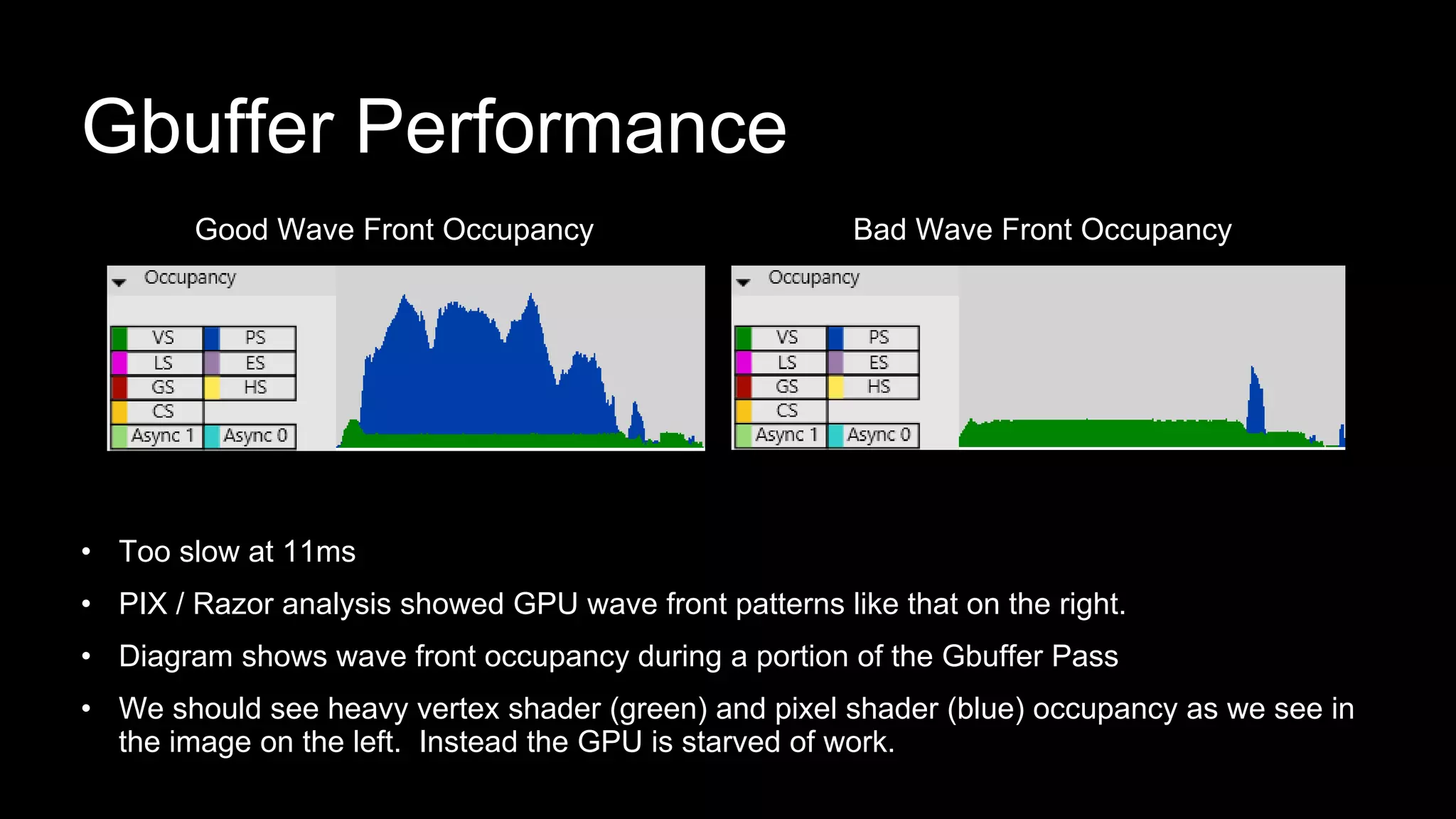

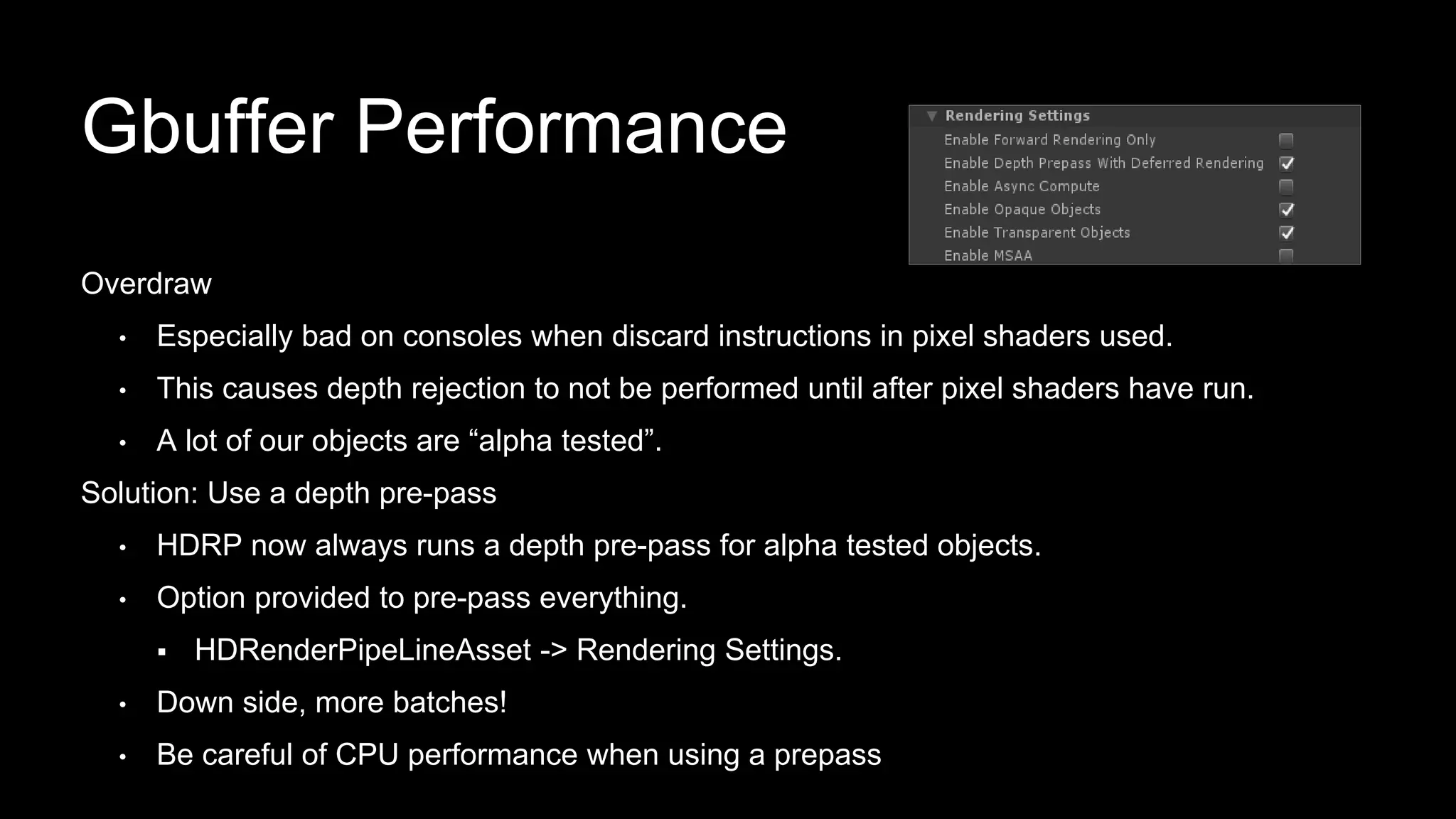

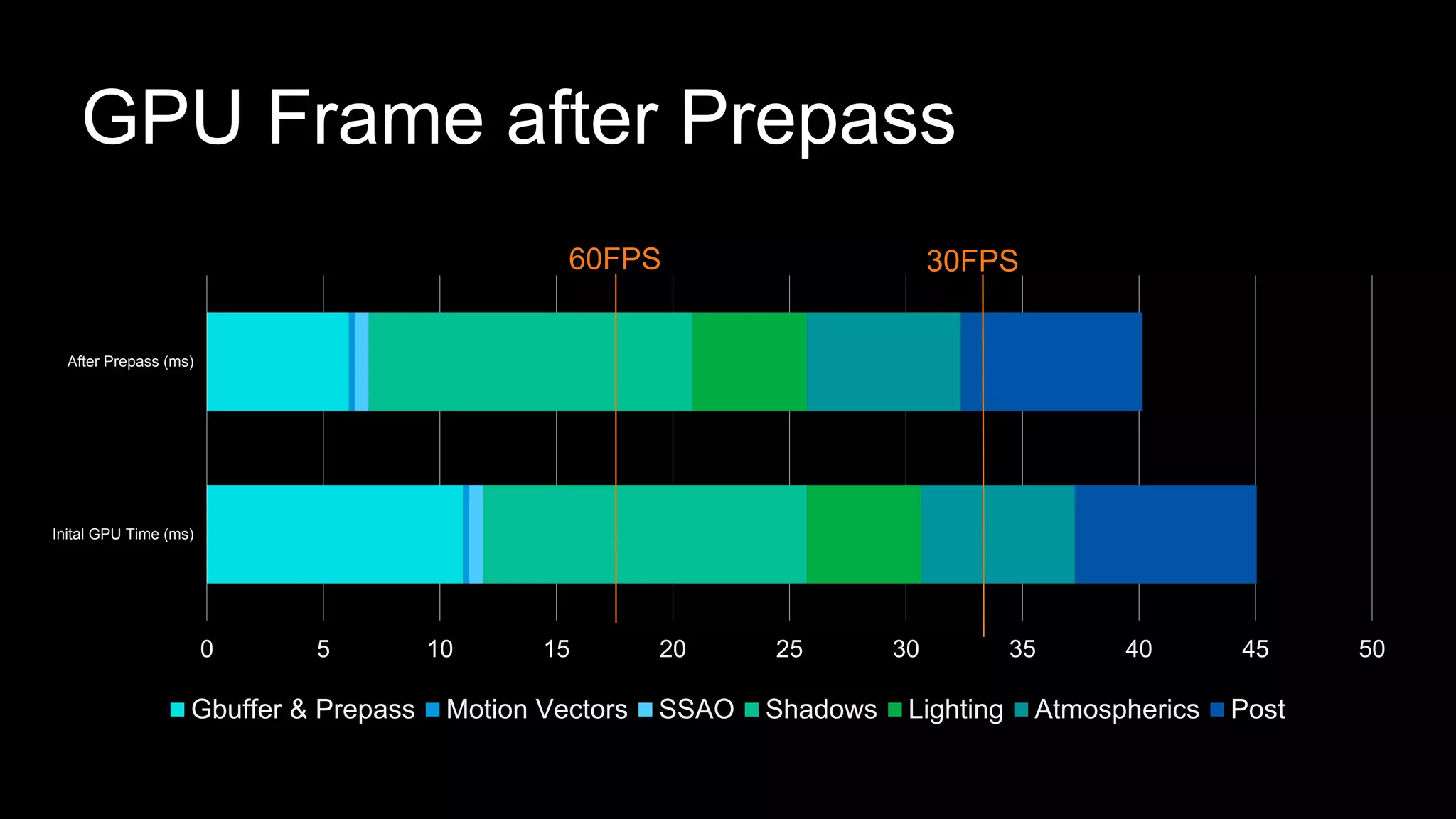

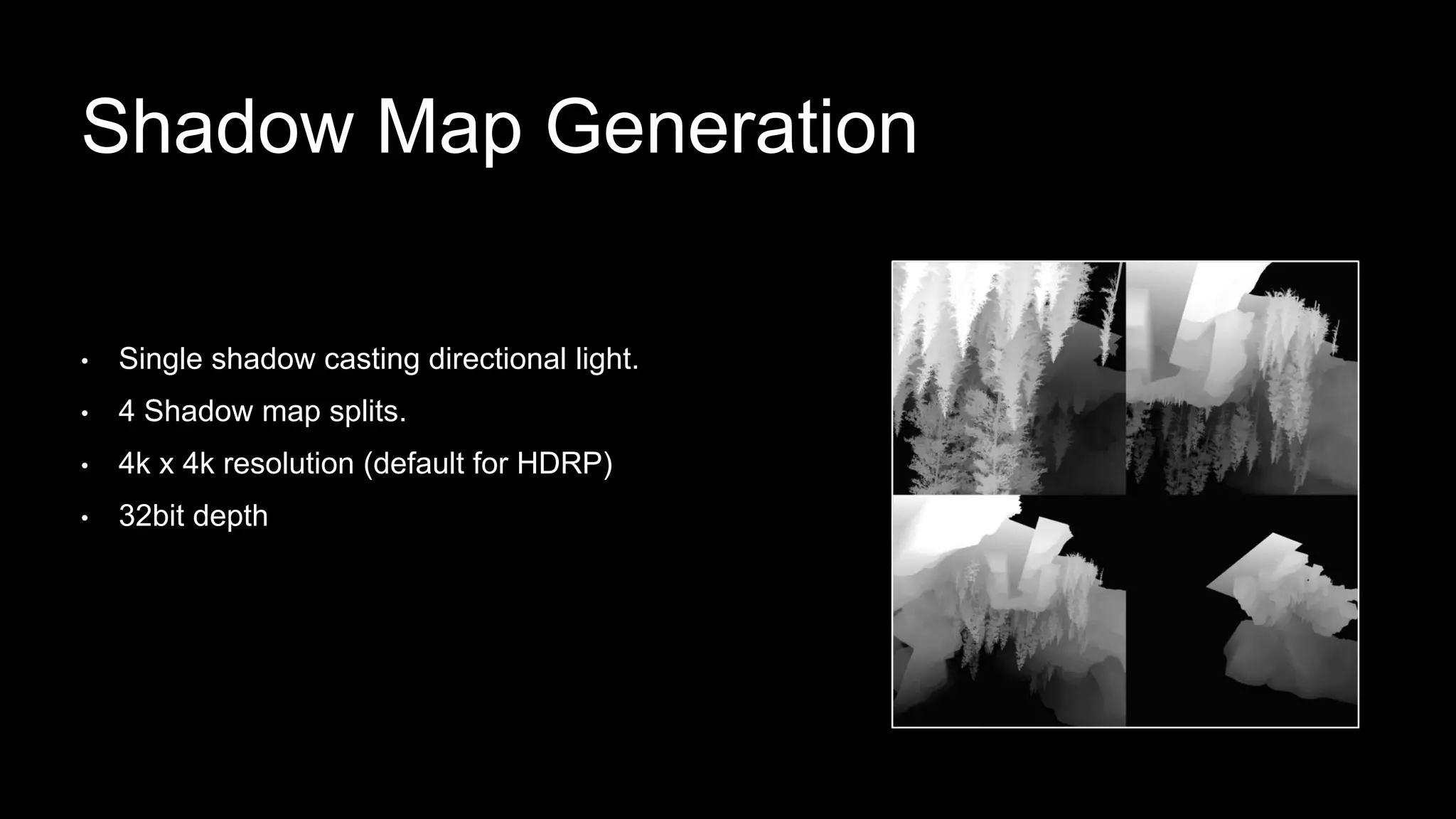

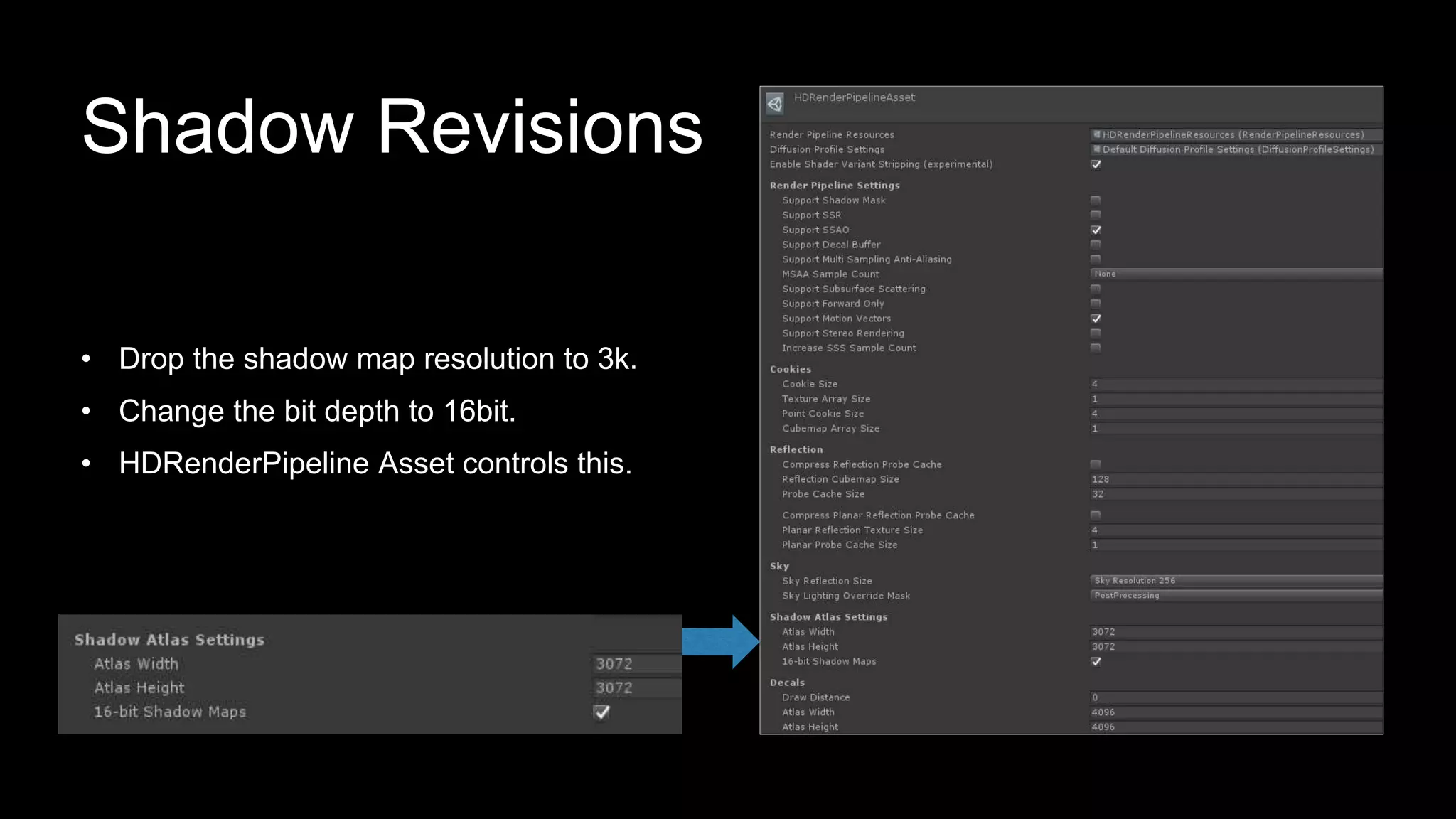

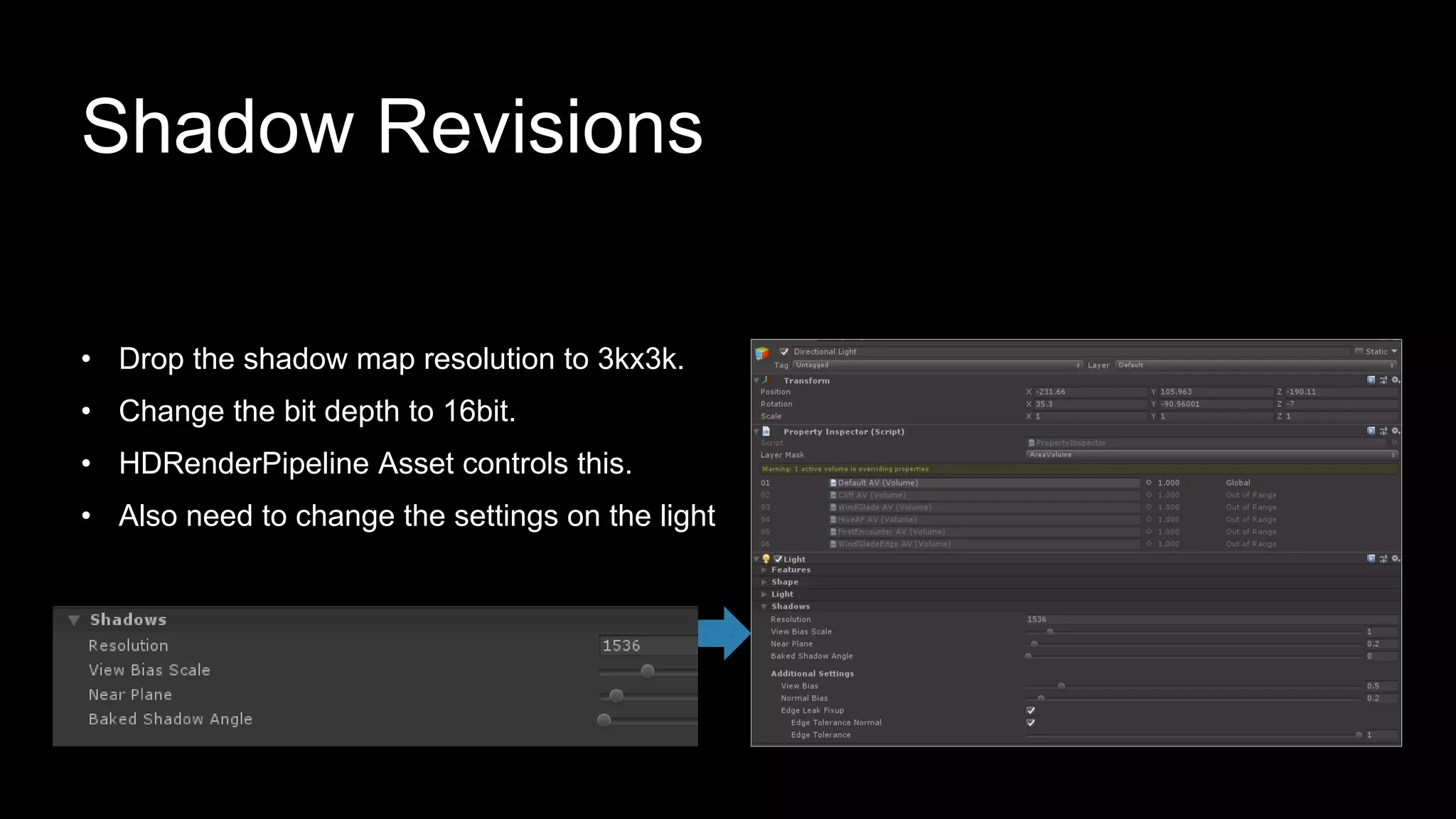

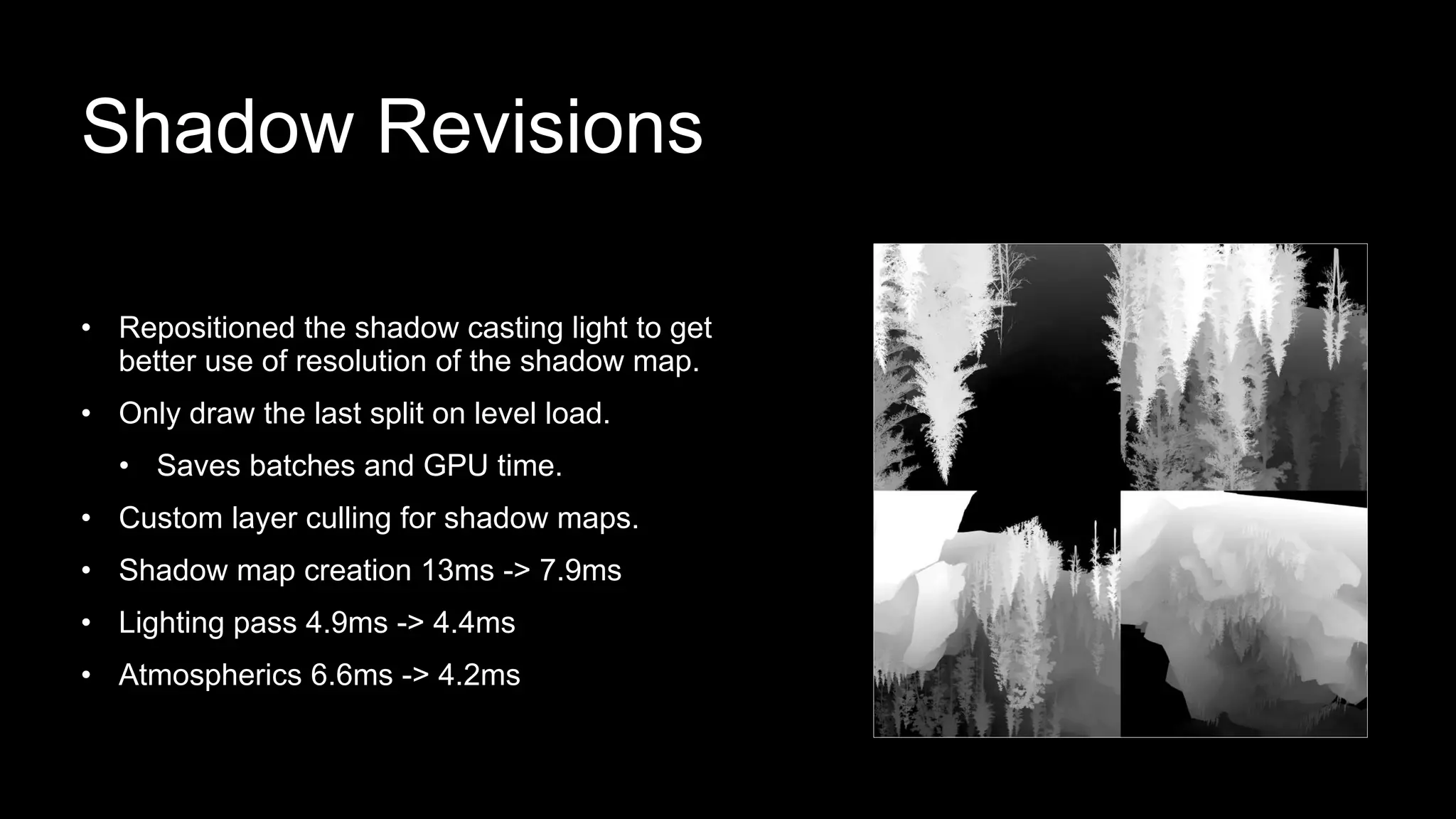

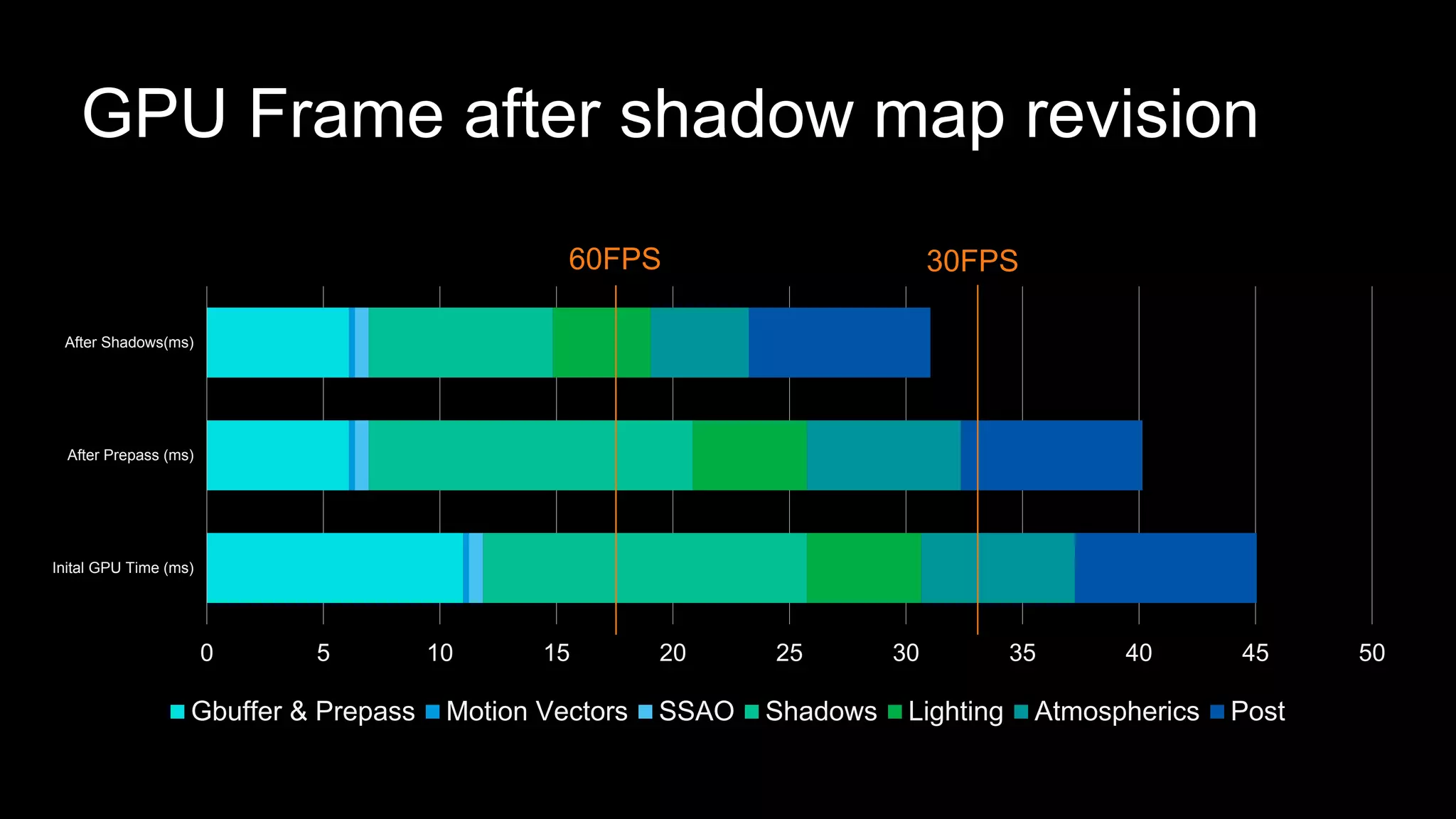

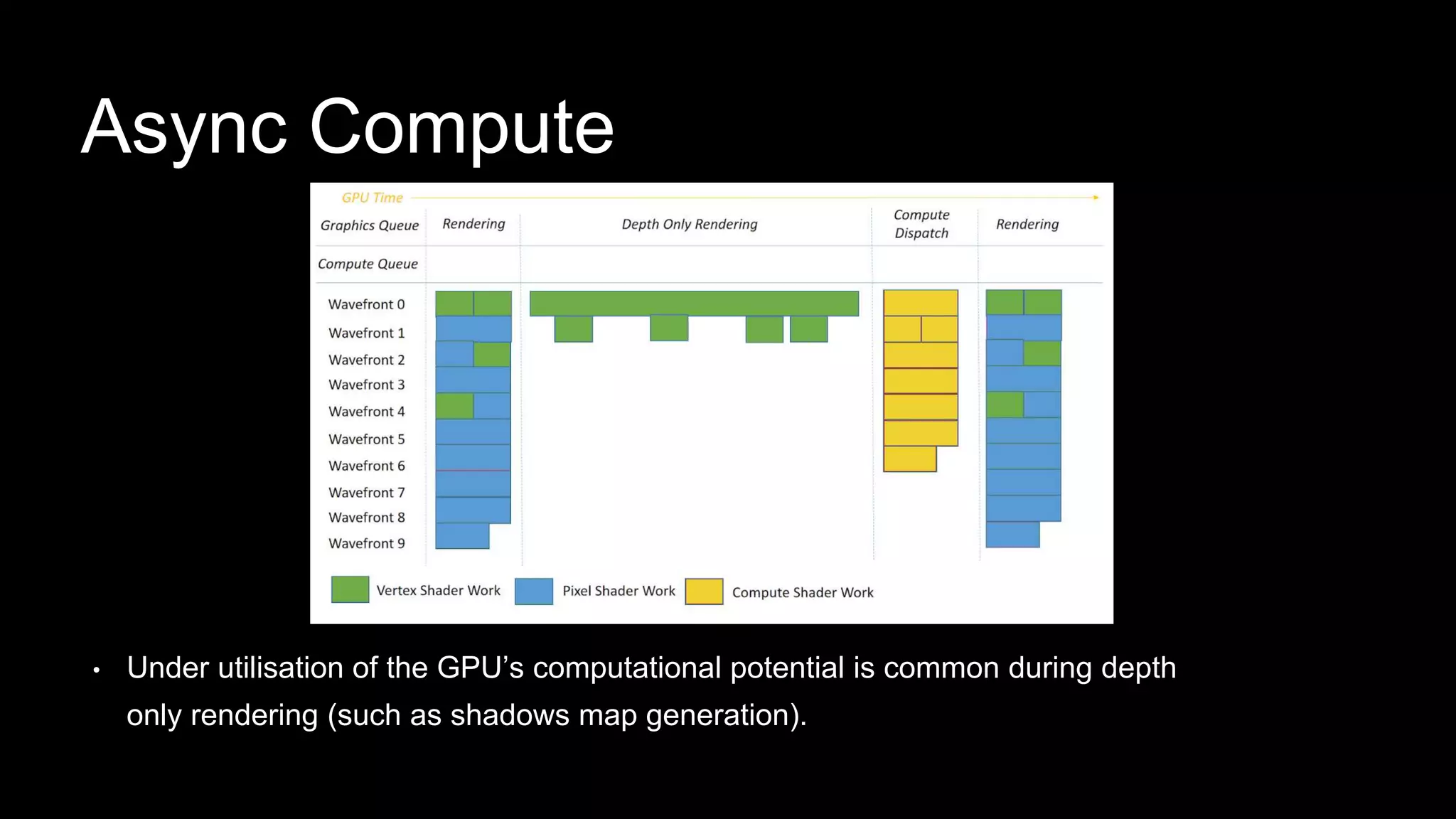

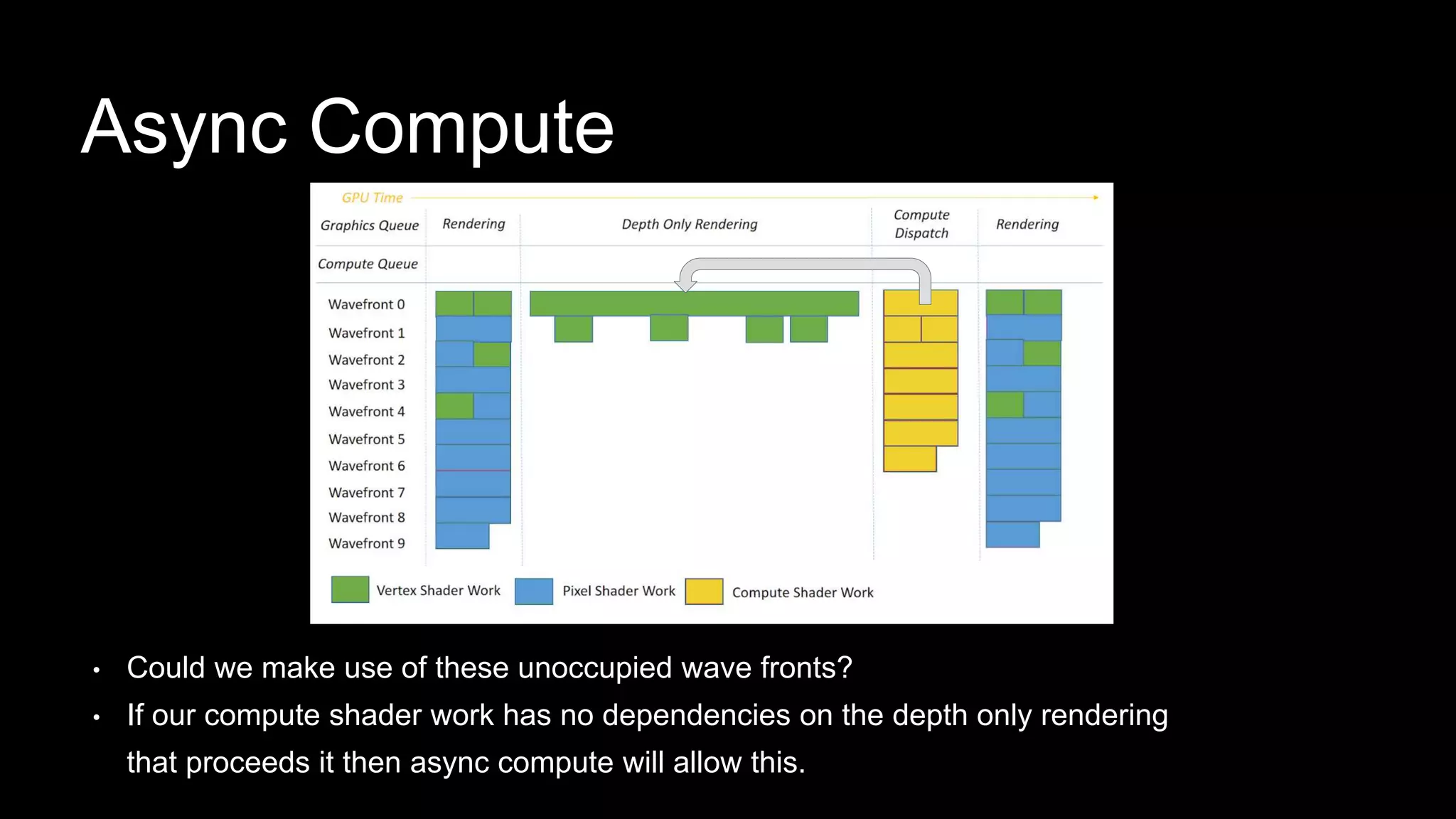

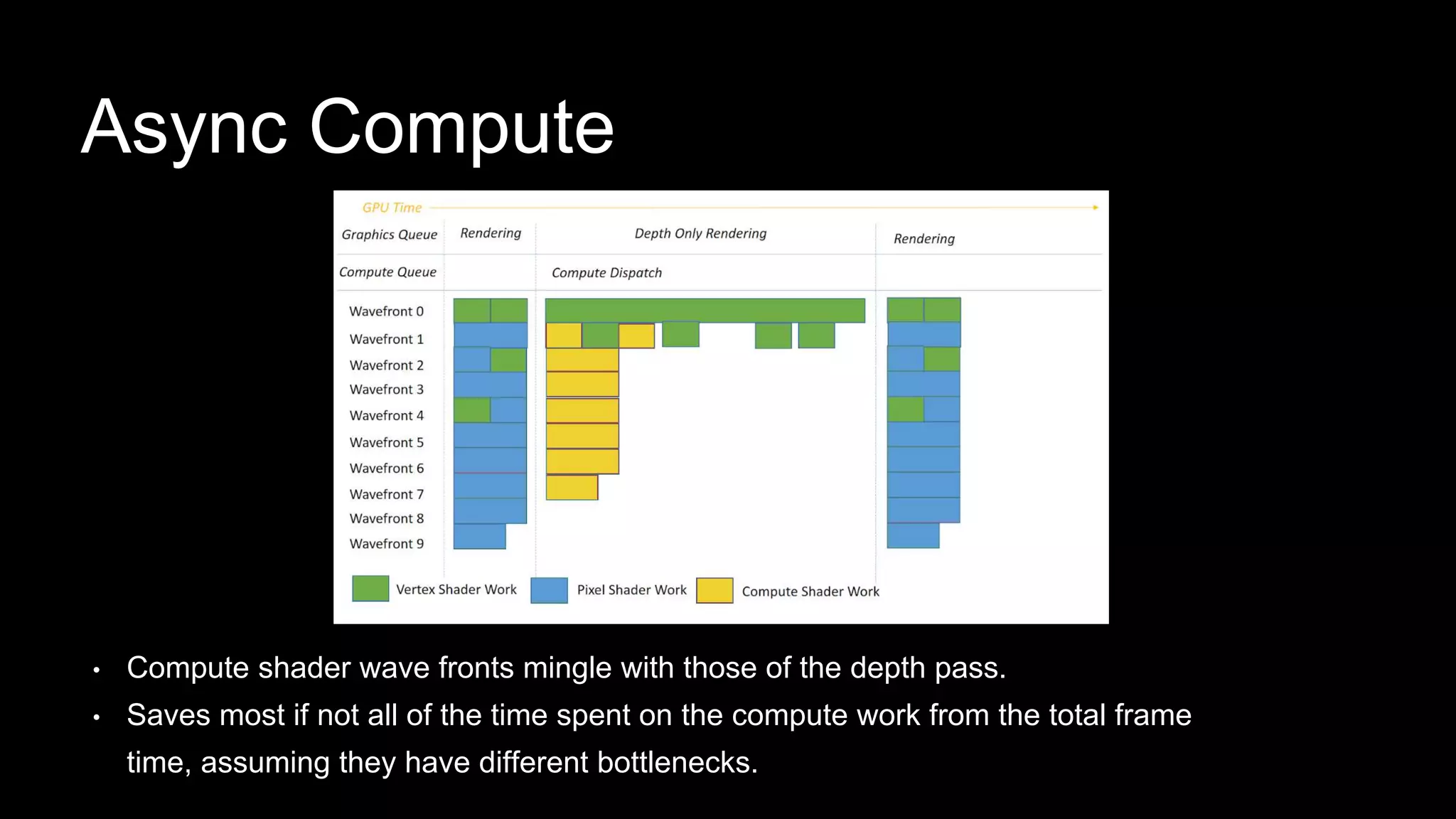

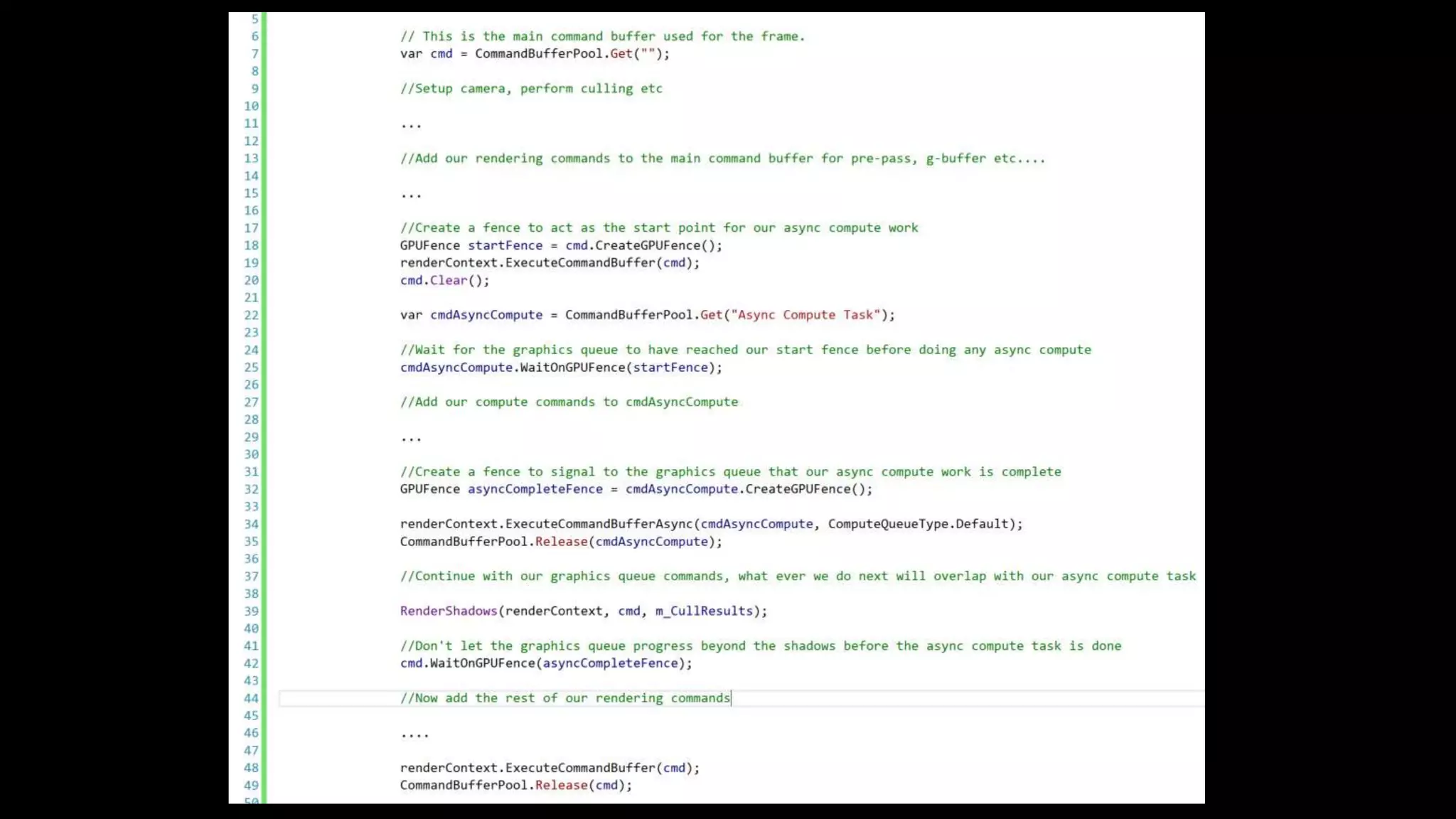

The document is a technical presentation by Rob Thompson on graphics optimization for high-end consoles such as Xbox One and PlayStation 4, built around a case study of the 'Book of the Dead' project. It covers performance profiling, managing draw calls, using graphics jobs, and the performance effects of techniques such as tessellation and async compute. The presentation shows what the project achieves with the High Definition Render Pipeline (HDRP) and offers practical recommendations for optimizing GPU performance in console game development.