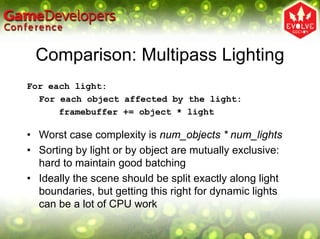

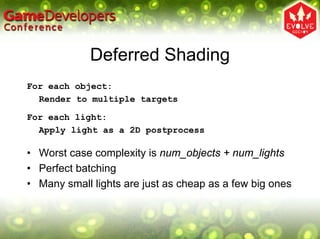

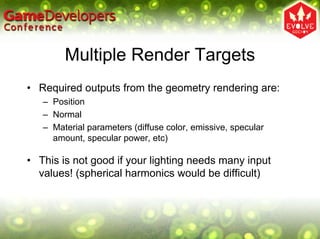

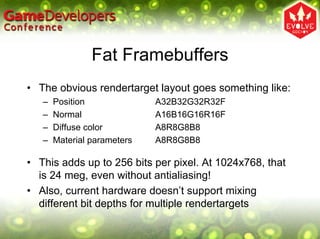

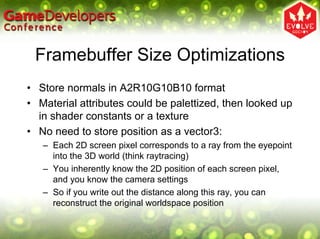

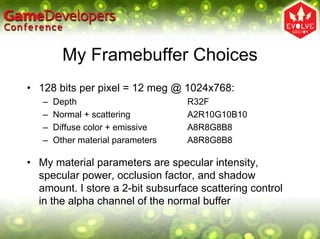

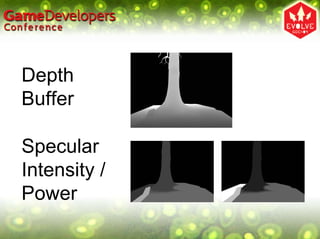

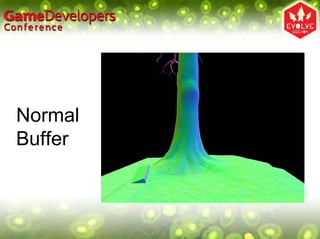

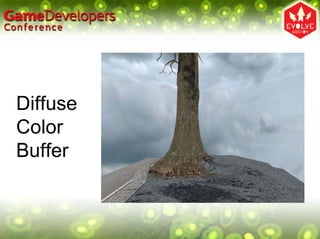

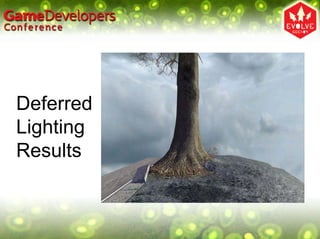

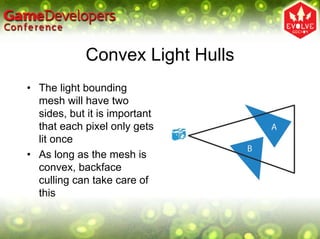

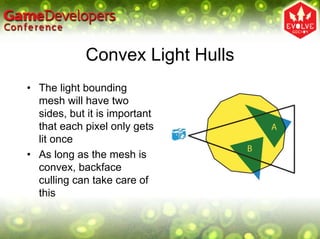

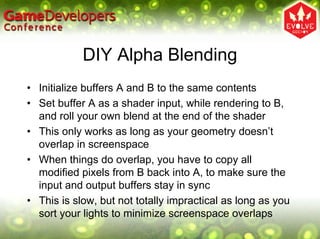

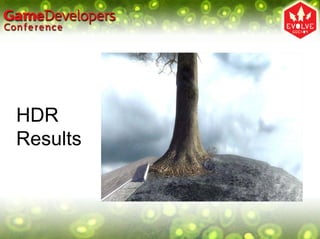

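Deferred shading is a technique in which scene geometry is first rendered to multiple render targets that store per-pixel position, normal, and material properties; lighting is then applied as a post-process that reads those buffers. This improves batching, because geometry is drawn without regard to which lights affect it, and it means lighting is computed only for the surface that is finally visible at each pixel rather than for every overdrawn fragment. The main challenges are the large framebuffer size and the difficulty of handling alpha blending and multisampling. In return, new light types and post-processing effects are easy to add.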
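
The two passes can be illustrated with a small CPU-side sketch. This is not how a GPU implementation is written. It is a minimal model that assumes fragments have already been rasterized, uses a single diffuse (Lambert) term, and treats every light as a point light. All names here (`Fragment`, `GBufferTexel`, `PointLight`, `geometry_pass`, `lighting_pass`) are hypothetical and chosen for illustration only.

```python
import math
from dataclasses import dataclass


@dataclass
class Fragment:
    """A rasterized surface sample (hypothetical input to the geometry pass)."""
    x: int
    y: int
    depth: float
    position: tuple
    normal: tuple
    albedo: tuple


@dataclass
class GBufferTexel:
    """One pixel of the G-buffer: everything the lighting pass needs."""
    depth: float = math.inf
    position: tuple = (0.0, 0.0, 0.0)
    normal: tuple = (0.0, 0.0, 0.0)
    albedo: tuple = (0.0, 0.0, 0.0)
    covered: bool = False


@dataclass
class PointLight:
    position: tuple
    color: tuple


def _sub(a, b):
    return tuple(ai - bi for ai, bi in zip(a, b))


def _dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def _normalize(v):
    length = math.sqrt(_dot(v, v))
    return v if length == 0.0 else tuple(vi / length for vi in v)


def geometry_pass(fragments, width, height):
    """Pass 1: write surface attributes only; no lighting is evaluated here."""
    gbuffer = [[GBufferTexel() for _ in range(width)] for _ in range(height)]
    for f in fragments:
        # Depth test: keep only the nearest fragment at each pixel.
        if f.depth < gbuffer[f.y][f.x].depth:
            gbuffer[f.y][f.x] = GBufferTexel(
                f.depth, f.position, f.normal, f.albedo, True
            )
    return gbuffer


def lighting_pass(gbuffer, lights):
    """Pass 2: shade each covered pixel from the stored attributes."""
    image = []
    for row in gbuffer:
        out_row = []
        for texel in row:
            color = [0.0, 0.0, 0.0]
            if texel.covered:
                for light in lights:
                    to_light = _normalize(_sub(light.position, texel.position))
                    n_dot_l = max(0.0, _dot(texel.normal, to_light))
                    for c in range(3):
                        color[c] += texel.albedo[c] * light.color[c] * n_dot_l
            out_row.append(tuple(color))
        image.append(out_row)
    return image
```

In this sketch an occluded fragment costs only a depth comparison and never reaches the lighting loop, which is the property the summary above describes. Adding a new light type would mean changing only `lighting_pass`, since the geometry pass knows nothing about lights.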