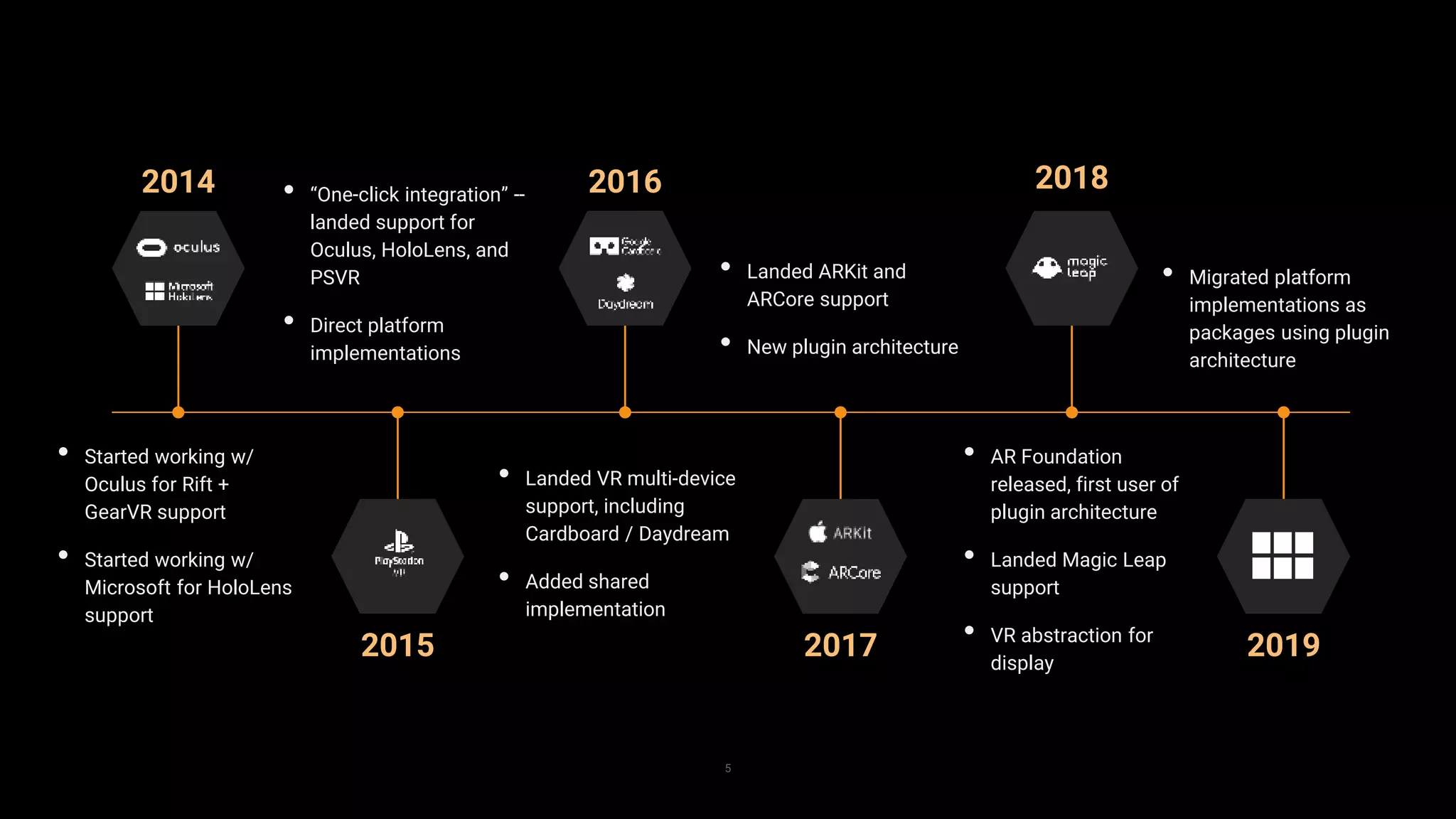

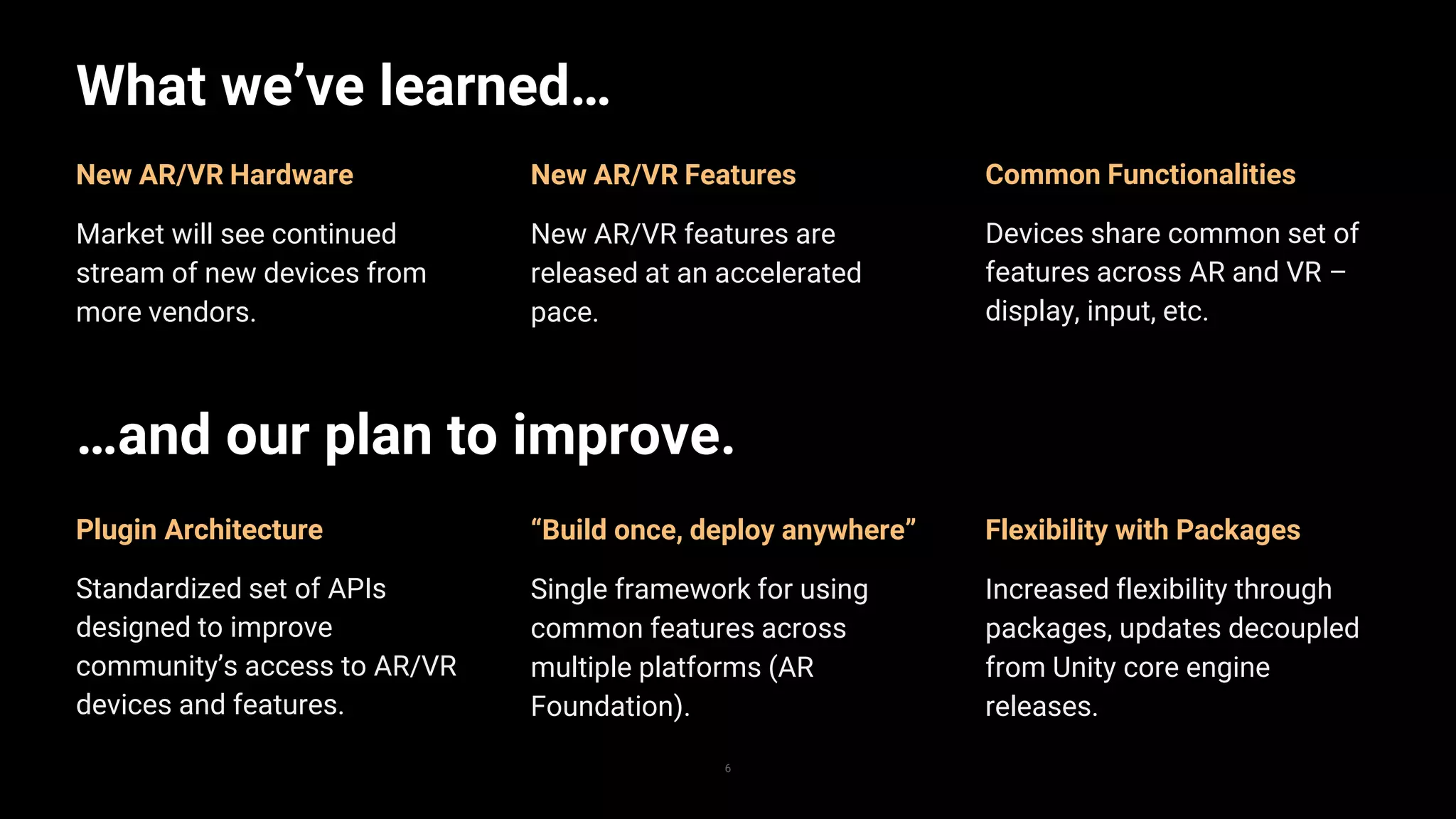

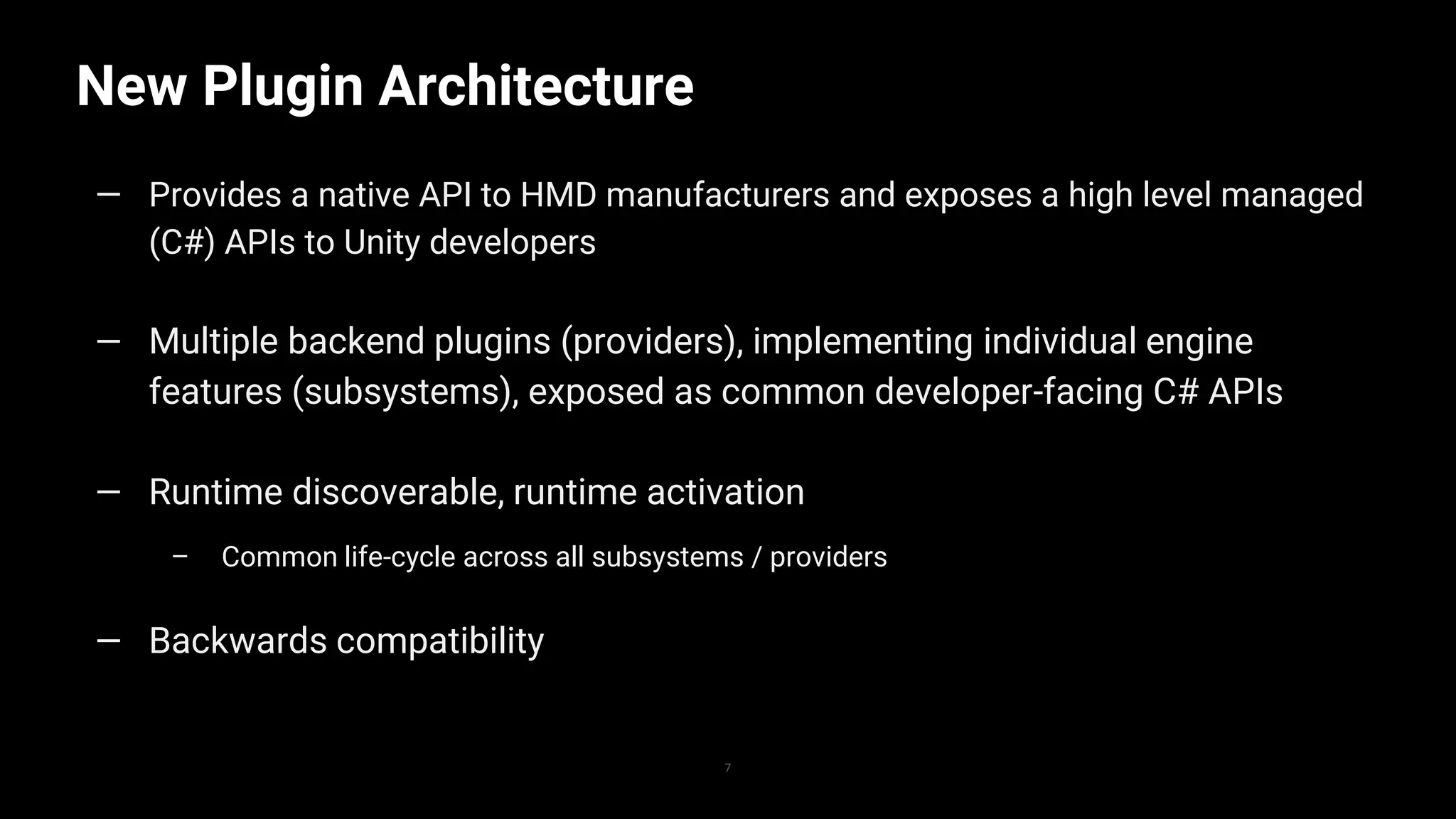

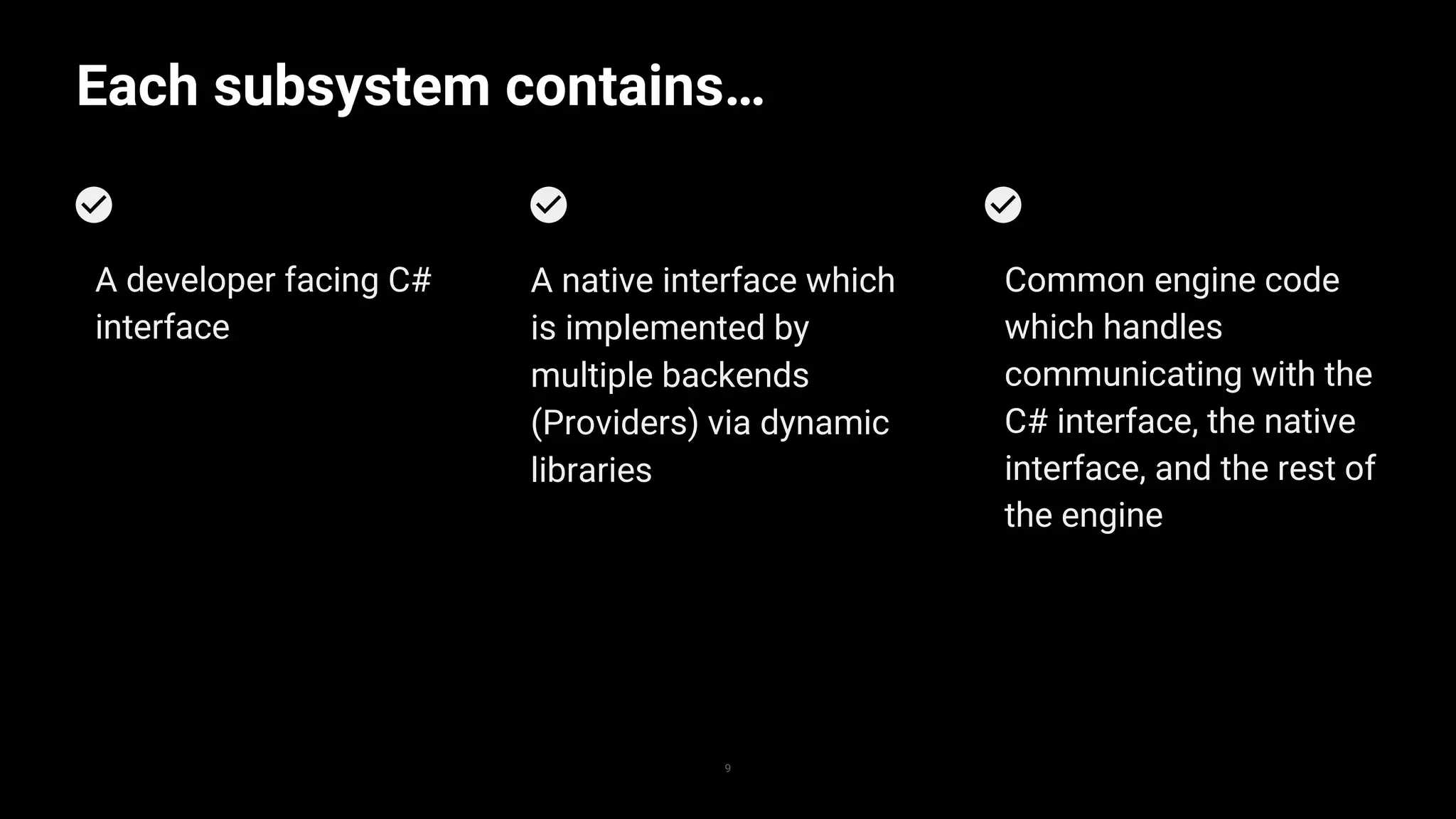

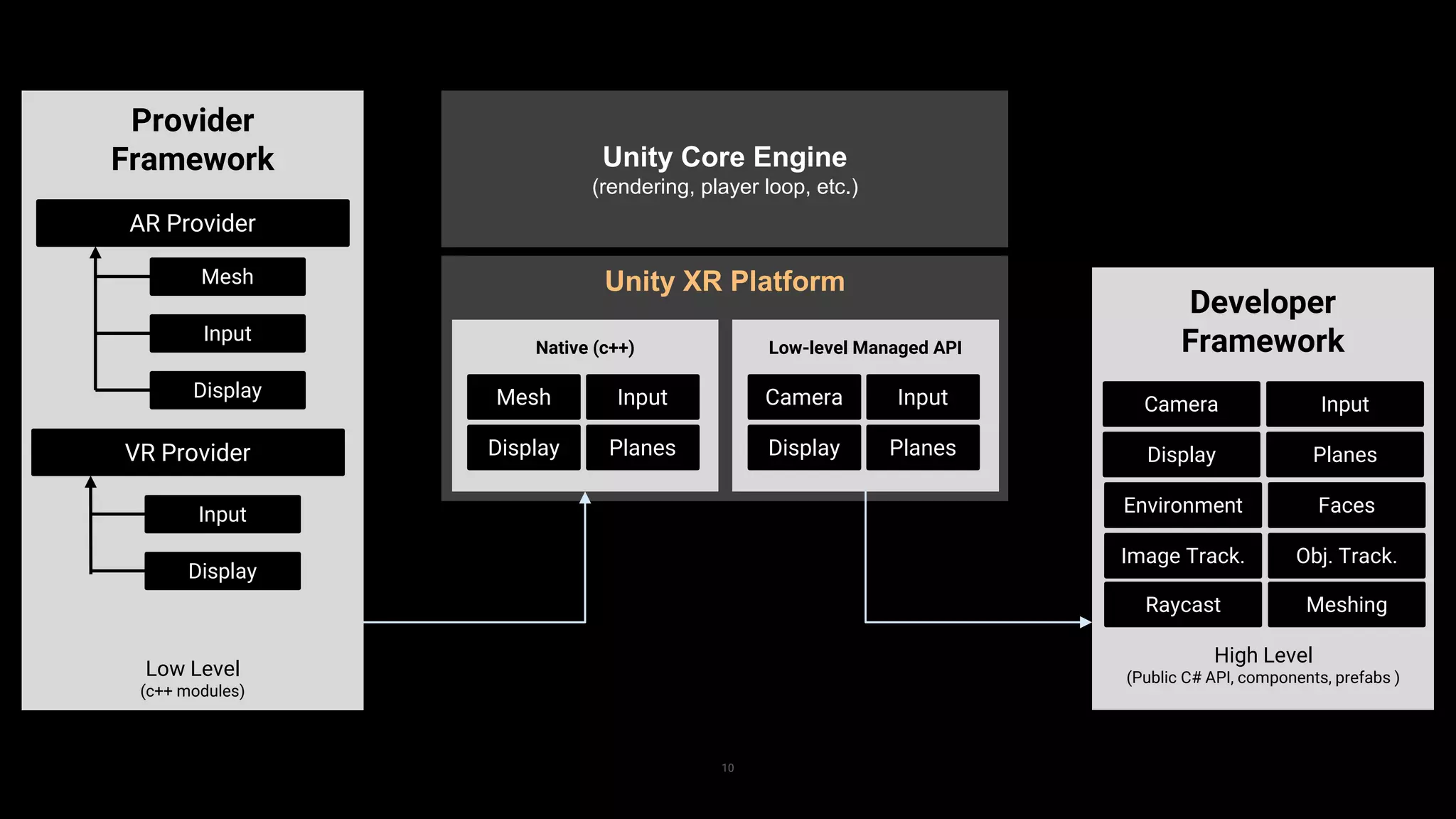

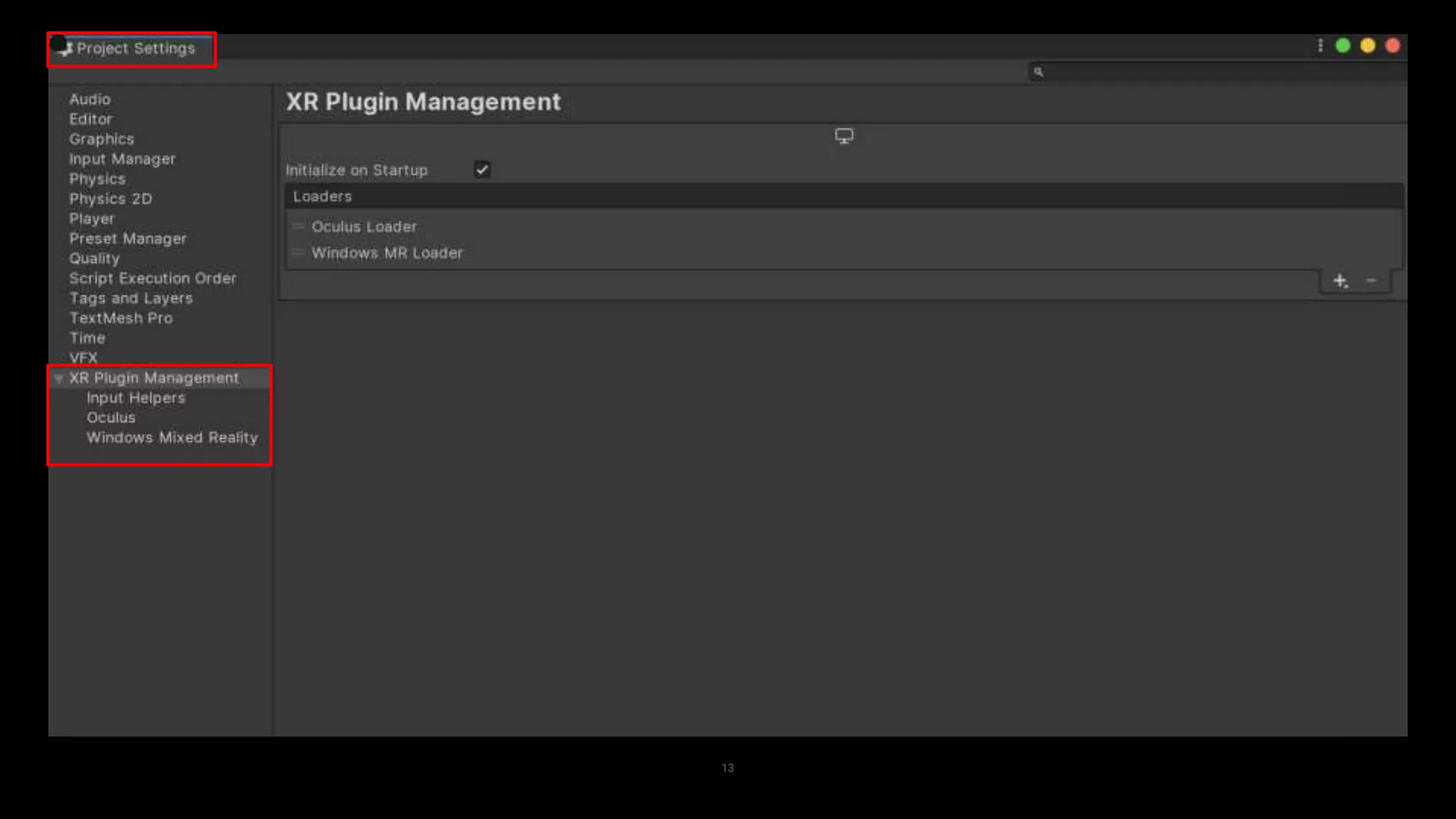

The document outlines Unity's advancements in XR (AR/VR) platform support. It details a new architecture designed to increase flexibility and speed up feature releases, and presents a unified framework that exposes common functionality across devices, built on a plugin architecture for better integration and compatibility. Future plans include migrating platform SDKs to packages and improving the user experience with better management tools.