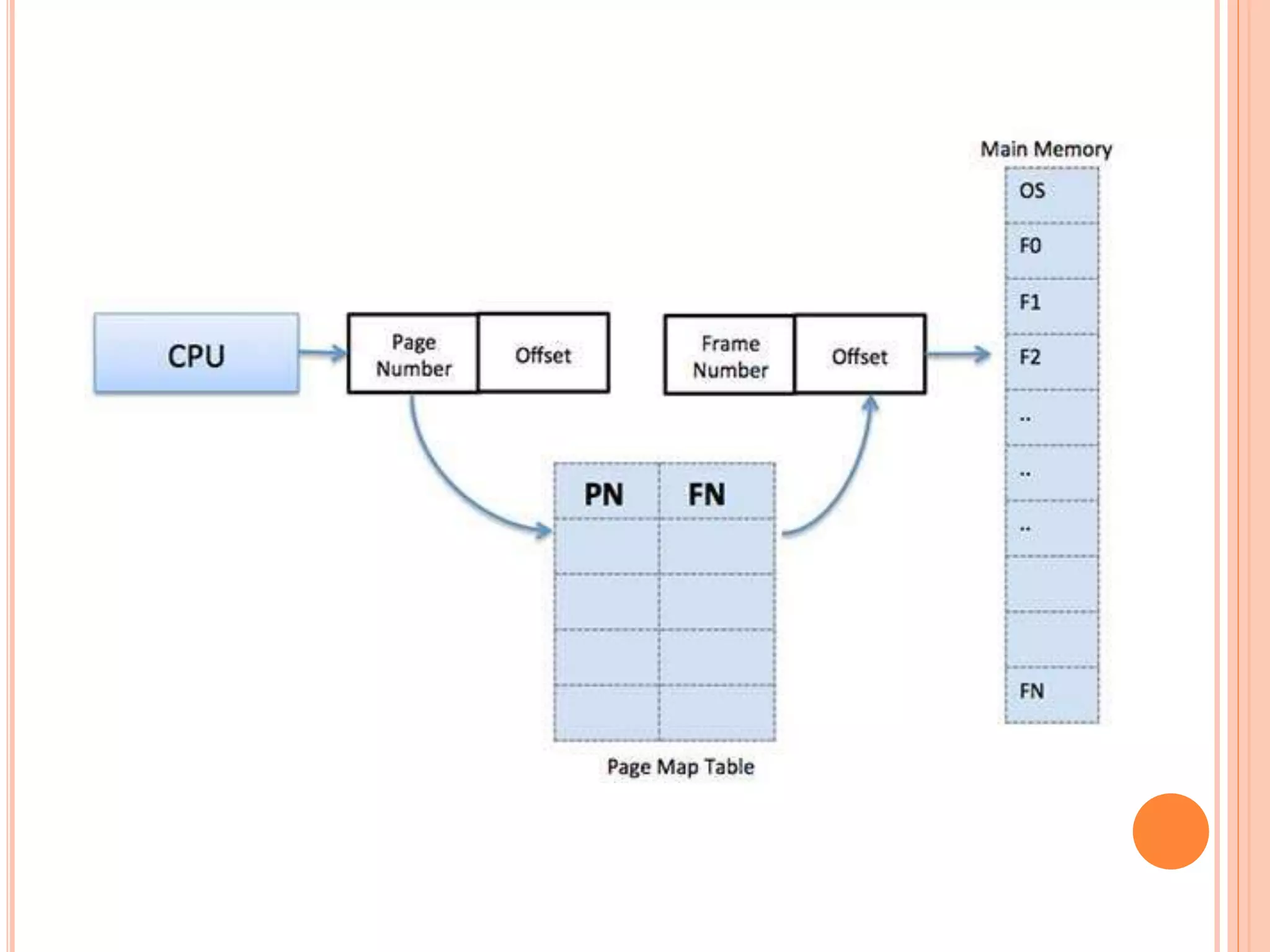

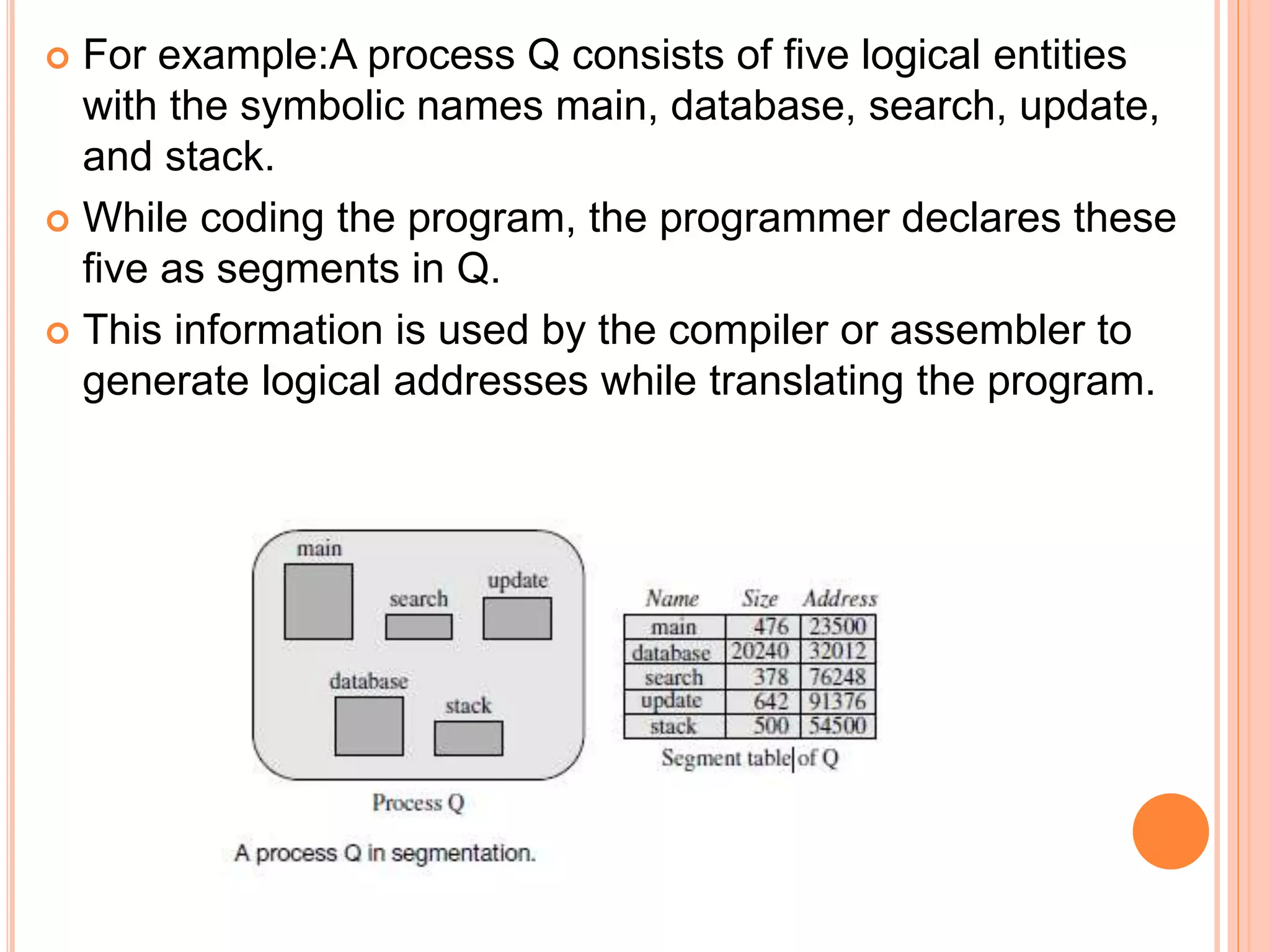

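The document discusses paging and segmentation as memory-management techniques. Paging allows the physical address space of a process to be non-contiguous: logical memory is divided into fixed-size pages, physical memory is divided into frames of the same size, and a page table maps each page to a frame. Segmentation instead divides a program into variable-sized logical units (such as code, data, and stack), each addressed by a segment number and an offset within that segment. The document also explores combining segmentation with paging and what that combination means for memory allocation and protection.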
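The three address-translation schemes summarized above can be illustrated with a minimal sketch. This is not the document's own code. It assumes a 4 KiB page size and represents page tables and segment tables as plain Python dictionaries. The function names (`paging_translate`, `segmentation_translate`, `segmented_paging_translate`) and the exception classes (`PageFault`, `SegmentationFault`) are hypothetical and chosen only for illustration. The sketch also ignores TLBs, multi-level page tables, and permission bits.

```python
PAGE_SIZE = 4096  # assumed page/frame size in bytes (hypothetical choice)


class PageFault(Exception):
    """Hypothetical: raised when a page has no frame mapped in the page table."""


class SegmentationFault(Exception):
    """Hypothetical: raised when an offset falls outside a segment's limit."""


def paging_translate(logical_addr, page_table, page_size=PAGE_SIZE):
    """Paging: split the address into (page number, offset), then map page -> frame."""
    page_number, offset = divmod(logical_addr, page_size)
    if page_number not in page_table:
        raise PageFault(f"page {page_number} is not mapped")
    frame_number = page_table[page_number]
    # Frames need not be contiguous; only the offset within a page is preserved.
    return frame_number * page_size + offset


def segmentation_translate(segment, offset, segment_table):
    """Segmentation: each entry is (base, limit); the offset is checked against the limit."""
    if segment not in segment_table:
        raise SegmentationFault(f"segment {segment} does not exist")
    base, limit = segment_table[segment]
    if not 0 <= offset < limit:
        # The limit check is what provides protection between segments.
        raise SegmentationFault(f"offset {offset} exceeds limit {limit}")
    return base + offset


def segmented_paging_translate(segment, offset, segment_table, page_size=PAGE_SIZE):
    """Segmentation with paging: each entry is (limit, page_table for that segment)."""
    if segment not in segment_table:
        raise SegmentationFault(f"segment {segment} does not exist")
    limit, page_table = segment_table[segment]
    if not 0 <= offset < limit:
        raise SegmentationFault(f"offset {offset} exceeds limit {limit}")
    # The offset within the segment is itself paged, so the segment
    # no longer needs one contiguous block of physical memory.
    return paging_translate(offset, page_table, page_size)


if __name__ == "__main__":
    # Page 1, offset 4 maps to frame 2: 2 * 4096 + 4 = 8196
    print(paging_translate(4100, {0: 5, 1: 2, 2: 7}))
    # Segment 2 has base 4300, so byte 53 is at 4353
    print(segmentation_translate(2, 53, {0: (1400, 1000), 2: (4300, 400)}))
    # Offset 5000 is page 1, offset 904, mapped to frame 9: 9 * 4096 + 904 = 37768
    print(segmented_paging_translate(0, 5000, {0: (10000, {0: 3, 1: 9, 2: 4})}))
```

The dictionary page table stands in for the hardware-indexed array a real MMU would use, and a missing key models an invalid page. In the combined scheme, the segment limit still enforces protection, while paging removes the need to find a contiguous hole for each segment. This is the allocation benefit the combination is meant to provide.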