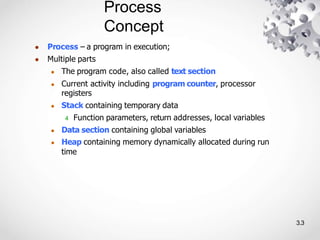

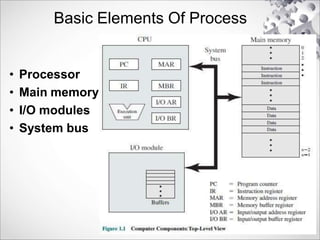

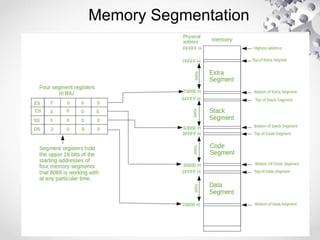

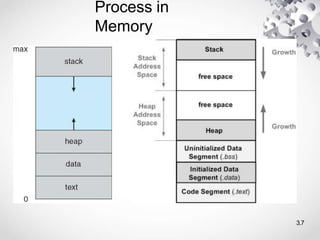

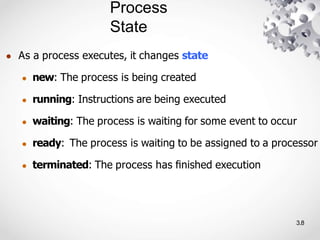

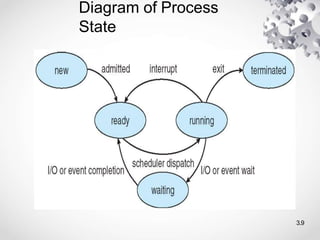

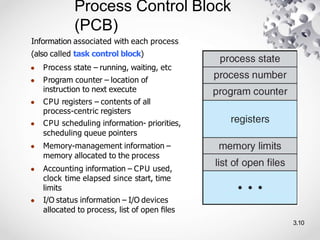

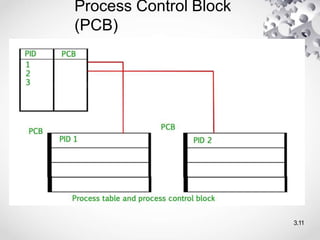

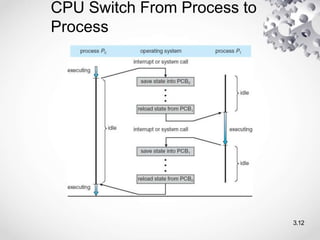

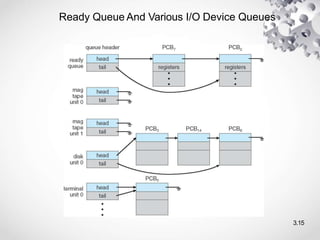

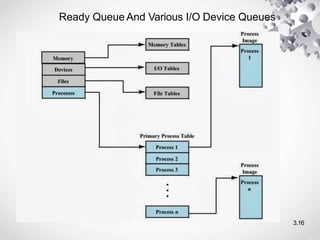

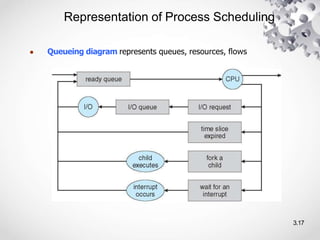

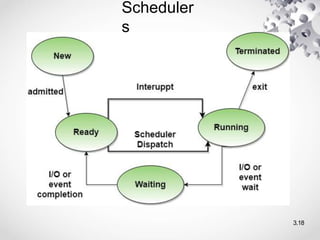

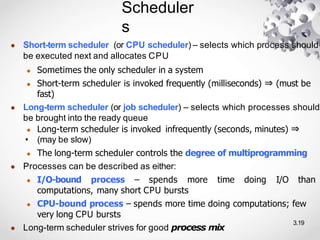

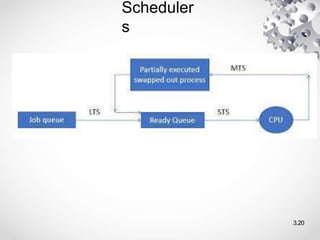

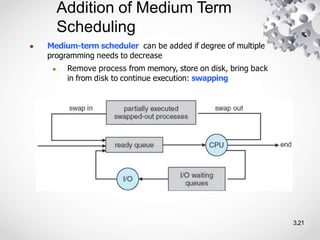

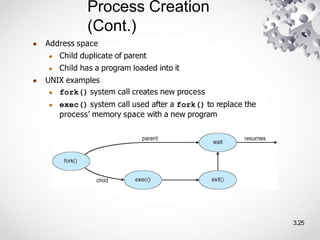

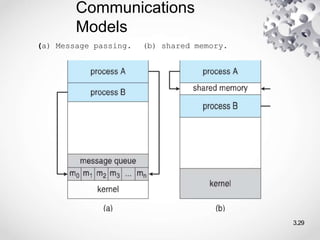

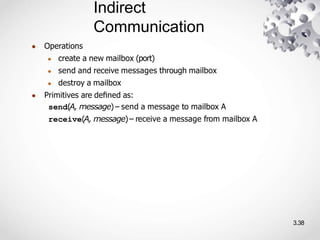

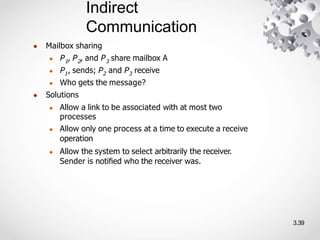

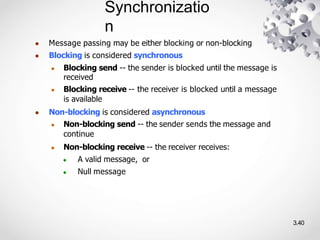

Chapter 3 covers process management: what a process is, the states it moves through, and how it is scheduled. It describes the structure of the process control block (PCB), the different types of schedulers, and interprocess communication through shared memory and message passing. The chapter also covers process creation and termination, and distinguishes independent processes from cooperating processes, which must communicate with one another.
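
As a rough illustration of three of these topics (process creation, message passing, and termination), the sketch below forks a child process, has it send one message to its parent through a pipe, and then lets the parent collect the child's exit status. It assumes a POSIX system where `os.fork` is available, and the helper name `run_child_and_collect` is hypothetical, chosen only for this example. It is not taken from the chapter's slides.

```python
import os


def run_child_and_collect(message: bytes, exit_code: int = 0):
    """Fork a child that sends `message` over a pipe, then reap the child.

    Returns (bytes received by the parent, child's exit code).
    Assumes a POSIX system and a message small enough for one write.
    """
    read_fd, write_fd = os.pipe()  # one-way channel: child writes, parent reads

    pid = os.fork()  # process creation: returns 0 in the child
    if pid == 0:
        # Child process: send the message, then terminate.
        try:
            os.close(read_fd)  # the child only writes
            os.write(write_fd, message)
            os.close(write_fd)
        finally:
            # Terminate immediately with the requested status,
            # skipping the parent's cleanup handlers.
            os._exit(exit_code)

    # Parent process: receive the message.
    os.close(write_fd)  # the parent only reads; closing lets read() see EOF
    chunks = []
    while True:
        chunk = os.read(read_fd, 4096)
        if not chunk:  # empty read means the child closed its end
            break
        chunks.append(chunk)
    os.close(read_fd)

    # Wait for the child to terminate and collect its status.
    _, status = os.waitpid(pid, 0)
    code = os.WEXITSTATUS(status) if os.WIFEXITED(status) else None
    return b"".join(chunks), code


if __name__ == "__main__":
    data, code = run_child_and_collect(b"hello from child", 3)
    print(data, code)
```

A pipe is used here because it is the simplest form of message passing between a parent and a child. Shared memory would avoid copying the data through the kernel, but the two processes would then have to synchronize their accesses themselves.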