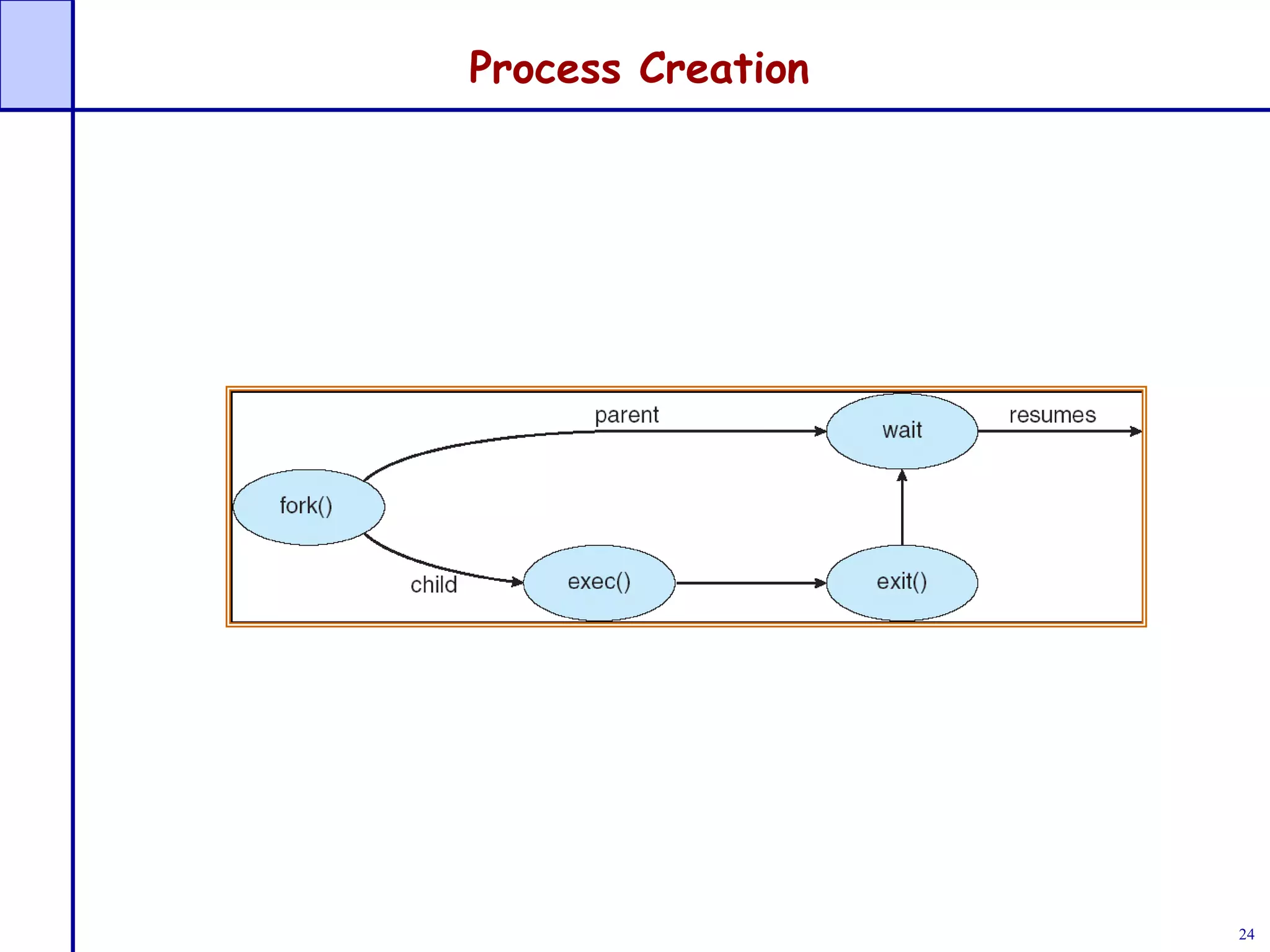

This document discusses processes and process management. It covers key concepts like process states, process scheduling, and inter-process communication. The main points covered are:

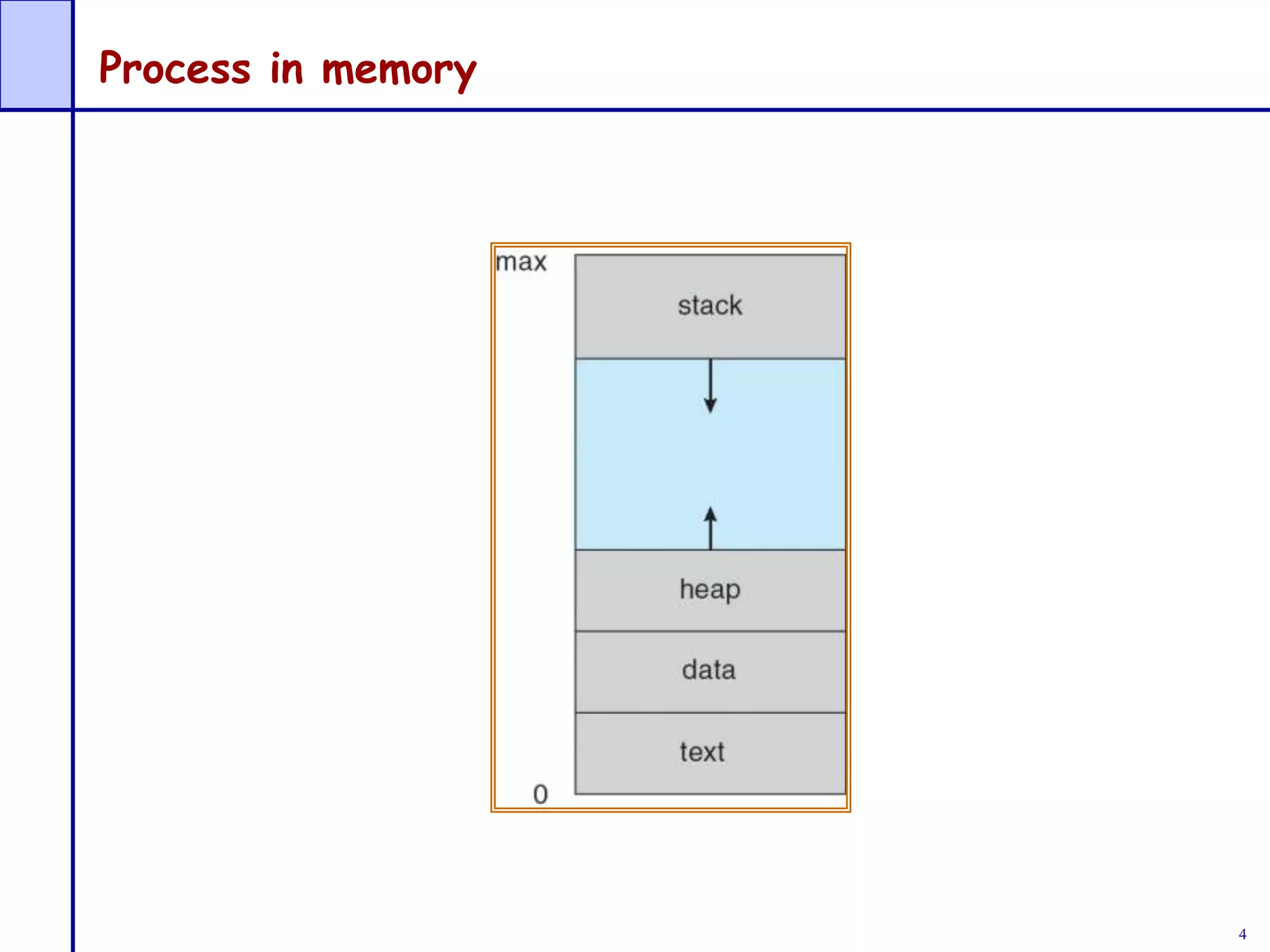

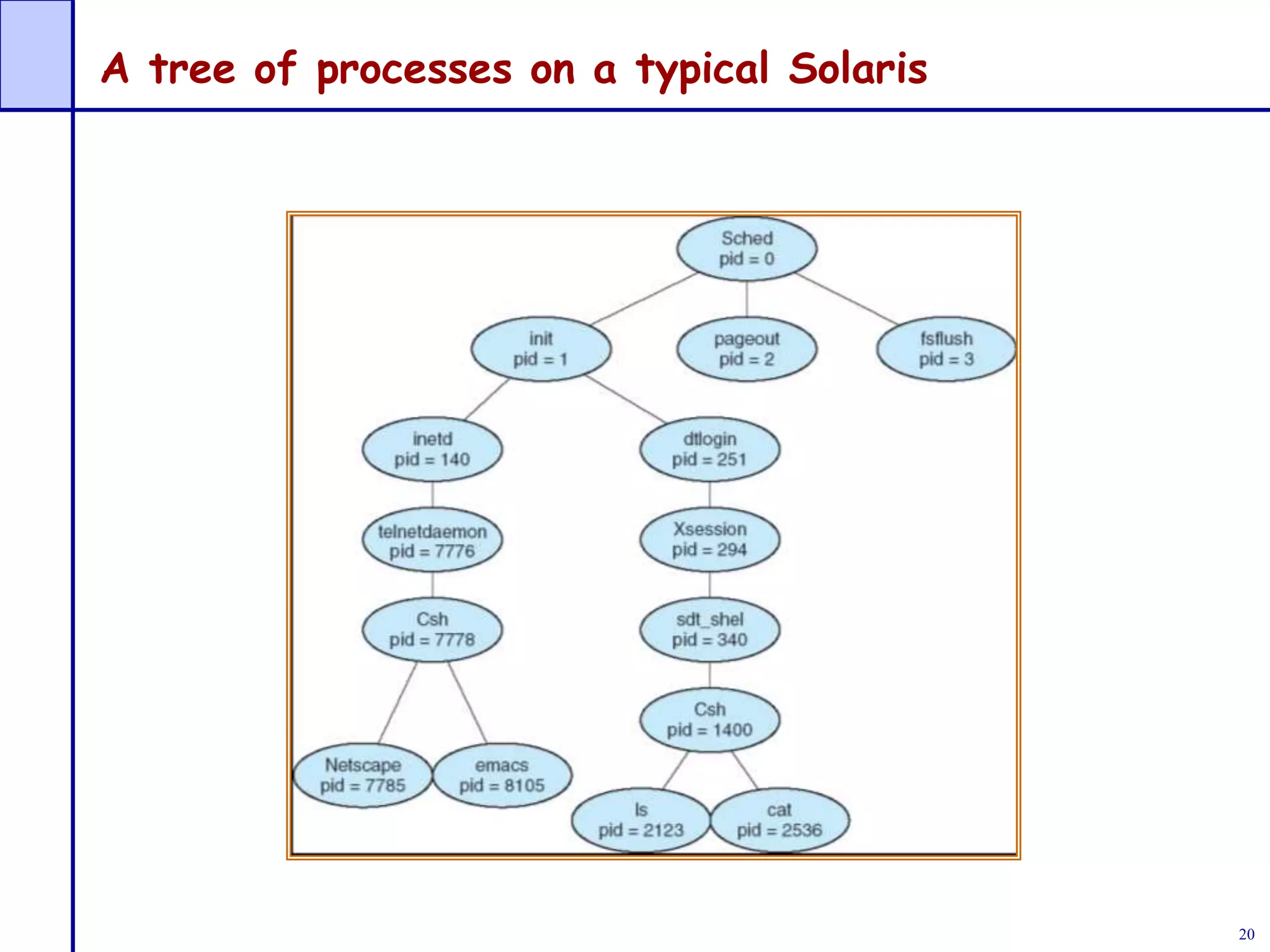

- A process is a program in execution that needs resources like CPU time, memory, and I/O devices. The operating system is responsible for process management tasks like creation, scheduling, and synchronization.

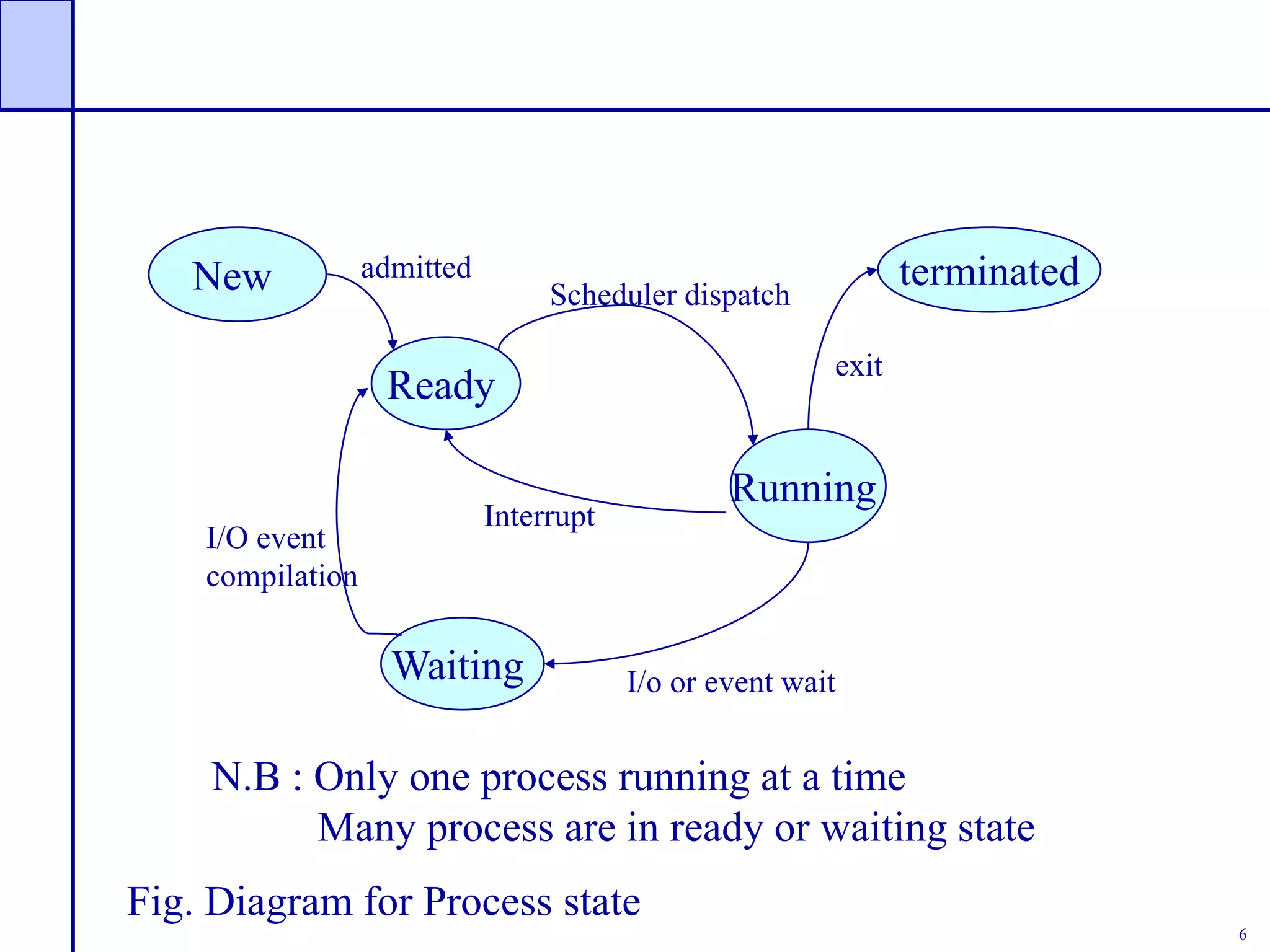

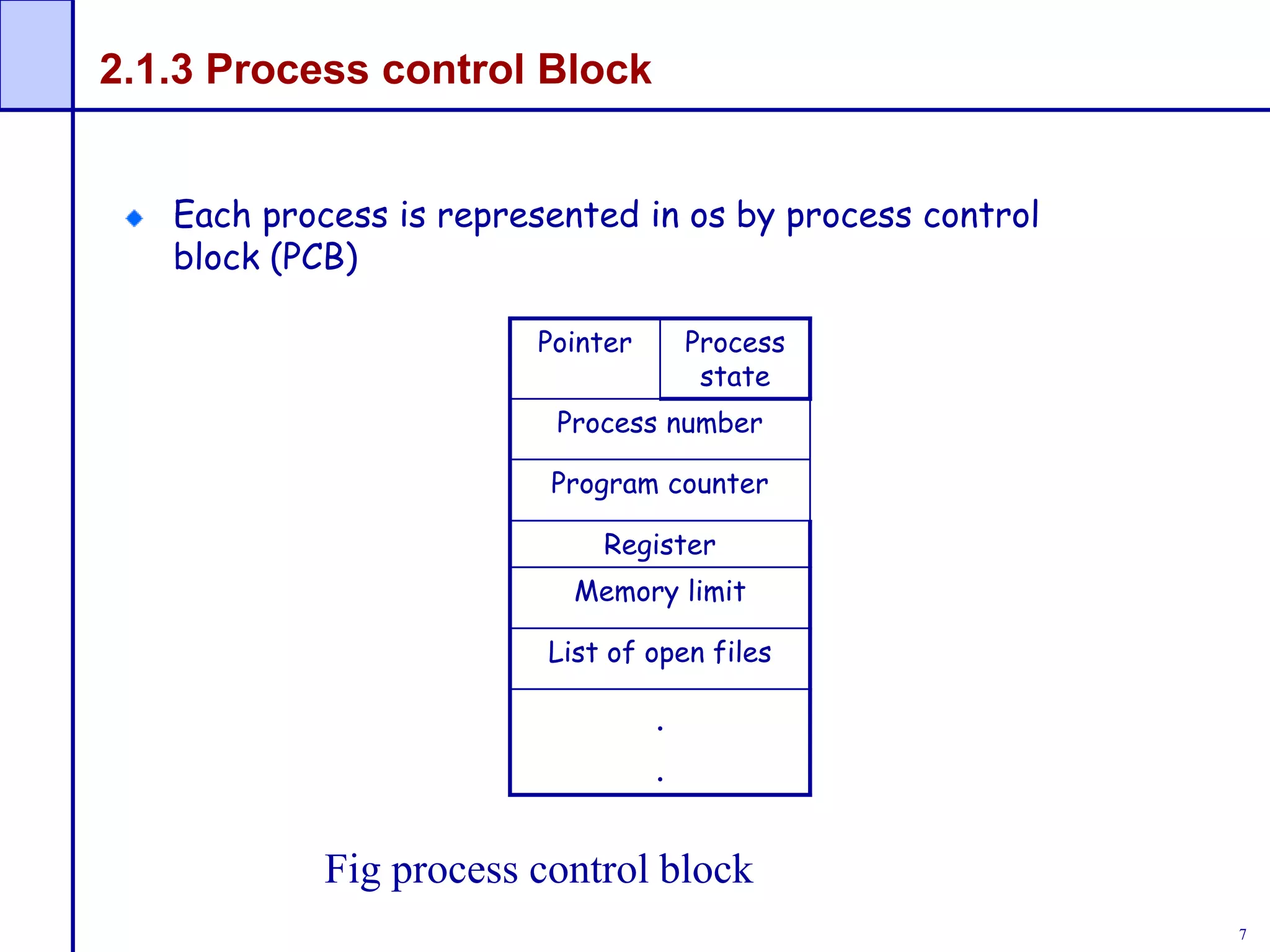

- Processes go through various states like new, ready, running, waiting, and terminated. Each process is represented by a process control block containing its state and resource allocation information.
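The state model and process control block described above can be sketched in a few lines. This is a minimal illustration, not how any real kernel lays out its data: the `PCB` class, its field names, and the `TRANSITIONS` table are all hypothetical, and the allowed transitions assume the classic five-state textbook model.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    NEW = "new"
    READY = "ready"
    RUNNING = "running"
    WAITING = "waiting"
    TERMINATED = "terminated"


# Allowed transitions, assuming the classic five-state textbook model.
TRANSITIONS = {
    State.NEW: {State.READY},
    State.READY: {State.RUNNING},
    State.RUNNING: {State.READY, State.WAITING, State.TERMINATED},
    State.WAITING: {State.READY},
    State.TERMINATED: set(),
}


@dataclass
class PCB:
    """Hypothetical process control block; field names are illustrative."""
    pid: int
    state: State = State.NEW
    program_counter: int = 0
    open_files: list = field(default_factory=list)

    def move_to(self, new_state: State) -> None:
        # Reject any transition the model does not allow.
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"illegal transition {self.state.value} -> {new_state.value}"
            )
        self.state = new_state
```

For example, a process that is admitted, dispatched, and then blocks on I/O would call `move_to(State.READY)`, `move_to(State.RUNNING)`, and `move_to(State.WAITING)` in turn; trying to go from waiting straight back to running raises `ValueError`, because a waiting process must pass through the ready state first.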

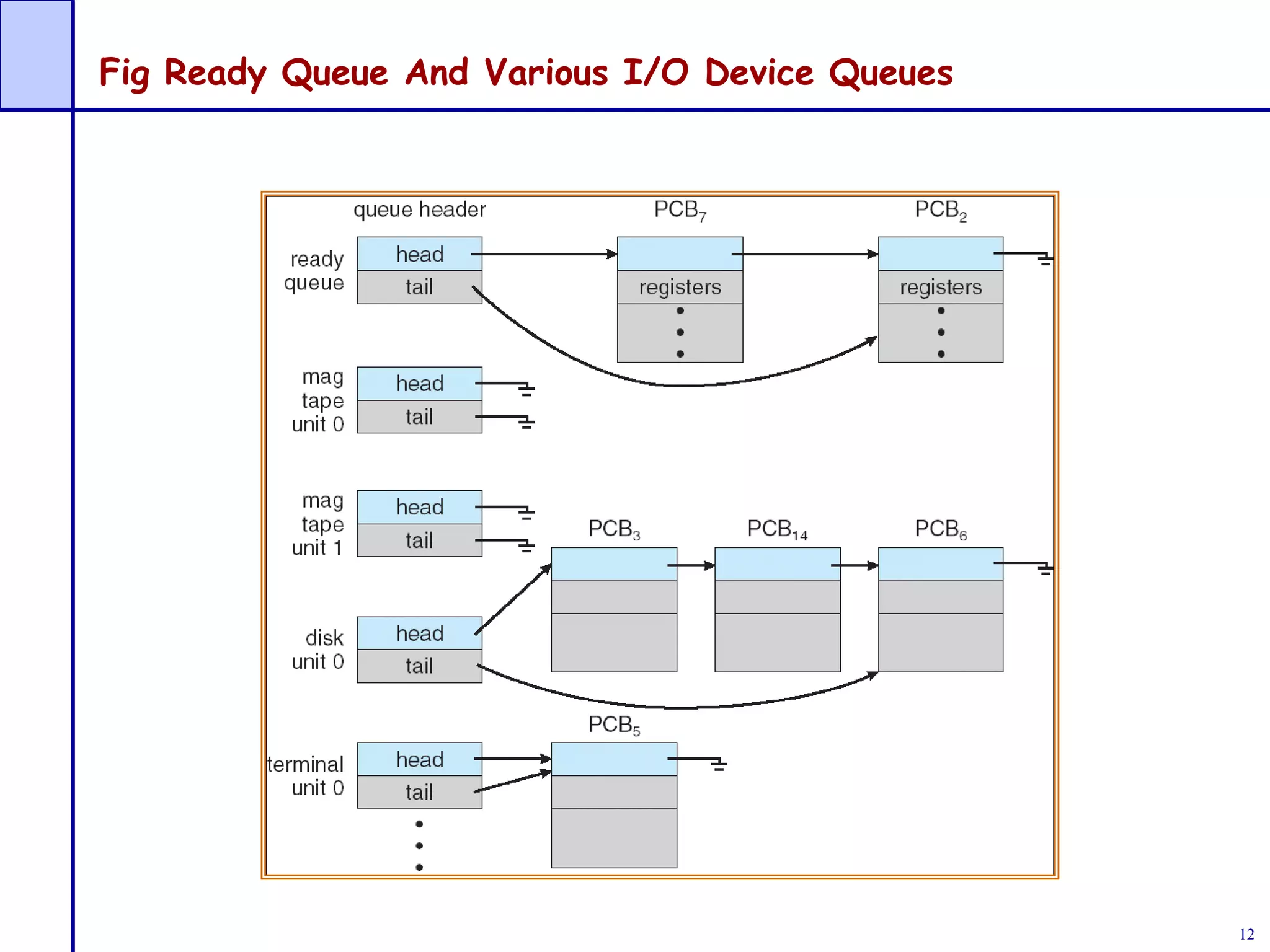

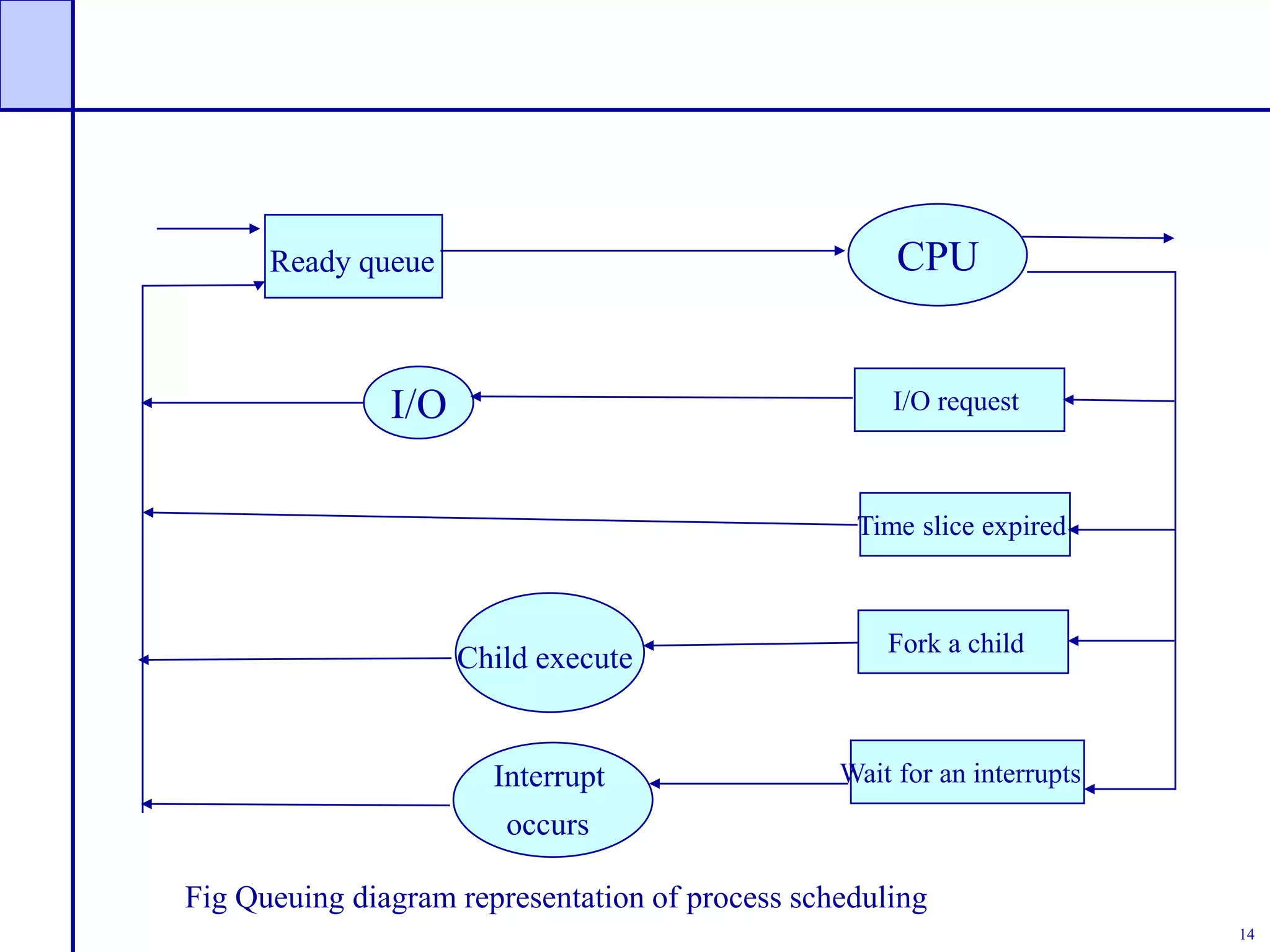

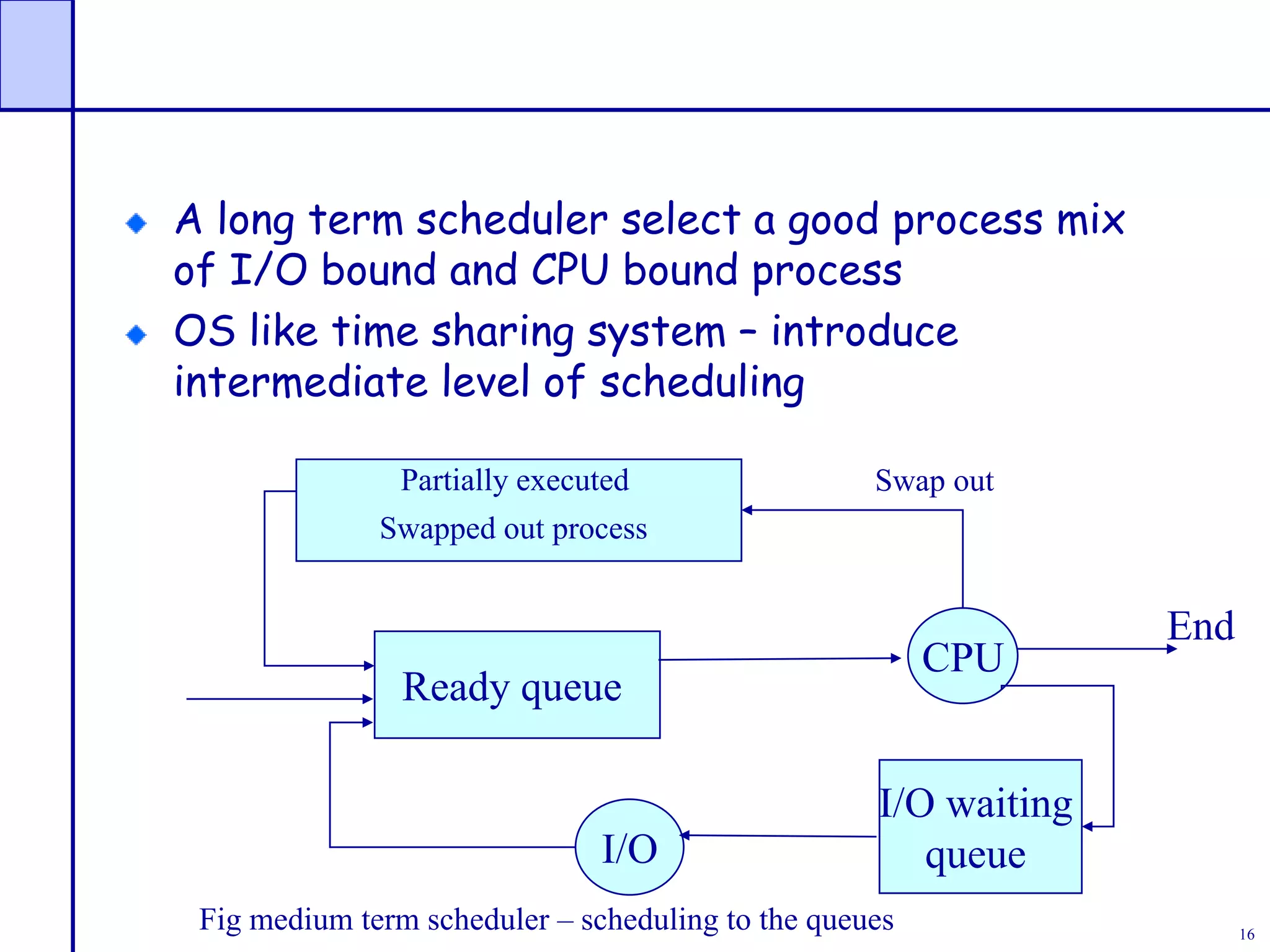

- The CPU scheduler selects a process from the ready queue and allocates the CPU to it. Scheduling algorithms aim to maximize CPU utilization and give processes fair access to the CPU.
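To make the ready-queue idea concrete, here is a small simulation of one possible scheduling policy. The summary does not name a specific algorithm, so round-robin is chosen here purely as an assumption for illustration; the function name `round_robin`, the burst times, and the quantum are all made up.

```python
from collections import deque


def round_robin(bursts: dict[str, int], quantum: int) -> list[tuple[str, int]]:
    """Simulate round-robin scheduling.

    bursts maps a process name to its total CPU time needed.
    Returns (name, finish_time) pairs in completion order.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    ready = deque(bursts.items())  # the ready queue, in arrival order
    clock = 0
    finished = []
    while ready:
        name, remaining = ready.popleft()  # scheduler picks the queue head
        run = min(quantum, remaining)      # run for one time slice at most
        clock += run
        remaining -= run
        if remaining > 0:
            ready.append((name, remaining))  # preempted: back of the queue
        else:
            finished.append((name, clock))   # done: record finish time
    return finished


print(round_robin({"A": 5, "B": 3, "C": 1}, quantum=2))
# prints [('C', 5), ('B', 8), ('A', 9)]
```

Each process gets at most one quantum before the next one runs, which is one simple way to trade some throughput for fairness.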

![59

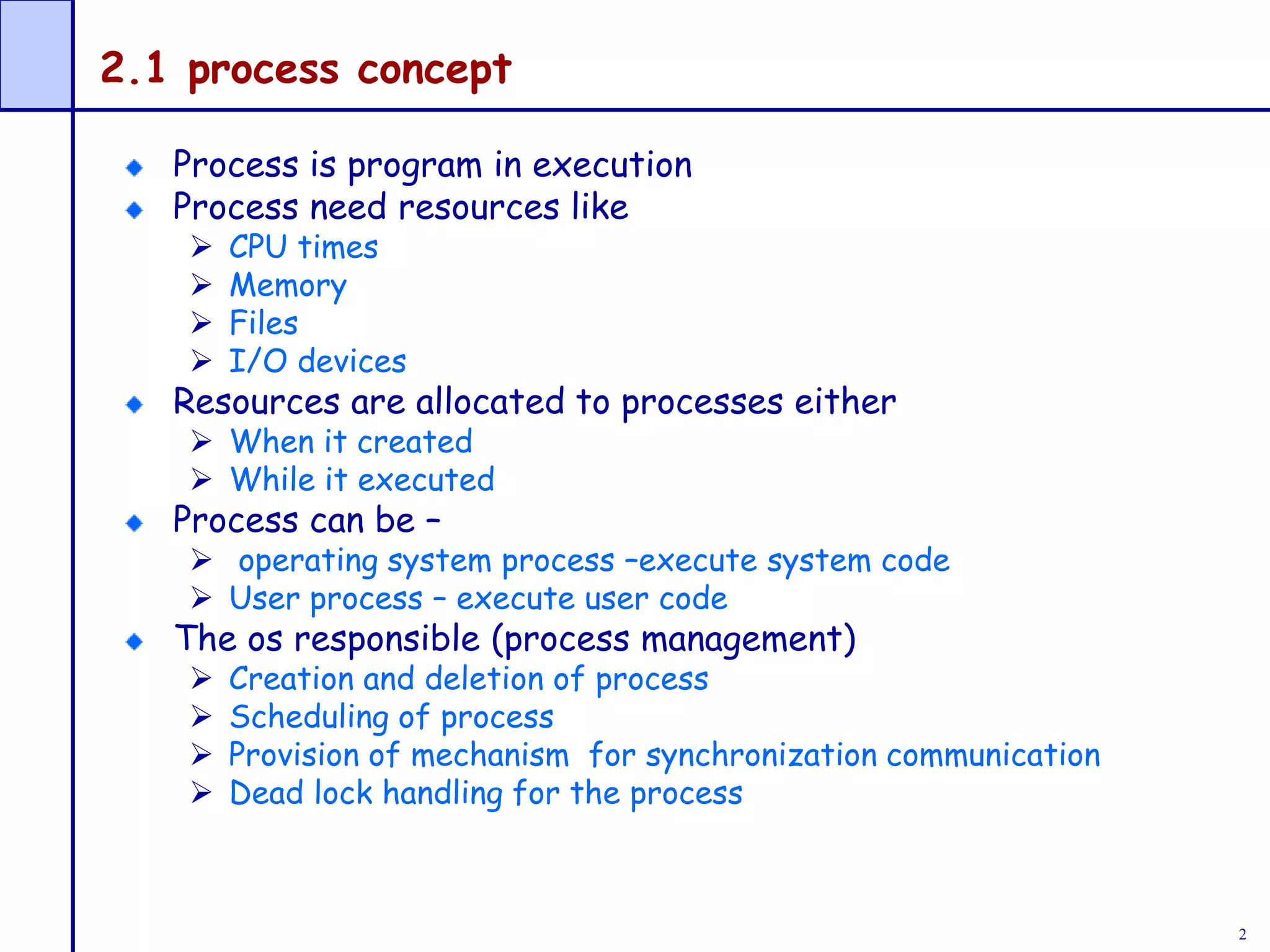

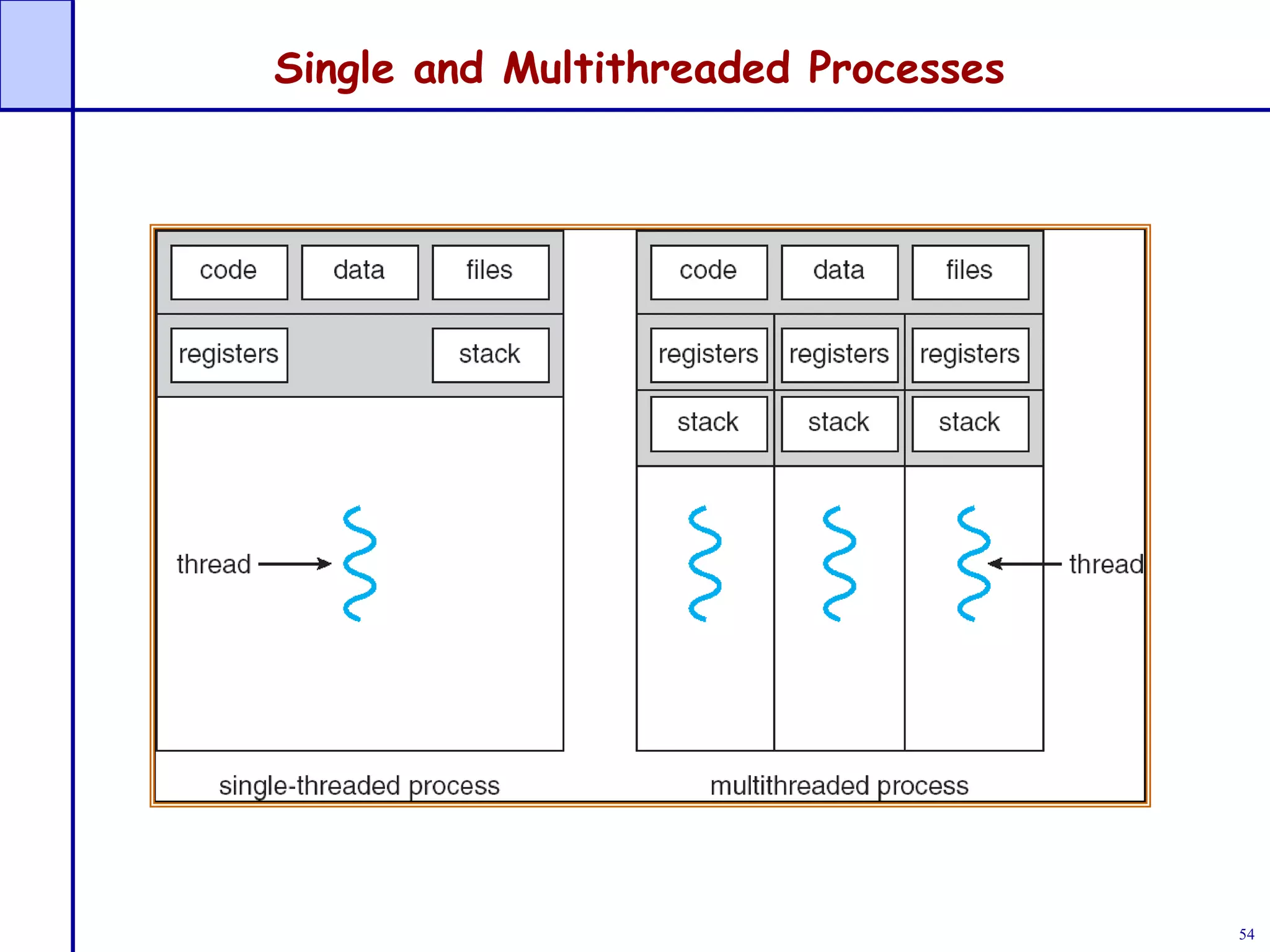

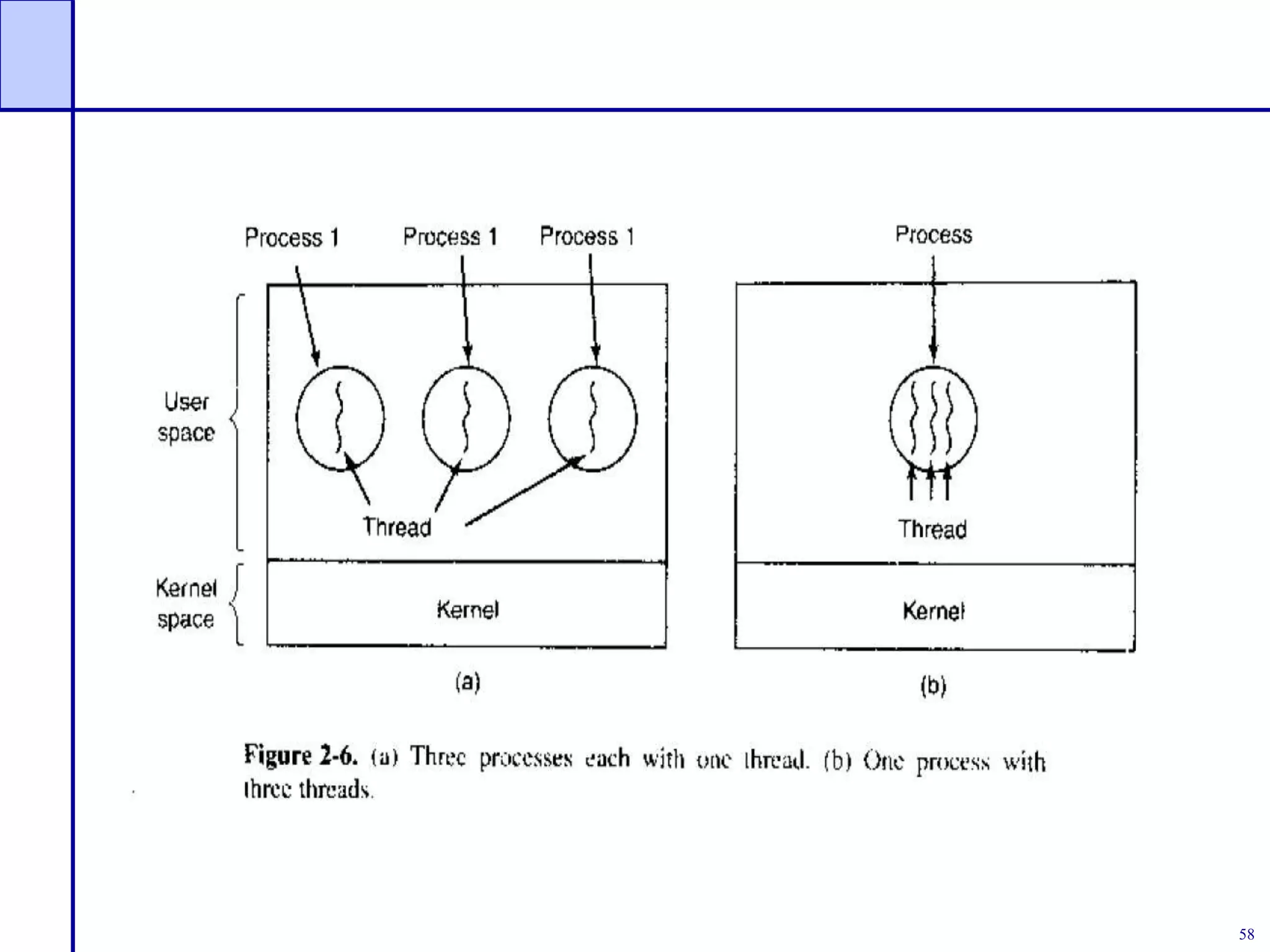

Fig (a) – 3 process – each has its own address

space, and single thread of control (thread)

Fig (b) – single process – with three thread of

control [share same address space ]

Like multi programming , CPU switch rapidly back

and forth among threads

Like process – thread can be in running , blocked ,

ready or terminated state](https://image.slidesharecdn.com/ch2processesandprocessmanagement1-230731055840-90883222/75/Ch2_Processes_and_process_management_1-ppt-59-2048.jpg)
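
The shared address space in Fig. (b) can be illustrated with a short sketch in which three threads of one process update the same variable. The names `counter`, `worker`, and `run_threads` are hypothetical, and the lock is an added assumption: because the threads share memory, unsynchronized updates could interleave.

```python
import threading

# One object in the process's address space, visible to every thread.
counter = {"value": 0}
lock = threading.Lock()


def worker(increments: int) -> None:
    for _ in range(increments):
        with lock:  # serialize updates to the shared counter
            counter["value"] += 1


def run_threads(n_threads: int = 3, increments: int = 1000) -> int:
    counter["value"] = 0
    threads = [
        threading.Thread(target=worker, args=(increments,))
        for _ in range(n_threads)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()  # wait for every thread to terminate
    return counter["value"]
```

Calling `run_threads(3, 1000)` returns 3000, since all three threads write to the same memory. Three separate processes, as in Fig. (a), would each have their own copy of `counter` and would need an inter-process communication mechanism to combine their results.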