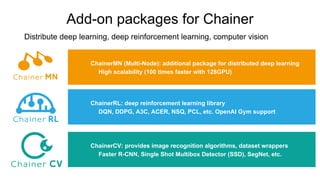

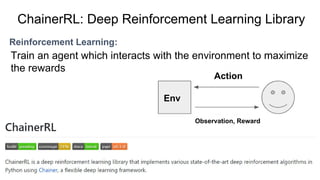

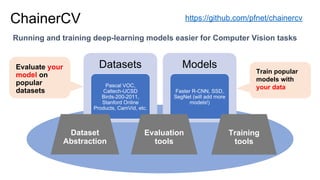

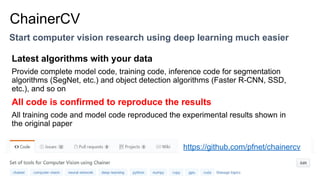

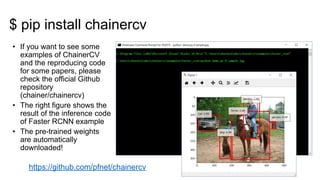

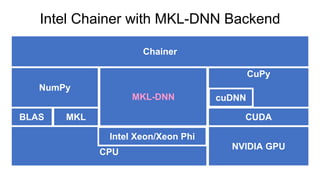

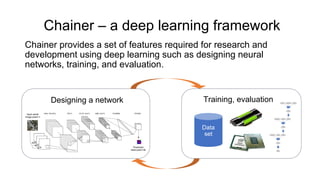

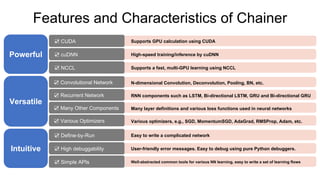

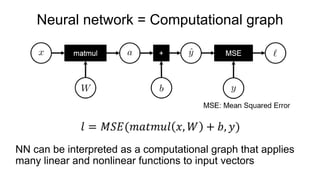

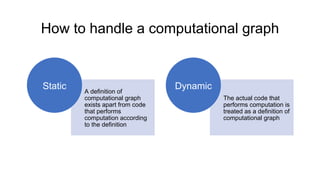

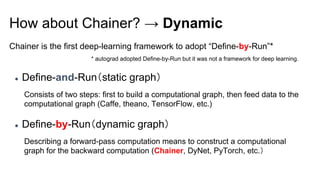

Chainer is a flexible deep learning framework that supports a wide variety of neural network architectures and GPU acceleration. Its define-by-run approach builds the computation graph on the fly while the forward pass executes. Networks can therefore use ordinary Python control flow (loops, conditionals) and can be debugged with standard Python tools. Together with a broad set of network layers and optimizers, this makes models intuitive to write and debug. Companion libraries add distributed training (ChainerMN) and computer vision (ChainerCV), so Chainer suits many kinds of deep learning applications.
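The following is a minimal pure-Python sketch of the define-by-run idea, written without Chainer. Each operation records its inputs and local derivatives at the moment it runs. The graph that `backward()` walks is therefore exactly the one the Python code executed, including however many loop iterations actually ran.

The `Var` class and the `power` function are hypothetical illustrations. In Chainer itself, `chainer.Variable` plays this role and supports far more operations.

```python
class Var:
    """A scalar that records how it was computed (hypothetical, not chainer.Variable)."""

    def __init__(self, value, parents=()):
        self.value = value
        self.grad = 0.0
        # (parent, local_derivative) pairs, recorded when this node is created
        self.parents = parents

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

    def backward(self):
        # Order the recorded graph so every node comes after its parents
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        # Apply the chain rule from the output back to the inputs
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in node.parents:
                parent.grad += local * node.grad


def power(x, n):
    # The loop runs n - 1 times, so each call records a different graph
    y = x
    for _ in range(n - 1):
        y = y * x
    return y


x = Var(3.0)
y = power(x, 3)
y.backward()
print(y.value, x.grad)  # 27.0 27.0, since d(x**3)/dx = 3 * x**2 = 27
```

Calling `power(x, 4)` instead records one more multiplication node. No graph has to be declared up front, which is the property that makes define-by-run models easy to step through in a debugger.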

![Training models

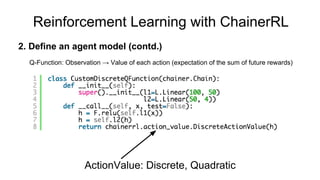

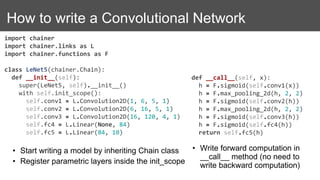

model = LeNet5()

model = L.Classifier(model)

# Dataset is a list! ([] to access, having __len__)

dataset = [(x1, t1), (x2, t2), ...]

# iterator to return a mini-batch retrieved from dataset

it = iterators.SerialIterator(dataset, batchsize=32)

# Optimization methods (you can easily try various methods by changing SGD to

# MomentumSGD, Adam, RMSprop, AdaGrad, etc.)

opt = optimizers.SGD(lr=0.01)

opt.setup(model)

updater = training.StandardUpdater(it, opt, device=0) # device=-1 if you use CPU

trainer = training.Trainer(updater, stop_trigger=(100, 'epoch'))

trainer.run()

For more details, refer to official examples: https://github.com/pfnet/chainer/tree/master/examples](https://image.slidesharecdn.com/extintroductiontochainer-170728005749/85/Introduction-to-Chainer-10-320.jpg)
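The `trainer.run()` call hides the actual training loop. The rough, framework-free sketch below shows what the pieces do together:

- The iterator hands out shuffled mini-batches.
- The optimizer applies the SGD update rule.
- Each loop iteration plays the role of one updater step.
- The epoch check plays the role of `stop_trigger=(100, 'epoch')`.

The sketch rests on two assumptions. First, a toy linear-regression model with squared error stands in for `LeNet5` wrapped in `L.Classifier`. Second, `ToySerialIterator`, `LinearModel`, `ToySGD` and `train` are hypothetical names, not Chainer APIs.

```python
import random


class ToySerialIterator:
    """Serves mini-batches from a list-like dataset, reshuffling every epoch.

    Hypothetical stand-in illustrating the role of iterators.SerialIterator.
    """

    def __init__(self, dataset, batch_size, shuffle=True, seed=0):
        self.dataset = dataset
        self.batch_size = batch_size
        self.shuffle = shuffle
        self.rng = random.Random(seed)
        self.epoch = 0  # number of completed passes over the dataset
        self._start_epoch()

    def _start_epoch(self):
        self._order = list(range(len(self.dataset)))
        if self.shuffle:
            self.rng.shuffle(self._order)
        self._pos = 0

    def next_batch(self):
        idx = self._order[self._pos:self._pos + self.batch_size]
        self._pos += self.batch_size
        if self._pos >= len(self.dataset):
            # Finished one pass: count the epoch and prepare a new order
            self.epoch += 1
            self._start_epoch()
        # Only [] indexing and len() are needed from the dataset
        return [self.dataset[i] for i in idx]


class LinearModel:
    """Toy model y = w * x + b with mean squared error (swapped in for LeNet5)."""

    def __init__(self):
        self.w = 0.0
        self.b = 0.0

    def loss_and_grads(self, batch):
        n = len(batch)
        loss = grad_w = grad_b = 0.0
        for x, t in batch:
            err = self.w * x + self.b - t
            loss += err * err / n
            grad_w += 2.0 * err * x / n
            grad_b += 2.0 * err / n
        return loss, {"w": grad_w, "b": grad_b}


class ToySGD:
    """Plain SGD: param <- param - lr * grad."""

    def __init__(self, lr=0.01):
        self.lr = lr

    def update(self, model, grads):
        for name, g in grads.items():
            setattr(model, name, getattr(model, name) - self.lr * g)


def train(model, iterator, optimizer, max_epochs):
    # Each loop iteration is one "updater" step; the while condition acts
    # as an epoch-based stop trigger
    while iterator.epoch < max_epochs:
        batch = iterator.next_batch()
        _, grads = model.loss_and_grads(batch)
        optimizer.update(model, grads)
    return model


# Noise-free data from t = 3x + 1, so SGD should recover w = 3, b = 1
dataset = [(k / 10, 3.0 * (k / 10) + 1.0) for k in range(-20, 21)]
model = train(LinearModel(), ToySerialIterator(dataset, batch_size=8),
              ToySGD(lr=0.1), max_epochs=100)
print(round(model.w, 3), round(model.b, 3))
```

Swapping `ToySGD` for another update rule only changes the optimizer object passed to `train`. This mirrors the slide's suggestion of replacing `SGD` with `MomentumSGD` or `Adam` while leaving the rest of the pipeline untouched.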