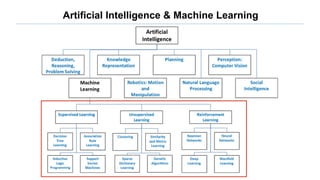

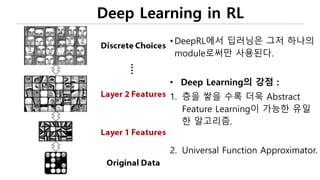

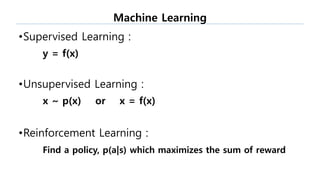

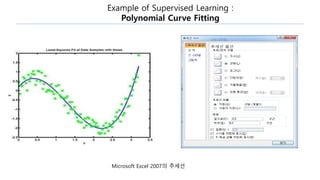

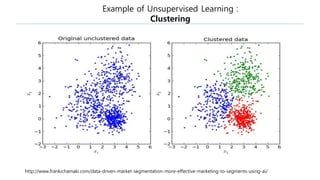

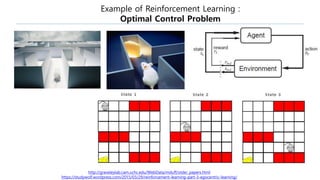

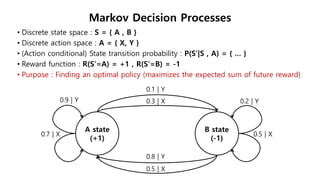

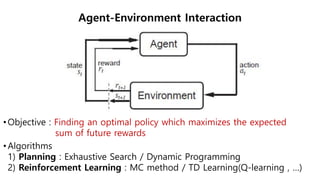

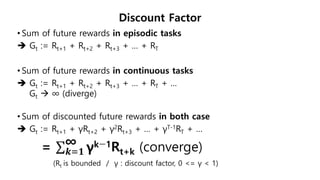

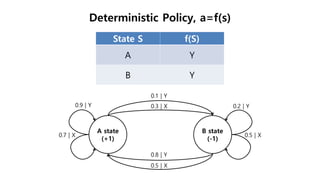

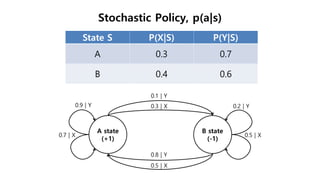

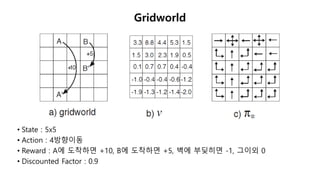

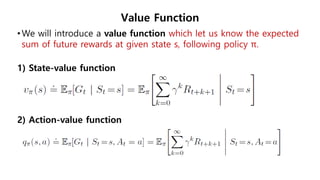

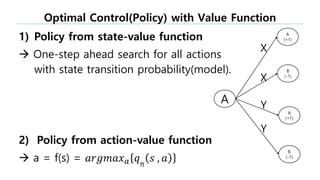

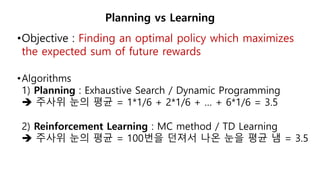

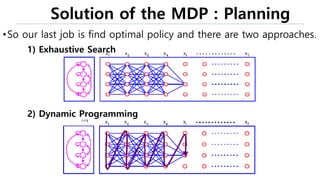

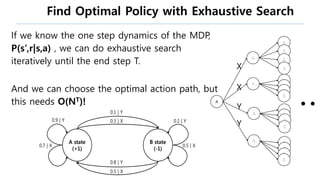

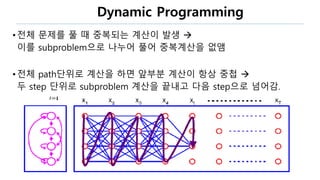

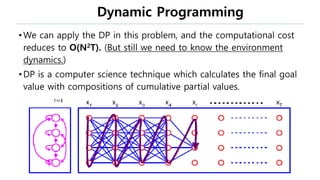

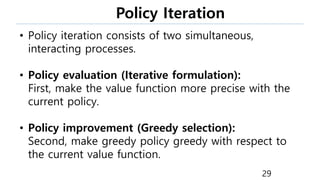

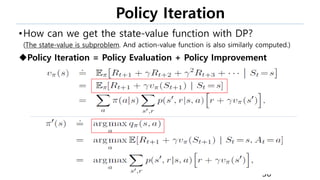

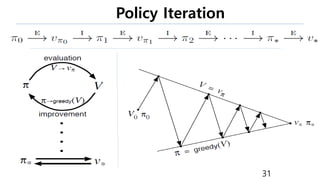

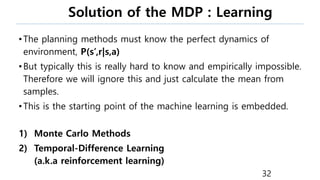

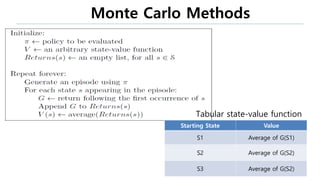

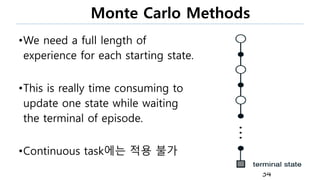

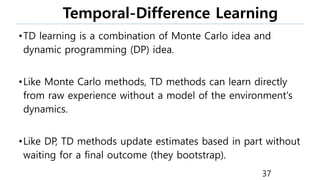

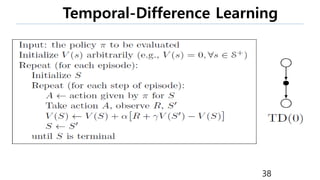

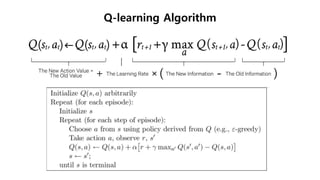

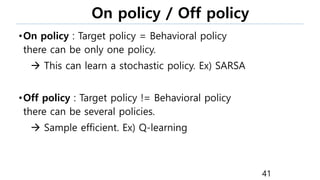

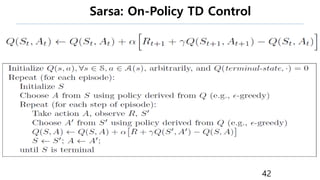

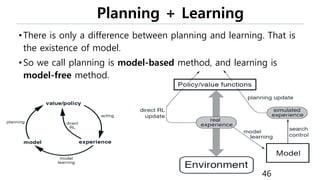

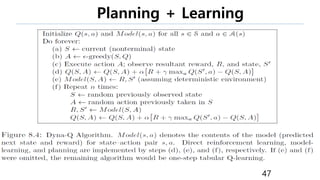

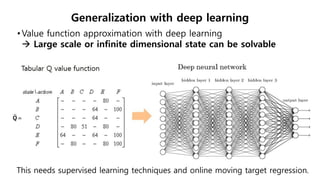

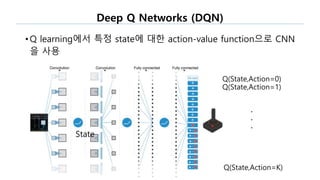

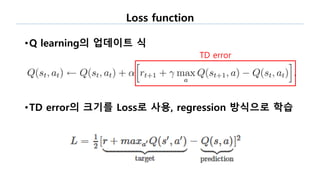

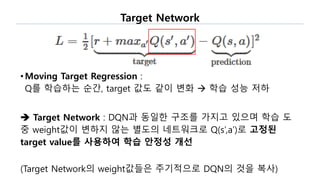

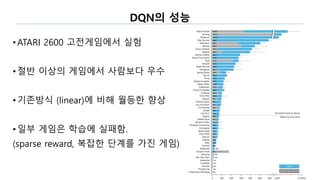

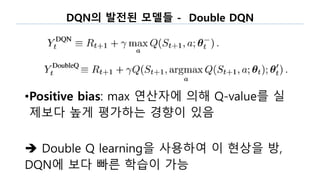

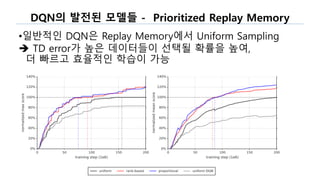

This deck introduces reinforcement learning (RL) as a branch of artificial intelligence and distinguishes it from supervised and unsupervised learning. It covers Markov decision processes, value functions, and the use of deep learning in RL, with a focus on Q-learning and deep Q-networks (DQN). It also covers extensions to DQN, including double DQN and prioritized experience replay, which aim to make learning more efficient.
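As a rough illustration of the Q-learning and double DQN ideas named above, the following minimal sketch uses plain Python lists as Q-tables instead of neural networks. The function names, the table layout (`q[state][action]`), and the default values for `alpha` and `gamma` are assumptions made for this example and do not come from the slides.

```python
# Hypothetical helpers for illustration only; names and defaults are assumptions.

def q_learning_update(q, state, action, reward, next_state, done,
                      alpha=0.1, gamma=0.99):
    """Apply one tabular Q-learning update in place and return the TD error."""
    # Bootstrap from the greedy value of the next state unless the episode ended.
    next_value = 0.0 if done else max(q[next_state])
    td_error = reward + gamma * next_value - q[state][action]
    q[state][action] += alpha * td_error
    return td_error


def double_q_target(q_online, q_target, reward, next_state, done, gamma=0.99):
    """Double-DQN-style target: pick the action with one table, evaluate it with the other."""
    if done:
        return reward
    values = q_online[next_state]
    # Action selection uses the online estimates...
    best_action = max(range(len(values)), key=values.__getitem__)
    # ...while the value of that action comes from the target estimates.
    return reward + gamma * q_target[next_state][best_action]


# Two states, two actions each.
q = [[0.0, 0.0], [1.0, 2.0]]
td = q_learning_update(q, state=0, action=1, reward=1.0,
                       next_state=1, done=False, alpha=0.5, gamma=0.9)

q_target = [[0.0, 0.0], [5.0, 0.5]]
target = double_q_target(q, q_target, reward=1.0, next_state=1,
                         done=False, gamma=0.9)
```

In this toy setup the double-Q target evaluates the action chosen by the online table (action 1) using the target table, so it does not pick up the target table's larger estimate for action 0. Decoupling selection from evaluation in this way is how double Q-learning reduces overestimation.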

References

[1] Sutton, Richard S., and Andrew G. Barto. Reinforcement Learning: An Introduction. MIT Press, 1998.

[2] Mnih, Volodymyr, et al. "Human-level control through deep reinforcement learning." Nature 518.7540 (2015): 529-533.

[3] Van Hasselt, Hado, Arthur Guez, and David Silver. "Deep Reinforcement Learning with Double Q-Learning." AAAI. 2016.

[4] Wang, Ziyu, et al. "Dueling network architectures for deep reinforcement learning." arXiv preprint arXiv:1511.06581 (2015).

[5] Schaul, Tom, et al. "Prioritized experience replay." arXiv preprint arXiv:1511.05952 (2015).