I presented the following six papers in our seminar.

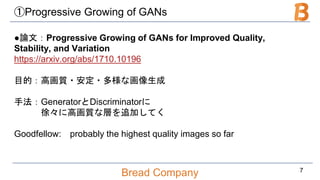

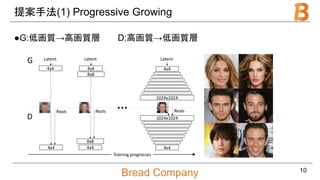

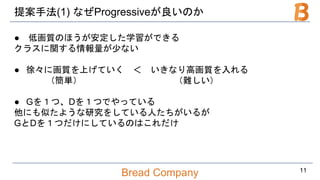

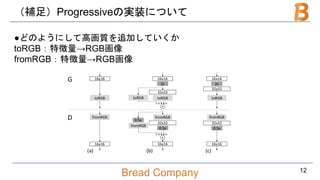

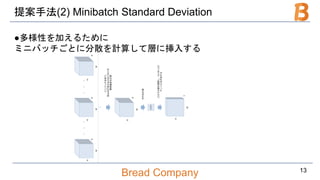

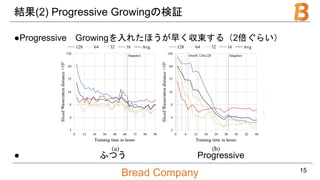

Progressive Growing of GANs for Improved Quality, Stability, and Variation

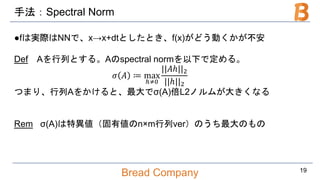

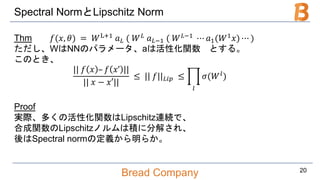

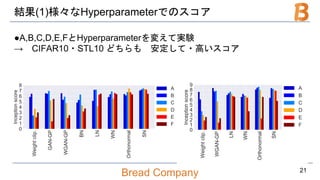

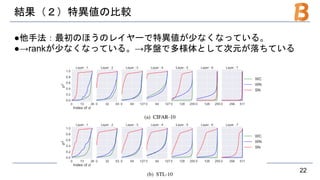

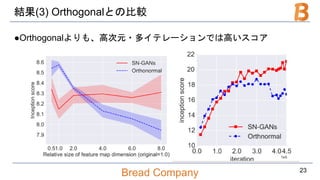

Spectral Normalization for Generative Adversarial Networks

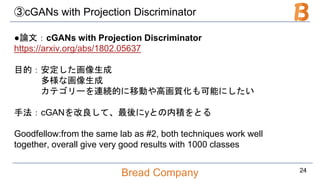

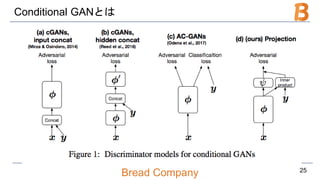

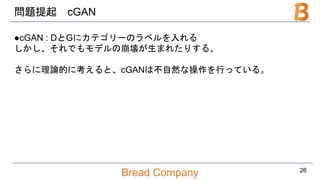

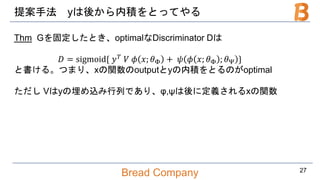

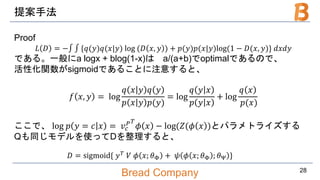

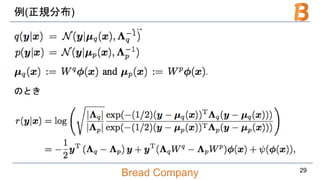

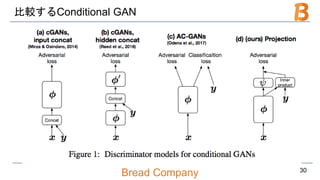

cGANs with Projection Discriminator

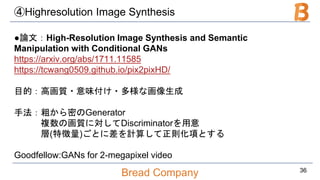

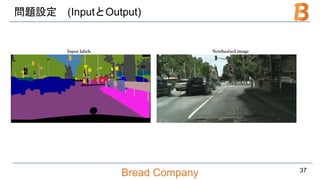

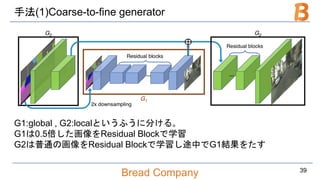

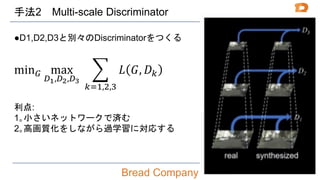

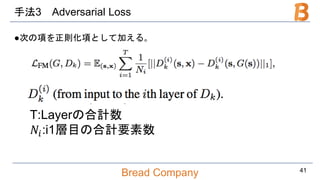

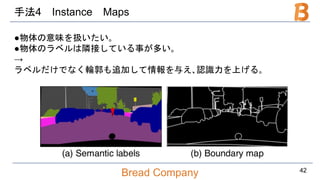

High-Resolution Image Synthesis and Semantic Manipulation with Conditional GANs

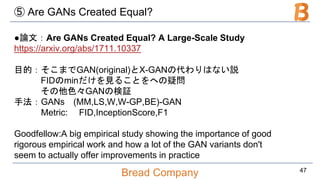

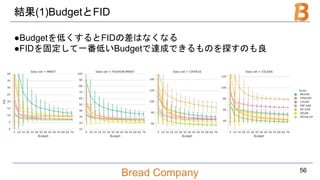

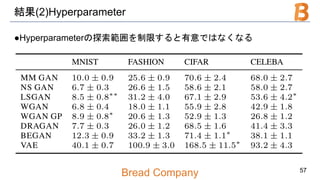

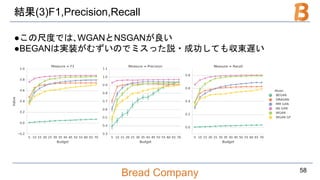

Are GANs Created Equal? A Large-Scale Study

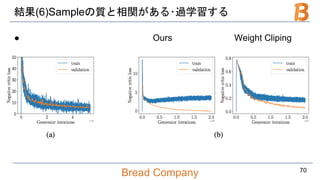

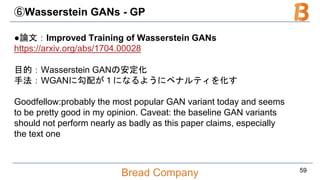

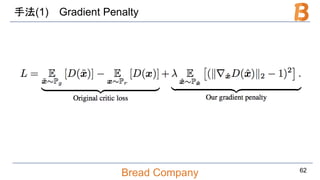

Improved Training of Wasserstein GANs

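![Bread Company, slide 63: why WGAN-GP works](https://image.slidesharecdn.com/bread0311open-180311135634/85/Goodfellow-GAN-63-320.jpg)

Method (bonus): why does WGAN-GP work?

Proposition. Let $X$ be a compact metric space and let $P_r$, $P_g$ be distributions on $X$. Let $f^*$ be an optimal solution of

$$\max_{\|f\|_L \le 1} \; \mathbb{E}_{y \sim P_r}[f(y)] - \mathbb{E}_{x \sim P_g}[f(x)].$$

Then

$$P_r\left[\|\nabla f^*\| = 1\right] = P_g\left[\|\nabla f^*\| = 1\right] = 1.$$

∴ The gradient of the optimal $f$ has norm 1, so impose a penalty whenever the gradient norm deviates from 1.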
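The conclusion of the slide above, penalizing the critic whenever its gradient norm deviates from 1, can be sketched in a few lines. This is only a minimal illustration under stated assumptions, not the paper's implementation. The toy `critic`, the helper names, and the finite-difference gradient are hypothetical stand-ins for a neural network and automatic differentiation. The random interpolation between real and generated samples and the default weight `lam=10.0` follow the WGAN-GP paper.

```python
import math
import random


def critic(x):
    """Hypothetical toy critic f(x) = sum_i sin(x_i); stands in for a neural network."""
    return sum(math.sin(v) for v in x)


def numerical_gradient(f, x, h=1e-5):
    """Central finite-difference estimate of the gradient of f at x.

    Assumption: a real implementation would use automatic differentiation instead.
    """
    grad = []
    for i in range(len(x)):
        plus = list(x)
        minus = list(x)
        plus[i] += h
        minus[i] -= h
        grad.append((f(plus) - f(minus)) / (2 * h))
    return grad


def gradient_penalty(f, real_batch, fake_batch, lam=10.0, rng=random):
    """Average of lam * (||grad f(x_hat)|| - 1)^2 over random interpolates x_hat."""
    total = 0.0
    for x_real, x_fake in zip(real_batch, fake_batch):
        # x_hat is sampled uniformly on the segment between a real and a generated sample.
        eps = rng.random()
        x_hat = [eps * r + (1 - eps) * g for r, g in zip(x_real, x_fake)]
        grad = numerical_gradient(f, x_hat)
        norm = math.sqrt(sum(g * g for g in grad))
        # Two-sided penalty: gradient norms above and below 1 are both penalized.
        total += lam * (norm - 1.0) ** 2
    return total / len(real_batch)
```

For example, a critic whose gradient norm is 1 everywhere, such as `lambda x: x[0]`, receives a penalty of approximately 0, while `lambda x: 2.0 * x[0]` has gradient norm 2 and receives approximately `lam * (2 - 1) ** 2 = 10`. In training, this term would be added to the critic loss.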