Recommended

PPTX

PDF

自然言語処理に適した ニューラルネットのフレームワーク - - - DyNet - - -

PDF

深層学習と確率プログラミングを融合したEdwardについて

PDF

Casual learning-machinelearningwithexcelno8

PDF

[DL輪読会]Learning to Act by Predicting the Future

PPTX

Multi-agent Inverse reinforcement learning: 相互作用する行動主体の報酬推定

PPTX

AI入門「第3回:数学が苦手でも作って使えるKerasディープラーニング」【旧版】※新版あります

PPTX

PPTX

AI入門「第4回:ディープラーニングの中身を覗いて、育ちを観察する」

PDF

Casual learning machine_learning_with_excel_no7

PDF

Rainbow: Combining Improvements in Deep Reinforcement Learning (AAAI2018 unde...

PDF

PDF

PPTX

PDF

PDF

PPTX

【macOSにも対応】AI入門「第3回:数学が苦手でも作って使えるKerasディープラーニング」

PPTX

[DL輪読会]Deep High-Resolution Representation Learning for Human Pose Estimation

PDF

PDF

PDF

PCSJ/IMPS2021 講演資料:深層画像圧縮からAIの生成モデルへ (VAEの定量的な理論解明)

PPTX

PDF

[DL輪読会]Wasserstein GAN/Towards Principled Methods for Training Generative Adv...

PDF

PDF

PPTX

[DL輪読会] GAN系の研究まとめ (NIPS2016とICLR2016が中心)

PPTX

PDF

PDF

[DL輪読会]Convolutional Conditional Neural Processesと Neural Processes Familyの紹介

PPTX

More Related Content

PPTX

PDF

自然言語処理に適した ニューラルネットのフレームワーク - - - DyNet - - -

PDF

深層学習と確率プログラミングを融合したEdwardについて

PDF

Casual learning-machinelearningwithexcelno8

PDF

[DL輪読会]Learning to Act by Predicting the Future

PPTX

Multi-agent Inverse reinforcement learning: 相互作用する行動主体の報酬推定

PPTX

AI入門「第3回:数学が苦手でも作って使えるKerasディープラーニング」【旧版】※新版あります

PPTX

What's hot

PPTX

AI入門「第4回:ディープラーニングの中身を覗いて、育ちを観察する」

PDF

Casual learning machine_learning_with_excel_no7

PDF

Rainbow: Combining Improvements in Deep Reinforcement Learning (AAAI2018 unde...

PDF

PDF

PPTX

PDF

PDF

PPTX

【macOSにも対応】AI入門「第3回:数学が苦手でも作って使えるKerasディープラーニング」

PPTX

[DL輪読会]Deep High-Resolution Representation Learning for Human Pose Estimation

PDF

Similar to 0728 論文紹介第三回

PDF

PDF

PCSJ/IMPS2021 講演資料:深層画像圧縮からAIの生成モデルへ (VAEの定量的な理論解明)

PPTX

PDF

[DL輪読会]Wasserstein GAN/Towards Principled Methods for Training Generative Adv...

PDF

PDF

PPTX

[DL輪読会] GAN系の研究まとめ (NIPS2016とICLR2016が中心)

PPTX

PDF

PDF

[DL輪読会]Convolutional Conditional Neural Processesと Neural Processes Familyの紹介

PPTX

PDF

PDF

[DL輪読会]Scalable Training of Inference Networks for Gaussian-Process Models

PDF

Generative adversarial networks

PDF

Laplacian Pyramid of Generative Adversarial Networks (LAPGAN) - NIPS2015読み会 #...

PDF

Generative adversarial nets

PDF

SSII2022 [TS3] コンテンツ制作を支援する機械学習技術〜 イラストレーションやデザインの基礎から最新鋭の技術まで 〜

PDF

Generative Adversarial Networks (GAN) の学習方法進展・画像生成・教師なし画像変換

PDF

【参考文献追加】20180115_東大医学部機能生物学セミナー_深層学習の最前線とこれから_岡野原大輔

PDF

20180115_東大医学部機能生物学セミナー_深層学習の最前線とこれから_岡野原大輔

0728 論文紹介第三回 1. 2. 3. 紹介する論文について

Generative Adversarial Nets

Advances in Neural Information Processing Systems 27 (NIPS 2014)

Ian J. Goodfellow, Jean Pouget-Abadie, Mehdi Mirza,

Bing Xu, David Warde-Farley, Sherjil Ozair,

Aaron Courville, Yoshua Bengio

• 競合する二つのネットワークの学習

• 「ピカソのような」生成モデルと「前例のない」識別モデル概要

背景

• これまでの機械学習では大量のデータが必要

• 「膨大な手作業の可能性」を解消したい

“The most interesting idea in the last 10 years in ML, in my opinion.”

–Yann LeCun

4. 5. 従来の生成モデル

• Restricted Boltzmann Machines (RBMs)

Deep Boltzmann Machines (DBMs)

• Deep Belief Networks(DBN)

• Deep Learningにおける事前学習法の一種

• 確率分布をMarkov chain Monte Carlo法(MCMC)によって推定する生成モデル

• 単純な問題以外では扱いづらい

• Deep Learningのはじまり

• 無向モデルと有向モデルの両方で計算上の困難の存在

6. 従来の生成モデル

• Generative Stochastic Network(GSN)

• 目的の確率分布からサンプルを生成する学習を行うモデルの一種

• 扱うデータはMarkov Chainを前提とする

• 適用できるモデルが限られる

• Noise-Contrastive Estimation(NCE)

• 確率密度関数を正規化定数まで解析的に特定する必要がある

• 一意に計算可能ではない

従来の生成モデルでは

• 適用できるモデルが少ない

• 学習では誤差関数を解析的に求めるか、推定値を用いる場合がある

といった問題点が存在する

7. 8. 9. 10. 11. GANの概要

●Generator

𝑃𝑍(𝒛)

𝒛

𝐺 𝒛; 𝜃𝑔

𝐷(𝒙)

前提

: ある種の任意の確率分布

: 個々のノイズサンプル

: 𝒛 を入力としたときの、特徴空間へのマッピング

: 𝒙 が真のデータである確率

• ノイズ𝒛を入力としてデータを生成し、𝑃𝑔に分布させる

• 𝑃𝑔はGeneratorから生成された出力の分布を示す

• log(1 − 𝐷 𝐺 𝒛 )を最小にするように訓練する

●Discriminator

• トレーニングデータとGのデータに正しいラベルを割り当てる

• log 𝐷 𝒙 を最大にするように訓練する

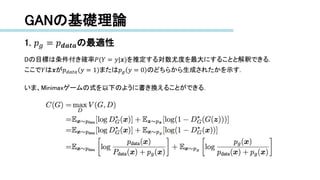

12. 13. 14. 15. GANの基礎理論

1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂の最適性

任意のGに対して最適なDを考える.

Proposition 1. Gを固定したとき、最適なGは以下の式によって与えられる.

𝐷 𝐺

∗

𝒙 =

𝑝 𝑑𝑎𝑡𝑎(𝒙)

𝑝 𝑑𝑎𝑡𝑎 𝒙 + 𝑝 𝑔(𝒙)

Proof. Dの学習はあらゆるGに対して𝑉(𝐺, 𝐷)を最大にすることを目標とする.

𝑉 𝐺, 𝐷 =

𝒙

𝑝 𝑑𝑎𝑡𝑎 𝒙 log 𝐷 𝒙 𝑑𝑥 +

𝒙

𝑝𝒛 𝒛 log 1 − 𝐷 𝑔(𝒛) 𝑑𝑧

= 𝒙

𝑝 𝑑𝑎𝑡𝑎 𝒙 log 𝐷 𝒙 𝑑𝑥 + 𝑝 𝑔 𝒙 log 1 − 𝐷 𝒙 𝑑𝑥

あらゆる 𝑎, 𝑏 ∈ ℝ2

∖ 0, 0 について、関数y → 𝑎 log 𝑦 + 𝑏 log( 1 − 𝑦)の最大値は[0, 1]の時

𝑎

𝑎+𝑏

である.

concluding the proof.

16. GANの基礎理論

1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂の最適性

Dの目標は条件付き確率𝑃(𝑌 = 𝑦|𝒙)を推定する対数尤度を最大にすることと解釈できる.

ここで𝑌は𝒙が𝑝 𝑑𝑎𝑡𝑎(𝑦 = 1)または𝑝 𝑔 𝑦 = 0 のどちらから生成されたかを示す.

いま、Minimaxゲームの式を以下のように書き換えることができる.

17. GANの基礎理論

1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂の最適性

Theorem 1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂を満たす場合にのみ、仮想的な学習基準𝐶(𝐺)を得る.

このとき、𝐶 𝐺 = −log 4となる.

Proof. 𝑝 𝑔 = 𝑝 𝑑𝑎𝑡𝑎のとき、𝐷 𝐺

∗

𝒙 =

1

2

. したがって𝐶 𝐺 = log

1

2

+ log

1

2

= − log 4が得られる.

最も良い𝐶(𝐺)の値が𝑝 𝑔 = 𝑝 𝑑𝑎𝑡𝑎の場合のみに得られるかを確認するため、以下の式

𝔼 𝒙~𝑃 𝑑𝑎𝑡𝑎

[−log2] + 𝔼 𝒙~𝑃 𝑔

[−log 2] = − log 4

を𝐶 𝐺 = 𝑉(𝐷 𝐺

∗

, 𝐺)から減算し、次の式を得る.

ここで、𝐾𝐿はKLダイバージェンスを表す.

KLダイバージェンス : 二つの確率分布の差異を表現する

18. GANの基礎理論

1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂の最適性

上式はJSダイバージェンスに変形できる.

二つの確率分布間のJSダイバージェンスは常に非負の値をとり、かつ

二つが正しい場合にのみ0となる.

したがって、 𝐶∗

= −log 4は𝐶(𝐺)の最小値であり、その解は𝑝 𝑔 = 𝑝 𝑑𝑎𝑡𝑎すなわち

生成モデルが完全に真の生成データプロセスを模倣できた場合のみである.

concluding the proof.

19. GANの基礎理論

2. Algorithm1の収束

Proposition 2. GとDに十分な能力があり、かつAlgorithm1の各ステップにおいて

Dが与えられたGに対して最適化され, 𝑝 𝑔は以下の基準を改善するように更新されるとする.

𝔼 𝒙~𝑃 𝑑𝑎𝑡𝑎

[log 𝐷 𝐺

∗

(𝒙)] + 𝔼 𝒛~𝑃 𝑔

[log(1 − 𝐷 𝐺

∗

𝐺 𝒙 )]

このとき、 𝑝 𝑔は𝑝 𝑑𝑎𝑡𝑎に収束する.

Proof. 𝑉 𝐺, 𝐷 = 𝑈(𝑝 𝑔, 𝐷)を上記の基準を満たした𝑝 𝑔の関数と考える. 𝑈(𝑝 𝑔, 𝐷)は𝑝 𝑔で凸である.

凸関数の上限における劣微分は、最大値に達する関数の微分を含む.

つまり、𝑓 𝑥 = sup

𝛼∈𝒜

𝑓𝛼(𝑥)かつ𝑓𝛼(𝑥)が全ての𝛼について凸ならば、

𝛽 = arg sup

𝛼∈𝒜

𝑓𝛼(𝑥)のとき𝜕𝑡 𝛽(𝑥) ∈ 𝜕𝑓である.

これは、対応するGが与えられた最適なDにおいて𝑝 𝑔の降下する勾配を更新することと等価である.

sup

𝐷

𝑈(𝑝𝑔, 𝐷) は𝑝𝑔で凸であり、𝑇ℎ𝑚 1で証明されたように一意の最適値を持つため、

𝑝𝑔の更新が十分に小さい場合、𝑝𝑔は𝑝𝑥に収束する. concluding the proof.

20. 21. 実験

実験方法

• MNIST, Toronto Face Database(TFD), CIFAR-10をGANで学習

• Generatorは活性化関数としてReLU関数とシグモイド関数を、

Discriminatorはmaxout関数を使用

• DiscriminatorではDropoutを使用

• 理論的にはGeneratorにおいてDropoutやノイズの入力を中間層で用いても

よいが、実験ではノイズは最下層のみへの入力として使用

• Gで生成されたデータにガウシアンカーネル密度推定を適用し、

得られた分布の対数尤度からpgがテストセットである確率を推定する

• ガウシアンカーネル密度推定の𝜎パラメータは検証用のデータから

交差検証法で求める

22. 23. 24. 25. 26. 27. 28. 29. 30. 31. 32. 33.

![GANの概要

●学習はDを最適化するkstepとGを最適化する1stepが交互に行われる

以下の式を価値関数とするminimaxゲームが行われる

min

𝐺

max

𝐷

𝑉 𝐷, 𝐺 = 𝔼 𝒙~𝑃 𝑑𝑎𝑡𝑎 𝒙 [log 𝐷(𝒙)] + 𝔼 𝒛~𝑃 𝑔 𝒛 [log(1 − 𝐷 𝐺 𝒛 )]

• トレーニングの内部ループでは最適化を完了させない

• Gに合わせた最適化

●実際には上式ではうまく学習できない場合がある

• データ生成よりも分類の方が容易に学習ができる

• 学習の初期ではDの学習が早く進み、Gの勾配が小さくなる

log 𝐷(𝐺(𝒛))を最大化するように学習を行うことで強い勾配を得る](https://image.slidesharecdn.com/0728-171018071427/85/0728-12-320.jpg)

![GANの基礎理論

1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂の最適性

任意のGに対して最適なDを考える.

Proposition 1. Gを固定したとき、最適なGは以下の式によって与えられる.

𝐷 𝐺

∗

𝒙 =

𝑝 𝑑𝑎𝑡𝑎(𝒙)

𝑝 𝑑𝑎𝑡𝑎 𝒙 + 𝑝 𝑔(𝒙)

Proof. Dの学習はあらゆるGに対して𝑉(𝐺, 𝐷)を最大にすることを目標とする.

𝑉 𝐺, 𝐷 =

𝒙

𝑝 𝑑𝑎𝑡𝑎 𝒙 log 𝐷 𝒙 𝑑𝑥 +

𝒙

𝑝𝒛 𝒛 log 1 − 𝐷 𝑔(𝒛) 𝑑𝑧

= 𝒙

𝑝 𝑑𝑎𝑡𝑎 𝒙 log 𝐷 𝒙 𝑑𝑥 + 𝑝 𝑔 𝒙 log 1 − 𝐷 𝒙 𝑑𝑥

あらゆる 𝑎, 𝑏 ∈ ℝ2

∖ 0, 0 について、関数y → 𝑎 log 𝑦 + 𝑏 log( 1 − 𝑦)の最大値は[0, 1]の時

𝑎

𝑎+𝑏

である.

concluding the proof.](https://image.slidesharecdn.com/0728-171018071427/85/0728-15-320.jpg)

![GANの基礎理論

1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂の最適性

Theorem 1. 𝑝 𝑔 = 𝑝 𝒅𝒂𝒕𝒂を満たす場合にのみ、仮想的な学習基準𝐶(𝐺)を得る.

このとき、𝐶 𝐺 = −log 4となる.

Proof. 𝑝 𝑔 = 𝑝 𝑑𝑎𝑡𝑎のとき、𝐷 𝐺

∗

𝒙 =

1

2

. したがって𝐶 𝐺 = log

1

2

+ log

1

2

= − log 4が得られる.

最も良い𝐶(𝐺)の値が𝑝 𝑔 = 𝑝 𝑑𝑎𝑡𝑎の場合のみに得られるかを確認するため、以下の式

𝔼 𝒙~𝑃 𝑑𝑎𝑡𝑎

[−log2] + 𝔼 𝒙~𝑃 𝑔

[−log 2] = − log 4

を𝐶 𝐺 = 𝑉(𝐷 𝐺

∗

, 𝐺)から減算し、次の式を得る.

ここで、𝐾𝐿はKLダイバージェンスを表す.

KLダイバージェンス : 二つの確率分布の差異を表現する](https://image.slidesharecdn.com/0728-171018071427/85/0728-17-320.jpg)

![GANの基礎理論

2. Algorithm1の収束

Proposition 2. GとDに十分な能力があり、かつAlgorithm1の各ステップにおいて

Dが与えられたGに対して最適化され, 𝑝 𝑔は以下の基準を改善するように更新されるとする.

𝔼 𝒙~𝑃 𝑑𝑎𝑡𝑎

[log 𝐷 𝐺

∗

(𝒙)] + 𝔼 𝒛~𝑃 𝑔

[log(1 − 𝐷 𝐺

∗

𝐺 𝒙 )]

このとき、 𝑝 𝑔は𝑝 𝑑𝑎𝑡𝑎に収束する.

Proof. 𝑉 𝐺, 𝐷 = 𝑈(𝑝 𝑔, 𝐷)を上記の基準を満たした𝑝 𝑔の関数と考える. 𝑈(𝑝 𝑔, 𝐷)は𝑝 𝑔で凸である.

凸関数の上限における劣微分は、最大値に達する関数の微分を含む.

つまり、𝑓 𝑥 = sup

𝛼∈𝒜

𝑓𝛼(𝑥)かつ𝑓𝛼(𝑥)が全ての𝛼について凸ならば、

𝛽 = arg sup

𝛼∈𝒜

𝑓𝛼(𝑥)のとき𝜕𝑡 𝛽(𝑥) ∈ 𝜕𝑓である.

これは、対応するGが与えられた最適なDにおいて𝑝 𝑔の降下する勾配を更新することと等価である.

sup

𝐷

𝑈(𝑝𝑔, 𝐷) は𝑝𝑔で凸であり、𝑇ℎ𝑚 1で証明されたように一意の最適値を持つため、

𝑝𝑔の更新が十分に小さい場合、𝑝𝑔は𝑝𝑥に収束する. concluding the proof.](https://image.slidesharecdn.com/0728-171018071427/85/0728-19-320.jpg)