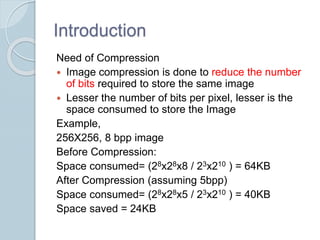

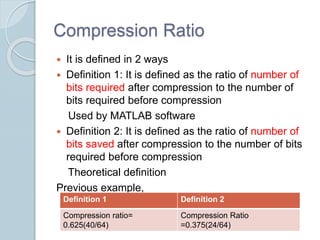

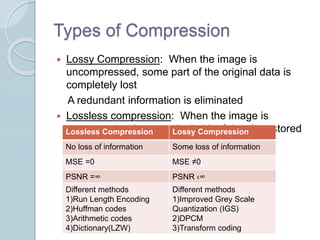

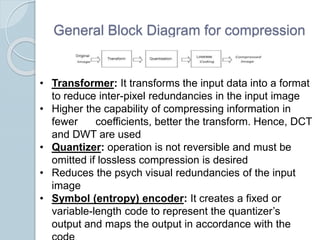

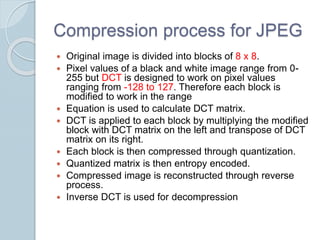

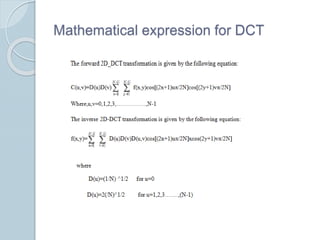

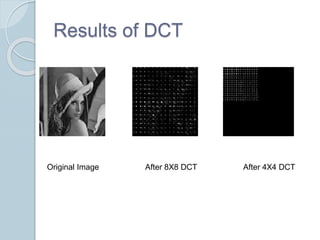

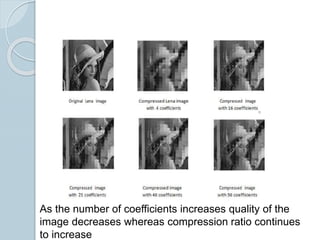

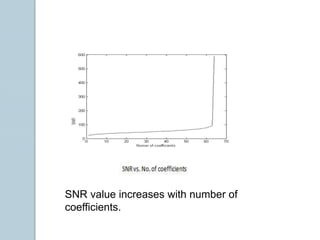

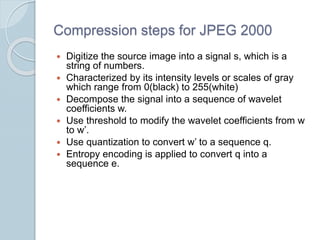

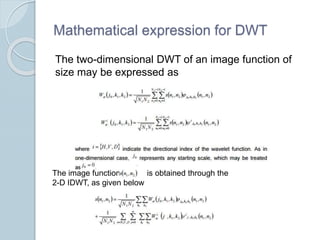

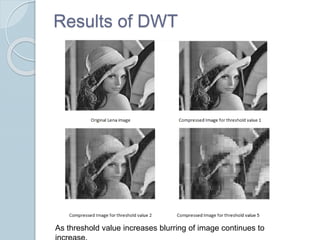

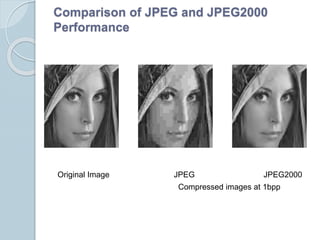

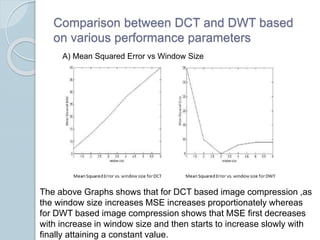

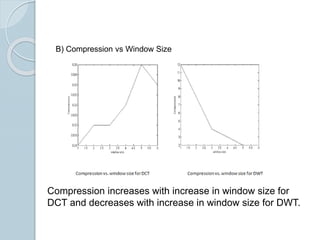

This document compares the JPEG and JPEG 2000 image compression standards. It explains why compression is needed, chiefly to reduce storage space, and discusses the transform techniques behind each standard: the discrete cosine transform (DCT) and the discrete wavelet transform (DWT). It details the advantages and disadvantages of each method, noting that the DCT is used in JPEG and suits medium bit rates, while the DWT used in JPEG 2000 offers better quality at lower bit rates but can introduce blurring. Ultimately, the DWT avoids the blocking artifacts seen with the DCT, but may deliver lower quality at low compression rates.
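
To make the structural difference between the two transforms concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the codec pipeline of either standard: the block DCT works on independent 8×8 tiles, as JPEG does, while the wavelet transform is applied to the whole image. The Haar wavelet is used only because it is the simplest to write down; JPEG 2000 itself uses the longer CDF 5/3 and 9/7 filters. The function names (`dct_matrix`, `block_dct2`, `haar_dwt2`, `haar_idwt2`) are hypothetical, and quantization and entropy coding are left out entirely.

```python
import numpy as np

SQRT2 = np.sqrt(2.0)

def dct_matrix(n=8):
    """Orthonormal DCT-II basis matrix of size n x n."""
    k = np.arange(n).reshape(-1, 1)  # frequency index (rows)
    i = np.arange(n).reshape(1, -1)  # sample index (columns)
    c = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    c[0, :] = np.sqrt(1.0 / n)       # DC row has its own scale factor
    return c

def block_dct2(img, n=8, inverse=False):
    """Forward or inverse 2-D DCT on independent n x n tiles.

    Assumes both image dimensions are multiples of n.
    """
    c = dct_matrix(n)
    out = np.empty_like(img, dtype=float)
    for r in range(0, img.shape[0], n):
        for s in range(0, img.shape[1], n):
            b = img[r:r + n, s:s + n]
            out[r:r + n, s:s + n] = c.T @ b @ c if inverse else c @ b @ c.T
    return out

def haar_dwt2(img):
    """One level of a 2-D Haar DWT over the whole image (even dimensions)."""
    a = (img[:, 0::2] + img[:, 1::2]) / SQRT2  # low-pass along rows
    d = (img[:, 0::2] - img[:, 1::2]) / SQRT2  # high-pass along rows
    ll = (a[0::2] + a[1::2]) / SQRT2           # approximation subband
    lh = (a[0::2] - a[1::2]) / SQRT2           # detail subbands
    hl = (d[0::2] + d[1::2]) / SQRT2
    hh = (d[0::2] - d[1::2]) / SQRT2
    return ll, lh, hl, hh

def haar_idwt2(ll, lh, hl, hh):
    """Inverse of haar_dwt2."""
    h, w = ll.shape
    a = np.empty((2 * h, w))
    d = np.empty((2 * h, w))
    a[0::2], a[1::2] = (ll + lh) / SQRT2, (ll - lh) / SQRT2
    d[0::2], d[1::2] = (hl + hh) / SQRT2, (hl - hh) / SQRT2
    img = np.empty((2 * h, 2 * w))
    img[:, 0::2], img[:, 1::2] = (a + d) / SQRT2, (a - d) / SQRT2
    return img

# A smooth 16 x 16 horizontal ramp as a stand-in test image.
ramp = np.tile(np.arange(16, dtype=float), (16, 1))

# Both transforms are lossless before quantization.
dct_roundtrip = block_dct2(block_dct2(ramp), inverse=True)
dwt_roundtrip = haar_idwt2(*haar_dwt2(ramp))
print(np.allclose(dct_roundtrip, ramp), np.allclose(dwt_roundtrip, ramp))
```

Neither transform discards information on its own; the loss comes from quantizing the coefficients afterwards. Because each 8×8 tile is transformed and quantized independently, errors can differ on either side of a tile boundary, which is the origin of the blocking artifacts mentioned above. A wavelet transform with overlapping filters spreads the error across the image instead, which tends to show up as blurring.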